Hadoop Compatibility in Flink

18 Nov 2014 by Fabian Hüske (@fhueske)

Apache Hadoop is an industry standard for scalable analytical data processing. Many data analysis applications have been implemented as Hadoop MapReduce jobs and run in clusters around the world. Apache Flink can be an alternative to MapReduce and improves it in many dimensions. Among other features, Flink provides much better performance and offers APIs in Java and Scala, which are very easy to use. Similar to Hadoop, Flink’s APIs provide interfaces for Mapper and Reducer functions, as well as Input- and OutputFormats along with many more operators. While being conceptually equivalent, Hadoop’s MapReduce and Flink’s interfaces for these functions are unfortunately not source compatible.

Flink’s Hadoop Compatibility Package

To close this gap, Flink provides a Hadoop Compatibility package to wrap functions implemented against Hadoop’s MapReduce interfaces and embed them in Flink programs. This package was developed as part of a Google Summer of Code 2014 project.

With the Hadoop Compatibility package, you can reuse all your Hadoop

InputFormats(mapred and mapreduce APIs)OutputFormats(mapred and mapreduce APIs)Mappers(mapred API)Reducers(mapred API)

in Flink programs without changing a line of code. Moreover, Flink also natively supports all Hadoop data types (Writables and WritableComparable).

The following code snippet shows a simple Flink WordCount program that solely uses Hadoop data types, InputFormat, OutputFormat, Mapper, and Reducer functions.

// Definition of Hadoop Mapper function

public class Tokenizer implements Mapper<LongWritable, Text, Text, LongWritable> { ... }

// Definition of Hadoop Reducer function

public class Counter implements Reducer<Text, LongWritable, Text, LongWritable> { ... } public static void main(String[] args) {

final String inputPath = args[0];

final String outputPath = args[1]; final ExecutionEnvironment env = ExecutionEnvironment.getExecutionEnvironment(); // Setup Hadoop’s TextInputFormat

HadoopInputFormat<LongWritable, Text> hadoopInputFormat =

new HadoopInputFormat<LongWritable, Text>(

new TextInputFormat(), LongWritable.class, Text.class, new JobConf());

TextInputFormat.addInputPath(hadoopInputFormat.getJobConf(), new Path(inputPath)); // Read a DataSet with the Hadoop InputFormat

DataSet<Tuple2<LongWritable, Text>> text = env.createInput(hadoopInputFormat);

DataSet<Tuple2<Text, LongWritable>> words = text

// Wrap Tokenizer Mapper function

.flatMap(new HadoopMapFunction<LongWritable, Text, Text, LongWritable>(new Tokenizer()))

.groupBy(0)

// Wrap Counter Reducer function (used as Reducer and Combiner)

.reduceGroup(new HadoopReduceCombineFunction<Text, LongWritable, Text, LongWritable>(

new Counter(), new Counter())); // Setup Hadoop’s TextOutputFormat

HadoopOutputFormat<Text, LongWritable> hadoopOutputFormat =

new HadoopOutputFormat<Text, LongWritable>(

new TextOutputFormat<Text, LongWritable>(), new JobConf());

hadoopOutputFormat.getJobConf().set("mapred.textoutputformat.separator", " ");

TextOutputFormat.setOutputPath(hadoopOutputFormat.getJobConf(), new Path(outputPath)); // Output & Execute

words.output(hadoopOutputFormat);

env.execute("Hadoop Compat WordCount");

}

As you can see, Flink represents Hadoop key-value pairs as Tuple2<key, value> tuples. Note, that the program uses Flink’s groupBy() transformation to group data on the key field (field 0 of the Tuple2<key, value>) before it is given to the Reducer function. At the moment, the compatibility package does not evaluate custom Hadoop partitioners, sorting comparators, or grouping comparators.

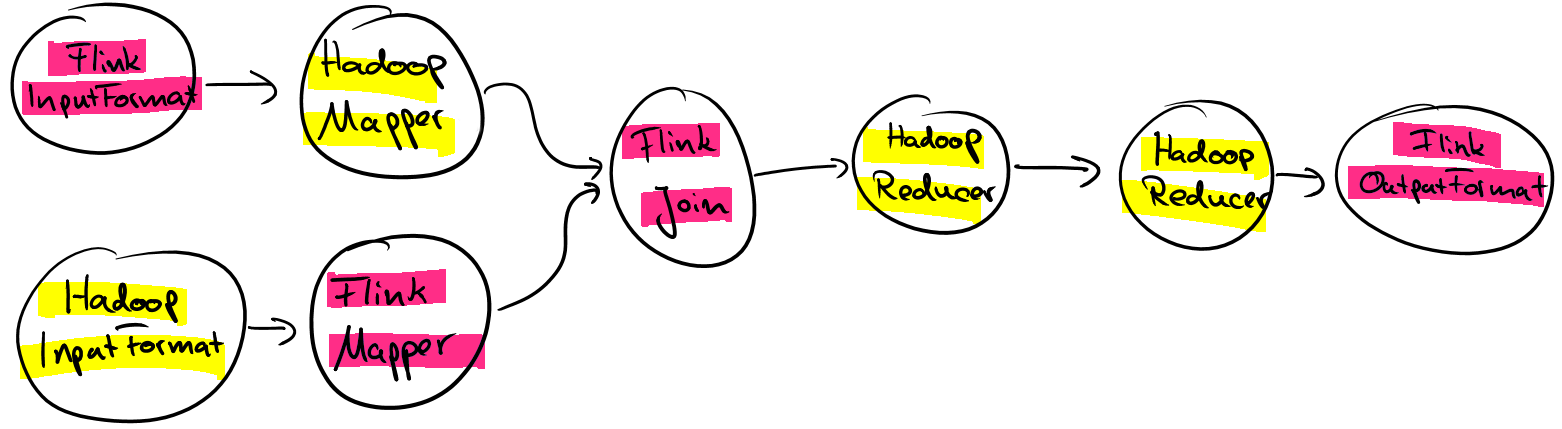

Hadoop functions can be used at any position within a Flink program and of course also be mixed with native Flink functions. This means that instead of assembling a workflow of Hadoop jobs in an external driver method or using a workflow scheduler such as Apache Oozie, you can implement an arbitrary complex Flink program consisting of multiple Hadoop Input- and OutputFormats, Mapper and Reducer functions. When executing such a Flink program, data will be pipelined between your Hadoop functions and will not be written to HDFS just for the purpose of data exchange.

What comes next?

While the Hadoop compatibility package is already very useful, we are currently working on a dedicated Hadoop Job operation to embed and execute Hadoop jobs as a whole in Flink programs, including their custom partitioning, sorting, and grouping code. With this feature, you will be able to chain multiple Hadoop jobs, mix them with Flink functions, and other operations such as Spargel operations (Pregel/Giraph-style jobs).

Summary

Flink lets you reuse a lot of the code you wrote for Hadoop MapReduce, including all data types, all Input- and OutputFormats, and Mapper and Reducers of the mapred-API. Hadoop functions can be used within Flink programs and mixed with all other Flink functions. Due to Flink’s pipelined execution, Hadoop functions can arbitrarily be assembled without data exchange via HDFS. Moreover, the Flink community is currently working on a dedicated Hadoop Job operation to supporting the execution of Hadoop jobs as a whole.

If you want to use Flink’s Hadoop compatibility package checkout our documentation.

Hadoop Compatibility in Flink的更多相关文章

- Hadoop,Spark,Flink 相关KB

Hive: https://stackoverflow.com/questions/17038414/difference-between-hive-internal-tables-and-exter ...

- flink hadoop yarn

新一代大数据处理引擎 Apache Flink https://www.ibm.com/developerworks/cn/opensource/os-cn-apache-flink/ 新一代大数据处 ...

- Flink学习笔记:Flink开发环境搭建

本文为<Flink大数据项目实战>学习笔记,想通过视频系统学习Flink这个最火爆的大数据计算框架的同学,推荐学习课程: Flink大数据项目实战:http://t.cn/EJtKhaz ...

- flink学习笔记-各种Time

说明:本文为<Flink大数据项目实战>学习笔记,想通过视频系统学习Flink这个最火爆的大数据计算框架的同学,推荐学习课程: Flink大数据项目实战:http://t.cn/EJtKh ...

- Flink Program Guide (1) -- 基本API概念(Basic API Concepts -- For Java)

false false false false EN-US ZH-CN X-NONE /* Style Definitions */ table.MsoNormalTable {mso-style-n ...

- 新一代大数据处理引擎 Apache Flink

https://www.ibm.com/developerworks/cn/opensource/os-cn-apache-flink/index.html 大数据计算引擎的发展 这几年大数据的飞速发 ...

- Flink知识点

1. Flink.Storm.Sparkstreaming对比 Storm只支持流处理任务,数据是一条一条的源源不断地处理,而MapReduce.spark只支持批处理任务,spark-streami ...

- 什么是Apache Flink

大数据计算引擎的发展 这几年大数据的飞速发展,出现了很多热门的开源社区,其中著名的有 Hadoop.Storm,以及后来的 Spark,他们都有着各自专注的应用场景.Spark 掀开了内存计算的先河, ...

- Flink 部署文档

Flink 部署文档 1 先决条件 2 下载 Flink 二进制文件 3 配置 Flink 3.1 flink-conf.yaml 3.2 slaves 4 将配置好的 Flink 分发到其他节点 5 ...

随机推荐

- 【Python3爬虫】猫眼电影爬虫(破解字符集反爬)

一.页面分析 首先打开猫眼电影,然后点击一个正在热播的电影(比如:毒液).打开开发者工具,点击左上角的箭头,然后用鼠标点击网页上的票价,可以看到源码中显示的不是数字,而是某些根本看不懂的字符,这是因为 ...

- 你可能不知道的css-doodle

好久没写文章了,下笔突然陌生了许多. 第一个原因是刚找到一份前端的工作,业务上都需要尽快的了解,第二个原因就是懒还有拖延的习惯,一旦今天没有写文章,就由可能找个理由托到下一周,进而到了下一周又有千万条 ...

- C#3.0 Lamdba表达式与表达式树

Lamdba表达式与表达式树 Lamdba表达式 C#2.0中的匿名方法使得创建委托变得简单起来,甚至想不到还有什么方式可以更加的简化,而C#3.0中的lamdba则给了我们答案. lamdba的行为 ...

- linux下的powerline安装教程

powerline是一款比较炫酷的状态栏工具,多用于vim和终端命令行.先上两张效果图,然后介绍一下具体的安装教程. 图 1 powerline在shell下的效果图 图 2 powerline在vi ...

- Win 7 家庭普通版系统升级密钥

VQB3X-Q3KP8-WJ2H8-R6B6D-7QJB7 (高级版)FJGCP-4DFJD-GJY49-VJBQ7-HYRR2 (旗舰版)要先升级到高级版再升级旗舰版,不然(可能)会出错.

- angr初使用(1)

angr是早在几年前就出来了的一款很好用的工具,如今也出了docker,所以想直接安个docker来跑一跑. docker pull angr/angr .下载下来以后,进入docker ,这时并没有 ...

- JDBC与ORM发展与联系 JDBC简介(九)

概念回顾 回顾下JDBC的概念: JDBC(Java Data Base Connectivity,java数据库连接)是一种用于执行SQL语句的Java API,可以为多种关系数据库提供统一访问,它 ...

- Python机器学习笔记 异常点检测算法——Isolation Forest

Isolation,意为孤立/隔离,是名词,其动词为isolate,forest是森林,合起来就是“孤立森林”了,也有叫“独异森林”,好像并没有统一的中文叫法.可能大家都习惯用其英文的名字isolat ...

- SpringBoot系列——i18n国际化

前言 国际化是项目中不可或缺的功能,本文将实现springboot + thymeleaf的HTML页面.js代码.java代码国际化过程记录下来. 代码编写 工程结构 每个文件里面的值(按工程结构循 ...

- react create-react-app 跨域

"proxy":"http://youAddr.com" 直接到根目录package.json里增加上面这行就行了,改成自己需要的地址.