[Hadoop Test Program] Writing a MapReduce Job to Test a Hadoop Environment

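- We use the Hadoop environment set up earlier; see:

- The example program is the maximum-temperature example from Hadoop: The Definitive Guide, 3rd Edition.

Data preparation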

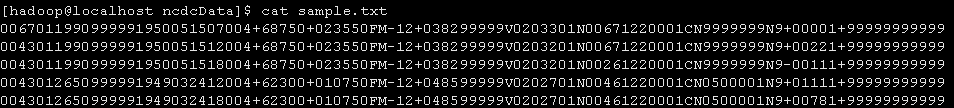

The input data is sample.txt, with one fixed-width weather record per line:

    0067011990999991950051507004+68750+023550FM-12+038299999V0203301N00671220001CN9999999N9+00001+99999999999
    0043011990999991950051512004+68750+023550FM-12+038299999V0203201N00671220001CN9999999N9+00221+99999999999
    0043011990999991950051518004+68750+023550FM-12+038299999V0203201N00261220001CN9999999N9-00111+99999999999
    0043012650999991949032412004+62300+010750FM-12+048599999V0202701N00461220001CN0500001N9+01111+99999999999
    0043012650999991949032418004+62300+010750FM-12+048599999V0202701N00461220001CN0500001N9+00781+99999999999

Upload sample.txt to HDFS

hadoop fs -put /home/hadoop/ncdcData/sample.txt input

Project structure

MaxTemperatureMapper class

    package com.ll.maxTemperature;

    import java.io.IOException;

    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;

    public class MaxTemperatureMapper extends
            Mapper<LongWritable, Text, Text, IntWritable> {

        private static final int MISSING = 9999;

        @Override
        public void map(LongWritable key, Text value, Context context)
                throws IOException, InterruptedException {
            String line = value.toString();
            String year = line.substring(15, 19);
            int airTemperature;
            if (line.charAt(87) == '+') { // parseInt doesn't like leading plus signs
                airTemperature = Integer.parseInt(line.substring(88, 92));
            } else {
                airTemperature = Integer.parseInt(line.substring(87, 92));
            }
            String quality = line.substring(92, 93);
            if (airTemperature != MISSING && quality.matches("[01459]")) {
                context.write(new Text(year), new IntWritable(airTemperature));
            }
        }
    }
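To see which fields the mapper reads without starting Hadoop, the same offsets can be tried on a single record in plain Java. This is only a sketch: the class name `NcdcRecordParser` and its helper methods are made up for illustration and are not part of the project; the offsets and the quality pattern are copied from the mapper above.

```java
// Standalone sketch (hypothetical helper, not part of the project):
// applies the mapper's fixed-width offsets to one record from sample.txt.
final class NcdcRecordParser {
    static final int MISSING = 9999;

    // Characters 15..18 hold the four-digit year.
    static String year(String line) {
        return line.substring(15, 19);
    }

    // Characters 87..91 hold the signed temperature; a leading '+' is skipped
    // as in the mapper, while a leading '-' is kept so the value stays negative.
    static int airTemperature(String line) {
        int start = line.charAt(87) == '+' ? 88 : 87;
        return Integer.parseInt(line.substring(start, 92));
    }

    // Character 92 is the quality code; only readings the mapper would emit are valid.
    static boolean isValid(String line) {
        String quality = line.substring(92, 93);
        return airTemperature(line) != MISSING && quality.matches("[01459]");
    }

    public static void main(String[] args) {
        // Third record of sample.txt, split only to keep the source line short.
        String record = "0043011990999991950051518004+68750+023550FM-12+038299999"
                + "V0203201N00261220001CN9999999N9-00111+99999999999";
        System.out.println(year(record) + "\t" + airTemperature(record)
                + "\t" + isValid(record));
    }
}
```

For this record the mapper would emit the pair (1950, -11).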

MaxTemperatureReducer class

    package com.ll.maxTemperature;

    import java.io.IOException;

    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Reducer;

    public class MaxTemperatureReducer extends
            Reducer<Text, IntWritable, Text, IntWritable> {

        @Override
        public void reduce(Text key, Iterable<IntWritable> values, Context context)
                throws IOException, InterruptedException {
            int maxValue = Integer.MIN_VALUE;
            for (IntWritable value : values) {
                maxValue = Math.max(maxValue, value.get());
            }
            context.write(key, new IntWritable(maxValue));
        }
    }
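The reducer's behaviour can be approximated in memory to predict what the job should output for sample.txt. The sketch below is an assumption-laden stand-in, not Hadoop code: `LocalReduce` is a hypothetical class, the grouping with a `TreeMap` only imitates the shuffle phase, and the input pairs are the (year, temperature) values the mapper would emit for the five sample records.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// In-memory imitation of shuffle + reduce (hypothetical, for illustration only).
final class LocalReduce {

    // Same loop as MaxTemperatureReducer.reduce, on plain integers.
    static int reduce(Iterable<Integer> values) {
        int maxValue = Integer.MIN_VALUE;
        for (int value : values) {
            maxValue = Math.max(maxValue, value);
        }
        return maxValue;
    }

    // Groups values by key (sorted, as the shuffle would), then reduces each group.
    static Map<String, Integer> run(String[] years, int[] temperatures) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (int i = 0; i < years.length; i++) {
            grouped.computeIfAbsent(years[i], k -> new ArrayList<>()).add(temperatures[i]);
        }
        Map<String, Integer> result = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> entry : grouped.entrySet()) {
            result.put(entry.getKey(), reduce(entry.getValue()));
        }
        return result;
    }

    public static void main(String[] args) {
        // Mapper output for the five records in sample.txt.
        String[] years = {"1950", "1950", "1950", "1949", "1949"};
        int[] temperatures = {0, 22, -11, 111, 78};
        System.out.println(run(years, temperatures)); // prints {1949=111, 1950=22}
    }
}
```

If the cluster is working, the job's output file should contain the same two pairs: 1949 with 111 and 1950 with 22.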

MaxTemperature class (main function)

    package com.ll.maxTemperature;

    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    public class MaxTemperature {

        public static void main(String[] args) throws Exception {
            // Fall back to default HDFS paths when no input/output arguments are given.
            if (args.length != 2) {
                args = new String[] {
                        "hdfs://localhost:9000/user/hadoop/input/sample.txt",
                        "hdfs://localhost:9000/user/hadoop/out2" };
            }

            Job job = new Job(); // the job specification
            job.setJarByClass(MaxTemperature.class);
            job.setJobName("Max temperature");

            FileInputFormat.addInputPath(job, new Path(args[0]));
            // Path where the reduce output files are written
            FileOutputFormat.setOutputPath(job, new Path(args[1]));

            job.setMapperClass(MaxTemperatureMapper.class);
            job.setCombinerClass(MaxTemperatureReducer.class);
            job.setReducerClass(MaxTemperatureReducer.class);

            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);

            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }
    }
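The driver registers MaxTemperatureReducer as the combiner as well as the reducer. That works here because taking a maximum gives the same answer whether it is applied once to all values or first to partial groups and then to the partial results. The plain-Java sketch below illustrates the property with made-up numbers; `CombinerCheck` and its methods are hypothetical names, and the mean is included only as a counterexample of a function that could not be reused as a combiner this way.

```java
// Illustrative check (hypothetical class, made-up values): why max can serve
// as a combiner while mean cannot.
final class CombinerCheck {

    static int max(int... values) {
        int maxValue = Integer.MIN_VALUE;
        for (int value : values) {
            maxValue = Math.max(maxValue, value);
        }
        return maxValue;
    }

    static double mean(double... values) {
        double sum = 0;
        for (double value : values) {
            sum += value;
        }
        return sum / values.length;
    }

    public static void main(String[] args) {
        // Pretend two map tasks produced {0, 20, 10} and {25, 15} for the same year.
        int direct = max(0, 20, 10, 25, 15);
        int combined = max(max(0, 20, 10), max(25, 15));
        System.out.println(direct + " " + combined); // prints 25 25

        double directMean = mean(0, 20, 10, 25, 15);
        double combinedMean = mean(mean(0, 20, 10), mean(25, 15));
        System.out.println(directMean + " " + combinedMean); // prints 14.0 15.0
    }
}
```

Hadoop does not guarantee how many times a combiner runs, if at all, so it should only be set for functions with this property.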

- hdfs://localhost:9000/ is the HDFS address used in the default arguments;

- /user/hadoop/input/sample.txt is the path of the input file within HDFS.

pom.xml

    <project xmlns="http://maven.apache.org/POM/4.0.0"
        xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
        xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
        <modelVersion>4.0.0</modelVersion>

        <groupId>com.ll</groupId>
        <artifactId>MapReduceTest</artifactId>
        <version>0.0.1-SNAPSHOT</version>
        <packaging>jar</packaging>

        <name>MapReduceTest</name>
        <url>http://maven.apache.org</url>

        <properties>
            <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
            <hadoopVersion>1.2.1</hadoopVersion>
            <junit.version>3.8.1</junit.version>
        </properties>

        <dependencies>
            <dependency>
                <groupId>junit</groupId>
                <artifactId>junit</artifactId>
                <version>${junit.version}</version>
                <scope>test</scope>
            </dependency>
            <!-- Hadoop -->
            <dependency>
                <groupId>org.apache.hadoop</groupId>
                <artifactId>hadoop-core</artifactId>
                <version>${hadoopVersion}</version>
                <!-- Hadoop -->
            </dependency>
        </dependencies>
    </project>

Testing the program

Prepare the Hadoop environment

Build the jar

Upload it to the server and run the test

hadoop jar mc.jar

hadoop jar /home/hadoop/jars/mc.jar hdfs://localhost:9000/user/hadoop/input/sample.txt hdfs://localhost:9000/user/hadoop/out5
