A Machine Learning Algorithm a Day: AdaBoost

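I found a good article online and have pasted it here directly, adding some supplementary notes and my own understanding to make up this post.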

My education in the fundamentals of machine learning has mainly come from Andrew Ng’s excellent Coursera course on the topic. One thing that wasn’t covered in that course, though, was the topic of “boosting” which I’ve come across in a number of different contexts now. Fortunately, it’s a relatively straightforward topic if you’re already familiar with machine learning classification.

Whenever I’ve read about something that uses boosting, it’s always been with the “AdaBoost” algorithm, so that’s what this post covers.

AdaBoost is a popular boosting technique which helps you combine multiple “weak classifiers” into a single “strong classifier”. A weak classifier is simply a classifier that performs poorly, but performs better than random guessing. A simple example might be classifying a person as male or female based on their height. You could say anyone over 5′ 9″ is a male and anyone under that is a female. You’ll misclassify a lot of people that way, but your accuracy will still be greater than 50%.
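As a rough illustration of the height example, here is a minimal sketch of such a weak classifier. The function name and the handful of (height, label) pairs are invented for illustration; only the 5′ 9″ (69 inch) threshold comes from the text.

```python
def classify_by_height(height_inches: float, threshold_inches: float = 69.0) -> str:
    """Weak classifier: a single threshold on height (5'9" is 69 inches)."""
    return "male" if height_inches > threshold_inches else "female"


# Hypothetical (height in inches, true label) pairs, made up for this sketch.
people = [
    (72, "male"), (66, "female"), (70, "female"),
    (67, "male"), (74, "male"), (63, "female"),
]

correct = sum(classify_by_height(h) == label for h, label in people)
accuracy = correct / len(people)
print(f"accuracy = {accuracy:.2f}")  # better than 0.5, far from perfect
```

A one-threshold rule like this is often called a decision stump, and it is a common choice of weak classifier when boosting.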

AdaBoost can be applied to any classification algorithm, so it’s really a technique that builds on top of other classifiers as opposed to being a classifier itself.

You could just train a bunch of weak classifiers on your own and combine the results, so what does AdaBoost do for you? There are really two things it figures out for you:

1. It helps you choose the training set for each new classifier that you train based on the results of the previous classifier.

2. It determines how much weight should be given to each classifier’s proposed answer when combining the results.

Training Set Selection

Each weak classifier should be trained on a random subset of the total training set. The subsets can overlap; it’s not the same as, for example, dividing the training set into ten portions. AdaBoost assigns a “weight” to each training example, which determines the probability that each example should appear in the training set. Examples with higher weights are more likely to be included in the training set, and vice versa. After training a classifier, AdaBoost increases the weight on the misclassified examples so that these examples will make up a larger part of the next classifier’s training set, and hopefully the next classifier trained will perform better on them.

The equation for this weight update step is detailed later on.

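AdaBoost trains each classifier on only a subset of the training set, not on all of it. The subset is drawn at random from the training set, and every training example carries a weight. Not every example makes it into the subset; AdaBoost uses the weights to decide how likely each example is to be selected.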
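The weighted selection described above could be sketched as follows. This is only one way to do it: drawing with replacement, the subset size, and the helper name `sample_training_subset` are all assumptions of this sketch, not details given in the article.

```python
import random


def sample_training_subset(examples, weights, subset_size, seed=None):
    """Draw a training subset (with replacement, an assumption of this sketch).

    Each draw picks example i with probability proportional to weights[i].
    """
    rng = random.Random(seed)
    return rng.choices(examples, weights=weights, k=subset_size)


# Hypothetical training examples; "c" carries most of the weight.
examples = ["a", "b", "c", "d"]
weights = [0.1, 0.1, 0.7, 0.1]

subset = sample_training_subset(examples, weights, subset_size=1000, seed=0)
counts = {e: subset.count(e) for e in examples}
print(counts)  # "c" should dominate the subset
```

Raising an example's weight after it is misclassified therefore makes it show up more often in the data the next classifier sees.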

Classifier Output Weights

After each classifier is trained, the classifier’s weight is calculated based on its accuracy. More accurate classifiers are given more weight. A classifier with 50% accuracy is given a weight of zero, and a classifier with less than 50% accuracy (kind of a funny concept) is given negative weight.

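The error rate is computed from the classifier’s results, and the weight of that weak classifier is computed from the error rate. (This is the classifier weight, not the sample weights.)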

Formal Definition

To learn about AdaBoost, I read through a tutorial written by one of the original authors of the algorithm, Robert Schapire. The tutorial is available here.

Below, I’ve tried to offer some intuition into the relevant equations.

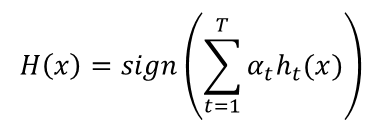

Let’s look first at the equation for the final classifier.

The final classifier consists of ‘T’ weak classifiers. h_t(x) is the output of weak classifier ‘t’ (in this paper, the outputs are limited to -1 or +1). Alpha_t is the weight applied to classifier ‘t’ as determined by AdaBoost. So the final output is just a linear combination of all of the weak classifiers, and then we make our final decision simply by looking at the sign of this sum.
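A minimal sketch of this combination step, assuming each weak classifier is a plain function returning -1 or +1. The three threshold classifiers and their alpha values are made up for illustration, and returning +1 when the sum is exactly zero is a convention chosen here, not something the article specifies.

```python
def strong_classify(x, weak_classifiers, alphas):
    """Return the sign of the alpha-weighted sum of the weak outputs."""
    total = sum(alpha * h(x) for h, alpha in zip(weak_classifiers, alphas))
    return 1 if total >= 0 else -1  # tie at zero goes to +1 (arbitrary choice)


# Three hypothetical weak classifiers on a single number, each returning -1 or +1.
weak_classifiers = [
    lambda x: 1 if x > 2 else -1,
    lambda x: 1 if x > 5 else -1,
    lambda x: 1 if x > 8 else -1,
]
alphas = [0.4, 0.9, 0.3]  # made-up output weights

for x in (1, 3, 6):
    print(x, strong_classify(x, weak_classifiers, alphas))
```

For x = 3 the first classifier votes +1 but is outvoted by the other two, whose combined weight is larger; that is all the "linear combination plus sign" amounts to.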

The classifiers are trained one at a time. After each classifier is trained, we update the probabilities of each of the training examples appearing in the training set for the next classifier.

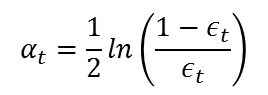

The first classifier (t = 1) is trained with equal probability given to all training examples. After it’s trained, we compute the output weight (alpha) for that classifier.

The output weight, alpha_t, is fairly straightforward. It’s based on the classifier’s error rate, ‘e_t’. e_t is just the number of misclassifications over the training set divided by the training set size.

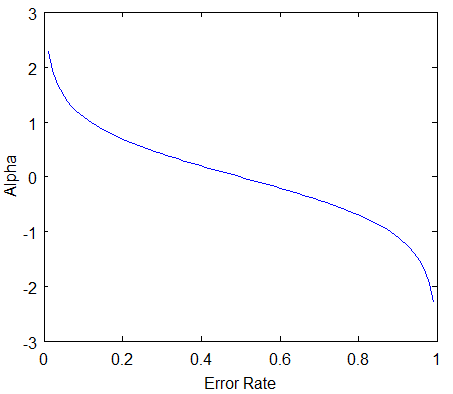

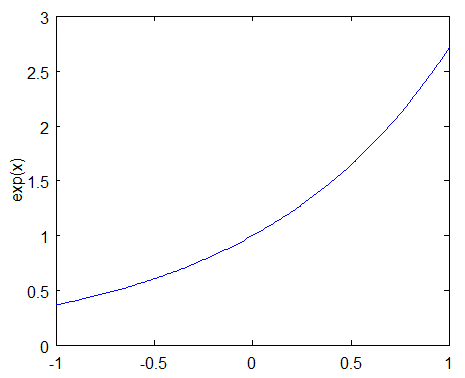

Here’s a plot of what alpha_t will look like for classifiers with different error rates.

There are three bits of intuition to take from this graph:

- The classifier weight grows without bound as the error approaches 0. Better classifiers are given more weight, and the increase gets steeper the closer the error gets to 0.

- The classifier weight is zero if the error rate is 0.5. A classifier with 50% accuracy is no better than random guessing, so we ignore it.

- The classifier weight becomes increasingly negative, without bound, as the error approaches 1. We give a negative weight to classifiers with worse than 50% accuracy. “Whatever that classifier says, do the opposite!”.
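To see these three points numerically, here is a small sketch that evaluates the classifier weight for a few error rates. It assumes the usual form from Schapire's formulation, alpha_t = 0.5 * ln((1 - e_t) / e_t); the function name is hypothetical.

```python
import math


def classifier_weight(error_rate: float) -> float:
    """alpha = 0.5 * ln((1 - e) / e), defined only for 0 < e < 1."""
    if not 0.0 < error_rate < 1.0:
        raise ValueError("error_rate must be strictly between 0 and 1")
    return 0.5 * math.log((1.0 - error_rate) / error_rate)


for e in (0.01, 0.1, 0.3, 0.5, 0.7, 0.9, 0.99):
    print(f"error = {e:.2f}  alpha = {classifier_weight(e):+.3f}")
```

The values are symmetric around an error of 0.5: a classifier that is wrong 90% of the time gets the same magnitude of weight as one that is right 90% of the time, with the opposite sign.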

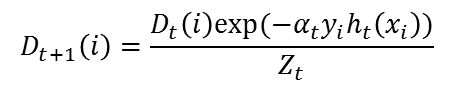

After computing the alpha for the first classifier, we update the training example weights using the following formula.

The variable D_t is a vector of weights, with one weight for each training example in the training set. ‘i’ is the training example number. This equation shows you how to update the weight for the ith training example.

The paper describes D_t as a distribution. This just means that each weight D(i) represents the probability that training example i will be selected as part of the training set.

To make it a distribution, all of these probabilities should add up to 1. To ensure this, we normalize the weights by dividing each of them by the sum of all the weights, Z_t. So, for example, if all of the calculated weights added up to 12.2, then we would divide each of the weights by 12.2 so that they sum up to 1.0 instead.

This vector is updated for each new weak classifier that’s trained. D_t refers to the weight vector used when training classifier ‘t’.

This equation needs to be evaluated for each of the training samples ‘i’ (x_i, y_i). Each weight from the previous training round is going to be scaled up or down by this exponential term.
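For reference, the weight update being described is usually written as below. The symbols match the ones used in this post; `m`, the number of training examples, is notation added here.

```latex
D_{t+1}(i) = \frac{D_t(i)\,\exp\bigl(-\alpha_t\, y_i\, h_t(x_i)\bigr)}{Z_t},
\qquad
Z_t = \sum_{j=1}^{m} D_t(j)\,\exp\bigl(-\alpha_t\, y_j\, h_t(x_j)\bigr)
```

Z_t is simply the sum of the unnormalized weights, so dividing by it makes D_{t+1} sum to 1.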

To understand how this exponential term behaves, let’s look first at how exp(x) behaves.

The function exp(x) will return a fraction for negative values of x, and a value greater than one for positive values of x. So the weight for training sample i will be either increased or decreased depending on the final sign of the term “-alpha * y * h(x)”. For binary classifiers whose output is constrained to either -1 or +1, the terms y and h(x) only contribute to the sign and not the magnitude.

y_i is the correct output for training example ‘i’, and h_t(x_i) is the predicted output by classifier t on this training example. If the predicted and actual output agree, y * h(x) will always be +1 (either 1 * 1 or -1 * -1). If they disagree, y * h(x) will be negative.

Ultimately, a misclassification by a classifier with a positive alpha causes this training example to be given a larger weight, and a correct classification causes it to be given a smaller one.

Note that by including alpha in this term, we are also incorporating the classifier’s effectiveness into consideration when updating the weights. If a weak classifier misclassifies an input, we don’t take that as seriously as a strong classifier’s mistake.
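The update and normalization can be sketched in a few lines. The function name and the four-example toy data are assumptions for illustration; labels and predictions are restricted to -1 or +1 as in the text.

```python
import math


def update_weights(weights, alpha, labels, predictions):
    """Scale each weight by exp(-alpha * y_i * h(x_i)), then normalize to sum to 1."""
    scaled = [
        w * math.exp(-alpha * y * h)
        for w, y, h in zip(weights, labels, predictions)
    ]
    z = sum(scaled)  # normalization constant Z_t
    return [s / z for s in scaled]


# Toy round: four equally weighted examples, the second one misclassified.
weights = [0.25, 0.25, 0.25, 0.25]
labels = [1, 1, -1, -1]
predictions = [1, -1, -1, -1]
alpha = 0.5 * math.log(0.75 / 0.25)  # alpha for an error rate of 0.25

new_weights = update_weights(weights, alpha, labels, predictions)
print(new_weights)
```

In this toy round the single misclassified example ends up holding half of the total weight, while the three correctly classified examples share the other half.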

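In summary, -h*y evaluates to either 1 or -1, while alpha can be positive or negative and moves in the opposite direction to the error rate: the smaller the error rate, the larger alpha. If the error rate is below 1/2, then alpha > 0; in that case the weights of correctly classified samples decrease and the weights of misclassified samples increase. If the error rate is above 1/2, then alpha < 0; in that case the weights of correctly classified samples increase and the weights of misclassified samples decrease.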

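One way to think about it: a classifier with a low error rate is doing well on the samples it classified correctly, so we should pay more attention to the samples it got wrong. In other words, the current classifier discriminates the correctly classified samples well. A classifier with a high error rate does poorly even on the samples it happened to classify correctly; that is, its discrimination on those samples is weak. So rather than rushing to handle the misclassified samples, it should first get the current samples right.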
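Putting the pieces together, here is a compact end-to-end sketch on a one-dimensional toy problem. Two things differ from the description above and are choices of this sketch: the weak classifiers are threshold "stumps", and instead of resampling a training subset each round, the sample weights are used directly to score each stump by its weighted error. All names and the toy data are hypothetical.

```python
import math


def stump_predict(x, threshold, polarity):
    """Threshold classifier: `polarity` if x > threshold, otherwise -polarity."""
    return polarity if x > threshold else -polarity


def train_stump(xs, ys, weights):
    """Return (weighted_error, threshold, polarity) of the best stump."""
    best = None
    for threshold in sorted(set(xs)):
        for polarity in (1, -1):
            err = sum(
                w for w, x, y in zip(weights, xs, ys)
                if stump_predict(x, threshold, polarity) != y
            )
            if best is None or err < best[0]:
                best = (err, threshold, polarity)
    return best


def adaboost(xs, ys, rounds):
    n = len(xs)
    weights = [1.0 / n] * n  # round 1: equal weight on every example
    ensemble = []            # list of (alpha, threshold, polarity)
    for _ in range(rounds):
        err, threshold, polarity = train_stump(xs, ys, weights)
        err = min(max(err, 1e-10), 1 - 1e-10)  # keep the log finite
        alpha = 0.5 * math.log((1 - err) / err)
        ensemble.append((alpha, threshold, polarity))
        # Re-weight: misclassified examples go up, correct ones go down.
        scaled = [
            w * math.exp(-alpha * y * stump_predict(x, threshold, polarity))
            for w, x, y in zip(weights, xs, ys)
        ]
        z = sum(scaled)
        weights = [s / z for s in scaled]
    return ensemble


def predict(ensemble, x):
    total = sum(a * stump_predict(x, t, p) for a, t, p in ensemble)
    return 1 if total >= 0 else -1


# Toy data that no single threshold can separate.
xs = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
ys = [1, 1, 1, -1, -1, -1, 1, 1, 1, -1]

ensemble = adaboost(xs, ys, rounds=3)
print([predict(ensemble, x) for x in xs])
```

On this toy data the best single stump still misclassifies three of the ten points, yet three boosted rounds are enough for the combined classifier to label every training point correctly.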

Practical Application

One of the biggest applications of AdaBoost that I’ve encountered is the Viola-Jones face detector, which seems to be the standard algorithm for detecting faces in an image. The Viola-Jones face detector uses a “rejection cascade” consisting of many layers of classifiers. If at any layer the detection window is not recognized as a face, it’s rejected and we move on to the next window. The first classifier in the cascade is designed to discard as many negative windows as possible with minimal computational cost.

In this context, AdaBoost actually has two roles. Each layer of the cascade is a strong classifier built out of a combination of weaker classifiers, as discussed here. However, the principles of AdaBoost are also used to find the best features to use in each layer of the cascade.
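The early-exit behaviour of a rejection cascade can be sketched as below. This is not the Viola-Jones implementation: the layers here are hypothetical stand-in functions over a dictionary "window", whereas real layers would be boosted classifiers over image features.

```python
def cascade_accepts(window, layers):
    """Return True only if every layer accepts; stop at the first rejection."""
    for layer in layers:
        if not layer(window):
            return False  # rejected early; later, costlier layers never run
    return True


calls = []  # records which layers actually ran, for illustration only


def cheap_layer(window):
    calls.append("cheap")
    return window["brightness"] > 0.2  # made-up test


def expensive_layer(window):
    calls.append("expensive")
    return window["symmetry"] > 0.8  # made-up test


layers = [cheap_layer, expensive_layer]

# Hypothetical detection windows.
windows = [
    {"brightness": 0.1, "symmetry": 0.9},  # rejected by the first layer
    {"brightness": 0.5, "symmetry": 0.3},  # rejected by the second layer
    {"brightness": 0.6, "symmetry": 0.9},  # accepted by both
]

results = [cascade_accepts(w, layers) for w in windows]
print(results, calls)
```

Because most windows in an image are not faces, putting the cheapest layer first means most of them are discarded before any expensive computation happens.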

The rejection cascade concept seems to be an important one; in addition to the Viola-Jones face detector, I’ve seen it used in a couple of highly-cited person detector algorithms (here and here). If you’re interested in learning more about the rejection cascade technique, I recommend reading the original paper, which I think is very clear and well written. (Note that the topics of Haar wavelet features and integral images are not essential to the concept of rejection cascades).

The official AdaBoost algorithm flowchart:
