Running Spark from Eclipse (Standalone, YARN-Client)

Reposting is welcome. Please credit the source and place a clearly visible link to the original article on the page.

Original link: http://www.cnblogs.com/zdfjf/p/5175566.html

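We know there is a Hadoop plugin for Eclipse that lets you work with files on HDFS, create new MapReduce programs, and run them with Run On Hadoop. So can we also run a Spark program directly from Eclipse and submit it to the cluster in YARN-Client mode or in Standalone mode?

The answer is yes. Below I show how to run Spark's WordCount program from Eclipse. I use Hadoop 2.6.2 and Spark 1.5.2.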

1. Running in Standalone mode

1.1 Create an ordinary Java project. Here is the code:

/*
 * Licensed to the Apache Software Foundation (ASF) under one or more
 * contributor license agreements. See the NOTICE file distributed with
 * this work for additional information regarding copyright ownership.
 * The ASF licenses this file to You under the Apache License, Version 2.0
 * (the "License"); you may not use this file except in compliance with
 * the License. You may obtain a copy of the License at
 *
 * http://www.apache.org/licenses/LICENSE-2.0
 *
 * Unless required by applicable law or agreed to in writing, software
 * distributed under the License is distributed on an "AS IS" BASIS,
 * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
 * See the License for the specific language governing permissions and
 * limitations under the License.
 */

package com.frank.spark;

import scala.Tuple2;

import org.apache.spark.SparkConf;
import org.apache.spark.api.java.JavaPairRDD;
import org.apache.spark.api.java.JavaRDD;
import org.apache.spark.api.java.JavaSparkContext;
import org.apache.spark.api.java.function.FlatMapFunction;
import org.apache.spark.api.java.function.Function2;
import org.apache.spark.api.java.function.PairFunction;

import java.util.Arrays;
import java.util.List;
import java.util.regex.Pattern;

public final class JavaWordCount {
    private static final Pattern SPACE = Pattern.compile(" ");

    public static void main(String[] args) throws Exception {
        if (args.length < 1) {
            System.err.println("Usage: JavaWordCount <file>");
            System.exit(1);
        }

        SparkConf sparkConf = new SparkConf().setAppName("JavaWordCount");
        // Added: URL of the standalone master (IP of the master node, port 7077)
        sparkConf.setMaster("spark://192.168.0.1:7077");
        JavaSparkContext ctx = new JavaSparkContext(sparkConf);
        // Added: path on Windows of the jar built from this project
        ctx.addJar("C:\\Users\\Frank\\sparkwordcount.jar");
        // args[0] is the path of the file to count
        JavaRDD<String> lines = ctx.textFile(args[0], 1);

        // Split every line into words
        JavaRDD<String> words = lines.flatMap(new FlatMapFunction<String, String>() {
            @Override
            public Iterable<String> call(String s) {
                return Arrays.asList(SPACE.split(s));
            }
        });

        // Turn every word into a (word, 1) pair
        JavaPairRDD<String, Integer> ones = words.mapToPair(new PairFunction<String, String, Integer>() {
            @Override
            public Tuple2<String, Integer> call(String s) {
                return new Tuple2<String, Integer>(s, 1);
            }
        });

        // Sum the counts for each word
        JavaPairRDD<String, Integer> counts = ones.reduceByKey(new Function2<Integer, Integer, Integer>() {
            @Override
            public Integer call(Integer i1, Integer i2) {
                return i1 + i2;
            }
        });

        // Bring the result back to the driver and print it
        List<Tuple2<String, Integer>> output = counts.collect();
        for (Tuple2<?,?> tuple : output) {
            System.out.println(tuple._1() + ": " + tuple._2());
        }
        ctx.stop();
    }
}

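The code is copied directly from examples/src/main/java/org/apache/spark/examples/JavaWordCount.java in the unpacked Spark distribution. The only difference is two added statements. The sparkConf.setMaster(...) call sets the master: the IP is that of the Hadoop master node and the port is 7077. The ctx.addJar(...) call gives the path on Windows where the jar packaged from this project is placed.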
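Before submitting to the cluster, it can help to know what output to expect for a small input. The following is only a sketch in plain Java, without Spark, that mirrors the flatMap, mapToPair and reduceByKey steps of the program above on an in-memory list. The class name LocalWordCount and the sample lines are my own assumptions and are not part of the Spark example.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.regex.Pattern;

// Hypothetical helper, not part of the Spark example: a plain-Java word count
// that mirrors the flatMap -> mapToPair -> reduceByKey steps on a local list.
final class LocalWordCount {
    private static final Pattern SPACE = Pattern.compile(" ");

    static Map<String, Integer> count(List<String> lines) {
        Map<String, Integer> counts = new LinkedHashMap<String, Integer>();
        for (String line : lines) {
            // flatMap step: split each line on a single space
            for (String word : SPACE.split(line)) {
                // mapToPair + reduceByKey steps: add 1 to the running total of the word
                Integer previous = counts.get(word);
                counts.put(word, previous == null ? 1 : previous + 1);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        // Sample input chosen for illustration only
        List<String> lines = Arrays.asList("a b a", "b c");
        for (Map.Entry<String, Integer> entry : count(lines).entrySet()) {
            System.out.println(entry.getKey() + ": " + entry.getValue());
        }
    }
}
```

The Spark job should report the same counts for the same text, although collect() may return the words in a different order.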

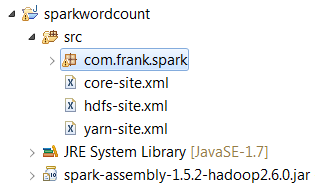

1.2 Add the Spark dependency jar spark-assembly-1.5.2-hadoop2.6.0.jar to the project. You can find it in the lib directory of the unpacked Spark distribution.

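1.3 Configure the HDFS path of the file to count

Go to Run As -> Run Configurations.

Click Arguments. The program expects the path of the file to count as its first argument (it is read by ctx.textFile(args[0], 1)), so configure it here. The file must be on HDFS, so the IP in the path is also the IP of your Hadoop master machine.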
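A local Windows path entered by mistake only fails after the job has been submitted, so a quick check of the argument can save a round trip. Below is a minimal sketch, assuming you want to insist on a full hdfs:// URI with a host. The helper name HdfsPathCheck and the file name input.txt are hypothetical; the host and port are the ones used elsewhere in this article.

```java
import java.net.URI;
import java.net.URISyntaxException;

// Hypothetical helper: checks that a program argument looks like a full HDFS URI.
final class HdfsPathCheck {
    static boolean isHdfsUri(String arg) {
        if (arg == null) {
            return false;
        }
        try {
            URI uri = new URI(arg);
            // Require the hdfs scheme, an explicit host and a non-empty path
            return "hdfs".equalsIgnoreCase(uri.getScheme())
                    && uri.getHost() != null
                    && uri.getPath() != null
                    && !uri.getPath().isEmpty();
        } catch (URISyntaxException e) {
            // For example a Windows path with backslashes
            return false;
        }
    }

    public static void main(String[] args) {
        // Placeholder file name for illustration only; use your own file
        String example = "hdfs://192.168.0.1:9000/user/hadoop/input.txt";
        System.out.println(example + " -> " + isHdfsUri(example));
    }
}
```

The check is deliberately strict: it also rejects the host-less form hdfs:///some/path, which only resolves when a default file system is configured.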

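1.4 Now run the program. The word counts are shown in the Eclipse console. You can also see the application you just submitted on the Spark web UI.

2. Running in YARN-Client mode

2.1 Code first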

/*
 * Licensed to the Apache Software Foundation (ASF) under one or more
 * contributor license agreements. See the NOTICE file distributed with
 * this work for additional information regarding copyright ownership.
 * The ASF licenses this file to You under the Apache License, Version 2.0
 * (the "License"); you may not use this file except in compliance with
 * the License. You may obtain a copy of the License at
 *
 * http://www.apache.org/licenses/LICENSE-2.0
 *
 * Unless required by applicable law or agreed to in writing, software
 * distributed under the License is distributed on an "AS IS" BASIS,
 * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
 * See the License for the specific language governing permissions and
 * limitations under the License.
 */

package com.frank.spark;

import scala.Tuple2;

import org.apache.spark.SparkConf;
import org.apache.spark.api.java.JavaPairRDD;
import org.apache.spark.api.java.JavaRDD;
import org.apache.spark.api.java.JavaSparkContext;
import org.apache.spark.api.java.function.FlatMapFunction;
import org.apache.spark.api.java.function.Function2;
import org.apache.spark.api.java.function.PairFunction;

import java.util.Arrays;
import java.util.List;
import java.util.regex.Pattern;

public final class JavaWordCount {
    private static final Pattern SPACE = Pattern.compile(" ");

    public static void main(String[] args) throws Exception {
        // Act as the cluster user "hadoop" instead of the local Windows user
        System.setProperty("HADOOP_USER_NAME", "hadoop");

        if (args.length < 1) {
            System.err.println("Usage: JavaWordCount <file>");
            System.exit(1);
        }

        SparkConf sparkConf = new SparkConf().setAppName("JavaWordCountByFrank01");
        // Run in yarn-client mode
        sparkConf.setMaster("yarn-client");
        // File to be placed in the working directory of each executor
        sparkConf.set("spark.yarn.dist.files", "C:\\software\\workspace\\sparkwordcount\\src\\yarn-site.xml");
        // Spark assembly jar already uploaded to HDFS, so it is not uploaded on every run
        sparkConf.set("spark.yarn.jar", "hdfs://192.168.0.1:9000/user/hadoop/spark-assembly-1.5.2-hadoop2.6.0.jar");

        JavaSparkContext ctx = new JavaSparkContext(sparkConf);
        // Path on Windows of the jar built from this project
        ctx.addJar("C:\\Users\\Frank\\sparkwordcount.jar");
        // args[0] is the path of the file to count
        JavaRDD<String> lines = ctx.textFile(args[0], 1);

        // Split every line into words
        JavaRDD<String> words = lines.flatMap(new FlatMapFunction<String, String>() {
            @Override
            public Iterable<String> call(String s) {
                return Arrays.asList(SPACE.split(s));
            }
        });

        // Turn every word into a (word, 1) pair
        JavaPairRDD<String, Integer> ones = words.mapToPair(new PairFunction<String, String, Integer>() {
            @Override
            public Tuple2<String, Integer> call(String s) {
                return new Tuple2<String, Integer>(s, 1);
            }
        });

        // Sum the counts for each word
        JavaPairRDD<String, Integer> counts = ones.reduceByKey(new Function2<Integer, Integer, Integer>() {
            @Override
            public Integer call(Integer i1, Integer i2) {
                return i1 + i2;
            }
        });

        // Bring the result back to the driver and print it
        List<Tuple2<String, Integer>> output = counts.collect();
        for (Tuple2<?,?> tuple : output) {
            System.out.println(tuple._1() + ": " + tuple._2());
        }
        ctx.stop();
    }
}

2.2 Explanation of the program

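System.setProperty("HADOOP_USER_NAME", "hadoop"), the first statement in main: set this if your Windows user name differs from the user name on the cluster. For example, my Windows user name is Frank, while the user name on the cluster where Hadoop is installed is hadoop, so I set it as shown.

sparkConf.setMaster("yarn-client"): this selects yarn-client mode.

sparkConf.set("spark.yarn.jar", ...): when running in this mode, every run otherwise uploads spark-assembly-1.5.2-hadoop2.6.0.jar to HDFS, into the folder named after the application-id generated for that run, which takes several minutes. To avoid this, upload spark-assembly-1.5.2-hadoop2.6.0.jar to a directory on HDFS once and point spark.yarn.jar at it. The jar then no longer has to be uploaded from Windows to HDFS on every run. See https://spark.apache.org/docs/1.5.2/running-on-yarn.html, which describes the property as follows:

spark.yarn.jar: The location of the Spark jar file, in case overriding the default location is desired. By default, Spark on YARN will use a Spark jar installed locally, but the Spark jar can also be in a world-readable location on HDFS. This allows YARN to cache it on nodes so that it doesn't need to be distributed each time an application runs. To point to a jar on HDFS, for example, set this configuration to "hdfs:///some/path".

ctx.addJar(...): the path on Windows where the jar packaged from this project is placed.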
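The user name override is only needed when the two names differ, so it can be made conditional. The following is a rough sketch of that idea, not something taken from the Spark or Hadoop examples. The class HadoopUserSetup and its methods are hypothetical names. As in the listing above, where the property is set as the first statement in main, it should run before the JavaSparkContext is created.

```java
// Hypothetical helper: sets HADOOP_USER_NAME only when the local user differs
// from the user name on the cluster.
final class HadoopUserSetup {
    /** Returns true when the local OS user differs from the cluster user. */
    static boolean needsOverride(String localUser, String clusterUser) {
        return clusterUser != null && !clusterUser.equals(localUser);
    }

    /** Sets the HADOOP_USER_NAME system property only when it is needed. */
    static void apply(String clusterUser) {
        // user.name is the standard Java system property for the local OS user
        String localUser = System.getProperty("user.name");
        if (needsOverride(localUser, clusterUser)) {
            System.setProperty("HADOOP_USER_NAME", clusterUser);
        }
    }

    public static void main(String[] args) {
        // "hadoop" is the cluster user name used in this article
        apply("hadoop");
        System.out.println("HADOOP_USER_NAME=" + System.getProperty("HADOOP_USER_NAME"));
    }
}
```

With my setup (Windows user Frank, cluster user hadoop) this ends up setting the property exactly as the hard-coded call does.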

2.3 Program configuration

Put the three configuration files under src. Copy them from the Linux machine that runs Hadoop.
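Eclipse copies non-Java files from src to the output folder, so files placed there end up on the classpath of the running program. If a run behaves as if the configuration were missing, a small check like the one below can confirm whether a file is actually visible. This is only a sketch: the class ConfigOnClasspath is a hypothetical name, and only yarn-site.xml (the file referenced in the code above) is listed, so add the other file names yourself.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper: reports which configuration files cannot be found on the classpath.
final class ConfigOnClasspath {
    static List<String> missing(List<String> names) {
        ClassLoader loader = ConfigOnClasspath.class.getClassLoader();
        List<String> notFound = new ArrayList<String>();
        for (String name : names) {
            // getResource returns null when the file is not on the classpath
            if (loader.getResource(name) == null) {
                notFound.add(name);
            }
        }
        return notFound;
    }

    public static void main(String[] args) {
        // Only yarn-site.xml is named in this article; extend the list with your other files
        List<String> expected = Arrays.asList("yarn-site.xml");
        System.out.println("Missing from classpath: " + missing(expected));
    }
}
```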

2.4 Configure the HDFS path of the file to count

See 1.3. As before, the result is shown in the Eclipse console.
