Spark教程——(4)Spark-shell调用SQLContext(HiveContext)

启动Spark-shell:

[root@node1 ~]# spark-shell

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel).

Welcome to

____ __

/ __/__ ___ _____/ /__

_\ \/ _ \/ _ `/ __/ '_/

/___/ .__/\_,_/_/ /_/\_\ version 1.6.0

/_/

Using Scala version 2.10.5 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_131)

Type in expressions to have them evaluated.

Type :help for more information.

Spark context available as sc (master = yarn-client, app id = application_1554951897984_0111).

SQL context available as sqlContext.

scala> sc

res0: org.apache.spark.SparkContext = org.apache.spark.SparkContext@272485a6

scala> sqlContext

res1: org.apache.spark.sql.SQLContext = org.apache.spark.sql.hive.HiveContext@11c95035

上下文已经包含 sc 和 sqlContext:

Spark context available as sc (master = yarn-client, app id = application_1554951897984_0111). SQL context available as sqlContext.

本地创建people07041119.json

{"name":"zhangsan","job number":"101","age":33,"gender":"male","deptno":1,"sal":18000}

{"name":"lisi","job number":"102","age":30,"gender":"male","deptno":2,"sal":20000}

{"name":"wangwu","job number":"103","age":35,"gender":"female","deptno":3,"sal":50000}

{"name":"zhaoliu","job number":"104","age":31,"gender":"male","deptno":1,"sal":28000}

{"name":"tianqi","job number":"105","age":36,"gender":"female","deptno":3,"sal":90000}

本地创建dept.json

{"name":"development","deptno":1}

{"name":"personnel","deptno":2}

{"name":"testing","deptno":3}

将本地文件上传到HDFS上:

bash-4.2$ hadoop dfs -put /home/**/data/people07041119.json /user/** bash-4.2$ hadoop dfs -put /home/**/data/dept.json /user/**

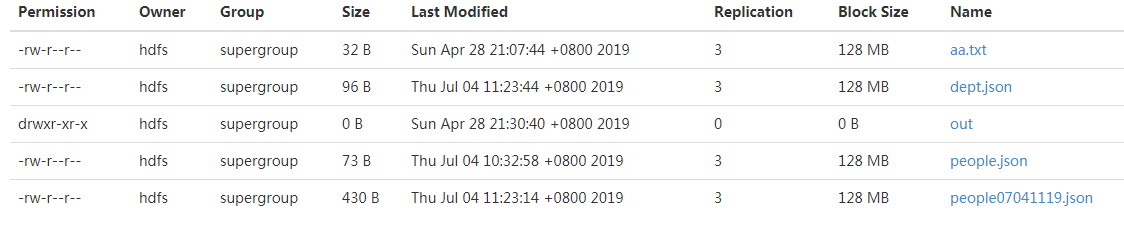

结果如下:

执行Scala脚本,加载文件:

scala> val people=sqlContext.jsonFile("/user/**/people07041119.json")

warning: there were deprecation warning(s); re-run with -deprecation for details

people: org.apache.spark.sql.DataFrame = [age: bigint, deptno: bigint, gender: string, job number: string, name: string, sal: bigint]

scala> val dept=sqlContext.jsonFile("/user/**/dept.json")

warning: there were deprecation warning(s); re-run with -deprecation for details

people: org.apache.spark.sql.DataFrame = [deptno: bigint, name: string]

执行Scala脚本,查看文件内容:

scala> people.show +---+------+------+----------+--------+-----+ |age|deptno|gender|job number| name| sal| +---+------+------+----------+--------+-----+ | | | male| |zhangsan|| | | | male| | lisi|| | | |female| | wangwu|| | | | male| | zhaoliu|| | | |female| | tianqi|| +---+------+------+----------+--------+-----+

显示前三条记录:

scala> people.show() +---+------+------+----------+--------+-----+ |age|deptno|gender|job number| name| sal| +---+------+------+----------+--------+-----+ | | | male| |zhangsan|| | | | male| | lisi|| | | |female| | wangwu|| +---+------+------+----------+--------+-----+ only showing top rows

查看列信息:

scala> people.columns res5: Array[String] = Array(age, deptno, gender, job number, name, sal)

添加过滤条件:

scala> people.filter("gender='male'").count

res6: Long =

参考:

https://blog.csdn.net/xiaolong_4_2/article/details/80886371

Spark教程——(4)Spark-shell调用SQLContext(HiveContext)的更多相关文章

- spark教程(二)-shell操作

spark 支持 shell 操作 shell 主要用于调试,所以简单介绍用法即可 支持多种语言的 shell 包括 scala shell.python shell.R shell.SQL shel ...

- spark教程(八)-SparkSession

spark 有三大引擎,spark core.sparkSQL.sparkStreaming, spark core 的关键抽象是 SparkContext.RDD: SparkSQL 的关键抽象是 ...

- spark教程(11)-sparkSQL 数据抽象

数据抽象 sparkSQL 的数据抽象是 DataFrame,df 相当于表格,它的每一行是一条信息,形成了一个 Row Row 它是 sparkSQL 的一个抽象,用于表示一行数据,从表现形式上看, ...

- spark教程(四)-SparkContext 和 RDD 算子

SparkContext SparkContext 是在 spark 库中定义的一个类,作为 spark 库的入口点: 它表示连接到 spark,在进行 spark 操作之前必须先创建一个 Spark ...

- Spark教程——(11)Spark程序local模式执行、cluster模式执行以及Oozie/Hue执行的设置方式

本地执行Spark SQL程序: package com.fc //import common.util.{phoenixConnectMode, timeUtil} import org.apach ...

- spark教程

某大神总结的spark教程, 地址 http://litaotao.github.io/introduction-to-spark?s=inner

- spark教程(七)-文件读取案例

sparkSession 读取 csv 1. 利用 sparkSession 作为 spark 切入点 2. 读取 单个 csv 和 多个 csv from pyspark.sql import Sp ...

- spark教程(一)-集群搭建

spark 简介 建议先阅读我的博客 大数据基础架构 spark 一个通用的计算引擎,专门为大规模数据处理而设计,与 mapreduce 类似,不同的是,mapreduce 把中间结果 写入 hdfs ...

- Spark教程——(10)Spark SQL读取Phoenix数据本地执行计算

添加配置文件 phoenixConnectMode.scala : package statistics.benefits import org.apache.hadoop.conf.Configur ...

- 一、spark入门之spark shell:wordcount

1.安装完spark,进入spark中bin目录: bin/spark-shell scala> val textFile = sc.textFile("/Users/admin/ ...

随机推荐

- Ubuntu python3 与 python2 的 pip调用

ubuntu 是默认装有pytthon2.x 与 python3.x 共存的 通常终端里 python 表示 python2 版本 python3 表示 python3 版本 (如果你没更改软链接设置 ...

- python opencv:代码执行时间计算

t1 = cv2.getTickCount() # ...... t2 = cv2.getTickCount() # 计算花费的时间:毫秒 time = (t2-t1) / cv2.getTickFr ...

- intellij idea设置(字体大小、背景)

1. 配置信息说明 Intellij Idea: 2017.2.5 2.具体设置 <1> 设置主题背景.字体大小 File---->Settings----->Appearan ...

- win10上的程序兼容win7、xp等

- 树莓派4B踩坑指南 - (6)安装常用软件及相关设置

安装软件 安装LibreOffice中文包 sudo apt-get install libreoffice-l10n-zh-cn sudo reboot 安装codeblocks并汉化: sudo ...

- 小白学 Python 爬虫:Selenium 获取某大型电商网站商品信息

目标 先介绍下我们本篇文章的目标,如图: 本篇文章计划获取商品的一些基本信息,如名称.商店.价格.是否自营.图片路径等等. 准备 首先要确认自己本地已经安装好了 Selenium 包括 Chrome ...

- 变量的注释(python3.6以后的功能)

有时候导入模块,然后使用这个变量的时候,却没点出后面的智能提示.用以下方法可以解决:https://www.cnblogs.com/xieqiankun/p/type_hints_in_python3 ...

- 前端面试:js数据类型

js数据类型是js中的基础知识点,也是前端面试中一定会被考察的内容.本文旨在知识的梳理和总结,希望读者通过阅读本文,能够对这一块知识有更清晰的认识.文中如果出现错误,请在评论区指出,谢谢. js数据类 ...

- Servlet 学习(八)

Filter 1.功能 Java Servlet 2.3 中新增加的功能,主要作用是对Servlet 容器的请求和响应进行检查和修改 Filter 本身并不生成请求和响应对象,它只提供过滤作用 在Se ...

- HashMap源码__resize

final Node<K,V>[] resize() { //创建一个Node数组用于存放table中的元素, Node<K,V>[] oldTab = table; //获取 ...