并发框架 LMAX Disruptor

Introduction

The best way to understand what the Disruptor is, is to compare it to something well understood and quite similar in purpose. In the case of the Disruptor this would be Java's BlockingQueue. Like a queue the purpose of the Disruptor is to move data (e.g. messages or events) between threads within the same process. However there are some key features that the Disruptor provides that distinguish it from a queue. They are:

- Multicast events to consumers, with consumer dependency graph.

- Pre-allocate memory for events.

- Optionally lock-free.

Core Concepts

Before we can understand how the Disruptor works, it is worthwhile defining a number of terms that will be used throughout the documentation and the code. For those with a DDD bent, think of this as the ubiquitous language of the Disruptor domain.

- Ring Buffer: The Ring Buffer is often considered the main aspect of the Disruptor, however from 3.0 onwards the Ring Buffer is only responsible for the storing and updating of the data (Events) that move through the Disruptor. And for some advanced use cases can be completely replaced by the user.

- Sequence: The Disruptor uses Sequences as a means to identify where a particular component is up to. Each consumer (EventProcessor) maintains a Sequence as does the Disruptor itself. The majority of the concurrent code relies on the the movement of these Sequence values, hence the Sequence supports many of the current features of an AtomicLong. In fact the only real difference between the 2 is that the Sequence contains additional functionality to prevent false sharing between Sequences and other values.

- Sequencer: The Sequencer is the real core of the Disruptor. The 2 implementations (single producer, multi producer) of this interface implement all of the concurrent algorithms use for fast, correct passing of data between producers and consumers.

- Sequence Barrier: The Sequence Barrier is produced by the Sequencer and contains references to the main published Sequence from the Sequencer and the Sequences of any dependent consumer. It contains the logic to determine if there are any events available for the consumer to process.

- Wait Strategy: The Wait Strategy determines how a consumer will wait for events to be placed into the Disruptor by a producer. More details are available in the section about being optionally lock-free.

- Event: The unit of data passed from producer to consumer. There is no specific code representation of the Event as it defined entirely by the user.

- EventProcessor: The main event loop for handling events from the Disruptor and has ownership of consumer's Sequence. There is a single representation called BatchEventProcessor that contains an efficient implementation of the event loop and will call back onto a used supplied implementation of the EventHandler interface.

- EventHandler: An interface that is implemented by the user and represents a consumer for the Disruptor.

- Producer: This is the user code that calls the Disruptor to enqueue Events. This concept also has no representation in the code.

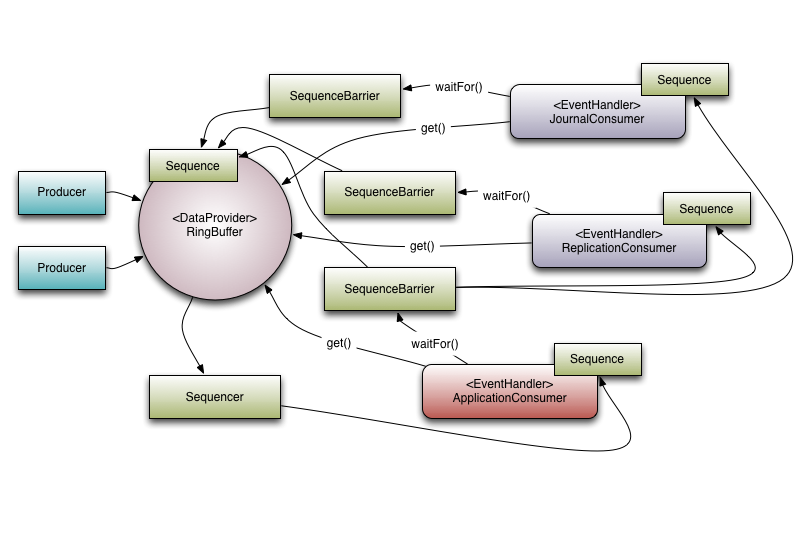

To put these elements into context, below is an example of how LMAX uses the Disruptor within its high performance core services, e.g. the exchange.

Figure 1. Disruptor with a set of dependent consumers.

Multicast Events

This is the biggest behavioural difference between queues and the Disruptor. When you have multiple consumers listening on the same Disruptor all events are published to all consumers in contrast to a queue where a single event will only be sent to a single consumer. The behaviour of the Disruptor is intended to be used in cases where you need to independent multiple parallel operations on the same data. The canonical example from LMAX is where we have three operations, journalling (writing the input data to a persistent journal file), replication (sending the input data to another machine to ensure that there is a remote copy of the data), and business logic (the real processing work). The Executor-style event processing, where scale is found by processing different events in parallel at the same is also possible using the WorkerPool. Note that is bolted on top of the existing Disruptor classes and is not treated with the same first class support, hence it may not be the most efficient way to achieve that particular goal.

Looking at Figure 1. is possible to see that there are 3 Event Handlers listening (JournalConsumer, ReplicationConsumer and ApplicationConsumer) to the Disruptor, each of these Event Handlers will receive all of the messages available in the Disruptor (in the same order). This allow for work for each of these consumers to operate in parallel.

Consumer Dependency Graph

To support real world applications of the parallel processing behaviour it was necessary to support co-ordination between the consumers. Referring back to the example described above, it necessary to prevent the business logic consumer from making progress until the journalling and replication consumers have completed their tasks. We call this concept gating, or more correctly the feature that is a super-set of this behaviour is called gating. Gating happens in two places. Firstly we need to ensure that the producers do not overrun consumers. This is handled by adding the relevant consumers to the Disruptor by calling RingBuffer.addGatingConsumers(). Secondly, the case referred to previously is implemented by constructing a SequenceBarrier containing Sequences from the components that must complete their processing first.

Referring to Figure 1. there are 3 consumers listening for Events from the Ring Buffer. There is a dependency graph in this example. The ApplicationConsumer depends on the JournalConsumer and ReplicationConsumer. This means that the JournalConsumer and ReplicationConsumer can run freely in parallel with each other. The dependency relationship can be seen by the connection from the ApplicationConsumer's SequenceBarrier to the Sequences of the JournalConsumer and ReplicationConsumer. It is also worth noting the relationship that the Sequencer has with the downstream consumers. One of its roles is to ensure that publication does not wrap the Ring Buffer. To do this none of the downstream consumer may have a Sequence that is lower than the Ring Buffer's Sequence less the size of the Ring Buffer. However using the graph of dependencies an interesting optimisation can be made. Because the ApplicationConsumers Sequence is guaranteed to be less than or equal to JournalConsumer and ReplicationConsumer (that is what that dependency relationship ensures) the Sequencer need only look at the Sequence of the ApplicationConsumer. In a more general sense the Sequencer only needs to be aware of the Sequences of the consumers that are the leaf nodes in the dependency tree.

Event Preallocation

One of the goals of the Disruptor was to enable use within a low latency environment. Within low-latency systems it is necessary to reduce or remove memory allocations. In Java-based system the purpose is to reduce the number stalls due to garbage collection (in low-latency C/C++ systems, heavy memory allocation is also problematic due to the contention that be placed on the memory allocator).

To support this the user is able to preallocate the storage required for the events within the Disruptor. During construction and EventFactory is supplied by the user and will be called for each entry in the Disruptor's Ring Buffer. When publishing new data to the Disruptor the API will allow the user to get hold of the constructed object so that they can call methods or update fields on that store object. The Disruptor provides guarantees that these operations will be concurrency-safe as long as they are implemented correctly.

Optionally Lock-free

Another key implementation detail pushed by the desire for low-latency is the extensive use of lock-free algorithms to implement the Disruptor. All of the memory visibility and correctness guarantees are implemented using memory barriers and/or compare-and-swap operations. There is only one use-case where a actual lock is required and that is within the BlockingWaitStrategy. This is done solely for the purpose of using a Condition so that a consuming thread can be parked while waiting for new events to arrive. Many low-latency systems will use a busy-wait to avoid the jitter that can be incurred by using a Condition, however in number of system busy-wait operations can lead to significant degradation in performance, especially where the CPU resources are heavily constrained. E.g. web servers in virtualised-environments.

并发框架 LMAX Disruptor的更多相关文章

- [翻译]高并发框架 LMAX Disruptor 介绍

原文地址:Concurrency with LMAX Disruptor – An Introduction 译者序 前些天在并发编程网,看到了关于 Disruptor 的介绍.感觉此框架惊为天人,值 ...

- 架构师养成记--15.Disruptor并发框架

一.概述 disruptor对于处理并发任务很擅长,曾有人测过,一个线程里1s内可以处理六百万个订单,性能相当感人. 这个框架的结构大概是:数据生产端 --> 缓存 --> 消费端 缓存中 ...

- Disruptor并发框架(一)简介&上手demo

框架简介 Martin Fowler在自己网站上写了一篇LMAX架构的文章,在文章中他介绍了LMAX是一种新型零售金融交易平台,它能够以很低的延迟产生大量交易.这个系统是建立在JVM平台上,其核心是一 ...

- 并发框架Disruptor译文

Martin Fowler在自己网站上写了一篇LMAX架构的文章,在文章中他介绍了LMAX是一种新型零售金融交易平台,它能够以很低的延迟产生大量交易.这个系统是建立在JVM平台上,其核心是一个业务逻辑 ...

- Disruptor并发框架简介

Martin Fowler在自己网站上写一篇LMAX架构的文章,在文章中他介绍了LMAX是一种新型零售金额交易平台,它能够以很低的延迟产生大量交易.这个系统是建立在JVM平台上,其核心是一个业务逻辑处 ...

- 无锁并发框架Disruptor学习入门

刚刚听说disruptor,大概理一下,只为方便自己理解,文末是一些自己认为比较好的博文,如果有需要的同学可以参考. 本文目标:快速了解Disruptor是什么,主要概念,怎么用 1.Disrupto ...

- 基于Disruptor并发框架的分类任务并发

并发的场景 最近在编码中遇到的场景,我的程序需要处理不同类型的任务,场景要求如下: 1.同类任务串行.不同类任务并发. 2.高吞吐量. 3.任务类型动态增减. 思路 思路一: 最直接的想法,每有一个任 ...

- 并发编程之Disruptor并发框架

一.什么是Disruptor Martin Fowler在自己网站上写了一篇LMAX架构的文章,在文章中他介绍了LMAX是一种新型零售金融交易平台,它能够以很低的延迟产生大量交易.这个系统是建立在JV ...

- Disruptor 并发框架

什么是Disruptor Martin Fowler在自己网站上写了一篇LMAX架构的文章,在文章中他介绍了LMAX是一种新型零售金融交易平台,它能够以很低的延迟产生大量交易.这个系统是建立在JVM平 ...

- Disruptor 高性能并发框架二次封装

Disruptor是一款java高性能无锁并发处理框架.和JDK中的BlockingQueue有相似处,但是它的处理速度非常快!!!号称“一个线程一秒钟可以处理600W个订单”(反正渣渣电脑是没体会到 ...

随机推荐

- virtualbox中linux设置NAT和Host-Only上网(实现双机互通同时可上外网)

关于虚拟机中几种网络连接方式请参考其他教程. 平常,我们安装好虚机,用桥接方式也就够了.毕竟它能上内网和外网. 但是有个问题,如果你的网络环境发生变化,虚机的Ip也会随之改变(桥接的Ip和主机ip必须 ...

- 升级 vcpkg 遇到的一些坑

项目上有个需求要用到 wil 库,于是打开 cmd 输入: vcpkg install wil:x86-windows-static 等了很久,一直卡在配置命令 连续试了好几遍,还是不行,安装其他的静 ...

- 推导式,集合推导式,生成器表达式及生成器函数day13

1.推导式 用一行循环判断遍历处一系列数据的方式 推导式在使用时,只能用for循环和判断,而且判断只能是单项判断 基本语法: lst = [i for i in range(1,51)] print( ...

- ContentType组件表使用

https://www.shuzhiduo.com/A/qVdepN2r5P/

- 面试官:说说SSO单点登录的实现原理?

单点登录(Single Sign-On, SSO)是一种让用户在多个应用系统之间只需登录一次就可以访问所有授权系统的机制.单点登录主要目的是为了提高用户体验并简化安全管理. 举个例子,您在一个大型企业 ...

- Codeforces Round 734 (Div. 3)B2. Wonderful Coloring - 2(贪心构造实现)

思路: 分类讨论: 当一个数字出现的次数大于等于k,那么最多有k个能被染色, 当一个数字出现的次数小于k,南那么这些数字都可能被染色 还有一个条件就是需要满足每个颜色的数字个数一样多,这里记出现次数小 ...

- linux压缩文件并排除指定目录

今天要在linux上打包一个项目另作他用,但是项目图片都是放本地服务器的,整个项目打包好后有2G多下载十分费时.项目中的图片我们可以不要,所以压缩的时候要排除图片目录. 具体命令如下: // 参数说明 ...

- SHA算法:数据完整性的守护者

一.SHA算法的起源与演进 SHA(Secure Hash Algorithm)算法是一种哈希算法,最初由美国国家安全局(NSA)设计并由国家标准技术研究所(NIST)发布.SHA算法的目的是生成数据 ...

- PostgreSql一个月学习计划

1.背景 国内使用数据库最多的莫过于mysql,大部分程序员第一次接触数据库就是mysql.(毕竟免费的 = =!)但近年来,有一些黑马出现(如下图),其中表现最突出的莫过于PostgreSQL.特规 ...

- Ubuntu 与Windows 之间搭建共享文件夹

工作中经常需要搭建Linux环境用于测试以及其他开发需求,办公电脑通常是Windows 系统,为便于让文件在两个系统之间传输,可以采取共享文件的方式实现: 1.安装samba 服务: sudo apt ...