Notes on Probabilistic Latent Semantic Analysis (PLSA)

转自:http://www.hongliangjie.com/2010/01/04/notes-on-probabilistic-latent-semantic-analysis-plsa/

I highly recommend you read the more detailed version of http://arxiv.org/abs/1212.3900

Formulation of PLSA

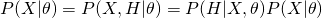

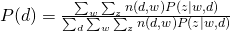

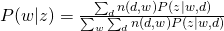

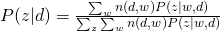

There are two ways to formulate PLSA. They are equivalent but may lead to different inference process.

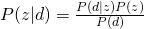

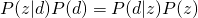

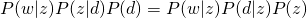

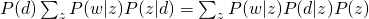

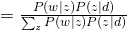

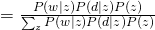

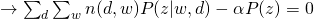

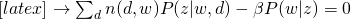

Let’s see why these two equations are equivalent by using Bayes rule.

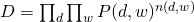

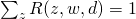

The whole data set is generated as (we assume that all words are generated independently):

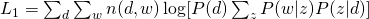

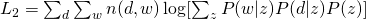

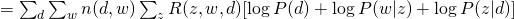

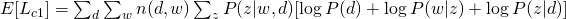

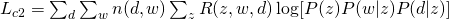

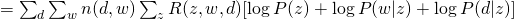

The Log-likelihood of the whole data set for (1) and (2) are:

EM

For  or

or  , the optimization is hard due to the log of sum. Therefore, an algorithm called Expectation-Maximization is usually employed. Before we introduce anything about EM, please note that EM is only guarantee to find a local optimum (although it may be a global one).

, the optimization is hard due to the log of sum. Therefore, an algorithm called Expectation-Maximization is usually employed. Before we introduce anything about EM, please note that EM is only guarantee to find a local optimum (although it may be a global one).

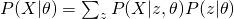

First, we see how EM works in general. As we shown for PLSA, we usually want to estimate the likelihood of data, namely  , given the paramter

, given the paramter  . The easiest way is to obtain a maximum likelihood estimator by maximizing

. The easiest way is to obtain a maximum likelihood estimator by maximizing  . However, sometimes, we also want to include some hidden variables which are usually useful for our task. Therefore, what we really want to maximize is

. However, sometimes, we also want to include some hidden variables which are usually useful for our task. Therefore, what we really want to maximize is  , the complete likelihood. Now, our attention becomes to this complete likelihood. Again, directly maximizing this likelihood is usually difficult. What we would like to show here is to obtain a lower bound of the likelihood and maximize this lower bound.

, the complete likelihood. Now, our attention becomes to this complete likelihood. Again, directly maximizing this likelihood is usually difficult. What we would like to show here is to obtain a lower bound of the likelihood and maximize this lower bound.

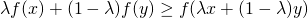

We need Jensen’s Inequality to help us obtain this lower bound. For any convex function  , Jensen’s Inequality states that :

, Jensen’s Inequality states that :

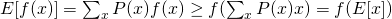

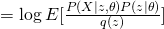

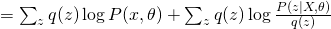

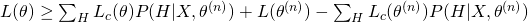

Thus, it is not difficult to show that :

and for concave functions (like logarithm), it is :

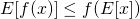

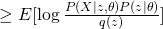

Back to our complete likelihood, we can obtain the following conclusion by using concave version of Jensen’s Inequality :

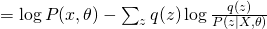

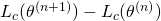

Therefore, we obtained a lower bound of complete likelihood and we want to maximize it as tight as possible. EM is an algorithm that maximize this lower bound through a iterative fashion. Usually, EM first would fix current  value and maximize

value and maximize  and then use the new

and then use the new  value to obtain a new guess on

value to obtain a new guess on  , which is essentially a two stage maximization process. The first step can be shown as follows:

, which is essentially a two stage maximization process. The first step can be shown as follows:

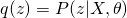

The first term is the same for all  . Therefore, in order to maximize the whole equation, we need to minimize KL divergence between

. Therefore, in order to maximize the whole equation, we need to minimize KL divergence between  and

and  , which eventually leads to the optimum solution of

, which eventually leads to the optimum solution of  . So, usually for E-step, we use current guess of

. So, usually for E-step, we use current guess of  to calculate the posterior distribution of hidden variable as the new update score. For M-step, it is problem-dependent. We will see how to do that in later discussions.

to calculate the posterior distribution of hidden variable as the new update score. For M-step, it is problem-dependent. We will see how to do that in later discussions.

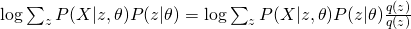

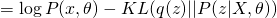

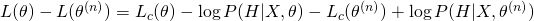

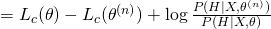

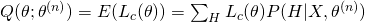

Another explanation of EM is in terms of optimizing a so-called Q function. We devise the data generation process as  . Therefore, the complete likelihood is modified as:

. Therefore, the complete likelihood is modified as:

Think about how to maximize  . Instead of directly maximizing it, we can iteratively maximize

. Instead of directly maximizing it, we can iteratively maximize  as :

as :

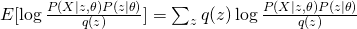

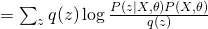

Now take the expectation of this equation, we have:

The last term is always non-negative since it can be recognized as the KL-divergence of  and

and  . Therefore, we obtain a lower bound of Likelihood :

. Therefore, we obtain a lower bound of Likelihood :

The last two terms can be treated as constants as they do not contain the variable  , so the lower bound is essentially the first term, which is also sometimes called as “Q-function”.

, so the lower bound is essentially the first term, which is also sometimes called as “Q-function”.

EM of Formulation 1

In case of Formulation 1, let us introduce hidden variables  to indicate which hidden topic

to indicate which hidden topic  is selected to generated

is selected to generated  in

in  (

( ). Therefore, the complete likelihood can be formulated as :

). Therefore, the complete likelihood can be formulated as :

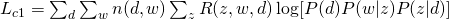

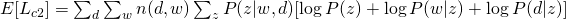

From the equation above, we can write our Q-function for the complete likelihood  :

:

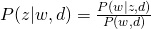

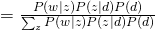

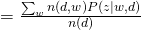

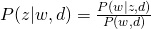

For E-step, simply using Bayes Rule, we can obtain:

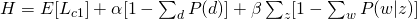

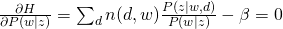

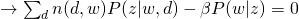

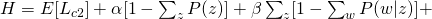

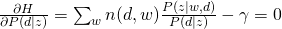

For M-step, we need to maximize Q-function, which needs to be incorporated with other constraints:

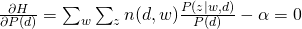

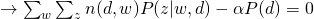

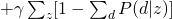

and take all derivatives:

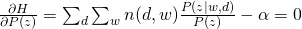

Therefore, we can easily obtain:

EM of Formulation 2

Use similar method to introduce hidden variables to indicate which  is selected to generated

is selected to generated  and

and  and we can have the following complete likelihood :

and we can have the following complete likelihood :

Therefore, the Q-function  would be :

would be :

For E-step, again, simply using Bayes Rule, we can obtain:

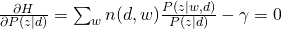

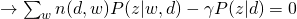

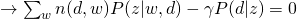

For M-step, we maximize the constraint version of Q-function:

and take all derivatives:

Therefore, we can easily obtain:

Notes on Probabilistic Latent Semantic Analysis (PLSA)的更多相关文章

- NLP —— 图模型(三)pLSA(Probabilistic latent semantic analysis,概率隐性语义分析)模型

LSA(Latent semantic analysis,隐性语义分析).pLSA(Probabilistic latent semantic analysis,概率隐性语义分析)和 LDA(Late ...

- 主题模型之概率潜在语义分析(Probabilistic Latent Semantic Analysis)

上一篇总结了潜在语义分析(Latent Semantic Analysis, LSA),LSA主要使用了线性代数中奇异值分解的方法,但是并没有严格的概率推导,由于文本文档的维度往往很高,如果在主题聚类 ...

- Latent semantic analysis note(LSA)

1 LSA Introduction LSA(latent semantic analysis)潜在语义分析,也被称为LSI(latent semantic index),是Scott Deerwes ...

- 主题模型之潜在语义分析(Latent Semantic Analysis)

主题模型(Topic Models)是一套试图在大量文档中发现潜在主题结构的机器学习模型,主题模型通过分析文本中的词来发现文档中的主题.主题之间的联系方式和主题的发展.通过主题模型可以使我们组织和总结 ...

- Latent Semantic Analysis (LSA) Tutorial 潜语义分析LSA介绍 一

Latent Semantic Analysis (LSA) Tutorial 译:http://www.puffinwarellc.com/index.php/news-and-articles/a ...

- 潜语义分析(Latent Semantic Analysis)

LSI(Latent semantic indexing, 潜语义索引)和LSA(Latent semantic analysis,潜语义分析)这两个名字其实是一回事.我们这里称为LSA. LSA源自 ...

- 潜在语义分析Latent semantic analysis note(LSA)原理及代码

文章引用:http://blog.sina.com.cn/s/blog_62a9902f0101cjl3.html Latent Semantic Analysis (LSA)也被称为Latent S ...

- 海量数据挖掘MMDS week4: 推荐系统之隐语义模型latent semantic analysis

http://blog.csdn.net/pipisorry/article/details/49256457 海量数据挖掘Mining Massive Datasets(MMDs) -Jure Le ...

- Latent Semantic Analysis(LSA/ LSI)原理简介

LSA的工作原理: How Latent Semantic Analysis Works LSA被广泛用于文献检索,文本分类,垃圾邮件过滤,语言识别,模式检索以及文章评估自动化等场景. LSA其中一个 ...

随机推荐

- android SDK 快速更新配置(转)

http://blog.csdn.net/yy1300326388/article/details/45074447 1.强制使用http替换https链接 Tools>选择Options,勾选 ...

- php延迟加载的示例

class a{ ; ; //public $d = 5; public function aa(){ echo self::$b; } public function cc(){ echo stat ...

- HDU2028 Lowest Common Multiple Plus

解题思路:最近很忙,有点乱,感觉对不起自己的中国好队友. 好好调整,一切都不是问题,Just do it ! 代码: #include<cstdio> int gcd(int a, i ...

- SQL利用Case When Then多条件判断

CASE WHEN 条件1 THEN 结果1 WHEN 条件2 THEN 结果2 WHEN 条件3 THEN 结果3 WHEN 条件4 THEN 结果4 ....... ...

- 大数据时代的技术hive:hive介绍

我最近研究了hive的相关技术,有点心得,这里和大家分享下. 首先我们要知道hive到底是做什么的.下面这几段文字很好的描述了hive的特性: 1.hive是基于Hadoop的一个数据仓库工具,可以将 ...

- Shell 的source命令

source命令用法: source FileName 作用:在当前bash环境下读取并执行FileName中的命令. 注:该命令通常用命令“.”来替代. 如:source .bash_rc 与 . ...

- mysql的password()函数和md5函数

password用于修改mysql的用户密码,如果是应用与web程序建议使用md5()函数, password函数旧版16位,新版41位,可用select length(password('12345 ...

- 基于CentOS与VmwareStation10搭建Oracle11G RAC 64集群环境:4.安装Oracle RAC FAQ-4.4.无法图形化安装Grid Infrastructure

无法图形化安装: [grid@linuxrac1 grid]$ ./runInstaller Starting Oracle Universal Installer... Checking Temp ...

- 关于Cygwin的x-Server的自动运行以及相关脚本修改

常常需要用到远端服务器的图形工具,如果在windows端没用xserver的话,很多程序无法运行.一个特殊的例子,emacs在没用xserver的时候,是直接在终端中打开的,如果不修改cygwin.b ...

- stitch image app (Qt)

result how to use? source code http://7qnct6.com1.z0.glb.clouddn.com/dropSelect.rar