Image stitching

# Using stitching in OpenCV

OpenCV wraps its high-level stitching functions in the Stitcher class, which is convenient to use and hides most of the details.

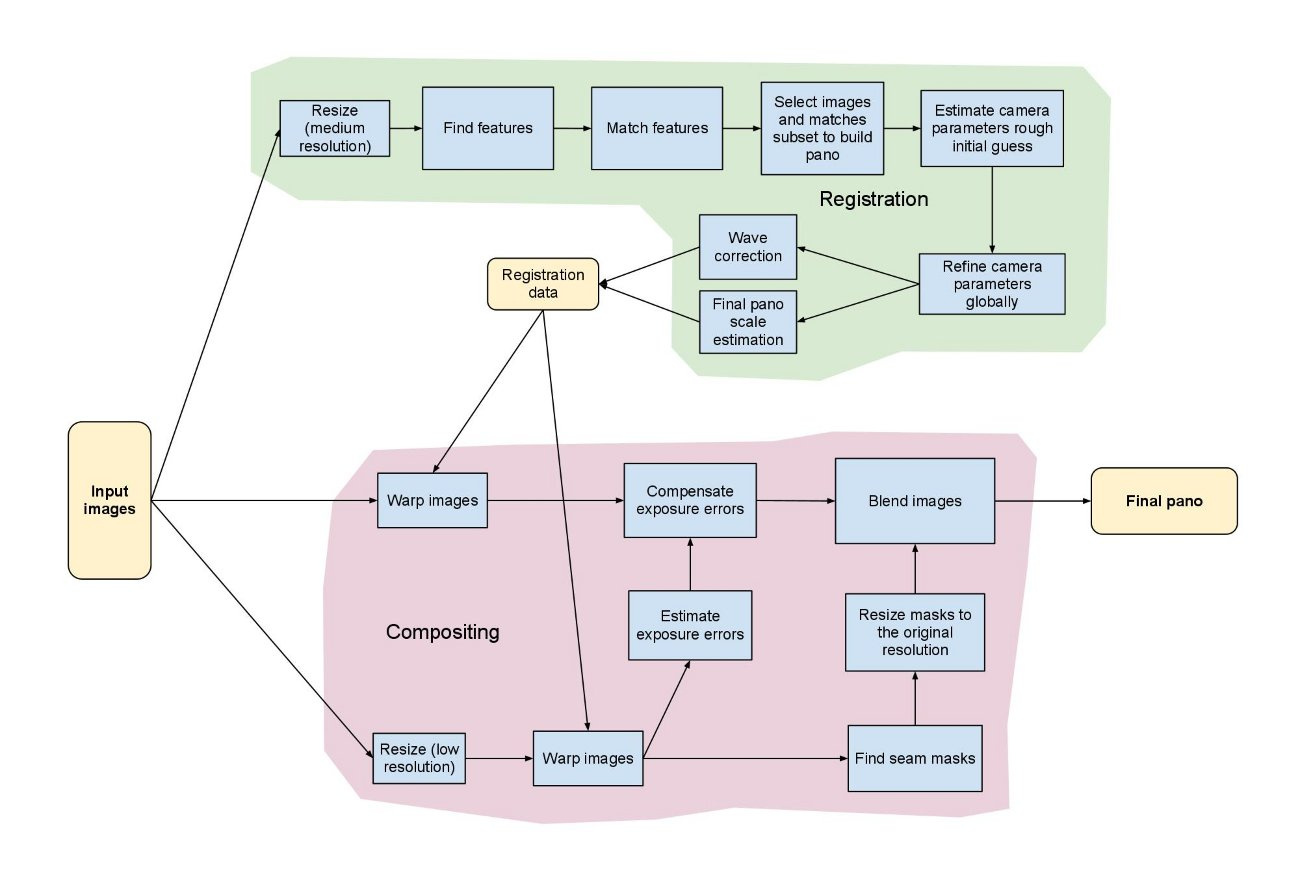

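The low-level functions live in the detail namespace. They expose many of the steps and details of OpenCV's implementation, so that users who are familiar with the stitching pipeline shown in the figure can customize it themselves.

As the pipeline shows, OpenCV's stitching module is elaborate and complex. The results are good, but the process is slow and is not suitable for real-time use.

The official stitching and stitching_detailed samples show the correct way to use the high-level and low-level interfaces, respectively. Both produce the same stitching result when given the same settings; the latter additionally lets the user choose the algorithm for each step when the parameters are initialized.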

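The algorithm proceeds as follows:

- Invoke the program from the command line with the source images and the program parameters.

- Detect feature points. The detector is selected by the features parameter (SURF, ORB, SIFT or AKAZE); the default is SURF when the xfeatures2d module is available and ORB otherwise.

- Match feature points between images by comparing each feature's nearest and second-nearest neighbors (the two best matches), and record the confidence of the resulting matches.

- Sort the images and group those with high confidence into the same set, deleting matches between images with low confidence, to obtain a sequence of images that match correctly. All matches whose confidence is above the threshold end up in one set.

- Roughly estimate the camera parameters for all images, then compute the rotation matrices.

- Refine the rotation matrices with bundle adjustment.

- Apply wave correction, horizontal or vertical.

- Warp the images and stitch them together.

- Blend: multi-band blending and exposure compensation.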
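The nearest/second-nearest matching step can be sketched without OpenCV as follows. The acceptance rule `best < (1 - match_conf) * second` reflects how `BestOf2NearestMatcher` appears to apply `match_conf`, so treat it as an assumption rather than a specification. The type and function names are illustrative, and the search is a plain brute-force loop over Euclidean distances.

```cpp
#include <cmath>
#include <cstddef>
#include <limits>
#include <utility>
#include <vector>

using Descriptor = std::vector<float>;

// Euclidean distance between two descriptors of equal length.
float descriptorDistance(const Descriptor& a, const Descriptor& b)
{
    float sum = 0.f;
    for (std::size_t k = 0; k < a.size(); ++k)
    {
        const float d = a[k] - b[k];
        sum += d * d;
    }
    return std::sqrt(sum);
}

// For every query descriptor, find the nearest and second-nearest train
// descriptors and keep the pair only if the nearest one is clearly better:
// best < (1 - match_conf) * second. Returns (query index, train index) pairs.
std::vector<std::pair<int, int>> ratioTestMatches(
    const std::vector<Descriptor>& query,
    const std::vector<Descriptor>& train,
    float match_conf)
{
    std::vector<std::pair<int, int>> matches;
    if (train.size() < 2)
        return matches;  // the test needs two candidates to compare

    for (std::size_t q = 0; q < query.size(); ++q)
    {
        float best = std::numeric_limits<float>::max();
        float second = std::numeric_limits<float>::max();
        int best_idx = -1;
        for (std::size_t t = 0; t < train.size(); ++t)
        {
            const float d = descriptorDistance(query[q], train[t]);
            if (d < best)
            {
                second = best;
                best = d;
                best_idx = static_cast<int>(t);
            }
            else if (d < second)
            {
                second = d;
            }
        }
        if (best < (1.f - match_conf) * second)
            matches.emplace_back(static_cast<int>(q), best_idx);
    }
    return matches;
}
```

A larger `match_conf` makes the test stricter: fewer matches survive, but those that do are less ambiguous.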

Code:

#include "pch.h"

#include <iostream>

#include <fstream>

#include <string>

#include "opencv2/opencv_modules.hpp"

#include <opencv2/core/utility.hpp>

#include "opencv2/imgcodecs.hpp"

#include "opencv2/highgui.hpp"

#include "opencv2/stitching/detail/autocalib.hpp"

#include "opencv2/stitching/detail/blenders.hpp"

#include "opencv2/stitching/detail/timelapsers.hpp"

#include "opencv2/stitching/detail/camera.hpp"

#include "opencv2/stitching/detail/exposure_compensate.hpp"

#include "opencv2/stitching/detail/matchers.hpp"

#include "opencv2/stitching/detail/motion_estimators.hpp"

#include "opencv2/stitching/detail/seam_finders.hpp"

#include "opencv2/stitching/detail/warpers.hpp"

#include "opencv2/stitching/warpers.hpp"

#ifdef HAVE_OPENCV_XFEATURES2D

#include "opencv2/xfeatures2d/nonfree.hpp"

#endif

#define ENABLE_LOG 1

#define LOG(msg) std::cout << msg

#define LOGLN(msg) std::cout << msg << std::endl

using namespace std;

using namespace cv;

using namespace cv::detail;

static void printUsage()

{

cout <<

"Rotation model images stitcher.\n\n"

"stitching_detailed img1 img2 [...imgN] [flags]\n\n"

"Flags:\n"

" --preview\n"

" Run stitching in the preview mode. Works faster than usual mode,\n"

" but output image will have lower resolution.\n"

" --try_cuda (yes|no)\n"

" Try to use CUDA. The default value is 'no'. All default values\n"

" are for CPU mode.\n"

"\nMotion Estimation Flags:\n"

" --work_megapix <float>\n"

" Resolution for image registration step. The default is 0.6 Mpx.\n"

" --features (surf|orb|sift|akaze)\n"

" Type of features used for images matching.\n"

" The default is surf if available, orb otherwise.\n"

" --matcher (homography|affine)\n"

" Matcher used for pairwise image matching.\n"

" --estimator (homography|affine)\n"

" Type of estimator used for transformation estimation.\n"

" --match_conf <float>\n"

" Confidence for feature matching step. The default is 0.65 for surf and 0.3 for orb.\n"

" --conf_thresh <float>\n"

" Threshold for two images are from the same panorama confidence.\n"

" The default is 1.0.\n"

" --ba (no|reproj|ray|affine)\n"

" Bundle adjustment cost function. The default is ray.\n"

" --ba_refine_mask (mask)\n"

" Set refinement mask for bundle adjustment. It looks like 'x_xxx',\n"

" where 'x' means refine respective parameter and '_' means don't\n"

" refine one, and has the following format:\n"

" <fx><skew><ppx><aspect><ppy>. The default mask is 'xxxxx'. If bundle\n"

" adjustment doesn't support estimation of selected parameter then\n"

" the respective flag is ignored.\n"

" --wave_correct (no|horiz|vert)\n"

" Perform wave effect correction. The default is 'horiz'.\n"

" --save_graph <file_name>\n"

" Save matches graph represented in DOT language to <file_name> file.\n"

" Labels description: Nm is number of matches, Ni is number of inliers,\n"

" C is confidence.\n"

"\nCompositing Flags:\n"

" --warp (affine|plane|cylindrical|spherical|fisheye|stereographic|compressedPlaneA2B1|compressedPlaneA1.5B1|compressedPlanePortraitA2B1|compressedPlanePortraitA1.5B1|paniniA2B1|paniniA1.5B1|paniniPortraitA2B1|paniniPortraitA1.5B1|mercator|transverseMercator)\n"

" Warp surface type. The default is 'spherical'.\n"

" --seam_megapix <float>\n"

" Resolution for seam estimation step. The default is 0.1 Mpx.\n"

" --seam (no|voronoi|gc_color|gc_colorgrad)\n"

" Seam estimation method. The default is 'gc_color'.\n"

" --compose_megapix <float>\n"

" Resolution for compositing step. Use -1 for original resolution.\n"

" The default is -1.\n"

" --expos_comp (no|gain|gain_blocks|channels|channels_blocks)\n"

" Exposure compensation method. The default is 'gain_blocks'.\n"

" --expos_comp_nr_feeds <int>\n"

" Number of exposure compensation feed. The default is 1.\n"

" --expos_comp_nr_filtering <int>\n"

" Number of filtering iterations of the exposure compensation gains.\n"

" Only used when using a block exposure compensation method.\n"

" The default is 2.\n"

" --expos_comp_block_size <int>\n"

" BLock size in pixels used by the exposure compensator.\n"

" Only used when using a block exposure compensation method.\n"

" The default is 32.\n"

" --blend (no|feather|multiband)\n"

" Blending method. The default is 'multiband'.\n"

" --blend_strength <float>\n"

" Blending strength from [0,100] range. The default is 5.\n"

" --output <result_img>\n"

" The default is 'result.jpg'.\n"

" --timelapse (as_is|crop) \n"

" Output warped images separately as frames of a time lapse movie, with 'fixed_' prepended to input file names.\n"

" --rangewidth <int>\n"

" uses range_width to limit number of images to match with.\n";

}

// Default command line args

vector<String> img_names;

bool preview = false;

bool try_cuda = false;

double work_megapix = 0.6;

double seam_megapix = 0.1;

double compose_megapix = -1;

float conf_thresh = 1.f;

#ifdef HAVE_OPENCV_XFEATURES2D

string features_type = "surf";

#else

string features_type = "orb";

#endif

string matcher_type = "homography";

string estimator_type = "homography";

string ba_cost_func = "ray";

string ba_refine_mask = "xxxxx";

bool do_wave_correct = true;

WaveCorrectKind wave_correct = detail::WAVE_CORRECT_HORIZ;

bool save_graph = false;

std::string save_graph_to;

string warp_type = "spherical";

int expos_comp_type = ExposureCompensator::GAIN_BLOCKS;

int expos_comp_nr_feeds = 1;

int expos_comp_nr_filtering = 2;

int expos_comp_block_size = 32;

float match_conf = 0.3f;

string seam_find_type = "gc_color";

int blend_type = Blender::MULTI_BAND;

int timelapse_type = Timelapser::AS_IS;

float blend_strength = 5;

string result_name = "F:/opencv/build/bin/sample-data/stitching/result.jpg";

bool timelapse = false;

int range_width = -1;

int main(int argc, char* argv[])

{

#if ENABLE_LOG

int64 app_start_time = getTickCount();

#endif

#if 0

cv::setBreakOnError(true);

#endif

img_names.push_back("F:/opencv/build/bin/sample-data/stitching/st1.jpg");

img_names.push_back("F:/opencv/build/bin/sample-data/stitching/st2.jpg");

img_names.push_back("F:/opencv/build/bin/sample-data/stitching/st3.jpg");

img_names.push_back("F:/opencv/build/bin/sample-data/stitching/st4.jpg");

// Check if have enough images

int num_images = static_cast<int>(img_names.size());

if (num_images < 2)

{

LOGLN("Need more images");

return -1;

}

double work_scale = 1, seam_scale = 1, compose_scale = 1;

bool is_work_scale_set = false, is_seam_scale_set = false, is_compose_scale_set = false;

LOGLN("Finding features...");

#if ENABLE_LOG

int64 t = getTickCount();

#endif

Ptr<Feature2D> finder;

if (features_type == "orb")

{

finder = ORB::create();

}

else if (features_type == "akaze")

{

finder = AKAZE::create();

}

#ifdef HAVE_OPENCV_XFEATURES2D

else if (features_type == "surf")

{

finder = xfeatures2d::SURF::create();

}

else if (features_type == "sift") {

finder = xfeatures2d::SIFT::create();

}

#endif

else

{

cout << "Unknown 2D features type: '" << features_type << "'.\n";

return -1;

}

cout << "Current 2D features type: '" << features_type << "'.\n";

Mat full_img, img;

vector<ImageFeatures> features(num_images);

vector<Mat> images(num_images);

vector<Size> full_img_sizes(num_images);

double seam_work_aspect = 1;

for (int i = 0; i < num_images; ++i)

{

full_img = imread(samples::findFile(img_names[i]));

full_img_sizes[i] = full_img.size();

if (full_img.empty())

{

LOGLN("Can't open image " << img_names[i]);

return -1;

}

if (work_megapix < 0)

{

img = full_img;

work_scale = 1;

is_work_scale_set = true;

}

else

{

if (!is_work_scale_set)

{

work_scale = min(1.0, sqrt(work_megapix * 1e6 / full_img.size().area()));

is_work_scale_set = true;

}

resize(full_img, img, Size(), work_scale, work_scale, INTER_LINEAR_EXACT);

}

if (!is_seam_scale_set)

{

seam_scale = min(1.0, sqrt(seam_megapix * 1e6 / full_img.size().area()));

seam_work_aspect = seam_scale / work_scale;

is_seam_scale_set = true;

}

computeImageFeatures(finder, img, features[i]);

features[i].img_idx = i;

LOGLN("Features in image #" << i + 1 << ": " << features[i].keypoints.size());

resize(full_img, img, Size(), seam_scale, seam_scale, INTER_LINEAR_EXACT);

images[i] = img.clone();

}

full_img.release();

img.release();

LOGLN("Finding features, time: " << ((getTickCount() - t) / getTickFrequency()) << " sec");

LOG("Pairwise matching");

#if ENABLE_LOG

t = getTickCount();

#endif

vector<MatchesInfo> pairwise_matches;

Ptr<FeaturesMatcher> matcher;

if (matcher_type == "affine")

matcher = makePtr<AffineBestOf2NearestMatcher>(false, try_cuda, match_conf);

else if (range_width == -1)

matcher = makePtr<BestOf2NearestMatcher>(try_cuda, match_conf);

else

matcher = makePtr<BestOf2NearestRangeMatcher>(range_width, try_cuda, match_conf);

(*matcher)(features, pairwise_matches);

matcher->collectGarbage();

LOGLN("Pairwise matching, time: " << ((getTickCount() - t) / getTickFrequency()) << " sec");

// Check if we should save matches graph

if (save_graph)

{

LOGLN("Saving matches graph...");

ofstream f(save_graph_to.c_str());

f << matchesGraphAsString(img_names, pairwise_matches, conf_thresh);

}

// Leave only images we are sure are from the same panorama

vector<int> indices = leaveBiggestComponent(features, pairwise_matches, conf_thresh);

vector<Mat> img_subset;

vector<String> img_names_subset;

vector<Size> full_img_sizes_subset;

for (size_t i = 0; i < indices.size(); ++i)

{

img_names_subset.push_back(img_names[indices[i]]);

img_subset.push_back(images[indices[i]]);

full_img_sizes_subset.push_back(full_img_sizes[indices[i]]);

}

images = img_subset;

img_names = img_names_subset;

full_img_sizes = full_img_sizes_subset;

// Check if we still have enough images

num_images = static_cast<int>(img_names.size());

if (num_images < 2)

{

LOGLN("Need more images from the same panorama");

return -1;

}

Ptr<Estimator> estimator;

if (estimator_type == "affine")

estimator = makePtr<AffineBasedEstimator>();

else

estimator = makePtr<HomographyBasedEstimator>();

vector<CameraParams> cameras;

if (!(*estimator)(features, pairwise_matches, cameras))

{

cout << "Homography estimation failed.\n";

return -1;

}

for (size_t i = 0; i < cameras.size(); ++i)

{

Mat R;

cameras[i].R.convertTo(R, CV_32F);

cameras[i].R = R;

LOGLN("Initial camera intrinsics #" << indices[i] + 1 << ":\nK:\n" << cameras[i].K() << "\nR:\n" << cameras[i].R);

}

Ptr<detail::BundleAdjusterBase> adjuster;

if (ba_cost_func == "reproj") adjuster = makePtr<detail::BundleAdjusterReproj>();

else if (ba_cost_func == "ray") adjuster = makePtr<detail::BundleAdjusterRay>();

else if (ba_cost_func == "affine") adjuster = makePtr<detail::BundleAdjusterAffinePartial>();

else if (ba_cost_func == "no") adjuster = makePtr<NoBundleAdjuster>();

else

{

cout << "Unknown bundle adjustment cost function: '" << ba_cost_func << "'.\n";

return -1;

}

adjuster->setConfThresh(conf_thresh);

Mat_<uchar> refine_mask = Mat::zeros(3, 3, CV_8U);

if (ba_refine_mask[0] == 'x') refine_mask(0, 0) = 1;

if (ba_refine_mask[1] == 'x') refine_mask(0, 1) = 1;

if (ba_refine_mask[2] == 'x') refine_mask(0, 2) = 1;

if (ba_refine_mask[3] == 'x') refine_mask(1, 1) = 1;

if (ba_refine_mask[4] == 'x') refine_mask(1, 2) = 1;

adjuster->setRefinementMask(refine_mask);

if (!(*adjuster)(features, pairwise_matches, cameras))

{

cout << "Camera parameters adjusting failed.\n";

return -1;

}

// Find median focal length

vector<double> focals;

for (size_t i = 0; i < cameras.size(); ++i)

{

LOGLN("Camera #" << indices[i] + 1 << ":\nK:\n" << cameras[i].K() << "\nR:\n" << cameras[i].R);

focals.push_back(cameras[i].focal);

}

sort(focals.begin(), focals.end());

float warped_image_scale;

if (focals.size() % 2 == 1)

warped_image_scale = static_cast<float>(focals[focals.size() / 2]);

else

warped_image_scale = static_cast<float>(focals[focals.size() / 2 - 1] + focals[focals.size() / 2]) * 0.5f;

if (do_wave_correct)

{

vector<Mat> rmats;

for (size_t i = 0; i < cameras.size(); ++i)

rmats.push_back(cameras[i].R.clone());

waveCorrect(rmats, wave_correct);

for (size_t i = 0; i < cameras.size(); ++i)

cameras[i].R = rmats[i];

}

LOGLN("Warping images (auxiliary)... ");

#if ENABLE_LOG

t = getTickCount();

#endif

vector<Point> corners(num_images);

vector<UMat> masks_warped(num_images);

vector<UMat> images_warped(num_images);

vector<Size> sizes(num_images);

vector<UMat> masks(num_images);

// Prepare images masks

for (int i = 0; i < num_images; ++i)

{

masks[i].create(images[i].size(), CV_8U);

masks[i].setTo(Scalar::all(255));

}

// Warp images and their masks

Ptr<WarperCreator> warper_creator;

#ifdef HAVE_OPENCV_CUDAWARPING

if (try_cuda && cuda::getCudaEnabledDeviceCount() > 0)

{

if (warp_type == "plane")

warper_creator = makePtr<cv::PlaneWarperGpu>();

else if (warp_type == "cylindrical")

warper_creator = makePtr<cv::CylindricalWarperGpu>();

else if (warp_type == "spherical")

warper_creator = makePtr<cv::SphericalWarperGpu>();

}

else

#endif

{

if (warp_type == "plane")

warper_creator = makePtr<cv::PlaneWarper>();

else if (warp_type == "affine")

warper_creator = makePtr<cv::AffineWarper>();

else if (warp_type == "cylindrical")

warper_creator = makePtr<cv::CylindricalWarper>();

else if (warp_type == "spherical")

warper_creator = makePtr<cv::SphericalWarper>();

else if (warp_type == "fisheye")

warper_creator = makePtr<cv::FisheyeWarper>();

else if (warp_type == "stereographic")

warper_creator = makePtr<cv::StereographicWarper>();

else if (warp_type == "compressedPlaneA2B1")

warper_creator = makePtr<cv::CompressedRectilinearWarper>(2.0f, 1.0f);

else if (warp_type == "compressedPlaneA1.5B1")

warper_creator = makePtr<cv::CompressedRectilinearWarper>(1.5f, 1.0f);

else if (warp_type == "compressedPlanePortraitA2B1")

warper_creator = makePtr<cv::CompressedRectilinearPortraitWarper>(2.0f, 1.0f);

else if (warp_type == "compressedPlanePortraitA1.5B1")

warper_creator = makePtr<cv::CompressedRectilinearPortraitWarper>(1.5f, 1.0f);

else if (warp_type == "paniniA2B1")

warper_creator = makePtr<cv::PaniniWarper>(2.0f, 1.0f);

else if (warp_type == "paniniA1.5B1")

warper_creator = makePtr<cv::PaniniWarper>(1.5f, 1.0f);

else if (warp_type == "paniniPortraitA2B1")

warper_creator = makePtr<cv::PaniniPortraitWarper>(2.0f, 1.0f);

else if (warp_type == "paniniPortraitA1.5B1")

warper_creator = makePtr<cv::PaniniPortraitWarper>(1.5f, 1.0f);

else if (warp_type == "mercator")

warper_creator = makePtr<cv::MercatorWarper>();

else if (warp_type == "transverseMercator")

warper_creator = makePtr<cv::TransverseMercatorWarper>();

}

if (!warper_creator)

{

cout << "Can't create the following warper '" << warp_type << "'\n";

return 1;

}

Ptr<RotationWarper> warper = warper_creator->create(static_cast<float>(warped_image_scale * seam_work_aspect));

for (int i = 0; i < num_images; ++i)

{

Mat_<float> K;

cameras[i].K().convertTo(K, CV_32F);

float swa = (float)seam_work_aspect;

K(0, 0) *= swa; K(0, 2) *= swa;

K(1, 1) *= swa; K(1, 2) *= swa;

corners[i] = warper->warp(images[i], K, cameras[i].R, INTER_LINEAR, BORDER_REFLECT, images_warped[i]);

sizes[i] = images_warped[i].size();

warper->warp(masks[i], K, cameras[i].R, INTER_NEAREST, BORDER_CONSTANT, masks_warped[i]);

}

vector<UMat> images_warped_f(num_images);

for (int i = 0; i < num_images; ++i)

images_warped[i].convertTo(images_warped_f[i], CV_32F);

LOGLN("Warping images, time: " << ((getTickCount() - t) / getTickFrequency()) << " sec");

LOGLN("Compensating exposure...");

#if ENABLE_LOG

t = getTickCount();

#endif

Ptr<ExposureCompensator> compensator = ExposureCompensator::createDefault(expos_comp_type);

if (dynamic_cast<GainCompensator*>(compensator.get()))

{

GainCompensator* gcompensator = dynamic_cast<GainCompensator*>(compensator.get());

gcompensator->setNrFeeds(expos_comp_nr_feeds);

}

if (dynamic_cast<ChannelsCompensator*>(compensator.get()))

{

ChannelsCompensator* ccompensator = dynamic_cast<ChannelsCompensator*>(compensator.get());

ccompensator->setNrFeeds(expos_comp_nr_feeds);

}

if (dynamic_cast<BlocksCompensator*>(compensator.get()))

{

BlocksCompensator* bcompensator = dynamic_cast<BlocksCompensator*>(compensator.get());

bcompensator->setNrFeeds(expos_comp_nr_feeds);

bcompensator->setNrGainsFilteringIterations(expos_comp_nr_filtering);

bcompensator->setBlockSize(expos_comp_block_size, expos_comp_block_size);

}

compensator->feed(corners, images_warped, masks_warped);

LOGLN("Compensating exposure, time: " << ((getTickCount() - t) / getTickFrequency()) << " sec");

LOGLN("Finding seams...");

#if ENABLE_LOG

t = getTickCount();

#endif

Ptr<SeamFinder> seam_finder;

if (seam_find_type == "no")

seam_finder = makePtr<detail::NoSeamFinder>();

else if (seam_find_type == "voronoi")

seam_finder = makePtr<detail::VoronoiSeamFinder>();

else if (seam_find_type == "gc_color")

{

#ifdef HAVE_OPENCV_CUDALEGACY

if (try_cuda && cuda::getCudaEnabledDeviceCount() > 0)

seam_finder = makePtr<detail::GraphCutSeamFinderGpu>(GraphCutSeamFinderBase::COST_COLOR);

else

#endif

seam_finder = makePtr<detail::GraphCutSeamFinder>(GraphCutSeamFinderBase::COST_COLOR);

}

else if (seam_find_type == "gc_colorgrad")

{

#ifdef HAVE_OPENCV_CUDALEGACY

if (try_cuda && cuda::getCudaEnabledDeviceCount() > 0)

seam_finder = makePtr<detail::GraphCutSeamFinderGpu>(GraphCutSeamFinderBase::COST_COLOR_GRAD);

else

#endif

seam_finder = makePtr<detail::GraphCutSeamFinder>(GraphCutSeamFinderBase::COST_COLOR_GRAD);

}

else if (seam_find_type == "dp_color")

seam_finder = makePtr<detail::DpSeamFinder>(DpSeamFinder::COLOR);

else if (seam_find_type == "dp_colorgrad")

seam_finder = makePtr<detail::DpSeamFinder>(DpSeamFinder::COLOR_GRAD);

if (!seam_finder)

{

cout << "Can't create the following seam finder '" << seam_find_type << "'\n";

return 1;

}

seam_finder->find(images_warped_f, corners, masks_warped);

LOGLN("Finding seams, time: " << ((getTickCount() - t) / getTickFrequency()) << " sec");

// Release unused memory

images.clear();

images_warped.clear();

images_warped_f.clear();

masks.clear();

LOGLN("Compositing...");

#if ENABLE_LOG

t = getTickCount();

#endif

Mat img_warped, img_warped_s;

Mat dilated_mask, seam_mask, mask, mask_warped;

Ptr<Blender> blender;

Ptr<Timelapser> timelapser;

//double compose_seam_aspect = 1;

double compose_work_aspect = 1;

for (int img_idx = 0; img_idx < num_images; ++img_idx)

{

LOGLN("Compositing image #" << indices[img_idx] + 1);

// Read image and resize it if necessary

full_img = imread(samples::findFile(img_names[img_idx]));

if (!is_compose_scale_set)

{

if (compose_megapix > 0)

compose_scale = min(1.0, sqrt(compose_megapix * 1e6 / full_img.size().area()));

is_compose_scale_set = true;

// Compute relative scales

//compose_seam_aspect = compose_scale / seam_scale;

compose_work_aspect = compose_scale / work_scale;

// Update warped image scale

warped_image_scale *= static_cast<float>(compose_work_aspect);

warper = warper_creator->create(warped_image_scale);

// Update corners and sizes

for (int i = 0; i < num_images; ++i)

{

// Update intrinsics

cameras[i].focal *= compose_work_aspect;

cameras[i].ppx *= compose_work_aspect;

cameras[i].ppy *= compose_work_aspect;

// Update corner and size

Size sz = full_img_sizes[i];

if (std::abs(compose_scale - 1) > 1e-1)

{

sz.width = cvRound(full_img_sizes[i].width * compose_scale);

sz.height = cvRound(full_img_sizes[i].height * compose_scale);

}

Mat K;

cameras[i].K().convertTo(K, CV_32F);

Rect roi = warper->warpRoi(sz, K, cameras[i].R);

corners[i] = roi.tl();

sizes[i] = roi.size();

}

}

if (abs(compose_scale - 1) > 1e-1)

resize(full_img, img, Size(), compose_scale, compose_scale, INTER_LINEAR_EXACT);

else

img = full_img;

full_img.release();

Size img_size = img.size();

Mat K;

cameras[img_idx].K().convertTo(K, CV_32F);

// Warp the current image

warper->warp(img, K, cameras[img_idx].R, INTER_LINEAR, BORDER_REFLECT, img_warped);

// Warp the current image mask

mask.create(img_size, CV_8U);

mask.setTo(Scalar::all(255));

warper->warp(mask, K, cameras[img_idx].R, INTER_NEAREST, BORDER_CONSTANT, mask_warped);

// Compensate exposure

compensator->apply(img_idx, corners[img_idx], img_warped, mask_warped);

img_warped.convertTo(img_warped_s, CV_16S);

img_warped.release();

img.release();

mask.release();

dilate(masks_warped[img_idx], dilated_mask, Mat());

resize(dilated_mask, seam_mask, mask_warped.size(), 0, 0, INTER_LINEAR_EXACT);

mask_warped = seam_mask & mask_warped;

if (!blender && !timelapse)

{

blender = Blender::createDefault(blend_type, try_cuda);

Size dst_sz = resultRoi(corners, sizes).size();

float blend_width = sqrt(static_cast<float>(dst_sz.area())) * blend_strength / 100.f;

if (blend_width < 1.f)

blender = Blender::createDefault(Blender::NO, try_cuda);

else if (blend_type == Blender::MULTI_BAND)

{

MultiBandBlender* mb = dynamic_cast<MultiBandBlender*>(blender.get());

mb->setNumBands(static_cast<int>(ceil(log(blend_width) / log(2.)) - 1.));

LOGLN("Multi-band blender, number of bands: " << mb->numBands());

}

else if (blend_type == Blender::FEATHER)

{

FeatherBlender* fb = dynamic_cast<FeatherBlender*>(blender.get());

fb->setSharpness(1.f / blend_width);

LOGLN("Feather blender, sharpness: " << fb->sharpness());

}

blender->prepare(corners, sizes);

}

else if (!timelapser && timelapse)

{

timelapser = Timelapser::createDefault(timelapse_type);

timelapser->initialize(corners, sizes);

}

// Blend the current image

if (timelapse)

{

timelapser->process(img_warped_s, Mat::ones(img_warped_s.size(), CV_8UC1), corners[img_idx]);

String fixedFileName;

size_t pos_s = String(img_names[img_idx]).find_last_of("/\\");

if (pos_s == String::npos)

{

fixedFileName = "fixed_" + img_names[img_idx];

}

else

{

fixedFileName = "fixed_" + String(img_names[img_idx]).substr(pos_s + 1, String(img_names[img_idx]).length() - pos_s);

}

imwrite(fixedFileName, timelapser->getDst());

}

else

{

blender->feed(img_warped_s, mask_warped, corners[img_idx]);

}

}

if (!timelapse)

{

Mat result, result_mask;

blender->blend(result, result_mask);

LOGLN("Compositing, time: " << ((getTickCount() - t) / getTickFrequency()) << " sec");

imwrite(result_name, result);

}

LOGLN("Finished, total time: " << ((getTickCount() - app_start_time) / getTickFrequency()) << " sec");

return 0;

}
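Two small calculations recur in the listing above: turning a megapixel budget (`work_megapix`, `seam_megapix`, `compose_megapix`) into a resize factor, and taking the median focal length as the warp scale. The sketch below isolates both using only the standard library. The helper names are hypothetical and not part of OpenCV, and treating a negative budget as "keep the original size" follows how the listing handles `work_megapix`.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Resize factor that shrinks a width x height image to roughly `megapix`
// megapixels. Never upscales; a negative budget keeps the original size.
double scaleForMegapixels(double megapix, int width, int height)
{
    if (megapix < 0)
        return 1.0;
    const double area = static_cast<double>(width) * height;
    return std::min(1.0, std::sqrt(megapix * 1e6 / area));
}

// Median of the focal lengths; for an even count, the mean of the two
// middle values. The input must not be empty.
double medianFocal(std::vector<double> focals)
{
    assert(!focals.empty());
    std::sort(focals.begin(), focals.end());
    const std::size_t n = focals.size();
    if (n % 2 == 1)
        return focals[n / 2];
    return (focals[n / 2 - 1] + focals[n / 2]) * 0.5;
}
```

For example, a 2000 x 1200 image with the default 0.6 Mpx registration budget gets a factor of 0.5. Using the median rather than the mean keeps a single badly estimated focal length from distorting the warp scale.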

Result:

Finding features...

Current 2D features type: 'surf'.

[ INFO:0] Initialize OpenCL runtime...

Features in image #1: 911

Features in image #2: 1085

Features in image #3: 1766

Features in image #4: 2001

Finding features, time: 3.33727 sec

Pairwise matchingPairwise matching, time: 3.2849 sec

Initial camera intrinsics #1:

K:

[4503.939581818162, 0, 285;

0, 4503.939581818162, 210;

0, 0, 1]

R:

[1.0011346, 0.0019526235, -0.0037489906;

0.00011878588, 1.0000151, -0.052518897;

-0.0011389133, 0.021224562, 1]

Initial camera intrinsics #2:

K:

[4503.939581818162, 0, 249;

0, 4503.939581818162, 222;

0, 0, 1]

R:

[1.0023992, 0.0045258515, 0.083801955;

-9.7107059e-06, 1.0006112, -0.049870808;

0.015923418, 0.048128795, 1.0000379]

Initial camera intrinsics #3:

K:

[4503.939581818162, 0, 302.5;

0, 4503.939581818162, 173.5;

0, 0, 1]

R:

[1, 0, 0;

0, 1, 0;

0, 0, 1]

Initial camera intrinsics #4:

K:

[4503.939581818162, 0, 274.5;

0, 4503.939581818162, 194.5;

0, 0, 1]

R:

[1.0004042, 0.00080040237, 0.078620218;

0.00026136645, 1.0005095, -0.0048735617;

0.0061902963, 0.0096427174, 1.0004393]

Camera #1:

K:

[6569.821976030652, 0, 285;

0, 6569.821976030652, 210;

0, 0, 1]

R:

[0.99999672, 0.00038595949, -0.0025201384;

-0.00047636221, 0.99935275, -0.035969362;

0.0025046244, 0.035970442, 0.99934971]

Camera #2:

K:

[6571.327169846625, 0, 249;

0, 6571.327169846625, 222;

0, 0, 1]

R:

[0.99835128, 0.0012797765, 0.057385404;

0.00068109832, 0.99941689, -0.03413773;

-0.05739563, 0.034120534, 0.99776828]

Camera #3:

K:

[6570.486320822205, 0, 302.5;

0, 6570.486320822205, 173.5;

0, 0, 1]

R:

[1, -1.2951205e-09, 0;

-1.2914825e-09, 1, 0;

0, -4.6566129e-10, 1]

Camera #4:

K:

[6571.394840241929, 0, 274.5;

0, 6571.394840241929, 194.5;

0, 0, 1]

R:

[0.99855018, -0.00018820527, 0.053829439;

0.0003683792, 0.99999434, -0.0033372282;

-0.053828511, 0.0033522192, 0.99854457]

Warping images (auxiliary)...

[ INFO:0] Successfully initialized OpenCL cache directory: C:\Users\mzhu\AppData\Local\Temp\opencv\4.1\opencl_cache\

[ INFO:0] Preparing OpenCL cache configuration for context: Intel_R__Corporation--Intel_R__HD_Graphics_620--21_20_16_4574

Warping images, time: 0.0817463 sec

Compensating exposure...

Compensating exposure, time: 0.22982 sec

Finding seams...

Finding seams, time: 1.49795 sec

Compositing...

Compositing image #1

Multi-band blender, number of bands: 5

Compositing image #2

Compositing image #3

Compositing image #4

Compositing, time: 0.705931 sec

Finished, total time: 116.51 sec

OpenPano: Automatic Panorama Stitching From Scratch (https://github.com/ppwwyyxx/OpenPano)

StitchIt: Optimization and Parallelization of Image Stitching (https://github.com/stitchit/StitchIt)

ParaPano: Parallel image stitching using CUDA (https://github.com/zq-chen/ParaPano)

NISwGSP: Natural Image Stitching with the Global Similarity Prior (for Windows: https://github.com/firdauslubis88/NISwGSP; paper reading notes: https://zhuanlan.zhihu.com/p/57543736)

Recommended reading:

Is image stitching still worth researching? What are the open problems, and how far has the technology come?

Best Panorama Software for Stitching Images
