Hive: accessing HiveServer2 with beeline and a JDBC client

HiveServer

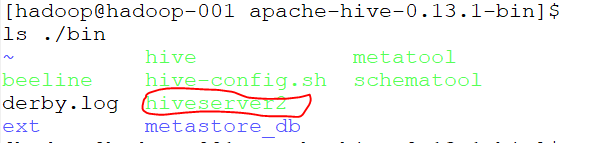

List the files in the `/home/hadoop/bigdatasoftware/apache-hive-0.13.1-bin/bin` directory. It contains the `hiveserver2` script:

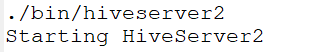

Start hiveserver2, as shown in the figure below:

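Open a second terminal and check the running Java processes (for example with `jps`). A `RunJar` process indicates that HiveServer2 is running: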

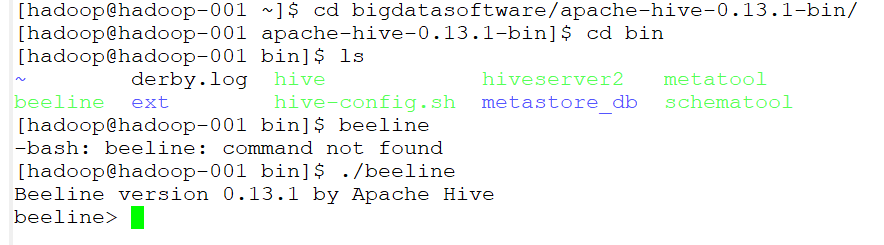
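A `RunJar` process only shows that the JVM is up on the server. As an extra client-side check, the minimal sketch below tests whether the HiveServer2 port accepts TCP connections. It is not part of the original setup: the class and method names are hypothetical, and it assumes the default port 10000 and the host name `hadoop-001` used elsewhere in this article.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

// Hypothetical helper (not from the original article): checks whether a TCP port
// accepts connections, e.g. HiveServer2 on its default port 10000.
class PortCheck {

    // Returns true if a TCP connection to host:port succeeds within timeoutMs.
    static boolean isPortOpen(String host, int port, int timeoutMs) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMs);
            return true;
        } catch (IOException e) {
            // connection refused, timed out, or host name could not be resolved
            return false;
        }
    }
}
```

For example, `PortCheck.isPortOpen("hadoop-001", 10000, 3000)` returning `false` usually means HiveServer2 is not listening yet or a firewall blocks the port.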

beeline

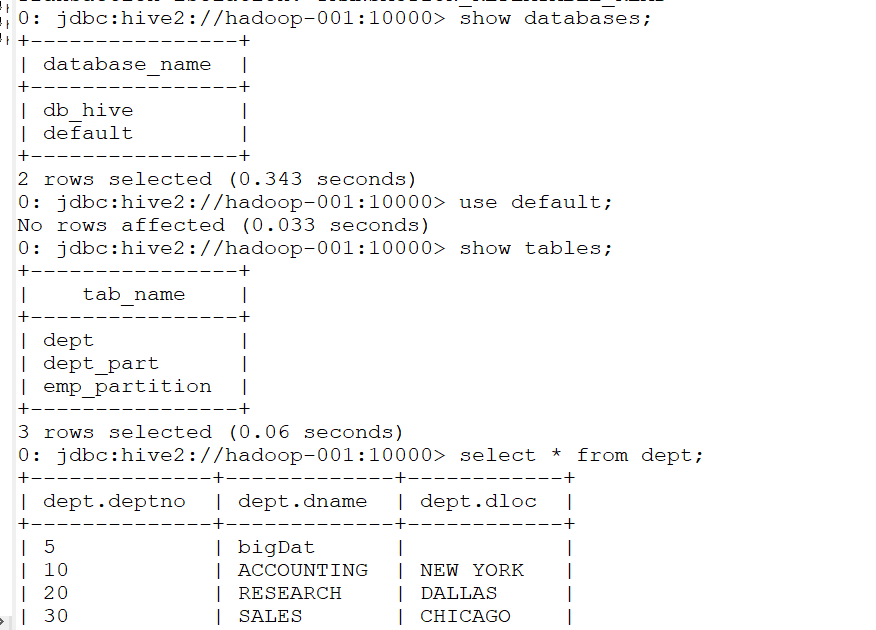

Start beeline:

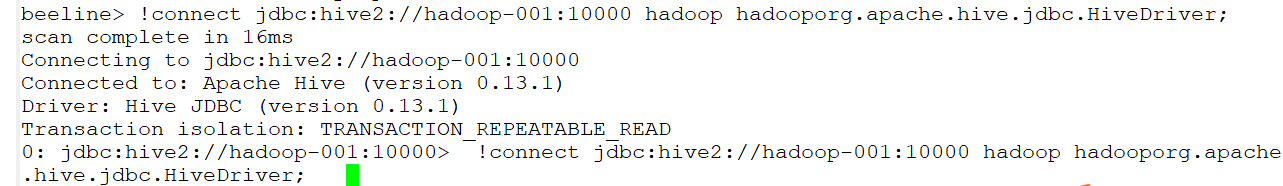

Connect to HiveServer2 over JDBC (arguments: URL, user name, password, driver class):

```
!connect jdbc:hive2://hadoop-001:10000 hadoop hadoop org.apache.hive.jdbc.HiveDriver
```

After that, you can run HiveQL statements from the beeline prompt.

Accessing Hive from a JDBC client in IDEA

Using JDBC

You can use JDBC to access data stored in a relational database or other tabular format.

Load the HiveServer2 JDBC driver. As of Hive 1.2.0, applications no longer need to load JDBC drivers explicitly with `Class.forName()`; with older versions, load it explicitly, for example:

```java
Class.forName("org.apache.hive.jdbc.HiveDriver");
```
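The pom.xml in this article pins hive-jdbc 0.13.1, which predates 1.2.0, so the explicit load is still needed there. A minimal sketch, with a hypothetical helper name, that reports whether a driver class can be loaded instead of exiting on `ClassNotFoundException`:

```java
// Hypothetical helper (not from the original article).
class DriverCheck {

    // Returns true if the named class can be loaded from the classpath.
    // By JDBC convention, loading a driver class runs its static initializer,
    // which registers the driver with java.sql.DriverManager.
    static boolean loadDriver(String className) {
        try {
            Class.forName(className);
            return true;
        } catch (ClassNotFoundException e) {
            return false;
        }
    }
}
```

Calling `DriverCheck.loadDriver("org.apache.hive.jdbc.HiveDriver")` before `DriverManager.getConnection(...)` lets the program print a clear "hive-jdbc is not on the classpath" message rather than a stack trace.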

Connect to the database by creating a `Connection` object with the JDBC driver. For example:

```java
Connection cnct = DriverManager.getConnection("jdbc:hive2://<host>:<port>", "<user>", "<password>");
```

The default `<port>` is 10000. In non-secure configurations, specify a `<user>` for the query to run as. The `<password>` field value is ignored in non-secure mode.

```java
Connection cnct = DriverManager.getConnection("jdbc:hive2://<host>:<port>", "<user>", "");
```

In Kerberos secure mode, the user information is based on the Kerberos credentials.
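The URL forms above can be assembled by a small helper. This is a sketch under stated assumptions: the class name is hypothetical, it falls back to the default port 10000 and the `default` database, and for Kerberos it appends the HiveServer2 service principal as the `;principal=` URL parameter described in the HiveServer2 client documentation.

```java
// Hypothetical helper (not from the original article) that builds a HiveServer2 JDBC URL.
class HiveUrl {

    static final int DEFAULT_PORT = 10000; // HiveServer2 default port

    // port == null -> 10000; database == null -> "default";
    // principal == null -> non-secure mode, otherwise ";principal=<principal>" is appended.
    static String build(String host, Integer port, String database, String principal) {
        StringBuilder url = new StringBuilder("jdbc:hive2://")
                .append(host)
                .append(':')
                .append(port == null ? DEFAULT_PORT : port)
                .append('/')
                .append(database == null ? "default" : database);
        if (principal != null) {
            url.append(";principal=").append(principal);
        }
        return url.toString();
    }
}
```

For the non-secure cluster in this article, `HiveUrl.build("hadoop-001", null, null, null)` gives `jdbc:hive2://hadoop-001:10000/default`. With Kerberos you would pass the server principal, e.g. a hypothetical `hive/hadoop-001@EXAMPLE.COM`.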

Submit SQL to the database by creating a `Statement` object and using its `executeQuery()` method. For example:

```java
Statement stmt = cnct.createStatement();
ResultSet rset = stmt.executeQuery("SELECT foo FROM bar");
```

Finally, process the result set, if necessary.
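The sample code reads fixed column positions such as `res.getString(2)`, which assumes it knows the table's layout. A minimal sketch of a generic alternative (hypothetical class name) that uses `ResultSetMetaData` to print every column of each row as tab-separated text:

```java
import java.sql.ResultSet;
import java.sql.ResultSetMetaData;
import java.sql.SQLException;
import java.util.StringJoiner;

// Hypothetical helper (not from the original article).
class RowFormatter {

    // Formats the current row of any ResultSet as tab-separated text.
    static String formatRow(ResultSet rs) throws SQLException {
        ResultSetMetaData meta = rs.getMetaData();
        StringJoiner row = new StringJoiner("\t");
        for (int i = 1; i <= meta.getColumnCount(); i++) { // JDBC columns are 1-based
            row.add(String.valueOf(rs.getString(i)));       // SQL NULL prints as "null"
        }
        return row.toString();
    }

    // Prints every remaining row of rs.
    static void printAll(ResultSet rs) throws SQLException {
        while (rs.next()) {
            System.out.println(formatRow(rs));
        }
    }
}
```

Reading every column with `getString` avoids type mismatches such as calling `getInt` on a string column, at the cost of losing typed values.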

These steps are illustrated in the sample code below.

The code is as follows:

```java
package com.gec.demo;

import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.sql.Statement;

public class HiveJdbcClient {

    private static final String DRIVERNAME = "org.apache.hive.jdbc.HiveDriver";

    /**
     * @param args
     * @throws SQLException
     */
    public static void main(String[] args) throws SQLException {
        // hive-jdbc 0.13.1 predates Hive 1.2.0, so the driver is loaded explicitly
        try {
            Class.forName(DRIVERNAME);
        } catch (ClassNotFoundException e) {
            e.printStackTrace();
            System.exit(1);
        }

        // "hadoop" is the user the queries run as; replace it with your own user.
        // try-with-resources closes the statement and the connection when done.
        try (Connection con = DriverManager.getConnection("jdbc:hive2://hadoop-001:10000/default", "hadoop", "hadoop");
             Statement stmt = con.createStatement()) {
            String tableName = "dept";
            // stmt.execute("drop table if exists " + tableName);
            // stmt.execute("create table " + tableName + " (key int, value string)");

            // show tables
            String sql = "show tables '" + tableName + "'";
            System.out.println("Running: " + sql);
            ResultSet res = stmt.executeQuery(sql);
            if (res.next()) {
                System.out.println(res.getString(1));
            }

            // describe table
            sql = "describe " + tableName;
            System.out.println("Running: " + sql);
            res = stmt.executeQuery(sql);
            while (res.next()) {
                System.out.println(res.getString(1) + "\t" + res.getString(2));
            }

            // load data into table
            // NOTE: filepath has to be local to the hive server
            // NOTE: /tmp/a.txt is a ctrl-A separated file with two fields per line
            // String filepath = "/";
            // sql = "load data local inpath '" + filepath + "' into table " + tableName;
            // System.out.println("Running: " + sql);
            // stmt.execute(sql);

            // select * query
            sql = "select * from " + tableName;
            System.out.println("Running: " + sql);
            res = stmt.executeQuery(sql);
            while (res.next()) {
                // getString works whatever the type of the first column
                System.out.println(res.getString(1) + "\t" + res.getString(2));
            }

            // regular hive query
            sql = "select count(1) from " + tableName;
            System.out.println("Running: " + sql);
            res = stmt.executeQuery(sql);
            while (res.next()) {
                System.out.println(res.getString(1));
            }
        }
    }
}
```
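The sample builds every statement by concatenating `tableName` into the SQL string. JDBC `?` parameters bind values, not identifiers such as table names, so a common safeguard is to check the name against a conservative pattern first. A minimal sketch; the class and method names are hypothetical, and the pattern (letters, digits and underscores, not starting with a digit) is an assumption, narrower than everything Hive accepts (for example backquoted names):

```java
import java.util.Arrays;
import java.util.List;
import java.util.regex.Pattern;

// Hypothetical helper (not from the original article).
class SafeQueries {

    // Assumed conservative rule for a plain table name.
    private static final Pattern TABLE_NAME = Pattern.compile("[A-Za-z_][A-Za-z0-9_]*");

    // Returns the name unchanged, or throws if it does not match the pattern.
    static String requireTableName(String name) {
        if (name == null || !TABLE_NAME.matcher(name).matches()) {
            throw new IllegalArgumentException("Unsafe table name: " + name);
        }
        return name;
    }

    // The same four statements the sample runs, built from a validated name.
    static List<String> sampleQueries(String tableName) {
        String t = requireTableName(tableName);
        return Arrays.asList(
                "show tables '" + t + "'",
                "describe " + t,
                "select * from " + t,
                "select count(1) from " + t);
    }
}
```

A name like `dept; drop table dept` is rejected before any SQL reaches HiveServer2.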

The pom.xml is as follows:

```xml
<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <parent>
        <artifactId>BigdataStudy</artifactId>
        <groupId>com.gec.demo</groupId>
        <version>1.0-SNAPSHOT</version>
    </parent>
    <modelVersion>4.0.0</modelVersion>

    <artifactId>HiveJdbcClient</artifactId>
    <name>HiveJdbcClient</name>
    <!-- FIXME change it to the project's website -->
    <url>http://www.example.com</url>

    <properties>
        <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
        <maven.compiler.source>1.8</maven.compiler.source>
        <maven.compiler.target>1.8</maven.compiler.target>
        <hadoop.version>2.7.2</hadoop.version>
        <!--<hive.version> 0.13.1</hive.version>-->
    </properties>

    <dependencies>
        <dependency>
            <groupId>junit</groupId>
            <artifactId>junit</artifactId>
            <version>4.11</version>
            <scope>test</scope>
        </dependency>
        <dependency>
            <groupId>org.apache.logging.log4j</groupId>
            <artifactId>log4j-core</artifactId>
            <version>2.8.2</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-common</artifactId>
            <version>${hadoop.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-hdfs</artifactId>
            <version>${hadoop.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-client</artifactId>
            <version>${hadoop.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hive</groupId>
            <artifactId>hive-jdbc</artifactId>
            <version>0.13.1</version>
        </dependency>
        <dependency>
            <groupId>org.apache.hive</groupId>
            <artifactId>hive-exec</artifactId>
            <version>0.13.1</version>
        </dependency>
    </dependencies>
</project>
```
