【Keras学习】Sequential模型

序贯(Sequential)模型

序贯模型是多个网络层的线性堆叠,也就是“一条路走到黑”。

可以通过向Sequential模型传递一个layer的list来构造该模型:

from keras.models import Sequential

from keras.layers import Dense, Activation model = Sequential([

Dense(32, units=784),

Activation('relu'),

Dense(10),

Activation('softmax'),

])

也可以通过.add()方法一个个的将layer加入模型中:

model = Sequential()

model.add(Dense(32, input_shape=(784,)))

model.add(Activation('relu'))

指定输入数据的shape

模型需要知道输入数据的shape,因此,Sequential的第一层需要接受一个关于输入数据shape的参数,后面的各个层则可以自动的推导出中间数据的shape,因此不需要为每个层都指定这个参数。有几种方法来为第一层指定输入数据的shape

传递一个

input_shape的关键字参数给第一层,input_shape是一个tuple类型的数据,其中也可以填入None,如果填入None则表示此位置可能是任何正整数。数据的batch大小不应包含在其中。有些2D层,如

Dense,支持通过指定其输入维度input_dim来隐含的指定输入数据shape,是一个Int类型的数据。一些3D的时域层支持通过参数input_dim和input_length来指定输入shape。如果你需要为输入指定一个固定大小的batch_size(常用于stateful RNN网络),可以传递

batch_size参数到一个层中,例如你想指定输入张量的batch大小是32,数据shape是(6,8),则你需要传递batch_size=32和input_shape=(6,8)。

model = Sequential()

model.add(Dense(32, input_dim=784))

model = Sequential()

model.add(Dense(32, input_shape=(784,)))

编译

在训练模型之前,我们需要通过compile来对学习过程进行配置。compile接收三个参数:

优化器optimizer:该参数可指定为已预定义的优化器名,如

rmsprop、adagrad,或一个Optimizer类的对象,详情见optimizers损失函数loss:该参数为模型试图最小化的目标函数,它可为预定义的损失函数名,如

categorical_crossentropy、mse,也可以为一个损失函数。详情见losses指标列表metrics:对分类问题,我们一般将该列表设置为

metrics=['accuracy']。指标可以是一个预定义指标的名字,也可以是一个用户定制的函数.指标函数应该返回单个张量,或一个完成metric_name - > metric_value映射的字典.请参考性能评估

# For a multi-class classification problem

model.compile(optimizer='rmsprop',

loss='categorical_crossentropy',

metrics=['accuracy']) # For a binary classification problem

model.compile(optimizer='rmsprop',

loss='binary_crossentropy',

metrics=['accuracy']) # For a mean squared error regression problem

model.compile(optimizer='rmsprop',

loss='mse') # For custom metrics

import keras.backend as K def mean_pred(y_true, y_pred):

return K.mean(y_pred) model.compile(optimizer='rmsprop',

loss='binary_crossentropy',

metrics=['accuracy', mean_pred])

训练

Keras以Numpy数组作为输入数据和标签的数据类型。训练模型一般使用fit函数,该函数的详情见这里。下面是一些例子。

# For a single-input model with 2 classes (binary classification): model = Sequential()

model.add(Dense(32, activation='relu', input_dim=100))

model.add(Dense(1, activation='sigmoid'))

model.compile(optimizer='rmsprop',

loss='binary_crossentropy',

metrics=['accuracy']) # Generate dummy data

import numpy as np

data = np.random.random((1000, 100))

labels = np.random.randint(2, size=(1000, 1)) # Train the model, iterating on the data in batches of 32 samples

model.fit(data, labels, epochs=10, batch_size=32)

# For a single-input model with 10 classes (categorical classification): model = Sequential()

model.add(Dense(32, activation='relu', input_dim=100))

model.add(Dense(10, activation='softmax'))

model.compile(optimizer='rmsprop',

loss='categorical_crossentropy',

metrics=['accuracy']) # Generate dummy data

import numpy as np

data = np.random.random((1000, 100))

labels = np.random.randint(10, size=(1000, 1)) # Convert labels to categorical one-hot encoding

one_hot_labels = keras.utils.to_categorical(labels, num_classes=10) # Train the model, iterating on the data in batches of 32 samples

model.fit(data, one_hot_labels, epochs=10, batch_size=32)

例子

这里是一些帮助你开始的例子

在Keras代码包的examples文件夹中,你将找到使用真实数据的示例模型:

- CIFAR10 小图片分类:使用CNN和实时数据提升

- IMDB 电影评论观点分类:使用LSTM处理成序列的词语

- Reuters(路透社)新闻主题分类:使用多层感知器(MLP)

- MNIST手写数字识别:使用多层感知器和CNN

- 字符级文本生成:使用LSTM ...

基于多层感知器的softmax多分类:

from keras.models import Sequential

from keras.layers import Dense, Dropout, Activation

from keras.optimizers import SGD # Generate dummy data

import numpy as np

x_train = np.random.random((1000, 20))

y_train = keras.utils.to_categorical(np.random.randint(10, size=(1000, 1)), num_classes=10)

x_test = np.random.random((100, 20))

y_test = keras.utils.to_categorical(np.random.randint(10, size=(100, 1)), num_classes=10) model = Sequential()

# Dense(64) is a fully-connected layer with 64 hidden units.

# in the first layer, you must specify the expected input data shape:

# here, 20-dimensional vectors.

model.add(Dense(64, activation='relu', input_dim=20))

model.add(Dropout(0.5))

model.add(Dense(64, activation='relu'))

model.add(Dropout(0.5))

model.add(Dense(10, activation='softmax')) sgd = SGD(lr=0.01, decay=1e-6, momentum=0.9, nesterov=True)

model.compile(loss='categorical_crossentropy',

optimizer=sgd,

metrics=['accuracy']) model.fit(x_train, y_train,

epochs=20,

batch_size=128)

score = model.evaluate(x_test, y_test, batch_size=128)

MLP的二分类:

import numpy as np

from keras.models import Sequential

from keras.layers import Dense, Dropout # Generate dummy data

x_train = np.random.random((1000, 20))

y_train = np.random.randint(2, size=(1000, 1))

x_test = np.random.random((100, 20))

y_test = np.random.randint(2, size=(100, 1)) model = Sequential()

model.add(Dense(64, input_dim=20, activation='relu'))

model.add(Dropout(0.5))

model.add(Dense(64, activation='relu'))

model.add(Dropout(0.5))

model.add(Dense(1, activation='sigmoid')) model.compile(loss='binary_crossentropy',

optimizer='rmsprop',

metrics=['accuracy'])

model.fit(x_train, y_train,

epochs=20,

batch_size=128)

score = model.evaluate(x_test, y_test, batch_size=128)

类似VGG的卷积神经网络:

import numpy as np

import keras

from keras.models import Sequential

from keras.layers import Dense, Dropout, Flatten

from keras.layers import Conv2D, MaxPooling2D

from keras.optimizers import SGD # Generate dummy data

x_train = np.random.random((100, 100, 100, 3))

y_train = keras.utils.to_categorical(np.random.randint(10, size=(100, 1)), num_classes=10)

x_test = np.random.random((20, 100, 100, 3))

y_test = keras.utils.to_categorical(np.random.randint(10, size=(20, 1)), num_classes=10) model = Sequential()

# input: 100x100 images with 3 channels -> (100, 100, 3) tensors.

# this applies 32 convolution filters of size 3x3 each.

model.add(Conv2D(32, (3, 3), activation='relu', input_shape=(100, 100, 3)))

model.add(Conv2D(32, (3, 3), activation='relu'))

model.add(MaxPooling2D(pool_size=(2, 2)))

model.add(Dropout(0.25)) model.add(Conv2D(64, (3, 3), activation='relu'))

model.add(Conv2D(64, (3, 3), activation='relu'))

model.add(MaxPooling2D(pool_size=(2, 2)))

model.add(Dropout(0.25)) model.add(Flatten())

model.add(Dense(256, activation='relu'))

model.add(Dropout(0.5))

model.add(Dense(10, activation='softmax')) sgd = SGD(lr=0.01, decay=1e-6, momentum=0.9, nesterov=True)

model.compile(loss='categorical_crossentropy', optimizer=sgd) model.fit(x_train, y_train, batch_size=32, epochs=10)

score = model.evaluate(x_test, y_test, batch_size=32)

使用LSTM的序列分类

from keras.models import Sequential

from keras.layers import Dense, Dropout

from keras.layers import Embedding

from keras.layers import LSTM model = Sequential()

model.add(Embedding(max_features, output_dim=256))

model.add(LSTM(128))

model.add(Dropout(0.5))

model.add(Dense(1, activation='sigmoid')) model.compile(loss='binary_crossentropy',

optimizer='rmsprop',

metrics=['accuracy']) model.fit(x_train, y_train, batch_size=16, epochs=10)

score = model.evaluate(x_test, y_test, batch_size=16)

使用1D卷积的序列分类

from keras.models import Sequential

from keras.layers import Dense, Dropout

from keras.layers import Embedding

from keras.layers import Conv1D, GlobalAveragePooling1D, MaxPooling1D model = Sequential()

model.add(Conv1D(64, 3, activation='relu', input_shape=(seq_length, 100)))

model.add(Conv1D(64, 3, activation='relu'))

model.add(MaxPooling1D(3))

model.add(Conv1D(128, 3, activation='relu'))

model.add(Conv1D(128, 3, activation='relu'))

model.add(GlobalAveragePooling1D())

model.add(Dropout(0.5))

model.add(Dense(1, activation='sigmoid')) model.compile(loss='binary_crossentropy',

optimizer='rmsprop',

metrics=['accuracy']) model.fit(x_train, y_train, batch_size=16, epochs=10)

score = model.evaluate(x_test, y_test, batch_size=16)

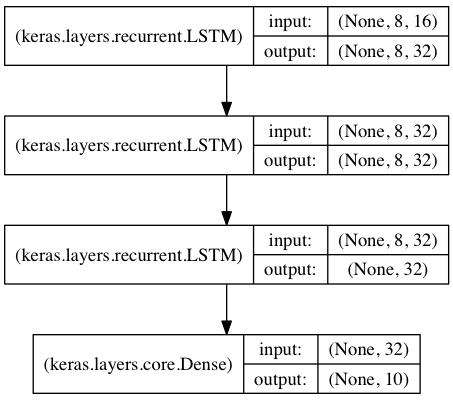

用于序列分类的栈式LSTM

在该模型中,我们将三个LSTM堆叠在一起,是该模型能够学习更高层次的时域特征表示。

开始的两层LSTM返回其全部输出序列,而第三层LSTM只返回其输出序列的最后一步结果,从而其时域维度降低(即将输入序列转换为单个向量)

from keras.models import Sequential

from keras.layers import LSTM, Dense

import numpy as np data_dim = 16

timesteps = 8

num_classes = 10 # expected input data shape: (batch_size, timesteps, data_dim)

model = Sequential()

model.add(LSTM(32, return_sequences=True,

input_shape=(timesteps, data_dim))) # returns a sequence of vectors of dimension 32

model.add(LSTM(32, return_sequences=True)) # returns a sequence of vectors of dimension 32

model.add(LSTM(32)) # return a single vector of dimension 32

model.add(Dense(10, activation='softmax')) model.compile(loss='categorical_crossentropy',

optimizer='rmsprop',

metrics=['accuracy']) # Generate dummy training data

x_train = np.random.random((1000, timesteps, data_dim))

y_train = np.random.random((1000, num_classes)) # Generate dummy validation data

x_val = np.random.random((100, timesteps, data_dim))

y_val = np.random.random((100, num_classes)) model.fit(x_train, y_train,

batch_size=64, epochs=5,

validation_data=(x_val, y_val))

采用stateful LSTM的相同模型

stateful LSTM的特点是,在处理过一个batch的训练数据后,其内部状态(记忆)会被作为下一个batch的训练数据的初始状态。状态LSTM使得我们可以在合理的计算复杂度内处理较长序列

请FAQ中关于stateful LSTM的部分获取更多信息

from keras.models import Sequential

from keras.layers import LSTM, Dense

import numpy as np data_dim = 16

timesteps = 8

num_classes = 10

batch_size = 32 # Expected input batch shape: (batch_size, timesteps, data_dim)

# Note that we have to provide the full batch_input_shape since the network is stateful.

# the sample of index i in batch k is the follow-up for the sample i in batch k-1.

model = Sequential()

model.add(LSTM(32, return_sequences=True, stateful=True,

batch_input_shape=(batch_size, timesteps, data_dim)))

model.add(LSTM(32, return_sequences=True, stateful=True))

model.add(LSTM(32, stateful=True))

model.add(Dense(10, activation='softmax')) model.compile(loss='categorical_crossentropy',

optimizer='rmsprop',

metrics=['accuracy']) # Generate dummy training data

x_train = np.random.random((batch_size * 10, timesteps, data_dim))

y_train = np.random.random((batch_size * 10, num_classes)) # Generate dummy validation data

x_val = np.random.random((batch_size * 3, timesteps, data_dim))

y_val = np.random.random((batch_size * 3, num_classes)) model.fit(x_train, y_train,

batch_size=batch_size, epochs=5, shuffle=False,

validation_data=(x_val, y_val))

【Keras学习】Sequential模型的更多相关文章

- Python机器学习笔记:深入学习Keras中Sequential模型及方法

Sequential 序贯模型 序贯模型是函数式模型的简略版,为最简单的线性.从头到尾的结构顺序,不分叉,是多个网络层的线性堆叠. Keras实现了很多层,包括core核心层,Convolution卷 ...

- Keras Model Sequential模型接口

Sequential 模型 API 在阅读这片文档前,请先阅读 Keras Sequential 模型指引. Sequential 模型方法 compile compile(optimizer, lo ...

- 深入学习Keras中Sequential模型及方法

https://www.cnblogs.com/wj-1314/p/9579490.html

- mnist手写数字识别——深度学习入门项目(tensorflow+keras+Sequential模型)

前言 今天记录一下深度学习的另外一个入门项目——<mnist数据集手写数字识别>,这是一个入门必备的学习案例,主要使用了tensorflow下的keras网络结构的Sequential模型 ...

- keras学习笔记2

1.keras的sequential模型需要知道输入数据的shape,因此,sequential的第一层需要接受一个关于输入数据shape的参数,后面的各个层则可以自动的推导出中间数据的shape,因 ...

- keras模块学习之Sequential模型学习笔记

本笔记由博客园-圆柱模板 博主整理笔记发布,转载需注明,谢谢合作! Sequential是多个网络层的线性堆叠 可以通过向Sequential模型传递一个layer的list来构造该模型: from ...

- keras系列︱Sequential与Model模型、keras基本结构功能(一)

引自:http://blog.csdn.net/sinat_26917383/article/details/72857454 中文文档:http://keras-cn.readthedocs.io/ ...

- Keras之序贯(Sequential)模型

序贯模型(Sequential) 序贯模型是多个网络层的线性堆叠. 可以通过向Sequential模型传递一个layer的list来构造该模型: from Keras.models import Se ...

- Keras 学习之旅(一)

软件环境(Windows): Visual Studio Anaconda CUDA MinGW-w64 conda install -c anaconda mingw libpython CNTK ...

随机推荐

- sprintf函数的用法

说明1:该函数包含在stdio.h的头文件中,使用时需要加入:#include <stdio.h> 说明2:sprintf与printf函数的区别:二者功能相似,但是sprintf函数打印 ...

- SpringData环境搭建代码编写

首先我们在前面的两节已经了解了SpringData是干什么用的?那我们从这节开始我们就开始编码测试SpringData. 1:首先我们从配置文件开始,我们首先需要写一个连接数据库的文件db.prope ...

- (ZT)谷歌大脑科学家 Caffe缔造者 贾扬清 微信讲座完整版

一.讲座正文:大家好!我是贾扬清,目前在Google Brain,今天有幸受雷鸣师兄邀请来和大家聊聊Caffe.没有太多准备,所以讲的不好的地方还请大家谅解.我用的ppt基本上和我们在CVPR上要做的 ...

- 想转行学Java,却又担心自己半路出家成不了大牛

想转行学Java,却又担心自己半路出家成不了大牛 很多人看好Java编程的高薪前景,在自己职业生涯迷茫的时候,想转行学Java,却又担心自己半路出家成不了大牛,赚不到钱,本文就为大家分析一下,转行学J ...

- CentOS7.2 安装Tomcat

Centos默认安装JDK 现在要删除旧版本的jdk,安装新版本jdk 查看现有jdk: [root@localhost 桌面]# rpm -qa | grep jdk java-1.8.0-open ...

- 【postman】谷歌postman插件的基本选项含义

1.form-data: 就是http请求中的multipart/form-data,它会将表单的数据处理为一条消息,以标签为单元,用分隔符分开.既可以上传键值对,也可以上传文件.当上传的字段是文件 ...

- shell 使用ping测试网络

能ping通返回1,不能返回0 ping -c 192.168.1.1 | grep '0 received' | wc -l

- Android之提示Toast

步骤: 设置监听事件步骤1.事件源,如按键 btn_simple2.事件 OnClick3.监听器new OnClickListener3.绑定事件源与事件 setOnClickListener(ne ...

- Java IO流-标准输入输出流

2017-11-05 19:13:21 标准输入输出流:System类中的两个成员变量. 标准输入流(public static final InputStream in):“标准”输入流.此流已打开 ...

- HttpClient将手机上的数据发送到服务器

到官网下载jar包,下载GA发布版本即可 在项目中将httpclient-4.5.5.jar.httpcore-4.4.9.jar.httpmime-4.5.5.jar.commons-logging ...