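ID3 Decision Tree

Decision trees

- Pros: low computational cost, output that is easy to interpret, tolerance of missing values, and the ability to handle irrelevant features.

- Cons: prone to overfitting.

Constructing a decision tree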

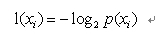

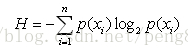

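Entropy measures how disordered a data set is. Formally, it is the expected value of the information.

The information carried by a class $x_i$ is defined as

$$l(x_i) = -\log_2 p(x_i)$$

where $p(x_i)$ is the probability of choosing that class.

Entropy is the expected information over all possible values of all classes:

$$H = -\sum_{i=1}^{n} p(x_i) \log_2 p(x_i)$$

where $n$ is the number of classes.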
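As a quick sanity check on the entropy formula, here is a minimal sketch that evaluates it for a few hand-picked class distributions. The helper name `entropy` and the probability lists are assumptions made for illustration and are not part of the original code.

```python
from math import log2


def entropy(probs):
    """Shannon entropy in bits of a discrete distribution (hypothetical helper)."""
    # Terms with p == 0 contribute nothing, so they are skipped to avoid log2(0).
    return sum(-p * log2(p) for p in probs if p > 0)


pure = entropy([1.0])          # a single class: no uncertainty
coin = entropy([0.5, 0.5])     # two equally likely classes
four = entropy([0.25] * 4)     # four equally likely classes
skewed = entropy([0.4, 0.6])   # e.g. 2 "yes" and 3 "no" out of 5 samples

print(pure, coin, four, round(skewed, 3))
```

A pure set has zero entropy, an even two-class split carries exactly one bit, and the value grows as the classes become more numerous and more evenly mixed.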

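Basic idea of the construction

Information gain = initial Shannon entropy - Shannon entropy after the split (that is, how much the disorder drops).

Create the root node, which holds the full data set.

Split: choose the feature to split on. For each feature, partition the data by that feature's values, compute the Shannon entropy of each subset, and add these up weighted by each subset's share of the data. Compare the result across features and pick the feature with the largest information gain as the split node. The node gets one branch per distinct value of the chosen feature. Repeat the procedure on each branch until no further split is possible.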
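The selection step can be sketched on the five-row sample data set used in the code of this post. This is only an illustration: the helper names `label_entropy` and `info_gain` are hypothetical, and the rows are assumed to hold two binary features followed by the class label.

```python
from collections import Counter
from math import log2

# Sample rows: two binary features followed by the class label (assumed layout).
rows = [
    [1, 1, 'yes'],
    [1, 1, 'yes'],
    [1, 0, 'no'],
    [0, 1, 'no'],
    [0, 1, 'no'],
]


def label_entropy(data):
    """Entropy in bits of the label column (last element of each row)."""
    counts = Counter(row[-1] for row in data)
    total = len(data)
    return sum(-(n / total) * log2(n / total) for n in counts.values())


def info_gain(data, feature):
    """Entropy before the split minus the weighted entropy after it."""
    groups = {}
    for row in data:
        groups.setdefault(row[feature], []).append(row)
    after = sum(len(g) / len(data) * label_entropy(g) for g in groups.values())
    return label_entropy(data) - after


gains = [info_gain(rows, f) for f in range(2)]
best = max(range(2), key=lambda f: gains[f])
print([round(g, 3) for g in gains], best)
```

Splitting on the first feature leaves one pure subset and one mixed subset, while splitting on the second feature leaves a four-row subset that is evenly mixed, so the first feature yields the larger gain and becomes the root.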

That is all the background needed, so on to the code.

    from math import log
    import operator
    import pickle


    def calcShannonEnt(dataSet):
        # Shannon entropy of the class labels: a measure of uncertainty (disorder)
        numEntries = len(dataSet)  # total number of training samples
        labelCounts = {}
        for featVec in dataSet:
            currentLabel = featVec[-1]  # the label is the last column
            if currentLabel not in labelCounts:
                labelCounts[currentLabel] = 0
            labelCounts[currentLabel] += 1  # count the samples per label
        shannonEnt = 0.0
        for key in labelCounts:
            prob = float(labelCounts[key]) / numEntries  # probability of this class
            shannonEnt -= prob * log(prob, 2)
        return shannonEnt


    def createDataSet():
        dataSet = [
            [1, 1, 'yes'],
            [1, 1, 'yes'],
            [1, 0, 'no'],
            [0, 1, 'no'],
            [0, 1, 'no'],
        ]
        labels = ['no surfacing', 'flippers']
        return dataSet, labels


    myDat, labels = createDataSet()
    ret = calcShannonEnt(myDat)
    print(ret)  # about 0.971 for 2 'yes' and 3 'no'


    def splitDataSet(dataSet, axis, value):
        # Keep the rows whose feature `axis` equals `value`, with that column removed
        retDataSet = []
        for featVec in dataSet:
            if featVec[axis] == value:
                reducedFeatVec = featVec[:axis]
                # extend merges the elements in; append would nest the list
                reducedFeatVec.extend(featVec[axis + 1:])
                retDataSet.append(reducedFeatVec)
        return retDataSet


    def chooseBestFeatureToSplit(dataSet):
        numFeatures = len(dataSet[0]) - 1
        baseEntropy = calcShannonEnt(dataSet)
        bestInfoGain = 0.0  # largest information gain seen so far
        bestFeature = -1
        for i in range(numFeatures):
            # Try every feature and keep the one with the largest information gain
            featList = [example[i] for example in dataSet]  # column i
            uniqueVals = set(featList)  # distinct values of this feature
            newEntropy = 0.0
            for value in uniqueVals:
                # Split on each value and add up the weighted entropy of the subsets
                subDataSet = splitDataSet(dataSet, i, value)
                prob = len(subDataSet) / float(len(dataSet))  # share of rows with this value
                newEntropy += prob * calcShannonEnt(subDataSet)
            infoGain = baseEntropy - newEntropy  # information gain: drop in entropy
            if infoGain > bestInfoGain:
                bestInfoGain = infoGain
                bestFeature = i
        return bestFeature


    def majorityCnt(classList):
        # Majority vote, used when no features are left to split on
        classcount = {}
        for vote in classList:
            if vote not in classcount:
                classcount[vote] = 0
            classcount[vote] += 1
        # Sort the (label, count) pairs by count, largest first
        sorteClassCount = sorted(classcount.items(), key=operator.itemgetter(1), reverse=True)
        return sorteClassCount[0][0]


    def createTree(dataSet, labels):
        classList = [example[-1] for example in dataSet]  # class labels
        if classList.count(classList[0]) == len(classList):
            return classList[0]  # all samples share one class: stop splitting
        if len(dataSet[0]) == 1:
            return majorityCnt(classList)  # no features left: stop splitting
        bestFeat = chooseBestFeatureToSplit(dataSet)
        if bestFeat == -1:
            return majorityCnt(classList)  # no feature lowers the entropy
        bestFeatLabel = labels[bestFeat]
        mytree = {bestFeatLabel: {}}
        # Drop the used feature on a copy, so the caller's list stays usable for classify()
        subLabels = labels[:bestFeat] + labels[bestFeat + 1:]
        featValues = [example[bestFeat] for example in dataSet]  # values of the best feature
        uniqueVals = set(featValues)
        for value in uniqueVals:
            # Build a subtree for each distinct value of the best feature
            mytree[bestFeatLabel][value] = createTree(
                splitDataSet(dataSet, bestFeat, value), subLabels[:])
        return mytree


    def classify(inputTree, featLabels, testVec):
        # Predict the class of testVec with a trained tree
        firstStr = list(inputTree)[0]  # feature tested at this node
        secondDict = inputTree[firstStr]
        featIndex = featLabels.index(firstStr)  # position of that feature in testVec
        classLabel = None  # stays None if the value was never seen in training
        for key in secondDict:
            if testVec[featIndex] == key:
                if isinstance(secondDict[key], dict):
                    classLabel = classify(secondDict[key], featLabels, testVec)
                else:
                    classLabel = secondDict[key]
        return classLabel


    def storeTree(inputTree, filename):
        # Save a trained tree; pickle needs the file opened in binary mode
        with open(filename, 'wb') as fw:
            pickle.dump(inputTree, fw)


    def grabTree(filename):
        # Load a previously saved tree
        with open(filename, 'rb') as fr:
            return pickle.load(fr)
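To illustrate how a stored tree is used, the sketch below walks the nested dictionary with a loop instead of recursion and round-trips the tree through pickle in memory. The helper name `predict` is hypothetical, and the tree literal is assumed to be the one built from the sample data (it matches the first sample tree listed in the plotting code of this post).

```python
import pickle

# Nested-dict tree: feature name -> feature value -> subtree or class label.
tree = {'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}
feature_names = ['no surfacing', 'flippers']


def predict(node, names, sample):
    """Iterative walk down the tree (hypothetical helper, not the post's classify)."""
    while isinstance(node, dict):
        feature = next(iter(node))             # the single key is the feature tested here
        value = sample[names.index(feature)]   # the sample's value for that feature
        node = node[feature].get(value)        # None if the value was never seen
    return node


# pickle produces bytes, which is why files must be opened in binary mode.
restored = pickle.loads(pickle.dumps(tree))

print(predict(restored, feature_names, [1, 1]))
print(predict(restored, feature_names, [1, 0]))
print(predict(restored, feature_names, [0, 1]))
```

Only a sample with both features equal to 1 reaches the `'yes'` leaf. A feature value that never occurred in training makes the walk return `None`, so callers may want to fall back to a default class in that case.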

Plotting the decision tree

    import matplotlib.pyplot as plt

    decisionNode = dict(boxstyle="sawtooth", fc="0.8")
    leafNode = dict(boxstyle="round4", fc="0.8")
    arrow_args = dict(arrowstyle="<-")


    def getNumLeafs(myTree):
        numLeafs = 0
        firstStr = list(myTree)[0]
        secondDict = myTree[firstStr]
        for key in secondDict.keys():
            # dictionaries are subtrees; anything else is a leaf node
            if isinstance(secondDict[key], dict):
                numLeafs += getNumLeafs(secondDict[key])
            else:
                numLeafs += 1
        return numLeafs


    def getTreeDepth(myTree):
        maxDepth = 0
        firstStr = list(myTree)[0]
        secondDict = myTree[firstStr]
        for key in secondDict.keys():
            # dictionaries are subtrees; anything else is a leaf node
            if isinstance(secondDict[key], dict):
                thisDepth = 1 + getTreeDepth(secondDict[key])
            else:
                thisDepth = 1
            if thisDepth > maxDepth:
                maxDepth = thisDepth
        return maxDepth


    def plotNode(nodeTxt, centerPt, parentPt, nodeType):
        createPlot.ax1.annotate(nodeTxt, xy=parentPt, xycoords='axes fraction',
                                xytext=centerPt, textcoords='axes fraction',
                                va="center", ha="center", bbox=nodeType, arrowprops=arrow_args)


    def plotMidText(cntrPt, parentPt, txtString):
        # write the branch value halfway between the parent and the child
        xMid = (parentPt[0] - cntrPt[0]) / 2.0 + cntrPt[0]
        yMid = (parentPt[1] - cntrPt[1]) / 2.0 + cntrPt[1]
        createPlot.ax1.text(xMid, yMid, txtString, va="center", ha="center", rotation=30)


    def plotTree(myTree, parentPt, nodeTxt):  # the first key tells you which feature was split on
        numLeafs = getNumLeafs(myTree)  # this determines the x width of this tree
        firstStr = list(myTree)[0]  # the text label for this node
        cntrPt = (plotTree.xOff + (1.0 + float(numLeafs)) / 2.0 / plotTree.totalW, plotTree.yOff)
        plotMidText(cntrPt, parentPt, nodeTxt)
        plotNode(firstStr, cntrPt, parentPt, decisionNode)
        secondDict = myTree[firstStr]
        plotTree.yOff = plotTree.yOff - 1.0 / plotTree.totalD
        for key in secondDict.keys():
            # dictionaries are subtrees; anything else is a leaf node
            if isinstance(secondDict[key], dict):
                plotTree(secondDict[key], cntrPt, str(key))  # recursion
            else:  # it's a leaf node, so draw the leaf
                plotTree.xOff = plotTree.xOff + 1.0 / plotTree.totalW
                plotNode(secondDict[key], (plotTree.xOff, plotTree.yOff), cntrPt, leafNode)
                plotMidText((plotTree.xOff, plotTree.yOff), cntrPt, str(key))
        plotTree.yOff = plotTree.yOff + 1.0 / plotTree.totalD
    # if you do get a dictionary you know it's a tree, and the first element will be another dict


    def createPlot(inTree):
        fig = plt.figure(1, facecolor='white')
        fig.clf()
        axprops = dict(xticks=[], yticks=[])
        createPlot.ax1 = plt.subplot(111, frameon=False, **axprops)  # no ticks
        # createPlot.ax1 = plt.subplot(111, frameon=False)  # ticks for demo purposes
        plotTree.totalW = float(getNumLeafs(inTree))
        plotTree.totalD = float(getTreeDepth(inTree))
        plotTree.xOff = -0.5 / plotTree.totalW
        plotTree.yOff = 1.0
        plotTree(inTree, (0.5, 1.0), '')
        plt.show()


    # def createPlot():
    #     fig = plt.figure(1, facecolor='white')
    #     fig.clf()
    #     createPlot.ax1 = plt.subplot(111, frameon=False)  # ticks for demo purposes
    #     plotNode('a decision node', (0.5, 0.1), (0.1, 0.5), decisionNode)
    #     plotNode('a leaf node', (0.8, 0.1), (0.3, 0.8), leafNode)
    #     plt.show()


    def retrieveTree(i):
        listOfTrees = [
            {'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}},
            {'no surfacing': {0: 'no', 1: {'flippers': {0: {'head': {0: 'no', 1: 'yes'}}, 1: 'no'}}}},
        ]
        return listOfTrees[i]


    thisTree = retrieveTree(1)
    createPlot(thisTree)
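The layout of the plot depends on two numbers that `createPlot` stores in `plotTree.totalW` and `plotTree.totalD`: the leaf count sets the horizontal step between leaves (`1.0/totalW`) and the depth sets the vertical step between levels (`1.0/totalD`). The sketch below computes both in a single pass for the two sample trees, without needing matplotlib. The helper name `tree_shape` is an assumption made for illustration.

```python
def tree_shape(tree):
    """Return (leaf_count, depth) of a nested-dict tree in one pass (hypothetical helper)."""
    feature = next(iter(tree))
    leaves, depth = 0, 0
    for child in tree[feature].values():
        if isinstance(child, dict):
            child_leaves, child_depth = tree_shape(child)
        else:
            child_leaves, child_depth = 1, 0  # a leaf adds one leaf and no extra levels
        leaves += child_leaves
        depth = max(depth, 1 + child_depth)
    return leaves, depth


small = {'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}
large = {'no surfacing': {0: 'no', 1: {'flippers': {0: {'head': {0: 'no', 1: 'yes'}}, 1: 'no'}}}}

for name, t in (('small', small), ('large', large)):
    leaves, depth = tree_shape(t)
    # Horizontal step between leaves and vertical step between levels, in axes fractions.
    print(name, leaves, depth, 1.0 / leaves, 1.0 / depth)
```

The first tree has three leaves over two levels and the second has four leaves over three levels, so the second plot uses smaller steps in both directions.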
