sklearn Study Notes 2

Text classification with Naïve Bayes

In this section we will try to classify newsgroup messages using a dataset that can be retrieved from within scikit-learn. This dataset consists of around 19,000 newsgroup messages from 20 different topics ranging from politics and religion to sports and science.

As usual, we first start by importing our pylab environment:

%pylab inline

Our dataset can be obtained by importing the fetch_20newsgroups function from the sklearn.datasets module. We have to specify whether we want to import part or all of the instances (we will import all of them).

from sklearn.datasets import fetch_20newsgroups

news = fetch_20newsgroups(subset='all')

If we look at the properties of the dataset, we will find that we have the usual ones: DESCR, data, target, and target_names. The difference now is that data holds a list of text contents, instead of a numpy matrix:

print(type(news.data), type(news.target), type(news.target_names))

print(news.target_names)

print(len(news.data))

print(len(news.target))

<class 'list'> <class 'numpy.ndarray'> <class 'list'>

['alt.atheism', 'comp.graphics', 'comp.os.ms-windows.misc', 'comp.sys.ibm.pc.hardware', 'comp.sys.mac.hardware', 'comp.windows.x', 'misc.forsale', 'rec.autos', 'rec.motorcycles', 'rec.sport.baseball', 'rec.sport.hockey', 'sci.crypt', 'sci.electronics', 'sci.med', 'sci.space', 'soc.religion.christian', 'talk.politics.guns', 'talk.politics.mideast', 'talk.politics.misc', 'talk.religion.misc']

18846

18846

If you look at, say, the first instance, you will see the content of a newsgroup message, and you can get its corresponding category:

print(news.data[0])

print(news.target[0], news.target_names[news.target[0]])

From: Mamatha Devineni Ratnam <mr47+@andrew.cmu.edu>
Subject: Pens fans reactions
Organization: Post Office, Carnegie Mellon, Pittsburgh, PA
Lines: 12
NNTP-Posting-Host: po4.andrew.cmu.edu

I am sure some bashers of Pens fans are pretty confused about the lack
of any kind of posts about the recent Pens massacre of the Devils. Actually,
I am bit puzzled too and a bit relieved. However, I am going to put an end
to non-PIttsburghers' relief with a bit of praise for the Pens. Man, they
are killing those Devils worse than I thought. Jagr just showed you why
he is much better than his regular season stats. He is also a lot
fo fun to watch in the playoffs. Bowman should let JAgr have a lot of
fun in the next couple of games since the Pens are going to beat the pulp out of Jersey anyway. I was very disappointed not to see the Islanders lose the final
regular season game. PENS RULE!!!

10 rec.sport.hockey

Preprocessing the data

Our machine learning algorithms can work only on numeric data, so our next step will be to convert our text-based dataset to a numeric dataset. Currently we only have one feature, the text content of the message; we need some function that transforms a text into a meaningful set of numeric features.

Intuitively, one could try to look at which words (or, more precisely, tokens, including numbers or punctuation signs) are used in each of the text categories, and try to characterize each category with the frequency distribution of each of those words. The sklearn.feature_extraction.text module has some useful utilities to build numeric feature vectors from text documents.
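The idea of characterizing each category by the frequency distribution of its words can be sketched in plain Python before bringing in scikit-learn. The labelled messages below are made up for illustration, and whitespace splitting is a deliberately naive stand-in for a real tokenizer:

```python
from collections import Counter

# Made-up labelled messages (stand-ins for newsgroup posts)
messages = [
    ("rec.sport.hockey", "the pens beat the devils"),
    ("rec.sport.hockey", "great game for the pens"),
    ("sci.space", "nasa launched the shuttle"),
]

# Build one word-frequency distribution per category
freq_by_category = {}
for category, text in messages:
    tokens = text.lower().split()  # naive whitespace tokenization, illustration only
    freq_by_category.setdefault(category, Counter()).update(tokens)

for category, freq in freq_by_category.items():
    print(category, freq.most_common(3))
```

Words such as "pens" only show up in the hockey distribution, which is exactly the kind of signal the vectorizers below turn into numeric features.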

Before starting the transformation, we have to partition our data into training and testing sets. The loaded data is already in a random order, so we only have to split it into, for example, 75 percent for training and the remaining 25 percent for testing:
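The split code itself is missing from these notes. A minimal sketch, assuming we simply take the first 75 percent of the (already shuffled) instances for training; split_train_test and SPLIT_PERC are hypothetical names introduced here:

```python
SPLIT_PERC = 0.75  # fraction of instances used for training (hypothetical constant)

def split_train_test(data, target, split_perc=SPLIT_PERC):
    """Hypothetical helper: split parallel sequences by position, without reshuffling."""
    split_size = int(len(data) * split_perc)
    X_train, X_test = data[:split_size], data[split_size:]
    y_train, y_test = target[:split_size], target[split_size:]
    return X_train, X_test, y_train, y_test

# With the newsgroups data loaded earlier:
# X_train, X_test, y_train, y_test = split_train_test(news.data, news.target)
```

If you prefer a library call, sklearn.model_selection.train_test_split(news.data, news.target, test_size=0.25, shuffle=False) produces a similar positional split.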

If you look inside the sklearn.feature_extraction.text module, you will find three different classes that can transform text into numeric features: CountVectorizer, HashingVectorizer, and TfidfVectorizer.

The difference between them resides in the calculations they perform to obtain the numeric features.

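- CountVectorizer basically creates a dictionary of words from the text corpus. Each instance is then converted into a vector of numeric features, where each element is the number of times a particular word appears in the document.

- HashingVectorizer, instead of building and maintaining the dictionary in memory, implements a hashing function that maps tokens to feature indexes, and then computes the counts as CountVectorizer does.

- TfidfVectorizer works like CountVectorizer, but with a more advanced calculation called Term Frequency Inverse Document Frequency (TF-IDF). This is a statistic for measuring the importance of a word in a document or corpus. Intuitively, it looks for words that are more frequent in the current document than in the whole collection of documents. You can see it as a way to normalize the results and to down-weight words that are so frequent that they are not useful for characterizing the instances.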
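To make the three descriptions concrete, here is a small sketch on an invented three-document corpus. It assumes a scikit-learn version where HashingVectorizer accepts alternate_sign; n_features=16 and norm=None are chosen only to keep the hashed output small and easy to read:

```python
from sklearn.feature_extraction.text import CountVectorizer, HashingVectorizer, TfidfVectorizer

# Made-up toy corpus, not taken from the newsgroups data
docs = [
    "the pens beat the devils",
    "the devils lost",
    "nasa launched the shuttle",
]

# CountVectorizer: learns a vocabulary, then counts occurrences per document
count_vect = CountVectorizer()
counts = count_vect.fit_transform(docs)
print(sorted(count_vect.vocabulary_))
print(counts.toarray())

# HashingVectorizer: no stored vocabulary; tokens are hashed into a fixed number of columns.
# alternate_sign=False keeps every value non-negative (MultinomialNB needs that later)
hash_vect = HashingVectorizer(n_features=16, alternate_sign=False, norm=None)
hashed = hash_vect.transform(docs)  # stateless, so no fit step is required
print(hashed.shape)

# TfidfVectorizer: counts re-weighted by inverse document frequency, rows L2-normalized
tfidf_vect = TfidfVectorizer()
tfidf = tfidf_vect.fit_transform(docs)
print(tfidf.toarray().round(2))
```

In the second document, "lost" (seen in one document) ends up with a higher TF-IDF weight than "devils" (two documents), which in turn beats "the" (all three documents), even though each word occurs once there.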

Training a Naïve Bayes classifier

We will create a Naïve Bayes classifier that is composed of a feature vectorizer and the actual Bayes classifier. We will use the MultinomialNB class from the sklearn.naive_bayes module. Scikit-learn has a very useful class called Pipeline (available in the sklearn.pipeline module) that eases the construction of a compound classifier, which consists of several vectorizers and classifiers.

We will create three different classifiers by combining MultinomialNB with the three text vectorizers just mentioned, and compare which one performs better using the default parameters:

from sklearn.naive_bayes import MultinomialNB
from sklearn.pipeline import Pipeline
from sklearn.feature_extraction.text import TfidfVectorizer, HashingVectorizer, CountVectorizer

clf_1 = Pipeline([
    ('vect', CountVectorizer()),
    ('clf', MultinomialNB()),
])

# non_negative=True no longer exists in recent scikit-learn versions;
# alternate_sign=False keeps the hashed features non-negative, as MultinomialNB requires
clf_2 = Pipeline([
    ('vect', HashingVectorizer(alternate_sign=False)),
    ('clf', MultinomialNB()),
])

clf_3 = Pipeline([
    ('vect', TfidfVectorizer()),
    ('clf', MultinomialNB()),
])
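To see what a Pipeline does end to end, here is a toy run of the same CountVectorizer + MultinomialNB combination as clf_1 on four invented documents (the labels 0 = hockey and 1 = space are arbitrary). fit() passes the raw texts through the vectorizer and trains the classifier on the resulting counts; predict() reuses the fitted vocabulary, so unseen words are simply ignored:

```python
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.naive_bayes import MultinomialNB
from sklearn.pipeline import Pipeline

# Made-up training texts and labels (0 = hockey, 1 = space)
train_texts = [
    "the pens beat the devils",
    "hockey playoffs goalie save",
    "nasa launched the shuttle",
    "orbit rocket launch pad",
]
train_labels = [0, 0, 1, 1]

clf = Pipeline([
    ('vect', CountVectorizer()),
    ('clf', MultinomialNB()),
])

# fit(): vect.fit_transform on the raw texts, then clf.fit on the count matrix
clf.fit(train_texts, train_labels)

# predict(): vect.transform with the learned vocabulary, then clf.predict
print(clf.predict(["the goalie made a save", "a rocket launch from nasa"]))
```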

We will define a function that takes a classifier and performs K-fold cross-validation over the specified X and y values:

import numpy as np
from scipy.stats import sem
# sklearn.cross_validation was removed; cross_val_score and KFold now live in sklearn.model_selection
from sklearn.model_selection import cross_val_score, KFold

def evaluate_cross_validation(clf, X, y, K):
    # create a shuffled, reproducible K-fold cross-validation iterator
    cv = KFold(n_splits=K, shuffle=True, random_state=0)
    # by default the score used is the one returned by the score method of the estimator (accuracy)
    scores = cross_val_score(clf, X, y, cv=cv)
    print(scores)
    print("Mean score: {0:.3f} (+/-{1:.3f})".format(np.mean(scores), sem(scores)))
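cross_val_score hides the loop over folds. The sketch below spells out roughly what it does with a KFold iterator and checks the result against cross_val_score itself. manual_cross_val_score is a hypothetical helper; because it indexes X with NumPy index arrays, it uses the Iris data bundled with scikit-learn (non-negative features, which MultinomialNB needs) instead of the list of newsgroup texts:

```python
import numpy as np
from sklearn.base import clone
from sklearn.datasets import load_iris
from sklearn.model_selection import KFold, cross_val_score
from sklearn.naive_bayes import MultinomialNB

def manual_cross_val_score(clf, X, y, K):
    """Hypothetical helper: an explicit K-fold loop over NumPy arrays."""
    cv = KFold(n_splits=K, shuffle=True, random_state=0)
    scores = []
    for train_idx, test_idx in cv.split(X):
        model = clone(clf)  # a fresh, unfitted copy for every fold
        model.fit(X[train_idx], y[train_idx])
        scores.append(model.score(X[test_idx], y[test_idx]))  # accuracy by default
    return np.array(scores)

X, y = load_iris(return_X_y=True)
manual = manual_cross_val_score(MultinomialNB(), X, y, 5)
auto = cross_val_score(MultinomialNB(), X, y,
                       cv=KFold(n_splits=5, shuffle=True, random_state=0))
print(manual)
print(auto)
```

Because KFold is given a fixed random_state, both calls see identical folds, so the two score arrays should match.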

Then we will perform a five-fold cross-validation using each one of the classifiers.

clfs = [clf_1, clf_2, clf_3]
for clf in clfs:
    evaluate_cross_validation(clf, news.data, news.target, 5)

[ 0.85782493 0.85725657 0.84664367 0.85911382 0.8458477 ]

Mean score: 0.853 (+/-0.003)

[ 0.75543767 0.77659857 0.77049615 0.78508888 0.76200584]

Mean score: 0.770 (+/-0.005)

[ 0.84482759 0.85990979 0.84558238 0.85990979 0.84213319]

Mean score: 0.850 (+/-0.004)

As you can see, CountVectorizer and TfidfVectorizer performed similarly, and both did much better than HashingVectorizer.

Let's continue with TfidfVectorizer; we could try to improve the results by parsing the text documents into tokens with a different regular expression.

clf_4 = Pipeline([
    ('vect', TfidfVectorizer(
        token_pattern=r"\b[a-z0-9_\-\.]+[a-z][a-z0-9_\-\.]+\b",
    )),
    ('clf', MultinomialNB()),
])

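(This raised SyntaxError: invalid syntax. The cause is the ur"..." string prefix, which Python 3 does not accept; a plain raw string r"..." works instead.)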

The default regular expression, r"(?u)\b\w\w+\b", matches tokens of two or more alphanumeric characters (the underscore included). Perhaps also considering the hyphen and the dot could improve the tokenization and start recognizing tokens such as Wi-Fi and site.com. The new regular expression could be: r"\b[a-z0-9_\-\.]+[a-z][a-z0-9_\-\.]+\b". If you have questions about how to define regular expressions, please refer to the Python re module documentation. Let's try our new classifier:

evaluate_cross_validation(clf_4, news.data, news.target, 5)

We have a slight improvement from 0.84 to 0.85.
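The difference between the two token patterns is easy to see by running them through Python's re module on a made-up sentence. This assumes the vectorizers' default lowercasing (lowercase=True), which is why the new pattern only needs [a-z]:

```python
import re

# scikit-learn's default token_pattern for its text vectorizers
DEFAULT_PATTERN = r"(?u)\b\w\w+\b"
# the alternative pattern from the text, written as r"" rather than ur""
NEW_PATTERN = r"\b[a-z0-9_\-\.]+[a-z][a-z0-9_\-\.]+\b"

text = "Check the Wi-Fi at site.com, 2 times".lower()  # mimic lowercase=True
print(re.findall(DEFAULT_PATTERN, text))  # Wi-Fi and site.com are split apart
print(re.findall(NEW_PATTERN, text))      # Wi-Fi and site.com survive as single tokens
```

Note that the new pattern also needs at least three characters, with a letter that is neither the first nor the last character, so two-letter words such as "at" and purely numeric tokens such as "2" are dropped as well.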

Another parameter that we can use is stop_words: this argument allows us to pass a list of words we do not want to take into account, such as overly frequent words or words that we do not expect, a priori, to carry information about the particular topic.
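A quick way to see the effect of stop_words is to compare the vocabularies learned with and without a stop list. The two-word list here is made up for illustration; the text loads a real list from stopwords_en.txt, and scikit-learn also ships a built-in English list via stop_words='english':

```python
from sklearn.feature_extraction.text import CountVectorizer

docs = ["the pens beat the devils", "the devils lost again"]

# A tiny, made-up stop list (passed as a list, which every scikit-learn version accepts)
stop_words = ["the", "again"]

plain = CountVectorizer().fit(docs)
filtered = CountVectorizer(stop_words=stop_words).fit(docs)

print(sorted(plain.vocabulary_))     # still contains 'the' and 'again'
print(sorted(filtered.vocabulary_))  # the stop words are gone
```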

We will define a function to load the stop words from a text file as follows:

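def get_stop_words():
    # read one stop word per line from stopwords_en.txt, skipping empty lines
    result = set()
    with open('stopwords_en.txt', 'r') as f:
        for line in f:
            word = line.strip()
            if word:
                result.add(word)
    # recent scikit-learn versions validate stop_words and accept a list, not a set
    return list(result)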

And create a new classifier with this new parameter as follows:

clf_5 = Pipeline([
    ('vect', TfidfVectorizer(
        stop_words=get_stop_words(),
        token_pattern=r"\b[a-z0-9_\-\.]+[a-z][a-z0-9_\-\.]+\b",
    )),
    ('clf', MultinomialNB()),
])

evaluate_cross_validation(clf_5, news.data, news.target, 5)

The preceding code shows another improvement from 0.85 to 0.87.

Let's keep this vectorizer and start looking at the MultinomialNB parameters. This classifier has few parameters to tweak; the most important is alpha, a smoothing parameter. Let's set it to a lower value: instead of setting alpha to 1.0 (the default value), we will set it to 0.01:

clf_6 = Pipeline([
    ('vect', TfidfVectorizer(
        stop_words=get_stop_words(),
        token_pattern=r"\b[a-z0-9_\-\.]+[a-z][a-z0-9_\-\.]+\b",
    )),
    ('clf', MultinomialNB(alpha=0.01)),
])

evaluate_cross_validation(clf_6, X_train, y_train, 5)

The results had an important boost from 0.89 to 0.92, pretty good. At this point, we could continue doing trials by using different values of alpha or doing new modifications of the vectorizer.
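The role of alpha can be checked directly. According to the scikit-learn documentation, MultinomialNB estimates the probability of feature i in class y as (N_yi + alpha) / (N_y + alpha * n), where N_yi is the count of feature i in class y, N_y is the total count for class y, and n is the number of features. The sketch below recomputes this on a made-up count matrix and compares it with the fitted feature_log_prob_:

```python
import numpy as np
from sklearn.naive_bayes import MultinomialNB

# Made-up count matrix: 4 documents x 3 terms; term 2 never occurs in class 0
X = np.array([[3, 0, 0],
              [2, 1, 0],
              [0, 2, 3],
              [1, 0, 4]])
y = np.array([0, 0, 1, 1])

models, thetas = {}, {}
for alpha in (1.0, 0.01):
    models[alpha] = MultinomialNB(alpha=alpha).fit(X, y)
    # N_yi: per-class term counts; N_y: total count per class; n = X.shape[1]
    N_yi = np.array([X[y == c].sum(axis=0) for c in (0, 1)])
    N_y = N_yi.sum(axis=1, keepdims=True)
    thetas[alpha] = (N_yi + alpha) / (N_y + alpha * X.shape[1])
    # print class-0 estimates and whether they match the fitted model
    print(alpha, np.round(thetas[alpha][0], 4),
          np.allclose(np.exp(models[alpha].feature_log_prob_), thetas[alpha]))
```

With alpha=1.0 the term never seen in class 0 still gets probability 1/9, while with alpha=0.01 it drops to about 0.0017, so the estimates stay much closer to the observed frequencies.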

Evaluating the performance

If we decide that we have made enough improvements in our model, we are ready to evaluate its performance on the testing set.

We will define a helper function (see the train_and_evaluate function at http://www.cnblogs.com/iamxyq/p/5912048.html) that trains the model on the entire training set and evaluates its accuracy on both the training and the testing sets.
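The helper is only linked, not reproduced, in these notes. A hypothetical reconstruction that follows the description above (fit on the whole training set, then report accuracy on the training and testing sets) might look like this; the classification report is an extra added here, not something the text describes:

```python
from sklearn import metrics
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.naive_bayes import MultinomialNB
from sklearn.pipeline import Pipeline

def train_and_evaluate(clf, X_train, X_test, y_train, y_test):
    """Hypothetical helper: fit on the full training set, report train/test accuracy."""
    clf.fit(X_train, y_train)
    train_acc = clf.score(X_train, y_train)
    test_acc = clf.score(X_test, y_test)
    print("Accuracy on training set: {0:.3f}".format(train_acc))
    print("Accuracy on testing set: {0:.3f}".format(test_acc))
    # Extra (an assumption, not from the text): per-class precision/recall on the test set
    print(metrics.classification_report(y_test, clf.predict(X_test)))
    return train_acc, test_acc

# Toy usage with made-up texts (0 = hockey, 1 = space)
toy_clf = Pipeline([('vect', CountVectorizer()), ('clf', MultinomialNB())])
result = train_and_evaluate(
    toy_clf,
    ["the pens beat the devils", "hockey playoffs goalie save",
     "nasa launched the shuttle", "orbit rocket launch pad"],
    ["the goalie made a save", "a rocket launch from nasa"],
    [0, 0, 1, 1],
    [0, 1],
)
```

The argument order (clf, X_train, X_test, y_train, y_test) matches the call used below.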

We will evaluate our best classifier so far, clf_6:

train_and_evaluate(clf_6, X_train, X_test, y_train, y_test)

If we look inside the vectorizer, we can see which tokens have been used to create our dictionary:

# get_feature_names() was removed in scikit-learn 1.2; get_feature_names_out() replaces it
print(len(clf_6.named_steps['vect'].get_feature_names_out()))

Let's print the feature names.

print(clf_6.named_steps['vect'].get_feature_names_out())

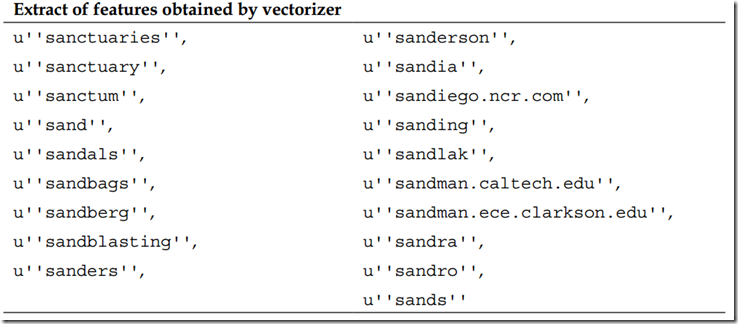

The following table presents an extract of the results:

You can see that some words are semantically very similar, for example, sand and sands, or sanctuaries and sanctuary. Perhaps if plurals and singulars were counted in the same bucket, we would represent the documents better. This is a very common task, which could be solved using stemming, a technique that relates two words having the same lexical root.
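Stemming can be hooked into the vectorizer by wrapping its analyzer. The sketch below subclasses TfidfVectorizer and overrides build_analyzer; naive_stem is a hypothetical toy suffix stripper that only handles the plural forms mentioned above, standing in for a real stemmer such as NLTK's PorterStemmer:

```python
from sklearn.feature_extraction.text import TfidfVectorizer

def naive_stem(token):
    """Hypothetical toy stemmer: strips a few plural suffixes, illustration only."""
    if len(token) > 4 and token.endswith("ies"):
        return token[:-3] + "y"      # sanctuaries -> sanctuary
    if len(token) > 3 and token.endswith("s") and not token.endswith("ss"):
        return token[:-1]            # sands -> sand
    return token

class StemmedTfidfVectorizer(TfidfVectorizer):
    """TfidfVectorizer that stems every token produced by the usual analyzer."""
    def build_analyzer(self):
        analyzer = super().build_analyzer()  # lowercasing + token_pattern as usual
        return lambda doc: [naive_stem(token) for token in analyzer(doc)]

vect = StemmedTfidfVectorizer()
vect.fit(["sand and sands", "a sanctuary, two sanctuaries"])
print(sorted(vect.vocabulary_))  # singular and plural share one feature
```

Since the subclass adds no constructor parameters, it can be dropped into a Pipeline in place of TfidfVectorizer, together with the same stop_words and token_pattern arguments used above.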
