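Load balancing and session stickiness for Tomcat behind nginx or Apache

1. Tomcat behind nginx: load balancing and session stickiness

nginx: 192.168.223.136. Tomcat: 192.168.223.146:8081 and 192.168.223.146:8082 (multiple Tomcat instances on one host serve as the example here).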

upstream backserver {

server 192.168.223.146:8081 weight=1;

server 192.168.223.146:8082 weight=1;

}

location / {

root html;

index index.html index.htm;

proxy_pass http://backserver/;

}
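With no balancing method named in the upstream block, nginx falls back to weighted round-robin. As a rough illustration of how weights translate into a request order, here is a minimal Python sketch of a smooth weighted round-robin picker. It is a simplified model, not nginx's source code, and the function name `smooth_wrr` is made up for this example.

```python
def smooth_wrr(weights, n):
    """Return the first n picks of a smooth weighted round-robin.

    weights: dict mapping a backend address to its integer weight.
    This is an illustrative model only; it ignores failures, max_fails, etc.
    """
    current = {server: 0 for server in weights}
    total = sum(weights.values())
    picks = []
    for _ in range(n):
        # Every backend gains its own weight on each round.
        for server, weight in weights.items():
            current[server] += weight
        # The backend with the highest running score is chosen...
        best = max(current, key=current.get)
        # ...and pays back the total weight so that others get their turn.
        current[best] -= total
        picks.append(best)
    return picks


if __name__ == "__main__":
    backends = {"192.168.223.146:8081": 1, "192.168.223.146:8082": 1}
    print(smooth_wrr(backends, 4))
```

With both weights set to 1, as in the configuration above, the two instances simply take turns.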

Load balancing now works. Next, bind sessions to a backend:

upstream backserver {

ip_hash;

server 192.168.223.146:8081 weight=1;

server 192.168.223.146:8082 weight=1;

}

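After this, requests from the same source IP are always sent to the same backend Tomcat instance, no matter how many times the site is visited. (For IPv4, ip_hash keys on the first three octets of the client address.)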
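The effect of ip_hash can be sketched in a few lines of Python. The nginx documentation states that the first three octets of a client IPv4 address form the hashing key; the hash function below (`zlib.crc32`) is only a stand-in chosen for this example, not the one nginx uses, and both function names are hypothetical.

```python
import zlib

BACKENDS = ["192.168.223.146:8081", "192.168.223.146:8082"]


def ip_hash_key(client_ip):
    """Return the first three octets of an IPv4 address, the part ip_hash keys on."""
    return ".".join(client_ip.split(".")[:3])


def pick_backend(client_ip, backends=BACKENDS):
    """Map a client IP to a backend in a stable way.

    crc32 is an assumption standing in for nginx's internal hash;
    only the stability of the mapping matters for this illustration.
    """
    key = ip_hash_key(client_ip).encode()
    return backends[zlib.crc32(key) % len(backends)]


if __name__ == "__main__":
    # Two clients in the same /24 share a key, so they share a backend.
    print(pick_backend("192.168.223.1") == pick_backend("192.168.223.200"))
```

One practical consequence: clients that sit behind the same NAT or in the same /24 network all land on the same Tomcat instance, so the load may be less even than with plain round-robin.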

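2. Tomcat behind Apache: load balancing and session stickiness (Apache talks to Tomcat over HTTP)

httpd 2.4 or later, compiled from source, on 192.168.223.136. Tomcat is still the multi-instance setup at 192.168.223.146:8081 and 192.168.223.146:8082.

httpd configuration: vhosts.conf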

<proxy balancer://lbcluster>

# route must match the jvmRoute set on the Engine element in the backend Tomcat's configuration: <Engine name="Catalina" defaultHost="localhost" jvmRoute="tomcat1">

BalancerMember http://192.168.223.146:8081 loadfactor=1 route=tomcat1

BalancerMember http://192.168.223.146:8082 loadfactor=1 route=tomcat2

</proxy>

<VirtualHost *:80>

ServerName 192.168.223.136

proxyVia On

ProxyRequests Off

ProxyPreserveHost On

<Proxy *>

Require all granted

</Proxy>

ProxyPass / balancer://lbcluster/

ProxyPassReverse / balancer://lbcluster/

<Location />

Require all granted

</Location>

</VirtualHost>

After applying this configuration, port 80 never showed up as listening, so I checked the error log:

[root@node1 ~]# tail -f /usr/local/apache2.4/logs/error_log

[Wed Aug 09 13:41:53.523457 2017] [mpm_prefork:notice] [pid 85758] AH00169: caught SIGTERM, shutting down

[Thu Aug 10 09:54:14.263767 2017] [proxy_balancer:emerg] [pid 88118] AH01177: Failed to lookup provider 'shm' for 'slotmem': is mod_slotmem_shm loaded??

[Thu Aug 10 09:54:14.263926 2017] [:emerg] [pid 88118] AH00020: Configuration Failed, exiting

[Thu Aug 10 09:54:26.284340 2017] [proxy_balancer:emerg] [pid 88123] AH01177: Failed to lookup provider 'shm' for 'slotmem': is mod_slotmem_shm loaded??

[Thu Aug 10 09:54:26.284427 2017] [:emerg] [pid 88123] AH00020: Configuration Failed, exiting

[Thu Aug 10 09:55:07.894942 2017] [proxy_balancer:emerg] [pid 88135] AH01177: Failed to lookup provider 'shm' for 'slotmem': is mod_slotmem_shm loaded??

[Thu Aug 10 09:55:07.895056 2017] [:emerg] [pid 88135] AH00020: Configuration Failed, exiting

[Thu Aug 10 09:57:00.483255 2017] [proxy:crit] [pid 88148] AH02432: Cannot find LB Method: byrequests

[Thu Aug 10 09:57:00.483388 2017] [proxy_balancer:emerg] [pid 88148] (22)Invalid argument: AH01183: Cannot share balancer

[Thu Aug 10 09:57:00.483420 2017] [:emerg] [pid 88148] AH00020: Configuration Failed, exiting

Following the error messages, enable the corresponding modules in the configuration file:

LoadModule proxy_module modules/mod_proxy.so

LoadModule proxy_http_module modules/mod_proxy_http.so

LoadModule proxy_balancer_module modules/mod_proxy_balancer.so

LoadModule slotmem_shm_module modules/mod_slotmem_shm.so

LoadModule lbmethod_byrequests_module modules/mod_lbmethod_byrequests.so
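The mapping from log message to missing module can be written down explicitly. The Python sketch below scans error_log lines for the two error codes seen in the log above and returns the LoadModule lines that resolved them here. The table only covers this one case (for example, AH02432 would point at a different mod_lbmethod_* module if another balancing method were configured), and the helper name `suggest_modules` is made up for this example.

```python
import re

# Error codes from the log above, mapped to the LoadModule line that fixed them here.
MISSING_MODULE_HINTS = {
    "AH01177": "LoadModule slotmem_shm_module modules/mod_slotmem_shm.so",
    "AH02432": "LoadModule lbmethod_byrequests_module modules/mod_lbmethod_byrequests.so",
}


def suggest_modules(log_lines):
    """Return the LoadModule lines hinted at by known error codes, without duplicates."""
    suggestions = []
    for line in log_lines:
        match = re.search(r"\b(AH\d{5})\b", line)
        if not match:
            continue
        hint = MISSING_MODULE_HINTS.get(match.group(1))
        if hint and hint not in suggestions:
            suggestions.append(hint)
    return suggestions


if __name__ == "__main__":
    sample = [
        "[proxy_balancer:emerg] [pid 88118] AH01177: Failed to lookup provider 'shm' for 'slotmem': is mod_slotmem_shm loaded??",
        "[proxy:crit] [pid 88148] AH02432: Cannot find LB Method: byrequests",
    ]
    for line in suggest_modules(sample):
        print(line)
```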

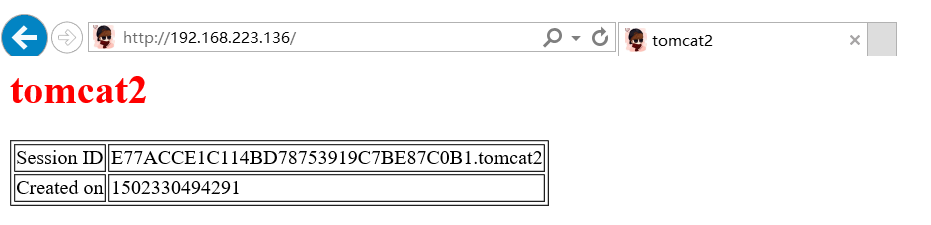

With that fixed, start the service and visit the site:

Load balancing now works. Next, bind sessions to a backend:

Header add Set-Cookie "ROUTEID=.%{BALANCER_WORKER_ROUTE}e; path=/" env=BALANCER_ROUTE_CHANGED

<proxy balancer://lbcluster>

BalancerMember http://192.168.223.146:8081 loadfactor=1 route=tomcat1

BalancerMember http://192.168.223.146:8082 loadfactor=1 route=tomcat2

ProxySet stickysession=ROUTEID

</proxy>

<VirtualHost *:80>

ServerName 192.168.223.136

proxyVia On

ProxyRequests Off

ProxyPreserveHost On

<Proxy *>

Require all granted

</Proxy>

ProxyPass / balancer://lbcluster/

ProxyPassReverse / balancer://lbcluster/

<Location />

Require all granted

</Location>

</VirtualHost>
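The leading dot in the ROUTEID cookie value is what lets the balancer find the route: the text after the dot is compared with the route= value of each BalancerMember. The Python sketch below models that lookup in a simplified way. It is not mod_proxy_balancer's code, the function names are hypothetical, and taking everything after the first dot is an assumption made for this example.

```python
# route= values from the balancer configuration above, mapped to their members.
MEMBERS = {
    "tomcat1": "http://192.168.223.146:8081",
    "tomcat2": "http://192.168.223.146:8082",
}


def route_from_cookie(cookie_header, name="ROUTEID"):
    """Extract the route from a Cookie header such as 'ROUTEID=.tomcat1'."""
    for pair in cookie_header.split(";"):
        key, _, value = pair.strip().partition("=")
        if key == name:
            # Assumption: the route is whatever follows the first dot in the value.
            _, dot, route = value.partition(".")
            return route if dot and route else None
    return None


def pick_member(cookie_header):
    """Return the sticky member for this request, or None if the balancer must choose."""
    return MEMBERS.get(route_from_cookie(cookie_header))


if __name__ == "__main__":
    print(pick_member("JSESSIONID=ABC123; ROUTEID=.tomcat2"))
```

A request without the cookie, or with a route that matches no member, gets no sticky member and goes through normal load balancing, which is when the Header directive sets a fresh ROUTEID.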

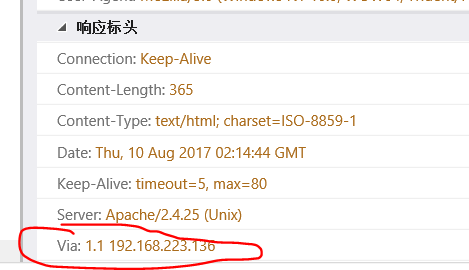

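ProxyVia On: adds a Via header line for the current host to every request and response that passes through the proxy.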

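3. Tomcat behind Apache: load balancing and session stickiness (Apache talks to Tomcat over AJP)

Only the protocol changes: point the BalancerMember URLs at each Tomcat's AJP connector with ajp:// instead of http://, and load mod_proxy_ajp. The rest of the configuration is the same, so it is omitted here.

4. Tomcat behind Apache: load balancing and session stickiness (Apache talks to Tomcat through the mod_jk module)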

1. Compile and install the mod_jk module

wget http://archive.apache.org/dist/tomcat/tomcat-connectors/jk/tomcat-connectors-1.2.41-src.tar.gz

tar -xzf tomcat-connectors-1.2.41-src.tar.gz

cd tomcat-connectors-1.2.41-src/native

./configure --with-apxs=/usr/local/apache2.4/bin/apxs

make && make install

2. Configure the corresponding files

cat mod_jk.conf

LoadModule jk_module modules/mod_jk.so

JkWorkersFile conf/extra/workers.properties

JkLogFile logs/mod_jk.log

JkLogLevel debug

JkMount /* tomcat1

JkMount /status/ stat1

3. workers.properties

cat workers.properties

worker.list=tomcat1,stat1

worker.tomcat1.port=8009

worker.tomcat1.host=192.168.1.155

worker.tomcat1.type=ajp13

worker.tomcat1.lbfactor=1

worker.stat1.type = status

However, the syntax check kept failing:

[root@wadeson conf]# /usr/local/apache2.4/bin/httpd -t

AH00526: Syntax error on line 1 of /usr/local/apache2.4/conf/extra/workers.properties:

Invalid command 'worker.list=tomcat1,stat1', perhaps misspelled or defined by a module not included in the server configuration

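With httpd 2.4 compiled from source, loading mod_jk with these configuration files kept producing this error, and I did not find a fix. The message itself shows that httpd is parsing workers.properties as an httpd configuration file, which typically happens when an Include pattern matches that file (for example a pattern covering everything under conf/extra instead of only *.conf); that was not checked here.

So I switched to the httpd (2.4 or later) installed via yum on CentOS 7, at 192.168.223.147.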

wget http://archive.apache.org/dist/tomcat/tomcat-connectors/jk/tomcat-connectors-1.2.41-src.tar.gz

tar -xzf tomcat-connectors-1.2.41-src.tar.gz

cd tomcat-connectors-1.2.41-src/native

./configure --with-apxs=/usr/bin/apxs

make && make install

[root@wadeson conf.d]# cat mod_jk.conf

LoadModule jk_module modules/mod_jk.so

JkWorkersFile /etc/httpd/conf.d/workers.properties

JkLogFile logs/mod_jk.log

JkLogLevel debug

# The worker name tomcat1 should match the jvmRoute of the backend Tomcat (this matters once sticky sessions are used): <Engine name="Catalina" defaultHost="localhost" jvmRoute="tomcat1">

JkMount /* tomcat1

JkMount /status/ stat1

[root@wadeson conf.d]# cat workers.properties

worker.list=tomcat1,stat1

worker.tomcat1.port=8010

worker.tomcat1.host=192.168.223.146

worker.tomcat1.type=ajp13

worker.tomcat1.lbfactor=1

worker.stat1.type = status

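The configuration is essentially the same. The httpd shipped with CentOS 7 raised no error while the source build kept failing, so the rest of this section uses CentOS 7.

Reverse proxying through mod_jk now works, so the next step is load balancing: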

[root@wadeson conf.d]# cat mod_jk.conf

LoadModule jk_module modules/mod_jk.so

JkWorkersFile /etc/httpd/conf.d/workers.properties

JkLogFile logs/mod_jk.log

JkLogLevel debug

JkMount /* lbcluster1

JkMount /status/ stat1

[root@wadeson conf.d]# cat workers.properties

worker.list=lbcluster1,stat1

worker.tomcat1.port=8010

worker.tomcat1.host=192.168.223.146

worker.tomcat1.type=ajp13

worker.tomcat1.lbfactor=1

worker.tomcat2.port=8011

worker.tomcat2.host=192.168.223.146

worker.tomcat2.type=ajp13

worker.tomcat2.lbfactor=1

worker.lbcluster1.type=lb

worker.lbcluster1.sticky_session=0

worker.lbcluster1.balance_workers = tomcat1,tomcat2

worker.stat1.type = status

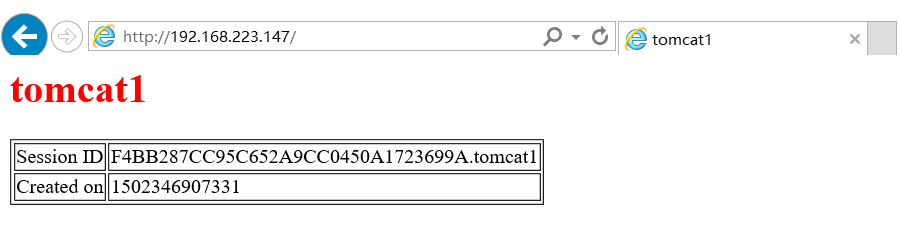

Result when visiting the site:

Now add session stickiness on top of the load-balanced setup:

[root@wadeson conf.d]# cat workers.properties

worker.list=lbcluster1,stat1

worker.tomcat1.port=8010

worker.tomcat1.host=192.168.223.146

worker.tomcat1.type=ajp13

worker.tomcat1.lbfactor=1

worker.tomcat2.port=8011

worker.tomcat2.host=192.168.223.146

worker.tomcat2.type=ajp13

worker.tomcat2.lbfactor=1

worker.lbcluster1.type=lb

# Changing this value from 0 to 1 is all it takes to enable session stickiness

worker.lbcluster1.sticky_session=1

worker.lbcluster1.balance_workers = tomcat1,tomcat2

worker.stat1.type = status
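The difference between sticky_session=0 and sticky_session=1 can be modelled in a few lines of Python. With jvmRoute set, Tomcat appends the route to the session ID (for example `A1B2C3.tomcat2`), and a sticky lb worker sends the request to the balanced worker with that name. The class below is a simplified model, not mod_jk's implementation: plain round-robin stands in for the lbfactor-based balancing (both workers have lbfactor=1 here), and the class and method names are hypothetical.

```python
from itertools import cycle


class LbWorker:
    """Toy model of an lb worker with two or more balanced workers."""

    def __init__(self, balance_workers, sticky_session):
        self.balance_workers = list(balance_workers)
        self.sticky_session = sticky_session
        # Assumption: equal lbfactor values are approximated by round-robin.
        self._round_robin = cycle(self.balance_workers)

    def route(self, jsessionid=None):
        """Return the worker name that would handle a request with this session ID."""
        if self.sticky_session and jsessionid:
            # The jvmRoute suffix follows the dot in the session ID.
            _, dot, suffix = jsessionid.partition(".")
            if dot and suffix in self.balance_workers:
                return suffix
        return next(self._round_robin)


if __name__ == "__main__":
    sticky = LbWorker(["tomcat1", "tomcat2"], sticky_session=True)
    print([sticky.route("A1B2C3.tomcat2") for _ in range(3)])
```

This is also why the worker names in workers.properties need to line up with each Tomcat's jvmRoute: if the suffix matches no balanced worker, the request falls back to ordinary balancing and the session appears to be lost.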

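In summary, httpd 2.4 built from source hit an error with mod_jk that I could not resolve; switching to the yum-packaged httpd (2.4 or later) on CentOS 7 made the error go away.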
