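Managing Flink 1.11.1 start, stop, and crash recovery with Systemd in Standalone mode

When Flink runs in Standalone mode, the jobmanager (jm below) or taskmanager (tm below) may exit unexpectedly. Systemd, which ships with Linux, can manage starting and stopping jm and tm, and can bring them back up promptly when either one fails.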

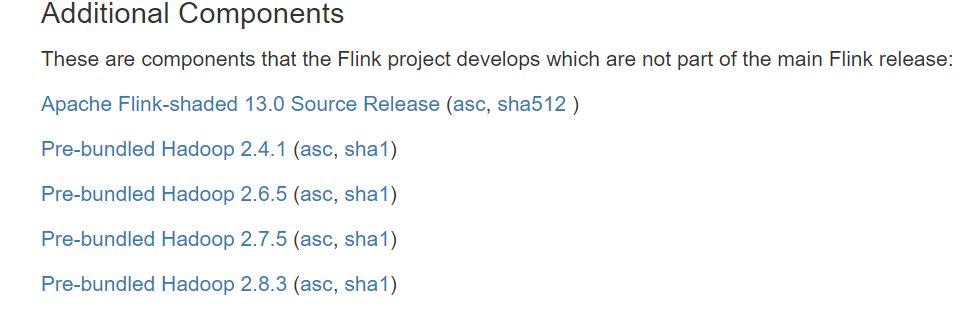

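As of version 1.11, the Flink distribution no longer bundles the Hadoop dependency packages. If you need Hadoop features, there are two options:

[Note] The following assumes that java, flink, and hadoop are all installed under /opt, and that a symlink has been created for each:

1. Set the HADOOP_CLASSPATH environment variable (recommended)

On every node where Flink is installed, add the following to /etc/profile: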

# Hadoop Env
export HADOOP_HOME=/opt/hadoop
export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin
export HADOOP_CLASSPATH=`hadoop classpath`

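Then apply the environment variables to the current shell. Note that source is a shell builtin, so it cannot be run through sudo:

source /etc/profile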
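To confirm that the variable is actually visible after the profile has been sourced, a small check like the following can help. This is a minimal sketch: `check_hadoop_classpath` is a hypothetical helper name, not something provided by Flink or Hadoop.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report whether HADOOP_CLASSPATH is set in this shell
# and how many colon-separated entries it contains.
check_hadoop_classpath() {
  if [ -z "${HADOOP_CLASSPATH:-}" ]; then
    echo "HADOOP_CLASSPATH is empty or unset"
    return 1
  fi
  # Split on ':' and count the non-empty entries.
  count=$(printf '%s' "$HADOOP_CLASSPATH" | tr ':' '\n' | grep -c .)
  echo "HADOOP_CLASSPATH has $count entries"
}
```

Running `check_hadoop_classpath` in a fresh login shell on each node shows whether the /etc/profile change has taken effect there.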

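2. Download the matching flink-shaded-hadoop-2-uber jar and copy it into the lib directory of the Flink installation

Download page: https://flink.apache.org/downloads.html#additional-components

When a service is started by systemd, environment variables set at the system level (for example in /etc/profile) are not available to the .service file, so they must be set explicitly in each .service file: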

1./usr/lib/systemd/system/flink-jobmanager.service

[Unit]
Description=Flink Job Manager
After=syslog.target network.target remote-fs.target nss-lookup.target network-online.target
Requires=network-online.target

[Service]
User=teld
Group=teld
Type=forking
Environment=PATH=/opt/java/bin:/opt/flink/bin:/usr/local/sbin:/usr/local/bin:/sbin:/bin:/usr/sbin:/usr/bin:/root/bin
Environment=JAVA_HOME=/opt/java
Environment=FLINK_HOME=/opt/flink
Environment=HADOOP_CLASSPATH=/opt/hadoop/etc/hadoop:/opt/hadoop/share/hadoop/common/lib/*:/opt/hadoop/share/hadoop/common/*:/opt/hadoop/share/hadoop/hdfs:/opt/hadoop/share/hadoop/hdfs/lib/*:/opt/hadoop/share/hadoop/hdfs/*:/opt/hadoop/share/hadoop/yarn/lib/*:/opt/hadoop/share/hadoop/yarn/*:/opt/hadoop/share/hadoop/mapreduce/lib/*:/opt/hadoop/share/hadoop/mapreduce/*:/opt/hadoop/contrib/capacity-scheduler/*.jar
ExecStart=/opt/flink/bin/jobmanager.sh start
ExecStop=/opt/flink/bin/jobmanager.sh stop
Restart=on-failure

[Install]
WantedBy=multi-user.target

[Note] The value of HADOOP_CLASSPATH is the output of the following command:

hadoop classpath

2./usr/lib/systemd/system/flink-taskmanager.service

[Unit]
Description=Flink Task Manager
After=syslog.target network.target remote-fs.target nss-lookup.target network-online.target
Requires=network-online.target

[Service]
User=teld
Group=teld
Type=forking
Environment=PATH=/opt/java/bin:/opt/flink/bin:/usr/local/sbin:/usr/local/bin:/sbin:/bin:/usr/sbin:/usr/bin:/root/bin
Environment=JAVA_HOME=/opt/java
Environment=FLINK_HOME=/opt/flink
Environment=HADOOP_CLASSPATH=/opt/hadoop/etc/hadoop:/opt/hadoop/share/hadoop/common/lib/*:/opt/hadoop/share/hadoop/common/*:/opt/hadoop/share/hadoop/hdfs:/opt/hadoop/share/hadoop/hdfs/lib/*:/opt/hadoop/share/hadoop/hdfs/*:/opt/hadoop/share/hadoop/yarn/lib/*:/opt/hadoop/share/hadoop/yarn/*:/opt/hadoop/share/hadoop/mapreduce/lib/*:/opt/hadoop/share/hadoop/mapreduce/*:/opt/hadoop/contrib/capacity-scheduler/*.jar
ExecStart=/opt/flink/bin/taskmanager.sh start
ExecStop=/opt/flink/bin/taskmanager.sh stop
Restart=on-failure

[Install]
WantedBy=multi-user.target

[Note] As with the jobmanager unit, the value of HADOOP_CLASSPATH is the output of the following command:

hadoop classpath

After loading the jm and tm unit files above with sudo systemctl daemon-reload, jm and tm can be managed through Systemd, which will also bring them back up promptly if they exit abnormally:

sudo systemctl start flink-jobmanager.service

sudo systemctl stop flink-jobmanager.service

sudo systemctl status flink-jobmanager.service

sudo systemctl start flink-taskmanager.service

sudo systemctl stop flink-taskmanager.service

sudo systemctl status flink-taskmanager.service
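One way to check that Restart=on-failure really brings a service back is to kill its processes and poll until systemd reports the unit active again. The following is only a sketch under assumptions: `verify_auto_restart` and the `SYSTEMCTL` override variable are hypothetical names introduced here, the polling interval is arbitrary, and the function must run with enough privileges to kill the service.

```shell
#!/usr/bin/env bash
# SYSTEMCTL is a hypothetical override so the helper can be dry-run with a stub.
SYSTEMCTL="${SYSTEMCTL:-systemctl}"

# Hypothetical helper: SIGKILL the unit's processes, then poll once per second
# (up to $2 tries, default 10) until the unit is active again.
verify_auto_restart() {
  unit="$1"
  tries="${2:-10}"
  # A SIGKILL is an unclean exit, which Restart=on-failure reacts to.
  "$SYSTEMCTL" kill --signal=SIGKILL "$unit" || return 1
  i=0
  while [ "$i" -lt "$tries" ]; do
    sleep 1
    if "$SYSTEMCTL" is-active --quiet "$unit"; then
      echo "$unit restarted"
      return 0
    fi
    i=$((i + 1))
  done
  echo "$unit did not come back"
  return 1
}
```

For example, `verify_auto_restart flink-taskmanager.service` run as root on a test node should print that the unit restarted. It is best tried on a non-production cluster, since running jobs are interrupted.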

Pitfalls encountered:

1. If checkpointing is enabled in Flink but the HADOOP_CLASSPATH environment variable is not set, submitting a job fails with the following exception:

Caused by: org.apache.flink.util.FlinkRuntimeException: Failed to create checkpoint storage at checkpoint coordinator side.

at org.apache.flink.runtime.checkpoint.CheckpointCoordinator.<init>(CheckpointCoordinator.java:304)

at org.apache.flink.runtime.checkpoint.CheckpointCoordinator.<init>(CheckpointCoordinator.java:223)

at org.apache.flink.runtime.executiongraph.ExecutionGraph.enableCheckpointing(ExecutionGraph.java:483)

at org.apache.flink.runtime.executiongraph.ExecutionGraphBuilder.buildGraph(ExecutionGraphBuilder.java:338)

at org.apache.flink.runtime.scheduler.SchedulerBase.createExecutionGraph(SchedulerBase.java:269)

at org.apache.flink.runtime.scheduler.SchedulerBase.createAndRestoreExecutionGraph(SchedulerBase.java:242)

at org.apache.flink.runtime.scheduler.SchedulerBase.<init>(SchedulerBase.java:229)

at org.apache.flink.runtime.scheduler.DefaultScheduler.<init>(DefaultScheduler.java:119)

at org.apache.flink.runtime.scheduler.DefaultSchedulerFactory.createInstance(DefaultSchedulerFactory.java:103)

at org.apache.flink.runtime.jobmaster.JobMaster.createScheduler(JobMaster.java:284)

at org.apache.flink.runtime.jobmaster.JobMaster.<init>(JobMaster.java:272)

at org.apache.flink.runtime.jobmaster.factories.DefaultJobMasterServiceFactory.createJobMasterService(DefaultJobMasterServiceFactory.java:98)

at org.apache.flink.runtime.jobmaster.factories.DefaultJobMasterServiceFactory.createJobMasterService(DefaultJobMasterServiceFactory.java:40)

at org.apache.flink.runtime.jobmaster.JobManagerRunnerImpl.<init>(JobManagerRunnerImpl.java:140)

at org.apache.flink.runtime.dispatcher.DefaultJobManagerRunnerFactory.createJobManagerRunner(DefaultJobManagerRunnerFactory.java:84)

at org.apache.flink.runtime.dispatcher.Dispatcher.lambda$createJobManagerRunner$6(Dispatcher.java:388)

... 7 more

Caused by: org.apache.flink.core.fs.UnsupportedFileSystemSchemeException: Could not find a file system implementation for scheme 'hdfs'.

The scheme is not directly supported by Flink and no Hadoop file system to support this scheme could be loaded. For a full list of supp

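2. When assigning HADOOP_CLASSPATH in flink-jobmanager.service and flink-taskmanager.service, I tried using backticks, expecting the output of the enclosed Linux command to be assigned to the variable. Systemd does not execute the content of the backticks, however: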

Environment=HADOOP_CLASSPATH=`/opt/hadoop/bin/hadoop classpath`

In the end, the only option was to run hadoop classpath directly and paste its output into the .service file:

Environment=HADOOP_CLASSPATH=/opt/hadoop/etc/hadoop:/opt/hadoop/share/hadoop/common/lib/*:/opt/hadoop/share/hadoop/common/*:/opt/hadoop/share/hadoop/hdfs:/opt/hadoop/share/hadoop/hdfs/lib/*:/opt/hadoop/share/hadoop/hdfs/*:/opt/hadoop/share/hadoop/yarn/lib/*:/opt/hadoop/share/hadoop/yarn/*:/opt/hadoop/share/hadoop/mapreduce/lib/*:/opt/hadoop/share/hadoop/mapreduce/*:/opt/hadoop/contrib/capacity-scheduler/*.jar
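A possible alternative to pasting the value by hand, which this setup did not use, is to write the classpath into a file and load it with systemd's EnvironmentFile= directive. The sketch below assumes a hypothetical helper name `write_flink_env_file` and a hypothetical file location /etc/flink/hadoop.env; neither comes from Flink or Hadoop.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write "HADOOP_CLASSPATH=<value>" to the given file so a
# unit can load it via EnvironmentFile=. $1 is the classpath, $2 the target file.
write_flink_env_file() {
  classpath="$1"
  target="$2"
  if [ -z "$classpath" ]; then
    echo "empty classpath, refusing to write $target" >&2
    return 1
  fi
  printf 'HADOOP_CLASSPATH=%s\n' "$classpath" > "$target"
}
```

It could be called as `write_flink_env_file "$(/opt/hadoop/bin/hadoop classpath)" /etc/flink/hadoop.env`, with the Environment=HADOOP_CLASSPATH=... line in each unit replaced by EnvironmentFile=/etc/flink/hadoop.env, followed by sudo systemctl daemon-reload. The file has to be regenerated whenever the Hadoop installation changes.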
