Classification and Representation

Classification

To attempt classification, one method is to use linear regression and map all predictions greater than 0.5 to 1 and all predictions less than 0.5 to 0. However, this method doesn't work well because classification is not actually a linear function.
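A small experiment illustrates the problem. The sketch below uses made-up one-dimensional data; the values and the helper names `fit_line` and `classify` are assumptions for illustration, not part of the course material. It fits a least-squares line, thresholds it at 0.5, and then repeats the fit after adding a single far-away positive example.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (intercept, slope)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def classify(xs, intercept, slope, threshold=0.5):
    """Threshold the linear prediction at 0.5 to get a 0/1 label."""
    return [1 if intercept + slope * x >= threshold else 0 for x in xs]


# Hypothetical data: the label flips from 0 to 1 between x = 4 and x = 5.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [0, 0, 0, 0, 1, 1, 1, 1]

b0, b1 = fit_line(xs, ys)
labels_clean = classify(xs, b0, b1)

# Add one far-away positive example and refit.
c0, c1 = fit_line(xs + [30], ys + [1])
labels_outlier = classify(xs, c0, c1)

print(labels_clean)    # labels on the original points, clean fit
print(labels_outlier)  # labels on the same points after the outlier is added
```

On this toy data the first fit labels every point correctly, while the second fit flattens the line enough that x = 5 falls below the 0.5 threshold, even though the added example was itself unambiguous.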

The classification problem is just like the regression problem, except that the values we now want to predict take on only a small number of discrete values. For now, we will focus on the binary classification problem, in which y can take on only two values, 0 and 1. (Most of what we say here will also generalize to the multiple-class case.) For instance, if we are trying to build a spam classifier for email, then x(i) may be some features of a piece of email, and y may be 1 if it is a piece of spam mail, and 0 otherwise. Hence, y∈{0,1}. 0 is also called the negative class, and 1 the positive class, and they are sometimes also denoted by the symbols “-” and “+.” Given x(i), the corresponding y(i) is also called the label for the training example.

Hypothesis Representation

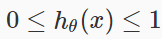

We could approach the classification problem ignoring the fact that y is discrete-valued, and use our old linear regression algorithm to try to predict y given x. However, it is easy to construct examples where this method performs very poorly. Intuitively, it also doesn’t make sense for hθ(x) to take values larger than 1 or smaller than 0 when we know that y ∈ {0, 1}. To fix this, let’s change the form for our hypotheses hθ(x) to satisfy 0 ≤ hθ(x) ≤ 1. This is accomplished by plugging θᵀx into the Logistic Function.

Our new form uses the "Sigmoid Function," also called the "Logistic Function":

$$h_\theta(x) = g(\theta^T x), \qquad z = \theta^T x, \qquad g(z) = \frac{1}{1 + e^{-z}}$$

The following image shows us what the sigmoid function looks like:

The function g(z), shown here, maps any real number to the (0, 1) interval, making it useful for transforming an arbitrary-valued function into a function better suited for classification.

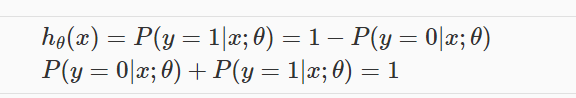
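The logistic function g(z) = 1 / (1 + e^(-z)) can be sketched in a few lines of Python. The branch on the sign of z is an implementation choice assumed here to avoid floating-point overflow for large negative inputs; it is not part of the mathematical definition.

```python
import math


def sigmoid(z):
    """Logistic function g(z) = 1 / (1 + e^(-z))."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    # For negative z, use the equivalent form e^z / (1 + e^z),
    # so that math.exp is never called with a large positive argument.
    ez = math.exp(z)
    return ez / (1.0 + ez)


for z in (-10, -1, 0, 1, 10):
    print(z, sigmoid(z))
```

The printed values rise from near 0 to near 1 and pass through exactly 0.5 at z = 0, matching the S-shaped curve described above.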

hθ(x) gives us the probability that our output is 1. For example, hθ(x) = 0.7 means there is a 70% probability that our output is 1. The probability that our output is 0 is just the complement of the probability that it is 1 (e.g., if the probability that it is 1 is 70%, then the probability that it is 0 is 30%):

$$h_\theta(x) = P(y = 1 \mid x; \theta) = 1 - P(y = 0 \mid x; \theta)$$
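As a rough illustration of this probabilistic reading, the sketch below evaluates the hypothesis for one input. The parameter vector `theta` and the feature values are hypothetical, chosen only to produce a readable number; the input is assumed to carry a leading bias term x0 = 1.

```python
import math


def sigmoid(z):
    """Logistic function g(z) = 1 / (1 + e^(-z))."""
    return 1.0 / (1.0 + math.exp(-z))


def hypothesis(theta, x):
    """h_theta(x) = g(theta^T x); x must already include the bias term x0 = 1."""
    z = sum(t * xi for t, xi in zip(theta, x))
    return sigmoid(z)


theta = [-3.0, 1.0, 1.0]  # hypothetical parameters
x = [1.0, 2.0, 1.5]       # x0 = 1 (bias), x1 = 2, x2 = 1.5

p_one = hypothesis(theta, x)  # estimated P(y = 1 | x; theta)
p_zero = 1.0 - p_one          # estimated P(y = 0 | x; theta)
print(p_one, p_zero)
```

Here theta^T x = 0.5, so the estimated probability of y = 1 is a little over 0.62 and the two probabilities sum to 1.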

Decision Boundary

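In order to get our discrete 0 or 1 classification, we can translate the output of the hypothesis function as follows:

$$h_\theta(x) \geq 0.5 \rightarrow y = 1$$
$$h_\theta(x) < 0.5 \rightarrow y = 0$$

The way our logistic function g behaves is that when its input is greater than or equal to zero, its output is greater than or equal to 0.5:

$$g(z) \geq 0.5 \quad \text{when} \quad z \geq 0$$

Remember:

$$z = 0: \quad e^{0} = 1 \Rightarrow g(z) = 1/2$$
$$z \to \infty: \quad e^{-z} \to 0 \Rightarrow g(z) \to 1$$
$$z \to -\infty: \quad e^{-z} \to \infty \Rightarrow g(z) \to 0$$

So if our input to g is θᵀx, then that means:

$$h_\theta(x) = g(\theta^T x) \geq 0.5 \quad \text{when} \quad \theta^T x \geq 0$$

From these statements we can now say:

$$\theta^T x \geq 0 \Rightarrow y = 1$$
$$\theta^T x < 0 \Rightarrow y = 0$$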

The decision boundary is the line that separates the area where y = 0 and where y = 1. It is created by our hypothesis function.

Example:
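As a hypothetical illustration (the parameter values below are assumed for this sketch), take θ = [5, -1, 0] with x = [1, x1, x2]. Then θᵀx = 5 - x1, so the model predicts y = 1 whenever x1 ≤ 5, and the decision boundary is the vertical line x1 = 5. Because g(z) ≥ 0.5 exactly when z ≥ 0, the prediction can be made from the sign of θᵀx without evaluating the sigmoid at all:

```python
def predict(theta, x):
    """Return 1 when theta^T x >= 0 (equivalently h_theta(x) >= 0.5), else 0."""
    z = sum(t * xi for t, xi in zip(theta, x))
    return 1 if z >= 0 else 0


theta = [5.0, -1.0, 0.0]  # hypothetical parameters: boundary at x1 = 5

# Each point is (x0, x1, x2) with the bias term x0 = 1.
points = [
    (1.0, 3.0, 7.0),   # x1 = 3, left of the boundary
    (1.0, 5.0, -2.0),  # x1 = 5, exactly on the boundary
    (1.0, 6.0, 0.0),   # x1 = 6, right of the boundary
]

labels = [predict(theta, p) for p in points]
print(labels)  # [1, 1, 0]
```

Note that x2 has no effect here because its coefficient is 0, which is why the boundary is a vertical line in the (x1, x2) plane.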

Multiclass Classification: One-vs-all

Now we will approach the classification of data when we have more than two categories. Instead of y ∈ {0, 1}, we will expand our definition so that y ∈ {0, 1, ..., n}.

Since y ∈ {0, 1, ..., n}, we divide our problem into n+1 (+1 because the index starts at 0) binary classification problems; in each one, we predict the probability that y is a member of one of our classes:

$$h_\theta^{(i)}(x) = P(y = i \mid x; \theta), \qquad i = 0, 1, \dots, n$$
$$\text{prediction} = \arg\max_i \, h_\theta^{(i)}(x)$$

The following image shows how one could classify 3 classes:

We are basically choosing one class and then lumping all the others into a single second class. We do this repeatedly, applying binary logistic regression to each case, and then use the hypothesis that returned the highest value as our prediction.

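To summarize: train a logistic regression classifier hθ(x) for each class i to predict the probability that y = i. To make a prediction on a new x, pick the class whose classifier returns the highest value.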
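The prediction step of one-vs-all can be sketched as follows. The three parameter vectors are hypothetical stand-ins for classifiers that would normally be trained separately (training is not shown), and the function name `predict_one_vs_all` is an assumption for this illustration.

```python
import math


def sigmoid(z):
    """Logistic function g(z) = 1 / (1 + e^(-z))."""
    return 1.0 / (1.0 + math.exp(-z))


def predict_one_vs_all(thetas, x):
    """Evaluate one binary classifier per class and return (best_class, probabilities)."""
    probs = [
        sigmoid(sum(t * xi for t, xi in zip(theta, x)))
        for theta in thetas
    ]
    best_class = max(range(len(probs)), key=lambda i: probs[i])
    return best_class, probs


# Hypothetical parameters, one vector per class (class i vs. all others).
thetas = [
    [2.0, -1.0, -1.0],   # class 0
    [-1.0, 1.0, -1.0],   # class 1
    [-1.0, -1.0, 1.0],   # class 2
]
x = [1.0, 0.5, 3.0]      # x0 = 1 (bias), x1 = 0.5, x2 = 3

label, probs = predict_one_vs_all(thetas, x)
print(label, probs)
```

Since each classifier is fit independently, the returned probabilities need not sum to 1; only their ranking is used to choose the class.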
