Appendix 014. Kubernetes Prometheus+Grafana+EFK+Kibana+GlusterFS Integrated Solution

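1 GlusterFS storage cluster deployment

Note: the following steps are abbreviated. For full details, see Appendix 009, Kubernetes Persistent Storage: Standalone GlusterFS Deployment (附009.Kubernetes永久存储之GlusterFS独立部署).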

1.1 Architecture overview

Omitted.

1.2 Planning

| Host | IP | Disk | Notes |
|---|---|---|---|
| k8smaster01 | 172.24.8.71 | none | Kubernetes master node; Heketi host |
| k8smaster02 | 172.24.8.72 | none | Kubernetes master node; Heketi host |
| k8smaster03 | 172.24.8.73 | none | Kubernetes master node; Heketi host |
| k8snode01 | 172.24.8.74 | sdb | Kubernetes worker node; GlusterFS node 01 |
| k8snode02 | 172.24.8.75 | sdb | Kubernetes worker node; GlusterFS node 02 |
| k8snode03 | 172.24.8.76 | sdb | Kubernetes worker node; GlusterFS node 03 |

1.3 Install GlusterFS

1.4 Add the nodes to the trusted storage pool

1.5 Install Heketi

1.6 Configure Heketi


1.7 Configure passwordless SSH login

1.8 Start Heketi

1.9 Configure the Heketi topology


1.10 Cluster management and testing

1.11 Create the StorageClass

    apiVersion: v1
    kind: Secret
    metadata:
      namespace: heketi
    data:
      key: YWRtaW4xMjM=
    type: kubernetes.io/glusterfs
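The `key` value in the Secret is base64-encoded: `YWRtaW4xMjM=` decodes to `admin123`, which is presumably the Heketi admin key set when configuring Heketi. As an illustration, the same Secret can be written with `stringData`, so the API server does the encoding. The name `heketi-secret` is a hypothetical placeholder. Use whatever name the StorageClass's `secretName` parameter points to.

```yaml
# Sketch only: equivalent to the Secret above, written with the plain-text value.
# "heketi-secret" is a hypothetical name.
apiVersion: v1
kind: Secret
metadata:
  name: heketi-secret
  namespace: heketi
type: kubernetes.io/glusterfs
stringData:
  key: admin123   # the API server stores this as base64 "YWRtaW4xMjM="
```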

    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
    parameters:
      resturl: "
      clusterid: "
      restauthenabled: "
      restuser: "
      secretName: "
      secretNamespace: "
      volumetype: "
    provisioner: kubernetes.io/glusterfs
    reclaimPolicy: Delete
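Once the StorageClass exists, a claim that names it should be bound automatically to a GlusterFS volume created through Heketi. Below is a minimal test claim, assuming a StorageClass called `glusterfs-storageclass` and a claim called `test-pvc` (both names are hypothetical). The PVCs later in this document select the class through a beta annotation. This sketch uses the `spec.storageClassName` field instead.

```yaml
# Sketch: a throwaway claim for testing dynamic provisioning through Heketi.
# "glusterfs-storageclass" and "test-pvc" are hypothetical names.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: test-pvc
  namespace: default
spec:
  storageClassName: glusterfs-storageclass
  accessModes:
  - ReadWriteMany     # a GlusterFS volume can be mounted read-write by many nodes
  resources:
    requests:
      storage: 1Gi
```

`kubectl get pvc test-pvc` should eventually report `Bound`. With `reclaimPolicy: Delete`, deleting the claim should also remove the provisioned volume.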

2 Cluster monitoring with Metrics Server

2.1 Enable the aggregation layer
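Metrics Server is served through the API aggregation layer, so kube-apiserver needs the front-proxy (request-header) flags. On a kubeadm-built cluster these flags are usually already present in `/etc/kubernetes/manifests/kube-apiserver.yaml`. The fragment below shows what to look for. It is a sketch that assumes kubeadm's default certificate paths under `/etc/kubernetes/pki`. `--enable-aggregator-routing=true` is needed when kube-proxy does not run on the API server hosts.

```yaml
# Fragment of a kube-apiserver static Pod manifest (kubeadm layout assumed).
# Only the aggregation-layer flags are shown; keep all existing flags.
spec:
  containers:
  - name: kube-apiserver
    command:
    - kube-apiserver
    - --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.crt
    - --requestheader-allowed-names=front-proxy-client
    - --requestheader-extra-headers-prefix=X-Remote-Extra-
    - --requestheader-group-headers=X-Remote-Group
    - --requestheader-username-headers=X-Remote-User
    - --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.crt
    - --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client.key
    # Required when kube-proxy is not running on the API server hosts.
    - --enable-aggregator-routing=true
```

The kubelet restarts a static Pod automatically when its manifest file changes.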

2.2 Get the deployment files

    ...
    image: mirrorgooglecontainers/metrics-server-amd64:v0.3.6
    command:
    - /metrics-server
    - --metric-resolution=30s
    - --kubelet-insecure-tls
    - --kubelet-preferred-address-
    ...

2.3 Deploy
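The deployment manifests register Metrics Server with the aggregation layer through an `APIService` object. The sketch below mirrors the APIService manifest shipped with metrics-server v0.3.x. It assumes the Service is named `metrics-server` in `kube-system`, which is the upstream default. Check this against the downloaded files.

```yaml
# Sketch: how metrics-server v0.3.x registers the metrics.k8s.io API
# with the aggregation layer.
apiVersion: apiregistration.k8s.io/v1beta1
kind: APIService
metadata:
  name: v1beta1.metrics.k8s.io
spec:
  service:
    name: metrics-server       # assumed upstream Service name
    namespace: kube-system
  group: metrics.k8s.io
  version: v1beta1
  insecureSkipTLSVerify: true
  groupPriorityMinimum: 100
  versionPriority: 100
```

Once `kubectl get apiservice v1beta1.metrics.k8s.io` reports it as available, `kubectl top nodes` should return data.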

2.4 Verify

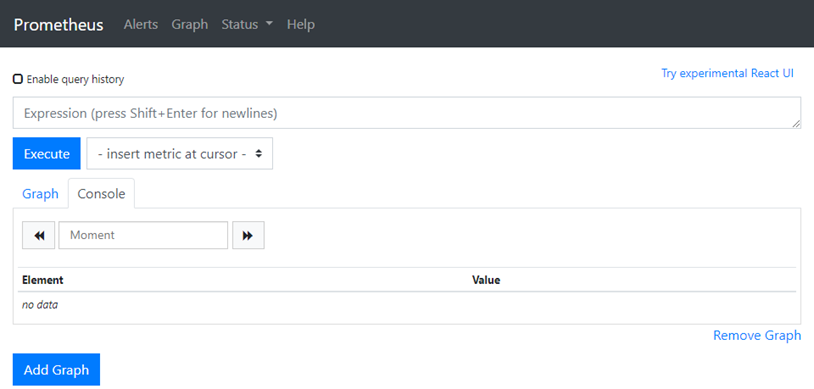

3 Deploying Prometheus

3.1 Get the deployment files

3.2 Create the namespace

    apiVersion: v1
    kind: Namespace
    metadata:

3.3 Create the RBAC resources

    apiVersion: rbac.authorization.k8s.io/v1beta1
    kind: ClusterRole
    metadata:
    rules:
    - apiGroups: [""]
      resources:
      - nodes
      - nodes/
      - services
      - endpoints
      - pods
      verbs: ["
    - apiGroups:
      - extensions
      resources:
      - ingresses
      verbs: ["
    - nonResourceURLs: ["
      verbs: ["
    ---
    apiVersion: v1
    kind: ServiceAccount
    metadata:
      namespace: monitoring
    ---
    apiVersion: rbac.authorization.k8s.io/v1beta1
    kind: ClusterRoleBinding
    metadata:
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
    subjects:
    - kind: ServiceAccount
      namespace: monitoring   # only the namespace needs to be changed
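The `verbs` lists in the manifest above are incomplete. For Kubernetes service discovery, Prometheus needs read access (`get`, `list`, `watch`) to the objects it discovers, plus `get` on `/metrics`. Scraping kubelets through the API server, which the jobs that rewrite `__address__` to `kubernetes.default.svc:443` do, also needs `nodes/proxy`. Below is a minimal sketch using the GA `rbac.authorization.k8s.io/v1` API. The role name `prometheus` is hypothetical. The ServiceAccount name matches `serviceAccountName: prometheus` in the Deployment.

```yaml
# Sketch: read-only permissions for Prometheus Kubernetes service discovery.
# "prometheus" as the ClusterRole/Binding name is hypothetical.
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: prometheus
rules:
- apiGroups: [""]
  resources:
  - nodes
  - nodes/proxy        # scraping kubelets through the API server proxy
  - services
  - endpoints
  - pods
  verbs: ["get", "list", "watch"]
- apiGroups: ["extensions", "networking.k8s.io"]
  resources:
  - ingresses
  verbs: ["get", "list", "watch"]
- nonResourceURLs: ["/metrics"]
  verbs: ["get"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: prometheus
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: prometheus
subjects:
- kind: ServiceAccount
  name: prometheus
  namespace: monitoring
```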

3.4 Create the Prometheus ConfigMap

    apiVersion: v1
    kind: ConfigMap
    metadata:
      labels:
      namespace: monitoring
    data:
      prometheus.yml: |-
        global:
          scrape_interval: 10s
          evaluation_interval: 10s
        scrape_configs:
        - job_name: 'kubernetes-apiservers'
          kubernetes_sd_configs:
          - role: endpoints
          scheme: https
          tls_config:
            ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
          bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
          relabel_configs:
          - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
            action: keep
            regex: default;kubernetes;https

        - job_name: 'kubernetes-nodes'
          scheme: https
          tls_config:
            ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
          bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
          kubernetes_sd_configs:
          - role: node
          relabel_configs:
          - action: labelmap
            regex: __meta_kubernetes_node_label_(.+)
          - target_label: __address__
            replacement: kubernetes.default.svc:443
          - source_labels: [__meta_kubernetes_node_name]
            regex: (.+)
            target_label: __metrics_path__
            replacement: /api/v1/nodes/${1}/

        - job_name: 'kubernetes-cadvisor'
          scheme: https
          tls_config:
            ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
          bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
          kubernetes_sd_configs:
          - role: node
          relabel_configs:
          - action: labelmap
            regex: __meta_kubernetes_node_label_(.+)
          - target_label: __address__
            replacement: kubernetes.default.svc:443
          - source_labels: [__meta_kubernetes_node_name]
            regex: (.+)
            target_label: __metrics_path__
            replacement: /api/v1/nodes/${1}/

        - job_name: 'kubernetes-service-endpoints'
          kubernetes_sd_configs:
          - role: endpoints
          relabel_configs:
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
            action: keep
            regex: true
          # Rules without an explicit action use Prometheus's default action, replace.
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]
            target_label: __scheme__
            regex: (https?)
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
            target_label: __metrics_path__
            regex: (.+)
          - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
            target_label: __address__
            regex: ([^:]+)(?::\d+)?;(\d+)
            replacement: $1:$2
          - action: labelmap
            regex: __meta_kubernetes_service_label_(.+)
          - source_labels: [__meta_kubernetes_namespace]
            target_label: kubernetes_namespace
          - source_labels: [__meta_kubernetes_service_name]
            target_label: kubernetes_name

        - job_name: 'kubernetes-services'
          metrics_path: /probe
          params:
          kubernetes_sd_configs:
          - role: service
          relabel_configs:
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_probe]
            action: keep
            regex: true
          - source_labels: [__address__]
            target_label: __param_target
          - target_label: __address__
            replacement: blackbox-exporter.example.com:9115
          - source_labels: [__param_target]
            target_label:
          - action: labelmap
            regex: __meta_kubernetes_service_label_(.+)
          - source_labels: [__meta_kubernetes_namespace]
            target_label: kubernetes_namespace
          - source_labels: [__meta_kubernetes_service_name]
            target_label: kubernetes_name

        - job_name: 'kubernetes-ingresses'
          kubernetes_sd_configs:
          - role: ingress
          relabel_configs:
          - source_labels: [__meta_kubernetes_ingress_annotation_prometheus_io_probe]
            action: keep
            regex: true
          - source_labels: [__meta_kubernetes_ingress_scheme,__address__,__meta_kubernetes_ingress_path]
            regex: (.+);(.+);(.+)
            replacement: ${1}://${2}${3}
            target_label: __param_target
          - target_label: __address__
            replacement: blackbox-exporter.example.com:9115
          - source_labels: [__param_target]
            target_label:
          - action: labelmap
            regex: __meta_kubernetes_ingress_label_(.+)
          - source_labels: [__meta_kubernetes_namespace]
            target_label: kubernetes_namespace
          - source_labels: [__meta_kubernetes_ingress_name]
            target_label: kubernetes_name

        - job_name: 'kubernetes-pods'
          kubernetes_sd_configs:
          - role: pod
          relabel_configs:
          - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
            action: keep
            regex: true
          - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]
            target_label: __metrics_path__
            regex: (.+)
          - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
            regex: ([^:]+)(?::\d+)?;(\d+)
            replacement: $1:$2
            target_label: __address__
          - action: labelmap
            regex: __meta_kubernetes_pod_label_(.+)
          - source_labels: [__meta_kubernetes_namespace]
            target_label: kubernetes_namespace
          - source_labels: [__meta_kubernetes_pod_name]
            target_label: kubernetes_pod_name
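The `kubernetes-service-endpoints` job keeps only Services annotated with `prometheus.io/scrape: "true"`. It then uses the optional `prometheus.io/scheme`, `prometheus.io/path` and `prometheus.io/port` annotations to build the scrape URL. Below is a sketch of a Service that this job would pick up. The name `my-app`, its namespace, selector and port 8080 are all hypothetical.

```yaml
# Sketch: a Service that the 'kubernetes-service-endpoints' job would scrape.
# Name, namespace, selector and port are hypothetical.
apiVersion: v1
kind: Service
metadata:
  name: my-app
  namespace: default
  annotations:
    prometheus.io/scrape: "true"    # matched by the 'keep' rule (regex: true)
    prometheus.io/scheme: "http"    # copied into __scheme__ (regex: (https?))
    prometheus.io/path: "/metrics"  # copied into __metrics_path__
    prometheus.io/port: "8080"      # replaces the port in __address__
spec:
  selector:
    app: my-app
  ports:
  - name: http-metrics
    port: 8080
    targetPort: 8080
```

The `kubernetes-pods` job applies the same idea to Pods. There, the `prometheus.io/scrape`, `prometheus.io/path` and `prometheus.io/port` annotations go in the Pod template's metadata instead.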

3.5 Create a persistent PVC

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      namespace: monitoring
      annotations:
        volume.beta.kubernetes.io/storage-
    spec:
      accessModes:
      - ReadWriteMany
      resources:
        requests:
          storage: 5Gi

3.6 Deploy Prometheus

    apiVersion: apps/v1beta2
    kind: Deployment
    metadata:
      labels:
      namespace: monitoring
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: prometheus-server
      template:
        metadata:
          labels:
            app: prometheus-server
        spec:
          containers:
          - image: prom/prometheus:v2.14.0
            command:
            - "
            args:
            - "
            - "
            - "
            ports:
            - containerPort: 9090
              protocol: TCP
            volumeMounts:
            - mountPath: /etc/prometheus/
            - mountPath: /prometheus/
          serviceAccountName: prometheus
          imagePullSecrets:
          -
          volumes:
          - configMap:
              defaultMode: 420
          - persistentVolumeClaim:
              claimName: prometheus-pvc
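The container `command`/`args` and the volume names in the Deployment above are incomplete. Below is one way the pieces typically fit together. It assumes that Prometheus reads `prometheus.yml` from the ConfigMap mounted at `/etc/prometheus/` and stores its TSDB on the PVC mounted at `/prometheus/`. The ConfigMap name `prometheus-config`, the Deployment and container names, and the volume names are hypothetical. `--config.file` and `--storage.tsdb.path` are standard Prometheus 2.x flags. The sketch uses `apps/v1`, because Kubernetes 1.16 and later no longer serve `apps/v1beta2` Deployments.

```yaml
# Sketch of the Prometheus Deployment; the missing pieces are filled in by assumption.
# "prometheus-config", "config-volume" and "data-volume" are hypothetical names.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: prometheus-server
  namespace: monitoring
  labels:
    app: prometheus-server
spec:
  replicas: 1
  selector:
    matchLabels:
      app: prometheus-server
  template:
    metadata:
      labels:
        app: prometheus-server
    spec:
      serviceAccountName: prometheus
      containers:
      - name: prometheus
        image: prom/prometheus:v2.14.0
        args:                                   # the image's entrypoint is the prometheus binary
        - --config.file=/etc/prometheus/prometheus.yml
        - --storage.tsdb.path=/prometheus/
        ports:
        - containerPort: 9090
          protocol: TCP
        volumeMounts:
        - name: config-volume
          mountPath: /etc/prometheus/
        - name: data-volume
          mountPath: /prometheus/
      volumes:
      - name: config-volume
        configMap:
          name: prometheus-config
          defaultMode: 420
      - name: data-volume
        persistentVolumeClaim:
          claimName: prometheus-pvc
```

The labels `app: prometheus-server` match the selector of the NodePort Service, so the Service routes to this Pod unchanged.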

3.7 Create the Prometheus Service

    apiVersion: v1
    kind: Service
    metadata:
      labels:
        app: prometheus-service
      namespace: monitoring
    spec:
      type: NodePort
      selector:
        app: prometheus-server
      ports:
      - port: 9090
        targetPort: 9090
        nodePort: 30001

3.8 Verify Prometheus

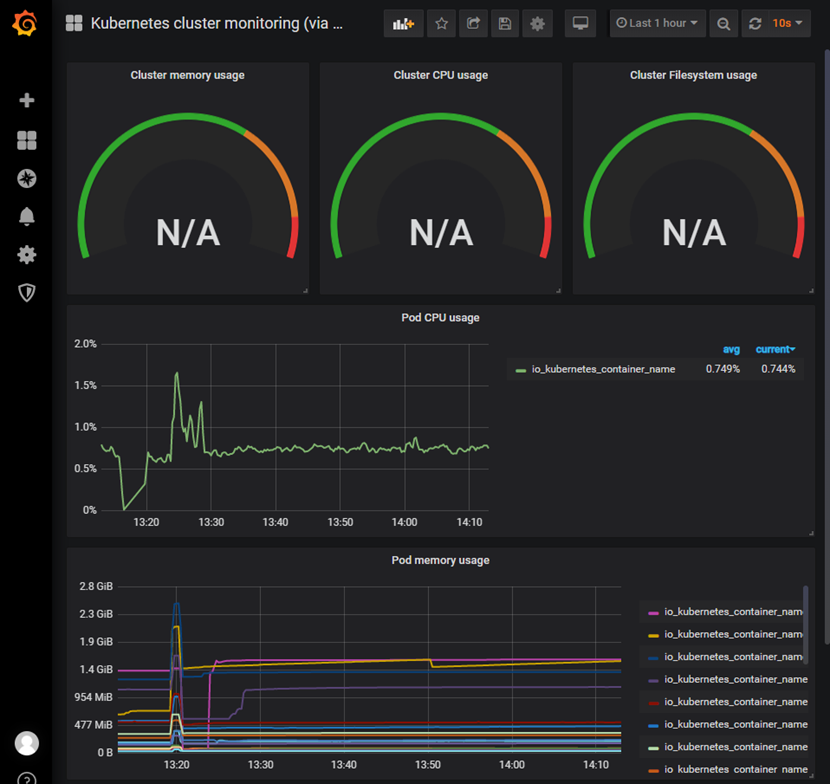

4 Deploying Grafana

4.1 Get the deployment files

4.2 Create a persistent PVC

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      namespace: monitoring
      annotations:
        volume.beta.kubernetes.io/storage-
    spec:
      accessModes:
      - ReadWriteOnce
      resources:
        requests:
          storage: 5Gi

4.3 Deploy Grafana

    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      namespace: monitoring
    spec:
      replicas: 1
      template:
        metadata:
          labels:
            task: monitoring
            k8s-app: grafana
        spec:
          containers:
          - image: grafana/grafana:6.5.0
            imagePullPolicy: IfNotPresent
            ports:
            - containerPort: 3000
              protocol: TCP
            volumeMounts:
            - mountPath: /var/lib/grafana
            env:
            - value: monitoring-influxdb
            - value: ""
            - value: "
            - value: "
            - value: Admin
            - value: /
            readinessProbe:
              httpGet:
                port: 3000
          volumes:
          - persistentVolumeClaim:
              claimName: grafana-
          nodeSelector:
            node-role.kubernetes.io/master: "
          tolerations:
          - key: "
            effect: "
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        kubernetes.io/cluster-service: 'true'
        kubernetes.io/
      annotations:
        prometheus.io/scrape: 'true'
        prometheus.io/tcp-probe: 'true'
        prometheus.io/tcp-probe-port: '80'
      namespace: monitoring
    spec:
      type: NodePort
      ports:
      - port: 80
        targetPort: 3000
        nodePort: 30002
      selector:
        k8s-app: grafana

4.4 Verify Grafana

4.5 Configure Grafana

- Add a data source: omitted.

- Create users: omitted.
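As an alternative to adding the data source in the UI, Grafana 5.0 and later (including 6.5.0) can load data sources from provisioning files under `/etc/grafana/provisioning/datasources/`. Below is a sketch that assumes Prometheus is reachable through the NodePort 30001 Service on master node 172.24.8.71. An in-cluster Service DNS name would work equally well. The file would still need to be mounted into the Grafana container, for example from a ConfigMap, which the Deployment above does not do.

```yaml
# Sketch of a Grafana data source provisioning file, e.g.
# /etc/grafana/provisioning/datasources/prometheus.yaml
apiVersion: 1
datasources:
- name: Prometheus
  type: prometheus
  access: proxy                   # the Grafana backend sends the queries
  url: http://172.24.8.71:30001   # NodePort of the Prometheus Service (assumed reachable)
  isDefault: true
```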

4.6 View the monitoring data

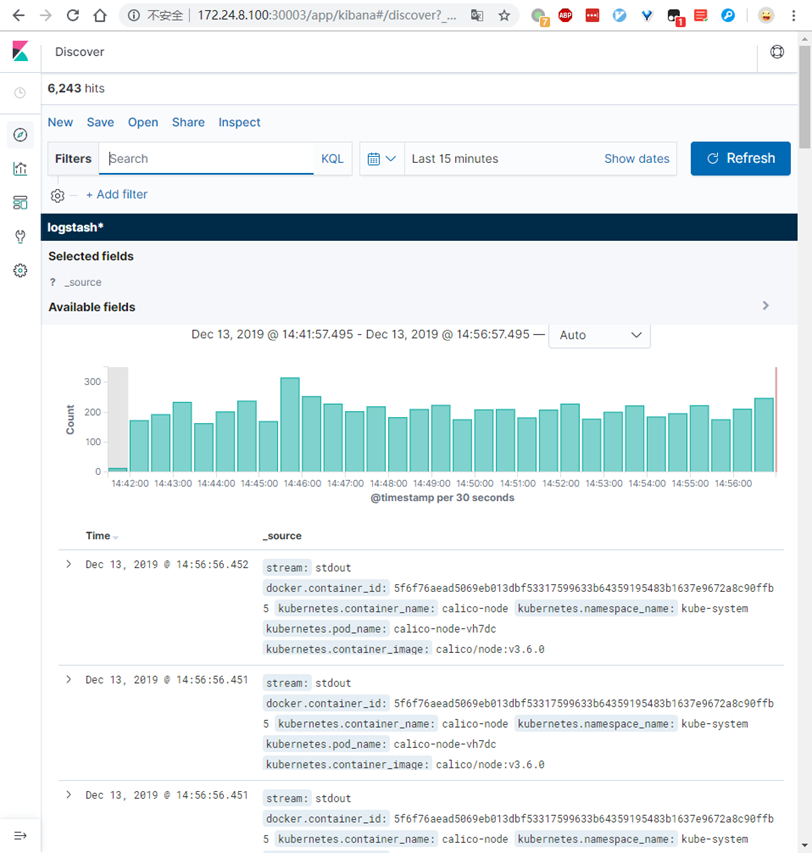

5 Log management

5.1 Get the deployment files

5.2 Change the image sources

    ...
    - image: quay-mirror.qiniu.com/fluentd_elasticsearch/elasticsearch:v7.3.2
      imagePullPolicy: IfNotPresent
    ...

    ...
    image: quay-mirror.qiniu.com/fluentd_elasticsearch/fluentd:v2.7.0
    imagePullPolicy: IfNotPresent
    ...

    ...
    image: docker.elastic.co/kibana/kibana-oss:7.3.2
    imagePullPolicy: IfNotPresent
    ...

5.3 Create a persistent PVC

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      namespace: kube-system
      annotations:
        volume.beta.kubernetes.io/storage-
    spec:
      accessModes:
      - ReadWriteMany
      resources:
        requests:
          storage: 5Gi

5.4 Deploy Elasticsearch

    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: elasticsearch-logging
      namespace: kube-system
      labels:
        k8s-app: elasticsearch-logging
        addonmanager.kubernetes.io/mode: Reconcile
    ---
    kind: ClusterRole
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: elasticsearch-logging
      labels:
        k8s-app: elasticsearch-logging
        addonmanager.kubernetes.io/mode: Reconcile
    rules:
    - apiGroups:
      - ""
      resources:
      - "services"
      - "namespaces"
      - "endpoints"
      verbs:
      - "get"
    ---
    kind: ClusterRoleBinding
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      namespace: kube-system
      name: elasticsearch-logging
      labels:
        k8s-app: elasticsearch-logging
        addonmanager.kubernetes.io/mode: Reconcile
    subjects:
    - kind: ServiceAccount
      name: elasticsearch-logging
      namespace: kube-system
      apiGroup: ""
    roleRef:
      kind: ClusterRole
      name: elasticsearch-logging
      apiGroup: ""
    ---
    apiVersion: apps/v1
    kind: StatefulSet
    metadata:
      name: elasticsearch-logging
      namespace: kube-system
      labels:
        k8s-app: elasticsearch-logging
        version: v7.3.2
        addonmanager.kubernetes.io/mode: Reconcile
    spec:
      serviceName: elasticsearch-logging
      replicas: 1
      selector:
        matchLabels:
          k8s-app: elasticsearch-logging
          version: v7.3.2
      template:
        metadata:
          labels:
            k8s-app: elasticsearch-logging
            version: v7.3.2
        spec:
          serviceAccountName: elasticsearch-logging
          containers:
          - image: quay-mirror.qiniu.com/fluentd_elasticsearch/elasticsearch:v7.3.2
            name: elasticsearch-logging
            imagePullPolicy: IfNotPresent
            resources:
              limits:
                cpu: 1000m
                memory: 3Gi
              requests:
                cpu: 100m
                memory: 3Gi
            ports:
            - containerPort: 9200
              name: db
              protocol: TCP
            - containerPort: 9300
              name: transport
              protocol: TCP
            volumeMounts:
            - name: elasticsearch-logging
              mountPath: /data
            env:
            - name: "NAMESPACE"
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          volumes:
          - name: elasticsearch-logging   # mount the persistent PVC
            persistentVolumeClaim:
              claimName: elasticsearch-pvc
          initContainers:
          - image: alpine:3.6
            command: ["/sbin/sysctl", "-w", "vm.max_map_count=262144"]
            name: elasticsearch-logging-init
            securityContext:
              privileged: true
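The StatefulSet above mounts one pre-created claim, `elasticsearch-pvc`, which works for `replicas: 1`. If Elasticsearch is scaled out later, each replica needs its own volume, and a StatefulSet can request one volume per Pod with `volumeClaimTemplates`. Below is a sketch of the relevant part of `spec`, with a hypothetical StorageClass name. With this approach, the `volumes` entry named `elasticsearch-logging` would be dropped, because the template provides a volume with that name.

```yaml
# Sketch: per-replica storage for the Elasticsearch StatefulSet.
# "glusterfs-storageclass" is a hypothetical StorageClass name.
spec:
  volumeClaimTemplates:
  - metadata:
      name: elasticsearch-logging   # matches the container's volumeMounts name
    spec:
      storageClassName: glusterfs-storageclass
      accessModes:
      - ReadWriteOnce
      resources:
        requests:
          storage: 5Gi
```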

5.5 Deploy the Elasticsearch Service

    apiVersion: v1
    kind: Service
    metadata:
      name: elasticsearch-logging
      namespace: kube-system
      labels:
        k8s-app: elasticsearch-logging
        kubernetes.io/cluster-service: "true"
        addonmanager.kubernetes.io/mode: Reconcile
        kubernetes.io/name: "Elasticsearch"
    spec:
      ports:
      - port: 9200
        protocol: TCP
        targetPort: db
      selector:
        k8s-app: elasticsearch-logging

5.6 Deploy Fluentd

    [root@k8smaster01 fluentd-elasticsearch]# kubectl create -f fluentd-es-ds.yaml   # deploy fluentd

5.7 Deploy Kibana

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: kibana-logging
      namespace: kube-system
      labels:
        k8s-app: kibana-logging
        addonmanager.kubernetes.io/mode: Reconcile
    spec:
      replicas: 1
      selector:
        matchLabels:
          k8s-app: kibana-logging
      template:
        metadata:
          labels:
            k8s-app: kibana-logging
          annotations:
            seccomp.security.alpha.kubernetes.io/pod: 'docker/default'
        spec:
          containers:
          - name: kibana-logging
            image: docker.elastic.co/kibana/kibana-oss:7.3.2
            imagePullPolicy: IfNotPresent
            resources:
              limits:
                cpu: 1000m
              requests:
                cpu: 100m
            env:
            - name: ELASTICSEARCH_HOSTS
              value: http://elasticsearch-logging:9200
            ports:
            - containerPort: 5601
              name: ui
              protocol: TCP

5.8 Deploy the Kibana Service

    apiVersion: v1
    kind: Service
    metadata:
      name: kibana-logging
      namespace: kube-system
      labels:
        k8s-app: kibana-logging
        kubernetes.io/cluster-service: "true"
        addonmanager.kubernetes.io/mode: Reconcile
        kubernetes.io/name: "Kibana"
    spec:
      type: NodePort
      ports:
      - port: 5601
        protocol: TCP
        nodePort: 30003
        targetPort: ui
      selector:
        k8s-app: kibana-logging

5.9 Verify
