CHD-5.3.6集群上hive安装

解压过后:

[hadoop@master CDH5.3.6]$ ls -rlt

total 8

drwxr-xr-x. 17 hadoop hadoop 4096 Jun 2 16:07 hadoop-2.5.0-cdh5.3.6

drwxr-xr-x. 11 hadoop hadoop 4096 Jun 2 16:28 hive-0.13.1-cdh5.3.6

1.配置hive-env.sh

export JAVA_HOME=/usr/local/jdk1.

export HADOOP_HOME=/home/hadoop/CDH5.3.6/hadoop-2.5.-cdh5.3.6

export HIVE_HOME=/home/hadoop/CDH5.3.6/hive-0.13.-cdh5.3.6

export HIVE_CONF_DIR=/home/hadoop/CDH5.3.6/hive-0.13.-cdh5.3.6/conf

2.配置hive-log4j.properties

hive.log.dir=/home/hadoop/CDH5.3.6/hive-0.13.-cdh5.3.6/log

3.配置hive-site.xml

这个寻找Apache-hadoop下的就可以,直接考过来就可以,在conf 目录下

4.配置环境变量

vi .bash_profile

export HADOOP_HOME=/home/hadoop/CDH5.3.6/hadoop-2.5.-cdh5.3.6

export HADOOP_MAPRED_HOME=$HADOOP_HOME

export HADOOP_COMMON_HOME=$HADOOP_HOME

export HADOOP_HDFS_HOME=$HADOOP_HOME

export YARN_HOME=$HADOOP_HOME

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export HIVE_HOME=/home/hadoop/CDH5.3.6/hive-0.13.-cdh5.3.6

export HADOOP_INSTALL=$HADOOP_HOME

export PATH=$PATH:$HADOOP_HOME/sbin:$HADOOP_HOME/bin:$MAVEN_HOME/bin:$HIVE_HOME/bin

5.拷贝MySQL包

cp /home/hadoop/hive/lib/mysql-connector-java-5.1..jar ./

6.hive命令报错:

Exception in thread "main" java.lang.RuntimeException: java.lang.RuntimeException: Unable to instantiate org.apache.hadoop.hive.metastore.HiveMetaStoreClient

解决方法:

格式化MySQL:

schematool -dbType mysql -initSchema

7.进入hive

hive (default)>

> CREATE TABLE dept(

> deptno int,

> dname string,

> loc string)

> ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' STORED AS textfile;

OK

Time taken: 0.57 seconds

8.准备数据:

vi detp.txt

,ACCOUNTING,NEW YORK

,RESEARCH,DALLAS

,SALES,CHICAGO

,OPERATIONS,BOSTON

9.装数据:

load data local inpath '/home/hadoop/tmp/detp.txt' overwrite into table dept;

10.查询:

hive (default)> select count(1) from dept;

Total jobs =

Launching Job out of

Number of reduce tasks determined at compile time:

In order to change the average load for a reducer (in bytes):

set hive.exec.reducers.bytes.per.reducer=<number>

In order to limit the maximum number of reducers:

set hive.exec.reducers.max=<number>

In order to set a constant number of reducers:

set mapreduce.job.reduces=<number>

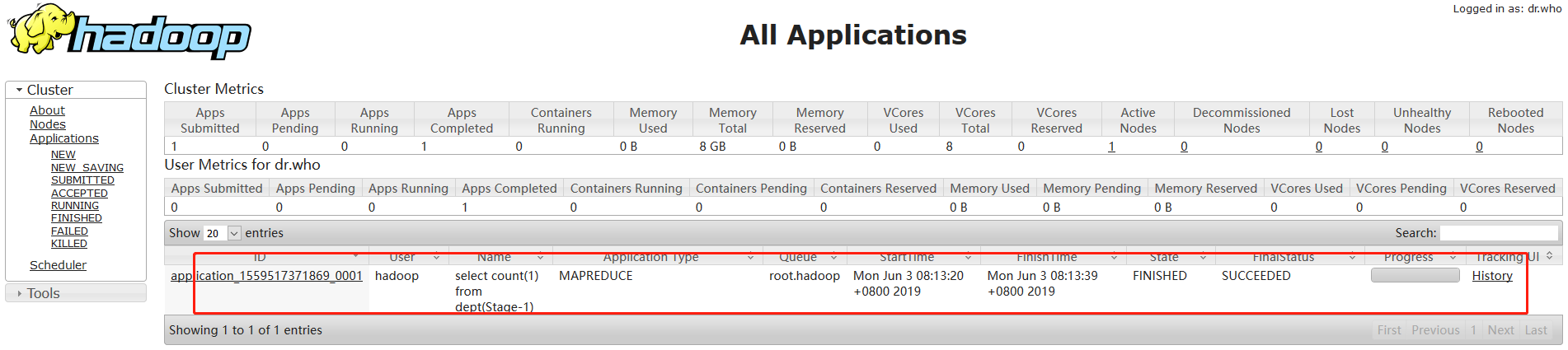

Starting Job = job_1559517371869_0001, Tracking URL = http://master:8088/proxy/application_1559517371869_0001/

Kill Command = /home/hadoop/CDH5.3.6/hadoop-2.5.-cdh5.3.6/bin/hadoop job -kill job_1559517371869_0001

Hadoop job information for Stage-: number of mappers: ; number of reducers:

-- ::, Stage- map = %, reduce = %

-- ::, Stage- map = %, reduce = %, Cumulative CPU 0.79 sec

-- ::, Stage- map = %, reduce = %, Cumulative CPU 1.7 sec

MapReduce Total cumulative CPU time: seconds msec

Ended Job = job_1559517371869_0001

MapReduce Jobs Launched:

Stage-Stage-: Map: Reduce: Cumulative CPU: 1.7 sec HDFS Read: HDFS Write: SUCCESS

Total MapReduce CPU Time Spent: seconds msec

OK

_c0 Time taken: 22.14 seconds, Fetched: row(s)

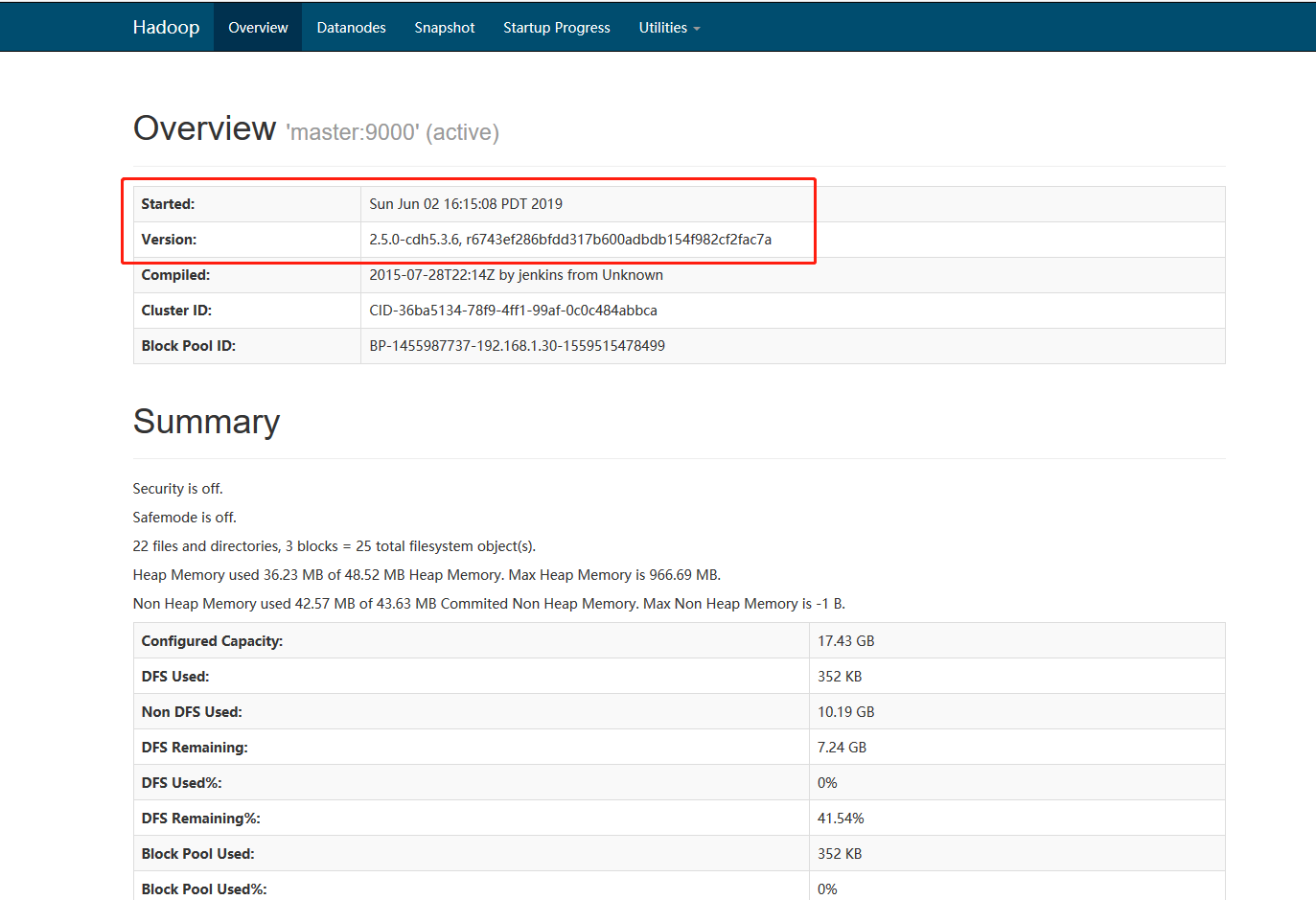

页面验证:

http://192.168.1.30:8088/cluster

http://192.168.1.30:50070/dfshealth.html#tab-overview

CHD-5.3.6集群上hive安装的更多相关文章

- 基于Hadoop集群搭建Hive安装与配置(yum插件安装MySQL)---linux系统《小白篇》

用到的安装包有: apache-hive-1.2.1-bin.tar.gz mysql-connector-java-5.1.49.tar.gz 百度网盘链接: 链接:https://pan.baid ...

- CHD-5.3.6集群上Flume安装

Flume is a distributed, reliable, and available service for efficiently collecting, aggregating, and ...

- CHD-5.3.6集群上sqoop安装

Sqoop(发音:skup)是一款开源的工具,主要用于在Hadoop(Hive)与传统的数据库(mysql.postgresql...)间进行数据的传递,可以将一个关系型数据库(例如 : MySQL ...

- CHD-5.3.6集群上oozie安装

参考文档:http://archive.cloudera.com/cdh5/cdh/5/oozie-4.0.0-cdh5.3.6/DG_QuickStart.html tar -zxvf oozie ...

- hive1.2.1安装步骤(在hadoop2.6.4集群上)

hive1.2.1在hadoop2.6.4集群上的安装 hive只需在一个节点上安装即可,这里再hadoop1上安装 1.上传hive安装包到/usr/local/目录下 2.解压 tar -zxvf ...

- 在Ubuntu16.04集群上手工部署Kubernetes

目前Kubernetes为Ubuntu提供的kube-up脚本,不支持15.10以及16.04这两个使用systemd作为init系统的版本. 这里详细介绍一下如何以非Docker方式在Ubuntu1 ...

- 在Hadoop集群上的HBase配置

之前,我们已经在hadoop集群上配置了Hive,今天我们来配置下Hbase. 一.准备工作 1.ZooKeeper下载地址:http://archive.apache.org/dist/zookee ...

- [转载] 把Nutch爬虫部署到Hadoop集群上

http://f.dataguru.cn/thread-240156-1-1.html 软件版本:Nutch 1.7, Hadoop 1.2.1, CentOS 6.5, JDK 1.7 前面的3篇文 ...

- 把Nutch爬虫部署到Hadoop集群上

原文地址:http://cn.soulmachine.me/blog/20140204/ 把Nutch爬虫部署到Hadoop集群上 Feb 4th, 2014 | Comments 软件版本:Nutc ...

随机推荐

- Build Telemetry for Distributed Services之Jaeger

github链接:https://github.com/jaegertracing/jaeger 官网:https://www.jaegertracing.io/ Jaeger: open sourc ...

- 深入理解Flink ---- End-to-End Exactly-Once语义

上一篇文章所述的Exactly-Once语义是针对Flink系统内部而言的. 那么Flink和外部系统(如Kafka)之间的消息传递如何做到exactly once呢? 问题所在: 如上图,当sink ...

- java -- SSM配置完成后,能访问jsp文件不能访问html文件,报错解析

SSM配置完成后,能访问jsp文件不能访问html文件,报错解析 在确保路径没有任何问题的,情况下,相同的页面,jsp能够正常访问,html却不能正常访问(404). 解决方法: 在web.xml中添 ...

- Nginx+Keepalived双主架构实现

Keepalived+Nginx实现高可用Web负载均衡 Master 192.168.0.69 nginx.keepalived Centos7.4backup 192.168.0.70 nginx ...

- CentOS查看每个进程的网络流量

所需工具nethogs 安装:yum install -y nethogs 使用:nethogs eth0 sudo nethogs -s //按接收流量大小排序 如上图,PID一列就是进程的PID, ...

- Install Virtualbox on CentOS7---(後話,最終還是沒有用virtualbox做VM server ,感覺只適用于桌面)

參考: https://wiki.centos.org/zh-tw/HowTos/Virtualization/VirtualBox cd /etc/yum.repos.d wget http://d ...

- python-Web-flask-视图内容和模板

2 视图内容和模板: 基本使用 #设置cookie值 @app.route('/set_cookie') def set_cookie(): response = make_response(&quo ...

- 当微信小程序遇到AR(二)

当微信小程序遇到AR,会擦出怎么样的火花?期待与激动...... 通过该教程,可以从基础开始打造一个微信小程序的AR框架,所有代码开源,提供大家学习. 本课程需要一定的基础:微信开发者工具,JavaS ...

- No manual entry for printf in section 3

在引入标准库头文件的时候,很多时候要先查询一下该函数所属的库,以及基本用法,在linux系统下,可以使用 man 1-9 函数名称 但是 问题来了,No manual entry for printf ...

- NDK学习笔记-gdb调试

在做开发的时候,难免会crash,那么在这时候需要进行调试,在C/C++的代码调试中,gdb是很常用的gdb在这不做过多介绍,之前在C语言中已经做过总结,这里简要回顾一下 要使用gdb,在编译的时候需 ...