Build an ETL Pipeline With Kafka Connect via JDBC Connectors

This article is an in-depth tutorial for using Kafka to move data from PostgreSQL to Hadoop HDFS via JDBC connections.

Read this eGuide to discover the fundamental differences between iPaaS and dPaaS and how the innovative approach of dPaaS gets to the heart of today’s most pressing integration problems, brought to you in partnership with Liaison.

Tutorial: Discover how to build a pipeline with Kafka leveraging DataDirect PostgreSQL JDBC driver to move the data from PostgreSQL to HDFS. Let’s go streaming!

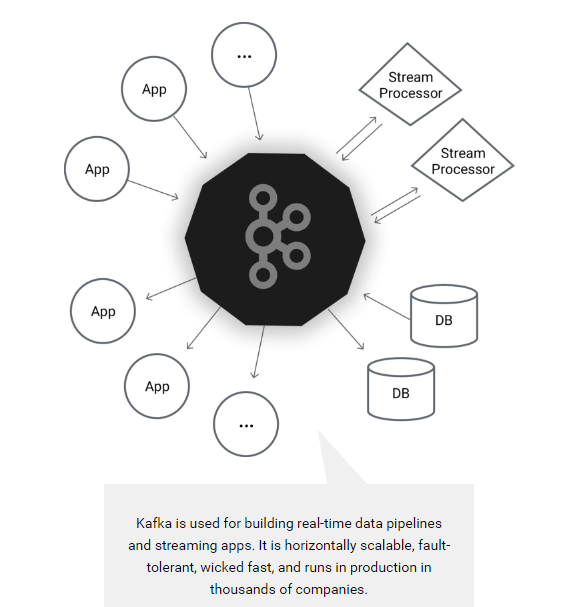

Apache Kafka is an open source distributed streaming platform which enables you to build streaming data pipelines between different applications. You can also build real-time streaming applications that interact with streams of data, focusing on providing a scalable, high throughput and low latency platform to interact with data streams.

Earlier this year, Apache Kafka announced a new tool called Kafka Connect which can helps users to easily move datasets in and out of Kafka using connectors, and it has support for JDBC connectors out of the box! One of the major benefits for DataDirect customers is that you can now easily build an ETL pipeline using Kafka leveraging your DataDirect JDBC drivers. Now you can easily connect and get the data from your data sources into Kafka and export the data from there to another data source.

Image From https://kafka.apache.org/

Environment Setup

Before proceeding any further with this tutorial, make sure that you have installed the following and are configured properly. This tutorial is written assuming you are also working on Ubuntu 16.04 LTS, you have PostgreSQL, Apache Hadoop, and Hive installed.

- Installing Apache Kafka and required toolsTo make the installation process easier for people trying this out for the first time, we will be installing Confluent Platform. This takes care of installing Apache Kafka, Schema Registry and Kafka Connect which includes connectors for moving files, JDBC connectors and HDFS connector for Hadoop.

- To begin with, install Confluent’s public key by running the command:

wget -qO -http://packages.confluent.io/deb/2.0/archive.key | sudo apt-key add - - Now add the repository to your sources.list by running the following command:

sudo add-apt-repository "deb http://packages.confluent.io/deb/2.0 stable main" - Update your package lists and then install the Confluent platform by running the following commands:

sudo apt-get updatesudo apt-get install confluent-platform-2.11.7

- To begin with, install Confluent’s public key by running the command:

- Install DataDirect PostgreSQL JDBC driver

- Download DataDirect PostgreSQL JDBC driver by visiting here.

- Install the PostgreSQL JDBC driver by running the following command:

java -jar PROGRESS_DATADIRECT_JDBC_POSTGRESQL_ALL.jar - Follow the instructions on the screen to install the driver successfully (you can install the driver in evaluation mode where you can try it for 15 days, or in license mode, if you have bought the driver)

- Configuring data sources for Kafka Connect

- Create a new file called postgres.properties, paste the following configuration and save the file. To learn more about the modes that are being used in the below configuration file, visit this page.

name=test-postgres-jdbcconnector.class=io.confluent.connect.jdbc.JdbcSourceConnectortasks.max=1connection.url=jdbc:datadirect:postgresql://<;server>:<port>;User=<user>;Password=<password>;Database=<dbname>mode=timestamp+incrementingincrementing.column.name=<id>timestamp.column.name=<modifiedtimestamp>topic.prefix=test_jdbc_table.whitelist=actor - Create another file called hdfs.properties, paste the following configuration and save the file. To learn more about HDFS connector and configuration options used, visit this page.

name=hdfs-sinkconnector.class=io.confluent.connect.hdfs.HdfsSinkConnectortasks.max=1topics=test_jdbc_actorhdfs.url=hdfs://<;server>:<port>flush.size=2hive.metastore.uris=thrift://<;server>:<port>hive.integration=trueschema.compatibility=BACKWARD - Note that postgres.properties and hdfs.properties have basically the connection configuration details and behavior of the JDBC and HDFS connectors.

- Create a symbolic link for DataDirect Postgres JDBC driver in Hive lib folder by using the following command:

ln -s /path/to/datadirect/lib/postgresql.jar /path/to/hive/lib/postgresql.jar - Also make the DataDirect Postgres JDBC driver available on Kafka Connect process’s CLASSPATH by running the following command:

export CLASSPATH=/path/to/datadirect/lib/postgresql.jar - Start the Hadoop cluster by running following commands:

cd /path/to/hadoop/sbin./start-dfs.sh./start-yarn.sh

- Create a new file called postgres.properties, paste the following configuration and save the file. To learn more about the modes that are being used in the below configuration file, visit this page.

- Configuring and running Kafka Services

- Download the configuration files for Kafka, zookeeper and schema-registry services

- Start the Zookeeper service by providing the zookeeper.properties file path as a parameter by using the command:

zookeeper-server-start /path/to/zookeeper.properties - Start the Kafka service by providing the server.properties file path as a parameter by using the command:

kafka-server-start /path/to/server.properties - Start the Schema registry service by providing the schema-registry.properties file path as a parameter by using the command:

schema-registry-start /path/to/ schema-registry.properties

Ingesting Data Into HDFS using Kafka Connect

To start ingesting data from PostgreSQL, the final thing that you have to do is start Kafka Connect. You can start Kafka Connect by running the following command:

connect-standalone /path/to/connect-avro-standalone.properties \ /path/to/postgres.properties /path/to/hdfs.properties

This will import the data from PostgreSQL to Kafka using DataDirect PostgreSQL JDBC drivers and create a topic with name test_jdbc_actor. Then the data is exported from Kafka to HDFS by reading the topic test_jdbc_actor through the HDFS connector. The data stays in Kafka, so you can reuse it to export to any other data sources.

Next Steps

We hope this tutorial helped you understand on how you can build a simple ETL pipeline using Kafka Connect leveraging DataDirect PostgreSQL JDBC drivers. This tutorial is not limited to PostgreSQL. In fact, you can create ETL pipelines leveraging any of our DataDirect JDBC drivers that we offer for Relational databases like Oracle, DB2 and SQL Server, Cloud sources likeSalesforce and Eloqua or BigData sources like CDH Hive, Spark SQL and Cassandra by following similar steps. Also, subscribe to our blog via email or RSS feed for more awesome tutorials.

Discover the unprecedented possibilities and challenges, created by today’s fast paced data climate andwhy your current integration solution is not enough, brought to you in partnership with Liaison.

Build an ETL Pipeline With Kafka Connect via JDBC Connectors的更多相关文章

- 1.3 Quick Start中 Step 7: Use Kafka Connect to import/export data官网剖析(博主推荐)

不多说,直接上干货! 一切来源于官网 http://kafka.apache.org/documentation/ Step 7: Use Kafka Connect to import/export ...

- Kafka connect快速构建数据ETL通道

摘要: 作者:Syn良子 出处:http://www.cnblogs.com/cssdongl 转载请注明出处 业余时间调研了一下Kafka connect的配置和使用,记录一些自己的理解和心得,欢迎 ...

- Kafka Connect Architecture

Kafka Connect's goal of copying data between systems has been tackled by a variety of frameworks, ma ...

- Streaming data from Oracle using Oracle GoldenGate and Kafka Connect

This is a guest blog from Robin Moffatt. Robin Moffatt is Head of R&D (Europe) at Rittman Mead, ...

- 基于Kafka Connect框架DataPipeline在实时数据集成上做了哪些提升?

在不断满足当前企业客户数据集成需求的同时,DataPipeline也基于Kafka Connect 框架做了很多非常重要的提升. 1. 系统架构层面. DataPipeline引入DataPipeli ...

- 打造实时数据集成平台——DataPipeline基于Kafka Connect的应用实践

导读:传统ETL方案让企业难以承受数据集成之重,基于Kafka Connect构建的新型实时数据集成平台被寄予厚望. 在4月21日的Kafka Beijing Meetup第四场活动上,DataPip ...

- 替代Flume——Kafka Connect简介

我们知道过去对于Kafka的定义是分布式,分区化的,带备份机制的日志提交服务.也就是一个分布式的消息队列,这也是他最常见的用法.但是Kafka不止于此,打开最新的官网. 我们看到Kafka最新的定义是 ...

- Apache Kafka Connect - 2019完整指南

今天,我们将讨论Apache Kafka Connect.此Kafka Connect文章包含有关Kafka Connector类型的信息,Kafka Connect的功能和限制.此外,我们将了解Ka ...

- SQL Server CDC配合Kafka Connect监听数据变化

写在前面 好久没更新Blog了,从CRUD Boy转型大数据开发,拉宽了不少的知识面,从今年年初开始筹备.组建.招兵买马,到现在稳定开搞中,期间踏过无数的火坑,也许除了这篇还很写上三四篇. 进入主题, ...

随机推荐

- linux grep 查找字符串

2015年8月27日 12:04:58 在当前文件夹查找 public function abc() grep -re 'public function abc\b' . // 可以不加e, 适合函数 ...

- lists删除

List<Map<String, Object>> trackList = bizFollowRepo.findList("trackFindPageList&quo ...

- ASM:《X86汇编语言-从实模式到保护模式》第七章应用例:用adc命令计算1到1000的累加

在16位的处理器上,做加法的指令是add,但是他每次只能做8位或者16位的加法,除此之外,还有一个带进位的加法指令adc(Add With Carry),他的指令格式和add一样,目的操作数可以是8位 ...

- 为什么使用<!DOCTYPE HTML>

不管是刚接触前端,还是你已经"精通"web前端开发的内容,你应该知道在你写html的时候需要定义文档类型:你知道如果没有它,浏览器在渲染页面的时候会使用怪异模式:你知道各个浏览器在 ...

- JavaBean中set/get的命名规范

今天遇到了这样的问题,在jsp取session中的值时,取不到.有个SessionUser对象,该对象有个uId属性,set/get方法为setUId/getUId,在jsp页面通过el表达式取值${ ...

- 20145213《Java程序设计》第四周学习总结

20145213<Java程序设计>第四周学习总结 教材学习内容总结 本周任务是学习面向对象的继承.接口以及之后的如何活用多态.(还真是路漫漫其修远兮啊!)教材也是延续上周艰深晦涩的语言风 ...

- September 25th 2016 Week 40th Sunday

Everything is good in its season. 万物逢时皆美好. Don't lose hope. Remeber that even a dog has its day. Onc ...

- Rabbitmq实现负载均衡与消息持久化

Rabbitmq 是对AMQP协议的一种实现.使用范围也比较广泛,主要用于消息异步通讯. 一,默认情况下Rabbitmq使用轮询(round-robin)方式转发消息.为了较好实现负载,可以在消息 ...

- Smarty模板技术学习(二)

本文主要包括以下内容 公共文件引入与继承 内容捕捉 变量调剂器 缓存 Smarty过滤器 数据对象.注册对象 与已有项目结合 公共文件引入与继承 可以把许多模板页面都用到的公共页面放到单独文件里边,通 ...

- Mac系统下使用VirtualBox虚拟机安装win7--第三步 在虚拟机上安装 Windows 7

第三步 在虚拟机上安装 Windows 7 等待虚拟机进入 Windows 7 的安装界面以后,在语言,货币,键盘输入法这一面,建议保持默认设置,直接点击“下一步”按钮,如图所示