1.3 Quick Start, Step 7: Use Kafka Connect to import/export data, a walkthrough of the official documentation (blogger's pick)

No preamble, straight to the substance!

Everything here comes from the official site:

http://kafka.apache.org/documentation/

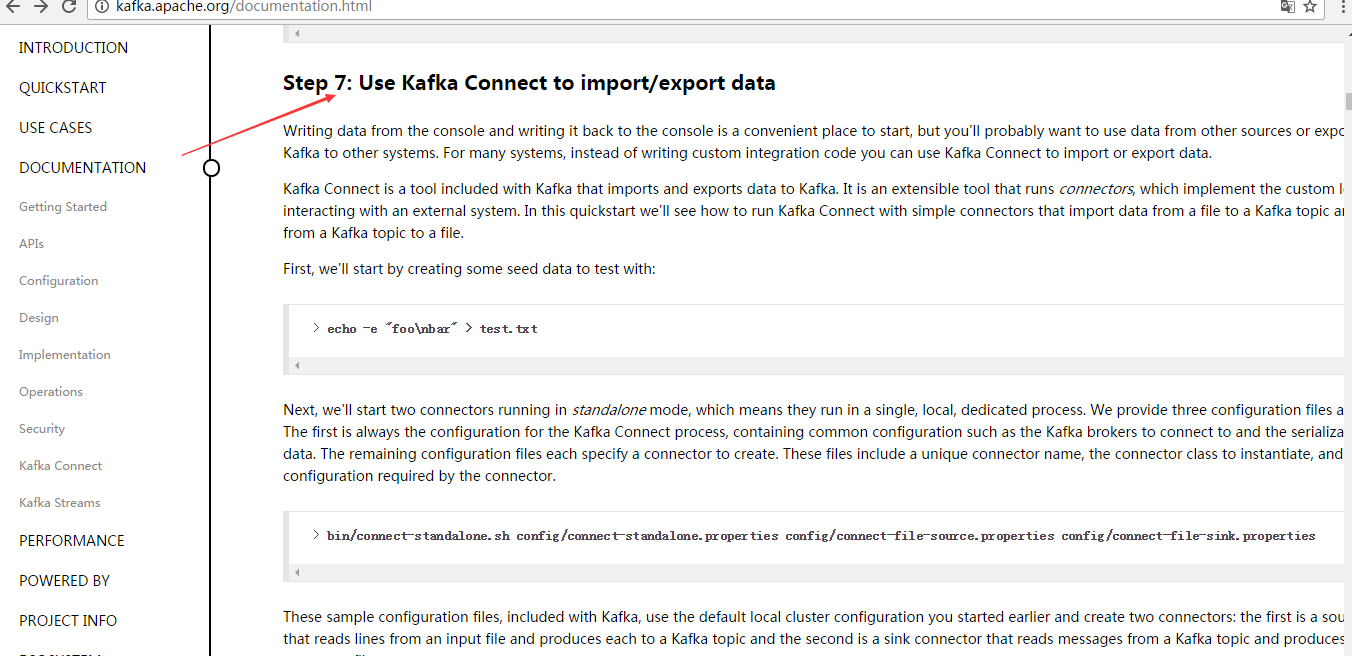

Step 7: Use Kafka Connect to import/export data

Writing data from the console and writing it back to the console is a convenient place to start, but you'll probably want to use data from other sources or export data from Kafka to other systems. For many systems, instead of writing custom integration code you can use Kafka Connect to import or export data.

Kafka Connect is a tool included with Kafka that imports and exports data to Kafka. It is an extensible tool that runs connectors, which implement the custom logic for interacting with an external system. In this quickstart we'll see how to run Kafka Connect with simple connectors that import data from a file to a Kafka topic and export data from a Kafka topic to a file.

First, we'll start by creating some seed data to test with:

> echo -e "foo\nbar" > test.txt
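A small aside on the seed command: `echo -e` is not specified by POSIX and behaves differently across shells, so the sketch below uses `printf` to produce the same two lines. This is only an alternative way to write the same file, not something the official quickstart prescribes.

```shell
# Write the two seed lines; printf handles \n consistently across POSIX shells.
printf 'foo\nbar\n' > test.txt

# Show the result: one record per line.
cat test.txt
```

Each line of `test.txt` later becomes one message in the pipeline, so the trailing newline after `bar` matters.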

Next, we'll start two connectors running in standalone mode, which means they run in a single, local, dedicated process. We provide three configuration files as parameters. The first is always the configuration for the Kafka Connect process, containing common configuration such as the Kafka brokers to connect to and the serialization format for data. The remaining configuration files each specify a connector to create. These files include a unique connector name, the connector class to instantiate, and any other configuration required by the connector.

> bin/connect-standalone.sh config/connect-standalone.properties config/connect-file-source.properties config/connect-file-sink.properties

These sample configuration files, included with Kafka, use the default local cluster configuration you started earlier and create two connectors: the first is a source connector that reads lines from an input file and produces each to a Kafka topic and the second is a sink connector that reads messages from a Kafka topic and produces each as a line in an output file.
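To make the two connector files less abstract, here is a rough sketch that writes equivalent configurations into a scratch directory. The directory name `connect-sketch` and the file names are hypothetical, and the property values are an assumption based on the sample files shipped with Kafka; check the files under `config/` in your own distribution, since their contents can differ between versions.

```shell
# Hypothetical scratch directory, so the files shipped under config/ stay untouched.
conf_dir="connect-sketch"
mkdir -p "$conf_dir"

# Source connector: read lines from test.txt and produce them to connect-test.
cat > "$conf_dir/file-source.properties" <<'EOF'
name=local-file-source
connector.class=FileStreamSource
tasks.max=1
file=test.txt
topic=connect-test
EOF

# Sink connector: consume connect-test and write each message to test.sink.txt.
cat > "$conf_dir/file-sink.properties" <<'EOF'
name=local-file-sink
connector.class=FileStreamSink
tasks.max=1
file=test.sink.txt
topics=connect-test
EOF
```

Note the small asymmetry: the source connector takes a single `topic`, while the sink connector takes `topics`, because a sink can read from several topics.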

During startup you'll see a number of log messages, including some indicating that the connectors are being instantiated. Once the Kafka Connect process has started, the source connector should start reading lines from test.txt and producing them to the topic connect-test, and the sink connector should start reading messages from the topic connect-test and writing them to the file test.sink.txt. We can verify the data has been delivered through the entire pipeline by examining the contents of the output file:

> cat test.sink.txt

foo

bar

Note that the data is being stored in the Kafka topic connect-test, so we can also run a console consumer to see the data in the topic (or use custom consumer code to process it):

> bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic connect-test --from-beginning

{"schema":{"type":"string","optional":false},"payload":"foo"}

{"schema":{"type":"string","optional":false},"payload":"bar"}

...
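The messages in the topic are wrapped in a JSON envelope with a `schema` and a `payload` field, which most likely comes from the JSON converter settings in `config/connect-standalone.properties`. As a minimal sketch, assuming simple string payloads like the ones in this quickstart, the original lines can be recovered with `sed`. The function name `extract_payload` is made up for illustration.

```shell
# Print only the "payload" value from each JSON-envelope line read on stdin.
# A sed pattern is enough for the plain string payloads in this quickstart;
# payloads containing escaped quotes would need a real JSON parser.
extract_payload() {
  sed -n 's/.*"payload":"\([^"]*\)"}$/\1/p'
}

# Sample input copied from the consumer output above.
printf '%s\n' \
  '{"schema":{"type":"string","optional":false},"payload":"foo"}' \
  '{"schema":{"type":"string","optional":false},"payload":"bar"}' |
  extract_payload
```

In a live setup the same function could be placed at the end of the console consumer command with a pipe.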

The connectors continue to process data, so we can add data to the file and see it move through the pipeline:

> echo "Another line" >> test.txt

You should see the line appear in the console consumer output and in the sink file.
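The new line does not reach the sink file instantly, so a scripted check may need to wait a little. The helper below is a rough sketch of such a check; the name `wait_for_line` and the 10-second default timeout are assumptions for illustration, not part of Kafka.

```shell
# Poll a file until it contains an exact line, or give up after a timeout.
# Usage: wait_for_line FILE LINE [TIMEOUT_SECONDS]
wait_for_line() {
  file=$1
  line=$2
  timeout=${3:-10}
  elapsed=0
  while [ "$elapsed" -lt "$timeout" ]; do
    # -x matches whole lines, -F treats the text literally, -q prints nothing.
    if grep -qxF -- "$line" "$file" 2>/dev/null; then
      return 0
    fi
    sleep 1
    elapsed=$((elapsed + 1))
  done
  return 1
}
```

With the pipeline running, something like `wait_for_line test.sink.txt "Another line"` after the `echo` above would return success once the sink connector has written the line.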
