Building and installing Spark 2.1.0 against hadoop2.6.0-cdh5.7.0

1. Prerequisites:

CentOS 6.5

JDK 1.7

Java SE download page: http://www.oracle.com/technetwork/java/javase/downloads/java-archive-downloads-javase7-521261.html

Maven 3.3.9

Maven 3.3.9 download page: https://mirrors.tuna.tsinghua.edu.cn/apache//maven/maven-3/3.3.9/binaries/

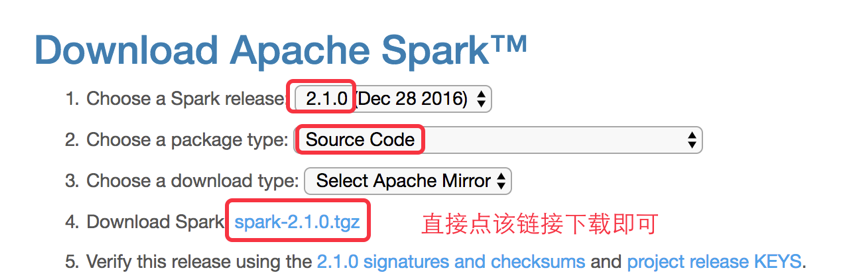

Spark 2.1.0 source download:

http://spark.apache.org/downloads.html

File name after download:

*************************************************** Divider: build starts *********************************************************************

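Upload the packages to the Linux machine.

Install Maven: unpack the archive and configure the environment variables.

The details are omitted here...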
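Since the Maven installation steps are skipped above, here is a minimal sketch of what they might look like. The archive location and install directory (`/opt`) are assumptions, not taken from the article; adjust them to wherever the archive was uploaded.

```shell
# Assumed locations (hypothetical): change these to match your machine.
MAVEN_TGZ="${MAVEN_TGZ:-/opt/apache-maven-3.3.9-bin.tar.gz}"
INSTALL_DIR="${INSTALL_DIR:-/opt}"

# Unpack only if the archive is actually present.
if [ -f "$MAVEN_TGZ" ]; then
    tar -zxf "$MAVEN_TGZ" -C "$INSTALL_DIR"
fi

# Point MAVEN_HOME at the unpacked directory and put its bin/ on PATH.
export MAVEN_HOME="$INSTALL_DIR/apache-maven-3.3.9"
export PATH="$MAVEN_HOME/bin:$PATH"
```

These exports only last for the current shell session. To keep them across logins, the two export lines could be appended to a profile file such as ~/.bashrc.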

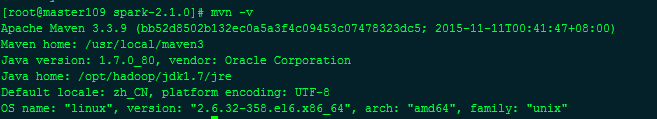

mvn -v

If this prints the Maven version, Maven is set up correctly.
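The check can also be scripted. The sketch below assumes that the first line of the `mvn -v` output has the usual form "Apache Maven 3.3.9 (...)" and that 3.3.9, the version used in this article, is the minimum wanted. The helper names are hypothetical, and the comparison relies on GNU `sort -V`.

```shell
# Extract the version number from the first line of `mvn -v` output,
# assumed to look like "Apache Maven 3.3.9 (...)".
maven_version() {
    printf '%s\n' "$1" | awk 'NR == 1 && $1 == "Apache" && $2 == "Maven" { print $3 }'
}

# Succeeds when version $1 is at least version $2 (needs GNU `sort -V`).
version_at_least() {
    [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n 1)" = "$2" ]
}

if command -v mvn >/dev/null 2>&1; then
    found="$(maven_version "$(mvn -v 2>/dev/null)")"
    if version_at_least "$found" "3.3.9"; then
        echo "Maven $found is new enough"
    else
        echo "Maven '$found' is older than 3.3.9 or could not be detected"
    fi
else
    echo "mvn is not on PATH"
fi
```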

************************************************************* Divider ***********************************************************************************

My Hadoop version is hadoop2.6.0-cdh5.7.0.

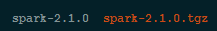

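Unpack the Spark source archive.

This produces the source directory.

(Ignore the already-built Spark distribution package that is also in my directory.)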
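The unpack step is not shown as a command, so here is a small sketch. The archive path is hypothetical, since the article does not state the downloaded file name, and `unpack_source` is an illustrative helper, not part of Spark.

```shell
# Unpack a .tgz archive into the directory that contains it.
unpack_source() {
    tar -zxf "$1" -C "$(dirname "$1")"
}

# Hypothetical archive path: replace with the real name of the downloaded file.
SPARK_SRC_TGZ="${SPARK_SRC_TGZ:-/opt/spark-2.1.0.tgz}"

if [ -f "$SPARK_SRC_TGZ" ]; then
    unpack_source "$SPARK_SRC_TGZ"
else
    echo "archive not found: $SPARK_SRC_TGZ"
fi
```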

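First raise Maven's memory limits, otherwise the build is likely to fail (typically by running out of memory). Setting them temporarily for the current shell session is enough: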

export MAVEN_OPTS="-Xmx2g -XX:ReservedCodeCacheSize=512m"

[root@master109 opt]# echo $MAVEN_OPTS

-Xmx2g -XX:ReservedCodeCacheSize=512m
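A slightly more defensive variant, as a sketch: keep a MAVEN_OPTS value that is already set in the environment and fall back to the values above only when it is unset or empty. The function name `ensure_maven_opts` is made up for this example.

```shell
# Use the article's memory settings unless MAVEN_OPTS is already set.
ensure_maven_opts() {
    export MAVEN_OPTS="${MAVEN_OPTS:--Xmx2g -XX:ReservedCodeCacheSize=512m}"
}

ensure_maven_opts
echo "$MAVEN_OPTS"
```

As with the plain export, this affects only the current shell session and any commands started from it.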

Change into the Spark source root directory and run:

./dev/make-distribution.sh --name 2.6.0-cdh5.7.0 --tgz -Phadoop-2.6 -Dhadoop.version=2.6.0-cdh5.7.0 -Phive -Phive-thriftserver -Pyarn

Result:

[INFO] BUILD FAILURE

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 9.810 s (Wall Clock)

[INFO] Finished at: 2017-10-13T15:52:09+08:00

[INFO] Final Memory: 67M/707M

[INFO] ------------------------------------------------------------------------

[ERROR] Failed to execute goal on project spark-launcher_2.11: Could not resolve dependencies for project org.apache.spark:spark-launcher_2.11:jar:2.1.0: Failure to find org.apache.hadoop:hadoop-client:jar:2.6.0-cdh5.7.0 in https://repo1.maven.org/maven2 was cached in the local repository, resolution will not be reattempted until the update interval of central has elapsed or updates are forced -> [Help 1]

[ERROR]

[ERROR] To see the full stack trace of the errors, re-run Maven with the -e switch.

[ERROR] Re-run Maven using the -X switch to enable full debug logging.

[ERROR]

[ERROR] For more information about the errors and possible solutions, please read the following articles:

[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/DependencyResolutionException

[ERROR]

[ERROR] After correcting the problems, you can resume the build with the command

[ERROR] mvn <goals> -rf :spark-launcher_2.11

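The build failed because the hadoop-client 2.6.0-cdh5.7.0 artifact could not be found. The cause is the repository: by default the build resolves dependencies from Maven Central (https://repo1.maven.org/maven2), which does not host the CDH artifacts, so the Cloudera (CDH) repository has to be added.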
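The error message also says that the failed lookup "was cached in the local repository". Maven records such failures in `*.lastUpdated` marker files. If a later build keeps reporting the cached failure, one option is to remove those markers for the affected artifact, as sketched below. The path assumes the default local repository under ~/.m2, and `clear_cached_failures` is a hypothetical helper name.

```shell
# Delete Maven's "*.lastUpdated" failure markers under the given directory.
clear_cached_failures() {
    [ -d "$1" ] || return 0
    find "$1" -type f -name '*.lastUpdated' -exec rm -f {} +
}

# Assumed default local repository location; the sub-path follows
# groupId/artifactId/version of the artifact named in the error.
clear_cached_failures "$HOME/.m2/repository/org/apache/hadoop/hadoop-client/2.6.0-cdh5.7.0"
```

Only the marker files are removed; downloaded jars and poms are left alone.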

Edit pom.xml:

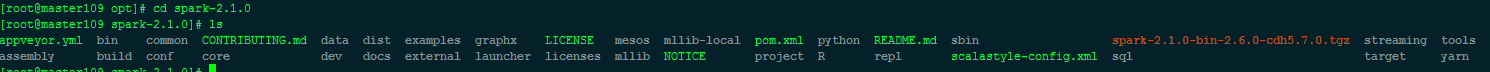

[root@master109 spark-2.1.0]# ls

appveyor.yml bin common CONTRIBUTING.md data docs external launcher licenses mllib NOTICE project R repl scalastyle-config.xml streaming tools

assembly build conf core dev examples graphx LICENSE mesos mllib-local pom.xml python README.md sbin sql target yarn

[root@master109 spark-2.1.0]# vim pom.xml

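In the repositories section shown below, insert the content that sits between the two #--------------------------------------------- markers. This adds the Cloudera repository. The markers only show where the new block goes: do not copy them into pom.xml.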

<repositories>

<repository>

<id>central</id>

<!-- This should be at top, it makes maven try the central repo first and then others and hence faster dep resolution -->

<name>Maven Repository</name>

<url>https://repo1.maven.org/maven2</url>

<releases>

<enabled>true</enabled>

</releases>

<snapshots>

<enabled>false</enabled>

</snapshots>

</repository>

#---------------------------------------------

<repository>

<id>cloudera</id>

<name>cloudera Repository</name>

<url>https://repository.cloudera.com/artifactory/cloudera-repos</url>

</repository>

#---------------------------------------------

</repositories>
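Before rebuilding, the edit can be sanity-checked from the shell. This sketch looks for the Cloudera URL in pom.xml and for any separator line that was left behind by mistake. The function names are illustrative.

```shell
# Succeeds if the given pom.xml mentions the Cloudera repository URL.
has_cloudera_repo() {
    grep -q 'repository.cloudera.com/artifactory/cloudera-repos' "$1"
}

# Succeeds if a '#-----' separator line was left behind in the file.
has_leftover_separator() {
    grep -q '#-----' "$1"
}

POM="${POM:-pom.xml}"
if [ -f "$POM" ]; then
    if has_cloudera_repo "$POM"; then
        echo "cloudera repository found"
    else
        echo "cloudera repository missing"
    fi
    if has_leftover_separator "$POM"; then
        echo "separator line still present, remove it"
    fi
else
    echo "no $POM in $(pwd)"
fi
```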

Run the build again:

[root@master109 spark-2.1.0]# ./dev/make-distribution.sh --name 2.6.0-cdh5.7.0 --tgz -Phadoop-2.6 -Dhadoop.version=2.6.0-cdh5.7.0 -Phive -Phive-thriftserver -Pyarn

Wait for the build to finish (about 13 minutes in my case):

[INFO] Reactor Summary:

[INFO]

[INFO] Spark Project Parent POM ........................... SUCCESS [ 3.997 s]

[INFO] Spark Project Tags ................................. SUCCESS [ 3.394 s]

[INFO] Spark Project Sketch ............................... SUCCESS [ 14.061 s]

[INFO] Spark Project Networking ........................... SUCCESS [ 37.680 s]

[INFO] Spark Project Shuffle Streaming Service ............ SUCCESS [ 12.750 s]

[INFO] Spark Project Unsafe ............................... SUCCESS [ 33.158 s]

[INFO] Spark Project Launcher ............................. SUCCESS [ 50.148 s]

[INFO] Spark Project Core ................................. SUCCESS [04:16 min]

[INFO] Spark Project ML Local Library ..................... SUCCESS [ 45.832 s]

[INFO] Spark Project GraphX ............................... SUCCESS [ 26.712 s]

[INFO] Spark Project Streaming ............................ SUCCESS [ 58.080 s]

[INFO] Spark Project Catalyst ............................. SUCCESS [02:22 min]

[INFO] Spark Project SQL .................................. SUCCESS [03:02 min]

[INFO] Spark Project ML Library ........................... SUCCESS [02:16 min]

[INFO] Spark Project Tools ................................ SUCCESS [ 2.588 s]

[INFO] Spark Project Hive ................................. SUCCESS [01:19 min]

[INFO] Spark Project REPL ................................. SUCCESS [ 6.337 s]

[INFO] Spark Project YARN Shuffle Service ................. SUCCESS [ 13.252 s]

[INFO] Spark Project YARN ................................. SUCCESS [ 57.556 s]

[INFO] Spark Project Hive Thrift Server ................... SUCCESS [ 45.074 s]

[INFO] Spark Project Assembly ............................. SUCCESS [ 7.410 s]

[INFO] Spark Project External Flume Sink .................. SUCCESS [ 30.214 s]

[INFO] Spark Project External Flume ....................... SUCCESS [ 19.359 s]

[INFO] Spark Project External Flume Assembly .............. SUCCESS [ 6.082 s]

[INFO] Spark Integration for Kafka 0.8 .................... SUCCESS [ 30.266 s]

[INFO] Spark Project Examples ............................. SUCCESS [ 28.668 s]

[INFO] Spark Project External Kafka Assembly .............. SUCCESS [ 6.919 s]

[INFO] Spark Integration for Kafka 0.10 ................... SUCCESS [ 30.811 s]

[INFO] Spark Integration for Kafka 0.10 Assembly .......... SUCCESS [ 6.551 s]

[INFO] Kafka 0.10 Source for Structured Streaming ......... SUCCESS [ 17.707 s]

[INFO] ------------------------------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 13:25 min (Wall Clock)

[INFO] Finished at: 2017-10-13T16:35:47+08:00

[INFO] Final Memory: 90M/979M

[INFO] ------------------------------------------------------------------------
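With --tgz, the script also packs the result into a tarball in the source root. As far as I recall, make-distribution.sh names it spark-VERSION-bin-NAME.tgz, where NAME is the value given to --name. Treat that pattern as an assumption and check the directory listing if the file is not where expected. A small sketch:

```shell
# Build the expected tarball name from the Spark version and the --name value.
# The naming pattern is an assumption about make-distribution.sh.
dist_tarball_name() {
    printf 'spark-%s-bin-%s.tgz\n' "$1" "$2"
}

tarball="$(dist_tarball_name 2.1.0 2.6.0-cdh5.7.0)"
if [ -f "$tarball" ]; then
    ls -lh "$tarball"
else
    echo "not found in $(pwd): $tarball"
fi
```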

Done!

2017-10-13 16:55:55

Author: 山高似水深 (http://www.cnblogs.com/tnsay/). Please credit the source when reposting.
