[Repost] Derivation of the Normal Equation for linear regression

I was going through the Coursera "Machine Learning" course, and in the section on multivariate linear regression something caught my eye. Andrew Ng presented the Normal Equation as an analytical solution to the linear regression problem with a least-squares cost function. He mentioned that in some cases (such as for small feature sets) using it is more effective than applying gradient descent; unfortunately, he left its derivation out.

Here I want to show how the normal equation is derived.

First, some terminology. The symbols below follow the machine learning course rather than the exposition of the normal equation on Wikipedia and other sites; semantically it's all the same, just the symbols are different.

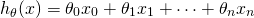

Given the hypothesis function:

We'd like to minimize the least-squares cost:

Where  is the i-th sample (from a set of m samples) and

is the i-th sample (from a set of m samples) and  is the i-th expected result.

is the i-th expected result.

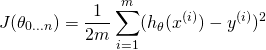
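As a small illustration of the hypothesis function and the least-squares cost, here is a minimal sketch in plain Python. The function names `hypothesis` and `cost` and the toy data are made up for this example; they are not from the course.

```python
def hypothesis(theta, x):
    """h_theta(x) = theta_0*x_0 + ... + theta_n*x_n, where x[0] is 1."""
    return sum(t * xj for t, xj in zip(theta, x))


def cost(theta, samples, targets):
    """Least-squares cost: 1/(2m) times the sum of squared residuals."""
    m = len(samples)
    total = sum((hypothesis(theta, x) - y) ** 2 for x, y in zip(samples, targets))
    return total / (2 * m)


# Made-up data lying exactly on y = 1 + 2*x_1; x_0 = 1 is prepended to each sample.
samples = [[1.0, 0.0], [1.0, 1.0], [1.0, 2.0]]
targets = [1.0, 3.0, 5.0]

perfect = cost([1.0, 2.0], samples, targets)  # every residual is zero
off = cost([0.0, 2.0], samples, targets)      # every residual is -1
```

With these numbers the cost is zero at the coefficients that generated the data, and positive anywhere else.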

To proceed, we'll represent the problem in matrix notation; this is natural, since we essentially have a system of linear equations here. The regression coefficients  we're looking for are the vector:

we're looking for are the vector:

Each of the m input samples is similarly a column vector with n+1 rows,  being 1 for convenience. So we can now rewrite the hypothesis function as:

being 1 for convenience. So we can now rewrite the hypothesis function as:

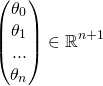

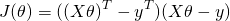

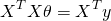

When this is summed over all samples, we can dip further into matrix notation. We'll define the "design matrix" X (uppercase X) as a matrix of m rows, in which each row is the i-th sample (the vector  ). With this, we can rewrite the least-squares cost as following, replacing the explicit sum by matrix multiplication:

). With this, we can rewrite the least-squares cost as following, replacing the explicit sum by matrix multiplication:

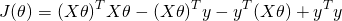
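To see that the matrix form really is the same quantity as the explicit sum, here is a quick numerical sketch using NumPy. The random data and the variable names are arbitrary choices for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
m, n = 5, 2

# Design matrix: m rows, each row is one sample with x_0 = 1 in the first column.
X = np.hstack([np.ones((m, 1)), rng.normal(size=(m, n))])
y = rng.normal(size=m)
theta = rng.normal(size=n + 1)

# Explicit sum over the samples, using h_theta(x) = theta^T x.
cost_sum = sum((theta @ X[i] - y[i]) ** 2 for i in range(m)) / (2 * m)

# Matrix form: 1/(2m) * (X theta - y)^T (X theta - y).
residual = X @ theta - y
cost_matrix = (residual @ residual) / (2 * m)
```

The two values should agree up to floating-point rounding.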

Now, using some matrix transpose identities, we can simplify this a bit. I'll throw the  part away since we're going to compare a derivative to zero anyway:

part away since we're going to compare a derivative to zero anyway:

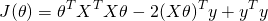

Note that  is a vector, and so is y. So when we multiply one by another, it doesn't matter what the order is (as long as the dimensions work out). So we can further simplify:

is a vector, and so is y. So when we multiply one by another, it doesn't matter what the order is (as long as the dimensions work out). So we can further simplify:

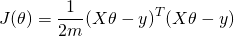
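The two claims in this step (the cross terms are equal, and the expanded form matches the compact one) can be spot-checked numerically. This is only a sketch on arbitrary random data, not a proof.

```python
import numpy as np

rng = np.random.default_rng(1)
X = np.hstack([np.ones((4, 1)), rng.normal(size=(4, 2))])
y = rng.normal(size=4)
theta = rng.normal(size=3)

Xt = X @ theta

# Both cross terms are the same scalar: a dot product of two vectors.
cross_a = Xt @ y
cross_b = y @ Xt

# Compact form versus the expanded, simplified form (1/(2m) factor dropped).
compact = (Xt - y) @ (Xt - y)
expanded = theta @ X.T @ X @ theta - 2 * (Xt @ y) + y @ y
```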

Recall that here  is our unknown. To find where the above function has a minimum, we will derive by

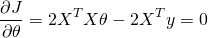

is our unknown. To find where the above function has a minimum, we will derive by  and compare to 0. Deriving by a vector may feel uncomfortable, but there's nothing to worry about. Recall that here we only use matrix notation to conveniently represent a system of linear formulae. So we derive by each component of the vector, and then combine the resulting derivatives into a vector again. The result is:

and compare to 0. Deriving by a vector may feel uncomfortable, but there's nothing to worry about. Recall that here we only use matrix notation to conveniently represent a system of linear formulae. So we derive by each component of the vector, and then combine the resulting derivatives into a vector again. The result is:

Or:

[Update 27-May-2015: I've written another post that explains in more detail how these derivatives are computed.]

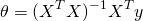
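One way to gain confidence in the derivative is to compare the analytic expression $2 X^T X \theta - 2 X^T y$ against finite differences taken one component at a time, which mirrors the "differentiate by each component" description. The helper names `J`, `grad_J` and `numeric_grad` below are made up for this sketch.

```python
import numpy as np


def J(theta, X, y):
    """Cost with the 1/(2m) factor dropped, as in the text."""
    r = X @ theta - y
    return r @ r


def grad_J(theta, X, y):
    """Analytic gradient: 2 X^T X theta - 2 X^T y."""
    return 2 * X.T @ X @ theta - 2 * X.T @ y


def numeric_grad(f, theta, eps=1e-6):
    """Central differences, one component of theta at a time."""
    g = np.zeros_like(theta)
    for j in range(theta.size):
        step = np.zeros_like(theta)
        step[j] = eps
        g[j] = (f(theta + step) - f(theta - step)) / (2 * eps)
    return g


rng = np.random.default_rng(2)
X = np.hstack([np.ones((6, 1)), rng.normal(size=(6, 2))])
y = rng.normal(size=6)
theta = rng.normal(size=3)

analytic = grad_J(theta, X, y)
numeric = numeric_grad(lambda t: J(t, X, y), theta)
```

Since the cost is quadratic in $\theta$, the central-difference estimate should match the analytic gradient up to rounding error.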

Now, assuming that the matrix $X^T X$ is invertible, we can multiply both sides by $(X^T X)^{-1}$ and get:

$$\theta = (X^T X)^{-1} X^T y$$

Which is the normal equation.
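For a concrete feel, here is a minimal NumPy sketch that applies the normal equation to made-up data lying exactly on the line y = 1 + 2x. The data and variable names are assumptions for illustration. The second variant solves the linear system $X^T X \theta = X^T y$ directly rather than forming the inverse, which is a common choice in numerical code, and `np.linalg.lstsq` is included only as a cross-check.

```python
import numpy as np

# Made-up data lying exactly on y = 1 + 2*x_1; the first column is x_0 = 1.
X = np.array([[1.0, 0.0],
              [1.0, 1.0],
              [1.0, 2.0],
              [1.0, 3.0]])
y = np.array([1.0, 3.0, 5.0, 7.0])

# Literal transcription of the normal equation.
theta_inv = np.linalg.inv(X.T @ X) @ X.T @ y

# Same solution without forming the inverse: solve (X^T X) theta = X^T y.
theta_solve = np.linalg.solve(X.T @ X, X.T @ y)

# Library least-squares routine, for comparison.
theta_lstsq, *_ = np.linalg.lstsq(X, y, rcond=None)
```

All three should recover coefficients close to (1, 2) for this data.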
