Setting Up a Hadoop Cluster on Virtual Machines

hadoop

tar -xvf hadoop-2.7.3.tar.gz

mv hadoop-2.7.3 hadoop

Create the following directories under the Hadoop root directory (/usr/local/hadoop in this setup):

hadoop/hdfs

hadoop/hdfs/tmp

hadoop/hdfs/name

hadoop/hdfs/data
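The directory layout above can be created in one step. This is a minimal sketch; the function name `make_hdfs_dirs` is hypothetical, and the default root of /usr/local/hadoop is taken from the paths used later in this post.

```shell
# Create the hdfs/tmp, hdfs/name and hdfs/data directories under a Hadoop root.
make_hdfs_dirs() {
  # $1 = Hadoop root directory (defaults to /usr/local/hadoop, as used in this post)
  root="${1:-/usr/local/hadoop}"
  # -p also creates the parent hdfs/ directory and is a no-op if they already exist
  mkdir -p "$root/hdfs/tmp" "$root/hdfs/name" "$root/hdfs/data"
}
```

Calling `make_hdfs_dirs` with no argument targets /usr/local/hadoop; pass another path to try it somewhere else first.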

core-site.xml

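Edit the configuration files in etc/hadoop under the Hadoop root (/usr/local/hadoop/etc/hadoop, not the system /etc/hadoop).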

<configuration>

<property>

<name>hadoop.tmp.dir</name>

<value>file:/usr/local/hadoop/hdfs/tmp</value>

<description>A base for other temporary directories.</description>

</property>

<property>

<name>fs.defaultFS</name>

<value>hdfs://sjck-node01:9000</value>

</property>

</configuration>
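After editing, it can help to read a value back and confirm it is what you expect. The helper below is a rough sketch that pulls one property out of a *-site.xml file with awk; `get_prop` is a hypothetical name, and the text matching assumes the simple `<name>`/`<value>` layout shown above (it is not a real XML parser). On a machine where Hadoop is already installed, `hdfs getconf -confKey fs.defaultFS` reports the value Hadoop itself resolves.

```shell
# Print the <value> that follows a given <name> in a Hadoop *-site.xml file.
get_prop() {
  # $1 = path to the XML file, $2 = property name
  awk -v name="$2" '
    # Remember that we have seen the requested <name> element
    index($0, "<name>" name "</name>") { found = 1 }
    # The first <value> after it is the one we want
    found && match($0, /<value>[^<]*<\/value>/) {
      # Strip the 7-character opening tag and 8-character closing tag
      print substr($0, RSTART + 7, RLENGTH - 15)
      exit
    }
  ' "$1"
}
```

For example, `get_prop /usr/local/hadoop/etc/hadoop/core-site.xml fs.defaultFS` should print the NameNode URI configured above.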

hdfs-site.xml

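Configure the HDFS file system.

dfs.replication sets the number of replicas kept for each block. The default is 3; it is set to 2 here, matching the two DataNodes in this cluster.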

<configuration>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/usr/local/hadoop/hdfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/usr/local/hadoop/hdfs/data</value>

</property>

<property>

<name>dfs.replication</name>

<value>2</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>sjck-node01:9001</value>

</property>

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

<property>

<name>dfs.namenode.datanode.registration.ip-hostname-check</name>

<value>false</value>

</property>

</configuration>

mapred-site.xml

cp mapred-site.xml.template mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>sjck-node01:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>sjck-node01:19888</value>

</property>

</configuration>
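The two mapreduce.jobhistory.* addresses only take effect once the JobHistory server is running, and the start-all.sh output later in this post does not show it being started. In Hadoop 2.x it is launched separately with the mr-jobhistory-daemon.sh script in sbin. The wrapper below is a sketch; the function name `start_history_server` is hypothetical, and the default sbin path assumes the /usr/local/hadoop install used here.

```shell
# Start the MapReduce JobHistory server using the script shipped in Hadoop 2.x sbin.
start_history_server() {
  # $1 = sbin directory (defaults to /usr/local/hadoop/sbin)
  sbin="${1:-/usr/local/hadoop/sbin}"
  if [ -x "$sbin/mr-jobhistory-daemon.sh" ]; then
    "$sbin/mr-jobhistory-daemon.sh" start historyserver
  else
    # Fail loudly instead of silently doing nothing
    echo "mr-jobhistory-daemon.sh not found in $sbin" >&2
    return 1
  fi
}
```

Run it on the host named in mapreduce.jobhistory.address (sjck-node01 in this configuration).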

yarn-site.xml

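The properties below go inside the <configuration> element of yarn-site.xml. The hostname master used in the values must resolve to the master node (sjck-node01 in this cluster).

yarn.resourcemanager.webapp.address: address and port of the ResourceManager web UI.

yarn.resourcemanager.address: port for clients to submit jobs.

yarn.resourcemanager.scheduler.address: port for ApplicationMasters to talk to the Scheduler to obtain resources.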

<property>

<name>yarn.resourcemanager.address</name>

<value>master:18040</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>master:18030</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>master:18088</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>master:18025</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address</name>

<value>master:18141</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>

<value>org.apache.hadoop.mapred.ShuffleHandler</value>

</property>

slaves

sjck-node02

sjck-node03
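Each host in the slaves file runs a DataNode, so dfs.replication should not be larger than the number of hosts listed. The check below is a small sketch of that comparison; `count_workers` and `check_replication` are hypothetical helper names, not Hadoop commands.

```shell
# Count the worker hosts listed in a slaves file, ignoring blank and comment lines.
count_workers() {
  # $1 = path to the slaves file
  grep -v '^[[:space:]]*#' "$1" | grep -c '[^[:space:]]'
}

# Warn when the replication factor is larger than the number of DataNodes.
check_replication() {
  # $1 = path to the slaves file, $2 = dfs.replication value
  workers=$(count_workers "$1")
  if [ "$2" -gt "$workers" ]; then
    echo "dfs.replication=$2 exceeds $workers DataNode(s)"
    return 1
  fi
  echo "dfs.replication=$2 fits $workers DataNode(s)"
}
```

With the two hosts above and the value 2 from hdfs-site.xml, `check_replication /usr/local/hadoop/etc/hadoop/slaves 2` passes; the default of 3 would not.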

hadoop-env.sh: the variables set here hold values specific to this cluster

export JAVA_HOME=/usr/local/src/jdk/jdk1.8

export HADOOP_NAMENODE_OPTS="-XX:+UseParallelGC"

yarn-env.sh

export JAVA_HOME=/usr/local/src/jdk/jdk1.8

Environment variables

export HADOOP_HOME=/usr/local/hadoop

export PATH="$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH"

export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop
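To make these exports persistent they need to live in a file that the shell sources at login. The post does not say which file was used, so the sketch below takes the target path as an argument; `write_hadoop_env` is a hypothetical helper name.

```shell
# Write the three Hadoop exports into a shell profile fragment.
write_hadoop_env() {
  # $1 = file to write (which profile file to use is an assumption left to the reader)
  # The quoted 'EOF' keeps $HADOOP_HOME and $PATH unexpanded until the file is sourced
  cat > "$1" <<'EOF'
export HADOOP_HOME=/usr/local/hadoop
export PATH="$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH"
export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop
EOF
}
```

After writing the file, source it in the current shell (for example `. /path/to/file`) so that the `hadoop` and `hdfs` commands are found on PATH.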

Copy the directory to the slave nodes

scp -r /usr/local/hadoop/ sjck-node02:/usr/local/

scp -r /usr/local/hadoop/ sjck-node03:/usr/local/
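With more than a couple of workers, the scp lines can be generated from the slaves file instead of typed by hand. This sketch only prints the commands so they can be reviewed first; `print_copy_commands` is a hypothetical name, and it assumes the same install path on every node.

```shell
# Print one scp command per host listed in a slaves file.
print_copy_commands() {
  # $1 = slaves file, $2 = local Hadoop directory (defaults to /usr/local/hadoop)
  src="${2:-/usr/local/hadoop}"
  dest=$(dirname "$src")
  # Drop comments, then read hosts one per line (the || handles a missing final newline)
  sed 's/#.*$//' "$1" | while read -r host || [ -n "$host" ]; do
    [ -n "$host" ] && echo "scp -r $src/ $host:$dest/"
  done
  return 0
}
```

Pipe the output to `sh` once it looks right, e.g. `print_copy_commands /usr/local/hadoop/etc/hadoop/slaves | sh`.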

All Hadoop startup commands are run on the master. First, initialize Hadoop by formatting the NameNode:

hdfs namenode -format

INFO common.Storage: Storage directory /usr/local/hadoop/hdfs/name has been successfully formatted.

Starting the cluster from the master

Hadoop daemon logs are written to ${HADOOP_HOME}/logs by default.

[root@sjck-node01 sbin]# /usr/local/hadoop/sbin/start-all.sh

This script is Deprecated. Instead use start-dfs.sh and start-yarn.sh

Starting namenodes on [sjck-node01]

sjck-node01: starting namenode, logging to /usr/local/hadoop/logs/hadoop-root-namenode-sjck-node01.out

sjck-node02: starting datanode, logging to /usr/local/hadoop/logs/hadoop-root-datanode-sjck-node02.out

sjck-node03: starting datanode, logging to /usr/local/hadoop/logs/hadoop-root-datanode-sjck-node03.out

Starting secondary namenodes [sjck-node01]

sjck-node01: starting secondarynamenode, logging to /usr/local/hadoop/logs/hadoop-root-secondarynamenode-sjck-node01.out

starting yarn daemons

starting resourcemanager, logging to /usr/local/hadoop/logs/yarn-root-resourcemanager-sjck-node01.out

sjck-node02: starting nodemanager, logging to /usr/local/hadoop/logs/yarn-root-nodemanager-sjck-node02.out

sjck-node03: starting nodemanager, logging to /usr/local/hadoop/logs/yarn-root-nodemanager-sjck-node03.out

Stopping the cluster from the master

[root@sjck-node01 sbin]# /usr/local/hadoop/sbin/stop-all.sh

This script is Deprecated. Instead use stop-dfs.sh and stop-yarn.sh

Stopping namenodes on [sjck-node01]

sjck-node01: stopping namenode

sjck-node02: stopping datanode

sjck-node03: stopping datanode

Stopping secondary namenodes [sjck-node01]

sjck-node01: stopping secondarynamenode

stopping yarn daemons

stopping resourcemanager

sjck-node02: stopping nodemanager

sjck-node03: stopping nodemanager

no proxyserver to stop
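Both transcripts above print a deprecation notice that names the replacement scripts. A sketch of wrappers that call those scripts directly is shown here; the function names `start_cluster` and `stop_cluster` are hypothetical, and stopping YARN before HDFS is a choice made for this sketch, not something the post specifies.

```shell
# Start HDFS first, then YARN, using the non-deprecated scripts.
start_cluster() {
  # $1 = sbin directory (defaults to /usr/local/hadoop/sbin)
  sbin="${1:-/usr/local/hadoop/sbin}"
  "$sbin/start-dfs.sh" && "$sbin/start-yarn.sh"
}

# Stop in the reverse order: YARN first, then HDFS.
stop_cluster() {
  sbin="${1:-/usr/local/hadoop/sbin}"
  "$sbin/stop-yarn.sh" && "$sbin/stop-dfs.sh"
}
```

As with start-all.sh, these are meant to be run on the master (sjck-node01).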

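Checking cluster status

NameNode

The management node of the file system. It maintains the metadata for the directory tree and the mapping from files to blocks.

The master runs the NameNode and, under YARN in Hadoop 2.x, the ResourceManager (the role the JobTracker had in Hadoop 1.x).

DataNode

Stores the actual file data. Each slave runs a DataNode and, under YARN, a NodeManager (the counterpart of the Hadoop 1.x TaskTracker).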

Check the processes on the master

[root@sjck-node01 hadoop]# jps

4404 SecondaryNameNode

4215 NameNode

4808 Jps

4555 ResourceManager

Check the processes on the slaves

[root@sjck-node02 hadoop]# jps

2752 DataNode

4995 Jps

2839 NodeManager

[root@sjck-node03 hadoop]# jps

3076 DataNode

3163 NodeManager

3260 Jps
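Reading jps output by eye works for three nodes, but the same check can be scripted. The sketch below reads jps output on stdin and prints any expected daemon that is absent; `missing_daemons` is a hypothetical helper name.

```shell
# Print each expected daemon name that does not appear in jps output read from stdin.
missing_daemons() {
  # arguments = daemon names to look for, e.g. NameNode ResourceManager
  out=$(cat)
  for d in "$@"; do
    # Compare the second column exactly, so NameNode does not match SecondaryNameNode
    printf '%s\n' "$out" |
      awk -v d="$d" '$2 == d { ok = 1 } END { exit !ok }' || echo "$d"
  done
}
```

On the master this would be `jps | missing_daemons NameNode SecondaryNameNode ResourceManager`, and on a slave `jps | missing_daemons DataNode NodeManager`; no output means everything expected is running.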

Check the firewall status

service iptables status

Stop the firewall and keep it from starting at boot

service iptables stop

chkconfig --del iptables

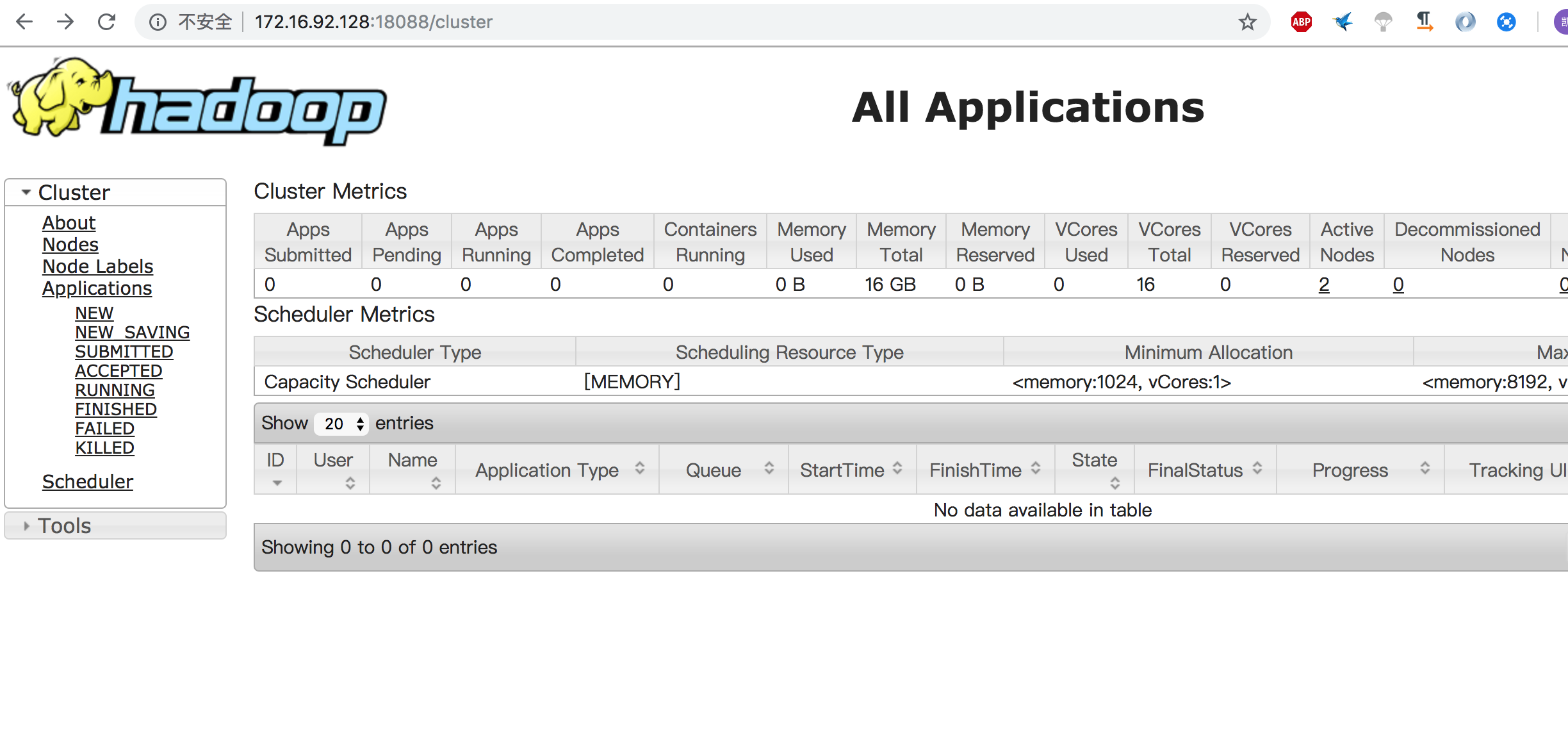

View the cluster status in the ResourceManager web UI

http://172.16.92.128:18088/cluster

Run a test job

hadoop jar /usr/local/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.3.jar pi 10 10

19/03/20 20:42:30 INFO mapreduce.Job: map 0% reduce 0%

19/03/20 20:42:51 INFO mapreduce.Job: map 20% reduce 0%

19/03/20 20:43:05 INFO mapreduce.Job: map 20% reduce 7%

19/03/20 20:45:43 INFO mapreduce.Job: map 40% reduce 7%

19/03/20 20:45:44 INFO mapreduce.Job: map 50% reduce 7%

19/03/20 20:45:47 INFO mapreduce.Job: map 60% reduce 7%

19/03/20 20:45:48 INFO mapreduce.Job: map 100% reduce 7%

19/03/20 20:45:49 INFO mapreduce.Job: map 100% reduce 33%

19/03/20 20:45:50 INFO mapreduce.Job: map 100% reduce 100%

Job Finished in 218.298 seconds
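The two numbers passed to the pi example are the number of map tasks and the number of samples per map, so `pi 10 10` draws 100 samples in total. The sketch below builds the command for other sizes; `pi_job_command` and `total_samples` are hypothetical helper names, and the jar path is the one used above.

```shell
# Print the hadoop command that runs the pi example with the given sizes.
pi_job_command() {
  # $1 = number of map tasks, $2 = samples per map task
  jar=/usr/local/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.3.jar
  echo "hadoop jar $jar pi $1 $2"
}

# Total number of samples the job will draw.
total_samples() {
  echo $(( $1 * $2 ))
}
```

More samples give a better estimate of pi at the cost of a longer run; on this small virtual cluster even the 10 by 10 job took a few minutes.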

