064 UDF

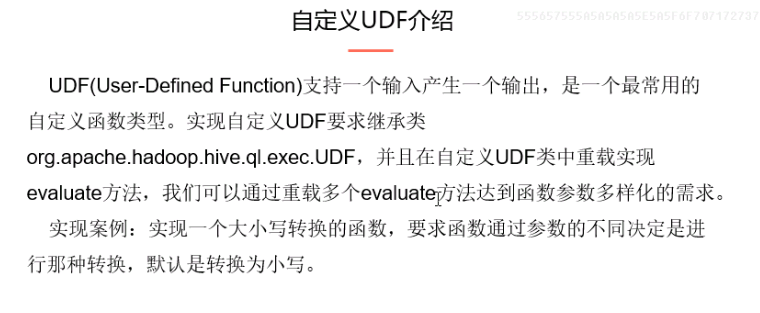

Part 1: UDF

1. Custom UDF

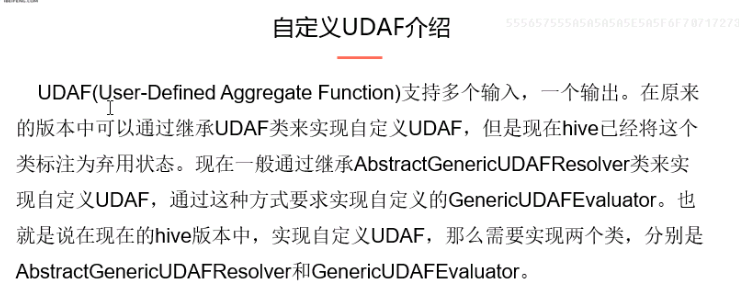
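The three parts of this post differ mainly in how many rows go into a function and how many come out. The plain-Java sketch below is a rough analogy only. It uses no Hive classes, and the class and method names are hypothetical. It maps a UDF to `map`, a UDAF to a reduction and a UDTF to `flatMap`:

```java
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;

// Analogy only: mirrors the row cardinality of Hive function kinds, not Hive's API.
class FunctionKinds {
    // UDF: one row in, one value out (similar in spirit to lower()).
    static List<String> udf(List<String> rows) {
        return rows.stream().map(String::toLowerCase).collect(Collectors.toList());
    }

    // UDAF: many rows in, one value out (similar in spirit to sum()).
    static long udaf(List<Long> rows) {
        return rows.stream().mapToLong(Long::longValue).sum();
    }

    // UDTF: one row in, zero or more rows out (similar to explode(split(col, ','))).
    static List<String> udtf(List<String> rows) {
        return rows.stream()
                .flatMap(r -> Stream.of(r.split(",")))
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        System.out.println(udf(List.of("Alice", "BOB")));  // [alice, bob]
        System.out.println(udaf(List.of(1L, 2L, 3L)));      // 6
        System.out.println(udtf(List.of("a,b", "c")));      // [a, b, c]
    }
}
```

In Hive itself, these three contracts are implemented by subclassing `UDF`/`GenericUDF`, `GenericUDAFEvaluator` and `GenericUDTF`, respectively.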

Part 2: UDAF

2. UDAF

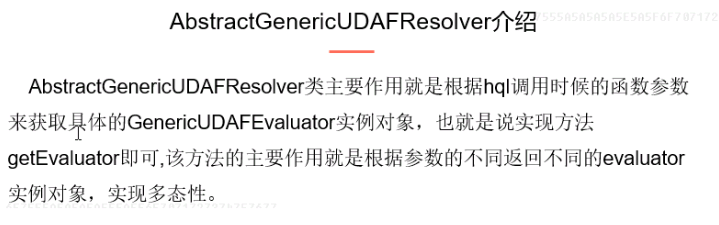

3. Introduction to AbstractGenericUDAFResolver

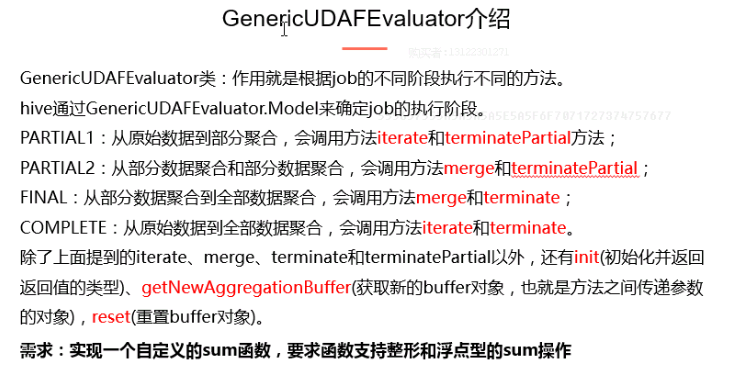
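The resolver inspects the argument types while the query is being compiled and returns an evaluator that matches them. The plain-Java sketch below reproduces the dispatch logic of `getEvaluator()` from the program in step 5. `ArgKind`, `SumEvaluator`, `LongSum` and `DoubleSum` are hypothetical stand-ins for Hive's `PrimitiveCategory` and evaluator classes, so the sketch runs without any Hive jars:

```java
import java.util.List;

// Hypothetical stand-in for Hive's PrimitiveObjectInspector.PrimitiveCategory.
enum ArgKind { BYTE, SHORT, INT, LONG, FLOAT, DOUBLE, STRING, BOOLEAN }

// Hypothetical evaluators; in Hive these would be GenericUDAFEvaluator subclasses.
interface SumEvaluator { String label(); }
class LongSum implements SumEvaluator { public String label() { return "long"; } }
class DoubleSum implements SumEvaluator { public String label() { return "double"; } }

class ResolverSketch {
    // Same checks as getEvaluator(): exactly one argument of a numeric primitive type.
    static SumEvaluator resolve(List<ArgKind> argTypes) {
        if (argTypes.size() != 1) {
            throw new IllegalArgumentException("exactly one argument is allowed");
        }
        switch (argTypes.get(0)) {
            case BYTE: case SHORT: case INT: case LONG:
                return new LongSum();    // integer inputs are summed as long
            case FLOAT: case DOUBLE:
                return new DoubleSum();  // floating-point inputs are summed as double
            default:
                throw new IllegalArgumentException("unsupported type: " + argTypes.get(0));
        }
    }

    public static void main(String[] args) {
        System.out.println(resolve(List.of(ArgKind.INT)).label());   // long
        System.out.println(resolve(List.of(ArgKind.FLOAT)).label()); // double
    }
}
```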

4. Introduction to GenericUDAFEvaluator
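A `GenericUDAFEvaluator` runs in one of four modes, and the mode decides which methods Hive calls on it:

- In `PARTIAL1` and `COMPLETE`, the evaluator consumes raw rows through `iterate()`.
- In `PARTIAL2` and `FINAL`, it consumes partial results through `merge()`.

`init(m, parameters)` receives the inspector for whichever input the current mode consumes. The enum below restates this mapping as standalone Java for reference. It copies Hive's mode names but is not Hive's `Mode` class:

```java
// Standalone restatement of the four GenericUDAFEvaluator modes (not Hive's class).
enum UdafMode {
    PARTIAL1("iterate", "terminatePartial"),  // raw rows -> partial aggregate (map side)
    PARTIAL2("merge", "terminatePartial"),    // partials -> partial aggregate (combiner-like step)
    FINAL("merge", "terminate"),              // partials -> final result (reduce side)
    COMPLETE("iterate", "terminate");         // raw rows -> final result in a single step

    final String consumes;  // method that receives input in this mode
    final String produces;  // method that returns output in this mode

    UdafMode(String consumes, String produces) {
        this.consumes = consumes;
        this.produces = produces;
    }
}

class UdafModeTable {
    public static void main(String[] args) {
        for (UdafMode m : UdafMode.values()) {
            System.out.println(m + ": " + m.consumes + "() then " + m.produces + "()");
        }
    }
}
```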

5. Code

```java
package org.apache.hadoop.hive_udf;

import org.apache.hadoop.hive.ql.exec.UDFArgumentException;
import org.apache.hadoop.hive.ql.metadata.HiveException;
import org.apache.hadoop.hive.ql.parse.SemanticException;
import org.apache.hadoop.hive.ql.udf.generic.AbstractGenericUDAFResolver;
import org.apache.hadoop.hive.ql.udf.generic.GenericUDAFEvaluator;
import org.apache.hadoop.hive.ql.udf.generic.GenericUDAFParameterInfo;
import org.apache.hadoop.hive.serde2.io.DoubleWritable;
import org.apache.hadoop.hive.serde2.objectinspector.ObjectInspector;
import org.apache.hadoop.hive.serde2.objectinspector.PrimitiveObjectInspector;
import org.apache.hadoop.hive.serde2.objectinspector.primitive.PrimitiveObjectInspectorFactory;
import org.apache.hadoop.hive.serde2.objectinspector.primitive.PrimitiveObjectInspectorUtils;
import org.apache.hadoop.io.LongWritable;

/**
 * Goal: implement a sum function that supports int and double types.
 */
public class UdafProject extends AbstractGenericUDAFResolver {

    @Override
    public GenericUDAFEvaluator getEvaluator(GenericUDAFParameterInfo info)
            throws SemanticException {
        // Reject * as the argument
        if (info.isAllColumns()) {
            throw new SemanticException("* is not supported as an argument");
        }
        // Exactly one argument is allowed
        ObjectInspector[] inspector = info.getParameterObjectInspectors();
        if (inspector.length != 1) {
            throw new SemanticException("Only one argument is allowed");
        }
        // The input column must be a primitive type
        if (inspector[0].getCategory() != ObjectInspector.Category.PRIMITIVE) {
            throw new SemanticException("The argument must be a primitive type");
        }
        // Cast to the general PrimitiveObjectInspector so that both writable and
        // Java-object inspectors are accepted
        PrimitiveObjectInspector poi = (PrimitiveObjectInspector) inspector[0];
        // Choose an evaluator based on the primitive type
        switch (poi.getPrimitiveCategory()) {
            case INT:
            case LONG:
            case BYTE:
            case SHORT:
                return new udafLong();
            case FLOAT:
            case DOUBLE:
                return new udafDouble();
            default:
                throw new SemanticException(
                        "The argument must be a numeric primitive type, not string or similar");
        }
    }

    /**
     * Sums integer types.
     */
    public static class udafLong extends GenericUDAFEvaluator {
        // Inspector for the values handed to iterate()/merge() in the current mode
        private transient PrimitiveObjectInspector inputor;

        // Custom aggregation buffer
        static class sumlongagg implements AggregationBuffer {
            long sum;
            boolean empty;
        }

        @Override
        public ObjectInspector init(Mode m, ObjectInspector[] parameters)
                throws HiveException {
            super.init(m, parameters);
            if (parameters.length != 1) {
                throw new UDFArgumentException("Invalid arguments");
            }
            // PARTIAL1/COMPLETE pass the raw column, PARTIAL2/FINAL pass the partial
            // result, so always take the inspector for the current mode
            this.inputor = (PrimitiveObjectInspector) parameters[0];
            // The returned type must match the type of the final sum
            return PrimitiveObjectInspectorFactory.writableLongObjectInspector;
        }

        @Override
        public AggregationBuffer getNewAggregationBuffer() throws HiveException {
            sumlongagg slg = new sumlongagg();
            this.reset(slg);
            return slg;
        }

        @Override
        public void reset(AggregationBuffer agg) throws HiveException {
            sumlongagg slg = (sumlongagg) agg;
            slg.sum = 0;
            slg.empty = true;
        }

        @Override
        public void iterate(AggregationBuffer agg, Object[] parameters)
                throws HiveException {
            if (parameters.length != 1) {
                throw new UDFArgumentException("Wrong arguments");
            }
            // Adding a raw value is the same operation as merging a partial sum
            this.merge(agg, parameters[0]);
        }

        @Override
        public Object terminatePartial(AggregationBuffer agg) throws HiveException {
            // The partial result has the same form as the final result
            return this.terminate(agg);
        }

        @Override
        public void merge(AggregationBuffer agg, Object partial)
                throws HiveException {
            sumlongagg slg = (sumlongagg) agg;
            if (partial != null) {
                slg.sum += PrimitiveObjectInspectorUtils.getLong(partial, inputor);
                slg.empty = false;
            }
        }

        @Override
        public Object terminate(AggregationBuffer agg) throws HiveException {
            sumlongagg slg = (sumlongagg) agg;
            // NULL if no non-NULL value was seen
            if (slg.empty) {
                return null;
            }
            return new LongWritable(slg.sum);
        }
    }

    /**
     * Sums floating-point types.
     */
    public static class udafDouble extends GenericUDAFEvaluator {
        // Inspector for the values handed to iterate()/merge() in the current mode
        private transient PrimitiveObjectInspector input;

        // Custom aggregation buffer
        static class sumdoubleagg implements AggregationBuffer {
            double sum;
            boolean empty;
        }

        @Override
        public ObjectInspector init(Mode m, ObjectInspector[] parameters)
                throws HiveException {
            super.init(m, parameters);
            if (parameters.length != 1) {
                throw new UDFArgumentException("Invalid arguments");
            }
            // Always take the inspector for the current mode (raw column or partial result)
            this.input = (PrimitiveObjectInspector) parameters[0];
            // The returned type must match the type of the final sum
            return PrimitiveObjectInspectorFactory.writableDoubleObjectInspector;
        }

        @Override
        public AggregationBuffer getNewAggregationBuffer() throws HiveException {
            sumdoubleagg sdg = new sumdoubleagg();
            this.reset(sdg);
            return sdg;
        }

        @Override
        public void reset(AggregationBuffer agg) throws HiveException {
            sumdoubleagg sdg = (sumdoubleagg) agg;
            sdg.sum = 0;
            sdg.empty = true;
        }

        @Override
        public void iterate(AggregationBuffer agg, Object[] parameters)
                throws HiveException {
            if (parameters.length != 1) {
                throw new UDFArgumentException("Wrong arguments");
            }
            this.merge(agg, parameters[0]);
        }

        @Override
        public Object terminatePartial(AggregationBuffer agg) throws HiveException {
            return this.terminate(agg);
        }

        @Override
        public void merge(AggregationBuffer agg, Object partial)
                throws HiveException {
            sumdoubleagg sdg = (sumdoubleagg) agg;
            if (partial != null) {
                // Convert the incoming value (not the buffer) to double
                sdg.sum += PrimitiveObjectInspectorUtils.getDouble(partial, input);
                sdg.empty = false;
            }
        }

        @Override
        public Object terminate(AggregationBuffer agg) throws HiveException {
            sumdoubleagg sdg = (sumdoubleagg) agg;
            if (sdg.empty) {
                return null;
            }
            return new DoubleWritable(sdg.sum);
        }
    }
}
```
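The following standalone simulation shows how the evaluator's methods fit together across map and reduce tasks. It reproduces the logic of `udafLong` without Hive: `mapSide` plays a task in `PARTIAL1` mode, and `reduceSide` plays a task in `FINAL` mode. The class and method names are hypothetical. Real Hive passes Writable objects and ObjectInspectors rather than `Long`:

```java
import java.util.Arrays;
import java.util.List;

// Hypothetical simulation of udafLong's logic, without any Hive classes.
class SumLifecycleSketch {
    // Same fields as sumlongagg
    static final class Buffer {
        long sum;
        boolean empty = true;
    }

    // merge(): add a non-null value (raw row or partial sum) to the buffer
    static void merge(Buffer b, Long value) {
        if (value != null) {
            b.sum += value;
            b.empty = false;
        }
    }

    // terminate()/terminatePartial(): null when no non-null value was seen
    static Long terminate(Buffer b) {
        return b.empty ? null : b.sum;
    }

    // PARTIAL1: iterate() over raw rows, then terminatePartial()
    static Long mapSide(List<Long> rows) {
        Buffer b = new Buffer();
        for (Long row : rows) {
            merge(b, row);  // iterate() simply delegates to merge()
        }
        return terminate(b);
    }

    // FINAL: merge() each partial result, then terminate()
    static Long reduceSide(List<Long> partials) {
        Buffer b = new Buffer();
        for (Long p : partials) {
            merge(b, p);
        }
        return terminate(b);
    }

    public static void main(String[] args) {
        Long p1 = mapSide(Arrays.asList(1L, null, 2L));       // 3
        Long p2 = mapSide(Arrays.asList((Long) null, null));  // null: only NULLs seen
        System.out.println(reduceSide(Arrays.asList(p1, p2))); // 3
        System.out.println(reduceSide(Arrays.asList(p2)));     // null
    }
}
```

A task that sees only NULLs produces a NULL partial, and `merge()` skips it. A group with no non-NULL values ends as NULL, which is also what the built-in `sum()` returns for such a group.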

6. Package the code as a jar

Place the jar in /etc/opt/datas/.

7. Add the jar to Hive's classpath

Syntax:

```sql
add jar linux_path;
```

For example:

```sql
add jar /etc/opt/datas/af.jar;
```

8. Create the function

```sql
create temporary function af as 'org.apache.hadoop.hive_udf.UdafProject';
```

9. Run it in Hive and compare with the built-in sum()

```sql
select sum(id),af(id) from stu_info;
```

Part 3: UDTF

1. UDTF

2. Code

```java
package org.apache.hadoop.hive.udf;

import java.util.ArrayList;

import org.apache.hadoop.hive.ql.exec.UDFArgumentException;
import org.apache.hadoop.hive.ql.metadata.HiveException;
import org.apache.hadoop.hive.ql.udf.generic.GenericUDTF;
import org.apache.hadoop.hive.serde2.objectinspector.ObjectInspector;
import org.apache.hadoop.hive.serde2.objectinspector.ObjectInspectorFactory;
import org.apache.hadoop.hive.serde2.objectinspector.StructObjectInspector;
import org.apache.hadoop.hive.serde2.objectinspector.primitive.PrimitiveObjectInspectorFactory;

public class UDTFtest extends GenericUDTF {

    @Override
    public StructObjectInspector initialize(StructObjectInspector argOIs)
            throws UDFArgumentException {
        // Exactly one input column is allowed
        if (argOIs.getAllStructFieldRefs().size() != 1) {
            throw new UDFArgumentException("Only one argument is allowed");
        }
        // Output schema: two string columns, name and email
        ArrayList<String> fieldname = new ArrayList<String>();
        fieldname.add("name");
        fieldname.add("email");
        ArrayList<ObjectInspector> fieldio = new ArrayList<ObjectInspector>();
        fieldio.add(PrimitiveObjectInspectorFactory.javaStringObjectInspector);
        fieldio.add(PrimitiveObjectInspectorFactory.javaStringObjectInspector);
        return ObjectInspectorFactory.getStandardStructObjectInspector(fieldname, fieldio);
    }

    @Override
    public void process(Object[] args) throws HiveException {
        // Called once per input row; each forward() call emits one output row
        if (args.length == 1 && args[0] != null) {
            String name = args[0].toString();
            String email = name + "@ibeifneg.com";
            forward(new String[] {name, email});
        }
    }

    @Override
    public void close() throws HiveException {
        // Called once when processing ends; emits one extra row
        forward(new String[] {"complete", "finish"});
    }
}
```

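3. Repeat steps 6 to 8 for this class: package the jar, add it with add jar, and create a temporary function (named tf below).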

4. Run in Hive

```sql
select tf(ename) as (name,email) from emp;
```
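The shape of this query's result can be sketched without Hive. Each input row becomes one `(name, email)` row, and `close()` appends one `(complete, finish)` row. The standalone class below is a hypothetical simulation of `UDTFtest`, not Hive code, and it assumes a single task. Because `close()` runs once per UDTF instance, a query split across several tasks should emit one such extra row per task:

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical simulation of UDTFtest's output, without any Hive classes.
class UdtfSketch {
    static List<String[]> run(List<String> enames) {
        List<String[]> out = new ArrayList<>();
        for (String ename : enames) {      // process() is called once per input row
            if (ename == null) {
                continue;                  // this sketch skips NULL names
            }
            out.add(new String[] {ename, ename + "@ibeifneg.com"});
        }
        out.add(new String[] {"complete", "finish"});  // close(): once per task
        return out;
    }

    public static void main(String[] args) {
        for (String[] row : run(List.of("SMITH", "ALLEN"))) {
            System.out.println(row[0] + "\t" + row[1]);
        }
    }
}
```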
