Lucene Source Code Analysis (7): Analyzer

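1. Using the Analyzer

The Analyzer is used in the IndexWriter constructor, which obtains it from the IndexWriterConfig via `config.getAnalyzer()`: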

```java
/**
 * Constructs a new IndexWriter per the settings given in <code>conf</code>.
 * If you want to make "live" changes to this writer instance, use
 * {@link #getConfig()}.
 *
 * <p>
 * <b>NOTE:</b> after this writer is created, the given configuration instance
 * cannot be passed to another writer.
 *
 * @param d
 *          the index directory. The index is either created or appended
 *          according to <code>conf.getOpenMode()</code>.
 * @param conf
 *          the configuration settings according to which IndexWriter should
 *          be initialized.
 * @throws IOException
 *           if the directory cannot be read/written to, or if it does not
 *           exist and <code>conf.getOpenMode()</code> is
 *           <code>OpenMode.APPEND</code> or if there is any other low-level
 *           IO error
 */
public IndexWriter(Directory d, IndexWriterConfig conf) throws IOException {
  enableTestPoints = isEnableTestPoints();
  conf.setIndexWriter(this); // prevent reuse by other instances
  config = conf;
  infoStream = config.getInfoStream();
  softDeletesEnabled = config.getSoftDeletesField() != null;
  // obtain the write.lock. If the user configured a timeout,
  // we wrap with a sleeper and this might take some time.
  writeLock = d.obtainLock(WRITE_LOCK_NAME);

  boolean success = false;
  try {
    directoryOrig = d;
    directory = new LockValidatingDirectoryWrapper(d, writeLock);

    analyzer = config.getAnalyzer(); // the Analyzer comes from IndexWriterConfig
    mergeScheduler = config.getMergeScheduler();
    mergeScheduler.setInfoStream(infoStream);
    codec = config.getCodec();
    OpenMode mode = config.getOpenMode();
    final boolean indexExists;
    final boolean create;
    if (mode == OpenMode.CREATE) {
      indexExists = DirectoryReader.indexExists(directory);
      create = true;
    } else if (mode == OpenMode.APPEND) {
      indexExists = true;
      create = false;
    } else {
      // CREATE_OR_APPEND - create only if an index does not exist
      indexExists = DirectoryReader.indexExists(directory);
      create = !indexExists;
    }

    // If index is too old, reading the segments will throw
    // IndexFormatTooOldException.

    String[] files = directory.listAll();

    // Set up our initial SegmentInfos:
    IndexCommit commit = config.getIndexCommit();

    // Set up our initial SegmentInfos:
    StandardDirectoryReader reader;
    if (commit == null) {
      reader = null;
    } else {
      reader = commit.getReader();
    }

    if (create) {

      if (config.getIndexCommit() != null) {
        // We cannot both open from a commit point and create:
        if (mode == OpenMode.CREATE) {
          throw new IllegalArgumentException("cannot use IndexWriterConfig.setIndexCommit() with OpenMode.CREATE");
        } else {
          throw new IllegalArgumentException("cannot use IndexWriterConfig.setIndexCommit() when index has no commit");
        }
      }

      // Try to read first. This is to allow create
      // against an index that's currently open for
      // searching. In this case we write the next
      // segments_N file with no segments:
      final SegmentInfos sis = new SegmentInfos(Version.LATEST.major);
      if (indexExists) {
        final SegmentInfos previous = SegmentInfos.readLatestCommit(directory);
        sis.updateGenerationVersionAndCounter(previous);
      }
      segmentInfos = sis;
      rollbackSegments = segmentInfos.createBackupSegmentInfos();

      // Record that we have a change (zero out all
      // segments) pending:
      changed();

    } else if (reader != null) {
      // Init from an existing already opened NRT or non-NRT reader:

      if (reader.directory() != commit.getDirectory()) {
        throw new IllegalArgumentException("IndexCommit's reader must have the same directory as the IndexCommit");
      }

      if (reader.directory() != directoryOrig) {
        throw new IllegalArgumentException("IndexCommit's reader must have the same directory passed to IndexWriter");
      }

      if (reader.segmentInfos.getLastGeneration() == 0) {
        // TODO: maybe we could allow this? It's tricky...
        throw new IllegalArgumentException("index must already have an initial commit to open from reader");
      }

      // Must clone because we don't want the incoming NRT reader to "see" any changes this writer now makes:
      segmentInfos = reader.segmentInfos.clone();

      SegmentInfos lastCommit;
      try {
        lastCommit = SegmentInfos.readCommit(directoryOrig, segmentInfos.getSegmentsFileName());
      } catch (IOException ioe) {
        throw new IllegalArgumentException("the provided reader is stale: its prior commit file \"" + segmentInfos.getSegmentsFileName() + "\" is missing from index");
      }

      if (reader.writer != null) {

        // The old writer better be closed (we have the write lock now!):
        assert reader.writer.closed;

        // In case the old writer wrote further segments (which we are now dropping),
        // update SIS metadata so we remain write-once:
        segmentInfos.updateGenerationVersionAndCounter(reader.writer.segmentInfos);
        lastCommit.updateGenerationVersionAndCounter(reader.writer.segmentInfos);
      }

      rollbackSegments = lastCommit.createBackupSegmentInfos();
    } else {
      // Init from either the latest commit point, or an explicit prior commit point:

      String lastSegmentsFile = SegmentInfos.getLastCommitSegmentsFileName(files);
      if (lastSegmentsFile == null) {
        throw new IndexNotFoundException("no segments* file found in " + directory + ": files: " + Arrays.toString(files));
      }

      // Do not use SegmentInfos.read(Directory) since the spooky
      // retrying it does is not necessary here (we hold the write lock):
      segmentInfos = SegmentInfos.readCommit(directoryOrig, lastSegmentsFile);

      if (commit != null) {
        // Swap out all segments, but, keep metadata in
        // SegmentInfos, like version & generation, to
        // preserve write-once. This is important if
        // readers are open against the future commit
        // points.
        if (commit.getDirectory() != directoryOrig) {
          throw new IllegalArgumentException("IndexCommit's directory doesn't match my directory, expected=" + directoryOrig + ", got=" + commit.getDirectory());
        }

        SegmentInfos oldInfos = SegmentInfos.readCommit(directoryOrig, commit.getSegmentsFileName());
        segmentInfos.replace(oldInfos);
        changed();

        if (infoStream.isEnabled("IW")) {
          infoStream.message("IW", "init: loaded commit \"" + commit.getSegmentsFileName() + "\"");
        }
      }

      rollbackSegments = segmentInfos.createBackupSegmentInfos();
    }

    commitUserData = new HashMap<>(segmentInfos.getUserData()).entrySet();

    pendingNumDocs.set(segmentInfos.totalMaxDoc());

    // start with previous field numbers, but new FieldInfos
    // NOTE: this is correct even for an NRT reader because we'll pull FieldInfos even for the un-committed segments:
    globalFieldNumberMap = getFieldNumberMap();

    validateIndexSort();

    config.getFlushPolicy().init(config);
    bufferedUpdatesStream = new BufferedUpdatesStream(infoStream);
    docWriter = new DocumentsWriter(flushNotifications, segmentInfos.getIndexCreatedVersionMajor(), pendingNumDocs,
        enableTestPoints, this::newSegmentName,
        config, directoryOrig, directory, globalFieldNumberMap);
    readerPool = new ReaderPool(directory, directoryOrig, segmentInfos, globalFieldNumberMap,
        bufferedUpdatesStream::getCompletedDelGen, infoStream, conf.getSoftDeletesField(), reader);
    if (config.getReaderPooling()) {
      readerPool.enableReaderPooling();
    }
    // Default deleter (for backwards compatibility) is
    // KeepOnlyLastCommitDeleter:

    // Sync'd is silly here, but IFD asserts we sync'd on the IW instance:
    synchronized (this) {
      deleter = new IndexFileDeleter(files, directoryOrig, directory,
                                     config.getIndexDeletionPolicy(),
                                     segmentInfos, infoStream, this,
                                     indexExists, reader != null);

      // We incRef all files when we return an NRT reader from IW, so all files must exist even in the NRT case:
      assert create || filesExist(segmentInfos);
    }

    if (deleter.startingCommitDeleted) {
      // Deletion policy deleted the "head" commit point.
      // We have to mark ourself as changed so that if we
      // are closed w/o any further changes we write a new
      // segments_N file.
      changed();
    }

    if (reader != null) {
      // We always assume we are carrying over incoming changes when opening from reader:
      segmentInfos.changed();
      changed();
    }

    if (infoStream.isEnabled("IW")) {
      infoStream.message("IW", "init: create=" + create + " reader=" + reader);
      messageState();
    }

    success = true;

  } finally {
    if (!success) {
      if (infoStream.isEnabled("IW")) {
        infoStream.message("IW", "init: hit exception on init; releasing write lock");
      }
      IOUtils.closeWhileHandlingException(writeLock);
      writeLock = null;
    }
  }
}
```
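
The `OpenMode` branch near the top of the constructor derives two flags, `indexExists` and `create`, and the `if (create)` branch then refuses an explicit `IndexCommit`. The stdlib-only sketch below isolates that decision table so it can be run on its own. `OpenModeSketch`, `Decision` and `decide` are hypothetical names invented for illustration, not Lucene API, and the boolean `indexOnDisk` stands in for the real `DirectoryReader.indexExists(directory)` probe.

```java
// Hypothetical, simplified model of the OpenMode handling in IndexWriter's constructor.
// Not Lucene API: the real constructor probes the Directory with DirectoryReader.indexExists().
final class OpenModeSketch {

    enum OpenMode { CREATE, APPEND, CREATE_OR_APPEND }

    /** The two flags the constructor derives before it loads SegmentInfos. */
    static final class Decision {
        final boolean indexExists;
        final boolean create;

        Decision(boolean indexExists, boolean create) {
            this.indexExists = indexExists;
            this.create = create;
        }
    }

    /**
     * @param mode           the configured open mode
     * @param indexOnDisk    stands in for DirectoryReader.indexExists(directory)
     * @param hasIndexCommit whether IndexWriterConfig.setIndexCommit() was used
     */
    static Decision decide(OpenMode mode, boolean indexOnDisk, boolean hasIndexCommit) {
        final boolean indexExists;
        final boolean create;
        if (mode == OpenMode.CREATE) {
            indexExists = indexOnDisk;
            create = true;
        } else if (mode == OpenMode.APPEND) {
            // APPEND simply assumes an index exists; if it does not, the later lookup of
            // the segments_N file fails with IndexNotFoundException.
            indexExists = true;
            create = false;
        } else {
            // CREATE_OR_APPEND: create only if no index exists yet
            indexExists = indexOnDisk;
            create = !indexOnDisk;
        }
        // Mirrors the first check in the `if (create)` branch: a commit point cannot be combined with creating.
        if (create && hasIndexCommit) {
            throw new IllegalArgumentException(mode == OpenMode.CREATE
                    ? "cannot use IndexWriterConfig.setIndexCommit() with OpenMode.CREATE"
                    : "cannot use IndexWriterConfig.setIndexCommit() when index has no commit");
        }
        return new Decision(indexExists, create);
    }

    public static void main(String[] args) {
        for (OpenMode mode : OpenMode.values()) {
            for (boolean onDisk : new boolean[] {false, true}) {
                Decision d = decide(mode, onDisk, false);
                System.out.println(mode + " indexOnDisk=" + onDisk
                        + " -> indexExists=" + d.indexExists + ", create=" + d.create);
            }
        }
    }
}
```

Running `main` prints all six mode/index combinations; for instance, `CREATE_OR_APPEND` on an existing index yields `create=false`, which is why that mode falls through to loading the latest commit.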

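The constructor also follows a common Lucene idiom: obtain `write.lock` first, run the rest of initialization inside `try { ... success = true; } finally { if (!success) ... }`, and release the lock only if initialization failed. A minimal sketch of that idiom follows. `InitWithLockSketch` and `SimpleLock` are hypothetical stand-ins for `IndexWriter` and the `Lock` returned by `Directory.obtainLock`, and a plain `close()` replaces `IOUtils.closeWhileHandlingException`.

```java
import java.io.Closeable;

// Hypothetical sketch of the "success flag + finally" idiom used by IndexWriter's constructor.
// SimpleLock stands in for the write.lock returned by Directory.obtainLock(); it is not Lucene API.
final class InitWithLockSketch {

    /** A toy exclusive lock: at most one holder at a time (process-wide). */
    static final class SimpleLock implements Closeable {
        private static boolean held = false;

        static synchronized SimpleLock obtain() {
            if (held) {
                throw new IllegalStateException("lock already held");
            }
            held = true;
            return new SimpleLock();
        }

        static synchronized boolean isHeld() {
            return held;
        }

        @Override
        public void close() {
            synchronized (SimpleLock.class) {
                held = false;
            }
        }
    }

    SimpleLock writeLock;

    /** @param failInit simulates an exception thrown during initialization */
    InitWithLockSketch(boolean failInit) {
        writeLock = SimpleLock.obtain(); // acquire the lock before anything else
        boolean success = false;
        try {
            if (failInit) {
                throw new IllegalArgumentException("simulated init failure");
            }
            // ... the rest of initialization would go here ...
            success = true;
        } finally {
            if (!success) {
                // Release only on failure; a successfully built object keeps holding the lock.
                writeLock.close();
                writeLock = null;
            }
        }
    }

    public static void main(String[] args) {
        try {
            new InitWithLockSketch(true);
        } catch (IllegalArgumentException e) {
            System.out.println("init failed, lock held: " + SimpleLock.isHeld()); // prints false
        }
        InitWithLockSketch ok = new InitWithLockSketch(false);
        System.out.println("init succeeded, lock held: " + SimpleLock.isHeld()); // prints true
        ok.writeLock.close();
    }
}
```

Using a `success` flag instead of a `catch` block releases the lock for any `Throwable`, including `Error`s, without having to catch and rethrow it.
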
2. Definition of the Analyzer

```java
/**
 * An Analyzer builds TokenStreams, which analyze text. It thus represents a
 * policy for extracting index terms from text.
 * <p>
 * In order to define what analysis is done, subclasses must define their
 * {@link TokenStreamComponents TokenStreamComponents} in {@link #createComponents(String)}.
 * The components are then reused in each call to {@link #tokenStream(String, Reader)}.
 * <p>
 * Simple example:
 * <pre class="prettyprint">
 * Analyzer analyzer = new Analyzer() {
 *  {@literal @Override}
 *   protected TokenStreamComponents createComponents(String fieldName) {
 *     Tokenizer source = new FooTokenizer();
 *     TokenStream filter = new FooFilter(source);
 *     filter = new BarFilter(filter);
 *     return new TokenStreamComponents(source, filter);
 *   }
 *   {@literal @Override}
 *   protected TokenStream normalize(TokenStream in) {
 *     // Assuming FooFilter is about normalization and BarFilter is about
 *     // stemming, only FooFilter should be applied
 *     return new FooFilter(in);
 *   }
 * };
 * </pre>
 * For more examples, see the {@link org.apache.lucene.analysis Analysis package documentation}.
 * <p>
 * For some concrete implementations bundled with Lucene, look in the analysis modules:
 * <ul>
 *   <li><a href="{@docRoot}/../analyzers-common/overview-summary.html">Common</a>:
 *       Analyzers for indexing content in different languages and domains.
 *   <li><a href="{@docRoot}/../analyzers-icu/overview-summary.html">ICU</a>:
 *       Exposes functionality from ICU to Apache Lucene.
 *   <li><a href="{@docRoot}/../analyzers-kuromoji/overview-summary.html">Kuromoji</a>:
 *       Morphological analyzer for Japanese text.
 *   <li><a href="{@docRoot}/../analyzers-morfologik/overview-summary.html">Morfologik</a>:
 *       Dictionary-driven lemmatization for the Polish language.
 *   <li><a href="{@docRoot}/../analyzers-phonetic/overview-summary.html">Phonetic</a>:
 *       Analysis for indexing phonetic signatures (for sounds-alike search).
 *   <li><a href="{@docRoot}/../analyzers-smartcn/overview-summary.html">Smart Chinese</a>:
 *       Analyzer for Simplified Chinese, which indexes words.
 *   <li><a href="{@docRoot}/../analyzers-stempel/overview-summary.html">Stempel</a>:
 *       Algorithmic Stemmer for the Polish Language.
 * </ul>
 */
```
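
The Javadoc's Foo/Bar example describes three ideas: `createComponents` wires a `Tokenizer` into a chain of filters, the components are reused across `tokenStream` calls, and `normalize` applies only the normalization part of the chain. The sketch below models these with plain Java lists. `MiniAnalyzer`, `Components`, the whitespace tokenizer and the plural-stripping "stemmer" are hypothetical stand-ins, not Lucene classes. The cache here is keyed per field, which is closer to `Analyzer.PER_FIELD_REUSE_STRATEGY` than to Lucene's default `GLOBAL_REUSE_STRATEGY`, which shares one set of components across all fields of a thread.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.function.Function;
import java.util.function.UnaryOperator;

// Hypothetical, list-based model of an Analyzer chain (Tokenizer -> filters), for illustration only.
// Lucene's real TokenStream is an incremental, attribute-based iterator; only the structure is mirrored.
final class MiniAnalyzer {

    /** Stand-in for TokenStreamComponents: the source tokenizer plus the end of the filter chain. */
    static final class Components {
        final Function<String, List<String>> source;
        final UnaryOperator<List<String>> sink;

        Components(Function<String, List<String>> source, UnaryOperator<List<String>> sink) {
            this.source = source;
            this.sink = sink;
        }
    }

    // Components are built once per field name and then reused.
    private final Map<String, Components> reuse = new HashMap<>();
    int componentsCreated = 0;

    /** Plays the role of FooTokenizer: split on whitespace. */
    static List<String> whitespaceTokenizer(String text) {
        List<String> tokens = new ArrayList<>();
        for (String t : text.trim().split("\\s+")) {
            if (!t.isEmpty()) {
                tokens.add(t);
            }
        }
        return tokens;
    }

    /** Plays the role of FooFilter (normalization): lowercase every token. */
    static List<String> lowerCaseFilter(List<String> in) {
        List<String> out = new ArrayList<>();
        for (String t : in) {
            out.add(t.toLowerCase(Locale.ROOT));
        }
        return out;
    }

    /** Plays the role of BarFilter (stemming): a toy rule that drops a plural "s"; not a real stemmer. */
    static List<String> naivePluralFilter(List<String> in) {
        List<String> out = new ArrayList<>();
        for (String t : in) {
            boolean plural = t.length() > 3 && t.endsWith("s") && !t.endsWith("ss");
            out.add(plural ? t.substring(0, t.length() - 1) : t);
        }
        return out;
    }

    /** Analogue of createComponents(String): wire source -> FooFilter -> BarFilter. */
    Components createComponents(String fieldName) {
        componentsCreated++;
        return new Components(MiniAnalyzer::whitespaceTokenizer,
                tokens -> naivePluralFilter(lowerCaseFilter(tokens)));
    }

    /** Analogue of tokenStream(String, Reader): reuse the cached components for the field. */
    List<String> tokenStream(String fieldName, String text) {
        Components c = reuse.computeIfAbsent(fieldName, this::createComponents);
        return c.sink.apply(c.source.apply(text));
    }

    /** Analogue of normalize(): apply only the normalization step, no tokenizing or stemming. */
    String normalize(String fieldName, String text) {
        return text.toLowerCase(Locale.ROOT);
    }

    public static void main(String[] args) {
        MiniAnalyzer analyzer = new MiniAnalyzer();
        System.out.println(analyzer.tokenStream("body", "Red Apples and BANANAS")); // [red, apple, and, banana]
        System.out.println(analyzer.normalize("body", "Red Apples"));             // red apples
    }
}
```

Keeping `normalize` separate from the full chain matters for query-time inputs such as wildcard terms, where lowercasing is wanted but stemming would change the pattern.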

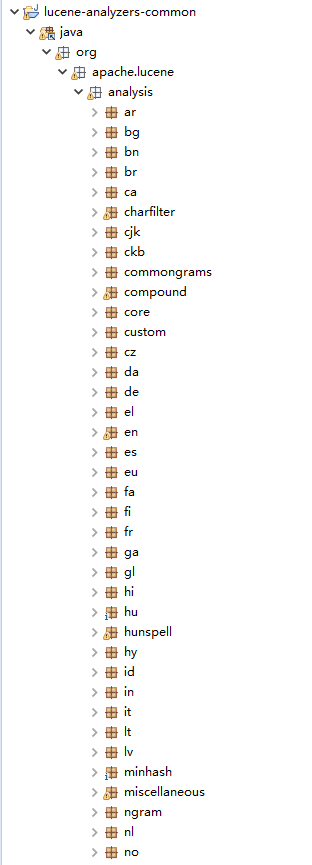

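As the Javadoc shows, Lucene provides different Analyzer implementations for different languages, packaged in separate analysis modules.

Of these, the common module (analyzers-common) gathers the general-purpose Analyzer classes, as shown in the figure below.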
