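Using the Spark interpreter in Zeppelin

Zeppelin version: 0.5.6

Zeppelin ships with a bundled local Spark by default, so it does not depend on any cluster: download the binary package, extract it, and it is ready to use.

The steps below cover the other case: using an external Spark cluster in YARN mode.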

Configuration:

vi zeppelin-env.sh

Add:

export SPARK_HOME=/usr/crh/current/spark-client

export SPARK_SUBMIT_OPTIONS="--driver-memory 512M --executor-memory 1G"

export HADOOP_CONF_DIR=/etc/hadoop/conf
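The three exports above can also be appended from a script instead of editing the file by hand. The following is only a minimal sketch: the helper name `add_export` and the `ENV_FILE` variable are hypothetical, and `ENV_FILE` defaults to a scratch file so the sketch can be tried safely. Point it at your real `zeppelin-env.sh` to apply the change.

```shell
#!/bin/sh
# Append `export NAME=VALUE` to an env file unless NAME is already exported there.
# add_export is a hypothetical helper, not part of Zeppelin.
add_export() {
    file=$1
    name=$2
    value=$3
    if grep -q "^export ${name}=" "$file" 2>/dev/null; then
        echo "skip: ${name} already set in ${file}"
    else
        printf 'export %s=%s\n' "$name" "$value" >> "$file"
        echo "added: ${name}"
    fi
}

# Assumption: defaults to a scratch file; set ENV_FILE to the real
# zeppelin-env.sh of your installation before running.
ENV_FILE=${ENV_FILE:-$(mktemp)}

# Values copied from the article; adjust them to your own cluster layout.
add_export "$ENV_FILE" SPARK_HOME /usr/crh/current/spark-client
add_export "$ENV_FILE" SPARK_SUBMIT_OPTIONS '"--driver-memory 512M --executor-memory 1G"'
add_export "$ENV_FILE" HADOOP_CONF_DIR /etc/hadoop/conf
```

Running the script a second time against the same file leaves it unchanged, because each variable is only appended when no matching `export` line exists yet.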

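Zeppelin interpreter configuration

Note: restart the interpreter after changing the settings.

The master property under Properties is set as follows:

Create a new notebook

Tip: a few months ago Zeppelin was at 0.5.6, and the latest release is now 0.6.2. In Zeppelin 0.5.6 every notebook paragraph must start with %spark, whereas in 0.6.2 a paragraph with no prefix defaults to Scala.

Without the prefix, Zeppelin 0.5.6 fails with the following error: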

Connect to 'databank:4300' failed

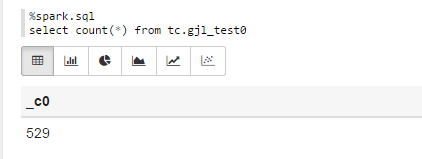

%spark.sql

select count(*) from tc.gjl_test0

This query fails with:

com.fasterxml.jackson.databind.JsonMappingException: Could not find creator property with name 'id' (in class org.apache.spark.rdd.RDDOperationScope)

at [Source: {"id":"2","name":"ConvertToSafe"}; line: 1, column: 1]

at com.fasterxml.jackson.databind.JsonMappingException.from(JsonMappingException.java:148)

at com.fasterxml.jackson.databind.DeserializationContext.mappingException(DeserializationContext.java:843)

at com.fasterxml.jackson.databind.deser.BeanDeserializerFactory.addBeanProps(BeanDeserializerFactory.java:533)

at com.fasterxml.jackson.databind.deser.BeanDeserializerFactory.buildBeanDeserializer(BeanDeserializerFactory.java:220)

at com.fasterxml.jackson.databind.deser.BeanDeserializerFactory.createBeanDeserializer(BeanDeserializerFactory.java:143)

at com.fasterxml.jackson.databind.deser.DeserializerCache._createDeserializer2(DeserializerCache.java:409)

at com.fasterxml.jackson.databind.deser.DeserializerCache._createDeserializer(DeserializerCache.java:358)

at com.fasterxml.jackson.databind.deser.DeserializerCache._createAndCache2(DeserializerCache.java:265)

at com.fasterxml.jackson.databind.deser.DeserializerCache._createAndCacheValueDeserializer(DeserializerCache.java:245)

at com.fasterxml.jackson.databind.deser.DeserializerCache.findValueDeserializer(DeserializerCache.java:143)

at com.fasterxml.jackson.databind.DeserializationContext.findRootValueDeserializer(DeserializationContext.java:439)

at com.fasterxml.jackson.databind.ObjectMapper._findRootDeserializer(ObjectMapper.java:3666)

at com.fasterxml.jackson.databind.ObjectMapper._readMapAndClose(ObjectMapper.java:3558)

at com.fasterxml.jackson.databind.ObjectMapper.readValue(ObjectMapper.java:2578)

at org.apache.spark.rdd.RDDOperationScope$.fromJson(RDDOperationScope.scala:85)

at org.apache.spark.rdd.RDDOperationScope$$anonfun$5.apply(RDDOperationScope.scala:136)

at org.apache.spark.rdd.RDDOperationScope$$anonfun$5.apply(RDDOperationScope.scala:136)

at scala.Option.map(Option.scala:145)

at org.apache.spark.rdd.RDDOperationScope$.withScope(RDDOperationScope.scala:136)

at org.apache.spark.sql.execution.SparkPlan.execute(SparkPlan.scala:130)

at org.apache.spark.sql.execution.ConvertToSafe.doExecute(rowFormatConverters.scala:56)

at org.apache.spark.sql.execution.SparkPlan$$anonfun$execute$5.apply(SparkPlan.scala:132)

at org.apache.spark.sql.execution.SparkPlan$$anonfun$execute$5.apply(SparkPlan.scala:130)

at org.apache.spark.rdd.RDDOperationScope$.withScope(RDDOperationScope.scala:150)

at org.apache.spark.sql.execution.SparkPlan.execute(SparkPlan.scala:130)

at org.apache.spark.sql.execution.SparkPlan.executeTake(SparkPlan.scala:187)

at org.apache.spark.sql.execution.Limit.executeCollect(basicOperators.scala:165)

at org.apache.spark.sql.execution.SparkPlan.executeCollectPublic(SparkPlan.scala:174)

at org.apache.spark.sql.DataFrame$$anonfun$org$apache$spark$sql$DataFrame$$execute$1$1.apply(DataFrame.scala:1499)

at org.apache.spark.sql.DataFrame$$anonfun$org$apache$spark$sql$DataFrame$$execute$1$1.apply(DataFrame.scala:1499)

at org.apache.spark.sql.execution.SQLExecution$.withNewExecutionId(SQLExecution.scala:56)

at org.apache.spark.sql.DataFrame.withNewExecutionId(DataFrame.scala:2086)

at org.apache.spark.sql.DataFrame.org$apache$spark$sql$DataFrame$$execute$1(DataFrame.scala:1498)

at org.apache.spark.sql.DataFrame.org$apache$spark$sql$DataFrame$$collect(DataFrame.scala:1505)

at org.apache.spark.sql.DataFrame$$anonfun$head$1.apply(DataFrame.scala:1375)

at org.apache.spark.sql.DataFrame$$anonfun$head$1.apply(DataFrame.scala:1374)

at org.apache.spark.sql.DataFrame.withCallback(DataFrame.scala:2099)

at org.apache.spark.sql.DataFrame.head(DataFrame.scala:1374)

at org.apache.spark.sql.DataFrame.take(DataFrame.scala:1456)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:606)

at org.apache.zeppelin.spark.ZeppelinContext.showDF(ZeppelinContext.java:297)

at org.apache.zeppelin.spark.SparkSqlInterpreter.interpret(SparkSqlInterpreter.java:144)

at org.apache.zeppelin.interpreter.ClassloaderInterpreter.interpret(ClassloaderInterpreter.java:57)

at org.apache.zeppelin.interpreter.LazyOpenInterpreter.interpret(LazyOpenInterpreter.java:93)

at org.apache.zeppelin.interpreter.remote.RemoteInterpreterServer$InterpretJob.jobRun(RemoteInterpreterServer.java:300)

at org.apache.zeppelin.scheduler.Job.run(Job.java:169)

at org.apache.zeppelin.scheduler.FIFOScheduler$1.run(FIFOScheduler.java:134)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:471)

at java.util.concurrent.FutureTask.run(FutureTask.java:262)

at java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.access$201(ScheduledThreadPoolExecutor.java:178)

at java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.run(ScheduledThreadPoolExecutor.java:292)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1145)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:615)

at java.lang.Thread.run(Thread.java:745)

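Cause: the Jackson jars bundled with Zeppelin (2.5.x) are at the wrong version for this setup, and replacing them with 2.4.4 resolves the error.

In the /opt/zeppelin-0.5.6-incubating-bin-all directory: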

# ls lib |grep jackson

jackson-annotations-2.5.0.jar

jackson-core-2.5.3.jar

jackson-databind-2.5.3.jar

Replace these jars with the following versions:

# ls lib |grep jackson

jackson-annotations-2.4.4.jar

jackson-core-2.4.4.jar

jackson-databind-2.4.4.jar
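The replacement can be scripted so that the original jars are kept as a backup. This is a sketch under assumptions, not a step from the original procedure: the function name `swap_jackson_jars` is hypothetical, and the 2.4.4 jars are assumed to have been downloaded into a separate directory beforehand.

```shell
#!/bin/sh
# Move jackson-*.jar out of LIB_DIR into a sibling backup folder, then copy
# the replacement jars from NEW_DIR into LIB_DIR.
# swap_jackson_jars is a hypothetical helper, not part of Zeppelin.
swap_jackson_jars() {
    lib_dir=$1
    new_dir=$2
    # Keep the backup outside lib so the old jars are no longer on the classpath.
    backup_dir="$(dirname "$lib_dir")/jackson-backup"

    # Refuse to touch anything if there are no replacement jars to copy in.
    if ! ls "$new_dir"/jackson-*.jar >/dev/null 2>&1; then
        echo "no jackson jars found in $new_dir" >&2
        return 1
    fi

    mkdir -p "$backup_dir" || return 1
    for jar in "$lib_dir"/jackson-*.jar; do
        [ -e "$jar" ] || continue   # glob matched nothing
        mv "$jar" "$backup_dir"/ || return 1
    done
    cp "$new_dir"/jackson-*.jar "$lib_dir"/ || return 1

    # Same check as in the article: list what is now in lib.
    ls "$lib_dir" | grep jackson
}

# Example call (the second path is a placeholder for wherever the
# 2.4.4 jars were downloaded):
# swap_jackson_jars /opt/zeppelin-0.5.6-incubating-bin-all/lib /path/to/jackson-2.4.4
```

Restart Zeppelin after swapping the jars so that the interpreter process picks up the new versions.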

With the jars replaced, the query succeeds:

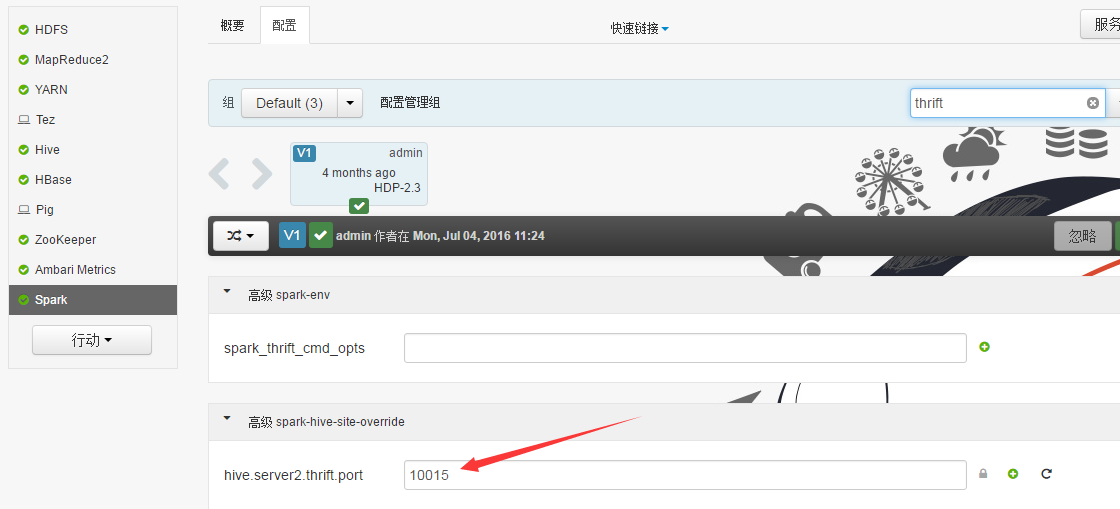

Spark SQL can also be reached directly through a Hive JDBC connection; only the port needs to be changed, as shown in the figure below:
