Hadoop基础-完全分布式模式部署yarn日志聚集功能

Hadoop基础-完全分布式模式部署yarn日志聚集功能

作者:尹正杰

版权声明:原创作品,谢绝转载!否则将追究法律责任。

其实我们不用配置也可以在服务器后台通过命令行的形式查看相应的日志,但为了更方便查看日志,我们可以将其配置成通过webUI的形式访问日志,本篇博客会手把手的教你如何实操。如果你的集群配置比较低的话,并不建议开启日志,但是一般的大数据集群,服务器配置应该都不低,不过最好根据实际情况考虑。

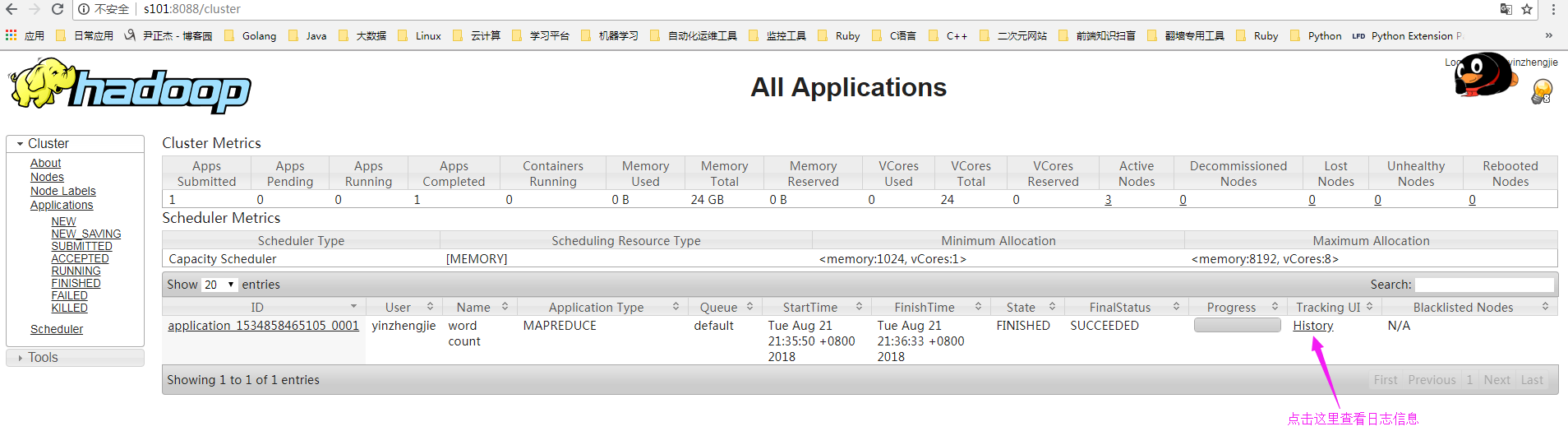

一.查看日志信息

1>.通过web界面查看日志信息

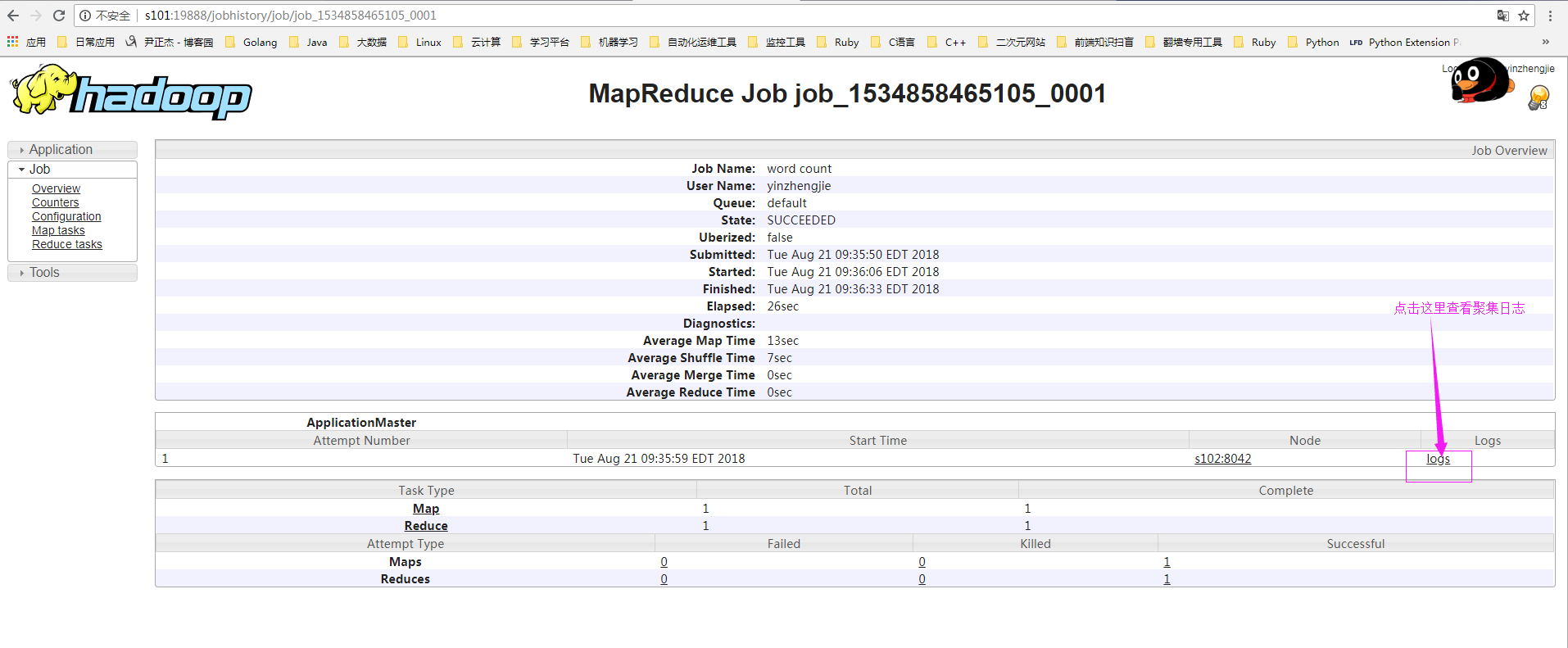

2>.webUI默认是无法查看到日志信息

3>.通过命令行查看

日志默认存放在安装hadoop目录的logs文件夹中,其实我们不用配置web页面也可以查看相应的日志,下图就是通过命令行的形式查看日志。

二.配置日志聚集功能

1>.停止hadoop集群

[yinzhengjie@s101 ~]$ stop-dfs.sh

Stopping namenodes on [s101 s105]

s101: stopping namenode

s105: stopping namenode

s103: stopping datanode

s104: stopping datanode

s102: stopping datanode

Stopping journal nodes [s102 s103 s104]

s102: stopping journalnode

s104: stopping journalnode

s103: stopping journalnode

Stopping ZK Failover Controllers on NN hosts [s101 s105]

s101: stopping zkfc

s105: stopping zkfc

[yinzhengjie@s101 ~]$

停止hdfs分布式文件系统([yinzhengjie@s101 ~]$ stop-dfs.sh )

[yinzhengjie@s101 ~]$ stop-yarn.sh

stopping yarn daemons

s101: stopping resourcemanager

s105: no resourcemanager to stop

s102: stopping nodemanager

s104: stopping nodemanager

s103: stopping nodemanager

s102: nodemanager did not stop gracefully after seconds: killing with kill -

s104: nodemanager did not stop gracefully after seconds: killing with kill -

s103: nodemanager did not stop gracefully after seconds: killing with kill -

no proxyserver to stop

[yinzhengjie@s101 ~]$

停止yarn集群([yinzhengjie@s101 ~]$ stop-yarn.sh )

[yinzhengjie@s101 ~]$ mr-jobhistory-daemon.sh stop historyserver

stopping historyserver

[yinzhengjie@s101 ~]$

停止yarn日志服务([yinzhengjie@s101 ~]$ mr-jobhistory-daemon.sh stop historyserver)

[yinzhengjie@s101 ~]$ more `which xcall.sh`

#!/bin/bash

#@author :yinzhengjie

#blog:http://www.cnblogs.com/yinzhengjie

#EMAIL:y1053419035@qq.com #判断用户是否传参

if [ $# -lt ];then

echo "请输入参数"

exit

fi #获取用户输入的命令

cmd=$@ for (( i=;i<=;i++ ))

do

#使终端变绿色

tput setaf

echo ============= s$i $cmd ============

#使终端变回原来的颜色,即白灰色

tput setaf

#远程执行命令

ssh s$i $cmd

#判断命令是否执行成功

if [ $? == ];then

echo "命令执行成功"

fi

done

[yinzhengjie@s101 ~]$

[yinzhengjie@s101 ~]$ xcall.sh jps

============= s101 jps ============

Jps

命令执行成功

============= s102 jps ============

Jps

QuorumPeerMain

命令执行成功

============= s103 jps ============

Jps

QuorumPeerMain

命令执行成功

============= s104 jps ============

QuorumPeerMain

Jps

命令执行成功

============= s105 jps ============

Jps

命令执行成功

[yinzhengjie@s101 ~]$

检查是否停止成功([yinzhengjie@s101 ~]$ xcall.sh jps)

2>.修改“yarn-site.xml”配置文件

[yinzhengjie@s101 ~]$ more /soft/hadoop/etc/hadoop/yarn-site.xml

<?xml version="1.0"?>

<configuration>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>s101</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property> <property>

<name>yarn.nodemanager.pmem-check-enabled</name>

<value>false</value>

</property> <property>

<name>yarn.nodemanager.vmem-check-enabled</name>

<value>false</value>

</property> <property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property> <!-- 日志保留时间设置7天 -->

<property>

<name>yarn.log-aggregation.retain-seconds</name>

<value>604800</value>

</property> </configuration> <!-- yarn-site.xml配置文件的作用:

#主要用于配置调度器级别的参数. yarn.resourcemanager.hostname 参数的作用:

#指定资源管理器(resourcemanager)的主机名 yarn.nodemanager.aux-services 参数的作用:

#指定nodemanager使用shuffle yarn.nodemanager.pmem-check-enabled 参数的作用:

#是否启动一个线程检查每个任务正使用的物理内存量,如果任务超出分配值,则直接将其杀掉,默认是true yarn.nodemanager.vmem-check-enabled 参数的作用:

#是否启动一个线程检查每个任务正使用的虚拟内存量,如果任务超出分配值,则直接将其杀掉,默认是true yarn.log-aggregation-enable 参数的作用:

#是否开启webUI日志聚集功能使能,默认为flase yarn.log-aggregation.retain-seconds 参数的作用:

#指定日志保留时间,单位为妙

-->

[yinzhengjie@s101 ~]$

3>.分发配置文件到各个节点

[yinzhengjie@s101 ~]$ more `which xrsync.sh`

#!/bin/bash

#@author :yinzhengjie

#blog:http://www.cnblogs.com/yinzhengjie

#EMAIL:y1053419035@qq.com #判断用户是否传参

if [ $# -lt ];then

echo "请输入参数";

exit

fi #获取文件路径

file=$@ #获取子路径

filename=`basename $file` #获取父路径

dirpath=`dirname $file` #获取完整路径

cd $dirpath

fullpath=`pwd -P` #同步文件到DataNode

for (( i=;i<=;i++ ))

do

#使终端变绿色

tput setaf

echo =========== s$i %file ===========

#使终端变回原来的颜色,即白灰色

tput setaf

#远程执行命令

rsync -lr $filename `whoami`@s$i:$fullpath

#判断命令是否执行成功

if [ $? == ];then

echo "命令执行成功"

fi

done

[yinzhengjie@s101 ~]$

[yinzhengjie@s101 ~]$ xrsync.sh /soft/hadoop-2.7./

=========== s102 %file ===========

命令执行成功

=========== s103 %file ===========

命令执行成功

=========== s104 %file ===========

命令执行成功

=========== s105 %file ===========

命令执行成功

[yinzhengjie@s101 ~]$

4>.启动hadoop集群

[yinzhengjie@s101 ~]$ start-dfs.sh

Starting namenodes on [s101 s105]

s105: starting namenode, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-namenode-s105.out

s101: starting namenode, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-namenode-s101.out

s103: starting datanode, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-datanode-s103.out

s102: starting datanode, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-datanode-s102.out

s104: starting datanode, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-datanode-s104.out

Starting journal nodes [s102 s103 s104]

s102: starting journalnode, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-journalnode-s102.out

s103: starting journalnode, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-journalnode-s103.out

s104: starting journalnode, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-journalnode-s104.out

Starting ZK Failover Controllers on NN hosts [s101 s105]

s105: starting zkfc, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-zkfc-s105.out

s101: starting zkfc, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-zkfc-s101.out

[yinzhengjie@s101 ~]$

启动hdfs分布式文件系统([yinzhengjie@s101 ~]$ start-dfs.sh )

[yinzhengjie@s101 ~]$ start-yarn.sh

starting yarn daemons

s101: starting resourcemanager, logging to /soft/hadoop-2.7./logs/yarn-yinzhengjie-resourcemanager-s101.out

s105: starting resourcemanager, logging to /soft/hadoop-2.7./logs/yarn-yinzhengjie-resourcemanager-s105.out

s102: starting nodemanager, logging to /soft/hadoop-2.7./logs/yarn-yinzhengjie-nodemanager-s102.out

s103: starting nodemanager, logging to /soft/hadoop-2.7./logs/yarn-yinzhengjie-nodemanager-s103.out

s104: starting nodemanager, logging to /soft/hadoop-2.7./logs/yarn-yinzhengjie-nodemanager-s104.out

[yinzhengjie@s101 ~]$

启动yarn集群([yinzhengjie@s101 ~]$ start-yarn.sh )

[yinzhengjie@s101 ~]$ mr-jobhistory-daemon.sh start historyserver

starting historyserver, logging to /soft/hadoop-2.7./logs/mapred-yinzhengjie-historyserver-s101.out

[yinzhengjie@s101 ~]$

启动yarn日志服务([yinzhengjie@s101 ~]$ mr-jobhistory-daemon.sh start historyserver)

[yinzhengjie@s101 ~]$ xcall.sh jps

============= s101 jps ============

DFSZKFailoverController

Jps

NameNode

JobHistoryServer

ResourceManager

命令执行成功

============= s102 jps ============

DataNode

JournalNode

NodeManager

Jps

QuorumPeerMain

命令执行成功

============= s103 jps ============

JournalNode

NodeManager

Jps

DataNode

QuorumPeerMain

命令执行成功

============= s104 jps ============

JournalNode

NodeManager

QuorumPeerMain

Jps

DataNode

命令执行成功

============= s105 jps ============

NameNode

Jps

DFSZKFailoverController

命令执行成功

[yinzhengjie@s101 ~]$

检查集群是否启动成功([yinzhengjie@s101 ~]$ xcall.sh jps)

5>.在yarn上执行MapReduce程序

[yinzhengjie@s101 ~]$ hdfs dfs -rm -R /yinzhengjie/data/output

// :: INFO fs.TrashPolicyDefault: Namenode trash configuration: Deletion interval = minutes, Emptier interval = minutes.

Deleted /yinzhengjie/data/output

[yinzhengjie@s101 ~]$

[yinzhengjie@s101 ~]$ hadoop jar /soft/hadoop/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7..jar wordcount /yinzhengjie/data/input /yinzhengjie/data/output

// :: INFO client.RMProxy: Connecting to ResourceManager at s101/172.30.1.101:

// :: INFO input.FileInputFormat: Total input paths to process :

// :: INFO mapreduce.JobSubmitter: number of splits:

// :: INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1534857666985_0001

// :: INFO impl.YarnClientImpl: Submitted application application_1534857666985_0001

// :: INFO mapreduce.Job: The url to track the job: http://s101:8088/proxy/application_1534857666985_0001/

// :: INFO mapreduce.Job: Running job: job_1534857666985_0001

// :: INFO mapreduce.Job: Job job_1534857666985_0001 running in uber mode : false

// :: INFO mapreduce.Job: map % reduce %

// :: INFO mapreduce.Job: map % reduce %

// :: INFO mapreduce.Job: map % reduce %

// :: INFO mapreduce.Job: Job job_1534857666985_0001 completed successfully

// :: INFO mapreduce.Job: Counters:

File System Counters

FILE: Number of bytes read=

FILE: Number of bytes written=

FILE: Number of read operations=

FILE: Number of large read operations=

FILE: Number of write operations=

HDFS: Number of bytes read=

HDFS: Number of bytes written=

HDFS: Number of read operations=

HDFS: Number of large read operations=

HDFS: Number of write operations=

Job Counters

Launched map tasks=

Launched reduce tasks=

Data-local map tasks=

Total time spent by all maps in occupied slots (ms)=

Total time spent by all reduces in occupied slots (ms)=

Total time spent by all map tasks (ms)=

Total time spent by all reduce tasks (ms)=

Total vcore-milliseconds taken by all map tasks=

Total vcore-milliseconds taken by all reduce tasks=

Total megabyte-milliseconds taken by all map tasks=

Total megabyte-milliseconds taken by all reduce tasks=

Map-Reduce Framework

Map input records=

Map output records=

Map output bytes=

Map output materialized bytes=

Input split bytes=

Combine input records=

Combine output records=

Reduce input groups=

Reduce shuffle bytes=

Reduce input records=

Reduce output records=

Spilled Records=

Shuffled Maps =

Failed Shuffles=

Merged Map outputs=

GC time elapsed (ms)=

CPU time spent (ms)=

Physical memory (bytes) snapshot=

Virtual memory (bytes) snapshot=

Total committed heap usage (bytes)=

Shuffle Errors

BAD_ID=

CONNECTION=

IO_ERROR=

WRONG_LENGTH=

WRONG_MAP=

WRONG_REDUCE=

File Input Format Counters

Bytes Read=

File Output Format Counters

Bytes Written=

[yinzhengjie@s101 ~]$

6>.查看yarn的记录信息

7>.查看历史日志

8>.查看日志信息

Hadoop基础-完全分布式模式部署yarn日志聚集功能的更多相关文章

- 启用yarn日志聚集功能

在yarn-site.xml配置文件中添加如下内容: ##开启日志聚集功能 <property> <name>yarn.log-ag ...

- Hadoop基础-HDFS分布式文件系统的存储

Hadoop基础-HDFS分布式文件系统的存储 作者:尹正杰 版权声明:原创作品,谢绝转载!否则将追究法律责任. 一.HDFS数据块 1>.磁盘中的数据块 每个磁盘都有默认的数据块大小,这个磁盘 ...

- Yarn 的日志聚集功能配置使用

需要 hadoop 的安装目录/etc/hadoop/yarn-site.xml 中进行配置 配置内容 <property> <name>yarn.log-aggregati ...

- hadoop 3.x 配置日志聚集功能

打开$HADOOP_HOME/etc/hadoop/yarn-site.xml,增加以下配置(在此配置文件中尽量不要使用中文注释) <!--logs--> <property> ...

- 开启spark日志聚集功能

spark监控应用方式: 1)在运行过程中可以通过web Ui:4040端口进行监控 2)任务运行完成想要监控spark,需要启动日志聚集功能 开启日志聚集功能方法: 编辑conf/spark-env ...

- Hadoop伪分布式模式部署

Hadoop的安装有三种执行模式: 单机模式(Local (Standalone) Mode):Hadoop的默认模式,0配置.Hadoop执行在一个Java进程中.使用本地文件系统.不使用HDFS, ...

- 用python + hadoop streaming 编写分布式程序(三) -- 自定义功能

又是期末又是实训TA的事耽搁了好久……先把写好的放上博客吧 相关随笔: Hadoop-1.0.4集群搭建笔记 用python + hadoop streaming 编写分布式程序(一) -- 原理介绍 ...

- Hadoop+Hbas完全分布式安装部署

Hadoop安装部署基本步骤: 1.安装jdk,配置环境变量. jdk可以去网上自行下载,环境变量如下: 编辑 vim /etc/profile 文件,添加如下内容: export JAVA_HO ...

- hadoop实战之分布式模式

环境 192.168.1.101 host101 192.168.1.102 host102 1.安装配置host101 [root@host101 ~]# cat /etc/hosts |grep ...

随机推荐

- Freemaker的了解

freemarket 模板技术 与web容器没什么关系 可以用struct2作为视图组件 第一步导入jar包 项目目录下建立一个templates目录 在此目录下建立一个模板文件a.ftl文件 ...

- first time to use github

first time to use github and feeling good. 学习软件工程,老师要求我们用这个软件管理自己的代码,网站是全英的,软件也简单易用,方便 https://githu ...

- 二维数组转化为一维数组 contact 与apply 的结合

将多维数组(尤其是二维数组)转化为一维数组是业务开发中的常用逻辑,除了使用朴素的循环转换以外,我们还可以利用Javascript的语言特性实现更为简洁优雅的转换.本文将从朴素的循环转换开始,逐一介绍三 ...

- git学习笔记2——ProGit2

先附上教程--<ProGit 2> 配置信息 Git 自带一个 git config 的工具来帮助设置控制 Git 外观和行为的配置变量. 这些变量存储在三个不同的位置: /etc/git ...

- WinForm(WPF) splash screen demo with C#

https://www.codeproject.com/Articles/21062/Splash-Screen-Control https://www.codeproject.com/Article ...

- 通过Oracle DUMP 文件获取表的创建语句

1. 有了dump文件之后 想获取表的创建语句. 之前一直不知道 dump文件能够直接解析文件. 今天学习了下 需要的材料. dump文件, dump文件对应的schema和用户. 以及一个版本合适的 ...

- [工作相关] 一个虚拟机上面的SAP4HANA的简单使用维护

1.公司组织竞品分析, 选择了SAP的 SAP4HANA作为竞品 这边协助同事搭建了SAP4HANA的测试环境: 备注 这个环境 应该是同事通过一些渠道获取到的. 里面是基于这个虚拟机进行的说明:: ...

- SAP入行就业

就大局势来说, 缺乏人最多的模块有abap 还有就是FICO 和MM. 如果您 英语水平特别高的话,建议您学习FICO HR 或BW. 如果您想追求高薪,那就是FICO无疑了.想快速就业或者有编程基础 ...

- Qt开发之信号槽机制

一.信号槽机制原理 1.如何声明信号槽 Qt头文件中一段的简化版: class Example: public QObject { Q_OBJECT signals: void customSigna ...

- AXI4

axis AXI4-stream 去掉了地址项,允许无限制的数据突发传输规模: 摘自http://xilinx.eetrend.com/blog/11443