Facebook Gradient boosting 梯度提升 separate the positive and negative labeled points using a single line 梯度提升决策树 Gradient Boosted Decision Trees (GBDT)

https://www.quora.com/Why-do-people-use-gradient-boosted-decision-trees-to-do-feature-transform

Why is linearity/non-linearity important?

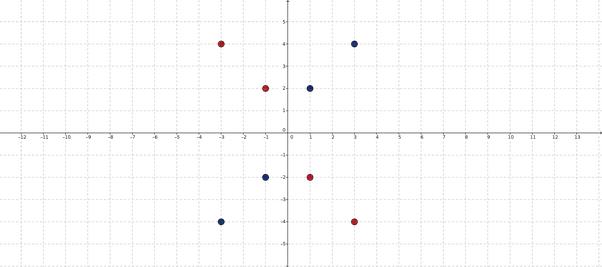

Most of our classification models try to find a single line that separates the two sets of point. I say that they find a line (but a line makes sense for 2 dimensional space), but in higher dimensional space, "a line" is referred to as a Hyperplane. But, for the moment, we will work with 2 dimensional spaces since they are easy to visualize and hence we can simply think that the classifiers try to find lines in this 2D space which will separate the set of points. Now, since the above set of points cannot be separated by a single line, which means most of our classifiers will not work on the above dataset.

How to solve?

There are two ways to solve:

- One way is to explicitly use non-linear classifiers. Non linear classifiers are classifiers that do not try to separate the set of points with a single line but uses either a non-linear separator or a set of linear separators (to make a piece-wise non-linear separator).

- Another way is to transform the input space in such a way that the non-linearity is eliminated and we get a linear feature space.

Second Method

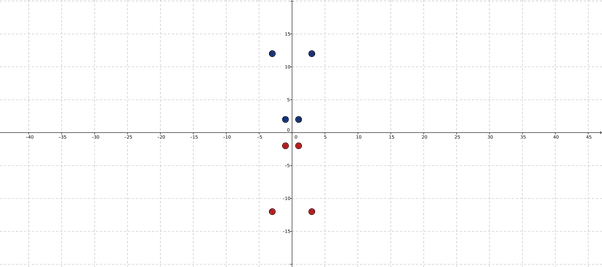

Let us try to find a transformation for the given set of points such that the non linearity is removed. If we carefully see the set of points given to us, we would note that the the label is negative exactly when one of the dimension is negative. If both dimensions are positive or negative, the label given is positive. Therefore, let x=(x1,x2)x=(x1,x2) be a 2D point and let ff be a function that transforms this 2D point to another 2D point as follows: f(x)=(x1,x1x2)f(x)=(x1,x1x2). Let us see what happens to the set of points given to us, when we pass it through the above transformation:

【升维 超线性 分割类】

Oh look at that! Now the two sets of points can be separated by a line! This tells us that now, all our linear classification models will work on this transformed space.

Is it easy to find such transformations?

No, while we were lucky to find a transformation for the above case, in general, it is not so easy to find such a transformation. However, in the above case, I mapped the points from 2D to 2D, but one can map these 2D points to some higher dimensional space as well. There is a theorem called Cover's theorem which states that if you map the points to sufficiently large and high dimensional space, then with high probability, the non-linearity would be erased and that the points will be separated by a line in that high dimensional space.

Evaluating boosted decision trees for billions of users

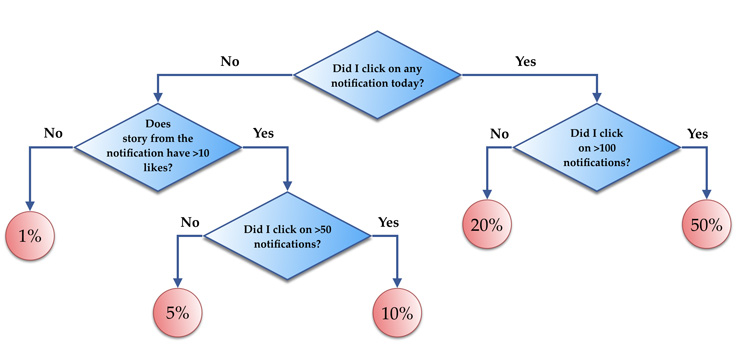

【Facebook点击预测 predicting the probability of clicking a notification】

We trained a boosted decision tree model for predicting the probability of clicking a notification using 256 trees, where each of the trees contains 32 leaves. Next, we compared the CPU usage for feature vector evaluations, where each batch was ranking 1,000 candidates on average. The batch size value N was tuned to be optimal based on the machine L1/L2 cache sizes. We saw the following performance improvements over the flat tree implementation:

- Plain compiled model: 2x improvement.

- Compiled model with annotations: 2.5x improvement.

- Compiled model with annotations, ranges and common/categorical features: 5x improvement.

The performance improvements were similar for different algorithm parameters (128 or 512 trees, 16 or 64 leaves).

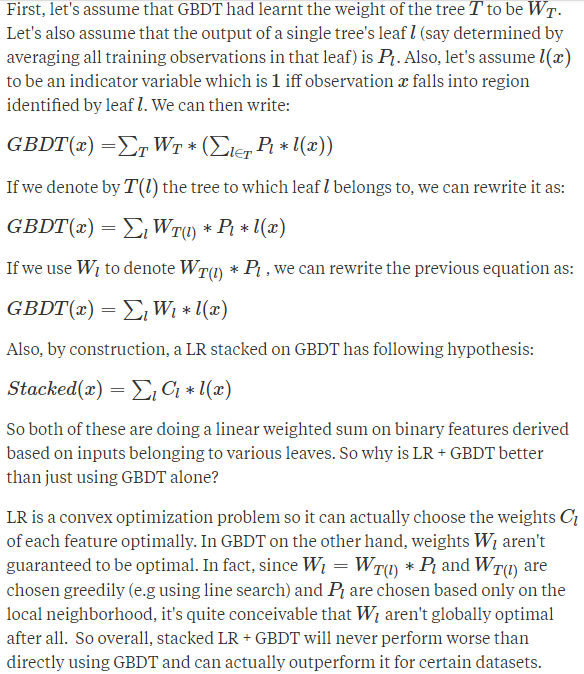

Facebook's paper gives empirical results which show that stacking a logistic regression (LR) on top of gradient boosted decision trees (GBDT) beats just directly using the GBDT on their dataset. Let me try to provide some intuition on why that might be happening.

Facebook's paper gives empirical results which show that stacking a logistic regression (LR) on top of gradient boosted decision trees (GBDT) beats just directly using the GBDT on their dataset. Let me try to provide some intuition on why that might be happening.

First, let's assume that GBDT had learnt the weight of the tree TT to be WTWT. Let's also assume that the output of a single tree's leaf ll (say determined by averaging all training observations in that leaf) is PlPl. Also, let's assume l(x)l(x)to be an indicator variable which is 11 iff observation xx falls into region identified by leaf ll. We can then write:

GBDT(x)=GBDT(x)=∑TWT∗(∑l∈TPl∗l(x))∑TWT∗(∑l∈TPl∗l(x))

If we denote by T(l)T(l) the tree to which leaf ll belongs to, we can rewrite it as:

GBDT(x)=∑lWT(l)∗Pl∗l(x)GBDT(x)=∑lWT(l)∗Pl∗l(x)

If we use WlWl to denote WT(l)∗PlWT(l)∗Pl , we can rewrite the previous equation as:

GBDT(x)=∑lWl∗l(x)GBDT(x)=∑lWl∗l(x)

Also, by construction, a LR stacked on GBDT has following hypothesis:

Stacked(x)=∑lCl∗l(x)Stacked(x)=∑lCl∗l(x)

So both of these are doing a linear weighted sum on binary features derived based on inputs belonging to various leaves. So why is LR + GBDT better than just using GBDT alone?

【堆积LR + GBDT性能优于GBDT 】

LR is a convex optimization problem so it can actually choose the weights ClClof each feature optimally. In GBDT on the other hand, weights WlWl aren't guaranteed to be optimal. In fact, since Wl=WT(l)∗PlWl=WT(l)∗Pl and WT(l)WT(l) are chosen greedily (e.g using line search) and PlPl are chosen based only on the local neighborhood, it's quite conceivable that WlWl aren't globally optimal after all. So overall, stacked LR + GBDT will never perform worse than directly using GBDT and can actually outperform it for certain datasets.

But there is another really important advantage of using the stacked model.

Facebook's paper also shows that data freshness is a very important factor for them and that prediction accuracy degrades as the delay between training and test set increases. It's unrealistic/costly to do online training of GBDT whereas online LR is much easier to do. By stacking an online LR over periodically computed batch GBDT, they are able to retain much of the benefits of online training in a pretty cheap way.

Overall, it's a pretty clever idea that works really well in real-world.

Facebook Gradient boosting 梯度提升 separate the positive and negative labeled points using a single line 梯度提升决策树 Gradient Boosted Decision Trees (GBDT)的更多相关文章

- CatBoost使用GPU实现决策树的快速梯度提升CatBoost Enables Fast Gradient Boosting on Decision Trees Using GPUs

python机器学习-乳腺癌细胞挖掘(博主亲自录制视频)https://study.163.com/course/introduction.htm?courseId=1005269003&ut ...

- How to Configure the Gradient Boosting Algorithm

How to Configure the Gradient Boosting Algorithm by Jason Brownlee on September 12, 2016 in XGBoost ...

- 梯度提升树 Gradient Boosting Decision Tree

Adaboost + CART 用 CART 决策树来作为 Adaboost 的基础学习器 但是问题在于,需要把决策树改成能接收带权样本输入的版本.(need: weighted DTree(D, u ...

- Ensemble Learning 之 Gradient Boosting 与 GBDT

之前一篇写了关于基于权重的 Boosting 方法 Adaboost,本文主要讲述 Boosting 的另一种形式 Gradient Boosting ,在 Adaboost 中样本权重随着分类正确与 ...

- Gradient Boosting Decision Tree学习

Gradient Boosting Decision Tree,即梯度提升树,简称GBDT,也叫GBRT(Gradient Boosting Regression Tree),也称为Multiple ...

- GBDT(Gradient Boosting Decision Tree) 没有实现仅仅有原理

阿弥陀佛.好久没写文章,实在是受不了了.特来填坑,近期实习了(ting)解(shuo)到(le)非常多工业界经常使用的算法.诸如GBDT,CRF,topic model的一些算 ...

- 集成学习之Boosting —— Gradient Boosting原理

集成学习之Boosting -- AdaBoost原理 集成学习之Boosting -- AdaBoost实现 集成学习之Boosting -- Gradient Boosting原理 集成学习之Bo ...

- Gradient Boosting算法简介

最近项目中涉及基于Gradient Boosting Regression 算法拟合时间序列曲线的内容,利用python机器学习包 scikit-learn 中的GradientBoostingReg ...

- 论文笔记:LightGBM: A Highly Efficient Gradient Boosting Decision Tree

引言 GBDT已经有了比较成熟的应用,例如XGBoost和pGBRT,但是在特征维度很高数据量很大的时候依然不够快.一个主要的原因是,对于每个特征,他们都需要遍历每一条数据,对每一个可能的分割点去计算 ...

随机推荐

- 洛谷—— P1609 最小回文数

https://www.luogu.org/problemnew/show/1609 题目描述 回文数是从左向右读和从右向左读结果一样的数字串. 例如:121.44 和3是回文数,175和36不是. ...

- Xamarin XAML语言教程Xamarin.Forms中改变活动指示器颜色

Xamarin XAML语言教程Xamarin.Forms中改变活动指示器颜色 在图12.10~12.12中我们会看到在各个平台下活动指示器的颜色是不一样的.Android的活动指示器默认是深粉色的: ...

- 翻译BonoboService官网的安装教程

This page covers simple Bonobo Git Server installation. Be sure to check prerequisites page before i ...

- Java中的http相关的库:httpclient/httpcore/okhttp/http-request

httpclient/httpcore是apache下面的项目:中文文档下载参考 5 官网:http://hc.apache.org/ 在线文档:http://hc.apache.org/httpco ...

- DB11 TCP数据协议拆包接收主要方法

北京地标(DB11) 据接收器. /// <summary> /// DB11协议拆包器 /// </summary> public class SplictProtocol ...

- SparkStreaming和Drools结合的HelloWord版

关于sparkStreaming的测试Drools框架结合版 package com.dinpay.bdp.rcp.service; import java.math.BigDecimal; impo ...

- PhoneNumber

项目地址:PhoneNumber 简介:一个获取号码归属地和其他信息(诈骗.骚扰等)的开源库 一个获取号码归属地和其他信息(诈骗.骚扰等)的开源库.支持本地离线(含归属地.骚扰.常用号码)和网络( ...

- Synchronous XMLHttpRequest on the main thread is deprecated because of its detrimental……

Synchronous XMLHttpRequest on the main thread is deprecated because of its detrimental effects to th ...

- HDU1272 小希的迷宫(基础并查集)

杭电的图论题目列表.共计500题,努力刷吧 AC 64ms #include <iostream> #include <cstdlib> #include <cstdio ...

- Linux ps 命令查看进程启动及运行时间

引言 同事问我怎样看一个进程的启动时间和运行时间,我第一反应当然是说用 ps 命令啦.ps aux或ps -ef不就可以看时间吗? ps aux选项及输出说明 我们来重新复习下ps aux的选项,这是 ...