Neural Style Study Notes 3: Usage

Basic usage:

th neural_style.lua -style_image <image.jpg> -content_image <image.jpg>

OpenCL usage with the NIN model (this requires you to download the NIN Imagenet model files as described above):

th neural_style.lua -style_image examples/inputs/picasso_selfport1907.jpg -content_image examples/inputs/brad_pitt.jpg -output_image profile.png -model_file models/nin_imagenet_conv.caffemodel -proto_file models/train_val.prototxt -gpu 0 -backend clnn -num_iterations 1000 -seed 123 -content_layers relu0,relu3,relu7,relu12 -style_layers relu0,relu3,relu7,relu12 -content_weight 10 -style_weight 1000 -image_size 512 -optimizer adam

To use multiple style images, pass a comma-separated list like this:

-style_image starry_night.jpg,the_scream.jpg.

Note that paths to images should not contain the ~ character to represent your home directory; you should instead use a relative path or a full absolute path.
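If you launch neural-style from a wrapper script, one way to sidestep the ~ restriction is to expand the paths yourself before building the -style_image argument. The following is a minimal Python sketch; the helper name style_image_arg is hypothetical and not part of neural-style.

```python
import os

def style_image_arg(paths):
    """Expand a leading ~ in each path and join the results with commas,
    which is the format -style_image expects for multiple style images."""
    return ",".join(os.path.expanduser(p) for p in paths)

# The first path is expanded to an absolute path; the relative path is left alone.
print(style_image_arg(["~/art/starry_night.jpg", "the_scream.jpg"]))
```

The resulting string can be passed directly as the value of -style_image.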

Options:

- -image_size: Maximum side length (in pixels) of the generated image. Default is 512.
- -style_blend_weights: The weight for blending the style of multiple style images, as a comma-separated list, such as -style_blend_weights 3,7. By default all style images are equally weighted.
- -gpu: Zero-indexed ID of the GPU to use; for CPU mode set -gpu to -1.
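To make the meaning of a value such as 3,7 concrete, here is a small Python sketch of how relative blend weights can be turned into shares. It assumes the weights are rescaled so that they sum to 1, which is an assumption for illustration and is not stated in the option description above; the function name is hypothetical.

```python
def normalize_blend_weights(spec, num_styles):
    """Turn a -style_blend_weights string such as "3,7" into fractions summing to 1.

    Assumption: weights are relative, so "3,7" and "30,70" mean the same blend.
    """
    if spec is None:
        # No flag given: every style image is weighted equally.
        weights = [1.0] * num_styles
    else:
        weights = [float(w) for w in spec.split(",")]
    if len(weights) != num_styles:
        raise ValueError("need exactly one weight per style image")
    total = sum(weights)
    return [w / total for w in weights]

print(normalize_blend_weights("3,7", 2))
print(normalize_blend_weights(None, 4))
```

Under this reading, 3,7 gives the second style image a bit more than twice the influence of the first.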

Optimization options:

- -content_weight: How much to weight the content reconstruction term. Default is 5e0.
- -style_weight: How much to weight the style reconstruction term. Default is 1e2.
- -tv_weight: Weight of total-variation (TV) regularization; this helps to smooth the image. Default is 1e-3. Set to 0 to disable TV regularization.
- -num_iterations: Default is 1000.
- -init: Method for initializing the generated image; one of random or image. Default is random, which uses a noise initialization as in the paper; image initializes with the content image.
- -optimizer: The optimization algorithm to use; either lbfgs or adam; default is lbfgs. L-BFGS tends to give better results, but uses more memory. Switching to ADAM will reduce memory usage; when using ADAM you will probably need to play with other parameters to get good results, especially the style weight, content weight, and learning rate; you may also want to normalize gradients when using ADAM.
- -learning_rate: Learning rate to use with the ADAM optimizer. Default is 1e1.
- -normalize_gradients: If this flag is present, style and content gradients from each layer will be L1 normalized. Idea from andersbll/neural_artistic_style.
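As an illustration of what "L1 normalized" means for -normalize_gradients, the Python sketch below divides a gradient by the sum of the absolute values of its entries. It works on a flat list of numbers rather than a real tensor, and the small eps guard against division by zero is an assumption made for this example.

```python
def l1_normalize(grad, eps=1e-8):
    """Scale a flat list of gradient values so their absolute values sum to about 1.

    eps is an assumed safeguard so an all-zero gradient does not divide by zero.
    """
    norm = sum(abs(g) for g in grad)
    return [g / (norm + eps) for g in grad]

normalized = l1_normalize([0.5, -1.5, 2.0])
print(normalized)
print(sum(abs(x) for x in normalized))  # very close to 1
```

After this scaling, every layer contributes a gradient of comparable magnitude, which is why the flag can help when tuning ADAM.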

Output options:

- -output_image: Name of the output image. Default is out.png.
- -print_iter: Print progress every print_iter iterations. Set to 0 to disable printing.
- -save_iter: Save the image every save_iter iterations. Set to 0 to disable saving intermediate results.

Layer options:

- -content_layers: Comma-separated list of layer names to use for content reconstruction. Default is relu4_2.
- -style_layers: Comma-separated list of layer names to use for style reconstruction. Default is relu1_1,relu2_1,relu3_1,relu4_1,relu5_1.

Other options:

- -style_scale: Scale at which to extract features from the style image. Default is 1.0.
- -original_colors: If you set this to 1, then the output image will keep the colors of the content image.
- -proto_file: Path to the deploy.txt file for the VGG Caffe model.
- -model_file: Path to the .caffemodel file for the VGG Caffe model. Default is the original VGG-19 model; you can also try the normalized VGG-19 model used in the paper.
- -pooling: The type of pooling layers to use; one of max or avg. Default is max. The VGG-19 model uses max pooling layers, but the paper mentions that replacing these layers with average pooling layers can improve the results. I haven't been able to get good results using average pooling, but the option is here.
- -backend: nn, cudnn, or clnn. Default is nn. cudnn requires cudnn.torch and may reduce memory usage. clnn requires cltorch and clnn.
- -cudnn_autotune: When using the cuDNN backend, pass this flag to use the built-in cuDNN autotuner to select the best convolution algorithms for your architecture. This will make the first iteration a bit slower and can take a bit more memory, but may significantly speed up the cuDNN backend.

Frequently Asked Questions

Problem: Generated image has saturation artifacts:

Solution: Update the image package to the latest version: luarocks install image

Problem: Running without a GPU gives an error message complaining about cutorch not found

Solution: Pass the flag -gpu -1 when running in CPU-only mode.

Problem: The program runs out of memory and dies

Solution: Try reducing the image size: -image_size 256 (or lower). Note that different image sizes will likely require non-default values for -style_weight and -content_weight for optimal results.

If you are running on a GPU, you can also try running with -backend cudnn to reduce memory usage.

Problem: Get the following error message:

models/VGG_ILSVRC_19_layers_deploy.prototxt.cpu.lua:7: attempt to call method 'ceil' (a nil value)

Solution: Update nn package to the latest version: luarocks install nn

Problem: Get an error message complaining about paths.extname

Solution: Update torch.paths package to the latest version: luarocks install paths

Problem: NIN Imagenet model is not giving good results.

Solution: Make sure the correct -proto_file is selected. Also make sure the correct parameters for -content_layers and -style_layers are set. (See OpenCL usage example above.)

Problem: -backend cudnn is slower than default NN backend

Solution: Add the flag -cudnn_autotune; this will use the built-in cuDNN autotuner to select the best convolution algorithms.

Memory Usage

By default, neural-style uses the nn backend for convolutions and L-BFGS for optimization. These give good results, but can both use a lot of memory. You can reduce memory usage with the following:

- Use cuDNN: Add the flag -backend cudnn to use the cuDNN backend. This will only work in GPU mode.
- Use ADAM: Add the flag -optimizer adam to use ADAM instead of L-BFGS. This should significantly reduce memory usage, but may require tuning of other parameters for good results; in particular you should play with the learning rate, content weight, style weight, and also consider using gradient normalization. This should work in both CPU and GPU modes.
- Reduce image size: If the above tricks are not enough, you can reduce the size of the generated image; pass the flag -image_size 256 to generate an image at half the default size.

With the default settings, neural-style uses about 3.5GB of GPU memory on my system; switching to ADAM and cuDNN reduces the GPU memory footprint to about 1GB.
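The memory-saving flags can be combined in one invocation. The Python sketch below only assembles the command line and does not run anything; the function name build_command and the low_memory switch are hypothetical conveniences, while the flags themselves are the ones described in this section.

```python
import shlex

def build_command(style, content, low_memory=False):
    """Assemble the argument list for neural_style.lua without executing it."""
    cmd = ["th", "neural_style.lua", "-style_image", style, "-content_image", content]
    if low_memory:
        # cuDNN only works in GPU mode; ADAM usually needs retuned weights
        # and learning rate; 256 is half the default image size.
        cmd += ["-backend", "cudnn", "-optimizer", "adam", "-image_size", "256"]
    return cmd

cmd = build_command("style.jpg", "content.jpg", low_memory=True)
print(" ".join(shlex.quote(arg) for arg in cmd))
```

The returned list can be handed to subprocess.run if you want to launch the job from Python.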

Speed

Speed can vary a lot depending on the backend and the optimizer.

Here are some times for running 500 iterations with -image_size=512 on a Maxwell Titan X with different settings:

- -backend nn -optimizer lbfgs: 62 seconds
- -backend nn -optimizer adam: 49 seconds
- -backend cudnn -optimizer lbfgs: 79 seconds
- -backend cudnn -cudnn_autotune -optimizer lbfgs: 58 seconds
- -backend cudnn -cudnn_autotune -optimizer adam: 44 seconds
- -backend clnn -optimizer lbfgs: 169 seconds
- -backend clnn -optimizer adam: 106 seconds

Here are the same benchmarks on a Pascal Titan X with cuDNN 5.0 on CUDA 8.0 RC:

- -backend nn -optimizer lbfgs: 43 seconds
- -backend nn -optimizer adam: 36 seconds
- -backend cudnn -optimizer lbfgs: 45 seconds
- -backend cudnn -cudnn_autotune -optimizer lbfgs: 30 seconds
- -backend cudnn -cudnn_autotune -optimizer adam: 22 seconds
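To compare the two cards directly, the timings listed above can be turned into speedup ratios. The short Python sketch below uses only those figures; the dictionary keys are shorthand labels chosen for this example.

```python
# Seconds for 500 iterations at -image_size=512, copied from the benchmarks above.
maxwell = {"nn lbfgs": 62, "nn adam": 49, "cudnn lbfgs": 79,
           "cudnn autotune lbfgs": 58, "cudnn autotune adam": 44}
pascal = {"nn lbfgs": 43, "nn adam": 36, "cudnn lbfgs": 45,
          "cudnn autotune lbfgs": 30, "cudnn autotune adam": 22}

# Ratio of Maxwell time to Pascal time for each shared configuration.
speedup = {name: maxwell[name] / pascal[name] for name in pascal}
for name, ratio in speedup.items():
    print(f"{name}: {ratio:.2f}x faster on Pascal")
```

On these numbers the cuDNN configurations gain the most from the newer card, with the autotuned ADAM run taking half the time.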

Multi-GPU scaling

You can use multiple GPUs to process images at higher resolutions; different layers of the network will be computed on different GPUs. You can control which GPUs are used with the -gpu flag, and you can control how to split layers across GPUs using the -multigpu_strategy flag.

For example in a server with four GPUs, you can give the flag -gpu 0,1,2,3 to process on GPUs 0, 1, 2, and 3 in that order; by also giving the flag -multigpu_strategy 3,6,12 you indicate that the first two layers should be computed on GPU 0, layers 3 to 5 should be computed on GPU 1, layers 6 to 11 should be computed on GPU 2, and the remaining layers should be computed on GPU 3. You will need to tune the -multigpu_strategy for your setup in order to achieve maximal resolution.
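The layer-to-GPU mapping in this example can be written down as a few lines of Python. This is only a sketch of the rule as described in the text, with 1-indexed layers; the function name gpu_for_layer is hypothetical and this is not the code neural-style itself uses.

```python
from bisect import bisect_right

def gpu_for_layer(layer, gpus, strategy):
    """Return the GPU id for a 1-indexed layer, given split points such as [3, 6, 12].

    Each split point is the first layer that moves to the next GPU in the list.
    """
    if len(strategy) != len(gpus) - 1:
        raise ValueError("need one split point fewer than the number of GPUs")
    return gpus[bisect_right(strategy, layer)]

gpus, strategy = [0, 1, 2, 3], [3, 6, 12]
for layer in (1, 2, 3, 5, 6, 11, 12, 20):
    print("layer", layer, "-> GPU", gpu_for_layer(layer, gpus, strategy))
```

Moving a split point earlier shifts work onto the later GPU, which is the knob to adjust when one card runs out of memory before the others.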

We can achieve very high quality results at high resolution by combining multi-GPU processing with multiscale generation as described in the paper Controlling Perceptual Factors in Neural Style Transfer by Leon A. Gatys, Alexander S. Ecker, Matthias Bethge, Aaron Hertzmann and Eli Shechtman.

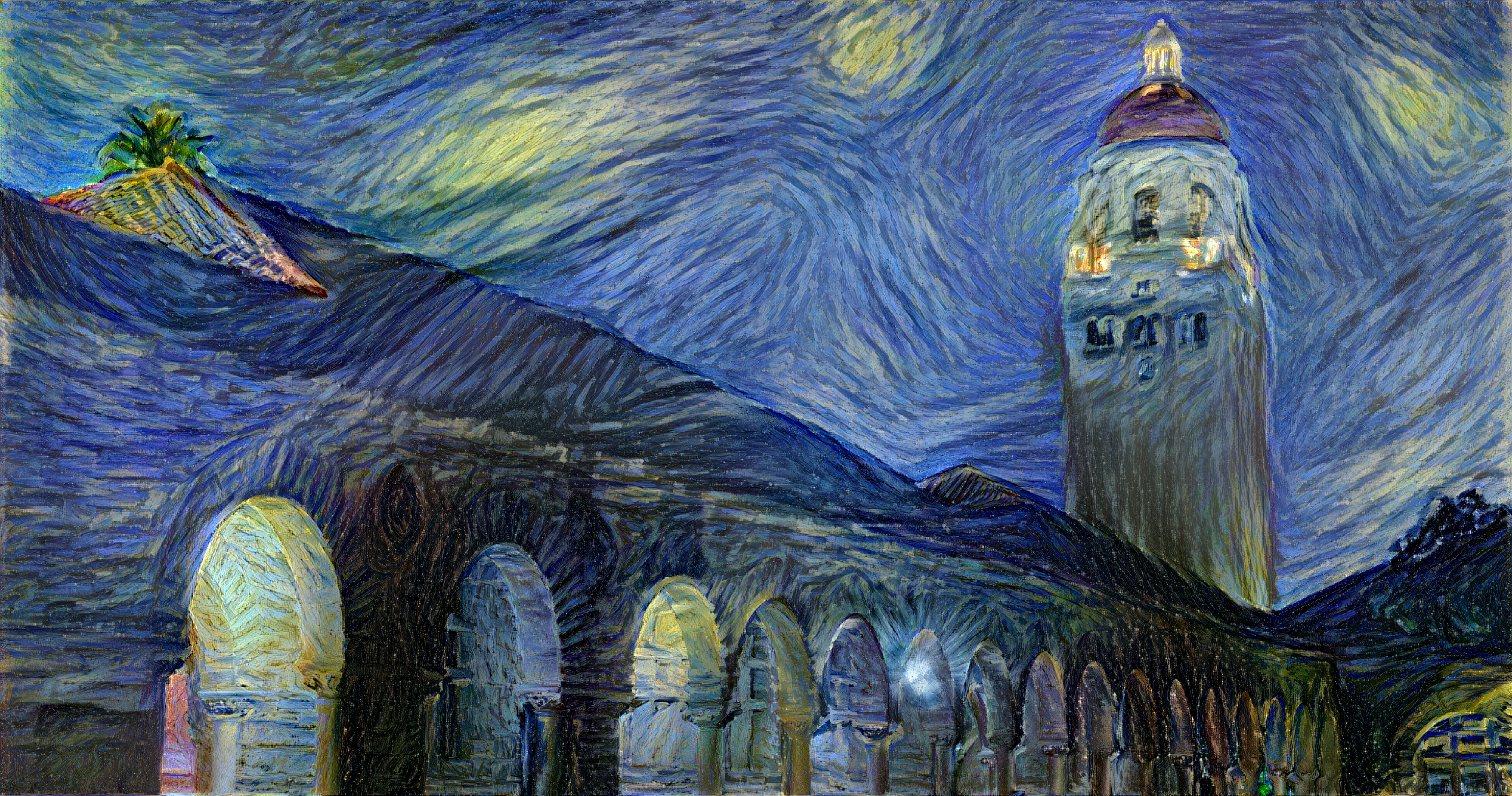

Here is a 3620 x 1905 image generated on a server with four Pascal Titan X GPUs:

The script used to generate this image can be found here.
