python3编写网络爬虫22-爬取知乎用户信息

思路

选定起始人 选一个关注数或者粉丝数多的大V作为爬虫起始点

获取粉丝和关注列表 通过知乎接口获得该大V的粉丝列表和关注列表

获取列表用户信息 获取列表每个用户的详细信息

获取每个用户的粉丝和关注 进一步对列表中的每个用户 获取他们的粉丝和关注列表实现递归爬取

起始点 https://www.zhihu.com/people/excited-vczh/answers

抓取信息

个人信息

关注列表 ajax请求

代码实现

./items.py文件

# -*- coding: utf-8 -*- # Define here the models for your scraped items

#

# See documentation in:

# http://doc.scrapy.org/en/latest/topics/items.html from scrapy import Item, Field class UserItem(Item):

# define the fields for your item here like:

id = Field()

name = Field()

avatar_url = Field()

headline = Field()

description = Field()

url = Field()

url_token = Field()

gender = Field()

cover_url = Field()

type = Field()

badge = Field() answer_count = Field()

articles_count = Field()

commercial_question_count = Field()

favorite_count = Field()

favorited_count = Field()

follower_count = Field()

following_columns_count = Field()

following_count = Field()

pins_count = Field()

question_count = Field()

thank_from_count = Field()

thank_to_count = Field()

thanked_count = Field()

vote_from_count = Field()

vote_to_count = Field()

voteup_count = Field()

following_favlists_count = Field()

following_question_count = Field()

following_topic_count = Field()

marked_answers_count = Field()

mutual_followees_count = Field()

hosted_live_count = Field()

participated_live_count = Field() locations = Field()

educations = Field()

employments = Field()

./middlewares.py文件

# -*- coding: utf-8 -*- # Define here the models for your spider middleware

#

# See documentation in:

# http://doc.scrapy.org/en/latest/topics/spider-middleware.html from scrapy import signals class ZhihuSpiderMiddleware(object):

# Not all methods need to be defined. If a method is not defined,

# scrapy acts as if the spider middleware does not modify the

# passed objects. @classmethod

def from_crawler(cls, crawler):

# This method is used by Scrapy to create your spiders.

s = cls()

crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)

return s def process_spider_input(response, spider):

# Called for each response that goes through the spider

# middleware and into the spider. # Should return None or raise an exception.

return None def process_spider_output(response, result, spider):

# Called with the results returned from the Spider, after

# it has processed the response. # Must return an iterable of Request, dict or Item objects.

for i in result:

yield i def process_spider_exception(response, exception, spider):

# Called when a spider or process_spider_input() method

# (from other spider middleware) raises an exception. # Should return either None or an iterable of Response, dict

# or Item objects.

pass def process_start_requests(start_requests, spider):

# Called with the start requests of the spider, and works

# similarly to the process_spider_output() method, except

# that it doesn’t have a response associated. # Must return only requests (not items).

for r in start_requests:

yield r def spider_opened(self, spider):

spider.logger.info('Spider opened: %s' % spider.name)

./pipelines.py文件

# -*- coding: utf-8 -*- # Define your item pipelines here

#

# Don't forget to add your pipeline to the ITEM_PIPELINES setting

# See: http://doc.scrapy.org/en/latest/topics/item-pipeline.html

import pymongo class ZhihuPipeline(object):

def process_item(self, item, spider):

return item class MongoPipeline(object):

collection_name = 'users' def __init__(self, mongo_uri, mongo_db):

self.mongo_uri = mongo_uri

self.mongo_db = mongo_db @classmethod

def from_crawler(cls, crawler):

return cls(

mongo_uri=crawler.settings.get('MONGO_URI'),

mongo_db=crawler.settings.get('MONGO_DATABASE')

) def open_spider(self, spider):

self.client = pymongo.MongoClient(self.mongo_uri)

self.db = self.client[self.mongo_db] def close_spider(self, spider):

self.client.close() def process_item(self, item, spider):

self.db[self.collection_name].update({'url_token': item['url_token']}, dict(item), True)

return item

./settings.py文件

# -*- coding: utf-8 -*- # Scrapy settings for zhihuuser project

#

# For simplicity, this file contains only settings considered important or

# commonly used. You can find more settings consulting the documentation:

#

# http://doc.scrapy.org/en/latest/topics/settings.html

# http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html

# http://scrapy.readthedocs.org/en/latest/topics/spider-middleware.html BOT_NAME = 'zhihuuser' SPIDER_MODULES = ['zhihuuser.spiders']

NEWSPIDER_MODULE = 'zhihuuser.spiders' # Crawl responsibly by identifying yourself (and your website) on the user-agent

# USER_AGENT = 'zhihu (+http://www.yourdomain.com)' # Obey robots.txt rules

ROBOTSTXT_OBEY = False # Configure maximum concurrent requests performed by Scrapy (default: 16)

# CONCURRENT_REQUESTS = 32 # Configure a delay for requests for the same website (default: 0)

# See http://scrapy.readthedocs.org/en/latest/topics/settings.html#download-delay

# See also autothrottle settings and docs

# DOWNLOAD_DELAY = 3

# The download delay setting will honor only one of:

# CONCURRENT_REQUESTS_PER_DOMAIN = 16

# CONCURRENT_REQUESTS_PER_IP = 16 # Disable cookies (enabled by default)

# COOKIES_ENABLED = False # Disable Telnet Console (enabled by default)

# TELNETCONSOLE_ENABLED = False # Override the default request headers:

DEFAULT_REQUEST_HEADERS = {

'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_12_3) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/56.0.2924.87 Safari/537.36',

'authorization': 'oauth c3cef7c66a1843f8b3a9e6a1e3160e20',

} # Enable or disable spider middlewares

# See http://scrapy.readthedocs.org/en/latest/topics/spider-middleware.html

# SPIDER_MIDDLEWARES = {

# 'zhihuuser.middlewares.ZhihuSpiderMiddleware': 543,

# } # SPIDER_MIDDLEWARES = {

# 'scrapy_splash.SplashDeduplicateArgsMiddleware': 100,

# } # Enable or disable downloader middlewares

# See http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html

# DOWNLOADER_MIDDLEWARES = {

# 'zhihuuser.middlewares.MyCustomDownloaderMiddleware': 543,

# } # DOWNLOADER_MIDDLEWARES = {

# 'scrapy_splash.SplashCookiesMiddleware': 723,

# 'scrapy_splash.SplashMiddleware': 725,

# 'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware': 810,

# } # Enable or disable extensions

# See http://scrapy.readthedocs.org/en/latest/topics/extensions.html

# EXTENSIONS = {

# 'scrapy.extensions.telnet.TelnetConsole': None,

# } # Configure item pipelines

# See http://scrapy.readthedocs.org/en/latest/topics/item-pipeline.html

ITEM_PIPELINES = {

'zhihuuser.pipelines.MongoPipeline': 300,

# 'scrapy_redis.pipelines.RedisPipeline': 301

} # Enable and configure the AutoThrottle extension (disabled by default)

# See http://doc.scrapy.org/en/latest/topics/autothrottle.html

# AUTOTHROTTLE_ENABLED = True

# The initial download delay

# AUTOTHROTTLE_START_DELAY = 5

# The maximum download delay to be set in case of high latencies

# AUTOTHROTTLE_MAX_DELAY = 60

# The average number of requests Scrapy should be sending in parallel to

# each remote server

# AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0

# Enable showing throttling stats for every response received:

# AUTOTHROTTLE_DEBUG = False # Enable and configure HTTP caching (disabled by default)

# See http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings

# HTTPCACHE_ENABLED = True

# HTTPCACHE_EXPIRATION_SECS = 0

# HTTPCACHE_DIR = 'httpcache'

# HTTPCACHE_IGNORE_HTTP_CODES = []

# HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage' # DUPEFILTER_CLASS = 'scrapy_splash.SplashAwareDupeFilter'

# HTTPCACHE_STORAGE = 'scrapy_splash.SplashAwareFSCacheStorage' # SPLASH_URL = 'http://192.168.99.100:8050' MONGO_URI = 'localhost'

MONGO_DATABASE = 'zhihu' # SCHEDULER = "scrapy_redis.scheduler.Scheduler" # DUPEFILTER_CLASS = "scrapy_redis.dupefilter.RFPDupeFilter" # SCHEDULER_FLUSH_ON_START = True

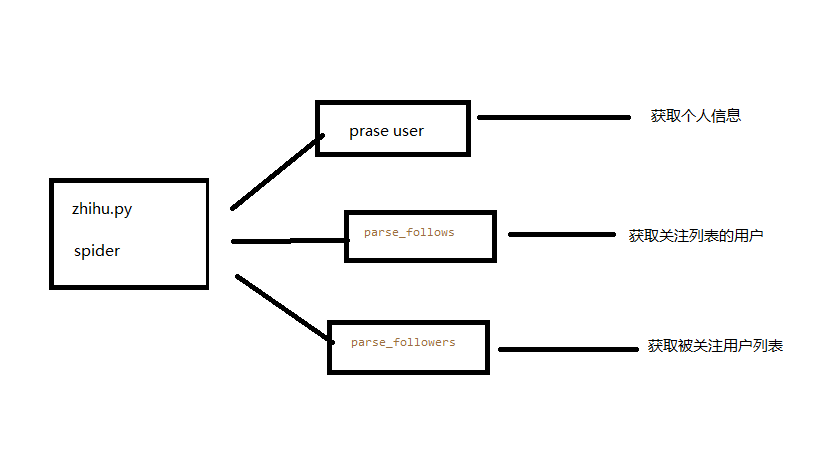

./spiders文件夹下 zhihu.py文件

# -*- coding: utf-8 -*-

import json from scrapy import Spider, Request

from zhihuuser.items import UserItem class ZhihuSpider(Spider):

name = "zhihu"

allowed_domains = ["www.zhihu.com"]

user_url = 'https://www.zhihu.com/api/v4/members/{user}?include={include}'

follows_url = 'https://www.zhihu.com/api/v4/members/{user}/followees?include={include}&offset={offset}&limit={limit}'

followers_url = 'https://www.zhihu.com/api/v4/members/{user}/followers?include={include}&offset={offset}&limit={limit}'

start_user = 'excited-vczh'

user_query = 'locations,employments,gender,educations,business,voteup_count,thanked_Count,follower_count,following_count,cover_url,following_topic_count,following_question_count,following_favlists_count,following_columns_count,answer_count,articles_count,pins_count,question_count,commercial_question_count,favorite_count,favorited_count,logs_count,marked_answers_count,marked_answers_text,message_thread_token,account_status,is_active,is_force_renamed,is_bind_sina,sina_weibo_url,sina_weibo_name,show_sina_weibo,is_blocking,is_blocked,is_following,is_followed,mutual_followees_count,vote_to_count,vote_from_count,thank_to_count,thank_from_count,thanked_count,description,hosted_live_count,participated_live_count,allow_message,industry_category,org_name,org_homepage,badge[?(type=best_answerer)].topics'

follows_query = 'data[*].answer_count,articles_count,gender,follower_count,is_followed,is_following,badge[?(type=best_answerer)].topics'

followers_query = 'data[*].answer_count,articles_count,gender,follower_count,is_followed,is_following,badge[?(type=best_answerer)].topics' def start_requests(self):

yield Request(self.user_url.format(user=self.start_user, include=self.user_query), self.parse_user)

yield Request(self.follows_url.format(user=self.start_user, include=self.follows_query, limit=20, offset=0),

self.parse_follows)

yield Request(self.followers_url.format(user=self.start_user, include=self.followers_query, limit=20, offset=0),

self.parse_followers) def parse_user(self, response):

result = json.loads(response.text)

item = UserItem() for field in item.fields:

if field in result.keys():

item[field] = result.get(field)

yield item yield Request(

self.follows_url.format(user=result.get('url_token'), include=self.follows_query, limit=20, offset=0),

self.parse_follows) yield Request(

self.followers_url.format(user=result.get('url_token'), include=self.followers_query, limit=20, offset=0),

self.parse_followers) def parse_follows(self, response):

results = json.loads(response.text) if 'data' in results.keys():

for result in results.get('data'):

yield Request(self.user_url.format(user=result.get('url_token'), include=self.user_query),

self.parse_user) if 'paging' in results.keys() and results.get('paging').get('is_end') == False:

next_page = results.get('paging').get('next')

yield Request(next_page,

self.parse_follows) def parse_followers(self, response):

results = json.loads(response.text) if 'data' in results.keys():

for result in results.get('data'):

yield Request(self.user_url.format(user=result.get('url_token'), include=self.user_query),

self.parse_user) if 'paging' in results.keys() and results.get('paging').get('is_end') == False:

next_page = results.get('paging').get('next')

yield Request(next_page,

self.parse_followers)

最后运行zhihu.py爬虫脚本 再查看MongoDB数据库中的数据 知乎用户信息数据采集就完成了。

那些步骤不理解的 欢迎下方留言

python3编写网络爬虫22-爬取知乎用户信息的更多相关文章

- python3编写网络爬虫19-app爬取

一.app爬取 前面都是介绍爬取Web网页的内容,随着移动互联网的发展,越来越多的企业并没有提供Web页面端的服务,而是直接开发了App,更多信息都是通过App展示的 App爬取相比Web端更加容易 ...

- 基于webmagic的爬虫小应用--爬取知乎用户信息

听到“爬虫”,是不是第一时间想到Python/php ? 多少想玩爬虫的Java学习者就因为语言不通而止步.Java是真的不能做爬虫吗? 当然不是. 只不过python的3行代码能解决的问题,而Jav ...

- 爬虫(十六):scrapy爬取知乎用户信息

一:爬取思路 首先我们应该找到一个账号,这个账号被关注的人和关注的人都相对比较多的,就是下图中金字塔顶端的人,然后通过爬取这个账号的信息后,再爬取他关注的人和被关注的人的账号信息,然后爬取被关注人的账 ...

- [Python爬虫] Selenium爬取新浪微博客户端用户信息、热点话题及评论 (上)

转载自:http://blog.csdn.net/eastmount/article/details/51231852 一. 文章介绍 源码下载地址:http://download.csdn.net/ ...

- 利用 Scrapy 爬取知乎用户信息

思路:通过获取知乎某个大V的关注列表和被关注列表,查看该大V和其关注用户和被关注用户的详细信息,然后通过层层递归调用,实现获取关注用户和被关注用户的关注列表和被关注列表,最终实现获取大量用户信息. 一 ...

- 第二个爬虫之爬取知乎用户回答和文章并将所有内容保存到txt文件中

自从这两天开始学爬虫,就一直想做个爬虫爬知乎.于是就开始动手了. 知乎用户动态采取的是动态加载的方式,也就是先加载一部分的动态,要一直滑道底才会加载另一部分的动态.要爬取全部的动态,就得先获取全部的u ...

- Srapy 爬取知乎用户信息

今天用scrapy框架爬取一下所有知乎用户的信息.道理很简单,找一个知乎大V(就是粉丝和关注量都很多的那种),找到他的粉丝和他关注的人的信息,然后分别再找这些人的粉丝和关注的人的信息,层层递进,这样下 ...

- 爬虫实战--利用Scrapy爬取知乎用户信息

思路: 主要逻辑图:

- python3编写网络爬虫16-使用selenium 爬取淘宝商品信息

一.使用selenium 模拟浏览器操作爬取淘宝商品信息 之前我们已经成功尝试分析Ajax来抓取相关数据,但是并不是所有页面都可以通过分析Ajax来完成抓取.比如,淘宝,它的整个页面数据确实也是通过A ...

随机推荐

- .NET CORE 实践(2)--对Ubuntu下安装SDK的记录

根据官网Ubuntu安装SDK操作如下: allen@allen-Virtual-Machine:~$ sudo apt-key adv --keyserver apt-mo.trafficmanag ...

- “每日一道面试题”.Net中所有类的基类是以及包含的方法

闲来无事,每日一贴.水平有限,大牛勿喷. .Net中所有内建类型的基类是System.Object毋庸置疑 Puclic Class A{}和 Public Class A:System.Object ...

- Transact-SQL解析和基本的实用语句

SQL语言 DDL(数据定义语句) DML(数据操作语句) DCL(数据控制语句) DDL 数据定义 操作对象 操作方式 创建 删除 修改 模式 CREATE SCHEMA DROP SCHEMA 表 ...

- [android] android下创建一个sqlite数据库

Sqlite数据库是开源的c语言写的数据库,android和iphone都使用的这个,首先需要创建数据库,然后创建表和字段,android提供了一个api叫SQLiteOpenHelper数据库的打开 ...

- 【Java每日一题】20170222

20170221问题解析请点击今日问题下方的“[Java每日一题]20170222”查看(问题解析在公众号首发,公众号ID:weknow619) package Feb2017; import jav ...

- Could not get JDBC connection

想学习下JavaWeb,手头有2017年有活动的时候买的一本书,还是全彩的,应该很适合我这种菜鸟技术渣. 只可惜照着书搭建了一套Web环境,代码和db脚本都是拷贝的光盘里的,也反复检查了数据库的连接情 ...

- python基础学习(十三)函数进阶

目录 1. 函数参数和返回值的作用 1.1 无参数,无返回值 1.2 无参数,有返回值 1.3 有参数,无返回值 1.4 有参数,有返回值 2. 函数的返回值进阶 例子:显示当前的湿度和温度 例子:交 ...

- netty入门demo(一)

目录 前言 正文 代码部分 服务端 客服端 测试结果一: 解决粘包,拆包的问题 总结 前言 最近做一个项目: 大概需求: 多个温度传感器不断向java服务发送温度数据,该传感器采用socket发送数据 ...

- 弹性盒模型flex

一.flex flex是flexible box的缩写,意为“弹性布局”: 定义弹性布局 display:flex; box{ display:flex; } 二.基本定义 我只简单的说一下容器和项目 ...

- Django引入静态文件

在HTML文件中引入方式: 简单引入一个bootstrap中的内敛表单,效果图如下: