【Paper Reading】Deep Supervised Hashing for fast Image Retrieval

what has been done:

This paper proposed a novel Deep Supervised Hashing method to learn a compact similarity-presevering binary code for the huge body of image data.

Data sets:

CIFAR-10: 60,000 32*32 belonging to 10 mutually exclusively categories(6000 images per category)

NUS-WIDE: 269,648 from Flickr, warpped to 64*64

content based image retrieval: visually similar or semantically similar.

Traditional method: calculate the distance between the query image and the database images.

Problem: time and memory

Solution: hashing methods(map image to compact binary codes that approximately preserve the data structure in the original space)

Problem: performace depends on the features used, more suitable for dealing with the visiual similarity search rather than the sematically similarity search.

Solution: CNNs, the CNNs successful applications of CNNs in various tasks imply that the feature learned by CNNs can well capture the underlying sematic structure of images in spite of significant appearance variations.

Related works:

Locality Sensitive Hashing(LSH):use random projections to produce hashing bits

cons: requires long codes to achieve satisfactory performance.(large memory)

data-dependent hashing methods: unsupervised vs supervised

unsupervised methods: only make use of unlabelled training data to lean hash functions

- spectral hashing(SH): minimizes the weighted hamming distance of image pairs

- Iterative Quantization(ITQ): minimize the quantization error on projected image descriptors so as to allievate the information loss

supervised methods: take advantage of label inforamtion thus can preserve semantic similarity

- CCA-ITQ: an extension of iterative quantization

- predictable discriminative binary code: looks for hypeplanes that seperate categories with large margin as hash function.

- Minimal Loss Hashing(MLH): optimize upper bound of a hinge-like loss to learn the hash functions

problem: the above methods use linear projection as hash functions and can only deal with linearly seperable data.

solution: supervised hashing with kernels(KSH) and Binary Reconstructive Embedding(BRE).

Deep hashing: exploits a non-linear deep networks to produce binary code.

Problem : most hash methods relax the binary codes to real-values in optimizations and quantize the model outputs to produce binary codes. However there is no guarantee that the optimal real-valued codes are still optimal after quantization .

Solution: DIscrete Graph Hashing(DGH) and Supervided Discrete Hashing(DSH) are proposed to directly optimize the binary codes.

Problem : Use hand crafted feature and cannot capture the semantic information.

Solution: CNNs base hashing method

Our goal: similar images should be encoded to similar binary codes and the binary codes should be computed efficiently.

Loss function:

Relaxation:

Implementation details:

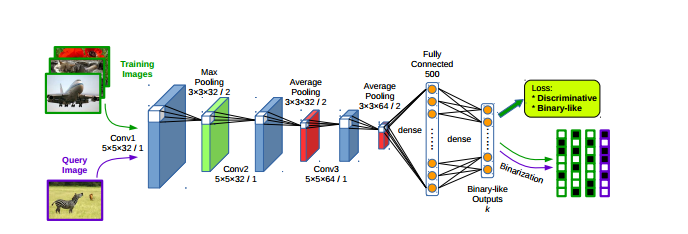

Network structure:

3*卷积层:

3*池化层:

2*全连接层:

Training methodology:

- generate images pairs online by exploiting all the image pairs in each mini-batch. Allivate the need to store the whole pair-wise similarity matrix, thus being scalable to large-scale data-sets.

- Fine-tune vs Train from scratch

Experiment:

CIFAR-10

GIST descriptors for conventional hashing methods

NUS-WIDE

225-D normalized block-wise color moment features

Evalutaion Metrics

mAP: mean Average Precision

precision-recall curves(48-bit)

mean precision within Hamming radius 2 for different code lengths

Network ensembles?

Comparison with state-of-the-art method

CNNH: trainin the model to fit pre-computed discriminative binary code. binary code generation and the network learning are isolated

CLBHC: train the model with a binary-line hidden layer as features for classification, encoding dissimilar images to similar binary code would not be punished.

DNNH: used triplet-based constraints to describe more complex semantic relations, training its networks become more diffucult due to the sigmoid non-linearlity and the parameterized piece-wise threshold function used in the output layer.

Combine binary code generation with network learning

Comparision of Encoding Time

【Paper Reading】Deep Supervised Hashing for fast Image Retrieval的更多相关文章

- 【Paper Reading】Learning while Reading

Learning while Reading 不限于具体的书,只限于知识的宽度 这个系列集合了一周所学所看的精华,它们往往来自不只一本书 我们之所以将自然界分类,组织成各种概念,并按其分类,主要是因为 ...

- 【Paper Reading】Object Recognition from Scale-Invariant Features

Paper: Object Recognition from Scale-Invariant Features Sorce: http://www.cs.ubc.ca/~lowe/papers/icc ...

- 【Paper Reading】Bayesian Face Sketch Synthesis

Contribution: 1) Systematic interpretation to existing face sketch synthesis methods. 2) Bayesian fa ...

- 【Paper Reading】Improved Textured Networks: Maximizing quality and diversity in Feed-Forward Stylization and Texture Synthesis

Improved Textured Networks: Maximizing quality and diversity in Feed-Forward Stylization and Texture ...

- 【资料总结】| Deep Reinforcement Learning 深度强化学习

在机器学习中,我们经常会分类为有监督学习和无监督学习,但是尝尝会忽略一个重要的分支,强化学习.有监督学习和无监督学习非常好去区分,学习的目标,有无标签等都是区分标准.如果说监督学习的目标是预测,那么强 ...

- 【文献阅读】Deep Residual Learning for Image Recognition--CVPR--2016

最近准备用Resnet来解决问题,于是重读Resnet的paper <Deep Residual Learning for Image Recognition>, 这是何恺明在2016-C ...

- 【文献阅读】Augmenting Supervised Neural Networks with Unsupervised Objectives-ICML-2016

一.Abstract 从近期对unsupervised learning 的研究得到启发,在large-scale setting 上,本文把unsupervised learning 与superv ...

- 【CS-4476-project 6】Deep Learning

AlexNet / VGG-F network visualized by mNeuron. Project 6: Deep LearningIntroduction to Computer Visi ...

- 【论文阅读】Deep Mixture of Diverse Experts for Large-Scale Visual Recognition

导读: 本文为论文<Deep Mixture of Diverse Experts for Large-Scale Visual Recognition>的阅读总结.目的是做大规模图像分类 ...

随机推荐

- 环境搭建Selenium2+Eclipse+Java+TestNG_(一)

第一步 安装JDK 第二步 下载Eclipse 第三步 在Eclipse中安装TestNG 第四步 下载Selenium IDE.SeleniumRC.IEDriverServer 第五步 下载Fi ...

- 不可靠信号SIGCHLD丢失的问题

如果采用 void sig_chld(int signo) { pid_t pid; int stat; while((pid = waitp ...

- HDU 2815

特判B不能大于等于C 高次同余方程 #include <iostream> #include <cstdio> #include <cstring> #includ ...

- Unity3D 人形血条制作小知识

这几天用Unity3D做个射击小游戏,想做个人形的血条.百思不得其解,后来问了网上的牛牛们,攻克了,事实上挺简单的,GUI里面有个函数DrawTextureWithTexCoords就能够实现图片的裁 ...

- 【解决】run-as: Package '' is unknown

问题: [2014-07-30 20:20:25 - nativeSensorStl] gdbserver output: [2014-07-30 20:20:25 - nativeSensorStl ...

- Android中XML解析,保存的三种方法

简单介绍 在Android开发中,关于XML解析有三种方式,各自是: SAX 基于事件的解析器.解析速度快.占用内存少.非常适合在Android移动设备中使用. DOM 在内存中以树形结构存放,因此检 ...

- COCOS2D-X 动作 CCSequence动作序列

CCSequence继承于CCActionInterval类,CCActionInterval类为延时动作类,即动作在运行过程中有一个过程. CCSequence中的重要方法: static CCSe ...

- Oracle Hint的用法

1. /*+ALL_ROWS*/ 表明对语句块选择基于开销的优化方法,并获得最佳吞吐量,使资源消耗最小化. 例如: SELECT /*+ALL+_ROWS*/ EMP_NO,EMP_NAM,DAT_I ...

- 项目中解决实际问题的代码片段-javascript方法,Vue方法(长期更新)

总结项目用到的一些处理方法,用来解决数据处理的一些实际问题,所有方法都可以放在一个公共工具方法里面,实现不限ES5,ES6还有些Vue处理的方法. 都是项目中来的,有代码跟图片展示,长期更新. 1.获 ...

- 三种启动SQLSERVER服务的方法(启动sqlserver服务器,先要启动sqlserver服务)

1.后台启动 计算机-管理-服务和应用程序 2.SQL SERVER配置管理器 3.在运行窗口中使用命令进行启动: