spark 笔记 8: Stage

/**

* A stage is a set of independent tasks all computing the same function that need to run as part

* of a Spark job, where all the tasks have the same shuffle dependencies. Each DAG of tasks run

* by the scheduler is split up into stages at the boundaries where shuffle occurs, and then the

* DAGScheduler runs these stages in topological order.

*

* Each Stage can either be a shuffle map stage, in which case its tasks' results are input for

* another stage, or a result stage, in which case its tasks directly compute the action that

* initiated a job (e.g. count(), save(), etc). For shuffle map stages, we also track the nodes

* that each output partition is on.

*

* Each Stage also has a jobId, identifying the job that first submitted the stage. When FIFO

* scheduling is used, this allows Stages from earlier jobs to be computed first or recovered

* faster on failure.

*

* The callSite provides a location in user code which relates to the stage. For a shuffle map

* stage, the callSite gives the user code that created the RDD being shuffled. For a result

* stage, the callSite gives the user code that executes the associated action (e.g. count()).

*

* A single stage can consist of multiple attempts. In that case, the latestInfo field will

* be updated for each attempt.

*

*/

private[spark] class Stage(

val id: Int,

val rdd: RDD[_],

val numTasks: Int,

val shuffleDep: Option[ShuffleDependency[_, _, _]], // Output shuffle if stage is a map stage

val parents: List[Stage],

val jobId: Int,

val callSite: CallSite)

extends Logging {

val isShuffleMap = shuffleDep.isDefined

val numPartitions = rdd.partitions.size

val outputLocs = Array.fill[List[MapStatus]](numPartitions)(Nil)

var numAvailableOutputs = 0

/** Set of jobs that this stage belongs to. */

val jobIds = new HashSet[Int]

/** For stages that are the final (consists of only ResultTasks), link to the ActiveJob. */

var resultOfJob: Option[ActiveJob] = None

var pendingTasks = new HashSet[Task[_]]

def addOutputLoc(partition: Int, status: MapStatus) {

/**

* Result returned by a ShuffleMapTask to a scheduler. Includes the block manager address that the

* task ran on as well as the sizes of outputs for each reducer, for passing on to the reduce tasks.

* The map output sizes are compressed using MapOutputTracker.compressSize.

*/

private[spark] class MapStatus(var location: BlockManagerId, var compressedSizes: Array[Byte])

spark 笔记 8: Stage的更多相关文章

- spark笔记 环境配置

spark笔记 spark简介 saprk 有六个核心组件: SparkCore.SparkSQL.SparkStreaming.StructedStreaming.MLlib,Graphx Spar ...

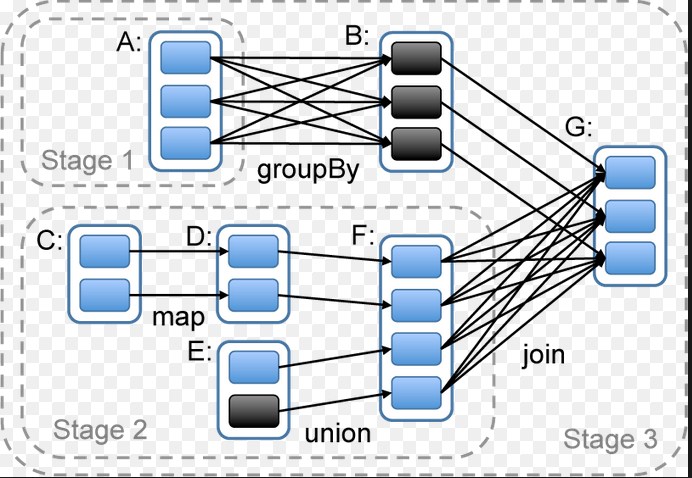

- Spark 资源调度包 stage 类解析

spark 资源调度包 Stage(阶段) 类解析 Stage 概念 Spark 任务会根据 RDD 之间的依赖关系, 形成一个DAG有向无环图, DAG会被提交给DAGScheduler, DAGS ...

- spark 笔记 15: ShuffleManager,shuffle map两端的stage/task的桥梁

无论是Hadoop还是spark,shuffle操作都是决定其性能的重要因素.在不能减少shuffle的情况下,使用一个好的shuffle管理器也是优化性能的重要手段. ShuffleManager的 ...

- spark 笔记 13: 再看DAGScheduler,stage状态更新流程

当某个task完成后,某个shuffle Stage X可能已完成,那么就可能会一些仅依赖Stage X的Stage现在可以执行了,所以要有响应task完成的状态更新流程. ============= ...

- 大数据学习——spark笔记

变量的定义 val a: Int = 1 var b = 2 方法和函数 区别:函数可以作为参数传递给方法 方法: def test(arg: Int): Int=>Int ={ 方法体 } v ...

- spark 笔记 16: BlockManager

先看一下原理性的文章:http://jerryshao.me/architecture/2013/10/08/spark-storage-module-analysis/ ,http://jerrys ...

- spark 笔记 9: Task/TaskContext

DAGScheduler最终创建了task set,并提交给了taskScheduler.那先得看看task是怎么定义和执行的. Task是execution执行的一个单元. Task: execut ...

- spark 笔记 7: DAGScheduler

在前面的sparkContex和RDD都可以看到,真正的计算工作都是同过调用DAGScheduler的runjob方法来实现的.这是一个很重要的类.在看这个类实现之前,需要对actor模式有一点了解: ...

- spark 笔记 2: Resilient Distributed Datasets: A Fault-Tolerant Abstraction for In-Memory Cluster Computing

http://www.cs.berkeley.edu/~matei/papers/2012/nsdi_spark.pdf ucb关于spark的论文,对spark中核心组件RDD最原始.本质的理解, ...

随机推荐

- 25个免费的jQuery/ JavaScript的图表和图形库

1. JS Charts Features Prepare your chart data in XML, JSON or JavaScript Array Create charts in dif ...

- Halide安装指南release版本

Halide安装指南 本版本针对Halide release版本 by jourluohua 使用的是Ubuntu 16.04 64位系统默认Gcc 4.6 由于halide中需要C++ 11中的特性 ...

- Ubuntu 部署Python开发环境

一.开发环境包安装 sudo apt-get install git-core sudo apt-get install libxml2-dev sudo apt-get install libxsl ...

- Linux系统层级结构标准

Linux Foundation有一套标准规范: FHS: Filesystem Hierarchy[‘haɪərɑːkɪ] Standard(文件系统层级标准)目前最新的标准是2.3版本:http: ...

- mysql事务,视图,触发器,存储过程与备份

.事务 通俗的说,事务指一组操作,要么都执行成功,要么都执行失败 思考: 我去银行给朋友汇款, 我卡上有1000元, 朋友卡上1000元, 我给朋友转账100元(无手续费), 如果,我的钱刚扣,而朋友 ...

- Android 热修复 Tinker platform 中的坑,以及详细步骤(二)

操作流程: 一.注册平台账号: http://www.tinkerpatch.com 二.查看操作文档: http://www.tinkerpatch.com/Docs/SDK 参考文档: https ...

- MyEclipse使用教程:使用工作集组织工作区

[MyEclipse CI 2019.4.0安装包下载] 工作集允许您通过过滤掉不关注的项目来组织项目视图.激活工作集时,只有分配给它的项目才会显示在项目视图中. 如果您的视图中有大量项目,这将非常有 ...

- 利用java8新特性,用简洁高效的代码来实现一些数据处理

定义1个Apple对象: public class Apple { private Integer id; private String name; private BigDecim ...

- linux学习:【第4篇】之nginx

一.知识点回顾 临时:关闭当前正在运行的 /etc/init.d/iptables stop 永久:关闭开机自启动 chkonfig iptables off ll /var/log/secure # ...

- 清空DataGridView

DataTable dt = (DataTable)dgv.DataSource; dt.Rows.Clear(); dgv.DataSource = dt;