HiBench成长笔记——(4) HiBench测试Spark SQL

很多内容之前的博客已经提过,这里不再赘述,详细内容参照本系列前面的博客:https://www.cnblogs.com/ratels/p/10970905.html 和 https://www.cnblogs.com/ratels/p/10976060.html

执行脚本

bin/workloads/sql/scan/prepare/prepare.sh

返回信息

[root@node1 prepare]# ./prepare.sh patching args= Parsing conf: /home/cf/app/HiBench-master/conf/hadoop.conf Parsing conf: /home/cf/app/HiBench-master/conf/hibench.conf Parsing conf: /home/cf/app/HiBench-master/conf/spark.conf Parsing conf: /home/cf/app/HiBench-master/conf/workloads/sql/scan.conf probe -.cdh5./lib/hadoop/../../jars/hadoop-mapreduce-client-jobclient--cdh5.14.2-tests.jar start HadoopPrepareScan bench hdfs -.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn fs -rm -r -skipTrash hdfs://node1:8020/HiBench/Scan/Input rm: `hdfs://node1:8020/HiBench/Scan/Input': No such file or directory Pages:, USERVISITS: Submit MapReduce Job: /opt/cloudera/parcels/CDH--.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn jar /home/cf/app/HiBench-master/autogen/target/autogen-7.1-SNAPSHOT-jar-with-dependencies.jar HiBench.DataGen -t hive -b hdfs://node1:8020/HiBench/Scan -n Input -m 8 -r 8 -p 120 -v 1000 -o sequence // :: INFO HiBench.HiveData: Closing hive data generator... finish HadoopPrepareScan bench

执行脚本

bin/workloads/sql/scan/spark/run.sh

返回信息

[root@node1 spark]# ./run.sh patching args= Parsing conf: /home/cf/app/HiBench-master/conf/hadoop.conf Parsing conf: /home/cf/app/HiBench-master/conf/hibench.conf Parsing conf: /home/cf/app/HiBench-master/conf/spark.conf Parsing conf: /home/cf/app/HiBench-master/conf/workloads/sql/scan.conf probe -.cdh5./lib/hadoop/../../jars/hadoop-mapreduce-client-jobclient--cdh5.14.2-tests.jar start ScalaSparkScan bench hdfs -.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn fs -rm -r -skipTrash hdfs://node1:8020/HiBench/Scan/Output rm: `hdfs://node1:8020/HiBench/Scan/Output': No such file or directory Export env: SPARKBENCH_PROPERTIES_FILES=/home/cf/app/HiBench-master/report/scan/spark/conf/sparkbench/sparkbench.conf Export env: HADOOP_CONF_DIR=/etc/hadoop/conf.cloudera.yarn Submit Spark job: /opt/cloudera/parcels/CDH--.cdh5./lib/spark/bin/spark-submit --properties- --executor-cores --executor-memory 4g /home/cf/app/HiBench-master/sparkbench/assembly/target/sparkbench-assembly-7.1-SNAPSHOT-dist.jar ScalaScan /home/cf/app/HiBench-master/report/scan/spark/conf/../rankings_uservisits_scan.hive // :: INFO CuratorFrameworkSingleton: Closing ZooKeeper client. hdfs -.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn fs -du -s hdfs://node1:8020/HiBench/Scan/Output finish ScalaSparkScan bench

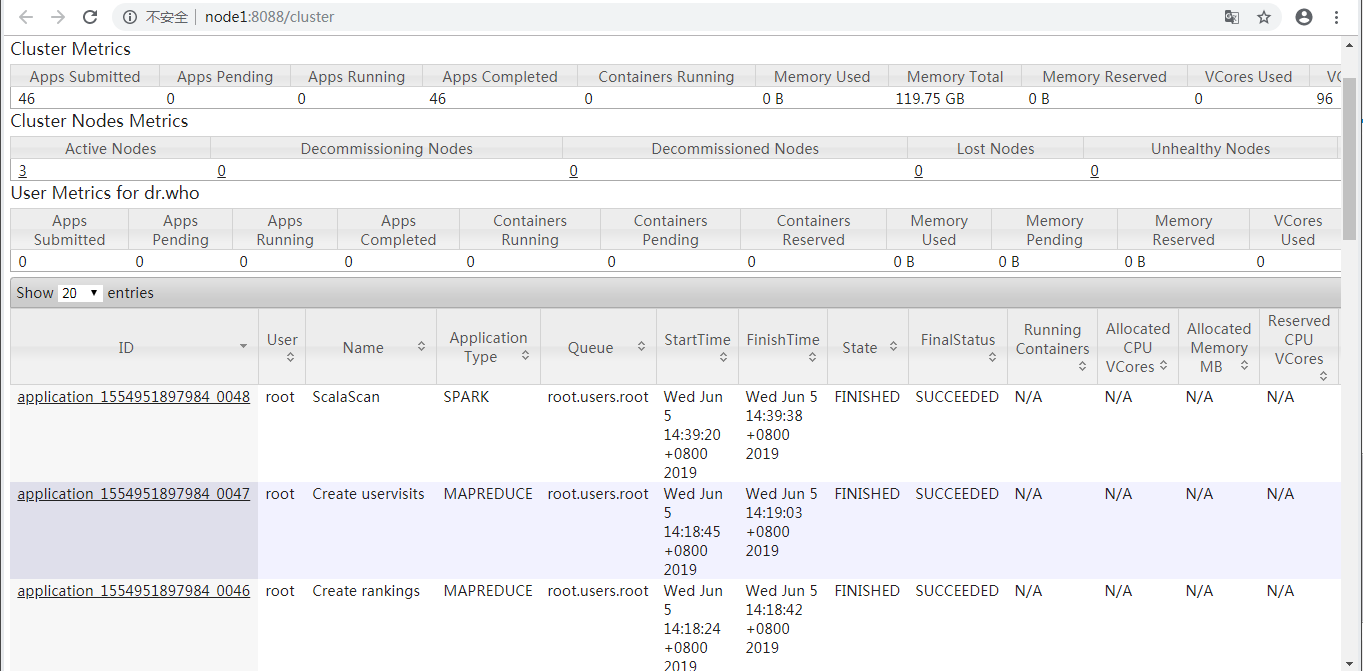

查看ResourceManager Web UI

prepare.sh启动了application_1554951897984_0047和application_1554951897984_0046两个MAPREDUCE任务,run.sh启动了application_1554951897984_0048这个Spark任务。

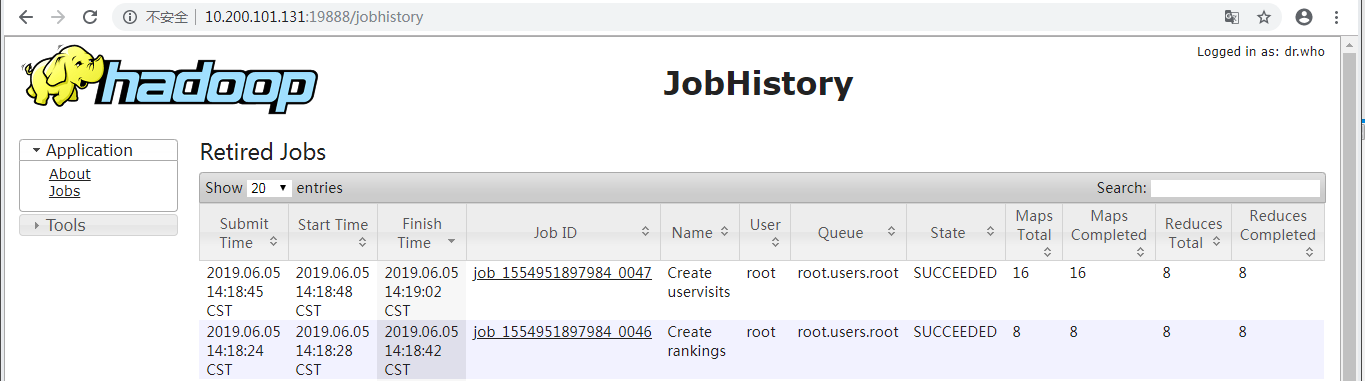

查看(Hadoop)HistoryServer Web UI

显示了prepare.sh启动的application_1554951897984_0047和application_1554951897984_0046两个MAPREDUCE任务。

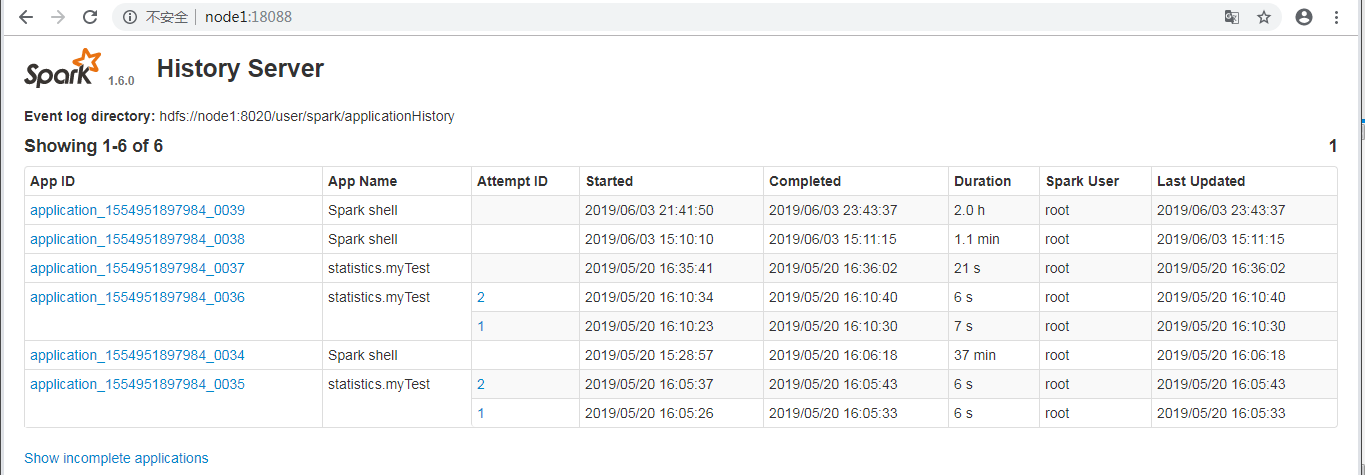

查看(Spark) History Server Web UI

并未显示run.sh启动的application_1554951897984_0048这个Spark任务。

执行脚本

bin/workloads/sql/join/prepare/prepare.sh

返回信息

[root@node1 prepare]# ./prepare.sh patching args= Parsing conf: /home/cf/app/HiBench-master/conf/hadoop.conf Parsing conf: /home/cf/app/HiBench-master/conf/hibench.conf Parsing conf: /home/cf/app/HiBench-master/conf/spark.conf Parsing conf: /home/cf/app/HiBench-master/conf/workloads/sql/join.conf probe -.cdh5./lib/hadoop/../../jars/hadoop-mapreduce-client-jobclient--cdh5.14.2-tests.jar start HadoopPrepareJoin bench hdfs -.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn fs -rm -r -skipTrash hdfs://node1:8020/HiBench/Join/Input rm: `hdfs://node1:8020/HiBench/Join/Input': No such file or directory Pages:, USERVISITS: Submit MapReduce Job: /opt/cloudera/parcels/CDH--.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn jar /home/cf/app/HiBench-master/autogen/target/autogen-7.1-SNAPSHOT-jar-with-dependencies.jar HiBench.DataGen -t hive -b hdfs://node1:8020/HiBench/Join -n Input -m 8 -r 8 -p 120 -v 1000 -o sequence // :: INFO HiBench.HiveData: Closing hive data generator... finish HadoopPrepareJoin bench

执行脚本

bin/workloads/sql/join/spark/run.sh

返回信息

[root@node1 spark]# ./run.sh patching args= Parsing conf: /home/cf/app/HiBench-master/conf/hadoop.conf Parsing conf: /home/cf/app/HiBench-master/conf/hibench.conf Parsing conf: /home/cf/app/HiBench-master/conf/spark.conf Parsing conf: /home/cf/app/HiBench-master/conf/workloads/sql/join.conf probe -.cdh5./lib/hadoop/../../jars/hadoop-mapreduce-client-jobclient--cdh5.14.2-tests.jar start ScalaSparkJoin bench hdfs -.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn fs -du -s hdfs://node1:8020/HiBench/Join/Input hdfs -.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn fs -rm -r -skipTrash hdfs://node1:8020/HiBench/Join/Output rm: `hdfs://node1:8020/HiBench/Join/Output': No such file or directory Export env: SPARKBENCH_PROPERTIES_FILES=/home/cf/app/HiBench-master/report/join/spark/conf/sparkbench/sparkbench.conf Export env: HADOOP_CONF_DIR=/etc/hadoop/conf.cloudera.yarn Submit Spark job: /opt/cloudera/parcels/CDH--.cdh5./lib/spark/bin/spark-submit --properties- --executor-cores --executor-memory 4g /home/cf/app/HiBench-master/sparkbench/assembly/target/sparkbench-assembly-7.1-SNAPSHOT-dist.jar ScalaJoin /home/cf/app/HiBench-master/report/join/spark/conf/../rankings_uservisits_join.hive // :: INFO remote.RemoteActorRefProvider$RemotingTerminator: Remote daemon shut down; proceeding with flushing remote transports. finish ScalaSparkJoin bench

执行脚本

bin/workloads/sql/aggregation/prepare/prepare.sh

返回信息

[root@node1 prepare]# ./prepare.sh patching args= Parsing conf: /home/cf/app/HiBench-master/conf/hadoop.conf Parsing conf: /home/cf/app/HiBench-master/conf/hibench.conf Parsing conf: /home/cf/app/HiBench-master/conf/spark.conf Parsing conf: /home/cf/app/HiBench-master/conf/workloads/sql/aggregation.conf probe -.cdh5./lib/hadoop/../../jars/hadoop-mapreduce-client-jobclient--cdh5.14.2-tests.jar start HadoopPrepareAggregation bench hdfs -.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn fs -rm -r -skipTrash hdfs://node1:8020/HiBench/Aggregation/Input rm: `hdfs://node1:8020/HiBench/Aggregation/Input': No such file or directory Pages:, USERVISITS: Submit MapReduce Job: /opt/cloudera/parcels/CDH--.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn jar /home/cf/app/HiBench-master/autogen/target/autogen-7.1-SNAPSHOT-jar-with-dependencies.jar HiBench.DataGen -t hive -b hdfs://node1:8020/HiBench/Aggregation -n Input -m 8 -r 8 -p 120 -v 1000 -o sequence // :: INFO HiBench.HiveData: Closing hive data generator... finish HadoopPrepareAggregation bench

执行脚本

bin/workloads/sql/aggregation/spark/run.sh

返回信息

[root@node1 spark]# ./run.sh patching args= Parsing conf: /home/cf/app/HiBench-master/conf/hadoop.conf Parsing conf: /home/cf/app/HiBench-master/conf/hibench.conf Parsing conf: /home/cf/app/HiBench-master/conf/spark.conf Parsing conf: /home/cf/app/HiBench-master/conf/workloads/sql/aggregation.conf probe -.cdh5./lib/hadoop/../../jars/hadoop-mapreduce-client-jobclient--cdh5.14.2-tests.jar start ScalaSparkAggregation bench hdfs -.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn fs -rm -r -skipTrash hdfs://node1:8020/HiBench/Aggregation/Output rm: `hdfs://node1:8020/HiBench/Aggregation/Output': No such file or directory Export env: SPARKBENCH_PROPERTIES_FILES=/home/cf/app/HiBench-master/report/aggregation/spark/conf/sparkbench/sparkbench.conf Export env: HADOOP_CONF_DIR=/etc/hadoop/conf.cloudera.yarn Submit Spark job: /opt/cloudera/parcels/CDH--.cdh5./lib/spark/bin/spark-submit --properties- --executor-cores --executor-memory 4g /home/cf/app/HiBench-master/sparkbench/assembly/target/sparkbench-assembly-7.1-SNAPSHOT-dist.jar ScalaAggregation /home/cf/app/HiBench-master/report/aggregation/spark/conf/../uservisits_aggre.hive // :: INFO remote.RemoteActorRefProvider$RemotingTerminator: Remote daemon shut down; proceeding with flushing remote transports. hdfs -.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn fs -du -s hdfs://node1:8020/HiBench/Aggregation/Output finish ScalaSparkAggregation bench

参考:https://www.cnblogs.com/barneywill/p/10436299.html

HiBench成长笔记——(4) HiBench测试Spark SQL的更多相关文章

- HiBench成长笔记——(1) HiBench概述

测试分类 HiBench共计19个测试方向,可大致分为6个测试类别:分别是micro,ml(机器学习),sql,graph,websearch和streaming. 2.1 micro Benchma ...

- HiBench成长笔记——(3) HiBench测试Spark

很多内容之前的博客已经提过,这里不再赘述,详细内容参照本系列前面的博客:https://www.cnblogs.com/ratels/p/10970905.html 创建并修改配置文件conf/spa ...

- HiBench成长笔记——(6) HiBench测试结果分析

Scan Join Aggregation Scan Join Aggregation Scan Join Aggregation Scan Join Aggregation Scan Join Ag ...

- HiBench成长笔记——(5) HiBench-Spark-SQL-Scan源码分析

run.sh #!/bin/bash # Licensed to the Apache Software Foundation (ASF) under one or more # contributo ...

- HiBench成长笔记——(2) CentOS部署安装HiBench

安装Scala 使用spark-shell命令进入shell模式,查看spark版本和Scala版本: 下载Scala2.10.5 wget https://downloads.lightbend.c ...

- HiBench成长笔记——(8) 分析源码workload_functions.sh

workload_functions.sh 是测试程序的入口,粘连了监控程序 monitor.py 和 主运行程序: #!/bin/bash # Licensed to the Apache Soft ...

- HiBench成长笔记——(7) 阅读《The HiBench Benchmark Suite: Characterization of the MapReduce-Based Data Analysis》

<The HiBench Benchmark Suite: Characterization of the MapReduce-Based Data Analysis>内容精选 We th ...

- HiBench成长笔记——(10) 分析源码execute_with_log.py

#!/usr/bin/env python2 # Licensed to the Apache Software Foundation (ASF) under one or more # contri ...

- HiBench成长笔记——(9) 分析源码monitor.py

monitor.py 是主监控程序,将监控数据写入日志,并统计监控数据生成HTML统计展示页面: #!/usr/bin/env python2 # Licensed to the Apache Sof ...

随机推荐

- Centos6.X创建Oracle用户

第一步:创建数据表空间 第二步:创建临时表空间 第三步:创建用户并指定表空间 第四步:给用户授予权限 1.创建数据表空间 格式: create tablespace 表间名 datafile ‘数据文 ...

- centos7使用docker制作tomcat本地镜像

1.安装Docker 安装docker前请确认当前linux的内核版必须是3.10及以上 命令: uname -r 1).yum install -y yum-utils device-mapper ...

- 设计模式01 创建型模式 - 单例模式(Singleton Pattern)

参考 [1] 设计模式之:创建型设计模式(6种) | 博客园 [2] 单例模式的八种写法比较 | 博客园 单例模式(Singleton Pattern) 确保一个类有且仅有一个实例,并且为客户提供一 ...

- 50道SQL练习题及答案与详细分析(MySQL)

50道SQL练习题及答案与详细分析(MySQL) 网上的经典50到SQL题,经过一阵子的半抄带做,基于个人理解使用MySQL重新完成一遍,感觉个人比较喜欢用join,联合查询较少 希望与大家一起学习研 ...

- 机器学习基础系列--先验概率 后验概率 似然函数 最大似然估计(MLE) 最大后验概率(MAE) 以及贝叶斯公式的理解

目录 机器学习基础 1. 概率和统计 2. 先验概率(由历史求因) 3. 后验概率(知果求因) 4. 似然函数(由因求果) 5. 有趣的野史--贝叶斯和似然之争-最大似然概率(MLE)-最大后验概率( ...

- Airless Pump Bottle For The Rise Of Cosmetic Packaging Solutions

Airless Pump Bottle are used in the rise of cosmetic packaging solutions. According to the suppli ...

- PAT T1012 Greedy Snake

直接暴力枚举,注意每次深搜完状态的还原~ #include<bits/stdc++.h> using namespace std; ; int visit[maxn][maxn]; int ...

- 3 CSS 定位&浮动&水平对齐&组合选择符&伪类&伪元素

CSS Position(定位):元素的定位与文档流无关 static定位: HTML元素的默认值, 没有定位,元素出现在正常的流中 静态定位的元素不会受到top,bottom,left,right影 ...

- 新增6 n个骰子的点数

/* * * 面试题43:n个骰子的点数 * 把n个骰子扔在地上,所有骰子朝上一面的点数之和为s. * 输入n,打印出s的所有可能的值出现的概率. * */ #include <iostream ...

- 学习SpringMVC 文件上传 遇到的问题,403:returned a response status of 403 Forbidden ,409文件夹未找到

问题一: 409:文件夹没有创建好,找不到指定的文件夹,创建了即可. 问题二: 403:returned a response status of 403 Forbidden 我出现这个错误的原因是因 ...