(Repost) Let’s make an A3C: Theory

This article is reposted from: https://jaromiru.com/2017/02/16/lets-make-an-a3c-theory/

1. Theory

2. Implementation (TBD)

Introduction

Policy Gradient methods are an interesting family of Reinforcement Learning algorithms. They have a long history [1], but only recently have they been backed by neural networks and succeeded in high-dimensional cases. The A3C algorithm was published in 2016 and can do better than DQN with a fraction of the time and resources [2].

In this series of articles we will explain the theory behind Policy Gradient methods and the A3C algorithm, and develop a simple agent in Python.

It is highly recommended to have read at least the first Theory article from the Let’s make a DQN series, which explains the theory behind Reinforcement Learning (RL). We will also make comparisons to DQN and refer to that older series.

Background

Let’s review the RL basics. An agent exists in an environment, which evolves in discrete time steps. The agent can influence the environment by taking an action a at each time step, after which it receives a reward r and observes a new state s’. For simplification, we only consider deterministic environments. That means that taking action a in state s always results in the same state s’.

Although these high-level concepts stay the same as in the DQN case, there are some important changes in Policy Gradient (PG) methods. To understand the following, we have to make some definitions.

First, the agent’s actions are determined by a stochastic policy π(s). A stochastic policy does not output a single action, but a probability distribution over actions, with probabilities summing to 1.0. We’ll also use the notation π(a|s), which means the probability of taking action a in state s.
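To make the idea of a stochastic policy concrete, here is a minimal sketch of turning some scores into a distribution π(s) and sampling an action from it. The softmax function and the example scores are assumptions chosen for illustration; the text at this point does not prescribe how the probabilities are produced.

```python
import math
import random

def softmax(logits):
    """Turn arbitrary real-valued scores into probabilities that sum to 1."""
    m = max(logits)                      # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sample_action(probs, rng=random):
    """Draw an action index a with probability pi(a|s) = probs[a]."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]

# Hypothetical scores for 3 actions in some state s (illustrative only).
pi_s = softmax([2.0, 1.0, 0.1])
action = sample_action(pi_s, random.Random(0))
```

Calling `sample_action` repeatedly returns different actions, with frequencies that follow `pi_s`.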

For clarity, note that there is no concept of greedy policy in this case. The policy π does not maximize any value. It is simply a function of a state s, returning probabilities for all possible actions.

We will also use the concept of the expectation of some value. The expectation of a value X in a probability distribution P is:

$$\mathbb{E}_P[X] = \sum_i P_i X_i$$

where $X_i$ are all possible values of X and $P_i$ their probabilities of occurrence. It can also be viewed as a weighted average of the values $X_i$ with weights $P_i$.

The important thing here is that if we had a pool of values X, the ratio of which was given by P, and we randomly picked a number of these, we would expect their mean to be $\mathbb{E}_P[X]$. And the mean would get closer to $\mathbb{E}_P[X]$ as the number of samples rises.
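The following sketch illustrates this property: it computes the expectation as a probability-weighted sum and compares it with the mean of randomly drawn samples. The values and probabilities are made up for the example.

```python
import random

def expectation(values, probs):
    """E_P[X] = sum_i P_i * X_i, a weighted average of the values."""
    return sum(p * x for x, p in zip(values, probs))

def sample_mean(values, probs, n, rng):
    """Mean of n values drawn at random with ratios given by probs."""
    draws = rng.choices(values, weights=probs, k=n)
    return sum(draws) / n

# Illustrative values and probabilities (not from the article).
values = [1.0, 2.0, 3.0]
probs = [0.2, 0.3, 0.5]

rng = random.Random(0)
exact = expectation(values, probs)
for n in (10, 1_000, 100_000):
    print(n, sample_mean(values, probs, n, rng), "vs", exact)
```

With more samples, the printed sample mean should typically land closer to the exact expectation.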

We’ll use the concept of expectation right away. We define a value function V(s) of policy π as an expected discounted return, which can be viewed as the following recurrent definition:

$$V(s) = \mathbb{E}_{\pi(s)}\left[ r + \gamma V(s') \right]$$

where γ is the discount factor. Basically, we weight-average $r + \gamma V(s')$ over every possible action we can take in state s. Note again that there is no max, we are simply averaging.

The action-value function Q(s, a) is, on the other hand, defined plainly as:

$$Q(s, a) = r + \gamma V(s')$$

simply because the action is given and there is only one following s’.

Now, let’s define a new function A(s, a) as:

$$A(s, a) = Q(s, a) - V(s)$$

We call A(s, a) an advantage function and it expresses how good it is to take an action a in a state s compared to average. If the action a is better than average, the advantage function is positive; if worse, it is negative.
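The three functions can be worked through on a tiny example. The sketch below assumes the standard definitions V(s) = Σ_a π(a|s)·Q(s, a), Q(s, a) = r + γ·V(s’) and A(s, a) = Q(s, a) − V(s). The environment, the policy and the discount factor are hypothetical and chosen only so that the numbers are easy to check by hand.

```python
GAMMA = 0.9          # discount factor (illustrative value)
TERMINAL = 2

# Deterministic toy environment: (state, action) -> (reward, next_state).
STEP = {
    (0, 0): (0.0, 1),
    (0, 1): (1.0, TERMINAL),
    (1, 0): (2.0, TERMINAL),
    (1, 1): (0.0, TERMINAL),
}

# A fixed stochastic policy: POLICY[s][a] = pi(a|s).
POLICY = {0: [0.5, 0.5], 1: [0.5, 0.5]}

def q_value(V, s, a):
    """Q(s, a) = r + gamma * V(s') for the single successor s'."""
    r, s_next = STEP[(s, a)]
    return r + GAMMA * V[s_next]

def evaluate_policy(sweeps=50):
    """Iterate V(s) = sum_a pi(a|s) * Q(s, a) until it settles."""
    V = {0: 0.0, 1: 0.0, TERMINAL: 0.0}
    for _ in range(sweeps):
        for s, probs in POLICY.items():
            V[s] = sum(p * q_value(V, s, a) for a, p in enumerate(probs))
    return V

def advantage(V, s, a):
    """A(s, a) = Q(s, a) - V(s)."""
    return q_value(V, s, a) - V[s]

V = evaluate_policy()
A0 = [advantage(V, 0, a) for a in (0, 1)]
```

In state 0, action 1 comes out slightly better than the policy’s average, so its advantage is positive, while action 0 gets a negative one. Weighted by π(a|s), the advantages in a state cancel out.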

And last, let’s define ρ as some distribution of states, saying what the probability of being in some state is. We’ll use two notations: $\rho^{s_0}$, which gives us a distribution of starting states in the environment, and $\rho^\pi$, which gives us a distribution of states under policy π. In other words, it gives us probabilities of being in a state when following policy π.

Policy Gradient

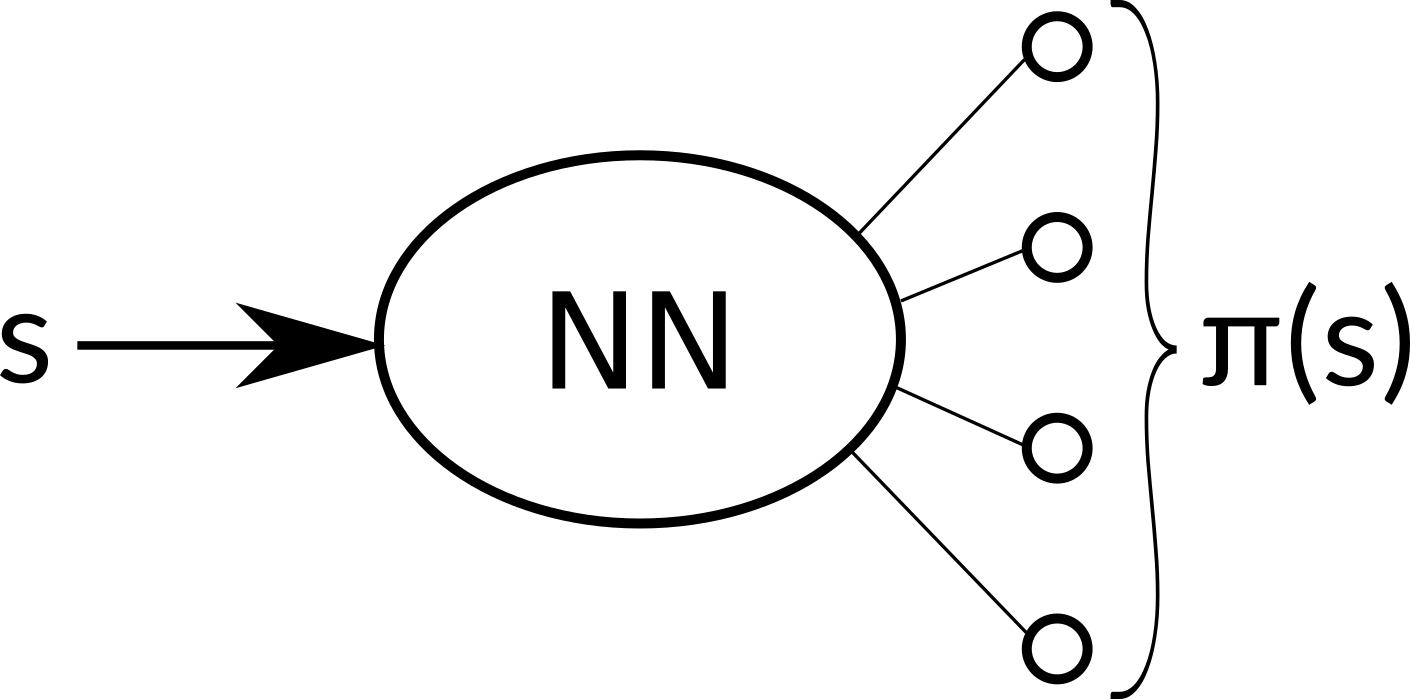

When we built the DQN agent, we used a neural network to approximate the Q(s, a) function. But now we will take a different approach. The policy π is just a function of state s, so we can approximate it directly. Our neural network with weights θ will now take a state s as an input and output an action probability distribution, $\pi_\theta$. From now on, writing π means $\pi_\theta$, a policy parametrized by the network weights θ.

In practice, we can take an action according to this distribution or simply take the action with the highest probability; both approaches have their pros and cons.

But we want the policy to get better, so how do we optimize it? First, we need some metric that will tell us how good a policy is. Let’s define a function J(π) as the discounted reward that a policy π can gain, averaged over all possible starting states $s_0$:

$$J(\pi) = \mathbb{E}_{\rho^{s_0}}\left[ V(s_0) \right]$$

We can agree that this metric truly expresses how good a policy is. The problem is that it’s hard to estimate. The good news is that we don’t have to.

What we truly care about is how to improve this quantity. If we knew the gradient of this function, it would be trivial. Surprisingly, it turns out that there is an easily computable gradient of the function J in the following form:

$$\nabla_\theta J(\pi) = \mathbb{E}_{s \sim \rho^\pi,\, a \sim \pi(s)}\left[ A(s, a) \cdot \nabla_\theta \log \pi(a|s) \right]$$

I understand that the step from $J(\pi)$ to $\nabla_\theta J(\pi)$ looks a bit mysterious, but a proof is out of the scope of this article. The formula above is derived in the Policy Gradient Theorem [3] and you can look it up if you want to delve into quite a piece of mathematics. I also direct you to a more digestible online lecture [4], where David Silver explains the theorem and also the concept of a baseline, which I have already incorporated.

The formula might seem intimidating, but it’s actually quite intuitive when it’s broken down. First, what does it say? It tells us in which direction we have to change the weights of the neural network if we want the function J to improve.

Let’s look at the right side of the expression. The second term inside the expectation, $\nabla_\theta \log \pi(a|s)$, tells us the direction in which the log probability of taking action a in state s rises. Simply said, how to make this action in this context more probable.

The first term, A(s, a), is a scalar value and tells us the advantage of taking this action. Combined, we see that the likelihood of actions that are better than average is increased, and the likelihood of actions worse than average is decreased. That sounds like the right thing to do.

Both terms are inside an expectation over the state and action distribution of π. However, we can’t exactly compute it over every state and every action. Instead, we can use that nice property of expectation: the mean of samples drawn from these distributions lies near the expected value.

Fortunately, running an episode with a policy π yields samples distributed exactly as we need. States encountered and actions taken are indeed an unbiased sample from the $\rho^\pi$ and π(s) distributions.

That’s great news. We can simply let our agent run in the environment and record the (s, a, r, s’) samples. When we gather enough of them, we use the formula above to find a good approximation of the gradient $\nabla_\theta J(\pi)$. We can then use any of the existing techniques based on gradient descent to improve our policy.
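As a rough illustration of this sampling idea, the sketch below estimates the gradient as the average of A(s, a)·∇θ log π(a|s) over sampled actions and follows it with plain gradient ascent. It is not the implementation from this series. It assumes a hypothetical task with a single state and two actions, a softmax policy whose weights θ are just two scores, and an exactly known value function, all of which are simplifications made for the example.

```python
import math
import random

REWARDS = [1.0, 0.0]     # reward of each action in a single-state toy task

def softmax(theta):
    m = max(theta)
    exps = [math.exp(t - m) for t in theta]
    total = sum(exps)
    return [e / total for e in exps]

def grad_log_pi(probs, a):
    """Gradient of log pi(a) w.r.t. the softmax scores: onehot(a) - pi."""
    return [(1.0 if i == a else 0.0) - p for i, p in enumerate(probs)]

def estimate_gradient(theta, n_samples, rng):
    """Average A(s, a) * grad log pi(a|s) over actions sampled from pi."""
    probs = softmax(theta)
    v = sum(p * r for p, r in zip(probs, REWARDS))   # exact V, known only in this toy
    grad = [0.0] * len(theta)
    for _ in range(n_samples):
        a = rng.choices(range(len(probs)), weights=probs, k=1)[0]
        adv = REWARDS[a] - v                         # A = Q - V, with Q = r here
        for i, g in enumerate(grad_log_pi(probs, a)):
            grad[i] += adv * g / n_samples
    return grad

def train(steps=200, lr=0.5, seed=0):
    rng = random.Random(seed)
    theta = [0.0, 0.0]
    for _ in range(steps):
        grad = estimate_gradient(theta, n_samples=32, rng=rng)
        theta = [t + lr * g for t, g in zip(theta, grad)]   # gradient ascent on J
    return softmax(theta)

final_probs = train()
```

After training, `final_probs` puts most of the probability on the action with the higher reward, which is the behaviour the formula promises: better-than-average actions become more likely.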

Actor-critic

One thing that remains to be explained is how we compute the A(s, a) term. Let’s expand the definition:

$$A(s, a) = Q(s, a) - V(s) = r + \gamma V(s') - V(s)$$

A sample from a run can give us an unbiased estimate of the Q(s, a) function. We can also see that it is sufficient to know the value function V(s) to compute A(s, a).
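In code, a one-sample estimate of the advantage could look like the sketch below, where `v_s` and `v_next` stand for the value estimates of s and s’. The function name, the default discount factor and the handling of terminal states are assumptions made for this example.

```python
def advantage_estimate(r, v_s, v_next, gamma=0.99, terminal=False):
    """One-sample estimate of A(s, a) = r + gamma * V(s') - V(s).

    v_s and v_next stand for the critic's outputs V(s) and V(s');
    for a terminal s' there is no future return, so V(s') counts as 0.
    """
    target = r if terminal else r + gamma * v_next
    return target - v_s

# Hypothetical numbers: reward 1.0, V(s) = 0.5, V(s') = 2.0.
adv = advantage_estimate(1.0, 0.5, 2.0, gamma=0.9)
```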

The value function can also be approximated by a neural network, just as we did with the action-value function in DQN. Compared to that, it is easier to learn, because there is only one value for each state.

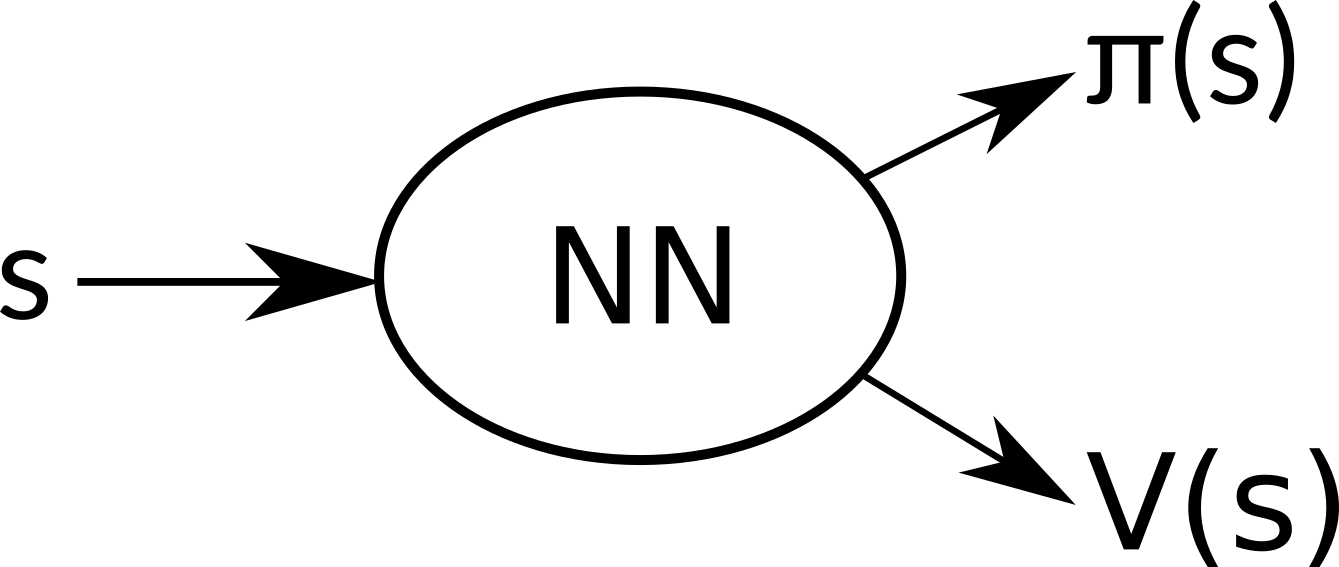

What’s more, we can use the same neural network for estimating π(s) to estimate V(s). This has multiple benefits. Because we optimize both of these goals together, we learn much faster and effectively. Separate networks would very probably learn very similar low level features, which is obviously superfluous. Optimizing both goals together also acts as a regularizing element and leads to a greater stability. Exact details on how to train our network will be explained in the next article. The final architecture then looks like this:

Our neural network share all hidden layers and outputs two sets – π(s) and V(s).
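A forward pass through such a two-headed network can be sketched in plain Python as follows. This is only an illustration of the shared-layer idea, not the Keras model built later in the series. The layer sizes, the tanh activation, the random initialisation and the class name `ActorCriticNet` are all arbitrary choices for the example.

```python
import math
import random

def linear(x, weights, bias):
    """Dense layer: one output per row of weights."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]

def softmax(z):
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]

def init_layer(n_in, n_out, rng):
    """Small random weights and zero biases (arbitrary initialisation)."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

class ActorCriticNet:
    """Shared hidden layer feeding a policy head and a value head."""

    def __init__(self, n_state, n_hidden, n_actions, seed=0):
        rng = random.Random(seed)
        self.hidden = init_layer(n_state, n_hidden, rng)
        self.policy_head = init_layer(n_hidden, n_actions, rng)
        self.value_head = init_layer(n_hidden, 1, rng)

    def forward(self, state):
        h = [math.tanh(v) for v in linear(state, *self.hidden)]   # shared features
        pi = softmax(linear(h, *self.policy_head))                # pi(s)
        v = linear(h, *self.value_head)[0]                        # V(s)
        return pi, v

net = ActorCriticNet(n_state=4, n_hidden=8, n_actions=2)
pi, v = net.forward([0.1, -0.2, 0.3, 0.0])
```

Both outputs are computed from the same hidden activations `h`, so any training signal for either head also shapes the shared features.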

So we have two different concepts working together. The goal of the first one is to optimize the policy, so it performs better. This part is called the actor. The second is trying to estimate the value function, to make it more precise. That is called the critic. I believe these terms arose from the Policy Gradient Theorem:

$$\nabla_\theta J(\pi) = \mathbb{E}_{s \sim \rho^\pi,\, a \sim \pi(s)}\left[ \underbrace{A(s, a)}_{\text{critic}} \cdot \underbrace{\nabla_\theta \log \pi(a|s)}_{\text{actor}} \right]$$

The actor acts, and the critic gives insight into what is a good action and what is bad.

Parallel agents

The samples we gather during a run of an agent are highly correlated. If we use them as they arrive, we quickly run into issues of online learning. In DQN, we used a technique named Experience Replay to overcome this issue. We stored the samples in a memory and retrieved them in random order to form a batch.

But there’s another way to break this correlation while still using online learning. We can run several agents in parallel, each with its own copy of the environment, and use their samples as they arrive. Different agents will likely experience different states and transitions, thus avoiding the correlation [2]. Another benefit is that this approach needs much less memory, because we don’t need to store the samples.
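The structure of several agents feeding one stream of samples can be sketched with threads and a thread-safe queue. Everything here is a stand-in: `RandomWalkEnv` is a hypothetical toy environment, the agents pick actions at random instead of sampling from π(s), and the samples are merely collected at the end, whereas a real learner would use them for updates as they arrive.

```python
import queue
import random
import threading

class RandomWalkEnv:
    """Hypothetical stand-in environment: a walk on the integers."""

    def __init__(self, seed):
        self.rng = random.Random(seed)
        self.state = 0

    def step(self, action):
        self.state += action + self.rng.choice((-1, 0, 1))
        reward = -abs(self.state)            # staying near 0 is better
        return reward, self.state

def agent(agent_id, n_steps, samples):
    """Run one agent in its own environment copy, emitting (id, s, a, r, s')."""
    env = RandomWalkEnv(seed=agent_id)
    policy_rng = random.Random(1000 + agent_id)
    s = env.state
    for _ in range(n_steps):
        a = policy_rng.choice((-1, 1))       # placeholder for sampling from pi(s)
        r, s_next = env.step(a)
        samples.put((agent_id, s, a, r, s_next))
        s = s_next

def run_agents(n_agents=4, n_steps=50):
    samples = queue.Queue()                  # thread-safe, shared by all agents
    threads = [threading.Thread(target=agent, args=(i, n_steps, samples))
               for i in range(n_agents)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return [samples.get() for _ in range(samples.qsize())]

collected = run_agents()
```

Because every agent owns its environment and random generator, the transitions arriving in the queue come from different trajectories rather than from one long correlated run.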

This is the approach the A3C algorithm takes. The full name is Asynchronous advantage actor-critic (A3C) and now you should be able to understand why.

Conclusion

We learned the fundamental theory behind PG methods and will use this knowledge to implement an agent in the next article. We will explain how to use the gradients to train the neural network with our familiar tools, Python and Keras, and newly also TensorFlow.

References

- [1] Williams, R., Simple statistical gradient-following algorithms for connectionist reinforcement learning, Machine Learning, 1992

- [2] Mnih, V. et al., Asynchronous methods for deep reinforcement learning, ICML, 2016

- [3] Sutton, R. et al., Policy Gradient Methods for Reinforcement Learning with Function Approximation, NIPS, 1999

- [4] Silver, D., Policy Gradient Methods, https://www.youtube.com/watch?v=KHZVXao4qXs, 2015
