Temporal-Difference Learning for Prediction

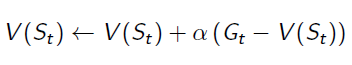

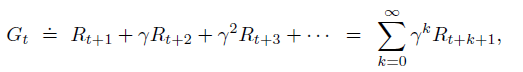

In Monte Carlo Learning, we've got the estimation of value function:

Gt is the episode return from time t, which can be calculated by:

Please recall, Gt can be only calculated at the end of a given episode. This reveals a disadvantage of Monte Carlo Learning: have to wait until the end of episodes.

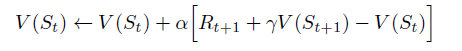

TD(0) algorithm replace Gt of the equation to the immediate reward and estimated value function of the next state:

The algorithm updates the Estimated State-Value Function at time t+1, because everything in the equation is determined. This means we will wait until the agent reaching the next state, so that the agent can get the immediate reward Rt+1 and know which state the system will transition to at time t+1.

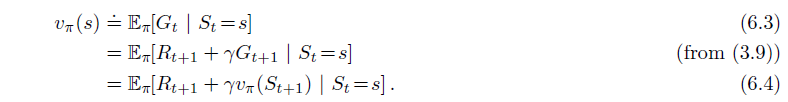

The equations below are State-Value Function for Dynamic Programming, in which the whole environment is known. Compare to these equations:

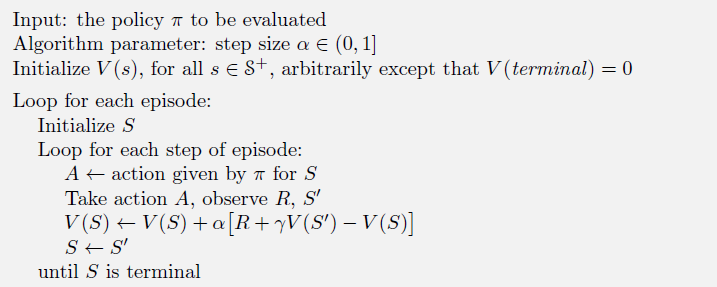

TD algorithm is quite like 6.4 Bellman Equation, but it does not take expectation. Instead, it uses the knowledge till now to estimate how much reward I am going to get from this state. The whole algorithm can be demonstrated as:

TD Target, TD Error

Bias/ Viriance trade-off

Bootstraping

Temporal-Difference Learning for Prediction的更多相关文章

- 【PPT】 Least squares temporal difference learning

最小二次方时序差分学习 原文地址: https://www.google.com/url?sa=t&rct=j&q=&esrc=s&source=web&cd= ...

- PP: Multi-Horizon Time Series Forecasting with Temporal Attention Learning

Problem: multi-horizon probabilistic forecasting tasks; Propose an end-to-end framework for multi-ho ...

- [Reinforcement Learning] Model-Free Prediction

上篇文章介绍了 Model-based 的通用方法--动态规划,本文内容介绍 Model-Free 情况下 Prediction 问题,即 "Estimate the value funct ...

- [Machine Learning] 机器学习常见算法分类汇总

声明:本篇博文根据http://www.ctocio.com/hotnews/15919.html整理,原作者张萌,尊重原创. 机器学习无疑是当前数据分析领域的一个热点内容.很多人在平时的工作中都或多 ...

- (转) Deep Learning Research Review Week 2: Reinforcement Learning

Deep Learning Research Review Week 2: Reinforcement Learning 转载自: https://adeshpande3.github.io/ad ...

- Awesome Reinforcement Learning

Awesome Reinforcement Learning A curated list of resources dedicated to reinforcement learning. We h ...

- Machine Learning 学习笔记1 - 基本概念以及各分类

What is machine learning? 并没有广泛认可的定义来准确定义机器学习.以下定义均为译文,若以后有时间,将补充原英文...... 定义1.来自Arthur Samuel(上世纪50 ...

- Distributional Reinforcement Learning with Quantile Regression

郑重声明:原文参见标题,如有侵权,请联系作者,将会撤销发布! arXiv:1710.10044v1 [cs.AI] 27 Oct 2017 In AAAI Conference on Artifici ...

- 3. Distributional Reinforcement Learning with Quantile Regression

C51算法理论上用Wasserstein度量衡量两个累积分布函数间的距离证明了价值分布的可行性,但在实际算法中用KL散度对离散支持的概率进行拟合,不能作用于累积分布函数,不能保证Bellman更新收敛 ...

随机推荐

- ASSERT()断言

头文件<assert.h> 作用:用于判断是否有非法的数据,有则程序报告错误,终止运行.(注意是非法情况,而不是错误情况) ASSERT()和assert()的区别: ASSERT ...

- Taro -- 文字左右滚动公告效果

文字左右滚动公告效果 设置公告的左移距离,不断减小,当左移距离大于公告长度(即公告已移出屏幕),重新循环. <View className='scroll-wrap'> <View ...

- 牛客练习赛14 A n的约数 (数论)

链接:https://ac.nowcoder.com/acm/contest/82/A来源:牛客网 时间限制:C/C++ 1秒,其他语言2秒 空间限制:C/C++ 262144K,其他语言524288 ...

- [ZJOI2006]物流运输(动态规划,最短路)

[ZJOI2006]物流运输 题目描述 物流公司要把一批货物从码头A运到码头B.由于货物量比较大,需要n天才能运完.货物运输过程中一般要转停好几个码头.物流公司通常会设计一条固定的运输路线,以便对整个 ...

- Insomni'hack teaser 2019 - Reverse - beginner_reverse

参考链接 https://ctftime.org/task/7455 题目描述 A babyrust to become a hardcore reverser 点我下载 解题过程 一道用rust写的 ...

- 小程序内置组件swiper,circular(衔接)使用小技巧

swiper,关于滑块的一些效果无缝,断点,视差等等...我想这里就不用做太多的赘述,这里给大家分享一下实战项目中使用circular(衔接)的一点小特性.小技巧,当然你也可以理解为遇到了一个小坑,因 ...

- puppet使用rsync模块

puppet使用rsync模块同步目录和文件 环境说明: OS : CentOS5.4 i686puppet版本: ...

- Keras MAE和MSE source code

def mean_squared_error(y_true, y_pred): if not K.is_tensor(y_pred): y_pred = K.constant(y_pred) y_tr ...

- linux运维、架构之路-Kickstart无人值守

一.PXE介绍 PXE全名Pre-boot Execution Environment,预启动执行环境:通过网络接口启动计算机,不依赖本地存储设备或本地已安装的操作系统:Client ...

- webkit内核的浏览器常见7种分别是..

Google Chrome Safari 遨游浏览器 3.x 搜狗浏览器 阿里云浏览器 QQ浏览器 360浏览器 ...