Flume Environment Setup

Download location:

http://archive.apache.org/dist/flume/1.8.0/

Cluster environment:

master 192.168.1.99

slave1 192.168.1.100

slave2 192.168.1.101

Download and install the package:

# Master

cd /usr/local/src

wget http://archive.apache.org/dist/flume/1.8.0/apache-flume-1.8.0-bin.tar.gz

tar -zxvf apache-flume-1.8.0-bin.tar.gz

mv apache-flume-1.8.0-bin /usr/local/flume
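Optionally, flume-ng can be put on the PATH so later commands don't need the full /usr/local/flume/bin prefix. The sketch below is a hypothetical helper, `add_flume_env`, that appends the exports to a shell profile only once. Which profile file you pass (for example ~/.bashrc) is an assumption about your shell setup.

```shell
#!/usr/bin/env bash
# add_flume_env PROFILE [FLUME_HOME]: hypothetical helper that appends FLUME_HOME
# and PATH exports to a shell profile, only if FLUME_HOME is not exported there yet.
add_flume_env() {
  local profile=$1 home=${2:-/usr/local/flume}
  if ! grep -q '^export FLUME_HOME=' "$profile" 2>/dev/null; then
    {
      printf 'export FLUME_HOME=%s\n' "$home"
      # Single quotes keep $PATH and $FLUME_HOME unexpanded until the profile is sourced.
      printf '%s\n' 'export PATH=$PATH:$FLUME_HOME/bin'
    } >> "$profile"
  fi
}
```

For example, `add_flume_env ~/.bashrc && source ~/.bashrc`, after which `flume-ng version` should report version 1.8.0.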

Flume configuration:

#Netcat

cd /usr/local/flume/conf

vim flume-netcat.conf

# Name the components on this agent

agent.sources = r1

agent.sinks = k1

agent.channels = c1

# Describe/configure the source

agent.sources.r1.type = netcat

agent.sources.r1.bind = master

agent.sources.r1.port = 44444

# Describe the sink

agent.sinks.k1.type = logger

# Use a channel which buffers events in memory

agent.channels.c1.type = memory

agent.channels.c1.capacity = 1000

agent.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

agent.sources.r1.channels = c1

agent.sinks.k1.channel = c1
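Flume loads this file with java.util.Properties, so `#` only starts a comment at the beginning of a line. Text such as `c1 # Describe the source` on the same line becomes part of the value, and an empty `capacity =` leaves the channel without a valid number. A small pre-flight check can catch these mistakes before the agent is started. `check_flume_conf` and `conf_get` below are hypothetical helpers written for this post, not part of Flume, and they only cover the basic source/sink/channel wiring.

```shell
#!/usr/bin/env bash
# Hypothetical pre-flight check for a Flume agent properties file (not part of Flume).

# conf_get FILE KEY: print the trimmed value of KEY (the last occurrence wins,
# as with java.util.Properties); exit status 1 if the key is absent.
conf_get() {
  awk -v k="$2" '
    /^[ \t]*#/ { next }
    {
      i = index($0, "=")
      if (i == 0) next
      key = substr($0, 1, i - 1); val = substr($0, i + 1)
      sub(/^[ \t]+/, "", key); sub(/[ \t]+$/, "", key)
      sub(/^[ \t]+/, "", val); sub(/[ \t]+$/, "", val)
      if (key == k) { found = 1; v = val }
    }
    END { if (found) print v; exit (found ? 0 : 1) }
  ' "$1"
}

# check_flume_conf FILE AGENT: print problems found in FILE for agent AGENT and
# return 1 if there are any, 0 otherwise.
check_flume_conf() {
  local file=$1 agent=$2 status=0 name
  local -a names

  # Report empty values, and values containing " #" (an end-of-line comment
  # that java.util.Properties would keep as part of the value).
  awk '
    /^[ \t]*#/ || /^[ \t]*$/ { next }
    {
      i = index($0, "=")
      if (i == 0) next
      v = substr($0, i + 1); sub(/^[ \t]+/, "", v); sub(/[ \t]+$/, "", v)
      if (v == "") { print "empty value: " $0; bad = 1 }
      else if (v ~ /[ \t]#/) { print "comment inside value: " $0; bad = 1 }
    }
    END { exit bad }
  ' "$file" || status=1

  # Each source needs a type and channels, each sink a type and a channel,
  # and each channel a type.
  read -ra names <<< "$(conf_get "$file" "$agent.sources")"
  for name in "${names[@]}"; do
    conf_get "$file" "$agent.sources.$name.type" > /dev/null || { echo "source $name: no type"; status=1; }
    conf_get "$file" "$agent.sources.$name.channels" > /dev/null || { echo "source $name: no channels"; status=1; }
  done
  read -ra names <<< "$(conf_get "$file" "$agent.sinks")"
  for name in "${names[@]}"; do
    conf_get "$file" "$agent.sinks.$name.type" > /dev/null || { echo "sink $name: no type"; status=1; }
    conf_get "$file" "$agent.sinks.$name.channel" > /dev/null || { echo "sink $name: no channel"; status=1; }
  done
  read -ra names <<< "$(conf_get "$file" "$agent.channels")"
  for name in "${names[@]}"; do
    conf_get "$file" "$agent.channels.$name.type" > /dev/null || { echo "channel $name: no type"; status=1; }
  done
  return "$status"
}
```

For example, `check_flume_conf /usr/local/flume/conf/flume-netcat.conf agent` prints nothing and returns 0 for a correctly wired file.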

Verification:

Server side:

/usr/local/flume/bin/flume-ng agent -f /usr/local/flume/conf/flume-netcat.conf -n agent -Dflume.root.logger=INFO,console

Client side:

telnet master 44444
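If telnet is not installed, bash alone can act as the client. With the netcat source's default `ack-every-event = true`, the source answers `OK` for every line it turns into an event, so the sketch below sends lines over bash's `/dev/tcp` redirection and checks each reply. It assumes bash 4.1 or later (for `{fd}` redirections); the function names and the 5-second read timeout are arbitrary choices, not Flume settings.

```shell
#!/usr/bin/env bash
# send_lines WRITE_FD READ_FD LINE...: write each LINE to WRITE_FD and expect an
# "OK" reply on READ_FD, as the netcat source sends per event while
# ack-every-event is left at its default. Returns 1 on the first missing/bad reply.
send_lines() {
  local wfd=$1 rfd=$2 line reply
  shift 2
  for line in "$@"; do
    printf '%s\n' "$line" >&"$wfd"
    IFS= read -r -t 5 reply <&"$rfd" || { echo "no reply for: $line" >&2; return 1; }
    reply=${reply%$'\r'}   # tolerate CRLF line endings
    if [ "$reply" != OK ]; then
      echo "unexpected reply '$reply' for: $line" >&2
      return 1
    fi
  done
}

# netcat_send HOST PORT LINE...: open one TCP connection with bash's /dev/tcp
# redirection (no telnet or nc needed) and send the lines over it.
netcat_send() {
  local host=$1 port=$2 fd rc=0
  shift 2
  exec {fd}<>"/dev/tcp/$host/$port" || return 1
  send_lines "$fd" "$fd" "$@" || rc=$?
  exec {fd}>&-   # close the connection
  return "$rc"
}
```

For example, `netcat_send master 44444 "hello flume" "wangzai"`; each line should then appear as an event in the agent's console log.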

Result:

#Exec

cd /usr/local/flume/conf

vim flume-exec.conf

# Name the components on this agent

agent.sources = r1

agent.sinks = k1

agent.channels = c1

# Describe/configure the source

agent.sources.r1.type = exec

agent.sources.r1.command = tail -f /root/test.log

# Describe the sink

agent.sinks.k1.type = logger

# Use a channel which buffers events in memory

agent.channels.c1.type = memory

agent.channels.c1.capacity = 1000

agent.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

agent.sources.r1.channels = c1

agent.sinks.k1.channel = c1

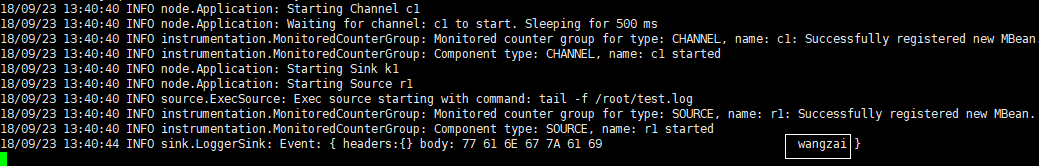

Verification:

Server side:

/usr/local/flume/bin/flume-ng agent -f /usr/local/flume/conf/flume-exec.conf -n agent -Dflume.root.logger=INFO,console

Client side:

echo "wangzai" >> /root/test.log
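`>` truncates /root/test.log on every write, whereas a real log only grows, so appending with `>>` is closer to what the exec source is meant to follow. Also note that `tail -f` exits if the file does not exist yet, and the exec source does not rerun its command unless `restart = true` is set, so create the file before starting the agent. The hypothetical helper below does both: it creates the file (and its directory) and appends numbered, timestamped test lines.

```shell
#!/usr/bin/env bash
# append_test_events FILE [COUNT]: hypothetical helper that creates FILE if needed
# and appends COUNT (default 1) numbered, timestamped lines to it.
append_test_events() {
  local file=$1 count=${2:-1} i
  mkdir -p "$(dirname "$file")"
  touch "$file"
  for ((i = 1; i <= count; i++)); do
    # ">>" appends, so lines already read by "tail -f" are left untouched.
    printf 'wangzai %d %s\n' "$i" "$(date '+%F %T')" >> "$file"
  done
}
```

For example, run `append_test_events /root/test.log 0` once before starting the agent (it only creates the file), then `append_test_events /root/test.log 5` to produce five events.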

Result:

#HDFS

cd /usr/local/flume/conf

vim flume-exec-hdfs.conf

# Name the components on this agent

agent.sources = r1

agent.sinks = k1

agent.channels = c1

# Describe/configure the source

agent.sources.r1.type = exec

agent.sources.r1.command = tail -f /root/test.log

agent.sources.r1.shell = /bin/bash -c

#Describe the sink

agent.sinks.k1.type = hdfs

agent.sinks.k1.hdfs.path = hdfs://master:9000/data/flume/tail

agent.sinks.k1.hdfs.fileType=DataStream

agent.sinks.k1.hdfs.writeFormat=Text

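## How often (in seconds) the HDFS sink rolls the temporary file into the final target file; the default is 30 s.

## 0 disables time-based rolling.

# agent.sinks.k1.hdfs.rollInterval = 0

## Roll over to a new file once the current one reaches 134217728 bytes (128 MiB).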

#agent.sinks.k1.hdfs.rollSize = 134217728

#agent.sinks.k1.hdfs.rollCount = 1000000

#agent.sinks.k1.hdfs.batchSize=10

# Use a channel which buffers events in memory

agent.channels.c1.type = memory

#agent.channels.c1.capacity = 1000

#agent.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

agent.sources.r1.channels = c1

agent.sinks.k1.channel = c1
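Because the roll settings above are commented out, the HDFS sink falls back to its defaults: rollInterval = 30 (seconds), rollSize = 1024 (bytes) and rollCount = 10 (events). A file is rolled as soon as any enabled threshold is reached, and a value of 0 disables that trigger, so with the defaults even a light test produces many small files. The function below is a simplified model of that rule for choosing values, not Flume code; 134217728 is 128 * 1024 * 1024 bytes.

```shell
#!/usr/bin/env bash
# should_roll ELAPSED_S BYTES EVENTS [ROLL_INTERVAL ROLL_SIZE ROLL_COUNT]
# Simplified model of the HDFS sink's roll decision: returns 0 ("roll") when any
# threshold greater than 0 has been reached. Omitted thresholds default to the
# HDFS sink defaults (30 s, 1024 bytes, 10 events).
should_roll() {
  local elapsed=$1 bytes=$2 events=$3
  local interval=${4:-30} size=${5:-1024} count=${6:-10}
  (( interval > 0 && elapsed >= interval )) && return 0   # hdfs.rollInterval
  (( size > 0 && bytes >= size )) && return 0             # hdfs.rollSize
  (( count > 0 && events >= count )) && return 0          # hdfs.rollCount
  return 1
}
```

With only size-based rolling enabled (`rollInterval = 0`, `rollSize = 134217728`, `rollCount = 0`), `should_roll 3600 100000000 5000 0 134217728 0` returns 1: 100,000,000 bytes after an hour is still below 128 MiB, so the file stays open.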

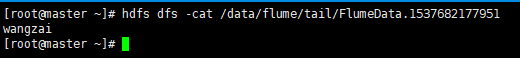

Verification:

Server side:

/usr/local/flume/bin/flume-ng agent -f /usr/local/flume/conf/flume-exec-hdfs.conf -n agent -Dflume.root.logger=INFO,console

Client side:

echo "wangzai" >> /root/test.log
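On the HDFS side, the sink names files with the default `FlumeData` prefix and keeps a `.tmp` suffix on the file it is still writing (the defaults of `hdfs.filePrefix` and `hdfs.inUseSuffix`). To look only at completed files, the output of `hdfs dfs -ls` can be filtered. `finished_files` is a hypothetical helper, and it assumes paths without spaces because it takes the last column.

```shell
#!/usr/bin/env bash
# finished_files: hypothetical filter for `hdfs dfs -ls` output on stdin.
# Prints the path (last column) of each regular file whose name does not end
# in ".tmp", i.e. files the HDFS sink has already closed.
finished_files() {
  awk '/^-/ && $NF !~ /\.tmp$/ { print $NF }'
}
```

For example, `hdfs dfs -ls /data/flume/tail | finished_files`, then `hdfs dfs -cat` on one of the printed paths should show the wangzai line.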

Result:

#Kafka

cd /usr/local/flume/conf

vim flume-exec-kafka.conf

# Name the components on this agent

agent.sources = r1

agent.sinks = k1

agent.channels = c1

# Describe/configure the source

agent.sources.r1.type = exec

agent.sources.r1.command = tail -f /root/test.log

agent.sources.r1.shell = /bin/bash -c

## kafka

#Describe the sink

agent.sinks.k1.type = org.apache.flume.sink.kafka.KafkaSink

agent.sinks.k1.topic = kafkatest

agent.sinks.k1.brokerList = master:

agent.sinks.k1.requiredAcks =

agent.sinks.k1.batchSize =

# Use a channel which buffers events in memory

agent.channels.c1.type = memory

agent.channels.c1.capacity = 1000

#agent.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

agent.sources.r1.channels = c1

agent.sinks.k1.channel = c1
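The broker port in `brokerList` and the ZooKeeper ports in the Kafka commands did not survive in this post. Kafka brokers listen on 9092 and ZooKeeper on 2181 by default, but that is an assumption here: check `listeners` in server.properties and `clientPort` in the ZooKeeper config for your cluster. Since the same comma-separated `host:port` list appears in several places, a small hypothetical helper keeps them consistent. Note also that in Flume 1.8 `brokerList`, `topic` and `requiredAcks` are deprecated aliases of `kafka.bootstrap.servers`, `kafka.topic` and `kafka.producer.acks`; they are still accepted.

```shell
#!/usr/bin/env bash
# join_hosts PORT HOST...: hypothetical helper printing "HOST1:PORT,HOST2:PORT,...".
join_hosts() {
  local port=$1 out='' h
  shift
  for h in "$@"; do
    out+="${out:+,}$h:$port"   # add a comma only after the first entry
  done
  printf '%s\n' "$out"
}
```

For example, `join_hosts 9092 master` gives a value for `brokerList`, and `join_hosts 2181 master slave1 slave2` gives one for `--zookeeper` (both ports being the assumed defaults).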

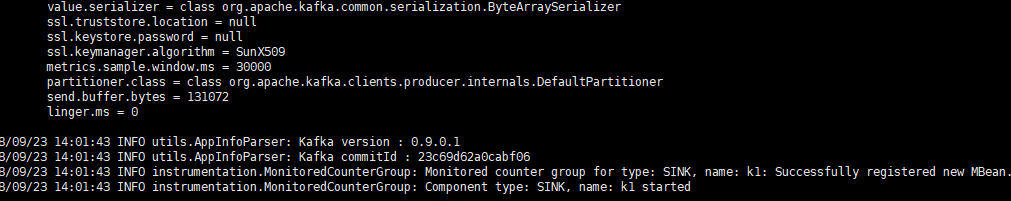

验证:

Start Kafka and create the topic:

/usr/local/kafka/bin/kafka-server-start.sh /usr/local/kafka/config/server.properties > /dev/null &

kafka-topics.sh --create --zookeeper master:,slave1:,slave2: --replication-factor --partitions --topic kafkatest
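`kafka-server-start.sh ... &` returns immediately while the broker needs a few seconds to register, so creating the topic right away can fail because no broker is available yet. A wait loop over bash's `/dev/tcp` avoids guessing a sleep time. `wait_for_port` is a hypothetical helper; each attempt can block for the OS connect timeout if the host silently drops packets instead of refusing the connection.

```shell
#!/usr/bin/env bash
# wait_for_port HOST PORT [TIMEOUT_S]: try once per second to open a TCP
# connection to HOST:PORT; return 0 on success, 1 after TIMEOUT_S attempts (default 30).
wait_for_port() {
  local host=$1 port=$2 timeout=${3:-30} i
  for ((i = 0; i < timeout; i++)); do
    # The subshell opens the connection and closes it again when it exits.
    if (exec 3<>"/dev/tcp/$host/$port") 2>/dev/null; then
      return 0
    fi
    sleep 1
  done
  return 1
}
```

For example, `wait_for_port master 9092 60 && kafka-topics.sh --create ...`, with 9092 being Kafka's default broker port (an assumption for this cluster).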

Start Flume and test:

Server side:

/usr/local/flume/bin/flume-ng agent -f /usr/local/flume/conf/flume-exec-kafka.conf -n agent -Dflume.root.logger=INFO,console

Client side:

echo "wangzai" >> /root/test.log

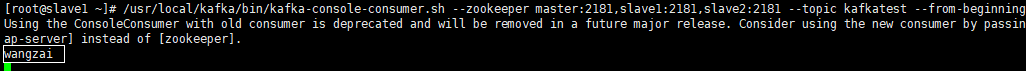

Start the Kafka console consumer:

/usr/local/kafka/bin/kafka-console-consumer.sh --zookeeper master:,slave1:,slave2: --topic kafkatest --from-beginning

Result:

Flume server side:

Kafka consumer:
