Gradient Vanishing Problem in Deep Learning

在所有依靠Gradient Descent和Backpropagation算法来学习的Neural Network中,普遍都会存在Gradient Vanishing Problem。Backpropagation的运作过程是,根据Cost Function进行反向传播,利用Chain Rule去计算n层之前某一weight上的梯度,从而更新该weight。而事实上,在网络层次较深的情况下,我们获得的weight梯度,随着反向传播层次的深入,会呈现越来越小的状态。从而,在靠近输出端的Layers中,weight可以被很好的更新,因为可以获得不错的gradient,而在靠近输入端的Layers中,weight则更新缓慢。

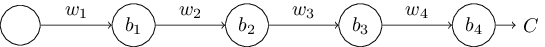

举个最简单的例子,来说明该问题。如下的神经网络有四层,每层有一个node:

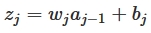

我们可知w是weight,b是bias,每一层的节点输入是z,输出是a,activation function是a=σ(z),我们可以得出:

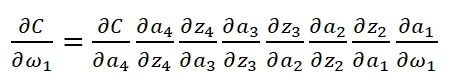

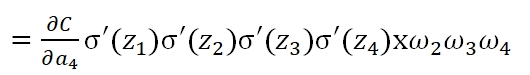

当我们已知Cost Function时,我们利用Backpropagation计算weight:

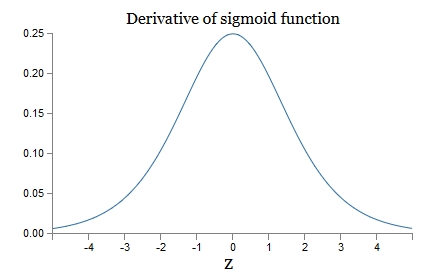

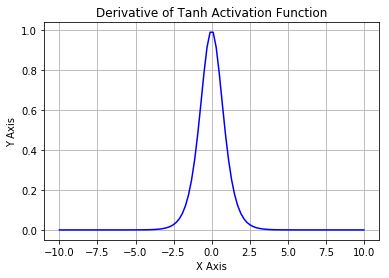

可以看到,第一层的weight梯度,依赖于之后各层activation function的一阶导数之积。而对于Machine Learning中常用的Sigmoid及tanh激励函数,其derivative图像如下:

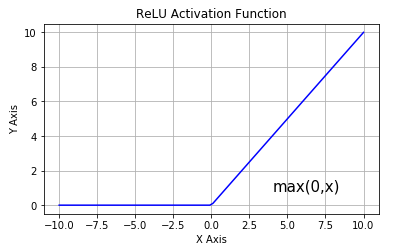

Sigmoid的derivative是[0,0.25]的,而tanh的derivative是[0,1]的。通过上式,我们看出,通过Backpropagation求梯度时,每往回传播一层,就要多乘以一项δ‘(z),也就是说,随着向回传递的深入,梯度会呈指数级的衰减,直至缩减到0,导致前层的权重无法更新。tanh要略好于sigmoid,但依然难以解决Gradient Vanishing的问题。所以Relu Function应运而生,并且在Deep Learning方面取得了巨大成功。Relu的表达式及图形如下:

其当x>0时,derivative是1,小于0时,derivative为0。该函数很好的解决了Gradient Vanishing Problem,在大多数情况下,我们构建Deep Learning时可以使用Relu作为默认的Activation Function。

Gradient Vanishing Problem in Deep Learning的更多相关文章

- (转)WHY DEEP LEARNING IS SUDDENLY CHANGING YOUR LIFE

Main Menu Fortune.com E-mail Tweet Facebook Linkedin Share icons By Roger Parloff Illustration ...

- Growing Pains for Deep Learning

Growing Pains for Deep Learning Advances in theory and computer hardware have allowed neural network ...

- Deep Learning Libraries by Language

Deep Learning Libraries by Language Tweet Python Theano is a python library for defining and ...

- Deep learning with Python

一.导论 1.1 人工智能.机器学习.深度学习 人工智能.机器学习 人工智能:1980年代达到高峰的是专家系统,符号AI是之前的,但不能解决模糊.复杂的问题. 机器学习是把数据.答案做输入,规则作输出 ...

- This instability is a fundamental problem for gradient-based learning in deep neural networks. vanishing exploding gradient problem

The unstable gradient problem: The fundamental problem here isn't so much the vanishing gradient pro ...

- Coursera Deep Learning 2 Improving Deep Neural Networks: Hyperparameter tuning, Regularization and Optimization - week1, Assignment(Gradient Checking)

声明:所有内容来自coursera,作为个人学习笔记记录在这里. Gradient Checking Welcome to the final assignment for this week! In ...

- 课程二(Improving Deep Neural Networks: Hyperparameter tuning, Regularization and Optimization),第一周(Practical aspects of Deep Learning) —— 4.Programming assignments:Gradient Checking

Gradient Checking Welcome to this week's third programming assignment! You will be implementing grad ...

- Deep Learning专栏--强化学习之从 Policy Gradient 到 A3C(3)

在之前的强化学习文章里,我们讲到了经典的MDP模型来描述强化学习,其解法包括value iteration和policy iteration,这类经典解法基于已知的转移概率矩阵P,而在实际应用中,我们 ...

- Deep Learning in a Nutshell: History and Training

Deep Learning in a Nutshell: History and Training This series of blog posts aims to provide an intui ...

随机推荐

- 说说 HTTP 和 HTTPS 区别??

HTTP 协议传输的数据都是未加密的,也就是明文的,因此使用 HTTP 协议传输隐私信息非常不安全,为了保证这些隐私数据能加密传输,于是网景公司设计了 SSL(Secure Sockets Layer ...

- github提交用户权限被拒

场景介绍: 之前登陆了朋友的github账号,保存了朋友的GitHub信息在本地.今天想重新提交一个项目到自己的GitHub账号时,一直用朋友的账号提交且提示权限被拒. 解决: 方法一,GitHub配 ...

- nativescript(angular2)——ListView组件

NativeScript是一个不使用webview的情况下构建跨平台并且原生的iOS和Android应用.使用Angular.TypeScript或JavaScript来获得原生UI和性能体验,同时可 ...

- ulimit 管理系统资源

具体的 options 含义以及简单示例可以参考以下表格. 选项 含义 例子 -H 设置硬资源限制,一旦设置不能增加. ulimit – Hs 64:限制硬资源,线程栈大小为 64K. -S 设置软资 ...

- Jupyter配置工作路径

在修改之前,C:\Users\Administrator\ .jupyter 目录下面只有一个“migrated”文件. 打开命令窗口(运行->cmd),进入python的Script目录下输入 ...

- MYSQL学习笔记——sql语句优化之索引

上一篇博客讲了可以使用慢查询日志定位耗时sql,使用explain命令查看mysql的执行计划,以及使用profiling工具查看语句执行真正耗时的地方,当定位了耗时之后怎样优化呢?这篇博客会介绍my ...

- [HEOI2015]兔子与樱花(贪心)

[HEOI2015]兔子与樱花 Description 很久很久之前,森林里住着一群兔子.有一天,兔子们突然决定要去看樱花.兔子们所在森林里的樱花树很特殊.樱花树由\(n\)个树枝分叉点组成,编号从\ ...

- 10 | MySQL为什么有时候会选错索引? 学习记录

<MySQL实战45讲>10 | MySQL为什么有时候会选错索引? 学习记录http://naotu.baidu.com/file/e7c521276650e80fe24584bc9a6 ...

- $_POST 和 php://input 的区别

手册中摘取的几句话: 当 HTTP POST 请求的 Content-Type 是 application/x-www-form-urlencoded 或 multipart/form-data 时, ...

- CSS3选择器 ::before和::after

:before和:after伪元素的用法 :before和:after伪元素的用法十分简单:下面的代码样例中, :before 和 :after 将会在 blockquote 元素之前和之后插入新内容 ...