OpenStack Train + Ceph

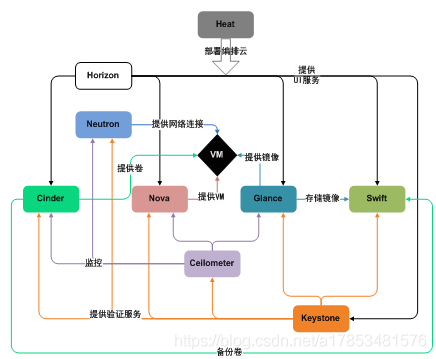

OpenStack

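OpenStack components

Keystone: identity and authorization [the other components can only do their work once Keystone has authorized them]

Keystone authenticates and authorizes all OpenStack services. It also serves as the endpoint catalog for every service.

Glance: provides virtual machine image templates [image templates are used to create virtual machines]

[Glance can store and retrieve virtual machine disk images in multiple locations.]

Nova: provides the runtime environment for virtual machines. Nova has no virtualization technology of its own; it calls a hypervisor such as KVM to provide it. [Manages virtual machines]

[Manages the whole virtual machine lifecycle: create, run, suspend, schedule, shut down, destroy, and so on. It is the component that actually carries out the work: it accepts commands sent from the dashboard and performs the concrete actions. Because Nova is not itself a hypervisor, it needs one (KVM, Xen, Hyper-V, etc.) alongside it.]

Neutron: provides networking for virtual machines.

[Neutron connects networks to the other OpenStack services.]

Dashboard [Horizon]: web management interface

Swift: object storage [can be used to store images]

[A highly fault-tolerant object storage service that stores and retrieves unstructured data objects through a RESTful API.]

Cinder: adds disks to virtual machines

[Provides persistent block storage through a self-service API.]

Ceilometer: monitors usage, for pay-as-you-go billing

Heat: orchestration

[For example: launch 10 instances, each running a different script, so that services are brought up automatically.]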

OpenStack installation

I. Base environment

1. Virtual machine planning

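| Node | Hostname | Memory | IP | Role | CPU | Disk space |
|---|---|---|---|---|---|---|
| Controller node | controller | More than 3 GB | 172.16.1.160 | Management | Virtualization enabled | 50 GB |
| Compute node | compute1 | More than 1 GB | 172.16.1.161 | Runs virtual machines | Virtualization enabled | 50 GB |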
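The later steps address the nodes by the names `controller` and `compute1`, so each node presumably has to resolve those names. The notes do not show how; the sketch below assumes resolution through /etc/hosts, and `hosts_entries` is an illustrative helper name.

```shell
# Print the /etc/hosts lines for the two nodes in the planning table.
# Assumption: names are resolved through /etc/hosts rather than DNS.
hosts_entries() {
    printf '%s %s\n' 172.16.1.160 controller
    printf '%s %s\n' 172.16.1.161 compute1
}

# Preview only. To apply on a node, append the output as root:
#   hosts_entries >> /etc/hosts
hosts_entries
```

Printing the lines first makes it easy to review them before anything is written to a system file.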

2. Configure the yum repositories (on every node)

[root@controller ~]# cd /etc/yum.repos.d

[root@controller yum.repos.d]# mkdir backup && mv C* backup

[root@controller yum.repos.d]# wget https://mirrors.aliyun.com/repo/Centos-7.repo

[root@controller yum.repos.d]# yum repolist all

3. Disable security services (on every node)

Stop and disable the firewall:

[root@controller ~]# systemctl stop firewalld.service; systemctl disable firewalld.service

Disable SELinux:

[root@controller ~]# sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

[root@controller ~]# setenforce 0

[root@controller ~]# reboot

[root@controller ~]# getenforce

Disabled

4. Configure the time service (on every node)

[root@controller ~]# yum install chrony -y

# Controller node

[root@controller ~]# vim /etc/chrony.conf

……

server ntp6.aliyun.com iburst

……

# Subnet allowed to synchronize from this server (a bare "allow" permits all clients)

allow 172.16.1.0/24

local stratum 10

[root@controller ~]# systemctl restart chronyd && systemctl enable chronyd

# Compute node

[root@compute1 ~]# yum install ntpdate -y

[root@compute1 ~]# vim /etc/chrony.conf

……

server 172.16.1.160 iburst

[root@compute1 ~]# systemctl restart chronyd && systemctl enable chronyd

[root@compute1 ~]# ntpdate 172.16.1.160
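One way to confirm that a node has actually locked onto its time source is to look for the `^*` marker, which `chronyc sources` prints in front of the currently selected server. A minimal sketch; `has_selected_source` is an illustrative helper that reads that output on stdin.

```shell
# Succeeds if the `chronyc sources` output on stdin contains a line
# starting with "^*", the marker chronyc uses for the selected source.
has_selected_source() {
    grep -q '^\^\*'
}

# Usage on a node (not executed here):
#   chronyc sources | has_selected_source && echo "time is synchronized"
```

It can take a short while after chronyd restarts before a source is selected, so a failed check right after the restart is not necessarily an error.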

5. Install the OpenStack packages (on every node)

Enable the Train repository and install the OpenStack client:

[root@controller ~]# yum install centos-release-openstack-train -y

[root@controller ~]# yum install python-openstackclient -y

Install openstack-selinux:

[root@controller ~]# yum install openstack-selinux -y

6. SQL database

# Install on the controller node

[root@controller ~]# yum -y install mariadb mariadb-server python2-PyMySQL # python2-PyMySQL is the Python MySQL client module

[root@controller ~]# vim /etc/my.cnf.d/openstack.cnf

[mysqld]

bind-address = 172.16.1.160

default-storage-engine = innodb # default storage engine

innodb_file_per_table # one tablespace file per table

max_connections = 4096 # maximum number of connections

collation-server = utf8_general_ci

character-set-server = utf8 # default character set

[root@controller ~]# systemctl enable mariadb.service && systemctl start mariadb.service

[root@controller ~]# mysql_secure_installation

7. Message queue

# Install on the controller node

[root@controller ~]# yum install rabbitmq-server -y

[root@controller ~]# systemctl enable rabbitmq-server.service && systemctl start rabbitmq-server.service

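[root@controller ~]# rabbitmqctl add_user openstack RABBIT_PASS ## add the openstack user [lets every OpenStack service use the message queue]

You can replace RABBIT_PASS with a suitable password. Leaving it unchanged is simpler, because otherwise every later occurrence has to be changed as well.

[root@controller ~]# rabbitmqctl set_permissions openstack ".*" ".*" ".*" ## grant the openstack user configure, write and read permissions

# Enable the RabbitMQ management plugin to make later monitoring easier (optional)

[root@controller ~]# rabbitmq-plugins enable rabbitmq_management ## opens the plugin's port 15672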

[root@controller ~]# netstat -altnp | grep 5672

tcp 0 0 0.0.0.0:15672 0.0.0.0:* LISTEN 2112/beam.smp

tcp 0 0 0.0.0.0:25672 0.0.0.0:* LISTEN 2112/beam.smp

tcp 0 0 172.16.1.160:37231 172.16.1.160:25672 TIME_WAIT -

tcp6 0 0 :::5672 :::* LISTEN 2112/beam.smp

# Access the management UI

IP:15672

# Default credentials

User: guest

Password: guest
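To confirm that the openstack account from the `rabbitmqctl add_user` step exists, the output of `rabbitmqctl list_users` can be checked. A small sketch; `rabbit_user_exists` is an illustrative helper, not part of RabbitMQ, and it assumes the user name is the first whitespace-separated column of each output line.

```shell
# Succeeds if the user named in $1 appears in the first column of the
# `rabbitmqctl list_users` output supplied on stdin.
rabbit_user_exists() {
    awk -v user="$1" '$1 == user { found = 1 } END { exit found ? 0 : 1 }'
}

# Usage on the controller (not executed here):
#   rabbitmqctl list_users | rabbit_user_exists openstack && echo "user present"
```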

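8. Memcached

- The Identity service uses Memcached to cache tokens. The memcached service runs on the controller node.

- Token: used to verify a user's login. Caching tokens in memcached means a token that has already been validated does not have to be validated again on each request [improves efficiency].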

[root@controller ~]# yum install memcached python-memcached -y

[root@controller ~]# sed -i 's/127.0.0.1/172.16.1.160/g' /etc/sysconfig/memcached

[root@controller ~]# systemctl enable memcached.service && systemctl start memcached.service
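After the `sed` edit it can be worth checking which addresses memcached will listen on. The sketch below assumes the CentOS layout in which /etc/sysconfig/memcached carries an `OPTIONS="-l ..."` line; `memcached_listen_addrs` is an illustrative helper name.

```shell
# Print the address list that follows "-l" on the OPTIONS line of the
# given sysconfig file (assumed layout: OPTIONS="-l addr1,addr2").
memcached_listen_addrs() {
    sed -n 's/^OPTIONS=.*-l[[:space:]]*\([^" ]*\).*/\1/p' "$1"
}

# Usage on the controller (not executed here):
#   memcached_listen_addrs /etc/sysconfig/memcached
```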

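II. Keystone Identity service

- Authentication management, authorization management, and the service catalog

- Service catalog: to create an image [Glance: 9292], a virtual machine [Nova: 8774], a network [Neutron: 9696] and so on, a user has to reach the port of the corresponding service. OpenStack has many services and remembering every address is tedious; the service catalog provided by Keystone solves this problem.

1. Prerequisites

Before you configure the OpenStack Identity service, you must create a database. (The administrator account itself is created later with keystone-manage bootstrap.)

Create the database: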

[root@controller ~]# mysql -u root -p

Create the keystone database:

MariaDB [(none)]> CREATE DATABASE keystone;

Query OK, 1 row affected (0.00 sec)

Grant proper access to the keystone database:

MariaDB [(none)]> GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'172.16.1.160' IDENTIFIED BY 'KEYSTONE_DBPASS';

Query OK, 0 rows affected (0.00 sec)

MariaDB [(none)]> GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'%' IDENTIFIED BY 'KEYSTONE_DBPASS';

Query OK, 0 rows affected (0.00 sec)

MariaDB [(none)]> flush privileges;

You can replace KEYSTONE_DBPASS with a suitable password.
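The same CREATE DATABASE and GRANT pattern recurs for Glance, Placement and Nova further on. As a sketch of how the statements could be generated instead of typed, the hypothetical helper `service_db_sql` below prints them; the default host 172.16.1.160 is the controller address used in this guide.

```shell
# Print the SQL for one service database.
# usage: service_db_sql DATABASE USER PASSWORD [HOST]
service_db_sql() {
    printf 'CREATE DATABASE %s;\n' "$1"
    printf "GRANT ALL PRIVILEGES ON %s.* TO '%s'@'%s' IDENTIFIED BY '%s';\n" \
        "$1" "$2" "${4:-172.16.1.160}" "$3"
    printf "GRANT ALL PRIVILEGES ON %s.* TO '%s'@'%%' IDENTIFIED BY '%s';\n" \
        "$1" "$2" "$3"
    printf 'FLUSH PRIVILEGES;\n'
}

# Preview the statements for Keystone. To apply them, pipe the output
# into the client instead:  service_db_sql ... | mysql -u root -p
service_db_sql keystone keystone KEYSTONE_DBPASS
```

Printing first and piping second keeps the passwords visible for review before anything touches the database.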

2. Install and configure the components

[root@controller ~]# yum install openstack-keystone httpd mod_wsgi -y

[root@controller ~]# cp /etc/keystone/keystone.conf /etc/keystone/keystone.conf_bak

[root@controller ~]# egrep -v '^$|#' /etc/keystone/keystone.conf_bak > /etc/keystone/keystone.conf
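The `egrep -v '^$|#'` filter above drops every line that contains a `#` anywhere, which would also remove a setting whose value happens to include that character. A slightly stricter variant, shown as a sketch with the illustrative name `strip_conf`, removes only blank lines and lines that begin with a comment marker.

```shell
# Print a config file without blank lines and without lines whose first
# non-space character is "#". Lines with "#" inside a value are kept.
strip_conf() {
    grep -Ev '^[[:space:]]*(#|$)' "$1"
}

# Usage (not executed here):
#   strip_conf /etc/keystone/keystone.conf_bak > /etc/keystone/keystone.conf
```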

Edit the /etc/keystone/keystone.conf file and complete the following actions:

In the [database] section, configure database access:

[database]

...

connection = mysql+pymysql://keystone:KEYSTONE_DBPASS@controller/keystone

Replace KEYSTONE_DBPASS with the password you chose for the database.

In the [token] section, configure the Fernet token provider:

[token]

...

provider = fernet

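Note: Keystone has historically offered the UUID, PKI and Fernet token formats; this guide uses Fernet.

# Each format is a different way of generating the token string

Check: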

[root@controller ~]# md5sum /etc/keystone/keystone.conf

f6d8563afb1def91c1b6a884cef72f11 /etc/keystone/keystone.conf
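The checksum only matches if the file is byte-for-byte identical to the author's, including the placeholder password. A more direct check is to read the two settings back. The sketch below uses a small awk-based reader; `ini_get` is an illustrative helper, not an OpenStack tool.

```shell
# Print the value of KEY inside [SECTION] of an INI-style file.
# usage: ini_get FILE SECTION KEY
ini_get() {
    awk -v section="$2" -v key="$3" '
        /^\[/ { in_section = ($0 == "[" section "]"); next }
        in_section {
            split($0, kv, "=")
            k = kv[1]
            gsub(/^[ \t]+|[ \t]+$/, "", k)
            if (k == key) {
                # Keep everything after the first "=" as the value.
                v = substr($0, index($0, "=") + 1)
                gsub(/^[ \t]+|[ \t]+$/, "", v)
                print v
                exit
            }
        }
    ' "$1"
}

# Usage on the controller (not executed here):
#   ini_get /etc/keystone/keystone.conf token provider
#   ini_get /etc/keystone/keystone.conf database connection
```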

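3. Populate the database

[root@controller ~]# su -s /bin/sh -c "keystone-manage db_sync" keystone

su: switch user

-s: the shell to use, followed by the shell path

-c: the command to run, followed by the command

keystone: the user to run as

# Meaning: run the command "keystone-manage db_sync" as the keystone user, using /bin/sh

Verify that the tables exist (here "password" stands for the MariaDB root password):

mysql -u root -ppassword keystone -e "show tables;"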

4. Initialize the Fernet key repositories

[root@controller ~]# keystone-manage fernet_setup --keystone-user keystone --keystone-group keystone

[root@controller ~]# keystone-manage credential_setup --keystone-user keystone --keystone-group keystone

5. Bootstrap the Identity service

[root@controller ~]# keystone-manage bootstrap --bootstrap-password ADMIN_PASS --bootstrap-admin-url http://controller:5000/v3/ --bootstrap-internal-url http://controller:5000/v3/ --bootstrap-public-url http://controller:5000/v3/ --bootstrap-region-id RegionOne

# You can replace ADMIN_PASS with a suitable password for the admin user

6. Configure the Apache HTTP server

Edit the /etc/httpd/conf/httpd.conf file and set the ServerName option to the controller node [around line 95]:

[root@controller ~]# echo 'ServerName controller' >> /etc/httpd/conf/httpd.conf # avoids the hostname lookup that slows httpd startup

Create a link to the /usr/share/keystone/wsgi-keystone.conf file in /etc/httpd/conf.d/:

[root@controller ~]# ln -s /usr/share/keystone/wsgi-keystone.conf /etc/httpd/conf.d/

[root@controller ~]# systemctl enable httpd.service;systemctl restart httpd.service

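7. Configure environment variables

Note: OS_TOKEN and OS_URL belong to the admin-token method of older releases such as Mitaka. Because the admin user was already created by keystone-manage bootstrap in step 5, the password-based variables in the admin-openrc file of step 10 can be used instead.

[root@controller ~]# export OS_TOKEN=ADMIN_TOKEN # authentication token

[root@controller ~]# export OS_URL=http://controller:5000/v3 # endpoint URL

[root@controller ~]# export OS_IDENTITY_API_VERSION=3 # Identity API version

8. Check the environment variables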

[root@controller keystone]# env | grep OS

HOSTNAME=controller

OS_IDENTITY_API_VERSION=3

OS_TOKEN=ADMIN_TOKEN

OS_URL=http://controller:5000/v3

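9. Create a domain, projects, users, and roles

## Note: keystone-manage bootstrap already creates the default domain, the admin project, and the admin user and role, so strictly only the service project still has to be created

1. Create the default domain: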

[root@controller keystone]# openstack domain create --description "Default Domain" default

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | Default Domain |

| enabled | True |

| id | 5d191921be13447ba77bd25aeaad3c01 |

| name | default |

| tags | [] |

+-------------+----------------------------------+

2. Create the administrative project, user, and role used for administrative operations in your environment:

(1) Create the admin project:

[root@controller keystone]# openstack project create --domain default --description "Admin Project" admin

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | Admin Project |

| domain_id | 5d191921be13447ba77bd25aeaad3c01 |

| enabled | True |

| id | 664c99b0582f452a9cd04b6847912e41 |

| is_domain | False |

| name | admin |

| parent_id | 5d191921be13447ba77bd25aeaad3c01 |

| tags | [] |

+-------------+----------------------------------+

(2) Create the admin user: // the official guide's --password-prompt option is replaced here with --password ADMIN_PASS

[root@controller keystone]# openstack user create --domain default --password ADMIN_PASS admin

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | 5d191921be13447ba77bd25aeaad3c01 |

| enabled | True |

| id | b8ee9f1c2b8640718f9628db33aad5f4 |

| name | admin |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

(3) Create the admin role:

[root@controller keystone]# openstack role create admin

+-----------+----------------------------------+

| Field | Value |

+-----------+----------------------------------+

| domain_id | None |

| id | 70c1f94f2edf4f6e9bdba9b7c3191a15 |

| name | admin |

+-----------+----------------------------------+

(4) Add the admin role to the admin project and user:

[root@controller keystone]# openstack role add --project admin --user admin admin

(5) This guide uses a service project that contains a unique user for each service you add to your environment. Create the service project:

[root@controller keystone]# openstack project create --domain default --description "Service Project" service

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | Service Project |

| domain_id | 5d191921be13447ba77bd25aeaad3c01 |

| enabled | True |

| id | b2e04f3a01eb4994a2990d9e75f8de11 |

| is_domain | False |

| name | service |

| parent_id | 5d191921be13447ba77bd25aeaad3c01 |

| tags | [] |

+-------------+----------------------------------+

10. Verify authentication

[root@controller ~]# vim admin-openrc

export OS_PROJECT_DOMAIN_NAME=default

export OS_USER_DOMAIN_NAME=default

export OS_PROJECT_NAME=admin

export OS_USERNAME=admin

export OS_PASSWORD=ADMIN_PASS

export OS_AUTH_URL=http://controller:5000/v3

export OS_IDENTITY_API_VERSION=3

export OS_IMAGE_API_VERSION=2

# Load the environment variables

[root@controller ~]# source admin-openrc

# Load them automatically at every login

[root@controller ~]# echo 'source /root/admin-openrc' >> /root/.bashrc

# Log out, then log back in

[root@controller ~]# logout

[root@controller ~]# openstack token issue

+------------+-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| Field | Value |

+------------+-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| expires | 2022-09-26T08:42:45+0000 |

| id | gAAAAABjMVf1pipTbv_5WNZHh3yhsAjfhRlFV8SQING_Ra_NT382uAUTOnYo1m0-VJMms8tP_ieSCCpavejPMqHphmj7Mvxw0jYjWwXHTY8lV69UeJt5SJPqCwtJ0wZJqlQkVzkicZI_QqXO3UyvBTTAvv19X5Q6GzXnhJMkk0rJ09CtrM1fPJI |

| project_id | 664c99b0582f452a9cd04b6847912e41 |

| user_id | b8ee9f1c2b8640718f9628db33aad5f4 |

+------------+-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+
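When `openstack token issue` fails, a common cause is that admin-openrc was not sourced in the current shell. The sketch below checks that the variables exported in admin-openrc are present before calling the client; `missing_os_vars` is an illustrative helper name.

```shell
# Print the name of every required OS_* variable that is unset or empty.
# Returns 0 when all are set, 1 otherwise.
missing_os_vars() {
    missing=0
    for name in OS_USERNAME OS_PASSWORD OS_PROJECT_NAME \
                OS_USER_DOMAIN_NAME OS_PROJECT_DOMAIN_NAME \
                OS_AUTH_URL OS_IDENTITY_API_VERSION; do
        # Indirect lookup of the variable whose name is stored in $name.
        eval "value=\${$name:-}"
        if [ -z "$value" ]; then
            echo "$name"
            missing=1
        fi
    done
    return "$missing"
}

# Usage (not executed here):
#   missing_os_vars && openstack token issue
```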

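III. Glance Image service

The OpenStack Image service is central to Infrastructure-as-a-Service (IaaS), as shown in the conceptual architecture (https://docs.openstack.org/mitaka/zh_CN/install-guide-rdo/common/get_started_image_service.html#id1). It accepts API requests for disk or server images, and metadata definitions from end users or OpenStack Compute components. It also supports storing disk or server images on various repository types, including OpenStack Object Storage.

A number of periodic processes run on the OpenStack Image service to support caching. Replication services ensure consistency and availability across the cluster. Other periodic processes include auditors, updaters, and reapers.

The OpenStack Image service includes the following components:

glance-api

Accepts Image API calls for image discovery, retrieval, and storage.

glance-registry

Stores, processes, and retrieves metadata about images. Metadata includes items such as size and type.

1. Prerequisites

Before you install and configure the Image service, you must create a database, service credentials, and API endpoints.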

(1) Create the database

[root@controller ~]# mysql -u root -p

MariaDB [(none)]> CREATE DATABASE glance;

Query OK, 1 row affected (0.00 sec)

MariaDB [(none)]> GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'172.16.1.160' IDENTIFIED BY 'GLANCE_DBPASS';

Query OK, 0 rows affected (0.00 sec)

MariaDB [(none)]> GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'%' IDENTIFIED BY 'GLANCE_DBPASS';

Query OK, 0 rows affected (0.00 sec)

MariaDB [(none)]> flush privileges;

Query OK, 0 rows affected (0.00 sec)

You can replace GLANCE_DBPASS with a suitable password.

(2) Create the user and assign a role

(I) Create the glance user:

[root@controller ~]# openstack user create --domain default --password GLANCE_PASS glance

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | 5d191921be13447ba77bd25aeaad3c01 |

| enabled | True |

| id | 821acc687c24458c9c643d5150fd266d |

| name | glance |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

(II) Add the admin role to the glance user and the service project:

[root@controller ~]# openstack role add --project service --user glance admin

(3) Create the service and register the API endpoints

(I) Create the glance service entity:

[root@controller ~]# openstack service create --name glance --description "OpenStack Image" image

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | OpenStack Image |

| enabled | True |

| id | 3bf4df103e5e42c5b507d67ed97921e8 |

| name | glance |

| type | image |

+-------------+----------------------------------+

(II) Create the Image service API endpoints:

[root@controller ~]# openstack endpoint create --region RegionOne image public http://controller:9292

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| enabled | True |

| id | 8e2877750e0b4398aa54628f5039ad65 |

| interface | public |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 3bf4df103e5e42c5b507d67ed97921e8 |

| service_name | glance |

| service_type | image |

| url | http://controller:9292 |

+--------------+----------------------------------+

[root@controller ~]# openstack endpoint create --region RegionOne image internal http://controller:9292

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| enabled | True |

| id | 3a1041640a1b4206aa094376c84d4148 |

| interface | internal |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 3bf4df103e5e42c5b507d67ed97921e8 |

| service_name | glance |

| service_type | image |

| url | http://controller:9292 |

+--------------+----------------------------------+

[root@controller ~]# openstack endpoint create --region RegionOne image admin http://controller:9292

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| enabled | True |

| id | a6b14642d90e467da07da6b68e8ebeae |

| interface | admin |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 3bf4df103e5e42c5b507d67ed97921e8 |

| service_name | glance |

| service_type | image |

| url | http://controller:9292 |

+--------------+----------------------------------+
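Each service in this guide registers the same URL three times, once per interface (public, internal, admin). As a sketch, the three commands can be generated in a loop; `endpoint_commands` is a hypothetical helper that only prints the commands so they can be reviewed first.

```shell
# Print the three `openstack endpoint create` commands for one service.
# usage: endpoint_commands SERVICE_TYPE URL [REGION]
endpoint_commands() {
    for iface in public internal admin; do
        echo "openstack endpoint create --region ${3:-RegionOne} $1 $iface $2"
    done
}

# Preview for the Image service. To execute the commands, pipe to sh:
#   endpoint_commands image http://controller:9292 | sh
endpoint_commands image http://controller:9292
```

The same helper would cover the Placement (port 8778) and Compute (port 8774) endpoints created later.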

2. Install and configure the components

(1) Install the package

[root@controller ~]# yum install openstack-glance -y

(2) Edit the /etc/glance/glance-api.conf file and complete the following actions:

[root@controller ~]# cp /etc/glance/glance-api.conf /etc/glance/glance-api.conf_bak

[root@controller ~]# egrep -v "^$|#" /etc/glance/glance-api.conf_bak > /etc/glance/glance-api.conf

[root@controller ~]# vim /etc/glance/glance-api.conf

In the [database] section, configure database access:

[database]

...

connection = mysql+pymysql://glance:GLANCE_DBPASS@controller/glance

Replace GLANCE_DBPASS with the password you chose for the Image service database.

In the [keystone_authtoken] and [paste_deploy] sections, configure Identity service access:

[keystone_authtoken]

...

www_authenticate_uri = http://controller:5000

auth_url = http://controller:5000

memcached_servers = controller:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = glance

password = GLANCE_PASS

[paste_deploy]

...

flavor = keystone

Replace GLANCE_PASS with the password you chose for the glance user in the Identity service.

In the [glance_store] section, configure the local file system store and the location of image files:

[glance_store]

...

stores = file,http

default_store = file

filesystem_store_datadir = /var/lib/glance/images/

(3) Edit the /etc/glance/glance-registry.conf file and complete the following actions:

[root@controller ~]# cp /etc/glance/glance-registry.conf /etc/glance/glance-registry.conf_bak

[root@controller ~]# egrep -v '^$|#' /etc/glance/glance-registry.conf_bak > /etc/glance/glance-registry.conf

[root@controller ~]# vim /etc/glance/glance-registry.conf

- In the [database] section, configure database access:

[database]

...

connection = mysql+pymysql://glance:GLANCE_DBPASS@controller/glance

- In the [keystone_authtoken] and [paste_deploy] sections, configure Identity service access:

[keystone_authtoken]

...

www_authenticate_uri = http://controller:5000

auth_url = http://controller:5000

memcached_servers = controller:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = glance

password = GLANCE_PASS

[paste_deploy]

...

flavor = keystone

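You can replace GLANCE_PASS with the password you chose for the glance user in the Identity service.

(4) Populate the Image service database:

su -s /bin/sh -c "glance-manage db_sync" glance

Verify:

mysql -u root -ppassword glance -e "show tables;"

3. Start the services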

[root@controller ~]# systemctl enable openstack-glance-api.service openstack-glance-registry.service

[root@controller ~]# systemctl start openstack-glance-api.service openstack-glance-registry.service

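4. Verify operation

(1) Download the source image:

[root@controller ~]# wget http://cdit-support.thundersoft.com/download/System_ISO/ubuntu18.04/ubuntu-18.04.5-live-server-amd64.iso

(2) Upload the image to the Image service using the bare container format and make it public so that every project can access it. Note that the downloaded file is an ISO installer image; the command below nevertheless labels it with the QCOW2 disk format, and the output shows that label recorded as given: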

[root@controller ~]# openstack image create "ubuntu18.04-server" --file ubuntu-18.04.5-live-server-amd64.iso --disk-format qcow2 --container-format bare --public

+------------------+--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| Field | Value |

+------------------+--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| checksum | fcd77cd8aa585da4061655045f3f0511 |

| container_format | bare |

| created_at | 2022-09-27T07:11:16Z |

| disk_format | qcow2 |

| file | /v2/images/348a05ba-8b07-41bd-8b90-d1af41e5783b/file |

| id | 348a05ba-8b07-41bd-8b90-d1af41e5783b |

| min_disk | 0 |

| min_ram | 0 |

| name | ubuntu18.04-server |

| owner | 664c99b0582f452a9cd04b6847912e41 |

| properties | os_hash_algo='sha512', os_hash_value='5320be1a41792ec35ac05cdd7f5203c4fa6406dcfd7ca4a79042aa73c5803596e66962a01aabb35b8e64a2e37f19f7510bffabdd4955cff040e8522ff5e1ec1e', os_hidden='False' |

| protected | False |

| schema | /v2/schemas/image |

| size | 990904320 |

| status | active |

| tags | |

| updated_at | 2022-09-27T07:11:28Z |

| virtual_size | None |

| visibility | public |

+------------------+--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+
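Because the upload above labels an .iso file as qcow2, it can help to check what a file really is before choosing `--disk-format` (Glance accepts `iso` as a disk format as well as `qcow2`). The sketch below inspects magic bytes: QCOW2 files start with the bytes `QFI\xfb`, and ISO 9660 images carry the string `CD001` at byte offset 32769. `detect_image_format` is an illustrative helper that recognizes only these two formats.

```shell
# Print "qcow2", "iso" or "unknown" for the given file, based on magic bytes.
detect_image_format() {
    # First four bytes as hex: 51 46 49 fb is the QCOW magic "QFI\xfb".
    magic="$(head -c 4 "$1" | od -An -tx1 | tr -d ' \n')"
    # Five bytes at offset 32769 as hex: 43 44 30 30 31 is "CD001".
    iso_id="$(dd if="$1" bs=1 skip=32769 count=5 2>/dev/null | od -An -tx1 | tr -d ' \n')"
    if [ "$magic" = "514649fb" ]; then
        echo qcow2
    elif [ "$iso_id" = "4344303031" ]; then
        echo iso
    else
        echo unknown
    fi
}

# Usage (not executed here):
#   detect_image_format ubuntu-18.04.5-live-server-amd64.iso
```

Where qemu-img is installed, `qemu-img info FILE` gives a more complete answer; the helper above only avoids that dependency.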

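IV. Placement installation

Placement tracks and monitors the usage of the various resources in OpenStack, such as compute, storage, and network resources, and keeps a record of how much of each is currently in use.

The Placement service was introduced inside the nova repository in the 14.0.0 Newton release and was extracted into its own placement repository in the 19.0.0 Stein release, which is when it became an independent component.

Placement provides a REST API stack and a data model for tracking the inventory and usage of different types of resources offered by resource providers. A resource provider can be a compute node, a shared storage pool, an IP allocation pool, and so on. For example, creating an instance consumes CPU and memory on a compute node, storage on a storage node, and an IP address on a network node. The types of consumed resources are tracked as classes. Placement supplies a set of standard resource classes (for example DISK_GB, MEMORY_MB, and VCPU) and lets you define custom resource classes when needed.

(I) Prerequisites

1. Create the database and grant access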

[root@controller ~]# mysql -u root -p

MariaDB [(none)]> CREATE DATABASE placement;

Query OK, 1 row affected (0.000 sec)

MariaDB [(none)]> GRANT ALL PRIVILEGES ON placement.* TO 'placement'@'172.16.1.160' IDENTIFIED BY 'PLACEMENT_DBPASS';

Query OK, 0 rows affected (0.000 sec)

MariaDB [(none)]> GRANT ALL PRIVILEGES ON placement.* TO 'placement'@'%' IDENTIFIED BY 'PLACEMENT_DBPASS';

Query OK, 0 rows affected (0.000 sec)

You can replace PLACEMENT_DBPASS with a suitable password.

2. Create the user and the API service endpoints

# Create the placement user

[root@controller ~]# openstack user create --domain default --password PLACEMENT_PASS placement

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | default |

| enabled | True |

| id | 3aa59a7790734af593f1e0f0bb544860 |

| name | placement |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

# Add the placement user to the service project with the admin role

[root@controller ~]# openstack role add --project service --user placement admin

# Create the placement service entity

[root@controller ~]# openstack service create --name placement --description "Placement API" placement

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | Placement API |

| enabled | True |

| id | dbb9e71a38584bedbda1ff318c38bdb2 |

| name | placement |

| type | placement |

+-------------+----------------------------------+

# Create the Placement API service endpoints

[root@controller ~]# openstack endpoint create --region RegionOne placement public http://controller:8778

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| enabled | True |

| id | b73f0c0eba1741fa8c20c3656278f3a9 |

| interface | public |

| region | RegionOne |

| region_id | RegionOne |

| service_id | dbb9e71a38584bedbda1ff318c38bdb2 |

| service_name | placement |

| service_type | placement |

| url | http://controller:8778 |

+--------------+----------------------------------+

[root@controller ~]# openstack endpoint create --region RegionOne placement internal http://controller:8778

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| enabled | True |

| id | 495bbf7aa8364210be166a39375d8121 |

| interface | internal |

| region | RegionOne |

| region_id | RegionOne |

| service_id | dbb9e71a38584bedbda1ff318c38bdb2 |

| service_name | placement |

| service_type | placement |

| url | http://controller:8778 |

+--------------+----------------------------------+

[root@controller ~]# openstack endpoint create --region RegionOne placement admin http://controller:8778

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| enabled | True |

| id | 68a8c3a4e3b94a9eb30921b01170982a |

| interface | admin |

| region | RegionOne |

| region_id | RegionOne |

| service_id | dbb9e71a38584bedbda1ff318c38bdb2 |

| service_name | placement |

| service_type | placement |

| url | http://controller:8778 |

+--------------+----------------------------------+

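(II) Install and configure the components

1. Install the package

[root@controller ~]# yum install openstack-placement-api -y

2. Edit the configuration file

Edit the /etc/placement/placement.conf file and complete the following actions: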

[root@controller ~]# cp /etc/placement/placement.conf /etc/placement/placement.conf_bak

[root@controller ~]# egrep -v "^$|#" /etc/placement/placement.conf_bak > /etc/placement/placement.conf

[root@controller ~]# vim /etc/placement/placement.conf

In the [placement_database] section, configure database access:

[placement_database]

# ...

connection = mysql+pymysql://placement:PLACEMENT_DBPASS@controller/placement

In the [api] and [keystone_authtoken] sections, configure Identity service access:

[api]

# ...

auth_strategy = keystone

[keystone_authtoken]

# ...

auth_url = http://controller:5000/v3

memcached_servers = controller:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = placement

password = PLACEMENT_PASS

3. Populate the database

[root@controller ~]# su -s /bin/sh -c "placement-manage db sync" placement

[root@controller ~]# mysql -uroot -ppassword placement -e "show tables;"

# Ignore any output messages

4. Restart the service

[root@controller ~]# systemctl restart httpd

(III) Verify operation

[root@controller ~]# placement-status upgrade check

+----------------------------------+

| Upgrade Check Results |

+----------------------------------+

| Check: Missing Root Provider IDs |

| Result: Success |

| Details: None |

+----------------------------------+

| Check: Incomplete Consumers |

| Result: Success |

| Details: None |

+----------------------------------+

V. Nova Compute service

(I) Controller node configuration

1. Database authorization

(1) Create the databases

[root@controller ~]# mysql -u root -p

# Create the nova_api, nova, and nova_cell0 databases:

MariaDB [(none)]> CREATE DATABASE nova_api;

MariaDB [(none)]> CREATE DATABASE nova;

MariaDB [(none)]> CREATE DATABASE nova_cell0;

# Grant proper access to the databases:

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'172.16.1.160' IDENTIFIED BY 'NOVA_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'%' IDENTIFIED BY 'NOVA_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'172.16.1.160' IDENTIFIED BY 'NOVA_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'%' IDENTIFIED BY 'NOVA_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'172.16.1.160' IDENTIFIED BY 'NOVA_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'%' IDENTIFIED BY 'NOVA_DBPASS';

MariaDB [(none)]> flush privileges;

Query OK, 0 rows affected (0.00 sec)

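You can replace NOVA_DBPASS with a suitable password.

(2) Source the admin credentials to gain access to admin-only CLI commands

. admin-openrc

(3) Create the user and assign a role

# Create the nova user: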

[root@controller ~]# openstack user create --domain default --password NOVA_PASS nova

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | default |

| enabled | True |

| id | f3e5c9439bf84f8cbb3a975eb852eb69 |

| name | nova |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

# Add the admin role to the nova user:

[root@controller ~]# openstack role add --project service --user nova admin

# Create the nova service entity:

[root@controller ~]# openstack service create --name nova --description "OpenStack Compute" compute

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | OpenStack Compute |

| enabled | True |

| id | ec3a89fcb73a4e0b9cf83f05372f78e8 |

| name | nova |

| type | compute |

+-------------+----------------------------------+

# List the endpoints

[root@controller ~]# openstack endpoint list

+----------------------------------+-----------+--------------+--------------+---------+-----------+----------------------------+

| ID | Region | Service Name | Service Type | Enabled | Interface | URL |

+----------------------------------+-----------+--------------+--------------+---------+-----------+----------------------------+

| 0a489b615f244b14ba1cbbeb915a5eb4 | RegionOne | glance | image | True | admin | http://controller:9292 |

| 1161fbff859d4ffe96756ed17e975267 | RegionOne | glance | image | True | internal | http://controller:9292 |

| 495bbf7aa8364210be166a39375d8121 | RegionOne | placement | placement | True | internal | http://controller:8778 |

| 68a8c3a4e3b94a9eb30921b01170982a | RegionOne | placement | placement | True | admin | http://controller:8778 |

| 81e21f9e5069492086f7368ae983b640 | RegionOne | glance | image | True | public | http://controller:9292 |

| a91af59e7fa448698db106f1c9d9178c | RegionOne | keystone | identity | True | internal | http://controller:5000/v3/ |

| b73f0c0eba1741fa8c20c3656278f3a9 | RegionOne | placement | placement | True | public | http://controller:8778 |

| b792451b1fc344bbb541f8a4a5b67b50 | RegionOne | keystone | identity | True | public | http://controller:5000/v3/ |

| d2fb160ceac747d1afef1c348db92812 | RegionOne | keystone | identity | True | admin | http://controller:5000/v3/ |

+----------------------------------+-----------+--------------+--------------+---------+-----------+----------------------------+

# Create the Compute service API endpoints:

[root@controller ~]# openstack endpoint create --region RegionOne compute public http://controller:8774/v2.1

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| enabled | True |

| id | 72aac47247aa4c62b719241487389fb4 |

| interface | public |

| region | RegionOne |

| region_id | RegionOne |

| service_id | ec3a89fcb73a4e0b9cf83f05372f78e8 |

| service_name | nova |

| service_type | compute |

| url | http://controller:8774/v2.1 |

+--------------+----------------------------------+

[root@controller ~]# openstack endpoint create --region RegionOne compute internal http://controller:8774/v2.1

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| enabled | True |

| id | a60b87e2f3c24cc3b2e078d114a7296a |

| interface | internal |

| region | RegionOne |

| region_id | RegionOne |

| service_id | ec3a89fcb73a4e0b9cf83f05372f78e8 |

| service_name | nova |

| service_type | compute |

| url | http://controller:8774/v2.1 |

+--------------+----------------------------------+

[root@controller ~]# openstack endpoint create --region RegionOne compute admin http://controller:8774/v2.1

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| enabled | True |

| id | 561b92669bb7487195d74956dd3e14b8 |

| interface | admin |

| region | RegionOne |

| region_id | RegionOne |

| service_id | ec3a89fcb73a4e0b9cf83f05372f78e8 |

| service_name | nova |

| service_type | compute |

| url | http://controller:8774/v2.1 |

+--------------+----------------------------------+

2. Install and configure the components

(1) Install the packages

[root@controller ~]# yum install openstack-nova-api openstack-nova-conductor openstack-nova-novncproxy openstack-nova-scheduler -y

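openstack-nova-api: accepts and responds to all Compute service requests and manages the instance lifecycle

openstack-nova-conductor: mediates database access, updating instance state in the database on behalf of the compute nodes

openstack-nova-novncproxy: web-based VNC proxy for operating instances directly

openstack-nova-scheduler: the scheduler, which decides which compute host an instance runs on

(2) Edit the /etc/nova/nova.conf file and complete the following actions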

[root@controller ~]# cp /etc/nova/nova.conf /etc/nova/nova.conf_bak

[root@controller ~]# egrep -v "^$|#" /etc/nova/nova.conf_bak > /etc/nova/nova.conf

[root@controller ~]# vim /etc/nova/nova.conf

- In the [DEFAULT] section, enable only the compute and metadata APIs:

[DEFAULT]

...

enabled_apis = osapi_compute,metadata

- In the [api_database] and [database] sections, configure database access:

[api_database]

...

connection = mysql+pymysql://nova:NOVA_DBPASS@controller/nova_api

[database]

...

connection = mysql+pymysql://nova:NOVA_DBPASS@controller/nova

You can replace NOVA_DBPASS with the password you chose for the Compute databases.

- In the [DEFAULT] section, configure RabbitMQ message queue access:

[DEFAULT]

...

transport_url = rabbit://openstack:RABBIT_PASS@controller:5672/

You can replace RABBIT_PASS with the password you chose for the openstack account in RabbitMQ.

- In the [api] and [keystone_authtoken] sections, configure Identity service access:

[api]

...

auth_strategy = keystone

[keystone_authtoken]

...

www_authenticate_uri = http://controller:5000/

auth_url = http://controller:5000/

memcached_servers = controller:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = nova

password = NOVA_PASS

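You can replace NOVA_PASS with the password you chose for the nova user in the Identity service.

- In the [DEFAULT] section, configure my_ip to use the management interface IP address of the controller node: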

[DEFAULT]

...

my_ip = 172.16.1.160

- In the [DEFAULT] section, enable support for the Networking service:

[DEFAULT]

...

use_neutron = true

firewall_driver = nova.virt.firewall.NoopFirewallDriver

- In the [vnc] section, configure the VNC proxy to use the management interface IP address of the controller node:

[vnc]

...

enabled = true

server_listen = $my_ip

server_proxyclient_address = $my_ip

- In the [glance] section, configure the location of the Image service API:

[glance]

...

api_servers = http://controller:9292

- In the [oslo_concurrency] section, configure the lock path:

[oslo_concurrency]

...

lock_path = /var/lib/nova/tmp

- In the [placement] section, configure access to the Placement service:

[placement]

# ...

region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://controller:5000/v3

username = placement

password = PLACEMENT_PASS

(3) Populate the Compute databases

# Populate the nova-api database

[root@controller ~]# su -s /bin/sh -c "nova-manage api_db sync" nova

# Register the cell0 database

[root@controller ~]# su -s /bin/sh -c "nova-manage cell_v2 map_cell0" nova

# Create the cell1 cell

[root@controller ~]# su -s /bin/sh -c "nova-manage cell_v2 create_cell --name=cell1 --verbose" nova

# Populate the nova database

[root@controller ~]# su -s /bin/sh -c "nova-manage db sync" nova

Note: ignore any output messages; you can log in to the database to check that the tables exist

# Verify that nova cell0 and cell1 are registered correctly:

[root@controller ~]# su -s /bin/sh -c "nova-manage cell_v2 list_cells" nova

+-------+--------------------------------------+------------------------------------------+-------------------------------------------------+----------+

| Name | UUID | Transport URL | Database Connection | Disabled |

+-------+--------------------------------------+------------------------------------------+-------------------------------------------------+----------+

| cell0 | 00000000-0000-0000-0000-000000000000 | none:/ | mysql+pymysql://nova:****@controller/nova_cell0 | False |

| cell1 | b3252455-b9dd-427d-9395-da68baeda7c5 | rabbit://openstack:****@controller:5672/ | mysql+pymysql://nova:****@controller/nova | False |

+-------+--------------------------------------+------------------------------------------+-------------------------------------------------+----------+
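The check above can be scripted. The following is a minimal sketch, assuming a hypothetical function named cells_registered that reads the list_cells table on standard input and succeeds only when both a cell0 row and a cell1 row are present.

```shell
# cells_registered: reads the `nova-manage cell_v2 list_cells` table on stdin.
# Hypothetical helper; exits 0 only if rows named cell0 and cell1 both exist.
cells_registered() {
    awk -F'|' '
        { name = $2; gsub(/[ \t]/, "", name) }   # second field is the Name column
        name == "cell0" { c0 = 1 }
        name == "cell1" { c1 = 1 }
        END { exit !(c0 && c1) }
    '
}

# Example (as root on the controller node):
# su -s /bin/sh -c "nova-manage cell_v2 list_cells" nova | cells_registered && echo "cells registered"
```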

3. Start the services

[root@controller ~]# systemctl enable openstack-nova-api.service openstack-nova-scheduler.service openstack-nova-conductor.service openstack-nova-novncproxy.service

[root@controller ~]# systemctl start openstack-nova-api.service openstack-nova-scheduler.service openstack-nova-conductor.service openstack-nova-novncproxy.service

(II) Compute node configuration

1. Install and configure components

(1) Install the packages

[root@compute1 ~]# yum install openstack-nova-compute -y

(2) Edit the /etc/nova/nova.conf file and complete the following actions

[root@compute1 ~]# cp /etc/nova/nova.conf /etc/nova/nova.conf_bak

[root@compute1 yum.repos.d]# egrep -v "^$|#" /etc/nova/nova.conf_bak > /etc/nova/nova.conf

[root@compute1 ~]# vim /etc/nova/nova.conf

- In the [DEFAULT] section, enable only the compute and metadata APIs:

[DEFAULT]

# ...

enabled_apis = osapi_compute,metadata

- In the [DEFAULT] section, configure RabbitMQ message queue access:

[DEFAULT]

# ...

transport_url = rabbit://openstack:RABBIT_PASS@controller

Replace RABBIT_PASS with the password you chose for the openstack account in RabbitMQ.

- In the [api] and [keystone_authtoken] sections, configure Identity service access:

[api]

# ...

auth_strategy = keystone

[keystone_authtoken]

# ...

www_authenticate_uri = http://controller:5000/

auth_url = http://controller:5000/

memcached_servers = controller:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = nova

password = NOVA_PASS

Replace NOVA_PASS with the password you chose for the nova user in the Identity service.

- In the [DEFAULT] section, configure the my_ip option:

[DEFAULT]

# ...

my_ip = 172.16.1.161

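Set my_ip to the IP address of the management network interface on your compute node (the MANAGEMENT_INTERFACE_IP_ADDRESS placeholder in the official guide, typically 10.0.0.31 for the first node in its example architecture). In this environment it is 172.16.1.161.

- In the [DEFAULT] section, enable support for the Networking service:

[DEFAULT]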

# ...

use_neutron = true

firewall_driver = nova.virt.firewall.NoopFirewallDriver

- In the [vnc] section, enable and configure remote console access:

[vnc]

# ...

enabled = true

server_listen = 0.0.0.0

server_proxyclient_address = $my_ip

novncproxy_base_url = http://172.16.1.160:6080/vnc_auto.html

- In the [glance] section, configure the location of the Image service API:

[glance]

# ...

api_servers = http://controller:9292

- In the [oslo_concurrency] section, configure the lock path:

[oslo_concurrency]

# ...

lock_path = /var/lib/nova/tmp

- In the [placement] section, configure the Placement API:

[placement]

# ...

region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://controller:5000/v3

username = placement

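password = PLACEMENT_PASS

Replace PLACEMENT_PASS with the password you chose for the placement user in the Identity service. Comment out any other options in the [placement] section.

2. Finalize installation

(1) Determine whether your compute node supports hardware acceleration for virtual machines

[root@compute1 ~]# egrep -c '(vmx|svm)' /proc/cpuinfo

2

If this command returns a value of one or greater, your compute node supports hardware acceleration and needs no additional configuration.

If this command returns zero, your compute node does not support hardware acceleration and you must configure libvirt to use QEMU instead of KVM. In that case, edit the [libvirt] section of /etc/nova/nova.conf as follows: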

[libvirt]

...

virt_type = qemu
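The decision above can be expressed as a small script. The following is a minimal sketch, assuming a hypothetical function named pick_virt_type; it applies the same vmx/svm count as the egrep command and prints the virt_type value to use.

```shell
# pick_virt_type [CPUINFO_FILE]
# Hypothetical helper: prints "kvm" when at least one line of the cpuinfo
# file mentions vmx or svm, and "qemu" otherwise.
pick_virt_type() {
    cpuinfo=${1:-/proc/cpuinfo}
    count=$(grep -Ec '(vmx|svm)' "$cpuinfo" 2>/dev/null)
    count=${count:-0}          # treat an unreadable file as "no acceleration"
    if [ "$count" -ge 1 ]; then
        echo kvm
    else
        echo qemu
    fi
}

# Example (on the compute node):
# pick_virt_type
```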

(2) Start the Compute service and its dependencies, and configure them to start automatically when the system boots

[root@compute1 ~]# systemctl enable libvirtd.service openstack-nova-compute.service

[root@compute1 ~]# systemctl start libvirtd.service openstack-nova-compute.service

(III) Verification

[root@controller ~]# openstack compute service list

+----+----------------+------------+----------+---------+-------+----------------------------+

| ID | Binary | Host | Zone | Status | State | Updated At |

+----+----------------+------------+----------+---------+-------+----------------------------+

| 1 | nova-conductor | controller | internal | enabled | up | 2022-10-10T09:18:59.000000 |

| 2 | nova-scheduler | controller | internal | enabled | up | 2022-10-10T09:19:03.000000 |

| 7 | nova-compute | compute1 | nova | enabled | up | 2022-10-10T09:19:04.000000 |

+----+----------------+------------+----------+---------+-------+----------------------------+

# List the API endpoints in the Identity service to verify connectivity with the Identity service:

[root@controller ~]# openstack catalog list

+-----------+-----------+-----------------------------------------+

| Name | Type | Endpoints |

+-----------+-----------+-----------------------------------------+

| glance | image | RegionOne |

| | | admin: http://controller:9292 |

| | | RegionOne |

| | | internal: http://controller:9292 |

| | | RegionOne |

| | | public: http://controller:9292 |

| | | |

| keystone | identity | RegionOne |

| | | internal: http://controller:5000/v3/ |

| | | RegionOne |

| | | public: http://controller:5000/v3/ |

| | | RegionOne |

| | | admin: http://controller:5000/v3/ |

| | | |

| placement | placement | RegionOne |

| | | internal: http://controller:8778 |

| | | RegionOne |

| | | admin: http://controller:8778 |

| | | RegionOne |

| | | public: http://controller:8778 |

| | | |

| nova | compute | RegionOne |

| | | admin: http://controller:8774/v2.1 |

| | | RegionOne |

| | | public: http://controller:8774/v2.1 |

| | | RegionOne |

| | | internal: http://controller:8774/v2.1 |

| | | |

+-----------+-----------+-----------------------------------------+

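VI. Networking service

OpenStack networking is provided by Neutron, an SDN (Software Defined Networking) component. It has a pluggable architecture that supports switches, firewalls, load balancers and similar devices, all defined in software, which allows fine-grained control over the whole cloud infrastructure.

Initial planning: the ens33 interface serves as the external network (in OpenStack terminology, the external network is often called the provider network) and also as the management network, which makes test access easier; in production the two should be separated. The ens37 interface carries the tenant network, that is, the VXLAN network, and ens38 carries the Ceph cluster network.

OpenStack networking can be deployed with either OVS or LinuxBridge. This guide uses LinuxBridge; the deployment steps are largely the same for both.

Services to start on the controller node: neutron-server.service, neutron-linuxbridge-agent.service, neutron-dhcp-agent.service, neutron-metadata-agent.service, neutron-l3-agent.service

(I) Controller node configuration

1. Prerequisites

(1) Create the database and grant privileges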

[root@controller ~]# mysql -u root -p

MariaDB [(none)]> CREATE DATABASE neutron;

Query OK, 1 row affected (0.00 sec)

MariaDB [(none)]> GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'172.16.1.160' IDENTIFIED BY 'NEUTRON_DBPASS';

Query OK, 0 rows affected (0.00 sec)

The GRANT statements give the neutron user proper access to the neutron database. Replace NEUTRON_DBPASS with a suitable password.

MariaDB [(none)]> GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'%' IDENTIFIED BY 'NEUTRON_DBPASS';

Query OK, 0 rows affected (0.00 sec)

MariaDB [(none)]> flush privileges;

Query OK, 0 rows affected (0.00 sec)
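The same database setup repeats for each service, so the SQL can be generated. The following is a minimal sketch, assuming a hypothetical function named service_db_sql; it prints the statements instead of running them so they can be reviewed first. The IF NOT EXISTS clause is an addition that the session above does not use.

```shell
# service_db_sql DB USER PASSWORD HOST...
# Hypothetical helper: prints CREATE DATABASE plus one GRANT per host pattern.
service_db_sql() {
    db=$1 user=$2 pass=$3
    shift 3
    echo "CREATE DATABASE IF NOT EXISTS ${db};"
    for host in "$@"; do
        echo "GRANT ALL PRIVILEGES ON ${db}.* TO '${user}'@'${host}' IDENTIFIED BY '${pass}';"
    done
    echo "FLUSH PRIVILEGES;"
}

# Example (on the controller node); review the output before piping it to mysql:
# service_db_sql neutron neutron NEUTRON_DBPASS 172.16.1.160 % | mysql -u root -p
```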

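(2) Source the admin credentials to gain access to admin-only CLI commands

[root@controller ~]# . admin-openrc ## load the environment variables

(3) Create the service credentials

- Create the neutron user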

[root@controller ~]# openstack user create --domain default --password NEUTRON_PASS neutron

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | default |

| enabled | True |

| id | 81c68ee930884059835110c1b31b305c |

| name | neutron |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

- Add the admin role to the neutron user

[root@controller ~]# openstack role add --project service --user neutron admin

- Create the neutron service entity

[root@controller ~]# openstack service create --name neutron --description "OpenStack Networking" network

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | OpenStack Networking |

| enabled | True |

| id | cac6a744698445a88092f67521973bc3 |

| name | neutron |

| type | network |

+-------------+----------------------------------+

- Create the Networking service API endpoints

[root@controller ~]# openstack endpoint create --region RegionOne network public http://controller:9696

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| enabled | True |

| id | 040aa02925db4ec2b9d3ee43d94352e2 |

| interface | public |

| region | RegionOne |

| region_id | RegionOne |

| service_id | cac6a744698445a88092f67521973bc3 |

| service_name | neutron |

| service_type | network |

| url | http://controller:9696 |

+--------------+----------------------------------+

[root@controller ~]# openstack endpoint create --region RegionOne network internal http://controller:9696

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| enabled | True |

| id | 92ccf1b6b687466493c2f05911510368 |

| interface | internal |

| region | RegionOne |

| region_id | RegionOne |

| service_id | cac6a744698445a88092f67521973bc3 |

| service_name | neutron |

| service_type | network |

| url | http://controller:9696 |

+--------------+----------------------------------+

[root@controller ~]# openstack endpoint create --region RegionOne network admin http://controller:9696

+--------------+----------------------------------+

| Field | Value |

+--------------+----------------------------------+

| enabled | True |

| id | 6a0ddef76c81405f99500f70739ce945 |

| interface | admin |

| region | RegionOne |

| region_id | RegionOne |

| service_id | cac6a744698445a88092f67521973bc3 |

| service_name | neutron |

| service_type | network |

| url | http://controller:9696 |

+--------------+----------------------------------+
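The three endpoint commands differ only in the interface name. The following is a minimal sketch, assuming a hypothetical function named endpoint_commands; it prints the commands rather than running them, so nothing is created until the output is passed to a shell.

```shell
# endpoint_commands SERVICE_TYPE URL [REGION]
# Hypothetical helper: prints one `openstack endpoint create` command per interface.
endpoint_commands() {
    service_type=$1 url=$2 region=${3:-RegionOne}
    for iface in public internal admin; do
        echo "openstack endpoint create --region ${region} ${service_type} ${iface} ${url}"
    done
}

# Example (on the controller node, after sourcing admin-openrc):
# endpoint_commands network http://controller:9696 | sh
```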

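2. Configure networking options

Choose one of the following two networking options.

I. Networking option 1: Provider networks

1. Install the components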

[root@controller ~]# yum install openstack-neutron openstack-neutron-ml2 openstack-neutron-linuxbridge ebtables -y

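openstack-neutron-linuxbridge: the Linux bridge agent, used to create bridged interfaces

ebtables: firewall rules

2. Configure the server component

Edit the /etc/neutron/neutron.conf file and complete the following actions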

[root@controller ~]# cp /etc/neutron/neutron.conf /etc/neutron/neutron.conf_bak

[root@controller ~]# egrep -v "^$|#" /etc/neutron/neutron.conf_bak > /etc/neutron/neutron.conf

[root@controller ~]# vim /etc/neutron/neutron.conf

- In the [database] section, configure database access:

[database]

...

connection = mysql+pymysql://neutron:NEUTRON_DBPASS@controller/neutron

- In the [DEFAULT] section, enable the ML2 plug-in and disable additional plug-ins:

[DEFAULT]

...

core_plugin = ml2

service_plugins =

- In the [DEFAULT] section, configure RabbitMQ message queue access:

[DEFAULT]

...

transport_url = rabbit://openstack:RABBIT_PASS@controller

Replace RABBIT_PASS with the password you chose for the openstack account in RabbitMQ.

- In the [DEFAULT] and [keystone_authtoken] sections, configure Identity service access:

[DEFAULT]

...

auth_strategy = keystone

[keystone_authtoken]

...

www_authenticate_uri = http://controller:5000

auth_url = http://controller:5000

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = neutron

password = NEUTRON_PASS

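Replace NEUTRON_PASS with the password you chose for the neutron user in the Identity service.

- In the [DEFAULT] and [nova] sections, configure Networking to notify Compute of network topology changes: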

[DEFAULT]

...

notify_nova_on_port_status_changes = True

notify_nova_on_port_data_changes = True

[nova]

...

auth_url = http://controller:5000

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = nova

password = NOVA_PASS

Replace NOVA_PASS with the password you chose for the nova user in the Identity service.

- In the [oslo_concurrency] section, configure the lock path:

[oslo_concurrency]

...

lock_path = /var/lib/neutron/tmp

3. Configure the Modular Layer 2 (ML2) plug-in

Edit the /etc/neutron/plugins/ml2/ml2_conf.ini file and complete the following actions

[root@controller ~]# cp /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugins/ml2/ml2_conf.ini_bak

[root@controller ~]# egrep -v "^$|#" /etc/neutron/plugins/ml2/ml2_conf.ini_bak > /etc/neutron/plugins/ml2/ml2_conf.ini

[root@controller ~]# vim /etc/neutron/plugins/ml2/ml2_conf.ini

- In the [ml2] section, enable flat and VLAN networks:

[ml2]

...

type_drivers = flat,vlan

- In the [ml2] section, disable self-service (private) networks:

[ml2]

...

tenant_network_types =

- In the [ml2] section, enable the Linux bridge mechanism:

[ml2]

...

mechanism_drivers = linuxbridge

- In the [ml2] section, enable the port security extension driver:

[ml2]

...

extension_drivers = port_security

- In the [ml2_type_flat] section, configure the provider virtual network as a flat network:

[ml2_type_flat]

...

flat_networks = provider

- In the [securitygroup] section, enable ipset to increase the efficiency of security group rules:

[securitygroup]

...

enable_ipset = True

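4. Configure the Linux bridge agent

The Linux bridge agent builds the layer-2 virtual networking infrastructure for instances and handles security group rules.

Edit the /etc/neutron/plugins/ml2/linuxbridge_agent.ini file and complete the following actions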

[root@controller ~]# cp /etc/neutron/plugins/ml2/linuxbridge_agent.ini /etc/neutron/plugins/ml2/linuxbridge_agent.ini_bak

[root@controller ~]# egrep -v "^$|#" /etc/neutron/plugins/ml2/linuxbridge_agent.ini_bak > /etc/neutron/plugins/ml2/linuxbridge_agent.ini

[root@controller ~]# vim /etc/neutron/plugins/ml2/linuxbridge_agent.ini

- In the [linux_bridge] section, map the provider virtual network to the provider physical network interface:

[linux_bridge]

physical_interface_mappings = provider:PROVIDER_INTERFACE_NAME

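Replace PROVIDER_INTERFACE_NAME with the name of the underlying provider physical network interface; in this environment it is ens33.

- In the [vxlan] section, disable VXLAN overlay networks: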

[vxlan]

enable_vxlan = False

- In the [securitygroup] section, enable security groups and configure the Linux bridge iptables firewall driver:

[securitygroup]

...

enable_security_group = True

firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

5. Edit the kernel configuration file /etc/sysctl.conf to make sure the kernel supports network bridge filters

[root@controller ~]# vi /etc/sysctl.conf

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

[root@controller ~]# modprobe br_netfilter

[root@controller ~]# sysctl -p

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

[root@controller ~]# sed -i '$amodprobe br_netfilter' /etc/rc.d/rc.local

[root@controller ~]# chmod +x /etc/rc.d/rc.local
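As an alternative to rc.local, the module and the two sysctl keys can be made persistent through the systemd modules-load.d and sysctl.d directories. This swaps in a different technique from the one used above. The following is a minimal sketch, assuming a hypothetical function named persist_br_netfilter and hypothetical file names (br_netfilter.conf and 99-bridge-nf.conf); the optional argument is an alternate root directory so the result can be inspected before touching the real system.

```shell
# persist_br_netfilter [ROOT_DIR]
# Hypothetical helper: writes the module name and the bridge sysctl keys into
# drop-in files that systemd reads at boot. ROOT_DIR defaults to the real root.
persist_br_netfilter() {
    root=${1:-}
    mkdir -p "$root/etc/modules-load.d" "$root/etc/sysctl.d" || return 1
    # one module name per line; loaded by systemd-modules-load at boot
    echo br_netfilter > "$root/etc/modules-load.d/br_netfilter.conf"
    # applied by systemd-sysctl at boot
    cat > "$root/etc/sysctl.d/99-bridge-nf.conf" <<'EOF'
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
EOF
}

# Example dry run into a scratch directory:
# persist_br_netfilter /tmp/br-test && find /tmp/br-test -type f
```

With this approach the modprobe line in rc.local is not needed; the modprobe and sysctl -p commands above are still required once to apply the settings without rebooting.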

6. Configure the DHCP agent

Edit the /etc/neutron/dhcp_agent.ini file and complete the following actions

[root@controller ~]# cp /etc/neutron/dhcp_agent.ini /etc/neutron/dhcp_agent.ini_bak

[root@controller ~]# egrep -v "^$|#" /etc/neutron/dhcp_agent.ini_bak > /etc/neutron/dhcp_agent.ini

[root@controller ~]# vim /etc/neutron/dhcp_agent.ini

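- In the [DEFAULT] section, configure the Linux bridge interface driver and the Dnsmasq DHCP driver, and enable isolated metadata so that instances on provider networks can access metadata over the network: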

[DEFAULT]

...

interface_driver = linuxbridge

dhcp_driver = neutron.agent.linux.dhcp.Dnsmasq

enable_isolated_metadata = true

II. Networking option 2: Self-service networks

1. Install the components

[root@controller ~]# yum install openstack-neutron openstack-neutron-ml2 openstack-neutron-linuxbridge ebtables -y

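openstack-neutron-linuxbridge: the Linux bridge agent, used to create bridged interfaces

ebtables: firewall rules

2. Configure the server component

Edit the /etc/neutron/neutron.conf file and complete the following actions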

[root@controller ~]# cp /etc/neutron/neutron.conf /etc/neutron/neutron.conf_bak

[root@controller ~]# egrep -v "^$|#" /etc/neutron/neutron.conf_bak > /etc/neutron/neutron.conf

[root@controller ~]# vim /etc/neutron/neutron.conf

- In the [database] section, configure database access:

[database]

...

connection = mysql+pymysql://neutron:NEUTRON_DBPASS@controller/neutron

- In the [DEFAULT] section, enable the ML2 plug-in, the router service, and overlapping IP addresses:

[DEFAULT]

...

core_plugin = ml2

service_plugins = router

allow_overlapping_ips = true

- In the [DEFAULT] section, configure RabbitMQ message queue access:

[DEFAULT]

...

transport_url = rabbit://openstack:RABBIT_PASS@controller

Replace RABBIT_PASS with the password you chose for the openstack account in RabbitMQ.

- In the [DEFAULT] and [keystone_authtoken] sections, configure Identity service access:

[DEFAULT]

...

auth_strategy = keystone

[keystone_authtoken]

...

www_authenticate_uri = http://controller:5000

auth_url = http://controller:5000

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = neutron

password = NEUTRON_PASS

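Replace NEUTRON_PASS with the password you chose for the neutron user in the Identity service.

- In the [DEFAULT] and [nova] sections, configure Networking to notify Compute of network topology changes: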

[DEFAULT]

...

notify_nova_on_port_status_changes = True

notify_nova_on_port_data_changes = True

[nova]

auth_url = http://controller:5000

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = nova

password = NOVA_PASS

Replace NOVA_PASS with the password you chose for the nova user in the Identity service.

- In the [oslo_concurrency] section, configure the lock path:

[oslo_concurrency]

...

lock_path = /var/lib/neutron/tmp

3. Configure the Modular Layer 2 (ML2) plug-in

Edit the /etc/neutron/plugins/ml2/ml2_conf.ini file and complete the following actions

[root@controller ~]# cp /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugins/ml2/ml2_conf.ini_bak

[root@controller ~]# egrep -v "^$|#" /etc/neutron/plugins/ml2/ml2_conf.ini_bak > /etc/neutron/plugins/ml2/ml2_conf.ini

[root@controller ~]# vim /etc/neutron/plugins/ml2/ml2_conf.ini

- In the [ml2] section, enable flat, VLAN, and VXLAN networks:

[ml2]

...

type_drivers = flat,vlan,vxlan

- In the [ml2] section, enable VXLAN self-service networks:

[ml2]

...

tenant_network_types = vxlan

- In the [ml2] section, enable the Linux bridge and layer-2 population mechanisms:

[ml2]

...

mechanism_drivers = linuxbridge,l2population

- In the [ml2] section, enable the port security extension driver:

[ml2]

...

extension_drivers = port_security

- In the [ml2_type_flat] section, configure the provider virtual network as a flat network:

[ml2_type_flat]

...

flat_networks = provider

- In the [ml2_type_vxlan] section, configure the VXLAN network identifier range for self-service networks:

[ml2_type_vxlan]

# ...

vni_ranges = 1:1000

- In the [securitygroup] section, enable ipset to increase the efficiency of security group rules:

[securitygroup]

...

enable_ipset = True

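4. Configure the Linux bridge agent

The Linux bridge agent builds the layer-2 virtual networking infrastructure for instances and handles security group rules.

Edit the /etc/neutron/plugins/ml2/linuxbridge_agent.ini file and complete the following actions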

[root@controller ~]# cp /etc/neutron/plugins/ml2/linuxbridge_agent.ini /etc/neutron/plugins/ml2/linuxbridge_agent.ini_bak

[root@controller ~]# egrep -v "^$|#" /etc/neutron/plugins/ml2/linuxbridge_agent.ini_bak > /etc/neutron/plugins/ml2/linuxbridge_agent.ini

[root@controller ~]# vim /etc/neutron/plugins/ml2/linuxbridge_agent.ini

- In the [linux_bridge] section, map the provider virtual network to the provider physical network interface:

[linux_bridge]

physical_interface_mappings = provider:PROVIDER_INTERFACE_NAME

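Replace PROVIDER_INTERFACE_NAME with the name of the underlying provider physical network interface; in this environment it is ens33.

- In the [vxlan] section, enable VXLAN overlay networks, configure the IP address of the physical network interface that handles overlay networks, and enable layer-2 population: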

[vxlan]

enable_vxlan = true

local_ip = OVERLAY_INTERFACE_IP_ADDRESS

l2_population = true

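Replace OVERLAY_INTERFACE_IP_ADDRESS with the IP address of the underlying physical network interface that handles overlay networks. The example architecture uses the management interface to tunnel traffic to the other nodes, so replace OVERLAY_INTERFACE_IP_ADDRESS with the management IP address of the controller node (10.10.10.1).

- In the [securitygroup] section, enable security groups and configure the Linux bridge iptables firewall driver: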

[securitygroup]

...

enable_security_group = True

firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver
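For the local_ip value in the [vxlan] section above, the address can be read from the interface instead of being typed by hand. The following is a minimal sketch, assuming a hypothetical function named first_ipv4 that parses the one-line output of `ip -4 -o addr show`; the interface name ens37 in the example is the tenant network interface from the planning notes and may differ on your hosts.

```shell
# first_ipv4: reads `ip -4 -o addr show ...` output on stdin.
# Hypothetical helper: prints the first IPv4 address without its prefix length.
first_ipv4() {
    awk '{
        for (i = 1; i < NF; i++)
            if ($i == "inet") {          # the address follows the "inet" keyword
                split($(i + 1), a, "/")  # drop the /prefix part
                print a[1]
                exit
            }
    }'
}

# Example (on the node being configured):
# ip -4 -o addr show dev ens37 | first_ipv4
```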

5. Edit the kernel configuration file /etc/sysctl.conf to make sure the kernel supports network bridge filters

[root@controller ~]# vim /etc/sysctl.conf

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

[root@controller ~]# modprobe br_netfilter

[root@controller ~]# sysctl -p

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

[root@controller ~]# sed -i '$amodprobe br_netfilter' /etc/rc.d/rc.local

[root@controller ~]# chmod +x /etc/rc.d/rc.local

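6. Configure the layer-3 agent

The layer-3 (L3) agent provides routing and NAT services for self-service virtual networks

Edit the /etc/neutron/l3_agent.ini file and complete the following actions: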

[root@controller neutron]# cp /etc/neutron/l3_agent.ini /etc/neutron/l3_agent.ini_bak

[root@controller neutron]# egrep -v "^$|#" /etc/neutron/l3_agent.ini_bak > /etc/neutron/l3_agent.ini

[root@controller neutron]# vim /etc/neutron/l3_agent.ini

- In the [DEFAULT] section, configure the Linux bridge interface driver:

[DEFAULT]

# ...

interface_driver = linuxbridge

7. Configure the DHCP agent

Edit the /etc/neutron/dhcp_agent.ini file and complete the following actions

[root@controller ~]# cp /etc/neutron/dhcp_agent.ini /etc/neutron/dhcp_agent.ini_bak

[root@controller ~]# egrep -v "^$|#" /etc/neutron/dhcp_agent.ini_bak > /etc/neutron/dhcp_agent.ini

[root@controller ~]# vim /etc/neutron/dhcp_agent.ini

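- In the [DEFAULT] section, configure the Linux bridge interface driver and the Dnsmasq DHCP driver, and enable isolated metadata so that instances on provider networks can access metadata over the network: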

[DEFAULT]

...

interface_driver = linuxbridge

dhcp_driver = neutron.agent.linux.dhcp.Dnsmasq

enable_isolated_metadata = true

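3. Configure the metadata agent

Purpose: the metadata agent provides configuration information, such as credentials, to instances

Edit the /etc/neutron/metadata_agent.ini file and complete the following actions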

[root@controller ~]# cp /etc/neutron/metadata_agent.ini /etc/neutron/metadata_agent.ini_bak

[root@controller ~]# egrep -v "^$|#" /etc/neutron/metadata_agent.ini_bak > /etc/neutron/metadata_agent.ini

[root@controller ~]# vim /etc/neutron/metadata_agent.ini

[DEFAULT]

...

nova_metadata_host = controller

metadata_proxy_shared_secret = METADATA_SECRET

Replace METADATA_SECRET with a suitable secret for the metadata proxy.
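One way to produce a value for METADATA_SECRET is to draw random bytes from the kernel. The following is a minimal sketch, assuming a hypothetical function named gen_secret; any sufficiently random string works, and the same value must later be used in the [neutron] section of nova.conf.

```shell
# gen_secret: hypothetical helper that prints 16 random bytes
# as 32 lowercase hexadecimal characters.
gen_secret() {
    head -c 16 /dev/urandom | od -An -tx1 | tr -d ' \n'
    echo
}

# Example:
# METADATA_SECRET=$(gen_secret)
```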

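4. Configure the Compute service to use the Networking service

Edit the /etc/nova/nova.conf file and complete the following actions

[root@controller ~]# vim /etc/nova/nova.conf

- In the [neutron] section, configure access parameters, enable the metadata proxy, and configure the secret: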

[neutron]

...

auth_url = http://controller:5000

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = neutron

password = NEUTRON_PASS

service_metadata_proxy = true

metadata_proxy_shared_secret = METADATA_SECRET

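Replace NEUTRON_PASS with the password you chose for the neutron user in the Identity service.

Replace METADATA_SECRET with the secret you chose for the metadata proxy.

5. Finalize installation

(1) The Networking service initialization scripts expect a symbolic link, /etc/neutron/plugin.ini, pointing to the ML2 plug-in configuration file, /etc/neutron/plugins/ml2/ml2_conf.ini. If this symbolic link does not exist, create it with the following command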

[root@controller ~]# ln -s /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini

(2) Populate the database

[root@controller ~]# su -s /bin/sh -c "neutron-db-manage --config-file /etc/neutron/neutron.conf --config-file /etc/neutron/plugins/ml2/ml2_conf.ini upgrade head" neutron

(3) Restart the Compute API service

[root@controller ~]# systemctl restart openstack-nova-api.service

(4) Start the Networking services and configure them to start when the system boots

- For both networking options:

[root@controller ~]# systemctl enable neutron-server.service neutron-linuxbridge-agent.service neutron-dhcp-agent.service neutron-metadata-agent.service

[root@controller ~]# systemctl start neutron-server.service neutron-linuxbridge-agent.service neutron-dhcp-agent.service neutron-metadata-agent.service

- For networking option 2, also start the layer-3 service and enable it to start when the system boots

[root@controller ~]# systemctl start neutron-l3-agent.service

[root@controller ~]# systemctl enable neutron-l3-agent.service

(II) Compute node configuration

1. Install the components

[root@compute1 ~]# yum install openstack-neutron-linuxbridge ebtables ipset -y

2. Configure the common component

The Networking common component configuration includes the authentication mechanism, the message queue, and the plug-in.

Edit the /etc/neutron/neutron.conf file and complete the following actions

[root@compute1 ~]# cp /etc/neutron/neutron.conf /etc/neutron/neutron.conf_bak

[root@compute1 ~]# egrep -v "^$|#" /etc/neutron/neutron.conf_bak > /etc/neutron/neutron.conf

[root@compute1 ~]# vim /etc/neutron/neutron.conf

- In the [DEFAULT] section, configure RabbitMQ message queue access:

[DEFAULT]

...

transport_url = rabbit://openstack:RABBIT_PASS@controller

Replace RABBIT_PASS with the password you chose for the openstack account in RabbitMQ.

- In the [DEFAULT] and [keystone_authtoken] sections, configure Identity service access:

[DEFAULT]

...

auth_strategy = keystone

[keystone_authtoken]

...

www_authenticate_uri = http://controller:5000

auth_url = http://controller:5000

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = neutron

password = NEUTRON_PASS

Replace NEUTRON_PASS with the password you chose for the neutron user in the Identity service.

- In the [oslo_concurrency] section, configure the lock path:

[oslo_concurrency]

...

lock_path = /var/lib/neutron/tmp

3. Configure networking options

(I) Networking option 1: Provider networks

The Linux bridge agent configuration is the same as on the controller node, so copy the file to the compute node

[root@compute1 ~]# scp -r root@controller:/etc/neutron/plugins/ml2/linuxbridge_agent.ini /etc/neutron/plugins/ml2/linuxbridge_agent.ini

Edit the kernel configuration file /etc/sysctl.conf to make sure the kernel supports network bridge filters

[root@compute1 ~]# vi /etc/sysctl.conf

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

[root@compute1 ~]# modprobe br_netfilter

[root@compute1 ~]# sysctl -p

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

[root@compute1 ~]# sed -i '$amodprobe br_netfilter' /etc/rc.d/rc.local

[root@compute1 ~]# chmod +x /etc/rc.d/rc.local

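(II) Networking option 2: Self-service networks

1. Configure the Linux bridge agent

The Linux bridge agent builds the layer-2 (bridging and switching) virtual networking infrastructure for instances and handles security groups.

Edit the /etc/neutron/plugins/ml2/linuxbridge_agent.ini file and complete the following actions

[root@compute1 ~]# cp /etc/neutron/plugins/ml2/linuxbridge_agent.ini /etc/neutron/plugins/ml2/linuxbridge_agent.ini_bak

[root@compute1 ~]# vim /etc/neutron/plugins/ml2/linuxbridge_agent.ini

- In the [linux_bridge] section, map the provider virtual network to the provider physical network interface: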

[linux_bridge]

physical_interface_mappings = provider:PROVIDER_INTERFACE_NAME

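Replace PROVIDER_INTERFACE_NAME with the name of the underlying provider physical network interface.

- In the [vxlan] section, enable VXLAN overlay networks, configure the IP address of the physical network interface that handles overlay networks, and enable layer-2 population: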

[vxlan]

enable_vxlan = true

local_ip = OVERLAY_INTERFACE_IP_ADDRESS

l2_population = true

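Replace OVERLAY_INTERFACE_IP_ADDRESS with the IP address of the underlying physical network interface that handles overlay networks. The example architecture uses the management interface to tunnel traffic to the other nodes, so replace OVERLAY_INTERFACE_IP_ADDRESS with the management IP address of the compute node.

- In the [securitygroup] section, enable security groups and configure the Linux bridge iptables firewall driver: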

[securitygroup]

# ...

enable_security_group = true

firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

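2. Edit the kernel configuration file /etc/sysctl.conf to make sure the kernel supports network bridge filters

[root@compute1 ~]# vim /etc/sysctl.conf

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

[root@compute1 ~]# modprobe br_netfilter

[root@compute1 ~]# sysctl -p

4. Configure the Compute service to use the Networking service

Edit the /etc/nova/nova.conf file and complete the following actions

[root@compute1 ~]# vim /etc/nova/nova.conf

- In the [neutron] section, configure access parameters: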

[neutron]

...

auth_url = http://controller:5000

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = neutron

password = NEUTRON_PASS

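Replace NEUTRON_PASS with the password you chose for the neutron user in the Identity service.

5. Finalize installation

- Restart the Compute service

[root@compute1 ~]# systemctl restart openstack-nova-compute.service

- Start the Linux bridge agent and configure it to start when the system boots

[root@compute1 ~]# systemctl enable neutron-linuxbridge-agent.service

[root@compute1 ~]# systemctl start neutron-linuxbridge-agent.service

(III) Verify operation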

[root@controller ~]# openstack network agent list

+--------------------------------------+--------------------+------------+-------------------+-------+-------+---------------------------+

| ID | Agent Type | Host | Availability Zone | Alive | State | Binary |

+--------------------------------------+--------------------+------------+-------------------+-------+-------+---------------------------+

| 02199446-fe60-4f56-a0f0-3ea6827f6891 | Linux bridge agent | compute1 | None | :-) | UP | neutron-linuxbridge-agent |

| 1d8812ec-4237-4d75-937c-40a9fac82c65 | Metadata agent | controller | None | :-) | UP | neutron-metadata-agent |

| 2cd47568-54a1-4bea-b2fa-bb1d1b2fe935 | L3 agent | controller | nova | :-) | UP | neutron-l3-agent |

| 533aa260-78f3-4391-b14c-4a1639eda135 | DHCP agent | controller | nova | :-) | UP | neutron-dhcp-agent |

| fd1c7b5c-21ad-4e47-967d-4625e66c3962 | Linux bridge agent | controller | None | :-) | UP | neutron-linuxbridge-agent |

+--------------------------------------+--------------------+------------+-------------------+-------+-------+---------------------------+
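The agent table above can be checked automatically. The following is a minimal sketch, assuming a hypothetical function named agents_down that reads the table on standard input, prints one line for every agent whose Alive column is not ":-)", and returns a non-zero status if it printed anything.

```shell
# agents_down: reads the `openstack network agent list` table on stdin.
# Hypothetical helper: prints "<Agent Type> on <Host>" for each agent that is
# not alive and exits 1 if any such agent was found.
agents_down() {
    awk -F'|' '
        NF >= 8 {
            # trim padding from the ID, Agent Type, Host, Zone and Alive columns
            for (i = 2; i <= 6; i++) gsub(/^[ \t]+|[ \t]+$/, "", $i)
            if ($2 != "ID" && $6 != ":-)") { print $3 " on " $4; bad = 1 }
        }
        END { exit (bad ? 1 : 0) }
    '
}

# Example (on the controller node, after sourcing admin-openrc):
# openstack network agent list | agents_down || echo "some agents are not alive"
```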

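(IV) Launch an instance (on the controller node)

1. Create virtual networks

- Provider network

1. Source the admin credentials to gain access to admin-only CLI commands

[root@controller ~]# . admin-openrc

2. Create the provider network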

[root@controller ~]# openstack network create --share --external --provider-physical-network provider --provider-network-type flat provider

+---------------------------+---------------------------------------------------------------------------------------------------------------------------------------------------------+

| Field | Value |

+---------------------------+---------------------------------------------------------------------------------------------------------------------------------------------------------+

| admin_state_up | UP |

| availability_zone_hints | |

| availability_zones | |

| created_at | 2022-10-17T10:13:25Z |

| description | |

| dns_domain | None |

| id | 3ae54d14-14e6-48a2-ab7d-10329ce9bb93 |

| ipv4_address_scope | None |

| ipv6_address_scope | None |

| is_default | False |

| is_vlan_transparent | None |

| location | cloud='', project.domain_id=, project.domain_name='Default', project.id='2c9db0df0c9d4543816a07cec1e4d5d5', project.name='admin', region_name='', zone= |

| mtu | 1500 |

| name | provider |

| port_security_enabled | True |

| project_id | 2c9db0df0c9d4543816a07cec1e4d5d5 |

| provider:network_type | flat |

| provider:physical_network | provider |

| provider:segmentation_id | None |

| qos_policy_id | None |

| revision_number | 1 |

| router:external | External |

| segments | None |

| shared | True |

| status | ACTIVE |

| subnets | |

| tags | |

| updated_at | 2022-10-17T10:13:25Z |

+---------------------------+---------------------------------------------------------------------------------------------------------------------------------------------------------+

3. Create a subnet

[root@controller ~]# openstack subnet create --network provider --allocation-pool start=172.16.1.220,end=172.16.1.240 --dns-nameserver 192.168.87.8 --gateway 172.16.1.2 --subnet-range 172.16.1.0/24 provider

+-------------------+---------------------------------------------------------------------------------------------------------------------------------------------------------+

| Field | Value |

+-------------------+---------------------------------------------------------------------------------------------------------------------------------------------------------+

| allocation_pools | 172.16.1.220-172.16.1.240 |

| cidr | 172.16.1.0/24 |

| created_at | 2022-10-17T10:16:10Z |

| description | |

| dns_nameservers | 192.168.87.8 |

| enable_dhcp | True |

| gateway_ip | 172.16.1.2 |

| host_routes | |

| id | eba80af0-8b35-4b5d-9e61-4a524579f631 |

| ip_version | 4 |

| ipv6_address_mode | None |

| ipv6_ra_mode | None |

| location | cloud='', project.domain_id=, project.domain_name='Default', project.id='2c9db0df0c9d4543816a07cec1e4d5d5', project.name='admin', region_name='', zone= |

| name | provider |

| network_id | 3ae54d14-14e6-48a2-ab7d-10329ce9bb93 |

| prefix_length | None |

| project_id | 2c9db0df0c9d4543816a07cec1e4d5d5 |

| revision_number | 0 |

| segment_id | None |

| service_types | |

| subnetpool_id | None |

| tags | |

| updated_at | 2022-10-17T10:16:10Z |

+-------------------+---------------------------------------------------------------------------------------------------------------------------------------------------------+
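The allocation pool and the gateway passed to the subnet command must fall inside the subnet range. The following is a minimal sketch, assuming two hypothetical functions named ip2int and in_cidr, that checks an IPv4 address against a CIDR block with shell arithmetic before the command is run.

```shell
# ip2int ADDRESS
# Hypothetical helper: prints a dotted-quad IPv4 address as an integer.
# The body runs in a subshell so the IFS change does not leak to the caller.
ip2int() (
    IFS=.
    set -f
    set -- $1
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
)

# in_cidr ADDRESS NETWORK/PREFIX
# Hypothetical helper: succeeds when ADDRESS lies inside the given CIDR block.
in_cidr() {
    ip=$(ip2int "$1")
    net=$(ip2int "${2%/*}")
    bits=${2#*/}
    mask=$(( (4294967295 << (32 - bits)) & 4294967295 ))
    [ $(( ip & mask )) -eq $(( net & mask )) ]
}

# Example with the values used for the provider subnet:
# in_cidr 172.16.1.220 172.16.1.0/24 && in_cidr 172.16.1.240 172.16.1.0/24 && echo "pool fits"
```

The DNS server address does not need to be inside the subnet range, so it is not part of this check.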

- Self-service network

1. Source the admin credentials to gain access to admin-only CLI commands

[root@controller ~]# . admin-openrc

2. Create the self-service network

[root@controller ~]# openstack network create selfservice

+---------------------------+---------------------------------------------------------------------------------------------------------------------------------------------------------+

| Field | Value |

+---------------------------+---------------------------------------------------------------------------------------------------------------------------------------------------------+

| admin_state_up | UP |

| availability_zone_hints | |

| availability_zones | |

| created_at | 2022-10-17T09:51:04Z |

| description | |

| dns_domain | None |

| id | 7bf4d5b8-7190-4e05-b3cb-201dae570c1d |

| ipv4_address_scope | None |

| ipv6_address_scope | None |

| is_default | False |

| is_vlan_transparent | None |

| location | cloud='', project.domain_id=, project.domain_name='Default', project.id='2c9db0df0c9d4543816a07cec1e4d5d5', project.name='admin', region_name='', zone= |

| mtu | 1450 |

| name | selfservice |

| port_security_enabled | True |

| project_id | 2c9db0df0c9d4543816a07cec1e4d5d5 |

| provider:network_type | vxlan |

| provider:physical_network | None |

| provider:segmentation_id | 1 |

| qos_policy_id | None |

| revision_number | 1 |

| router:external | Internal |

| segments | None |

| shared | False |

| status | ACTIVE |

| subnets | |

| tags | |

| updated_at | 2022-10-17T09:51:04Z |

+---------------------------+---------------------------------------------------------------------------------------------------------------------------------------------------------+

3. Create a subnet

[root@controller ~]# openstack subnet create --network selfservice --dns-nameserver 114.114.114.114 --gateway 10.10.10.254 --subnet-range 10.10.10.0/24 selfservice

+-------------------+---------------------------------------------------------------------------------------------------------------------------------------------------------+

| Field | Value |

+-------------------+---------------------------------------------------------------------------------------------------------------------------------------------------------+

| allocation_pools | 10.10.10.1-10.10.10.253 |

| cidr | 10.10.10.0/24 |

| created_at | 2022-10-17T10:03:05Z |

| description | |

| dns_nameservers | 114.114.114.114 |

| enable_dhcp | True |

| gateway_ip | 10.10.10.254 |

| host_routes | |

| id | d5898751-981c-40e2-8e1b-bbe9812cdbf6 |

| ip_version | 4 |

| ipv6_address_mode | None |

| ipv6_ra_mode | None |

| location | cloud='', project.domain_id=, project.domain_name='Default', project.id='2c9db0df0c9d4543816a07cec1e4d5d5', project.name='admin', region_name='', zone= |

| name | selfservice |

| network_id | 7bf4d5b8-7190-4e05-b3cb-201dae570c1d |

| prefix_length | None |

| project_id | 2c9db0df0c9d4543816a07cec1e4d5d5 |

| revision_number | 0 |

| segment_id | None |

| service_types | |

| subnetpool_id | None |

| tags | |

| updated_at | 2022-10-17T10:03:05Z |

+-------------------+---------------------------------------------------------------------------------------------------------------------------------------------------------+
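The gateway address normally lies inside the subnet range, and the allocation pool is derived from what remains. The following is a minimal sketch of a sanity check for that relationship in plain shell arithmetic. The helper names `ip_to_int` and `in_cidr` are hypothetical and are not part of the OpenStack client.

```shell
# Hypothetical helpers (not part of any OpenStack tool).

# Convert a dotted IPv4 address to a 32-bit integer.
ip_to_int() {
  local IFS=.
  set -- $1          # split the address on dots into $1..$4
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Succeed (exit 0) if address $1 falls inside CIDR block $2.
in_cidr() {
  local ip=$1 net=${2%/*} bits=${2#*/}
  local mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  [ $(( $(ip_to_int "$ip") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
}
```

Assuming admin credentials are loaded, the values can be read from the cloud with `openstack subnet show selfservice -f value -c gateway_ip` and `openstack subnet show selfservice -f value -c cidr`, then passed to `in_cidr` in that order.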

4. Create a router

[root@controller ~]# openstack router create router

+-------------------------+---------------------------------------------------------------------------------------------------------------------------------------------------------+

| Field | Value |

+-------------------------+---------------------------------------------------------------------------------------------------------------------------------------------------------+

| admin_state_up | UP |

| availability_zone_hints | |

| availability_zones | |

| created_at | 2022-10-17T10:04:49Z |

| description | |

| distributed | False |

| external_gateway_info | null |

| flavor_id | None |

| ha | False |

| id | 33c7f8f1-7798-49fc-a3d5-83786a70819b |

| location | cloud='', project.domain_id=, project.domain_name='Default', project.id='2c9db0df0c9d4543816a07cec1e4d5d5', project.name='admin', region_name='', zone= |

| name | router |

| project_id | 2c9db0df0c9d4543816a07cec1e4d5d5 |

| revision_number | 1 |

| routes | |

| status | ACTIVE |

| tags | |

| updated_at | 2022-10-17T10:04:49Z |

+-------------------------+---------------------------------------------------------------------------------------------------------------------------------------------------------+

5. Add the self-service subnet as an interface on the router

[root@controller ~]# openstack router add subnet router selfservice

6. Set a gateway on the provider network for the router

[root@controller ~]# openstack router set router --external-gateway provider

2. Verify operation

1. List the network namespaces. You should see one 'qrouter' namespace and two 'qdhcp' namespaces (one per network: provider and selfservice).

[root@controller ~]# ip netns

qdhcp-3ae54d14-14e6-48a2-ab7d-10329ce9bb93 (id: 2)

qrouter-33c7f8f1-7798-49fc-a3d5-83786a70819b (id: 1)

qdhcp-7bf4d5b8-7190-4e05-b3cb-201dae570c1d (id: 0)
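The expected counts (one qrouter, two qdhcp) can also be checked mechanically. Below is a small sketch that counts namespaces by prefix. The function name `count_netns` is hypothetical, and it only assumes that each line of `ip netns` output starts with the namespace name, as in the listing above.

```shell
# Hypothetical helper: count namespaces whose name starts with "<prefix>-".
# Reads `ip netns` style output on stdin and prints the count.
count_netns() {
  # grep -c exits non-zero when nothing matches; it still prints 0.
  grep -c "^$1-" || true
}
```

Usage on the controller would be `ip netns | count_netns qdhcp` and `ip netns | count_netns qrouter`.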

2. List the ports on the router to determine the gateway IP address on the provider network

[root@controller ~]# neutron router-port-list router

neutron CLI is deprecated and will be removed in the future. Use openstack CLI instead.

+--------------------------------------+------+----------------------------------+-------------------+-------------------------------------------------------------------------------------+

| id | name | tenant_id | mac_address | fixed_ips |

+--------------------------------------+------+----------------------------------+-------------------+-------------------------------------------------------------------------------------+

| 90943cea-ee27-42a3-9b4f-6b6a8f4c70ab | | 2c9db0df0c9d4543816a07cec1e4d5d5 | fa:16:3e:f3:31:ec | {"subnet_id": "d5898751-981c-40e2-8e1b-bbe9812cdbf6", "ip_address": "10.10.10.254"} |

| ae5858c5-03a1-4a51-9d8e-71d0d74b7900 | | | fa:16:3e:3b:60:2f | {"subnet_id": "eba80af0-8b35-4b5d-9e61-4a524579f631", "ip_address": "172.16.1.236"} |

+--------------------------------------+------+----------------------------------+-------------------+-------------------------------------------------------------------------------------+
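As the warning in the output says, the neutron CLI is deprecated. The unified client offers `openstack port list --router <router>` for the same listing. The sketch below wraps it to extract only the gateway address. The function name `router_gateway_ip` is hypothetical, and the code assumes the gateway port carries the device owner `network:router_gateway` and has an IPv4 address. Because the text layout of the "Fixed IP Addresses" column differs between client versions, the address is picked out with a generic IPv4 pattern instead of relying on one layout.

```shell
# Hypothetical helper: print the IPv4 address of a router's external gateway port.
# Assumes admin credentials are already loaded in the environment.
router_gateway_ip() {
  openstack port list --router "$1" \
      --device-owner network:router_gateway \
      -f value -c "Fixed IP Addresses" |
    grep -oE '([0-9]{1,3}\.){3}[0-9]{1,3}' |
    head -n 1
}
```

The result can be used to test reachability from the controller or any host on the provider network, for example `ping -c 4 "$(router_gateway_ip router)"`.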

3. Create a flavor

[root@controller ~]# openstack flavor create --id 0 --vcpus 2 --ram 512 --disk 1 m1.nano

+----------------------------+---------+

| Field | Value |

+----------------------------+---------+

| OS-FLV-DISABLED:disabled | False |

| OS-FLV-EXT-DATA:ephemeral | 0 |

| disk | 1 |

| id | 0 |

| name | m1.nano |

| os-flavor-access:is_public | True |

| properties | |

| ram | 512 |

| rxtx_factor | 1.0 |

| swap | |

| vcpus | 2 |

+----------------------------+---------+

4. Generate a key pair

# Generate a key pair and add the public key

[root@controller ~]# ssh-keygen -q -N ""

[root@controller ~]# openstack keypair create --public-key ~/.ssh/id_rsa.pub mykey

+-------------+-------------------------------------------------+

| Field | Value |

+-------------+-------------------------------------------------+

| fingerprint | 78:21:ea:39:d0:e0:a0:12:26:55:5e:50:62:cb:f4:78 |

| name | mykey |

| user_id | 58126687cbcc4888bfa9ab73a2256f27 |

+-------------+-------------------------------------------------+

# Verify that the public key was added

[root@controller ~]# openstack keypair list

+-------+-------------------------------------------------+

| Name | Fingerprint |

+-------+-------------------------------------------------+

| mykey | 78:21:ea:39:d0:e0:a0:12:26:55:5e:50:62:cb:f4:78 |

+-------+-------------------------------------------------+

5. Add security group rules

Add rules to the default security group.

Permit ICMP (ping):

[root@controller ~]# openstack security group rule create --proto icmp default

Permit secure shell (SSH) access:

[root@controller ~]# openstack security group rule create --proto tcp --dst-port 22 default
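With admin credentials, the name `default` may match more than one security group, because every project has its own (the security group list in the next step shows two). In that case the client may refuse the name as ambiguous, and passing the group ID avoids the problem. The following is a sketch only. The function name `default_sg_id` is hypothetical, and it assumes the `--project` filter of `openstack security group list` is available in your client version.

```shell
# Hypothetical helper: print the ID of the "default" security group of a project.
# $1 is a project name or ID; assumes admin credentials are loaded.
default_sg_id() {
  openstack security group list --project "$1" -f value -c ID -c Name |
    awk '$2 == "default" { print $1 }'
}
```

The rules above could then be created against the ID, for example `openstack security group rule create --proto icmp "$(default_sg_id admin)"`.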

6. Launch an instance

- List flavors

[root@controller ~]# openstack flavor list

+----+---------+-----+------+-----------+-------+-----------+

| ID | Name | RAM | Disk | Ephemeral | VCPUs | Is Public |

+----+---------+-----+------+-----------+-------+-----------+

| 0 | m1.nano | 512 | 1 | 0 | 2 | True |

+----+---------+-----+------+-----------+-------+-----------+

- List available images

[root@controller ~]# openstack image list

+--------------------------------------+-----------------------+--------+

| ID | Name | Status |

+--------------------------------------+-----------------------+--------+

| d8e30d01-3b95-4ec7-9b22-785cd0076ae4 | cirros | active |

| f09fe2d3-5a6e-4169-926b-cb13cd5e6018 | ubuntu-18.04-server | active |

| cae84d10-2034-4f8b-8ae0-3d0115d90a68 | ubuntu2004-01Snapshot | active |

| 2ada7482-a406-487e-a9b3-d7bd235fe29f | vm5 | active |

+--------------------------------------+-----------------------+--------+

- List security groups

[root@controller ~]# openstack security group list

+--------------------------------------+---------+------------------------+----------------------------------+------+

| ID | Name | Description | Project | Tags |

+--------------------------------------+---------+------------------------+----------------------------------+------+

| 9fa1a67a-1d4e-41ca-a86a-b0ed03a06c37 | default | Default security group | 2c9db0df0c9d4543816a07cec1e4d5d5 | [] |

| a3258f5d-039b-4ece-ba94-aa95a2ea82f4 | default | Default security group | 9c18512ba8d241619aef8a8018d25587 | [] |

+--------------------------------------+---------+------------------------+----------------------------------+------+

- List available networks

[root@controller ~]# openstack network list

+--------------------------------------+-------------+--------------------------------------+

| ID | Name | Subnets |

+--------------------------------------+-------------+--------------------------------------+

| 3ae54d14-14e6-48a2-ab7d-10329ce9bb93 | provider | eba80af0-8b35-4b5d-9e61-4a524579f631 |