A Simple Spark GraphX Graph Computation Example [Code Implementation, Source Analysis]

I. Introduction

Reference: https://www.cnblogs.com/yszd/p/10186556.html

II. Code Implementation

package big.data.analyse.graphx

import org.apache.log4j.{Level, Logger}

import org.apache.spark.graphx._

import org.apache.spark.rdd.RDD

import org.apache.spark.sql.SparkSession

class VertexProperty()

case class UserProperty(name: String) extends VertexProperty

case class ProductProperty(name: String, price: Double) extends VertexProperty

/*class Graph[VD, ED]{

val vertices: VertexRDD[VD]

val edges: EdgeRDD[ED]

}*/

/**

* Created by zhen on 2019/10/4.

*/

object GraphXTest {

/**

* Set the log level

*/

Logger.getLogger("org").setLevel(Level.WARN)

def main(args: Array[String]): Unit = {

val spark = SparkSession.builder().appName("GraphXTest").master("local[2]").getOrCreate()

val sc = spark.sparkContext

/**

* Create the RDD of vertices

*/

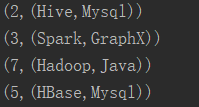

val users : RDD[(VertexId, (String, String))] = sc.parallelize(

Array((3L, ("Spark", "GraphX")), (7L, ("Hadoop", "Java")),

(5L, ("HBase", "Mysql")), (2L, ("Hive", "Mysql"))))

/**

* Create the RDD of edges

*/

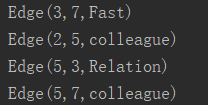

val relationships: RDD[Edge[String]] = sc.parallelize(

Array(Edge(3L, 7L, "Fast"), Edge(5L, 3L, "Relation"),

Edge(2L, 5L, "colleague"), Edge(5L, 7L, "colleague")))

/**

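* Define the default user (assigned to any vertex that an edge references but the vertex RDD does not contain)

*/

val defaultUser = ("Machical", "Missing")

/**

* Build the initial graph

*/

val graph = Graph(users, relationships, defaultUser)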

/**

* Use the triplet view to present the relationships between vertices

*/

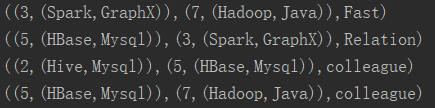

val facts : RDD[String] = graph.triplets.map(triplet =>

triplet.srcAttr._1 + " is the " + triplet.attr + " with " + triplet.dstAttr._1)

facts.collect().foreach(println)

graph.vertices.foreach(println) // vertices

graph.edges.foreach(println) // edges

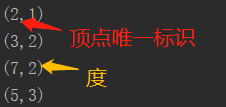

graph.ops.degrees.foreach(println) // degree of each vertex

graph.triplets.foreach(println) // vertices, edges, and relationships

println(graph.ops.numEdges) // number of edges

println(graph.ops.numVertices) // number of vertices

}

}
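The role of the default user in `Graph(users, relationships, defaultUser)` is easiest to see with an edge that points at a vertex missing from the vertex RDD. The following is a minimal sketch, not part of the original program: the object name `DefaultVertexSketch`, the method `missingVertexAttr`, and vertex 9 are hypothetical and introduced only for illustration.

```scala
import org.apache.spark.graphx.{Edge, Graph, VertexId}
import org.apache.spark.rdd.RDD
import org.apache.spark.sql.SparkSession

object DefaultVertexSketch {

  // Returns the attribute GraphX assigns to vertex 9, which appears only in an edge.
  def missingVertexAttr(spark: SparkSession): (String, String) = {
    val sc = spark.sparkContext

    val users: RDD[(VertexId, (String, String))] =
      sc.parallelize(Seq((3L, ("Spark", "GraphX")), (7L, ("Hadoop", "Java"))))

    // Vertex 9 is hypothetical: an edge references it, but `users` does not contain it.
    val relationships: RDD[Edge[String]] =
      sc.parallelize(Seq(Edge(3L, 7L, "Fast"), Edge(7L, 9L, "colleague")))

    val defaultUser = ("Machical", "Missing")
    val graph = Graph(users, relationships, defaultUser)

    // The graph now has three vertices; vertex 9 carries the default attribute.
    graph.vertices.filter { case (id, _) => id == 9L }.first()._2
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("DefaultVertexSketch").master("local[2]").getOrCreate()
    println(missingVertexAttr(spark)) // prints (Machical,Missing)
    spark.stop()
  }
}
```

In the article's own data every edge endpoint is present in `users`, so the default user is never actually used there.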

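III. Results

1. Triplet view (relationship sentences)

2. Vertices

3. Edges

4. Degree of each vertex

5. Triplets (vertices, edges, and relationships)

6. Number of edges / vertices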
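Calling `foreach(println)` on an RDD runs on the executors, so the print order is not guaranteed, and on a cluster the output goes to the executor logs rather than the driver console. A minimal sketch of one way to get stable, driver-side output for the same graph is to collect and sort first. The object and method names here (`SortedOutputSketch`, `buildGraph`, `sortedDegrees`) are hypothetical; the data is copied from the program above.

```scala
import org.apache.spark.graphx.{Edge, Graph, VertexId}
import org.apache.spark.sql.SparkSession

object SortedOutputSketch {

  // Rebuilds the article's graph (same vertices, edges, and default user).
  def buildGraph(spark: SparkSession): Graph[(String, String), String] = {
    val sc = spark.sparkContext
    val users = sc.parallelize(Seq[(VertexId, (String, String))](
      (3L, ("Spark", "GraphX")), (7L, ("Hadoop", "Java")),
      (5L, ("HBase", "Mysql")), (2L, ("Hive", "Mysql"))))
    val relationships = sc.parallelize(Seq(
      Edge(3L, 7L, "Fast"), Edge(5L, 3L, "Relation"),
      Edge(2L, 5L, "colleague"), Edge(5L, 7L, "colleague")))
    Graph(users, relationships, ("Machical", "Missing"))
  }

  // Collects the (vertexId, degree) pairs to the driver and sorts them by vertex id.
  def sortedDegrees(graph: Graph[(String, String), String]): Seq[(VertexId, Int)] =
    graph.degrees.collect().sortBy(_._1).toSeq

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("SortedOutputSketch").master("local[2]").getOrCreate()
    val graph = buildGraph(spark)

    // Degrees follow from the four edges 3->7, 5->3, 2->5, 5->7:
    // (2,1), (3,2), (5,3), (7,2)
    sortedDegrees(graph).foreach(println)

    println(graph.numEdges)    // 4
    println(graph.numVertices) // 4
    spark.stop()
  }
}
```

Collecting is only reasonable for small graphs such as this one; for large graphs, `take(n)` or writing to storage is the usual alternative.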

IV. Source Analysis

class Graph[VD, ED] {

// Information about the Graph

val numEdges: Long

val numVertices:Long

val inDegrees: VertexRDD[Int]

val outDegrees: VertexRDD[Int]

val degrees: VertexRDD[Int]

// Views of the graph as collections

val vertices: VertexRDD[VD]

val edges: EdgeRDD[ED]

val triplets: RDD[EdgeTriplet[VD,ED]]

//Functions for caching graphs

def persist(newLevel: StorageLevel = StorageLevel.MEMORY_ONLY): Graph[VD, ED] // the default storage level is MEMORY_ONLY

def cache(): Graph[VD, ED]

def unpersistVertices(blocking: Boolean = true): Graph[VD, ED]

// Change the partitioning heuristic

def partitionBy(partitionStrategy: PartitionStrategy): Graph[VD, ED]

// Transform vertex and edge attributes

def mapVertices[VD2](map: (VertexId, VD) => VD2): Graph[VD2, ED]

def mapEdges[ED2](map: Edge[ED] => ED2): Graph[VD, ED2]

def mapEdges[ED2](map: (PartitionID, Iterator[Edge[ED]]) => Iterator[ED2]): Graph[VD, ED2]

def mapTriplets[ED2](map: EdgeTriplet[VD, ED] => ED2): Graph[VD, ED2]

def mapTriplets[ED2](map: (PartitionID, Iterator[EdgeTriplet[VD, ED]]) => Iterator[ED2]): Graph[VD, ED2]

// Modify the graph structure

def reverse: Graph[VD, ED]

def subgraph(epred: EdgeTriplet[VD,ED] => Boolean,vpred: (VertexId, VD) => Boolean): Graph[VD, ED]

def mask[VD2, ED2](other: Graph[VD2, ED2]): Graph[VD, ED] // returns the subgraph common to this graph and the other graph

def groupEdges(merge: (ED, ED) => ED): Graph[VD,ED]

// Join RDDs with the graph

def joinVertices[U](table: RDD[(VertexId, U)])(mapFunc: (VertexId, VD, U) => VD): Graph[VD, ED]

def outerJoinVertices[U, VD2](other: RDD[(VertexId, U)])(mapFunc: (VertexId, VD, Option[U]) => VD2): Graph[VD2, ED]

// Aggregate information about adjacent triplets

def collectNeighborIds(edgeDirection: EdgeDirection): VertexRDD[Array[VertexId]]

def collectNeighbors(edgeDirection: EdgeDirection): VertexRDD[Array[(VertexId, VD)]]

def aggregateMessages[Msg: ClassTag](sendMsg: EdgeContext[VD, ED, Msg] => Unit, mergeMsg: (Msg, Msg) => Msg, tripletFields: TripletFields = TripletFields.All): VertexRDD[Msg]

//Iterative graph-parallel computation

def pregel[A](initialMsg: A, maxIterations: Int, activeDirection: EdgeDirection)(vprog: (VertexId, VD, A) => VD, sendMsg: EdgeTriplet[VD, ED] => Iterator[(VertexId, A)], mergeMsg: (A, A) => A): Graph[VD, ED]

// Basic graph algorithms

def pageRank(tol: Double, resetProb: Double = 0.15): Graph[Double, Double]

def connectedComponents(): Graph[VertexId, ED]

def triangleCount(): Graph[Int, ED]

def stronglyConnectedComponents(numIter: Int): Graph[VertexId, ED]

}
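Three of the operators listed above can be tried on the example graph from section II. This is a minimal sketch under stated assumptions: the object `OperatorSketch` and its method names are hypothetical, and the graph is rebuilt from the article's data. It applies `mapVertices` to change the vertex attribute type, `subgraph` to filter edges, and `aggregateMessages` to recompute the in-degree by sending a `1` along every edge.

```scala
import org.apache.spark.graphx.{Edge, Graph, VertexId}
import org.apache.spark.sql.SparkSession

object OperatorSketch {

  type UserGraph = Graph[(String, String), String]

  // Rebuilds the article's graph (same vertices, edges, and default user).
  def buildGraph(spark: SparkSession): UserGraph = {
    val sc = spark.sparkContext
    val users = sc.parallelize(Seq[(VertexId, (String, String))](
      (3L, ("Spark", "GraphX")), (7L, ("Hadoop", "Java")),
      (5L, ("HBase", "Mysql")), (2L, ("Hive", "Mysql"))))
    val relationships = sc.parallelize(Seq(
      Edge(3L, 7L, "Fast"), Edge(5L, 3L, "Relation"),
      Edge(2L, 5L, "colleague"), Edge(5L, 7L, "colleague")))
    Graph(users, relationships, ("Machical", "Missing"))
  }

  // mapVertices: keep only the first element of each vertex attribute.
  def names(graph: UserGraph): Map[VertexId, String] =
    graph.mapVertices((_, attr) => attr._1).vertices.collect().toMap

  // subgraph: keep only the edges labelled "colleague" (all vertices are kept).
  def colleagueEdgeCount(graph: UserGraph): Long =
    graph.subgraph(epred = triplet => triplet.attr == "colleague").numEdges

  // aggregateMessages: every edge sends 1 to its destination; messages are summed.
  // Vertices that receive no message (here vertex 2) are absent from the result.
  def inDegreesViaMessages(graph: UserGraph): Map[VertexId, Int] =
    graph.aggregateMessages[Int](ctx => ctx.sendToDst(1), _ + _).collect().toMap

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("OperatorSketch").master("local[2]").getOrCreate()
    val graph = buildGraph(spark)
    println(names(graph)(3L))            // Spark
    println(colleagueEdgeCount(graph))   // 2
    println(inDegreesViaMessages(graph)) // entries 3 -> 1, 5 -> 1, 7 -> 2 (map order may vary)
    spark.stop()
  }
}
```

The `aggregateMessages` result matches what `graph.inDegrees` returns for this graph, which is a quick way to check that the send and merge functions are wired correctly.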
