ML | SVM

What's xxx

An SVM model is a representation of the examples as points in space, mapped so that the examples of the separate categories are divided by a clear gap that is as wide as possible. New examples are then mapped into that same space and predicted to belong to a category based on which side of the gap they fall on.

In addition to performing linear classification, SVMs can efficiently perform a non-linear classification using what is called the kernel trick, implicitly mapping their inputs into high-dimensional feature spaces. The transformation may be nonlinear and the transformed space high dimensional; thus though the classifier is a hyperplane in the high-dimensional feature space, it may be nonlinear in the original input space.

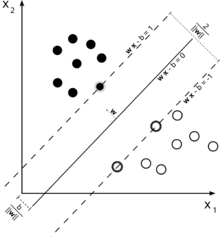

Classifying data is a common task in machine learning. Suppose some given data points each belong to one of two classes, and the goal is to decide which class a new data point will be in. In the case of support vector machines, a data point is viewed as a p-dimensional vector (a list of p numbers), and we want to know whether we can separate such points with a (p − 1)-dimensional hyperplane. This is called a linear classifier. We choose the hyperplane so that the distance from it to the nearest data point on each side is maximized. If such a hyperplane exists, it is known as the maximum-margin hyperplane and the linear classifier it defines is known as a maximum margin classifier.

Any hyperplane can be written as the set of points $\mathbf{x}$ satisfying

$\mathbf{w}\cdot\mathbf{x} - b=0,\,$

where $\cdot$ denotes the dot product and ${\mathbf{w}}$ the (not necessarily normalized) normal vector to the hyperplane.

Maximum-margin hyperplane and margins for an SVM trained with samples from two classes. Samples on the margin are called the support vectors.

It was converted into a quadratic programming optimization problem. The solution can be expressed as a linear combination of the training vectors

$\mathbf{w} = \sum_{i=1}^n{\alpha_i y_i\mathbf{x_i}}.$

Only a few $\alpha_i$ will be greater than zero. The corresponding $\mathbf{x_i}$ are exactly the support vectors, which lie on the margin and satisfy $y_i(\mathbf{w}\cdot\mathbf{x_i} - b) = 1$. From this one can derive that the support vectors also satisfy

$\mathbf{w}\cdot\mathbf{x_i} - b = 1 / y_i = y_i \iff b = \mathbf{w}\cdot\mathbf{x_i} - y_i$

which allows one to define the offset b. In practice, it is more robust to average over all $N_{SV}$ support vectors:

$b = \frac{1}{N_{SV}} \sum_{i=1}^{N_{SV}}{(\mathbf{w}\cdot\mathbf{x_i} - y_i)}$

Writing the classification rule in its unconstrained dual form reveals that the maximum-margin hyperplane and therefore the classification task is only a function of the support vectors, the subset of the training data that lie on the margin.

Using the fact that $\|\mathbf{w}\|^2 = \mathbf{w}\cdot \mathbf{w}$ and substituting $\mathbf{w} = \sum_{i=1}^n{\alpha_i y_i\mathbf{x_i}}$, one can show that the dual of the SVM reduces to the following optimization problem:

Maximize (in $\alpha_i$ )

$\tilde{L}(\mathbf{\alpha})=\sum_{i=1}^n \alpha_i - \frac{1}{2}\sum_{i, j} \alpha_i \alpha_j y_i y_j \mathbf{x}_i^T \mathbf{x}_j=\sum_{i=1}^n \alpha_i - \frac{1}{2}\sum_{i, j} \alpha_i \alpha_j y_i y_j k(\mathbf{x}_i, \mathbf{x}_j)$

subject to (for any $i = 1, \dots, n$)

$\alpha_i \geq 0,\, $

and to the constraint from the minimization in $b$

$\sum_{i=1}^n \alpha_i y_i = 0.$

Here the kernel is defined by $k(\mathbf{x}_i,\mathbf{x}_j)=\mathbf{x}_i\cdot\mathbf{x}_j.$

$W$ can be computed thanks to the $\alpha$ terms:

$\mathbf{w} = \sum_i \alpha_i y_i \mathbf{x}_i.$

ML | SVM的更多相关文章

- OpenCV3 Ref SVM : cv::ml::SVM Class Reference

OpenCV3 Ref SVM : cv::ml::SVM Class Reference OpenCV2: #include <opencv2/core/core.hpp>#inclu ...

- Unknown/unsupported SVM type in function 'cv::ml::SVMImpl::checkParams'

1.在使用PYTHON[Python 3.6.8]训练样本时报错如下: Traceback (most recent call last): File "I:\Eclipse\Python\ ...

- 【OpenCV】opencv3.0中的SVM训练 mnist 手写字体识别

前言: SVM(支持向量机)一种训练分类器的学习方法 mnist 是一个手写字体图像数据库,训练样本有60000个,测试样本有10000个 LibSVM 一个常用的SVM框架 OpenCV3.0 中的 ...

- SVM+HOG特征训练分类器

#1,概念 在机器学习领域,支持向量机SVM(Support Vector Machine)是一个有监督的学习模型,通常用来进行模式识别.分类.以及回归分析. SVM的主要思想可以概括为两点:⑴它是针 ...

- [code segments] OpenCV3.0 SVM with C++ interface

talk is cheap, show you the code: /***************************************************************** ...

- EasyPR源码剖析(7):车牌判断之SVM

前面的文章中我们主要介绍了车牌定位的相关技术,但是定位出来的相关区域可能并非是真实的车牌区域,EasyPR通过SVM支持向量机,一种机器学习算法来判定截取的图块是否是真的“车牌”,本节主要对相关的技术 ...

- opencv3.1线性可分svm例子及函数分析

https://www.cnblogs.com/qinguoyi/p/7272218.html //摘自:http://docs.opencv.org/2.4/doc/tutorials/ml/int ...

- 简单HOG+SVM mnist手写数字分类

使用工具 :VS2013 + OpenCV 3.1 数据集:minst 训练数据:60000张 测试数据:10000张 输出模型:HOG_SVM_DATA.xml 数据准备 train-images- ...

- opencv 视觉项目学习笔记(二): 基于 svm 和 knn 车牌识别

车牌识别的属于常见的 模式识别 ,其基本流程为下面三个步骤: 1) 分割: 检测并检测图像中感兴趣区域: 2)特征提取: 对字符图像集中的每个部分进行提取: 3)分类: 判断图像快是不是车牌或者 每个 ...

随机推荐

- DeepFaceLab小白入门(3):软件使用!

换脸程序执行步骤,大部分程序都是类似.DeepFaceLab 虽然没有可视化界面,但是将整个过程分成了8个步骤,每个步骤只需点击BAT文件即可执行.只要看着序号,一个个点过去就可以了,这样的操作应该不 ...

- Python基础——赋值机制

使用id()函数用于获取对象的内存地址. 使用is来判断是不是指向同一个内存. 把一个对象赋值给另一个对象,两个对象都指向同一个内存地址. test=1000 test1=test id(test) ...

- manjaro kde netease-cloud-music 网易云音乐

- 20181205(模块循环导入解决方案,json&pickle模块,time,date,random介绍)

一.补充内容 循环导入 解决方案: 1.将导入的语句挪到后面. 2.将导入语句放入函数,函数在定义阶段不运行 #m1.pyprint('正在导入m1') #②能够正常打印from m2 imp ...

- Python爬取全站妹子图片,差点硬盘走火了!

在这严寒的冬日,为了点燃我们的热情,今天小编可是给大家带来了偷偷收藏了很久的好东西.大家要注意点哈,我第一次使用的时候,大意导致差点坏了大事哈! 1.所需库安装 2.网站分析 首先打开妹子图的官网(m ...

- 循环字典进行操作时出现:RuntimeError: dictionary changed size during iteration的解决方案

在做对员工信息增删改查这个作业时,有一个需求是通过用户输入的id删除用户信息.我把用户信息从文件提取出来储存在了字典里,其中key是用户id,value是用户的其他信息.在循环字典的时候,当用户id和 ...

- SQL前后端分页

/class Page<T> package com.neusoft.bean; import java.util.List; public class Page<T> { p ...

- stm32启动地址

理论上,CM3中规定上电后CPU是从0地址开始执行,但是这里中断向量表却被烧写在0x0800 0000地址里(Flash memory启动方式),那启动时不就找不到中断向量表了?既然CM3定下的规矩是 ...

- hdu-1231 连续最大子序列(动态规划)

Time limit1000 ms Memory limit32768 kB 给定K个整数的序列{ N1, N2, ..., NK },其任意连续子序列可表示为{ Ni, Ni+1, ..., Nj ...

- Linux学习-透过 systemctl 管理服务

透过 systemctl 管理单一服务 (service unit) 的启动/开机启动与观察状态 一般来说,服务的启动有两个阶段,一 个是『开机的时候设定要不要启动这个服务』, 以及『你现在要不要启动 ...