2. Installing HDFS and YARN

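Set the Hadoop environment variables

Set the HDFS environment variables

Set the YARN environment variables

Set the MapReduce environment variables

Modify the Hadoop configuration

Configure core-site.xml

Configure hdfs-site.xml

Configure yarn-site.xml

Configure mapred-site.xml

Configure the slaves file

Distribute the configuration

Start HDFS

Format the NameNode

Start HDFS

Check that HDFS is up

Start YARN

Test an MR job

Hadoop native libraries

HDFS, YARN, and MapReduce parameters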

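Download the Hadoop tarball

Download the hadoop-2.8.0 tarball from the official Hadoop site onto hadoop1, then place it under /opt and unpack it:

$ gunzip hadoop-2.8.0.tar.gz

$ tar -xvf hadoop-2.8.0.tar

Then change the ownership of the hadoop-2.8.0 directory so that both hdfs and yarn can read and write it (this relies on both users belonging to the hadoop group and on the group having write permission):

# chown -R hdfs:hadoop /opt/hadoop-2.8.0

Set the Hadoop environment variables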

Edit /etc/profile:

export HADOOP_HOME=/opt/hadoop-2.8.0

export HADOOP_PREFIX=/opt/hadoop-2.8.0

export HADOOP_YARN_HOME=$HADOOP_HOME

export HADOOP_CONF_DIR=${HADOOP_HOME}/etc/hadoop

export LD_LIBRARY_PATH=$JAVA_HOME/jre/lib/amd64/server

export PATH=${HADOOP_HOME}/bin:$PATH

Set the HDFS environment variables

Edit /opt/hadoop-2.8.0/etc/hadoop/hadoop-env.sh:

#export JAVA_HOME=/usr/local/java/jdk1.8.0_121
#export HADOOP_HOME=/opt/hadoop/hadoop-2.7.3
# Maximum heap size for the Hadoop daemons (NameNode, DataNode, SecondaryNameNode, etc.); default 1000 MB
#export HADOOP_HEAPSIZE=
# Initial NameNode heap size; defaults to the value above, size as needed
#export HADOOP_NAMENODE_INIT_HEAPSIZE=""
# JVM startup options; empty by default
#export HADOOP_OPTS="$HADOOP_OPTS -Djava.net.preferIPv4Stack=true"
# Memory can also be configured per component:
#export HADOOP_NAMENODE_OPTS=
#export HADOOP_DATANODE_OPTS=
#export HADOOP_SECONDARYNAMENODE_OPTS=
# Hadoop log directory; default is $HADOOP_HOME/logs
#export HADOOP_LOG_DIR=${HADOOP_LOG_DIR}/$USER
export HADOOP_LOG_DIR=/var/log/hadoop/

Set each parameter according to your own capacity plan. Note that the NameNode keeps both its block map and its namespace in the heap, so a production NameNode needs a large heap size.

Also watch the total memory used by all components: in production, leave 5-15% of memory for the Linux system (10 GB is typical).
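The reservation rule above comes down to simple arithmetic. The following is only a minimal sketch: the function name daemon_heap_budget_mb and the sample figures (a 64 GB host with a 10% reserve) are assumptions for illustration, not values from this cluster.

```shell
# Hypothetical helper: memory (MB) left for the Hadoop daemons after
# reserving a percentage of physical RAM for the Linux system.
# Usage: daemon_heap_budget_mb <total_mb> <reserve_percent>
daemon_heap_budget_mb() {
  local total_mb=$1 reserve_pct=$2
  # Integer arithmetic; the result is rounded down.
  echo $(( total_mb * (100 - reserve_pct) / 100 ))
}

# Example: a 64 GB host with 10% kept for the OS.
daemon_heap_budget_mb 65536 10   # prints 58982
```

The heap sizes configured for all daemons on one host should add up to less than the printed figure.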

Set the YARN environment variables

Edit /opt/hadoop-2.8.0/etc/hadoop/yarn-env.sh:

#export JAVA_HOME=/usr/local/java/jdk1.8.0_121
#JAVA_HEAP_MAX=-Xmx1000m
#YARN_HEAPSIZE=1000 # heap size for the YARN daemons
#export YARN_RESOURCEMANAGER_HEAPSIZE=1000 # heap size for the ResourceManager only
#export YARN_TIMELINESERVER_HEAPSIZE=1000 # heap size for the Timeline Server only
#export YARN_RESOURCEMANAGER_OPTS= # JVM options for the ResourceManager only
#export YARN_NODEMANAGER_HEAPSIZE=1000 # heap size for the NodeManager only
#export YARN_NODEMANAGER_OPTS= # JVM options for the NodeManager only
export YARN_LOG_DIR=/var/log/yarn # YARN log directory

Configure these according to your environment. Apart from the log directory, nothing is set here; in production, pay attention to the JVM options and the log file location.

Set the MapReduce environment variables

Edit /opt/hadoop-2.8.0/etc/hadoop/mapred-env.sh:

# export JAVA_HOME=/home/y/libexec/jdk1.6.0/
#export HADOOP_JOB_HISTORYSERVER_HEAPSIZE=1000
#export HADOOP_MAPRED_ROOT_LOGGER=INFO,RFA
#export HADOOP_JOB_HISTORYSERVER_OPTS=
#export HADOOP_MAPRED_LOG_DIR="" # Where log files are stored. $HADOOP_MAPRED_HOME/logs by default.
#export HADOOP_JHS_LOGGER=INFO,RFA # Hadoop JobSummary logger.
#export HADOOP_MAPRED_PID_DIR= # The pid files are stored. /tmp by default.
#export HADOOP_MAPRED_IDENT_STRING= #A string representing this instance of hadoop. $USER by default
#export HADOOP_MAPRED_NICENESS= #The scheduling priority for daemons. Defaults to 0.
export HADOOP_MAPRED_LOG_DIR=/var/log/yarn

Configure these according to your environment. Apart from the log directory, nothing is set here; in production, pay attention to the JVM options and the log file location.

Modify the Hadoop configuration

All of the settings below are documented on the official site: http://hadoop.apache.org/docs/r2.8.0/hadoop-project-dist/hadoop-common/ClusterSetup.html

Configure core-site.xml

<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://hadoop1:9000</value>
    <description>HDFS address and port</description>
  </property>
  <property>
    <name>io.file.buffer.size</name>
    <value>131072</value>
  </property>
  <property>
    <name>fs.trash.interval</name>
    <value>1440</value>
    <description>Enables the HDFS trash; deleted files are kept for 1440 minutes</description>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/opt/hadoop-2.8.0/tmp</value>
    <description>Defaults to /tmp/hadoop-${user.name}; changed to a persistent directory</description>
  </property>
</configuration>

core-site.xml has many parameters, but the few changed here are enough to start the cluster. For the rest, see the official documentation.
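To confirm that Hadoop actually picks up the values written to core-site.xml, the effective configuration can be queried with hdfs getconf -confKey. A minimal sketch, assuming hdfs is on the PATH and HADOOP_CONF_DIR points at the edited files; the wrapper show_effective_conf is a made-up name, not part of Hadoop.

```shell
# Hypothetical wrapper: print "key=value" for each key, using the value
# that Hadoop resolves from the configuration files it actually loads.
# Usage: show_effective_conf <key>...
show_effective_conf() {
  local key
  for key in "$@"; do
    printf '%s=%s\n' "$key" "$(hdfs getconf -confKey "$key")"
  done
}
```

For example, show_effective_conf fs.defaultFS fs.trash.interval should echo the values from the file above. If it prints defaults instead, the edited file is probably not in the directory Hadoop reads.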

Configure hdfs-site.xml

Only the following parameters are set here:

<configuration>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
    <description>Number of replicas per data block; 3 is recommended in production</description>
  </property>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>/opt/hadoop-2.8.0/namenodedir</value>
    <description>Directory holding the NameNode metadata; put it on RAID in production</description>
  </property>
  <property>
    <name>dfs.blocksize</name>
    <value>134217728</value>
    <description>Block size, 128 MB; tune it to the workload and use a larger value when large files dominate</description>
  </property>
  <property>
    <name>dfs.namenode.handler.count</name>
    <value>100</value>
    <description>Number of NameNode threads handling RPC requests; use a larger value on big clusters</description>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>/opt/hadoop-2.8.0/datadir</value>
    <description>Directory where the DataNode stores data; in production list one path per disk, RAID is not recommended</description>
  </property>
</configuration>

Configure yarn-site.xml

Only the following parameters are set here; for the others, see the official site.

<configuration>
  <property>
    <name>yarn.resourcemanager.hostname</name>
    <value>hadoop1</value>
    <description>The ResourceManager node</description>
  </property>
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
    <description>Auxiliary service run by the NodeManager</description>
  </property>
  <property>
    <name>yarn.scheduler.minimum-allocation-mb</name>
    <value>32</value>
    <description>Minimum size of each container, in MB</description>
  </property>
  <property>
    <name>yarn.scheduler.maximum-allocation-mb</name>
    <value>128</value>
    <description>Maximum size of each container, in MB</description>
  </property>
  <property>
    <name>yarn.nodemanager.resource.memory-mb</name>
    <value>1024</value>
    <description>Maximum memory the NodeManager may hand out to containers, in MB</description>
  </property>
  <property>
    <name>yarn.nodemanager.local-dirs</name>
    <value>/home/yarn/nm-local-dir</value>
    <description>NodeManager local directory</description>
  </property>
  <property>
    <name>yarn.nodemanager.resource.cpu-vcores</name>
    <value>1</value>
    <description>vcores available on each NodeManager host. The default is -1, which lets YARN detect the CPU count only when yarn.nodemanager.resource.detect-hardware-capabilities is true; otherwise detection does not happen and the effective value is 8</description>
  </property>
</configuration>

In production, also set the following:

ResourceManager parameters:

| Parameter | Value | Notes |
|---|---|---|
| yarn.resourcemanager.address | ResourceManager host:port for clients to submit jobs. | host:port. If set, overrides the hostname set in yarn.resourcemanager.hostname. |
| yarn.resourcemanager.scheduler.address | ResourceManager host:port for ApplicationMasters to talk to Scheduler to obtain resources. | host:port. If set, overrides the hostname set in yarn.resourcemanager.hostname. |
| yarn.resourcemanager.resource-tracker.address | ResourceManager host:port for NodeManagers. | host:port. If set, overrides the hostname set in yarn.resourcemanager.hostname. This is the address the NodeManagers report to. |
| yarn.resourcemanager.admin.address | ResourceManager host:port for administrative commands. | host:port. If set, overrides the hostname set in yarn.resourcemanager.hostname. |
| yarn.resourcemanager.webapp.address | ResourceManager web-ui host:port. | host:port. If set, overrides the hostname set in yarn.resourcemanager.hostname. Has a default value. |
| yarn.resourcemanager.hostname | ResourceManager host. | host. Single hostname that can be set in place of setting all yarn.resourcemanager*address resources. Results in default ports for ResourceManager components. |
| yarn.resourcemanager.scheduler.class | ResourceManager Scheduler class. | CapacityScheduler (recommended), FairScheduler (also recommended), or FifoScheduler |
| yarn.scheduler.minimum-allocation-mb | Minimum limit of memory to allocate to each container request at the Resource Manager. | In MB. The minimum memory per container. |
| yarn.scheduler.maximum-allocation-mb | Maximum limit of memory to allocate to each container request at the Resource Manager. | In MB. The maximum memory per container. |
| yarn.resourcemanager.nodes.include-path / yarn.resourcemanager.nodes.exclude-path | List of permitted/excluded NodeManagers. | If necessary, use these files to control the list of allowable NodeManagers, that is, which nodes the RM may manage. |

NodeManager parameters:

| Parameter | Value | Notes |
|---|---|---|
| yarn.nodemanager.resource.memory-mb | Resource i.e. available physical memory, in MB, for given NodeManager | Defines total available resources on the NodeManager to be made available to running containers. |
| yarn.nodemanager.vmem-pmem-ratio | Maximum ratio by which virtual memory usage of tasks may exceed physical memory | The virtual memory usage of each task may exceed its physical memory limit by this ratio. The total amount of virtual memory used by tasks on the NodeManager may exceed its physical memory usage by this ratio. A task that goes beyond the ratio is killed. |
| yarn.nodemanager.local-dirs | Comma-separated list of paths on the local filesystem where intermediate data is written. | Multiple paths help spread disk i/o. Holds intermediate data of running MR jobs; spreading it over several disks is recommended. Default: ${hadoop.tmp.dir}/nm-local-dir |
| yarn.nodemanager.log-dirs | Comma-separated list of paths on the local filesystem where logs are written. | Multiple paths help spread disk i/o. Holds the logs of MR tasks; spreading it over several disks is recommended. Default: ${yarn.log.dir}/userlogs |
| yarn.nodemanager.log.retain-seconds | 10800 | Default time (in seconds) to retain log files on the NodeManager. Only applicable if log-aggregation is disabled. |
| yarn.nodemanager.remote-app-log-dir | /logs | HDFS directory where the application logs are moved on application completion. Need to set appropriate permissions. Only applicable if log-aggregation is enabled. |
| yarn.nodemanager.aux-services | mapreduce_shuffle | Shuffle service that needs to be set for Map Reduce applications. |
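To see how the memory parameters interact, the sketch below estimates how many containers one NodeManager can host. It is illustrative only: the function name containers_per_node is made up, and the calculation looks at memory alone, ignoring vcores and any rounding the scheduler applies to requests.

```shell
# Hypothetical helper: containers of a given size that fit on one NodeManager.
# Usage: containers_per_node <nodemanager_memory_mb> <container_mb>
containers_per_node() {
  local node_mb=$1 container_mb=$2
  echo $(( node_mb / container_mb ))
}

# Values from the yarn-site.xml in this article:
containers_per_node 1024 32    # smallest containers (minimum-allocation-mb): prints 32
containers_per_node 1024 128   # largest containers (maximum-allocation-mb): prints 8
```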

Configure mapred-site.xml

<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
    <description>Run MR on YARN</description>
  </property>
  <property>
    <name>mapreduce.jobhistory.address</name>
    <value>hadoop2:10020</value>
    <description>Address of the JobHistory host</description>
  </property>
  <property>
    <name>mapreduce.jobhistory.webapp.address</name>
    <value>hadoop2:19888</value>
    <description>Address of the JobHistory web UI</description>
  </property>
  <property>
    <name>mapreduce.jobhistory.intermediate-done-dir</name>
    <value>/opt/hadoop/hadoop-2.8.0/mrHtmp</value>
    <description>Directory holding history data of MR jobs that are still running</description>
  </property>
  <property>
    <name>mapreduce.jobhistory.done-dir</name>
    <value>/opt/hadoop/hadoop-2.8.0/mrhHdone</value>
    <description>Directory holding history data of completed MR jobs</description>
  </property>
</configuration>

Configure the slaves file

List the worker nodes in /opt/hadoop-2.8.0/etc/hadoop/slaves:

hadoop3

hadoop4

hadoop5

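Distribute the configuration

Copy /etc/profile and /opt/* to the other nodes by running the following on each of them:

$ scp hdfs@hadoop1:/etc/profile /etc

$ scp -r hdfs@hadoop1:/opt/* /opt/

Compressing first and then transferring is recommended.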
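One way to follow the compress-first advice is sketched below. It only prints the commands it would run (a dry run), so nothing is copied until the printed lines are executed. The function name, the staging path /tmp/hadoop-2.8.0.tar.gz, and the choice of hadoop2 through hadoop5 as "the other nodes" are assumptions for illustration.

```shell
# Hypothetical dry-run helper: print the commands that would pack
# /opt/hadoop-2.8.0 once on hadoop1 and push the archive to each node.
# Usage: print_distribute_cmds <node>...
print_distribute_cmds() {
  local archive=/tmp/hadoop-2.8.0.tar.gz   # assumed staging path
  local node
  echo "tar -czf $archive -C /opt hadoop-2.8.0"
  for node in "$@"; do
    echo "scp $archive hdfs@$node:/tmp/"
    echo "ssh hdfs@$node 'tar -xzf $archive -C /opt'"
  done
}

print_distribute_cmds hadoop2 hadoop3 hadoop4 hadoop5
```

Unpacking into /opt requires write permission there on the target node, and /etc/profile still has to be copied separately with sufficient privileges.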

Start HDFS

Format the NameNode

$HADOOP_HOME/bin/hdfs namenode -format

Start HDFS

As the hdfs user, run $HADOOP_HOME/sbin/start-dfs.sh to start the whole HDFS cluster, or start the daemons one at a time:

$HADOOP_HOME/sbin/hadoop-daemon.sh --config $HADOOP_CONF_DIR --script hdfs start namenode # start a single NameNode
$HADOOP_HOME/sbin/hadoop-daemon.sh --config $HADOOP_CONF_DIR --script hdfs start datanode # start a single DataNode

Startup logs are written under $HADOOP_HOME/logs by default; the log path can be changed in hadoop-env.sh through HADOOP_LOG_DIR.

Check that HDFS is up

Open http://hadoop1:50070 in a browser, or run hdfs dfs -mkdir /test as a test.
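A slightly fuller check writes a file and reads it back, which also exercises the DataNodes. This is a sketch under assumptions: the function name hdfs_smoke_test and the file /test/hello.txt are hypothetical, and the function must be run as a user with write access to /test.

```shell
# Hypothetical smoke test: create a directory, write a small file,
# read it back, then show the start of the cluster report.
hdfs_smoke_test() {
  command -v hdfs >/dev/null 2>&1 || { echo "hdfs not on PATH" >&2; return 127; }
  hdfs dfs -mkdir -p /test || return 1
  # "-" makes -put read from stdin; -f overwrites an existing file.
  echo hello | hdfs dfs -put -f - /test/hello.txt || return 1
  hdfs dfs -cat /test/hello.txt || return 1
  hdfs dfsadmin -report | head -n 20
}
```

Calling hdfs_smoke_test on hadoop1 should print the file content followed by the beginning of the dfsadmin report, which lists the live DataNodes.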

启动yarn

在hadoop1上启resourcemanager:

yarn $ $HADOOP_YARN_HOME/sbin/yarn-daemon.sh --config $HADOOP_CONF_DIR start resourcemanager在hadoop3 hadoop4 hadoop5上启动nodemanager:

yarn $ $HADOOP_YARN_HOME/sbin/yarn-daemon.sh --config $HADOOP_CONF_DIR start nodemanager如果设置了slave文件并且以yarn配置了ssh互信,那可以在任意一个节点执行:start-yarn.sh即可启动整个集群

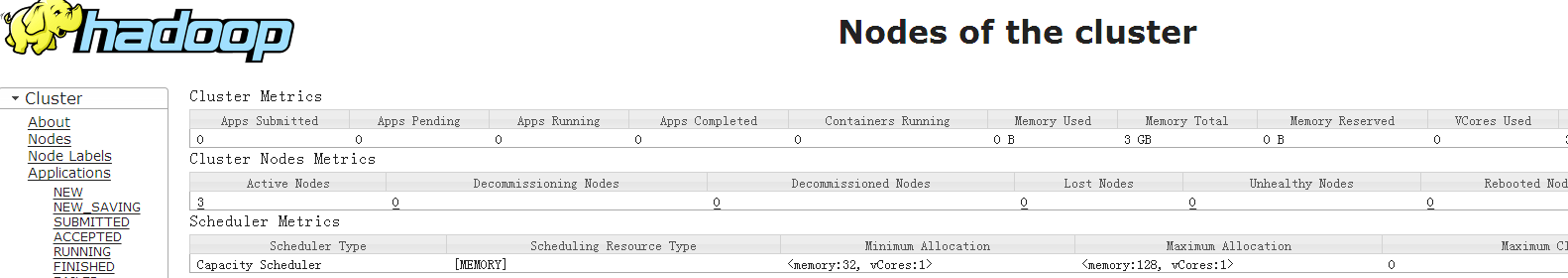

然后打开RM页面:

如果启动有问题,查看在yarn-evn.sh设置的YARN_LOG_DIR下的日志查找原因.注意yarn启动时用的目录的权限.

Test an MR job

[hdfs@hadoop1 hadoop-2.8.0]$ hdfs dfs -mkdir -p /user/hdfs/input

[hdfs@hadoop1 hadoop-2.8.0]$ hdfs dfs -put etc/hadoop/ /user/hdfs/input

[hdfs@hadoop1 hadoop-2.8.0]$ bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.8.0.jar grep input output 'dfs[a-z.]+'

17/06/27 04:16:45 INFO mapreduce.JobSubmitter: Cleaning up the staging area /tmp/hadoop-yarn/staging/hdfs/.staging/job_1498507021248_0003

java.io.IOException: org.apache.hadoop.yarn.exceptions.InvalidResourceRequestException: Invalid resource request, requested memory < 0, or requested memory > max configured, requestedMemory=1536, maxMemory=128

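at org.apache.hadoop.yarn.server.resourcemanager.scheduler.SchedulerUtils.validateResourceRequest(SchedulerUtils.java:279)

And the job fails. The requested memory is 1536 MB and the maximum is 128 MB. 1536 MB is what an MR job asks for by default (it matches the default of yarn.app.mapreduce.am.resource.mb, the ApplicationMaster's container). But a maximum of 128 MB? My cluster clearly has 3 GB of resources. The message is misleading at first glance: maxMemory is the largest size of a single container (yarn.scheduler.maximum-allocation-mb), not the total memory of the cluster. The error is raised whenever the largest container cannot satisfy the memory a single task requests. In the current configuration each container uses at least 32 MB and at most 128 MB, whereas a map task requests 1024 MB by default.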
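The rule behind this exception can be restated in a few lines. This is a simplified sketch of the check as described above, not YARN's actual code; the function name request_fits is hypothetical.

```shell
# Hypothetical re-statement of the scheduler's memory check: a request is
# rejected when it is negative or larger than the biggest container the
# scheduler may allocate (yarn.scheduler.maximum-allocation-mb).
# Usage: request_fits <requested_mb> <max_allocation_mb>
request_fits() {
  local requested_mb=$1 max_mb=$2
  if [ "$requested_mb" -lt 0 ] || [ "$requested_mb" -gt "$max_mb" ]; then
    echo "invalid: requestedMemory=$requested_mb, maxMemory=$max_mb"
    return 1
  fi
  echo "ok: requestedMemory=$requested_mb"
}

request_fits 1536 128 || true   # the failing request from the log above
request_fits 128 128            # a 128 MB request fits
```

Either the request has to shrink to at most 128 MB, or yarn.scheduler.maximum-allocation-mb has to grow.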

Now lower the memory that each map task, each reduce task, and the ApplicationMaster request.

Edit mapred-site.xml and add:

<property>
  <name>mapreduce.map.memory.mb</name>
  <value>128</value>
  <description>Memory requested for each map task, in MB</description>
</property>
<property>
  <name>mapreduce.reduce.memory.mb</name>
  <value>128</value>
  <description>Memory requested for each reduce task, in MB</description>
</property>
<property>
  <name>yarn.app.mapreduce.am.resource.mb</name>
  <value>128</value>
  <description>Memory requested for the MR ApplicationMaster, in MB</description>
</property>

Run it again:

[hdfs@hadoop1 hadoop-2.8.0]$ bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.8.0.jar grep input output 'dfs[a-z.]+'

17/06/27 05:04:26 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform… using builtin-java classes where applicable

……..

17/06/27 05:04:36 INFO mapreduce.JobSubmitter: Cleaning up the staging area /tmp/hadoop-yarn/staging/hdfs/.staging/job_1498510574463_0006

org.apache.hadoop.mapreduce.lib.input.InvalidInputException: Input path does not exist: hdfs://hadoop1:9000/user/hdfs/grep-temp-2069706136

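……..

The resource problem is solved, which also confirms my reasoning. But this time a different error appears: a missing directory. I tested the same job on 2.6.3 and 2.7.3 and never saw this problem, so I am setting it aside for now. I will verify that MR works by other means later. I suspect a problem with the jar.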

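Hadoop native libraries

You may have noticed that every hdfs command prints:

WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform… using builtin-java classes where applicable

This is because the native libraries cannot be loaded. Hadoop relies on some native Linux libraries, such as zlib, to improve efficiency.

For more on the native libraries, see my other article on the topic.

HDFS, YARN, and MapReduce parameters

I will write a separate post introducing the more important parameters.

Next post: setting up HDFS HA.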
