Spark Tutorial (2): Shell Operations

Spark supports interactive shells.

The shell is mainly useful for debugging, so a brief introduction to its usage is enough.

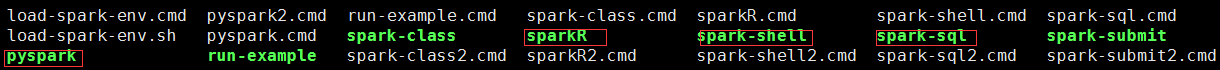

Shells are available for several languages

These include a Scala shell, a Python shell, an R shell, and a SQL shell.

spark-shell runs Spark from a Scala shell.

pyspark runs Spark from a Python shell.

spark-sql runs SQL statements in Spark SQL mode; Spark SQL is covered later.

The shell supports 3 modes

local mode, standalone mode, and YARN mode

Each mode is selected by specifying a different master.
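To make the link between mode and master concrete, here is a minimal sketch in plain Python that maps each of the three modes to a `--master` value and builds the matching `bin/pyspark` command line. The helper names `master_url` and `pyspark_command` are hypothetical (they are not part of Spark), the host `hadoop10` is taken from the shell prompt used in this article, and 7077 is assumed as the standalone master port because it is the usual default.

```python
def master_url(mode, host=None, port=7077, threads="*"):
    """Return a --master value for one of the three modes (hypothetical helper)."""
    if mode == "local":
        # local[N] runs Spark inside one JVM with N worker threads;
        # local[*] uses one thread per logical core.
        return "local[{}]".format(threads)
    if mode == "standalone":
        if host is None:
            raise ValueError("standalone mode needs the master host")
        return "spark://{}:{}".format(host, port)
    if mode == "yarn":
        # The cluster location comes from the Hadoop configuration
        # (HADOOP_CONF_DIR or YARN_CONF_DIR), not from the URL itself.
        return "yarn"
    raise ValueError("unknown mode: {!r}".format(mode))


def pyspark_command(mode, **kwargs):
    """Build the argument list for launching the Python shell in a given mode."""
    return ["bin/pyspark", "--master", master_url(mode, **kwargs)]


if __name__ == "__main__":
    print(" ".join(pyspark_command("local", threads=2)))
    print(" ".join(pyspark_command("standalone", host="hadoop10")))
    print(" ".join(pyspark_command("yarn")))
```

The sketch only assembles the command; running it still requires a Spark installation and, for the standalone and YARN cases, a reachable cluster.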

Python shell commands

The master parameter sets the run mode.

    [root@hadoop10 spark]# bin/pyspark --help
    Usage: ./bin/pyspark [options]

    Options:
      --master MASTER_URL         spark://host:port, mesos://host:port, yarn,
                                  k8s://https://host:port, or local (Default: local[*]).
                                  # Sets the master, i.e. where Spark runs.
                                  # mesos://host:port is rarely used; yarn requires Spark to be
                                  # set up to run on a YARN cluster.
                                  # local is local mode: local means a single thread,
                                  # local[num] means num worker threads, and local[*] means
                                  # one worker thread per CPU core on the machine.
      --deploy-mode DEPLOY_MODE   Whether to launch the driver program locally ("client") or
                                  on one of the worker machines inside the cluster ("cluster")
                                  (Default: client).
      --class CLASS_NAME          Your application's main class (for Java / Scala apps).
                                  # Name of the class to run.
      --name NAME                 A name of your application.
      --jars JARS                 Comma-separated list of jars to include on the driver
                                  and executor classpaths.
      --packages                  Comma-separated list of maven coordinates of jars to include
                                  on the driver and executor classpaths. Will search the local
                                  maven repo, then maven central and any additional remote
                                  repositories given by --repositories. The format for the
                                  coordinates should be groupId:artifactId:version.
                                  # Comma-separated Maven coordinates; adds dependencies
                                  # to the current session.
      --exclude-packages          Comma-separated list of groupId:artifactId, to exclude while
                                  resolving the dependencies provided in --packages to avoid
                                  dependency conflicts.
      --repositories              Comma-separated list of additional remote repositories to
                                  search for the maven coordinates given with --packages.
      --py-files PY_FILES         Comma-separated list of .zip, .egg, or .py files to place
                                  on the PYTHONPATH for Python apps.
                                  # Plays the role of PYTHONPATH: if PYTHONPATH is not set,
                                  # this option is needed to import modules from these files.
      --files FILES               Comma-separated list of files to be placed in the working
                                  directory of each executor. File paths of these files
                                  in executors can be accessed via SparkFiles.get(fileName).

      --conf PROP=VALUE           Arbitrary Spark configuration property.
      --properties-file FILE      Path to a file from which to load extra properties. If not
                                  specified, this will look for conf/spark-defaults.conf.

      --driver-memory MEM         Memory for driver (e.g. 1000M, 2G) (Default: 1024M).
      --driver-java-options       Extra Java options to pass to the driver.
      --driver-library-path       Extra library path entries to pass to the driver.
      --driver-class-path         Extra class path entries to pass to the driver. Note that
                                  jars added with --jars are automatically included in the
                                  classpath.

      --executor-memory MEM       Memory per executor (e.g. 1000M, 2G) (Default: 1G).

      --proxy-user NAME           User to impersonate when submitting the application.
                                  This argument does not work with --principal / --keytab.

      --help, -h                  Show this help message and exit.
      --verbose, -v               Print additional debug output.
      --version,                  Print the version of current Spark.

     Cluster deploy mode only:
      --driver-cores NUM          Number of cores used by the driver, only in cluster mode
                                  (Default: 1).

     Spark standalone or Mesos with cluster deploy mode only:
      --supervise                 If given, restarts the driver on failure.
      --kill SUBMISSION_ID        If given, kills the driver specified.
      --status SUBMISSION_ID      If given, requests the status of the driver specified.

     Spark standalone and Mesos only:
      --total-executor-cores NUM  Total cores for all executors.

     Spark standalone and YARN only:
      --executor-cores NUM        Number of cores per executor. (Default: 1 in YARN mode,
                                  or all available cores on the worker in standalone mode)

     YARN-only:
      --queue QUEUE_NAME          The YARN queue to submit to (Default: "default").
      --num-executors NUM         Number of executors to launch (Default: 2).
                                  If dynamic allocation is enabled, the initial number of
                                  executors will be at least NUM.
      --archives ARCHIVES         Comma separated list of archives to be extracted into the
                                  working directory of each executor.
      --principal PRINCIPAL       Principal to be used to login to KDC, while running on
                                  secure HDFS.
      --keytab KEYTAB             The full path to the file that contains the keytab for the
                                  principal specified above. This keytab will be copied to
                                  the node running the Application Master via the Secure
                                  Distributed Cache, for renewing the login tickets and the
                                  delegation tokens periodically.
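The `--py-files` option places archives on the PYTHONPATH of the Python processes so that modules inside them can be imported. The following Spark-free sketch imitates that mechanism locally: it writes a throwaway zip archive and makes it importable by adding it to `sys.path`. The module name `pyfiles_demo_helpers`, the archive name `deps.zip`, and the function `import_from_zip` are all hypothetical and exist only for this illustration; Spark's own distribution of the files to executors is not reproduced here.

```python
import importlib
import os
import sys
import tempfile
import zipfile


def import_from_zip(zip_path, module_name):
    """Import module_name from a zip archive by putting the archive on sys.path."""
    if zip_path not in sys.path:
        sys.path.insert(0, zip_path)
    importlib.invalidate_caches()
    return importlib.import_module(module_name)


# Build a temporary archive that contains a single hypothetical module.
workdir = tempfile.mkdtemp()
archive = os.path.join(workdir, "deps.zip")
with zipfile.ZipFile(archive, "w") as zf:
    zf.writestr("pyfiles_demo_helpers.py", "def double(x):\n    return 2 * x\n")

# Once the archive is on the import path, the module imports like any other.
helpers = import_from_zip(archive, "pyfiles_demo_helpers")
print(helpers.double(21))
```

With Spark, the equivalent step would be passing the archive on the command line, for example `bin/pyspark --py-files deps.zip`, instead of editing `sys.path` by hand.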

Entering the Python shell

    [root@hadoop10 spark]# bin/pyspark
    Python 2.7.12 (default, Oct 2 2019, 19:43:15)
    [GCC 4.4.7 20120313 (Red Hat 4.4.7-4)] on linux2
    Type "help", "copyright", "credits" or "license" for more information.
    19/10/09 18:10:53 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable
    Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties
    Setting default log level to "WARN".
    To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).
    Welcome to
          ____              __
         / __/__  ___ _____/ /__
        _\ \/ _ \/ _ `/ __/  '_/
       /__ / .__/\_,_/_/ /_/\_\   version 2.4.4
          /_/

    Using Python version 2.7.12 (default, Oct 2 2019 19:43:15)
    SparkSession available as 'spark'.    # the shell comes with a ready-made spark session

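While the shell is running, its jobs can be inspected in the Spark web UI at http://192.168.10.10:4040

The syntax used in the shell is the same as in a script; for example: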

    >>> distFile = sc.textFile('README.md')
    >>> distFile.map(lambda x: len(x)).reduce(lambda a, b: a + b)
    3847
    >>> distFile.count()
    105
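For readers who want to check what the two actions in the session compute, here is a plain-Python analogue that needs no Spark installation. It is only a sketch: the functions `total_chars` and `count_lines` and the `sample` list are hypothetical stand-ins, and the sample is not the real README.md, so the numbers differ from the 3847 and 105 shown in the session. As with `sc.textFile`, each element is one line without its trailing newline.

```python
from functools import reduce


def total_chars(lines):
    """Mirror distFile.map(lambda x: len(x)).reduce(lambda a, b: a + b)."""
    return reduce(lambda a, b: a + b, map(len, lines))


def count_lines(lines):
    """Mirror distFile.count(): the number of elements (lines)."""
    return sum(1 for _ in lines)


# Hypothetical sample standing in for the contents of README.md.
sample = ["# Apache Spark", "", "Spark is a unified analytics engine."]

print(total_chars(sample))  # sum of the lengths of all lines
print(count_lines(sample))  # number of lines, empty ones included
```

The difference in Spark is where the work happens: `map` and `reduce` run on the partitions of the RDD in parallel, and only the final number is returned to the shell.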

The spark-submit command

spark-submit submits a Spark job by running a script file. Later parts of this tutorial explain it using Python as the example.
