(Repost) Supercharging Style Transfer

Pastiche. A French word, it designates a work of art that imitates the style of another one (not to be confused with its more humorous Greek cousin, parody). Although it has long been used in visual art, music and literature, pastiche has been getting mass attention lately, with online forums dedicated to images that have been modified to be in the style of famous paintings. Using a technique known as style transfer, these images are generated by phone or web apps that allow a user to render their favorite picture in the style of a well-known work of art.

Although users have already produced gorgeous pastiches using the current technology, we feel that it could be made even more engaging. Right now, each painting is its own island, so to speak: the user provides a content image, selects an artistic style and gets a pastiche back. But what if one could combine many different styles, exploring new mixtures of well-known artists to create an entirely unique pastiche?

Learning a representation for artistic style

In our recent paper titled “A Learned Representation for Artistic Style”, we introduce a simple method to allow a single deep convolutional style transfer network to learn multiple styles at the same time. The network, having learned multiple styles, is able to do style interpolation, where the pastiche varies smoothly from one style to another. Our method also enables style interpolation in real time, so it can be applied not only to static images but also to videos.

Credit: awesome dog role played by Google Brain team office dog Picabo.

In the video above, multiple styles are combined in real-time and the resulting style is applied using a single style transfer network. The user is provided with a set of 13 different painting styles and adjusts their relative strengths in the final style via sliders. In this demonstration, the user is an active participant in producing the pastiche.
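This post does not describe how the slider values are turned into a single style. The accompanying paper uses conditional instance normalization, in which each style owns its own scale and shift vector in every normalization layer, and a blended style is a weighted average of those vectors. The following is a minimal NumPy sketch of that idea under stated assumptions: the function names, array shapes and the `eps` constant are illustrative and are not taken from the released code.

```python
import numpy as np

def blend_weights(slider_values):
    """Turn raw slider positions into non-negative weights that sum to 1."""
    sliders = np.asarray(slider_values, dtype=float)
    if np.any(sliders < 0) or sliders.sum() == 0:
        raise ValueError("sliders must be non-negative and not all zero")
    return sliders / sliders.sum()

def conditional_instance_norm(x, gammas, betas, weights, eps=1e-5):
    """Normalize one feature map, then apply a blended per-style scale and shift.

    x:       activations, shape (channels, height, width)
    gammas:  per-style scales, shape (num_styles, channels)
    betas:   per-style shifts, shape (num_styles, channels)
    weights: blend weights, shape (num_styles,), summing to 1
    """
    # Instance normalization: statistics are computed per channel
    # over the spatial dimensions of this single image.
    mean = x.mean(axis=(1, 2), keepdims=True)
    std = np.sqrt(x.var(axis=(1, 2), keepdims=True) + eps)
    normalized = (x - mean) / std

    # Weighted average of the per-style parameters: shape (channels,).
    gamma = weights @ gammas
    beta = weights @ betas
    return gamma[:, None, None] * normalized + beta[:, None, None]
```

With 13 styles, `blend_weights` would receive the 13 slider positions, and a one-hot weight vector reproduces a single learned style. Because only the small scale and shift vectors depend on the style, changing the mixture does not require touching the convolutional weights, which is consistent with the real-time interaction shown in the demo.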

A Quick History of Style Transfer

While techniques for transferring the style of one image to another have existed for nearly 15 years [1] [2], leveraging neural networks to accomplish it is both very recent and fascinating. In “A Neural Algorithm of Artistic Style” [3], researchers Gatys, Ecker & Bethge introduced a method that uses deep convolutional neural network (CNN) classifiers. The pastiche image is found via optimization: the algorithm looks for an image that elicits the same kind of activations in the CNN’s lower layers as the style image does, while producing activations in the higher layers that are close to those produced by the content image. The lower layers capture the overall rough aesthetic of the style input (broad brushstrokes, cubist patterns, etc.), and the higher layers capture the things that make the subject recognizable. From some starting point (e.g. random noise, or the content image itself), the pastiche image is progressively refined until these requirements are met.

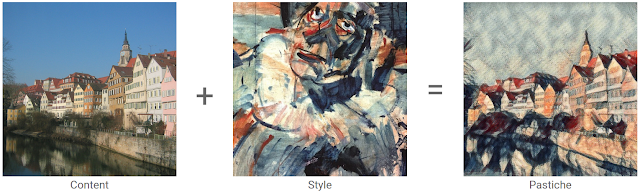

Content image: The Tübingen Neckarfront by Andreas Praefcke. Style painting: “Head of a Clown”, by Georges Rouault.
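The two requirements in the optimization described above can be written as two loss terms. In Gatys et al. [3], "the same kind of activations" for style is measured by comparing Gram matrices (channel-to-channel correlations) of the feature maps, while content is compared activation by activation. Below is a minimal NumPy sketch of those two terms, assuming the feature maps have already been extracted from a CNN. The function names and the simple mean-squared normalization are illustrative and differ from the paper's exact constants.

```python
import numpy as np

def gram_matrix(features):
    """Channel-by-channel correlations of a (channels, height, width) feature map."""
    c, h, w = features.shape
    flat = features.reshape(c, h * w)
    # Summing over spatial positions discards where things are
    # and keeps which channels tend to fire together.
    return flat @ flat.T / (h * w)

def content_loss(pastiche_feats, content_feats):
    """Mean squared difference between higher-layer activations."""
    return float(np.mean((pastiche_feats - content_feats) ** 2))

def style_loss(pastiche_feats, style_feats):
    """Mean squared difference between Gram matrices of lower-layer activations."""
    diff = gram_matrix(pastiche_feats) - gram_matrix(style_feats)
    return float(np.mean(diff ** 2))
```

The optimization then adjusts the pastiche pixels to reduce a weighted sum of the two losses. Since the Gram matrix ignores spatial arrangement, shuffling the positions in a feature map leaves the style loss essentially unchanged while the content loss grows, which is why style and content can be matched against two different images.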

The pastiches produced via this algorithm look spectacular:

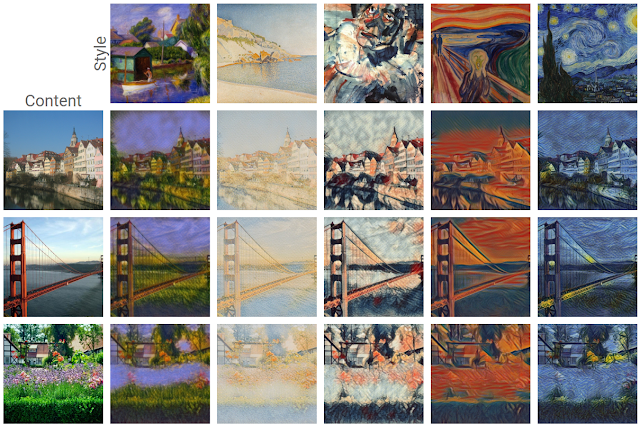

Figure adapted from L. Gatys et al. "A Neural Algorithm of Artistic Style" (2015).

This work is considered a breakthrough in the field of deep learning research because it provided the first proof of concept for neural network-based style transfer. Unfortunately, this method for stylizing an individual image is computationally demanding. For instance, in the first demos available on the web, one would upload a photo to a server, and then still have plenty of time to go grab a cup of coffee before a result was available.

This process was sped up significantly by subsequent research [4, 5] that recognized that this optimization problem may be recast as an image transformation problem, where one wishes to apply a single, fixed painting style to an arbitrary content image (e.g. a photograph). The problem can then be solved by teaching a feed-forward, deep convolutional neural network to alter a corpus of content images to match the style of a painting. The goal of the trained network is two-fold: maintain the content of the original image while matching the visual style of the painting.

As a result, what once took a few minutes for a single static image could now run in real time (e.g. applying style transfer to a live video). However, the increase in speed that allowed real-time style transfer came at a cost: a given style transfer network is tied to the style of a single painting, losing some of the flexibility of the original algorithm, which was not tied to any one style. This means that to build a style transfer system capable of modeling 100 paintings, one has to train and store 100 separate style transfer networks.

Our Contribution: Learning and Combining Multiple Styles

We started from the observation that many artists from the impressionist period employ similar brush stroke techniques and color palettes. Furthermore, paintings by a single artist, say Monet, are even more visually similar to one another.

Poppy Field (left) and Impression, Sunrise (right) by Claude Monet. Images from Wikipedia.

We leveraged this observation in our training of a machine learning system. That is, we trained a single system that is able to capture and generalize across many Monet paintings or even a diverse array of artists across genres. The pastiches produced are qualitatively comparable to those produced in previous work, while originating from the same style transfer network.

Pastiches produced by our single network, trained on 32 varied styles. These pastiches are qualitatively equivalent to those created by single-style networks. Image credit: (from top to bottom) content photographs by Andreas Praefcke, Rich Niewiroski Jr. and J.-H. Janßen, (from left to right) style paintings by William Glackens, Paul Signac, Georges Rouault, Edvard Munch and Vincent van Gogh.

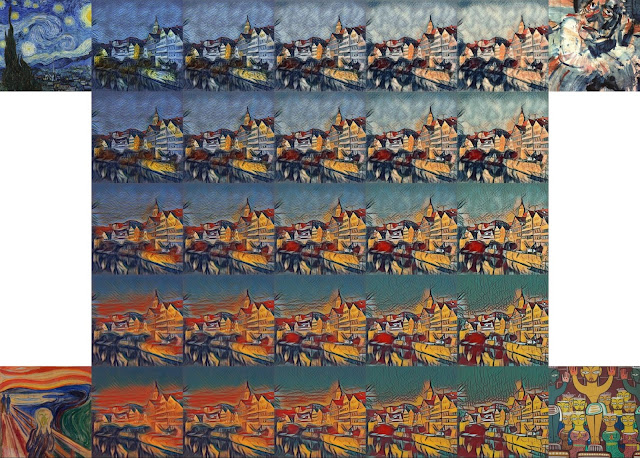

The technique we developed is simple to implement and is not memory intensive. Furthermore, our network, trained on several artistic styles, permits arbitrarily combining multiple painting styles in real time, as shown in the video above. Here are four styles being combined in different proportions on a photograph of Tübingen:

Unlike previous approaches to fast style transfer, we feel that this method of modeling multiple styles at the same time opens the door to exciting new ways for users to interact with style transfer algorithms, giving them the freedom not only to create new styles based on the mixture of several others, but also to do it in real time. Stay tuned for a future post on the Magenta blog, in which we will describe the algorithm in more detail and release the TensorFlow source code to run this model and demo yourself. We also recommend that you check out Nat & Lo’s fantastic video explanation on the subject of style transfer.

References

[1] Efros, Alexei A., and William T. Freeman. Image quilting for texture synthesis and transfer (2001).

[2] Hertzmann, Aaron, Charles E. Jacobs, Nuria Oliver, Brian Curless, and David H. Salesin. Image analogies (2001).

[3] Gatys, Leon A., Alexander S. Ecker, and Matthias Bethge. A Neural Algorithm of Artistic Style (2015).

[4] Ulyanov, Dmitry, Vadim Lebedev, Andrea Vedaldi, and Victor Lempitsky. Texture Networks: Feed-forward Synthesis of Textures and Stylized Images (2016).

[5] Johnson, Justin, Alexandre Alahi, and Li Fei-Fei. Perceptual Losses for Real-Time Style Transfer and Super-Resolution (2016).
