(转) Supercharging Style Transfer

Pastiche. A French word, it designates a work of art that imitates the style of another one (not to be confused with its more humorous Greek cousin, parody). Although it has been used for a long time in visual art, music and literature, pastiche has been getting mass attention lately with online forums dedicated to images that have been modified to be in the style of famous paintings. Using a technique known as style transfer, these images are generated by phone or web apps that allow a user to render their favorite picture in the style of a well known work of art.

Although users have already produced gorgeous pastiches using the current technology, we feel that it could be made even more engaging. Right now, each painting is its own island, so to speak: the user provides a content image, selects an artistic style and gets a pastiche back. But what if one could combine many different styles, exploring unique mixtures of well known artists to create an entirely unique pastiche?

Learning a representation for artistic style

In our recent paper titled “A Learned Representation for Artistic Style”, we introduce a simple method to allow a single deep convolutional style transfer network to learn multiple styles at the same time. The network, having learned multiple styles, is able to do style interpolation, where the pastiche varies smoothly from one style to another. Our method enables style interpolation in real-time as well, allowing this to be applied not only to static images, but also videos.

| Credit: awesome dog role played by Google Brain team office dog Picabo. |

In the video above, multiple styles are combined in real-time and the resulting style is applied using a single style transfer network. The user is provided with a set of 13 different painting styles and adjusts their relative strengths in the final style via sliders. In this demonstration, the user is an active participant in producing the pastiche.

A Quick History of Style Transfer

While transferring the style of one image to another has existed for nearly 15 years [1] [2], leveraging neural networks to accomplish it is both very recent and very fascinating. In “A Neural Algorithm of Artistic Style” [3], researchers Gatys, Ecker & Bethge introduced a method that uses deep convolutional neural network (CNN) classifiers. The pastiche image is found via optimization: the algorithm looks for an image which elicits the same kind of activations in the CNN’s lower layers - which capture the overall rough aesthetic of the style input (broad brushstrokes, cubist patterns, etc.) - yet produces activations in the higher layers - which capture the things that make the subject recognizable - that are close to those produced by the content image. From some starting point (e.g. random noise, or the content image itself), the pastiche image is progressively refined until these requirements are met.

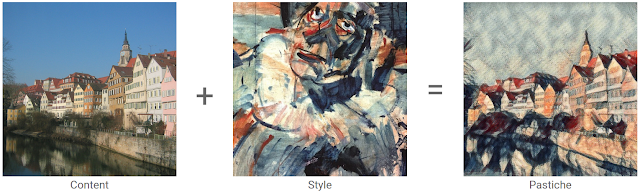

|

| Content image: The Tübingen Neckarfront by Andreas Praefcke, Style painting: “Head of a Clown”, by Georges Rouault. |

The pastiches produced via this algorithm look spectacular:

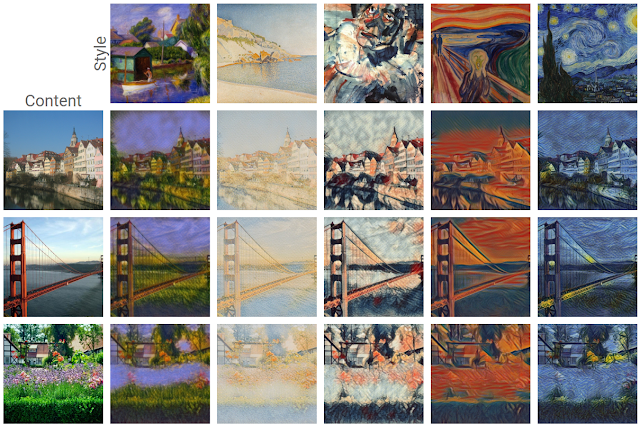

|

| Figure adapted from L. Gatys et al. "A Neural Algorithm of Artistic Style" (2015). |

This work is considered a breakthrough in the field of deep learning research because it provided the first proof of concept for neural network-based style transfer. Unfortunately this method for stylizing an individual image is computationally demanding. For instance, in the first demos available on the web, one would upload a photo to a server, and then still have plenty of time to go grab a cup of coffee before a result was available.

This process was sped up significantly by subsequent research [4, 5] that recognized that this optimization problem may be recast as an image transformation problem, where one wishes to apply a single, fixed painting style to an arbitrary content image (e.g. a photograph). The problem can then be solved by teaching a feed-forward, deep convolutional neural network to alter a corpus of content images to match the style of a painting. The goal of the trained network is two-fold: maintain the content of the original image while matching the visual style of the painting.

The end result of this was that what once took a few minutes for a single static image, could now be run real time (e.g. applying style transfer to a live video). However, the increase in speed that allowed real-time style transfer came with a cost - a given style transfer network is tied to the style of a single painting, losing some flexibility of the original algorithm, which was not tied to any one style. This means that to build a style transfer system capable of modeling 100 paintings, one has to train and store 100 separate style transfer networks.

Our Contribution: Learning and Combining Multiple Styles

We started from the observation that many artists from the impressionist period employ similar brush stroke techniques and color palettes. Furthermore, painting by say, Monet, are even more visually similar.

|

| Poppy Field (left) and Impression, Sunrise (right) by Claude Monet. Images from Wikipedia |

We leveraged this observation in our training of a machine learning system. That is, we trained a single system that is able to capture and generalize across many Monet paintings or even a diverse array of artists across genres. The pastiches produced are qualitatively comparable to those produced in previous work, while originating from the same style transfer network.

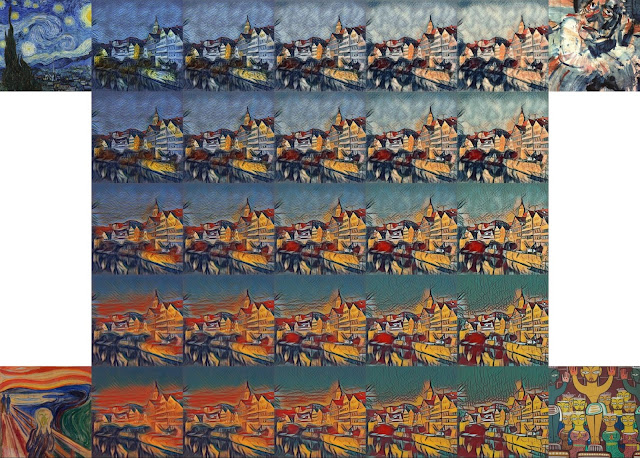

|

| Pastiches produced by our single network, trained on 32 varied styles. These pastiches are qualitatively equivalent to those created by single-style networks: Image Credit: (from top to bottom) content photographs by Andreas Praefcke, Rich Niewiroski Jr. and J.-H. Janßen, (from left to right) style paintings by William Glackens, Paul Signac, Georges Rouault, Edvard Munch and Vincent van Gogh. |

The technique we developed is simple to implement and is not memory intensive. Furthermore, our network, trained on several artistic styles, permits arbitrary combining multiple painting styles in real-time, as shown in the video above. Here are four styles being combined in different proportions on a photograph of Tübingen:

Unlike previous approaches to fast style transfer, we feel that this method of modeling multiple styles at the same time opens the door to exciting new ways for users to interact with style transfer algorithms, not only allowing the freedom to create new styles based on the mixture of several others, but to do it in real-time. Stay tuned for a future post on the Magenta blog, in which we will describe the algorithm in more detail and release the TensorFlow source code to run this model and demo yourself. We also recommend that you check out Nat & Lo’s fantastic video explanationon the subject of style transfer.

References

[1] Efros, Alexei A., and William T. Freeman. Image quilting for texture synthesis and transfer (2001).

[2] Hertzmann, Aaron, Charles E. Jacobs, Nuria Oliver, Brian Curless, and David H. Salesin. Image analogies (2001).

[3] Gatys, Leon A., Alexander S. Ecker, and Matthias Bethge. A Neural Algorithm of Artistic Style(2015).

[4] Ulyanov, Dmitry, Vadim Lebedev, Andrea Vedaldi, and Victor Lempitsky. Texture Networks: Feed-forward Synthesis of Textures and Stylized Images (2016).

[5] Johnson, Justin, Alexandre Alahi, and Li Fei-Fei. Perceptual Losses for Real-Time Style Transfer and Super-Resolution (2016).

(转) Supercharging Style Transfer的更多相关文章

- Image Style Transfer:多风格 TensorFlow 实现

·其实这是一个选修课的present,整理一下作为一篇博客,希望对你有用.讲解风格迁移的博客蛮多的,我就不过多的赘述了.讲一点几个关键的地方吧,当然最后的代码和ppt也希望对你有用. 1.引入: 风格 ...

- 项目总结四:神经风格迁移项目(Art generation with Neural Style Transfer)

1.项目介绍 神经风格转换 (NST) 是深部学习中最有趣的技术之一.它合并两个图像, 即 内容图像 C(content image) 和 样式图像S(style image), 以生成图像 G(ge ...

- DeepLearning.ai-Week4-Deep Learning & Art: Neural Style Transfer

1 - Task Implement the neural style transfer algorithm Generate novel artistic images using your alg ...

- 课程四(Convolutional Neural Networks),第四 周(Special applications: Face recognition & Neural style transfer) —— 2.Programming assignments:Art generation with Neural Style Transfer

Deep Learning & Art: Neural Style Transfer Welcome to the second assignment of this week. In thi ...

- Art: Neural Style Transfer

Andrew Ng deeplearning courese-4:Convolutional Neural Network Convolutional Neural Networks: Step by ...

- Perceptual Losses for Real-Time Style Transfer and Super-Resolution and Super-Resolution 论文笔记

Perceptual Losses for Real-Time Style Transfer and Super-Resolution and Super-Resolution 论文笔记 ECCV 2 ...

- pytorch实现style transfer

说是实现,其实并不是我自己实现的 亮出代码:https://github.com/yunjey/pytorch-tutorial/tree/master/tutorials/03-advanced/n ...

- fast neural style transfer图像风格迁移基于tensorflow实现

引自:深度学习实践:使用Tensorflow实现快速风格迁移 一.风格迁移简介 风格迁移(Style Transfer)是深度学习众多应用中非常有趣的一种,如图,我们可以使用这种方法把一张图片的风格“ ...

- 《Perceptual Losses for Real-Time Style Transfer and Super-Resolution》论文笔记

参考 http://blog.csdn.net/u011534057/article/details/55052304 代码 https://github.com/yusuketomoto/chain ...

随机推荐

- html5-css列表和表格

td{ /*width: 150px; height: 60px;*/ padding: 10px; text-align: center;} table{ width ...

- poj1741 树上的分治

题意是说给了n个点的树n<=10000,问有多少个点对例如(a,b)他们的之间的距离小于等于k 采用树的分治做 #include <iostream> #include <cs ...

- [openjudge-搜索]广度优先搜索之鸣人和佐助

题目描述 描述 佐助被大蛇丸诱骗走了,鸣人在多少时间内能追上他呢?已知一张地图(以二维矩阵的形式表示)以及佐助和鸣人的位置.地图上的每个位置都可以走到,只不过有些位置上有大蛇丸的手下,需要先打败大蛇丸 ...

- laravel中使用的PDF扩展包——laravel-dompdf和laravel-snappy

这两天项目中需要将HTML页面转换为PDF文件方便打印,我在网上搜了很多资料.先后尝试了laravel-dompdf和laravel-snappy两种扩展包,个人感觉laravel-snappy比较好 ...

- Hive批量删除历史分区

批量删除历史分区和数据可以采用如下操作: -- 删除20180101之前的所有分区 alter table example_table_name drop if exists partition (d ...

- linux常用命令:mv 命令

mv命令是move的缩写,可以用来移动文件或者将文件改名(move (rename) files),是Linux系统下常用的命令,经常用来备份文件或者目录. 1.命令格式: mv [选项] 源文件或目 ...

- 实现Winform 跨线程安全访问UI控件

在多线程操作WinForm窗体上的控件时,出现“线程间操作无效:从不是创建控件XXXX的线程访问它”,那是因为默认情况下,在Windows应用程序中,.NET Framework不允许在一个线程中直接 ...

- 系统调用号、errno

最近老需要看系统调用号,errno,所以这里记一下 CentOS Linux release 7.2.1511 (Core) 3.10.0-327.el7.x86_64 [root@localhost ...

- GUI颜色、字体设置对话框

%颜色设置对话框 uisetcolor %c 红色 c=uisetcolor %默认规定颜色 c=uisetcolor([ ]); %设置曲线颜色 h = plot([:]); c = uisetco ...

- JustOj 1036: 习题6.11 迭代法求平方根

题目描述 用迭代法求 .求平方根的迭代公式为: X[n+1]=1/2(X[n]+a/X[n]) 要求前后两次求出的得差的绝对值少于0.00001. 输出保留3位小数 输入 X 输出 X的平方根 样例输 ...