Calling Scala or Java packages from Python

Our project needs to operate on HDFS from Python. The usual choice is Python's `hdfs` package, but initializing it is cumbersome. It requires:

```python
from hdfs import Client  # the `hdfs` package (HdfsCLI), a WebHDFS client

# The NameNode's WebHDFS address has to be hard-coded
client = Client("http://127.0.0.1:50070")
```

As you can see, the Python client must be given the HDFS master (NameNode) address explicitly. In Scala this is not necessary:

```scala
import org.apache.hadoop.fs.{FileSystem, Path}
import org.apache.spark.{SparkConf, SparkContext}

// sc is an existing SparkContext; no NameNode address appears in the code
val hdfs = FileSystem.get(sc.hadoopConfiguration)
```

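When testing locally and then packaging for production, having to change this address in the Python code every time is inconvenient. In addition, during local debugging Hadoop is usually started only when it is actually needed. Scala code run locally works directly against the project's root directory, whereas Python going through Hadoop requires a local Hadoop service listening on localhost:50070. So I wanted Python to call the same Hadoop `FileSystem` API that the Scala code uses.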

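Reading the Spark source, I found that PySpark talks to Java through Py4J: it launches a JVM and connects to it through a gateway. That source turned out to be very useful, so I adapted it: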

#!/usr/bin/env python

# coding:utf-8 import re

import jieba

import atexit

import os

import select

import signal

import shlex

import socket

import platform

from subprocess import Popen, PIPE

from py4j.java_gateway import java_import, JavaGateway, GatewayClient

from common.Tools import loadData

from pyspark import SparkContext

from pyspark.serializers import read_int if "PYSPARK_GATEWAY_PORT" in os.environ:

gateway_port = int(os.environ["PYSPARK_GATEWAY_PORT"])

else:

SPARK_HOME = os.environ["SPARK_HOME"]

# Launch the Py4j gateway using Spark's run command so that we pick up the

# proper classpath and settings from spark-env.sh

on_windows = platform.system() == "Windows"

script = "./bin/spark-submit.cmd" if on_windows else "./bin/spark-submit"

submit_args = os.environ.get("PYSPARK_SUBMIT_ARGS", "pyspark-shell")

if os.environ.get("SPARK_TESTING"):

submit_args = ' '.join([

"--conf spark.ui.enabled=false",

submit_args

])

command = [os.path.join(SPARK_HOME, script)] + shlex.split(submit_args) # Start a socket that will be used by PythonGatewayServer to communicate its port to us

callback_socket = socket.socket(socket.AF_INET, socket.SOCK_STREAM)

callback_socket.bind(('127.0.0.1', 0))

callback_socket.listen(1)

callback_host, callback_port = callback_socket.getsockname()

env = dict(os.environ)

env['_PYSPARK_DRIVER_CALLBACK_HOST'] = callback_host

env['_PYSPARK_DRIVER_CALLBACK_PORT'] = str(callback_port) # Launch the Java gateway.

# We open a pipe to stdin so that the Java gateway can die when the pipe is broken

if not on_windows:

# Don't send ctrl-c / SIGINT to the Java gateway:

def preexec_func():

signal.signal(signal.SIGINT, signal.SIG_IGN) proc = Popen(command, stdin=PIPE, preexec_fn=preexec_func, env=env)

else:

# preexec_fn not supported on Windows

proc = Popen(command, stdin=PIPE, env=env) gateway_port = None

# We use select() here in order to avoid blocking indefinitely if the subprocess dies

# before connecting

while gateway_port is None and proc.poll() is None:

timeout = 1 # (seconds)

readable, _, _ = select.select([callback_socket], [], [], timeout)

if callback_socket in readable:

gateway_connection = callback_socket.accept()[0]

# Determine which ephemeral port the server started on:

gateway_port = read_int(gateway_connection.makefile(mode="rb"))

gateway_connection.close()

callback_socket.close()

if gateway_port is None:

raise Exception("Java gateway process exited before sending the driver its port number") # In Windows, ensure the Java child processes do not linger after Python has exited.

# In UNIX-based systems, the child process can kill itself on broken pipe (i.e. when

# the parent process' stdin sends an EOF). In Windows, however, this is not possible

# because java.lang.Process reads directly from the parent process' stdin, contending

# with any opportunity to read an EOF from the parent. Note that this is only best

# effort and will not take effect if the python process is violently terminated.

if on_windows:

# In Windows, the child process here is "spark-submit.cmd", not the JVM itself

# (because the UNIX "exec" command is not available). This means we cannot simply

# call proc.kill(), which kills only the "spark-submit.cmd" process but not the

# JVMs. Instead, we use "taskkill" with the tree-kill option "/t" to terminate all

# child processes in the tree (http://technet.microsoft.com/en-us/library/bb491009.aspx)

def killChild():

Popen(["cmd", "/c", "taskkill", "/f", "/t", "/pid", str(proc.pid)]) atexit.register(killChild) # Connect to the gateway

gateway = JavaGateway(GatewayClient(port=gateway_port), auto_convert=True) # Import the classes used by PySpark

java_import(gateway.jvm, "org.apache.spark.SparkConf")

java_import(gateway.jvm, "org.apache.spark.api.java.*")

java_import(gateway.jvm, "org.apache.spark.api.python.*")

java_import(gateway.jvm, "org.apache.spark.ml.python.*")

java_import(gateway.jvm, "org.apache.spark.mllib.api.python.*")

# TODO(davies): move into sql

java_import(gateway.jvm, "org.apache.spark.sql.*")

java_import(gateway.jvm, "org.apache.spark.sql.hive.*")

java_import(gateway.jvm, "scala.Tuple2")

java_import(gateway.jvm, "org.apache.hadoop.fs.{FileSystem, Path}")

java_import(gateway.jvm, "org.apache.hadoop.conf.Configuration")

java_import(gateway.jvm, "org.apache.hadoop.*")

java_import(gateway.jvm, "org.apache.spark.{SparkConf, SparkContext}")

jvm = gateway.jvm

conf = jvm.org.apache.spark.SparkConf()

conf.setMaster("local").setAppName("test hdfs")

sc = jvm.org.apache.spark.SparkContext(conf)

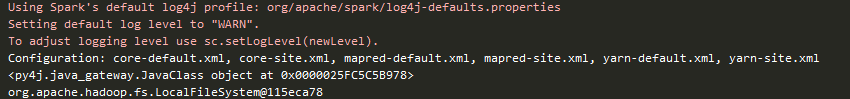

print(sc.hadoopConfiguration())

FileSystem = jvm.org.apache.hadoop.fs.FileSystem

print(repr(FileSystem))

Path = jvm.org.apache.hadoop.fs.Path

hdfs = FileSystem.get(sc.hadoopConfiguration())

hdfs.delete(Path("/DATA/*/*/TMP/KAIVEN/*"))

print(‘目录删除成功’)
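The least obvious step in the launcher above is the port handshake: Python listens on an ephemeral callback port, the JVM-side `PythonGatewayServer` connects back and writes the port its Py4J gateway listens on as a 4-byte big-endian integer, and `read_int` decodes it. The sketch below reproduces only that handshake with the standard library, assuming a thread as a stand-in for the JVM. `fake_gateway_server` and `wait_for_gateway_port` are hypothetical names for illustration, not Spark APIs, and the deadline replaces Spark's `proc.poll()` check.

```python
import select
import socket
import struct
import threading
import time


def read_int(stream):
    """Decode a 4-byte big-endian int, similar to pyspark.serializers.read_int."""
    data = stream.read(4)
    if len(data) < 4:
        raise EOFError("connection closed before 4 bytes arrived")
    return struct.unpack("!i", data)[0]


def fake_gateway_server(callback_port, gateway_port):
    """Hypothetical stand-in for the JVM side (PythonGatewayServer)."""
    with socket.create_connection(("127.0.0.1", callback_port)) as conn:
        # Java's DataOutputStream.writeInt() also sends 4 big-endian bytes
        conn.sendall(struct.pack("!i", gateway_port))


def wait_for_gateway_port(start_server, max_wait=10.0):
    """Open an ephemeral callback port, start the server, wait for its report."""
    callback_socket = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    callback_socket.bind(("127.0.0.1", 0))  # port 0 = let the OS pick
    callback_socket.listen(1)
    start_server(callback_socket.getsockname()[1])

    gateway_port = None
    deadline = time.monotonic() + max_wait
    try:
        # select() with a 1 s timeout instead of a blocking accept(), so the
        # loop can give up (Spark checks proc.poll(); this sketch uses a deadline)
        while gateway_port is None and time.monotonic() < deadline:
            readable, _, _ = select.select([callback_socket], [], [], 1)
            if callback_socket in readable:
                conn = callback_socket.accept()[0]
                with conn, conn.makefile(mode="rb") as stream:
                    gateway_port = read_int(stream)
    finally:
        callback_socket.close()
    if gateway_port is None:
        raise RuntimeError("gateway did not report its port in time")
    return gateway_port


if __name__ == "__main__":
    def start(callback_port):
        threading.Thread(target=fake_gateway_server,
                         args=(callback_port, 25333)).start()

    print(wait_for_gateway_port(start))  # prints 25333
```

Listening before starting the "server" matters: the connection attempt is queued by `listen(1)` even if `select()` has not been called yet, so there is no race.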

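See `pyspark.java_gateway.launch_gateway`, the function PySpark uses to start the JVM. I made a small modification to it: `java_import` brings in

`org.apache.hadoop.fs.FileSystem`, `org.apache.hadoop.fs.Path` and

`org.apache.hadoop.conf.Configuration`.

Because the JVM is launched through spark-submit, it loads the same classpath and settings (spark-env.sh and the Hadoop configuration) that a Scala job would, so the SparkContext built from this SparkConf is configured automatically, just as in Scala. `java_import` itself only makes the short class names available on `gateway.jvm`.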
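If a regular PySpark `SparkContext` is acceptable, a shorter route (an alternative, not the author's approach) is to reuse the gateway PySpark already starts. It relies on `sc._jvm` and `sc._jsc`, which are private, undocumented PySpark attributes: widely used for this purpose, but subject to change between Spark versions. `delete_glob` is a hypothetical helper name; the Hadoop calls (`FileSystem.get`, `globStatus`, `getPath`, `delete(path, recursive)`) are the same ones used above.

```python
def delete_glob(sc, pattern):
    """Delete every path matching `pattern` via Hadoop's FileSystem.

    `sc` is a pyspark.SparkContext. `sc._jvm` (the Py4J JVM view) and
    `sc._jsc` (the JavaSparkContext) are private PySpark attributes.
    Returns the deleted paths as strings.
    """
    hadoop = sc._jvm.org.apache.hadoop
    fs = hadoop.fs.FileSystem.get(sc._jsc.hadoopConfiguration())
    # FileSystem.delete() takes a literal path, so expand the wildcard first;
    # globStatus() may return null (None) when nothing matches
    statuses = fs.globStatus(hadoop.fs.Path(pattern)) or []
    deleted = []
    for status in statuses:
        path = status.getPath()
        if fs.delete(path, True):  # True = delete directories recursively
            deleted.append(path.toString())
    return deleted
```

Usage, in a PySpark session: `sc = SparkContext(master="local", appName="test hdfs")`, then `delete_glob(sc, "/DATA/*/*/TMP/KAIVEN/*")`. No NameNode address appears in the Python code; it comes from the Hadoop configuration the JVM loaded.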
