Deep Learning Specialization 笔记

1. numpy中的几种矩阵相乘:

# x1: axn, x2:nxb

np.dot(x1, x2): axn * nxb

np.outer(x1, x2): nx1*1xn # 实质为: np.ravel(x1)*np.ravel(x2)

np.multiply(x1, x2): [[x1[0][0]*x2[0][0], x1[0][1]*x2[0][1], ...]

2. Bugs' hometown

Many software bugs in deep learning come from having matrix/vector dimensions that don't fit. If you can keep your matrix/vector dimensions straight you will go a long way toward eliminating many bugs.

3. Common steps for pre-processing a new dataset are:

- Figure out the dimensions and shapes of the problem (m_train, m_test, num_px, ...)

- Reshape the datasets such that each example is now a vector of size (num_px \* num_px \* 3, 1)

- "Standardize" the data

4. Unstructured data:

Unstructured data is a generic label for describing data that is not contained in a database or some other type of data structure . Unstructured data can be textual or non-textual. Textual unstructured data is generated in media like email messages, PowerPoint presentations, Word documents, collaboration software and instant messages. Non-textual unstructured data is generated in media like JPEG images, MP3 audio files and Flash video files

5. Chapter of Activation Function:

Choice of activation function:

- If output is either 0 or 1 -- sigmoid for the output layer and the other units on ReLU.

- Except for the output layer, tanh does better than sigmoid.

- ReLU ---level up--> leaky ReLU.

Why are ReLU and leaky ReLU often superior to sigmoid and tanh?

-- The derivatives of the former ones is much bigger than 0, so the learning would be much faster.

A linear hidden layer is more or less useless, yet the activation function is a exception.

6. Regularization:

Initially, \(J(w, b) = \frac{1}{m} * \sum_{i=1}^{m}{L({\hat{Y}^(i), y^{(i)}}) + \frac{\lambda}{2*m}||w||_2^2}\)

L2 regularization: \(\frac{\lambda}{2*m}\sum_{j=1}^{n_x}||w_j||^2 = \frac{\lambda}{2*m}||w||_2\)

One aspect that tanh is better thatn sigmoid(in terms of regularization) -- When x is very close to 0, the derivative of tanh(x) is almost linear, while that of the sigmoid(x) is alomst 0.

Dropout:

Method: Make certain values of weights be zeros randomly, just like -- W= np.multiply(W, C), where C is a 0-1 array.

Matters need attention: Don't use dropout in test procedure -- Time costly, result randomly.

Work principle:

Intuition: Can't rely on any one feature, so have to spread out weights(shrinking weights).

Besides, you can set different rates of "Dropout", like lower ones on more complex layer, which are called "key prop".

- Data augmentation:

Do some operation on your data images, such as flipping, rotation, zooming, etc, without changing their labels, in order to prevent from over-fitting on some aspects, such as the direction of faces, the size of cats.

- Early stopping.

7. Solution to "gradient vanishing or exploding":

Set WL = np.random.randn(shape) * np.sqrt(\(\frac{2}{n^{[L-1]}}\)) if activation_function == "ReLU"

else: np.random.randn(shape) * np.sqrt(\(\frac{1}{n^{[L-1]}}\)) or np.sqrt(\(\sqrt{\frac{2}{n^{[L-1]}+n^{[L]}}}\))(Xavier initialization)

8. Gradient Checking:

for i in range(len(\(\theta\))):

to check if (d\(\theta_{approx}[i] = \frac{J(\theta_1, \theta_2, ..., \theta_i+\epsilon, ...) - J(\theta_1, \theta_2, ..., \theta_i-\epsilon, ...)}{2\epsilon}\)) ?= \(d\theta[i] = \frac{\partial{J}}{\partial{\theta_i}}\)

<==> \(d\theta_{approx} ?= d\theta\)

<==> \(\frac{||d\theta_{approx} - d\theta||_2}{||d\theta_{approx}||_2+||d\theta||_2}\) in an accent range: \(10^{-7}\) is great, and \(10^{-3}\) is wrong.

Tips:

- Only to debug, instead of training.

- If algorithm fails grad check, look at components(\(db^{[L]}, dw^{[L]}\)) to try to identify bug.

- Remember regularization.

- Doesn't work together with dropout.

- Run at random initialization; perhaps again after some training.

9. Exponentially weighted averages:

Definition: let \(V_{t} = {\beta}V_{t-1} + (1 - \beta)\theta_t\) (_V_s are the averages, and the _\(\theta\)_s are the initial discrete data).

and \(V_{t} = \frac{V_{t}}{1 - {\beta}^t}\) (To correct initial bias).

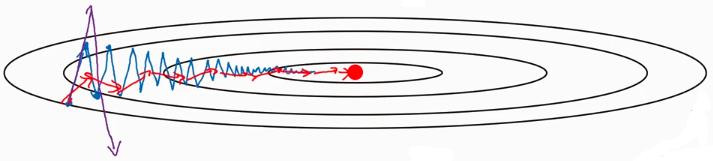

Usage: when it comes to this situation:

Since the average of the distance vertical movement is almost zeros, you can use EWA to average it, prevent it from divergence.

On iteration t:

Compute dW on the current mini-batch

\(v_{dW} = {\beta}v_{dW} + (1 - \beta)dW\)

\(v_{db} = {\beta}v_{db} + (1 - \beta)db\)

\(W = W - {\alpha}v_{dW}, b = b - {\alpha}v_{db}\)

Hyperparameters: \(\alpha\), \({\beta}(=0.9)\)

Deep Learning Specialization 笔记的更多相关文章

- Deep Learning论文笔记之(四)CNN卷积神经网络推导和实现(转)

Deep Learning论文笔记之(四)CNN卷积神经网络推导和实现 zouxy09@qq.com http://blog.csdn.net/zouxy09 自己平时看了一些论文, ...

- Deep Learning论文笔记之(八)Deep Learning最新综述

Deep Learning论文笔记之(八)Deep Learning最新综述 zouxy09@qq.com http://blog.csdn.net/zouxy09 自己平时看了一些论文,但老感觉看完 ...

- Deep Learning论文笔记之(六)Multi-Stage多级架构分析

Deep Learning论文笔记之(六)Multi-Stage多级架构分析 zouxy09@qq.com http://blog.csdn.net/zouxy09 自己平时看了一些 ...

- 【deep learning学习笔记】注释yusugomori的DA代码 --- dA.h

DA就是“Denoising Autoencoders”的缩写.继续给yusugomori做注释,边注释边学习.看了一些DA的材料,基本上都在前面“转载”了.学习中间总有个疑问:DA和RBM到底啥区别 ...

- Deep Learning论文笔记之(一)K-means特征学习

Deep Learning论文笔记之(一)K-means特征学习 zouxy09@qq.com http://blog.csdn.net/zouxy09 自己平时看了一些论文,但老感 ...

- Deep Learning论文笔记之(三)单层非监督学习网络分析

Deep Learning论文笔记之(三)单层非监督学习网络分析 zouxy09@qq.com http://blog.csdn.net/zouxy09 自己平时看了一些论文,但老感 ...

- Spectral Norm Regularization for Improving the Generalizability of Deep Learning论文笔记

Spectral Norm Regularization for Improving the Generalizability of Deep Learning论文笔记 2018年12月03日 00: ...

- Deep Learning论文笔记之(四)CNN卷积神经网络推导和实现

https://blog.csdn.net/zouxy09/article/details/9993371 自己平时看了一些论文,但老感觉看完过后就会慢慢的淡忘,某一天重新拾起来的时候又好像没有看过一 ...

- [置顶]

Deep Learning 学习笔记

一.文章来由 好久没写原创博客了,一直处于学习新知识的阶段.来新加坡也有一个星期,搞定签证.入学等杂事之后,今天上午与导师确定了接下来的研究任务,我平时基本也是把博客当作联机版的云笔记~~如果有写的不 ...

随机推荐

- Spring基于注解开发的注解使用之AOP(部分源代码分析)

AOP底层实现动态代理 1.导入spring-aop包依赖 <!--aopV1--> <dependency> <groupId>org.springframewo ...

- 使用Python对MySQL数据库插入二十万条数据

1.当我们测试的时候需要大量的数据的时候,往往需要我们自己造数据,一条一条的加是不现实的,这时候就需要使用脚本来批量生成数据了. import pymysql import random import ...

- (08)-Python3之--类和对象

1.定义 类:类是抽象的,一类事物的共性的体现. 有共性的属性和行为. 对象:具体化,实例化.有具体的属性值,有具体做的行为. 一个类 对应N多个对象. 类包含属性以及方法. class 类名: 属 ...

- flume agent的内部原理

flume agent 内部原理 1.Source采集数据,EventBuilder.withBody(body)将数据封装成Event对象,source.getChannelProcessor( ...

- Dubbo 总结:关于 Dubbo 的重要知识点

一 重要的概念 1.1 什么是 Dubbo? Apache Dubbo (incubating) |ˈdʌbəʊ| 是一款高性能.轻量级的开源Java RPC 框架,它提供了三大核心能力:面向接口的远 ...

- 如何在windows下切换node版本

安装nvm 最近的项目中,一个是用vue项目开发,一个是使用react开发,但是ant design pro使用了umi框架,所需要的node版本>10.0.0,vue那个项目中又不兼容node ...

- swap交换2变量

#define swap(x,y) {(x)=(x)+(y); (y)=(x)-(y); (x)=(x)-(y);} void swap(int i, int offset){ int temp; t ...

- Java并发包源码学习系列:阻塞队列实现之LinkedTransferQueue源码解析

目录 LinkedTransferQueue概述 TransferQueue 类图结构及重要字段 Node节点 前置:xfer方法的定义 队列操作三大类 插入元素put.add.offer 获取元素t ...

- thymeleaf第一篇:什么是-->为什么要使用-->有啥好处这玩意

Thymeleaf3.0版本官方地址 1 Introducing Thymeleaf Thymeleaf 是一个跟 Velocity.FreeMarker 类似的模板引擎,它可以完全替代 JSP . ...

- 【STM32】时钟

1. 在STM32中,有五个时钟源,为HSI.HSE.LSI.LSE.PLL: ① HSI是高速内部时钟,RC振荡器,频率为8MHz: ② HSE是高速外部时钟,可接石英/陶瓷谐振器,或者接外部时钟源 ...