Introducing Deep Reinforcement Learning

The manuscript of Deep Reinforcement Learning is available now! It significantly improves on Deep Reinforcement Learning: An Overview, which has received 100+ citations, by extending the overview's latest version, released more than one year ago, from 70 pages to 150 pages.

It draws a big picture of deep reinforcement learning (RL), with many details, and covers contemporary work in historical context. It endeavours to answer the following questions: 1) Why deep? 2) What is the state of the art? and 3) What are the issues, and what are potential solutions? It aims to help those who want to become more familiar with deep RL, and to serve as a reference for people interested in this fascinating area, such as professors, researchers, students, engineers, managers, and investors. Shortcomings and mistakes are inevitable; comments and criticisms are welcome.

The manuscript briefly introduces AI, machine learning, and deep learning, and provides a mini tutorial on reinforcement learning. The following figure illustrates the relationships among these concepts, along with the major topics in machine learning and AI. Deep reinforcement learning is reinforcement learning integrated with deep learning, that is, with deep artificial neural networks. A blog is dedicated to Resources for Deep Reinforcement Learning.

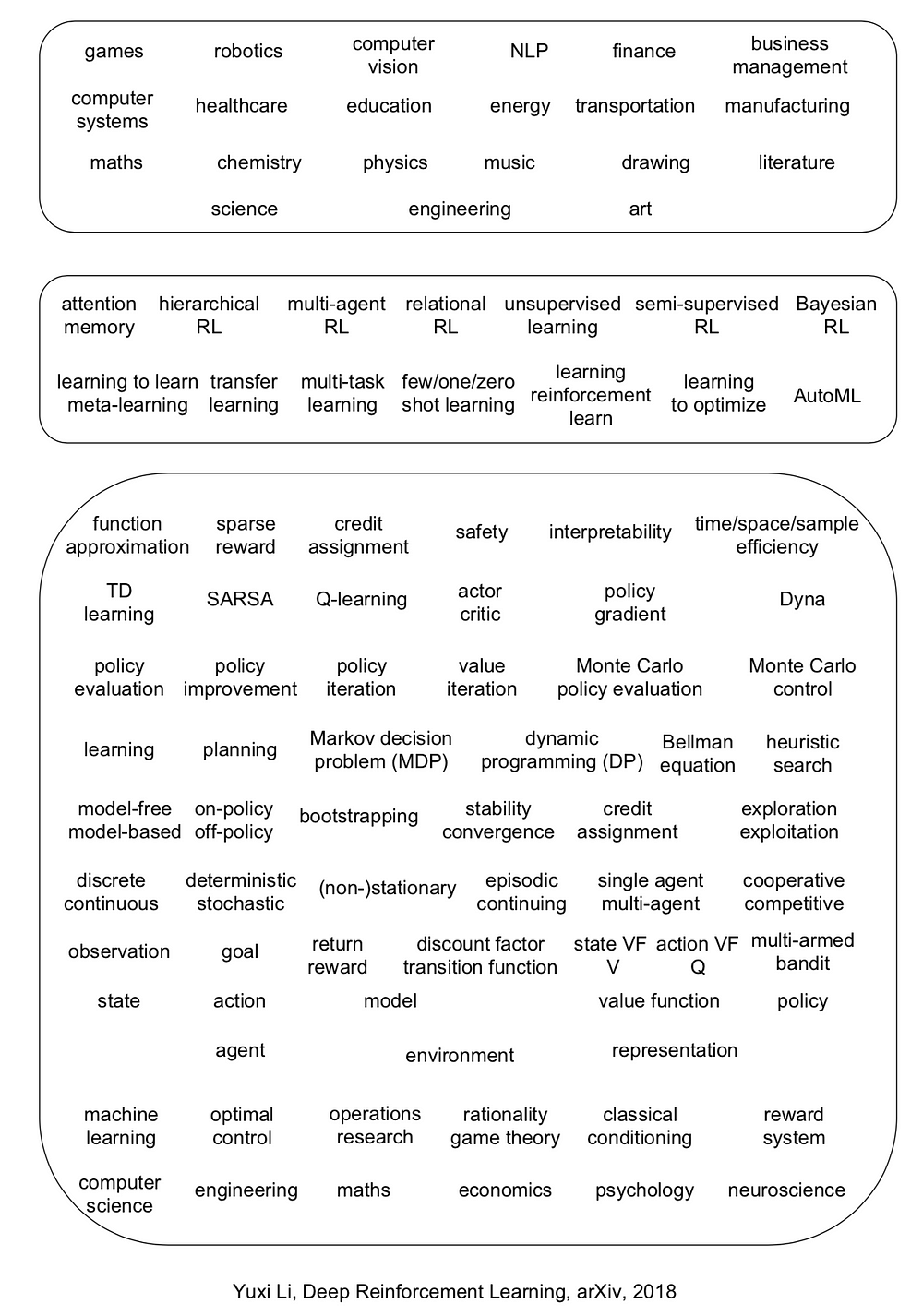

The manuscript covers six core elements: value function, policy, reward, model, exploration vs. exploitation, and representation; six important mechanisms: attention and memory, unsupervised learning, hierarchical RL, multi-agent RL, relational RL, and learning to learn; and twelve applications: games, robotics, natural language processing (NLP), computer vision, finance, business management, healthcare, education, energy, transportation, computer systems, and, as a single category, science, engineering, and art.
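Two of these core elements, the value function and exploration vs. exploitation, meet whenever an agent picks an action. As a minimal illustration, using the common textbook technique of ε-greedy selection (not necessarily how the manuscript presents it), the agent picks a random action with probability ε and otherwise the action with the highest estimated value. The function name and parameters below are illustrative.

```python
import random
from collections.abc import Sequence


def epsilon_greedy(q_values: Sequence[float], epsilon: float, rng: random.Random) -> int:
    """Pick an action index from estimated action values (illustrative helper).

    With probability `epsilon`, explore by choosing a uniformly random action;
    otherwise exploit by choosing an action with the highest estimated value.
    """
    if not 0.0 <= epsilon <= 1.0:
        raise ValueError("epsilon must be in [0, 1]")
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))  # explore
    best = max(q_values)
    # Exploit; break ties uniformly at random among the best actions.
    best_actions = [a for a, q in enumerate(q_values) if q == best]
    return rng.choice(best_actions)


if __name__ == "__main__":
    rng = random.Random(0)
    q = [0.1, 0.5, 0.2]
    picks = [epsilon_greedy(q, epsilon=0.1, rng=rng) for _ in range(1000)]
    # Most picks are the greedy action 1 (expected fraction 0.9 + 0.1/3).
    print(picks.count(1) / len(picks))
```

Setting ε to 0 gives a purely greedy (exploiting) policy, and setting it to 1 gives a purely random (exploring) one; in practice ε is often decayed over training.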

Deep reinforcement learning has made exceptional achievements: DQN applied to Atari games ignited the current wave of deep RL, and AlphaGo (Zero) and DeepStack set landmarks for AI. Many new algorithms and architectures have been invented for deep RL, e.g., DQN, A3C, TRPO, PPO, DDPG, Trust-PCL, GPS, UNREAL, and DNC. Moreover, deep RL has enjoyed abundant and diverse applications, e.g., Capture the Flag, Dota 2, StarCraft II, robotics, character animation, conversational AI, neural architecture design (AutoML), data center cooling, recommender systems, data augmentation, model compression, combinatorial optimization, program synthesis, theorem proving, medical imaging, music, and chemical retrosynthesis. A blog is dedicated to Reinforcement Learning applications.

In general, RL is probably helpful if a problem can be regarded as, or transformed into, a sequential decision-making problem, and if states, actions, and possibly rewards can be constructed; sometimes a problem may not look like an RL problem on the surface. Roughly speaking, if a task involves some manually designed “strategy”, there is a chance for reinforcement learning to help. Creativity would push the frontiers of deep RL further with respect to core elements, important mechanisms, and applications.
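As a minimal sketch of casting a task as a sequential decision-making problem, the code below defines a hypothetical five-state corridor (states on a line, two actions, and a reward only at the goal) and learns action values with tabular Q-learning instead of relying on a hand-designed rule. The environment, its `step` function, and all hyperparameters are illustrative assumptions, not taken from the manuscript.

```python
from __future__ import annotations

import random

# Hypothetical toy task: states 0..N_STATES-1 on a line, actions 0 = left, 1 = right.
# The reward is 1.0 only when the agent reaches the rightmost (terminal) state.
N_STATES = 5
ACTIONS = (0, 1)


def step(state: int, action: int) -> tuple[int, float, bool]:
    """Environment dynamics: return (next_state, reward, done)."""
    next_state = max(0, state - 1) if action == 0 else min(N_STATES - 1, state + 1)
    done = next_state == N_STATES - 1
    reward = 1.0 if done else 0.0
    return next_state, reward, done


def q_learning(episodes: int = 1000, alpha: float = 0.1, gamma: float = 0.9,
               seed: int = 0) -> list[list[float]]:
    """Tabular Q-learning with a uniformly random behavior policy.

    Q-learning is off-policy, so it can learn greedy action values while
    behaving randomly; this keeps the sketch short.
    """
    rng = random.Random(seed)
    q = [[0.0 for _ in ACTIONS] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            action = rng.choice(ACTIONS)
            next_state, reward, done = step(state, action)
            # Bootstrap from the best next action unless the episode ended.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q


if __name__ == "__main__":
    q = q_learning()
    # Greedy action for each non-terminal state after learning.
    greedy = [max(ACTIONS, key=lambda a: q[s][a]) for s in range(N_STATES - 1)]
    print(greedy)
```

After training, the greedy policy is expected to choose 1 (right) in every non-terminal state. Deep RL keeps the same loop but replaces the table `q` with a neural network when there are too many states to enumerate.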

Despite these successes, deep RL faces many issues, such as credit assignment, sparse rewards, sample efficiency, instability, divergence, interpretability, and safety; even reproducibility is an issue.
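Sparse rewards and credit assignment can be made concrete with the discounted return G_t = r_t + γ G_{t+1}, where r_t denotes the reward received after step t. A minimal Python sketch, assuming a hypothetical 10-step episode rewarded only at its final step: every earlier action receives credit only through discounting, so this single signal cannot tell which of those actions actually mattered.

```python
def discounted_returns(rewards: list[float], gamma: float) -> list[float]:
    """Compute G_t = r_t + gamma * G_{t+1} for every step of one episode.

    Iterating backwards lets each return reuse the one after it.
    """
    returns = [0.0] * len(rewards)
    g = 0.0
    for t in reversed(range(len(rewards))):
        g = rewards[t] + gamma * g
        returns[t] = g
    return returns


if __name__ == "__main__":
    # Hypothetical sparse-reward episode: nothing until a success on the last step.
    rewards = [0.0] * 9 + [1.0]
    for t, g in enumerate(discounted_returns(rewards, gamma=0.9)):
        print(t, round(g, 4))
```

Here the first step's return is only 0.9**9 ≈ 0.387, and every step's return is fully determined by the single final reward; longer episodes or smaller γ shrink that signal further.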

Six research directions are proposed as both challenges and opportunities. There has already been some progress in these directions, e.g., Dopamine, TStarBots, MOREL, GQN, visual reasoning, neural-symbolic learning, UPN, causal InfoGAN, and meta-gradient RL, along with many of the applications above.

- systematic, comparative study of deep RL algorithms

- “solve” multi-agent problems

- learn from entities, not just raw inputs

- design an optimal representation for RL

- AutoRL

- develop killer applications for (deep) RL

It is desirable for RL to be integrated more deeply with AI: to bring more intelligence to the end-to-end mapping from raw inputs to decisions, to incorporate knowledge, to have common sense, to be more efficient and more interpretable, and to avoid obvious mistakes, rather than working as a black box.

Deep learning and reinforcement learning, selected as MIT Technology Review 10 Breakthrough Technologies in 2013 and 2017 respectively, will play crucial roles in achieving artificial general intelligence. David Silver proposed a conjecture: artificial intelligence = reinforcement learning + deep learning (AI = RL + DL). We will see both deep learning and reinforcement learning prosper in the coming years and beyond. Deep learning is exploding; it is the right time to nurture, educate, and lead the market for reinforcement learning.

Deep learning, in this third wave of AI, will have an even deeper influence, as its many achievements already suggest. Reinforcement learning, as a more general learning and decision-making paradigm, will deeply influence deep learning, machine learning, and artificial intelligence in general.
