Natural Language 23_Text Classification with NLTK


QQ:231469242

NLTK enthusiasts are welcome to get in touch.

https://www.pythonprogramming.net/text-classification-nltk-tutorial/?completed=/wordnet-nltk-tutorial/

Text Classification with NLTK

Now that we're comfortable with NLTK, let's try to tackle text classification. The goal of text classification can be pretty broad. Maybe we're trying to classify text as being about politics or the military. Maybe we're trying to classify it by the gender of the author who wrote it. A fairly popular text classification task is to identify a body of text as either spam or not spam, for things like email filters. In our case, we're going to try to create a sentiment analysis algorithm.

To do this, we're going to start by using the movie reviews data set that is part of the NLTK corpora. From there, we'll try to use words as "features" that are part of either a positive or a negative movie review. The NLTK movie_reviews data set contains the reviews, and they are already labeled as positive or negative. This means we can both train and test with this data. First, let's wrangle our data.

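```python
import nltk
import random
from nltk.corpus import movie_reviews

# The corpus data must be downloaded once beforehand: nltk.download('movie_reviews')

# Build (list of words, label) pairs for every review in every category
documents = [(list(movie_reviews.words(fileid)), category)
             for category in movie_reviews.categories()
             for fileid in movie_reviews.fileids(category)]

# Shuffle so that later train/test splits mix positive and negative reviews
random.shuffle(documents)

print(documents[1])

# Collect every word in the corpus, lowercased
all_words = []
for w in movie_reviews.words():
    all_words.append(w.lower())

# Count how often each word occurs
all_words = nltk.FreqDist(all_words)

print(all_words.most_common(15))
print(all_words["stupid"])
```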

It may take a moment to run this script, as the movie reviews dataset is somewhat large. Let's cover what is happening here.

After importing the data set we want, you see:

```python
documents = [(list(movie_reviews.words(fileid)), category)
             for category in movie_reviews.categories()
             for fileid in movie_reviews.fileids(category)]
```

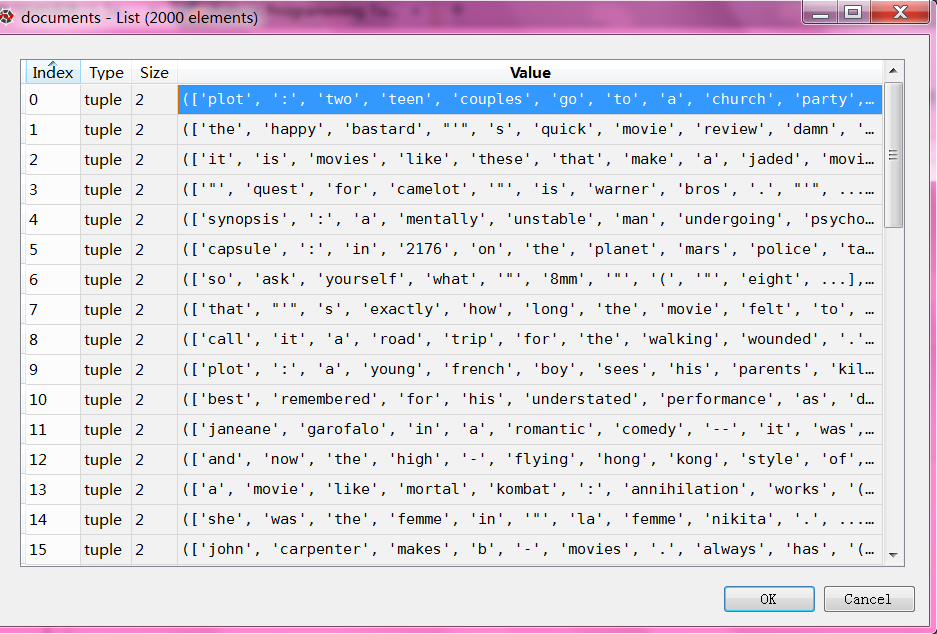

In plain English, the code above does this: for each category (we have pos and neg), take all of the file IDs (each review has its own ID), then store the word-tokenized version of that review (a list of words) followed by its positive or negative label, all in one big list.
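The nested comprehension can be tried out without downloading the corpus. The sketch below swaps in a hypothetical in-memory `toy_corpus` dict (category → {file ID → word list}) and three small stand-in functions that mimic `movie_reviews.categories()`, `movie_reviews.fileids(category)` and `movie_reviews.words(fileid)`; the file IDs and words are made up for illustration.

```python
# Hypothetical stand-in for the movie_reviews corpus: category -> {fileid: words}
toy_corpus = {
    "neg": {
        "neg/a.txt": ["dull", "plot"],
        "neg/b.txt": ["awful", "acting"],
    },
    "pos": {
        "pos/c.txt": ["great", "fun"],
    },
}


def categories():
    """Mimics movie_reviews.categories(): sorted category names."""
    return sorted(toy_corpus)


def fileids(category):
    """Mimics movie_reviews.fileids(category): sorted file IDs in one category."""
    return sorted(toy_corpus[category])


def words(fileid):
    """Mimics movie_reviews.words(fileid): the tokens of one file."""
    category = fileid.split("/")[0]
    return toy_corpus[category][fileid]


# Same shape as the tutorial: the outer loop runs over categories,
# the inner loop over the file IDs of that category.
documents = [(list(words(fileid)), category)
             for category in categories()
             for fileid in fileids(category)]

for doc in documents:
    print(doc)
# (['dull', 'plot'], 'neg')
# (['awful', 'acting'], 'neg')
# (['great', 'fun'], 'pos')
```

Because the outer loop is over categories, every `neg` document lands before every `pos` document, which is why the list gets shuffled next.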

Next, we use random to shuffle our documents. This is because we're going to be training and testing. If we left them in order, chances are we'd train on all of the negatives, some positives, and then test only against positives. We don't want that, so we shuffle the data.
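A small sketch of why the shuffle matters, assuming a simple hold-out split that keeps the last few documents for testing (the helper `holdout_split` and the split size are hypothetical choices for illustration). With a category-ordered toy list, the unshuffled test slice contains only one label; shuffling a copy mixes the labels while keeping every document.

```python
import random
from collections import Counter

# Toy labelled documents in category order, like the unshuffled corpus list:
# six 'neg' reviews followed by six 'pos' reviews (made-up single-word texts)
documents = ([([f"bad{i}"], "neg") for i in range(6)]
             + [([f"good{i}"], "pos") for i in range(6)])


def holdout_split(docs, n_test):
    """Hypothetical split: train on all but the last n_test docs, test on those."""
    return docs[:-n_test], docs[-n_test:]


# Without shuffling, the test slice comes from the tail of the list: only 'pos'
train, test = holdout_split(documents, 4)
print("unshuffled test labels:", Counter(label for _, label in test))

# Shuffle a copy (the tutorial shuffles in place with random.shuffle);
# a seeded Random instance makes this sketch repeatable
shuffled = documents[:]
random.Random(0).shuffle(shuffled)
train_s, test_s = holdout_split(shuffled, 4)
print("shuffled test labels:", Counter(label for _, label in test_s))
```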

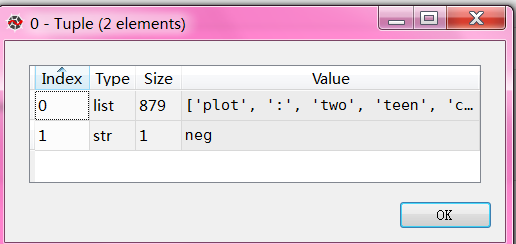

Then, just so you can see the data you are working with, we print out documents[1], which is a tuple whose first element is the list of words in the review and whose second element is the "pos" or "neg" label.

Next, we want to collect all the words we find, so we have a massive list of typical words. From there, we can compute a frequency distribution to find out which words are most common. As you will see, the most popular "words" are actually things like punctuation, "the," "a" and so on, but we quickly get to legitimate words. We intend to store a few thousand of the most popular words, so this shouldn't be a problem.

```python
print(all_words.most_common(15))
```

The above gives you the 15 most common words. You can also find out how many occurrences a word has by doing:

```python
print(all_words["stupid"])
```
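`nltk.FreqDist` is a subclass of Python's `collections.Counter`, so the counting step can be sketched with the standard library alone. The snippet below uses a made-up token list in place of `movie_reviews.words()`, lowercases each token as the tutorial's loop does (so "The" and "the" are counted together), then queries the most common words and a single word's count. With a `Counter`, looking up a word that never occurs returns 0 instead of raising an error.

```python
from collections import Counter

# Made-up tokens standing in for movie_reviews.words()
tokens = ["The", "movie", "was", "stupid", ";", "the", "ending", "was",
          "the", "worst"]

# Lowercase before counting, as the tutorial's loop does, so "The" and "the"
# are counted as the same word
all_words = Counter(w.lower() for w in tokens)

print(all_words.most_common(2))  # [('the', 3), ('was', 2)]
print(all_words["stupid"])       # 1
print(all_words["brilliant"])    # 0 (a word that never occurs counts as zero)
```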

Next up, we'll begin storing our words as features of either positive or negative movie reviews.
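As a hedged preview of that step, here is one common way to turn words into features, sketched under assumptions rather than taken from this post: keep the most frequent words as a vocabulary and describe each review by whether each vocabulary word appears in it. The helper `word_presence_features`, the vocabulary size and the toy reviews are all hypothetical.

```python
from collections import Counter

# Hypothetical toy reviews, each a (list of words, label) pair like `documents`
documents = [
    (["a", "stupid", "boring", "plot"], "neg"),
    (["a", "great", "fun", "plot"], "pos"),
    (["great", "acting", "not", "boring"], "pos"),
]

# Vocabulary: the most frequent lowercased words across all reviews
# (the size 5 is arbitrary; the tutorial plans to keep a few thousand)
counts = Counter(w.lower() for words, _ in documents for w in words)
vocabulary = [w for w, _ in counts.most_common(5)]


def word_presence_features(words, vocabulary):
    """Hypothetical helper: map a review to {vocabulary word: present?}."""
    present = {w.lower() for w in words}
    return {w: (w in present) for w in vocabulary}


featuresets = [(word_presence_features(words, vocabulary), label)
               for words, label in documents]

for features, label in featuresets:
    print(label, features)
```

A list of (feature dict, label) pairs like `featuresets` is the shape NLTK's classifiers, such as `nltk.NaiveBayesClassifier.train`, accept as training data.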

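Import the movie_reviews corpus of film reviews from nltk.corpus.

all_words holds every word of every movie review, more than 1.5 million in total (punctuation tokens included):

```python
from nltk.corpus import movie_reviews

all_words = movie_reviews.words()
'''
all_words
Out[37]: ['plot', ':', 'two', 'teen', 'couples', 'go', 'to', ...]
len(all_words)
Out[38]: 1583820
'''
```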

There are only two review categories: neg (negative) and pos (positive).

```python
import nltk
import random
from nltk.corpus import movie_reviews

for category in movie_reviews.categories():
    print(category)
'''
neg
pos
'''
```

List the file IDs of the negative (neg) reviews:

```python
movie_reviews.fileids("neg")
'''
'neg/cv502_10970.txt',
'neg/cv503_11196.txt',
'neg/cv504_29120.txt',
'neg/cv505_12926.txt',
'neg/cv506_17521.txt',
'neg/cv507_9509.txt',
'neg/cv508_17742.txt',
'neg/cv509_17354.txt',
'neg/cv510_24758.txt',
'neg/cv511_10360.txt',
'neg/cv512_17618.txt'
...
'''
```

List the file IDs of the positive (pos) reviews:

```python
movie_reviews.fileids("pos")
'''
...
'pos/cv989_15824.txt',
'pos/cv990_11591.txt',
'pos/cv991_18645.txt',
'pos/cv992_11962.txt',
'pos/cv993_29737.txt',
'pos/cv994_12270.txt',
'pos/cv995_21821.txt',
'pos/cv996_11592.txt',
'pos/cv997_5046.txt',
'pos/cv998_14111.txt',
'pos/cv999_13106.txt'
'''
```
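The file IDs listed above follow a `category/filename.txt` pattern, so a review's label can also be read off its ID. A small sketch over a few of the IDs shown in this post (hard-coded so it runs without the corpus) groups them by the prefix before the slash; `label_from_fileid` is a hypothetical helper, not an NLTK function.

```python
from collections import defaultdict

# A handful of file IDs copied from the listings above
fileids = [
    "neg/cv502_10970.txt",
    "neg/cv503_11196.txt",
    "pos/cv998_14111.txt",
    "pos/cv999_13106.txt",
]


def label_from_fileid(fileid):
    """Hypothetical helper: return the category prefix of a file ID, e.g. 'neg'."""
    return fileid.split("/", 1)[0]


# Group the IDs by their category prefix
by_label = defaultdict(list)
for fid in fileids:
    by_label[label_from_fileid(fid)].append(fid)

print(dict(by_label))
```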

Print the words of neg/cv987_7394.txt; the file contains 872 tokens in total:

```python
list_words = movie_reviews.words("neg/cv987_7394.txt")
'''
['please', 'don', "'", 't', 'mind', 'this', 'windbag', ...]
'''
len(list_words)
'''
Out[30]: 872
'''

tuple1 = (list(movie_reviews.words("neg/cv987_7394.txt")), 'neg')
'''
Out[32]: (['please', 'don', "'", 't', 'mind', 'this', 'windbag', ...], 'neg')
'''
```

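```python
# A list comprehension is the most convenient way to build the full list.
# Each entry has the form
# (['please', 'don', "'", 't', 'mind', 'this', 'windbag', ...], 'neg')
documents = [(list(movie_reviews.words(fileid)), category)
             for category in movie_reviews.categories()
             for fileid in movie_reviews.fileids(category)]
```

There are 2,000 files in total.

Each document consists of a list of words and its neg/pos label.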
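The list comprehension above is shorthand for two nested `for` loops, with the `for` clauses in the same order as the loops. The sketch below checks that equivalence on a hypothetical in-memory `corpus` mapping (category → list of (file ID, words)) that stands in for the corpus readers, then counts the labels, the same check that on the real `documents` list would show the 2,000 files split into 1,000 `neg` and 1,000 `pos` reviews.

```python
from collections import Counter

# Hypothetical stand-in for the corpus: category -> list of (fileid, words)
corpus = {
    "neg": [("neg/x.txt", ["bad"]), ("neg/y.txt", ["dull"])],
    "pos": [("pos/z.txt", ["good"])],
}

# Nested-loop version: the outer loop is over categories, the inner over files
documents_loop = []
for category in sorted(corpus):
    for fileid, words in corpus[category]:
        documents_loop.append((list(words), category))

# Equivalent list comprehension, with the `for` clauses in the same order
documents_comp = [(list(words), category)
                  for category in sorted(corpus)
                  for fileid, words in corpus[category]]

print(documents_loop == documents_comp)               # True
print(Counter(label for _, label in documents_comp))  # Counter({'neg': 2, 'pos': 1})
```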

Complete test code:

```python
# -*- coding: utf-8 -*-
"""
Created on Sun Dec 4 09:27:48 2016
@author: daxiong
"""

import nltk
import random
from nltk.corpus import movie_reviews

documents = [(list(movie_reviews.words(fileid)), category)
             for category in movie_reviews.categories()
             for fileid in movie_reviews.fileids(category)]

random.shuffle(documents)
#print(documents[1])

all_words = []
for w in movie_reviews.words():
    all_words.append(w.lower())

all_words = nltk.FreqDist(all_words)
#print(all_words.most_common(15))
print(all_words["stupid"])
```
