Calling a mask_rcnn model with opencv4 (C++)

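Yesterday someone asked me about calling a Mask R-CNN model, and I realized I hadn't used OpenCV to run a trained Mask R-CNN model for three months. So tonight I gave it a try: I rebuilt OpenCV 4 and ran a sample.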

#include <fstream>

#include <sstream>

#include <iostream>

#include <string.h> #include <opencv2/dnn.hpp>

#include <opencv2/imgproc.hpp>

#include <opencv2/highgui.hpp> using namespace cv;

using namespace dnn;

using namespace std; RNG rng1; // Initialize the parameters

float confThreshold = 0.5; // Confidence threshold

float maskThreshold = 0.3; // Mask threshold //vector<string> classes;

//vector<Scalar> colors; // Draw the predicted bounding box

void drawBox(Mat& frame, int classId, float conf, Rect box, Mat& objectMask); // Postprocess the neural network's output for each frame

void postprocess(Mat& frame, const vector<Mat>& outs); int main()

{

// Give the configuration and weight files for the model

//String textGraph = "./mask_rcnn_inception_v2_coco_2018_01_28/mask_rcnn_inception_v2_coco_2018_01_28.pbtxt";

//String modelWeights = "./mask_rcnn_inception_v2_coco_2018_01_28/frozen_inference_graph.pb"; String modelWeights = "E:\\Opencv\\model_1\\mask_rcnn_inception_v2_coco_2018_01_28\\frozen_inference_graph.pb";

String textGraph = "E:\\Opencv\\model_1\\mask_rcnn_inception_v2_coco_2018_01_28\\mask_rcnn_inception_v2_coco_2018_01_28.pbtxt";

// Load the network

Net net = readNetFromTensorflow(modelWeights, textGraph);

net.setPreferableBackend(DNN_BACKEND_OPENCV);

net.setPreferableTarget(DNN_TARGET_CPU); // Open a video file or an image file or a camera stream.

string str, outputFile;

VideoCapture cap();//根据摄像头端口id不同,修改下即可

//VideoWriter video;

Mat frame, blob; // Create a window

static const string kWinName = "Deep learning object detection in OpenCV";

namedWindow(kWinName, WINDOW_NORMAL); // Process frames.

if (>)

{

// get frame from the video

//cap >> frame;

frame = cv::imread("D:\\image\\5.png"); // Stop the program if reached end of video

if (frame.empty())

{

cout << "Done processing !!!" << endl;

cout << "Output file is stored as " << outputFile << endl;

}

// Create a 4D blob from a frame.

blobFromImage(frame, blob, 1.0, Size(frame.cols, frame.rows), Scalar(), true, false);

//blobFromImage(frame, blob); //Sets the input to the network

net.setInput(blob); // Runs the forward pass to get output from the output layers

std::vector<String> outNames();

outNames[] = "detection_out_final";

outNames[] = "detection_masks";

vector<Mat> outs;

net.forward(outs, outNames); // Extract the bounding box and mask for each of the detected objects

postprocess(frame, outs); // Put efficiency information. The function getPerfProfile returns the overall time for inference(t) and the timings for each of the layers(in layersTimes)

vector<double> layersTimes;

double freq = getTickFrequency() / ;

double t = net.getPerfProfile(layersTimes) / freq;

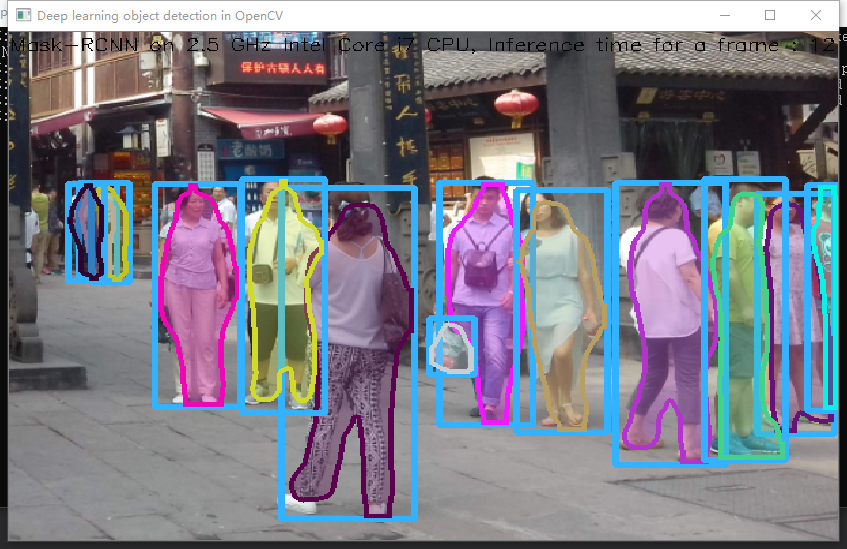

string label = format("Mask-RCNN on 2.5 GHz Intel Core i7 CPU, Inference time for a frame : %0.0f ms", t);

putText(frame, label, Point(, ), FONT_HERSHEY_SIMPLEX, 0.5, Scalar(, , )); // Write the frame with the detection boxes

Mat detectedFrame;

frame.convertTo(detectedFrame, CV_8U); imshow(kWinName, frame); }

//cap.release();

waitKey();

return ;

} // For each frame, extract the bounding box and mask for each detected object

void postprocess(Mat& frame, const vector<Mat>& outs)

{

Mat outDetections = outs[];

Mat outMasks = outs[]; // Output size of masks is NxCxHxW where

// N - number of detected boxes

// C - number of classes (excluding background)

// HxW - segmentation shape

const int numDetections = outDetections.size[];

const int numClasses = outMasks.size[]; outDetections = outDetections.reshape(, outDetections.total() / );

for (int i = ; i < numDetections; ++i)

{

float score = outDetections.at<float>(i, );

if (score > confThreshold)

{

// Extract the bounding box

int classId = static_cast<int>(outDetections.at<float>(i, ));

int left = static_cast<int>(frame.cols * outDetections.at<float>(i, ));

int top = static_cast<int>(frame.rows * outDetections.at<float>(i, ));

int right = static_cast<int>(frame.cols * outDetections.at<float>(i, ));

int bottom = static_cast<int>(frame.rows * outDetections.at<float>(i, )); left = max(, min(left, frame.cols - ));

top = max(, min(top, frame.rows - ));

right = max(, min(right, frame.cols - ));

bottom = max(, min(bottom, frame.rows - ));

Rect box = Rect(left, top, right - left + , bottom - top + ); // Extract the mask for the object

Mat objectMask(outMasks.size[], outMasks.size[], CV_32F, outMasks.ptr<float>(i, classId)); // Draw bounding box, colorize and show the mask on the image

drawBox(frame, classId, score, box, objectMask); }

}

} // Draw the predicted bounding box, colorize and show the mask on the image

void drawBox(Mat& frame, int classId, float conf, Rect box, Mat& objectMask)

{

//Draw a rectangle displaying the bounding box

rectangle(frame, Point(box.x, box.y), Point(box.x + box.width, box.y + box.height), Scalar(, , ), ); //Get the label for the class name and its confidence

/*string label = format("%.2f", conf);

if (!classes.empty())

{

CV_Assert(classId < (int)classes.size());

label = classes[classId] + ":" + label;

}*/ //Display the label at the top of the bounding box

/*

int baseLine;

Size labelSize = getTextSize(label, FONT_HERSHEY_SIMPLEX, 0.5, 1, &baseLine);

box.y = max(box.y, labelSize.height);

rectangle(frame, Point(box.x, box.y - round(1.5*labelSize.height)), Point(box.x + round(1.5*labelSize.width), box.y + baseLine), Scalar(255, 255, 255), FILLED);

putText(frame, label, Point(box.x, box.y), FONT_HERSHEY_SIMPLEX, 0.75, Scalar(0, 0, 0), 1);

*/

//Scalar color = colors[classId%colors.size()];

Scalar color = Scalar(rng1.uniform(, ), rng1.uniform(, ), rng1.uniform(, )); // Resize the mask, threshold, color and apply it on the image

resize(objectMask, objectMask, Size(box.width, box.height));

Mat mask = (objectMask > maskThreshold);

Mat coloredRoi = (0.3 * color + 0.7 * frame(box));

coloredRoi.convertTo(coloredRoi, CV_8UC3); // Draw the contours on the image

vector<Mat> contours;

Mat hierarchy;

mask.convertTo(mask, CV_8U);

findContours(mask, contours, hierarchy, RETR_CCOMP, CHAIN_APPROX_SIMPLE);

drawContours(coloredRoi, contours, -, color, , LINE_8, hierarchy, );

coloredRoi.copyTo(frame(box), mask); }
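postprocess() treats the `detection_out_final` output as rows of 7 floats: it reads the class id from column 1, the score from column 2 and the normalized box corners from columns 3 to 6 (column 0 is the image index within the batch). To make that box arithmetic easy to check in isolation, here is a minimal sketch of the same conversion in plain C++ without OpenCV. `PixelBox` and `toPixelBox` are hypothetical names introduced only for illustration, and the row layout is assumed from the indices the code above uses.

```cpp
#include <algorithm>
#include <array>

// Hypothetical helper type: a pixel-space box (x, y, width, height), like cv::Rect.
struct PixelBox {
    int x, y, width, height;
};

// Hypothetical helper: converts one 7-float detection row
// [imageId, classId, score, left, top, right, bottom] (corners normalized to [0, 1])
// into a pixel box clamped to a cols x rows image, using the same arithmetic as postprocess().
PixelBox toPixelBox(const std::array<float, 7>& row, int cols, int rows) {
    int left   = static_cast<int>(cols * row[3]);
    int top    = static_cast<int>(rows * row[4]);
    int right  = static_cast<int>(cols * row[5]);
    int bottom = static_cast<int>(rows * row[6]);

    // Clamp every corner into the valid pixel range [0, size - 1].
    left   = std::max(0, std::min(left, cols - 1));
    top    = std::max(0, std::min(top, rows - 1));
    right  = std::max(0, std::min(right, cols - 1));
    bottom = std::max(0, std::min(bottom, rows - 1));

    // +1 because right and bottom are inclusive pixel indices.
    return PixelBox{left, top, right - left + 1, bottom - top + 1};
}
```

For example, on a 100x40 image the row `{0, 1, 0.9, 0.25, 0.25, 0.75, 1.5}` gives a box at (25, 10) of size 51x30: the bottom edge (60 px) lies outside the image and is clamped to row 39. The clamping is what keeps `frame(box)` in drawBox() from throwing when a predicted box extends past the image border.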

Detection is somewhat slower than the Python version.
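One caveat when comparing against Python: the figure printed on the image comes from `getPerfProfile` for a single forward pass, and a first pass can include one-off setup cost. A fairer comparison times several runs after a warm-up. Below is a minimal sketch of such a timing helper using only the standard library; `averageMillis` is a hypothetical name, and the warm-up and run counts are arbitrary choices.

```cpp
#include <chrono>
#include <functional>

// Hypothetical helper: runs fn() `warmup` times without timing, then `runs` times
// with timing, and returns the mean wall-clock time per timed run in milliseconds.
double averageMillis(const std::function<void()>& fn, int warmup, int runs) {
    for (int i = 0; i < warmup; ++i) fn();  // e.g. the first forward pass, excluded from timing

    auto start = std::chrono::steady_clock::now();
    for (int i = 0; i < runs; ++i) fn();
    auto end = std::chrono::steady_clock::now();

    std::chrono::duration<double, std::milli> total = end - start;
    return runs > 0 ? total.count() / runs : 0.0;
}
```

In the program above it could wrap the inference step, e.g. `averageMillis([&] { net.setInput(blob); net.forward(outs, outNames); }, 1, 10);`, and the Python side should be timed the same way before drawing conclusions.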

Run log:

```
[ INFO:0] global E:\Opencv\opencv-4.1.1\modules\videoio\src\videoio_registry.cpp (187) cv::`anonymous-namespace'::VideoBackendRegistry::VideoBackendRegistry VIDEOIO: Enabled backends(7, sorted by priority): FFMPEG(1000); GSTREAMER(990); INTEL_MFX(980); MSMF(970); DSHOW(960); CV_IMAGES(950); CV_MJPEG(940)
[ INFO:0] global E:\Opencv\opencv-4.1.1\modules\videoio\src\backend_plugin.cpp (340) cv::impl::getPluginCandidates Found 2 plugin(s) for GSTREAMER
[ INFO:0] global E:\Opencv\opencv-4.1.1\modules\videoio\src\backend_plugin.cpp (172) cv::impl::DynamicLib::libraryLoad load E:\Opencv\opencv_4_1_1_install\bin\opencv_videoio_gstreamer411_64.dll => FAILED
[ INFO:0] global E:\Opencv\opencv-4.1.1\modules\videoio\src\backend_plugin.cpp (172) cv::impl::DynamicLib::libraryLoad load opencv_videoio_gstreamer411_64.dll => FAILED
[ INFO:0] global E:\Opencv\opencv-4.1.1\modules\core\src\ocl.cpp (888) cv::ocl::haveOpenCL Initialize OpenCL runtime...
```
