urllib Library Explained -- Python 3

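Overview: urllib is Python's built-in HTTP request library. This article covers three of its modules: the request module urllib.request, the exception handling module urllib.error, and the URL parsing module urllib.parse.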

1. Request module: urllib.request

1. Python 2

import urllib2

response = urllib2.urlopen('http://httpbin.org/robots.txt')

2. Python 3

import urllib.request

res = urllib.request.urlopen('http://httpbin.org/robots.txt')

urllib.request.urlopen(url, data=None, [timeout, ]*, cafile=None, capath=None, cadefault=False, context=None)

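The url argument of urlopen() can be either a string or a Request object. The cafile, capath, and cadefault parameters in the signature above were deprecated in Python 3.6 and removed in Python 3.13; use context instead.

# url can be a string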

import urllib.request

resp = urllib.request.urlopen('http://www.baidu.com')

print(resp.read().decode('utf-8'))  # read() returns the response body as bytes, so decode it into a string

# url can also be a Request object

import urllib.request

request = urllib.request.Request('http://httpbin.org')

response = urllib.request.urlopen(request)

print(response.read().decode('utf-8'))

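The data parameter (POST request): when data is supplied, urlopen() sends a POST request instead of a GET. The value must be bytes: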

# coding:utf8

import urllib.request, urllib.parse

data = bytes(urllib.parse.urlencode({'word': 'hello'}), encoding='utf8')

resp = urllib.request.urlopen('http://httpbin.org/post', data=data)

print(resp.read())
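As an offline illustration of how data changes the request, the following sketch builds Request objects without sending them and inspects the method urllib would use. The httpbin.org URLs are taken from the examples above; nothing here touches the network.

```python
import urllib.parse
import urllib.request

# Encode the form fields the same way as the POST example above.
data = urllib.parse.urlencode({'word': 'hello'}).encode('utf-8')

# Building a Request does not send anything, so this runs offline.
get_req = urllib.request.Request('http://httpbin.org/get')
post_req = urllib.request.Request('http://httpbin.org/post', data=data)

print(data)                   # b'word=hello'
print(get_req.get_method())   # GET  (no data attached)
print(post_req.get_method())  # POST (data attached)
```

An explicit method='POST' argument, as used later in this article, overrides this default.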

The timeout parameter of urlopen() sets the request timeout in seconds:

# coding:utf8

# set the request timeout

import urllib.request

resp = urllib.request.urlopen('http://httpbin.org/get', timeout=0.1)

print(resp.read().decode('utf-8'))

Response type:

# coding:utf8

# response type

import urllib.request

resp = urllib.request.urlopen('http://httpbin.org/get')

print(type(resp))

Response status code and response headers:

# coding:utf8

# response status code and response headers

import urllib.request

resp = urllib.request.urlopen('http://www.baidu.com')

print(resp.status)

print(resp.getheaders())  # a list of (name, value) tuples

print(resp.getheader('Server'))  # the header name is case-insensitive

200

[('Bdpagetype', '1'), ('Bdqid', '0xa6d873bb003836ce'), ('Cache-Control', 'private'), ('Content-Type', 'text/html'), ('Cxy_all', 'baidu+b8704ff7c06fb8466a83df26d7f0ad23'), ('Date', 'Sun, 21 Apr 2019 15:18:24 GMT'), ('Expires', 'Sun, 21 Apr 2019 15:18:03 GMT'), ('P3p', 'CP=" OTI DSP COR IVA OUR IND COM "'), ('Server', 'BWS/1.1'), ('Set-Cookie', 'BAIDUID=8C61C3A67C1281B5952199E456EEC61E:FG=1; expires=Thu, 31-Dec-37 23:55:55 GMT; max-age=2147483647; path=/; domain=.baidu.com'), ('Set-Cookie', 'BIDUPSID=8C61C3A67C1281B5952199E456EEC61E; expires=Thu, 31-Dec-37 23:55:55 GMT; max-age=2147483647; path=/; domain=.baidu.com'), ('Set-Cookie', 'PSTM=1555859904; expires=Thu, 31-Dec-37 23:55:55 GMT; max-age=2147483647; path=/; domain=.baidu.com'), ('Set-Cookie', 'delPer=0; path=/; domain=.baidu.com'), ('Set-Cookie', 'BDSVRTM=0; path=/'), ('Set-Cookie', 'BD_HOME=0; path=/'), ('Set-Cookie', 'H_PS_PSSID=1452_28777_21078_28775_28722_28557_28838_28584_28604; path=/; domain=.baidu.com'), ('Vary', 'Accept-Encoding'), ('X-Ua-Compatible', 'IE=Edge,chrome=1'), ('Connection', 'close'), ('Transfer-Encoding', 'chunked')]

BWS/1.1

Using a proxy with urllib.request.ProxyHandler():

# coding:utf8

import urllib.request

proxy_handler = urllib.request.ProxyHandler({'http': 'http://www.example.com:3128/'})

proxy_auth_handler = urllib.request.ProxyBasicAuthHandler()

proxy_auth_handler.add_password('realm', 'host', 'username', 'password')

opener = urllib.request.build_opener(proxy_handler, proxy_auth_handler)

# This time, rather than install the OpenerDirector, we use it directly:

resp = opener.open('http://www.example.com/login.html')

print(resp.read())
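If the opener should be used by every later urlopen() call, it can be installed globally instead. A minimal sketch, assuming a hypothetical proxy at 127.0.0.1:3128; it only builds and installs the opener, so no request is sent:

```python
import urllib.request

# Hypothetical proxy address, used only for illustration.
proxies = {'http': 'http://127.0.0.1:3128', 'https': 'http://127.0.0.1:3128'}

proxy_handler = urllib.request.ProxyHandler(proxies)
opener = urllib.request.build_opener(proxy_handler)

# After install_opener(), plain urllib.request.urlopen() goes through the proxy.
urllib.request.install_opener(opener)

# An empty dict disables proxies, including ones picked up from the environment.
direct_opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))

print(proxy_handler.proxies)
```

Using opener.open() directly, as in the example above, keeps the proxy local to one opener, while install_opener() changes the behavior of the whole process.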

2. Exception handling module: urllib.error

Exception handling example 1:

# coding:utf8

from urllib import error, request

try:
    resp = request.urlopen('http://www.blueflags.cn')
except error.URLError as e:
    print(e.reason)

Exception handling example 2:

# coding:utf8

from urllib import error, request

try:
    resp = request.urlopen('http://www.baidu.com')
except error.HTTPError as e:
    # HTTPError is a subclass of URLError, so it must be caught first
    print(e.reason, e.code, e.headers, sep='\n')
except error.URLError as e:
    print(e.reason)
else:
    print('request successfully')
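The order of the except clauses matters because HTTPError is a subclass of URLError; catching URLError first would swallow HTTP errors too. The sketch below checks this offline by constructing the exceptions by hand; describe_error is a hypothetical helper, not part of urllib:

```python
from urllib import error


def describe_error(exc):
    """Return a short description, checking the more specific class first."""
    if isinstance(exc, error.HTTPError):
        return 'HTTP error {}: {}'.format(exc.code, exc.reason)
    if isinstance(exc, error.URLError):
        return 'URL error: {}'.format(exc.reason)
    return 'other error: {}'.format(exc)


# HTTPError(url, code, msg, hdrs, fp) built manually instead of via the network.
http_exc = error.HTTPError('http://httpbin.org/status/404', 404, 'Not Found', {}, None)
url_exc = error.URLError('connection refused')

print(issubclass(error.HTTPError, error.URLError))  # True
print(describe_error(http_exc))  # HTTP error 404: Not Found
print(describe_error(url_exc))   # URL error: connection refused
```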

Exception handling example 3:

# coding:utf8

import socket, urllib.request, urllib.error

try:
    resp = urllib.request.urlopen('http://www.baidu.com', timeout=0.01)
except urllib.error.URLError as e:
    print(type(e.reason))
    # a connection timeout is wrapped in URLError with socket.timeout as the reason
    if isinstance(e.reason, socket.timeout):
        print('time out')

3. URL parsing module: urllib.parse

parse.urlencode:

# coding:utf8

from urllib import request, parse

url = 'http://httpbin.org/post'

headers = {
    'Host': 'httpbin.org',
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/72.0.3626.109 Safari/537.36'
}

# named form_data to avoid shadowing the built-in dict; the value matches the response shown below
form_data = {'name': 'Thanlon'}

data = bytes(parse.urlencode(form_data), encoding='utf8')

req = request.Request(url=url, data=data, headers=headers, method='POST')

resp = request.urlopen(req)

print(resp.read().decode('utf-8'))

{
  "args": {},
  "data": "",
  "files": {},
  "form": {
    "name": "Thanlon"
  },
  "headers": {
    "Accept-Encoding": "identity",
    "Content-Length": "12",
    "Content-Type": "application/x-www-form-urlencoded",
    "Host": "httpbin.org",
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/72.0.3626.109 Safari/537.36"
  },
  "json": null,
  "origin": "117.136.78.194, 117.136.78.194",
  "url": "https://httpbin.org/post"
}
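The Content-Length of 12 in the response above is simply the length of the encoded form body. A small offline sketch of what urlencode() produces for this form, with parse_qs() used to decode it back:

```python
from urllib import parse

form_data = {'name': 'Thanlon'}

encoded = parse.urlencode(form_data)  # str: 'name=Thanlon'
body = encoded.encode('utf-8')        # bytes, as required by the data parameter

print(encoded)                  # name=Thanlon
print(len(body))                # 12
print(parse.parse_qs(encoded))  # {'name': ['Thanlon']}
```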

Adding request headers with the add_header method:

# coding:utf8

from urllib import request, parse

url = 'http://httpbin.org/post'

form_data = {'name': 'Thanlon'}  # avoid naming this dict, which would shadow the built-in

data = bytes(parse.urlencode(form_data), encoding='utf8')

req = request.Request(url=url, data=data, method='POST')

req.add_header('User-Agent',
               'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/72.0.3626.109 Safari/537.36')

resp = request.urlopen(req)

print(resp.read().decode('utf-8'))

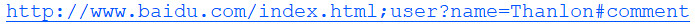
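One detail worth knowing when inspecting a Request: urllib stores header names in capitalized form, so 'User-Agent' is kept as 'User-agent'. A minimal offline sketch (the shortened User-Agent string is a placeholder):

```python
from urllib import request

req = request.Request('http://httpbin.org/post', data=b'name=Thanlon', method='POST')
req.add_header('User-Agent', 'Mozilla/5.0 (placeholder)')

# Header names are normalized with str.capitalize() when stored.
print(req.header_items())            # [('User-agent', 'Mozilla/5.0 (placeholder)')]
print(req.has_header('User-agent'))  # True
print(req.has_header('User-Agent'))  # False
print(req.get_header('User-agent'))  # Mozilla/5.0 (placeholder)
```

This only affects lookups on the Request object; HTTP header names themselves are case-insensitive on the wire.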

parse.urlparse:

# coding:utf8

from urllib.parse import urlparse

result = urlparse('http://www.baidu.com/index.html;user?id=1#comment')

print(type(result))

print(result)

<class 'urllib.parse.ParseResult'>

ParseResult(scheme='http', netloc='www.baidu.com', path='/index.html', params='user', query='id=1', fragment='comment')

from urllib.parse import urlparse

result = urlparse('www.baidu.com/index.html;user?id=1#comment', scheme='https')

print(type(result))

print(result)

<class 'urllib.parse.ParseResult'>

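ParseResult(scheme='https', netloc='', path='www.baidu.com/index.html', params='user', query='id=1', fragment='comment')

Without a scheme or a leading // in the URL, the host name is parsed as part of path and netloc stays empty; the scheme argument only supplies a default.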

# coding:utf8

from urllib.parse import urlparse

result = urlparse('http://www.baidu.com/index.html;user?id=1#comment', scheme='https')

print(result)

ParseResult(scheme='http', netloc='www.baidu.com', path='/index.html', params='user', query='id=1', fragment='comment')

A scheme present in the URL itself takes precedence over the scheme argument.

# coding:utf8

from urllib.parse import urlparse

result = urlparse('http://www.baidu.com/index.html;user?id=1#comment',allow_fragments=False)

print(result)

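ParseResult(scheme='http', netloc='www.baidu.com', path='/index.html', params='user', query='id=1#comment', fragment='')

With allow_fragments=False the fragment is not split off: #comment stays attached to the query and fragment is empty.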
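ParseResult is a named tuple, so its parts can be read by attribute or by index, and it offers a few derived attributes such as hostname and port. A short sketch using a hypothetical URL with a port and several query parameters:

```python
from urllib.parse import parse_qs, urlparse

# Hypothetical URL chosen to exercise every component.
original = 'https://www.example.com:8080/index.html;user?id=1&tag=a&tag=b#comment'
result = urlparse(original)

print(result.scheme, result[0])  # https https
print(result.netloc)             # www.example.com:8080
print(result.hostname)           # www.example.com
print(result.port)               # 8080
print(parse_qs(result.query))    # {'id': ['1'], 'tag': ['a', 'b']}
print(result.geturl())           # rebuilds the original URL string
```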

parse.urlunparse:

# coding:utf8

from urllib.parse import urlunparse

data = ['http', 'www.baidu.com', 'index.html', 'user', 'name=Thanlon', 'comment']

print(urlunparse(data))

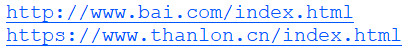
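For reference, the call above should print http://www.baidu.com/index.html;user?name=Thanlon#comment. urlunparse() is the inverse of urlparse(), so the two can be combined to change one component of a URL. A sketch of that round trip:

```python
from urllib.parse import urlparse, urlunparse

data = ['http', 'www.baidu.com', 'index.html', 'user', 'name=Thanlon', 'comment']
url = urlunparse(data)
print(url)  # http://www.baidu.com/index.html;user?name=Thanlon#comment

# ParseResult is a named tuple, so _replace() returns a modified copy.
parts = urlparse(url)
https_url = urlunparse(parts._replace(scheme='https', query='name=Germey'))
print(https_url)  # https://www.baidu.com/index.html;user?name=Germey#comment
```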

parse.urljoin:

# coding:utf8

from urllib.parse import urljoin

print(urljoin('http://www.bai.com', 'index.html'))

print(urljoin('http://www.baicu.com', 'https://www.thanlon.cn/index.html'))  # an absolute second URL replaces the base

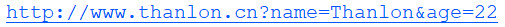
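How urljoin() resolves the second argument depends on its form. The sketch below runs a few common cases against a hypothetical base URL:

```python
from urllib.parse import urljoin

base = 'http://www.example.com/docs/guide/index.html'  # hypothetical base URL

print(urljoin(base, 'faq.html'))       # http://www.example.com/docs/guide/faq.html
print(urljoin(base, '../about.html'))  # http://www.example.com/docs/about.html
print(urljoin(base, '/about.html'))    # http://www.example.com/about.html
print(urljoin(base, '?page=2'))        # http://www.example.com/docs/guide/index.html?page=2
print(urljoin(base, '//cdn.example.com/app.js'))           # http://cdn.example.com/app.js
print(urljoin(base, 'https://www.thanlon.cn/index.html'))  # https://www.thanlon.cn/index.html
```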

urlencode converts a dict into the query parameters of a GET request:

# coding:utf8

from urllib.parse import urlencode

params = {
    'name': 'Thanlon',
    'age': 22
}

baseUrl = 'http://www.thanlon.cn?'

url = baseUrl + urlencode(params)

print(url)

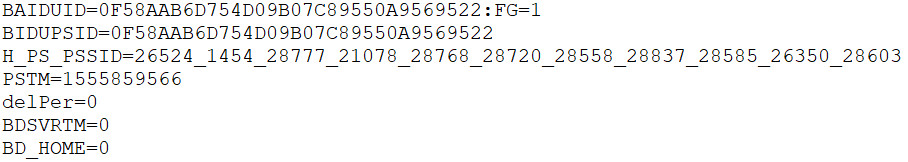
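urlencode() also takes care of escaping. A small sketch with hypothetical parameter values showing how spaces, non-ASCII text, and list values are encoded:

```python
from urllib.parse import parse_qs, urlencode

params = {'name': 'Thanlon', 'age': 22}
basic = urlencode(params)
print(basic)  # name=Thanlon&age=22

# Spaces become '+', non-ASCII text is UTF-8 percent-encoded.
escaped = urlencode({'q': 'hello world', 'lang': '中文'})
print(escaped)  # q=hello+world&lang=%E4%B8%AD%E6%96%87

# doseq=True expands a list into repeated keys instead of encoding its repr.
query = urlencode({'tag': ['a', 'b']}, doseq=True)
print(query)            # tag=a&tag=b
print(parse_qs(query))  # {'tag': ['a', 'b']}
```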

4. Cookies

Getting cookies (used to keep login session information):

# coding:utf8

# get cookies (used to keep login session information)

import urllib.request, http.cookiejar

cookie = http.cookiejar.CookieJar()

handler = urllib.request.HTTPCookieProcessor(cookie)

opener = urllib.request.build_opener(handler)

res = opener.open('http://www.baidu.com')

for item in cookie:
    print(item.name + '=' + item.value)

Saving cookies with MozillaCookieJar(filename):

# coding:utf8

# save the cookies to cookie.txt

import http.cookiejar, urllib.request

filename = 'cookie.txt'

cookie = http.cookiejar.MozillaCookieJar(filename)

handler = urllib.request.HTTPCookieProcessor(cookie)

opener = urllib.request.build_opener(handler)

res = opener.open('http://www.baidu.com')

cookie.save(ignore_discard=True, ignore_expires=True)

Saving cookies with LWPCookieJar(filename):

# coding:utf8

import http.cookiejar, urllib.request

filename = 'cookie.txt'

cookie = http.cookiejar.LWPCookieJar(filename)

handler = urllib.request.HTTPCookieProcessor(cookie)

opener = urllib.request.build_opener(handler)

res = opener.open('http://www.baidu.com')

cookie.save(ignore_discard=True, ignore_expires=True)

Loading saved cookies and sending them with a request, to retrieve information available after login:

# coding:utf8

import http.cookiejar, urllib.request

cookie = http.cookiejar.LWPCookieJar()

cookie.load('cookie.txt', ignore_discard=True, ignore_expires=True)

handler = urllib.request.HTTPCookieProcessor(cookie)

opener = urllib.request.build_opener(handler)

resp = opener.open('http://www.baidu.com')

print(resp.read().decode('utf-8'))
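A file must be loaded with the same jar class that saved it, since the LWP and Mozilla formats differ. The following offline sketch round-trips a hand-made cookie through a temporary file; the cookie name, value, and domain are made up for illustration:

```python
import http.cookiejar
import os
import tempfile

# A hand-made session cookie (all values are placeholders).
cookie = http.cookiejar.Cookie(
    version=0, name='session', value='abc123',
    port=None, port_specified=False,
    domain='www.example.com', domain_specified=False, domain_initial_dot=False,
    path='/', path_specified=True,
    secure=False, expires=None, discard=True,
    comment=None, comment_url=None, rest={},
)

with tempfile.TemporaryDirectory() as tmp_dir:
    filename = os.path.join(tmp_dir, 'cookie.txt')

    saved = http.cookiejar.LWPCookieJar(filename)
    saved.set_cookie(cookie)
    # ignore_discard=True keeps session cookies, which are dropped by default.
    saved.save(ignore_discard=True, ignore_expires=True)

    loaded = http.cookiejar.LWPCookieJar()
    loaded.load(filename, ignore_discard=True, ignore_expires=True)
    names = [c.name + '=' + c.value for c in loaded]

    # Reading the LWP file with the Mozilla class fails with LoadError.
    try:
        http.cookiejar.MozillaCookieJar().load(filename)
        format_mismatch = False
    except http.cookiejar.LoadError:
        format_mismatch = True

print(names)            # ['session=abc123']
print(format_mismatch)  # True
```

Because both save examples above write to cookie.txt, the LWPCookieJar load only works if the LWP example was the last one to run.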
