python之scrapy模块下载中间件

知识点

使用方法:

编写一个Downloader Middlewares和我们编写一个pipeline一样,定义一个类,然后在setting中开启 Downloader Middlewares默认的方法:

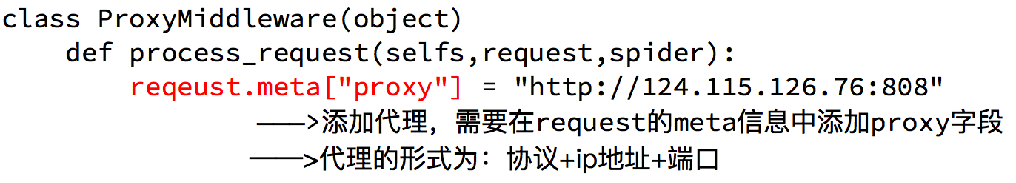

process_request(self, request, spider):

当每个request通过下载中间件时,该方法被调用。

process_response(self, request, response, spider):

当下载器完成http请求,传递响应给引擎的时候调用

1、学习官网网址

https://docs.scrapy.org/

2、settings文件,USER_AGENTS代理池

# -*- coding: utf-8 -*- # Scrapy settings for zjh project

#

# For simplicity, this file contains only settings considered important or

# commonly used. You can find more settings consulting the documentation:

#

# https://doc.scrapy.org/en/latest/topics/settings.html

# https://doc.scrapy.org/en/latest/topics/downloader-middleware.html

# https://doc.scrapy.org/en/latest/topics/spider-middleware.html BOT_NAME = 'zjh' SPIDER_MODULES = ['zjh.spiders']

NEWSPIDER_MODULE = 'zjh.spiders' LOG_LEVEL = "WARNING"

# Crawl responsibly by identifying yourself (and your website) on the user-agent

#USER_AGENT = 'zjh (+http://www.yourdomain.com)'

USER_AGENT = 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/74.0.3729.131 Safari/537.36'

# Obey robots.txt rules

ROBOTSTXT_OBEY = True # Configure maximum concurrent requests performed by Scrapy (default: 16)

#CONCURRENT_REQUESTS = 32 # Configure a delay for requests for the same website (default: 0)

# See https://doc.scrapy.org/en/latest/topics/settings.html#download-delay

# See also autothrottle settings and docs

#DOWNLOAD_DELAY = 3

# The download delay setting will honor only one of:

#CONCURRENT_REQUESTS_PER_DOMAIN = 16

#CONCURRENT_REQUESTS_PER_IP = 16 # Disable cookies (enabled by default)

#COOKIES_ENABLED = False # Disable Telnet Console (enabled by default)

#TELNETCONSOLE_ENABLED = False # Override the default request headers:

#DEFAULT_REQUEST_HEADERS = {

# 'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',

# 'Accept-Language': 'en',

#} # Enable or disable spider middlewares

# See https://doc.scrapy.org/en/latest/topics/spider-middleware.html

#SPIDER_MIDDLEWARES = {

# 'zjh.middlewares.ZjhSpiderMiddleware': 543,

#} # Enable or disable downloader middlewares

# See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html

#开启下载中间件

DOWNLOADER_MIDDLEWARES = {

'zjh.middlewares.RandomUserAgentMiddleware': 543,

'zjh.middlewares.CheckUserAgent': 544,

} # Enable or disable extensions

# See https://doc.scrapy.org/en/latest/topics/extensions.html

#EXTENSIONS = {

# 'scrapy.extensions.telnet.TelnetConsole': None,

#} # Configure item pipelines

# See https://doc.scrapy.org/en/latest/topics/item-pipeline.html

#ITEM_PIPELINES = {

# 'zjh.pipelines.ZjhPipeline': 300,

#} # Enable and configure the AutoThrottle extension (disabled by default)

# See https://doc.scrapy.org/en/latest/topics/autothrottle.html

#AUTOTHROTTLE_ENABLED = True

# The initial download delay

#AUTOTHROTTLE_START_DELAY = 5

# The maximum download delay to be set in case of high latencies

#AUTOTHROTTLE_MAX_DELAY = 60

# The average number of requests Scrapy should be sending in parallel to

# each remote server

#AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0

# Enable showing throttling stats for every response received:

#AUTOTHROTTLE_DEBUG = False # Enable and configure HTTP caching (disabled by default)

# See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings

#HTTPCACHE_ENABLED = True

#HTTPCACHE_EXPIRATION_SECS = 0

#HTTPCACHE_DIR = 'httpcache'

#HTTPCACHE_IGNORE_HTTP_CODES = []

#HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage' USER_AGENTS = [ "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Win64; x64; Trident/5.0; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 2.0.50727; Media Center PC 6.0)", "Mozilla/5.0 (compatible; MSIE 8.0; Windows NT 6.0; Trident/4.0; WOW64; Trident/4.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 1.0.3705; .NET CLR 1.1.4322)", "Mozilla/4.0 (compatible; MSIE 7.0b; Windows NT 5.2; .NET CLR 1.1.4322; .NET CLR 2.0.50727; InfoPath.2; .NET CLR 3.0.04506.30)", "Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN) AppleWebKit/523.15 (KHTML, like Gecko, Safari/419.3) Arora/0.3 (Change: 287 c9dfb30)", "Mozilla/5.0 (X11; U; Linux; en-US) AppleWebKit/527+ (KHTML, like Gecko, Safari/419.3) Arora/0.6", "Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.8.1.2pre) Gecko/20070215 K-Ninja/2.1.1", "Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN; rv:1.9) Gecko/20080705 Firefox/3.0 Kapiko/3.0", "Mozilla/5.0 (X11; Linux i686; U;) Gecko/20070322 Kazehakase/0.4.5" ]

3、middleware.py处理代码池

# -*- coding: utf-8 -*- # Define here the models for your spider middleware

#

# See documentation in:

# https://doc.scrapy.org/en/latest/topics/spider-middleware.html from scrapy import signals import random

class RandomUserAgentMiddleware:

"""

下载中间件一般用于反爬虫,代理IP,自定义USER_AGENTS

"""

def process_request(self,request,spider):

ua = random.choice(spider.settings.get("USER_AGENTS"))

request.headers["User-Agent"] = ua class CheckUserAgent:

def process_response(self,request,response,spider):

print(dir(response.request))

print(request.headers["User-Agent"])

# return 必须有,表示响应经过引擎交给爬虫

return response

4、参考学习

a)代理UserAgent

b) 代理ip

python之scrapy模块下载中间件的更多相关文章

- python之poplib模块下载并解析邮件

# -*- coding: utf-8 -*- #python 27 #xiaodeng #python之poplib模块下载并解析邮件 #https://github.com/michaelliao ...

- Scrapy——5 下载中间件常用函数、scrapy怎么对接selenium、常用的Setting内置设置有哪些

Scrapy——5 下载中间件常用的函数 Scrapy怎样对接selenium 常用的setting内置设置 对接selenium实战 (Downloader Middleware)下载中间件常用函数 ...

- UA池 代理IP池 scrapy的下载中间件

# 一些概念 - 在scrapy中如何给所有的请求对象尽可能多的设置不一样的请求载体身份标识 - UA池,process_request(request) - 在scrapy中如何给发生异常的请求设置 ...

- Scrapy的下载中间件

下载中间件 简介 下载器,无法执行js代码,本身不支持代理 下载中间件用来hooks进Scrapy的request/response处理过程的框架,一个轻量级的底层系统,用来全局修改scrapy的re ...

- python之scrapy模块scrapy-redis使用

1.redis的使用,自己可以多学习下,个人也是在学习 https://www.cnblogs.com/ywjfx/p/10262662.html官网可以自己搜索下. 2.下载安装scrapy-red ...

- python使用requests模块下载文件并获取进度提示

一.概述 使用python3写了一个获取某网站文件的小脚本,使用了requests模块的get方法得到内容,然后通过文件读写的方式保存到硬盘同时需要实现下载进度的显示 二.代码实现 安装模块 pip3 ...

- python使用you-get模块下载视频

pip install you-get # 安装先 怎么用 进入命令行: you-get url 暂停下载:ctrl + c ,继续下载重复 you-get url 官网地址:https:// ...

- python 安装 Scrapy 模块

环境的安装总是让人多愁善感,爱恨交叉... 本人安装环境:win7 64 + python2.7 先来几个网站 https://doc.scrapy.org/en/latest/intro/insta ...

- Python使用requests模块下载图片

MySQL中事先保存好爬取到的图片链接地址. 然后使用多线程把图片下载到本地. # coding: utf-8 import MySQLdb import requests import os imp ...

随机推荐

- IDEA找不到maven仓库无法下载依赖解决办法

1.确认Maven安装正常,在cmd窗口输入mvn -version 可以获得版本号: 2. 确认maven安装包下/conf/setting.xml配置文件正确 本地仓库位置: <localR ...

- Bootstrap treegrid 实现树形表格结构

前言 :最近的项目中需要实现树形表格功能,由于前端框架用的是bootstrap,但是bootstrapTable没有这个功能所以就找了一个前端的treegrid第三方组件进行了封装.现在把这个封装的组 ...

- 08_Hive中的各种Join操作

1.关于hive中的各种join Hive中有许多的Join操作,例如:LEFT.RIGHT和FULL OUTER JOIN,INNER JOIN,LEFT SEMI JOIN等: 1.1.准备两组数 ...

- LoadRunner(3)

一.性能测试的策略 重要的:基准测试.并发测试.在线综合场景测试 递增测试.极限测试... 1.基准测试:Benchmark Testing 含义:就是单用户测试,单用户.单测试点.执行n次: 作为后 ...

- HashMap源码分析二

jdk1.2中HashMap的源码和jdk1.3中HashMap的源码基本上没变.在上篇中,我纠结的那个11和101的问题,在这边中找到答案了. jdk1.2 public HashMap() ...

- Spring实战(第4版)

第1部分 Spring的核心 Spring的两个核心:依赖注入(dependency injection,DI)和面向切面编程(aspec-oriented programming,AOP) POJO ...

- BZOJ3032 七夕祭[中位数]

发现是一个类似于“纸牌均分”的问题.然后发现,只要列数整除目标.行数整除目标就一定可以. 如果只移动列,并不会影响行,也就是同一行不会多不会少.只移动行同理. 所以可以把两个问题分开来看,处理起来互不 ...

- hadoop关闭安全模式

执行以下语句即可 hadoop dfsadmin -safemode leave

- oracle split函数

PL/SQL 中没有split函数,需要自己写. 代码: ); --创建一个 type ,如果为了使split函数具有通用性,请将其size 设大些. --创建function create or r ...

- Python JSONⅢ

JSON 函数 encode Python encode() 函数用于将 Python 对象编码成 JSON 字符串. 语法 实例 以下实例将数组编码为 JSON 格式数据: 以上代码执行结果为: d ...